Mobile Apps for Professional Dermatology Education: An Objective Review

With today’s technology, it is easier than ever to access web-based tools that enrich traditional dermatology education. The literature supports the use of these platforms to enhance learning at the student and trainee levels. A controlled study of pediatric residents showed that online modules effectively supplemented clinical experience with atopic dermatitis.1 In a randomized diagnostic study of medical students, practice with an image-based web application (app) that teaches rapid recognition of melanoma proved more effective than learning a rule-based algorithm.2 Given the visual nature of dermatology, pattern recognition is an essential skill; it is fostered through experience, and technology makes that experience more accessible.

With the added benefit of convenience and accessibility, mobile apps can supplement experiential learning. Mirroring the overall growth of mobile apps, the number of available dermatology apps has increased.3 Dermatology mobile apps serve purposes ranging from quick reference tools to comprehensive modules, journals, and question banks. At an academic hospital in Taiwan, examination performance improved among both dermatology and nondermatology trainees after 3 weeks of using a smartphone-based wallpaper learning module displaying morphologic characteristics of fungi.4 With the expansion of virtual microscopy, mobile apps also have been created as a learning tool for dermatopathology, giving trainees the flexibility and autonomy to view slides on their own time.5 Nevertheless, the literature on dermatology mobile apps designed for educating medical students and trainees is limited, indicating a need for further investigation.

Prior studies have reviewed dermatology apps for patients and for practicing dermatologists.6-8 Herein, we focus on mobile apps for students and residents learning dermatology. Between 2014 and 2017, the number of general dermatology reference apps and educational aid apps grew by 33% and 32%, respectively.3 As with any resource meant to educate current and future medical providers, an objective review process is needed to ensure accurate, unbiased, evidence-based teaching.

Well-organized, comprehensive information and a user-friendly interface are also important when selecting an educational mobile app. Accessibility and affordability are priorities for any supplemental resource, given the already high cost of medical education. Overall, a standardized method is needed to evaluate the key factors that make an educational mobile app appropriate for this audience. We therefore searched for mobile apps relating to dermatology education for students and residents.

Methods

We searched for publicly available mobile apps relating to dermatology education in the App Store (Apple Inc) from September to November 2019 using the search terms dermatology education, dermoscopy education, melanoma education, skin cancer education, psoriasis education, rosacea education, acne education, eczema education, dermal fillers education, and Mohs surgery education. We excluded apps that were not in English, were created for a conference, cost more than $5 to download, or did not include a specific dermatology education section. In this way, we hoped to evaluate apps that were relevant, accessible, and affordable.

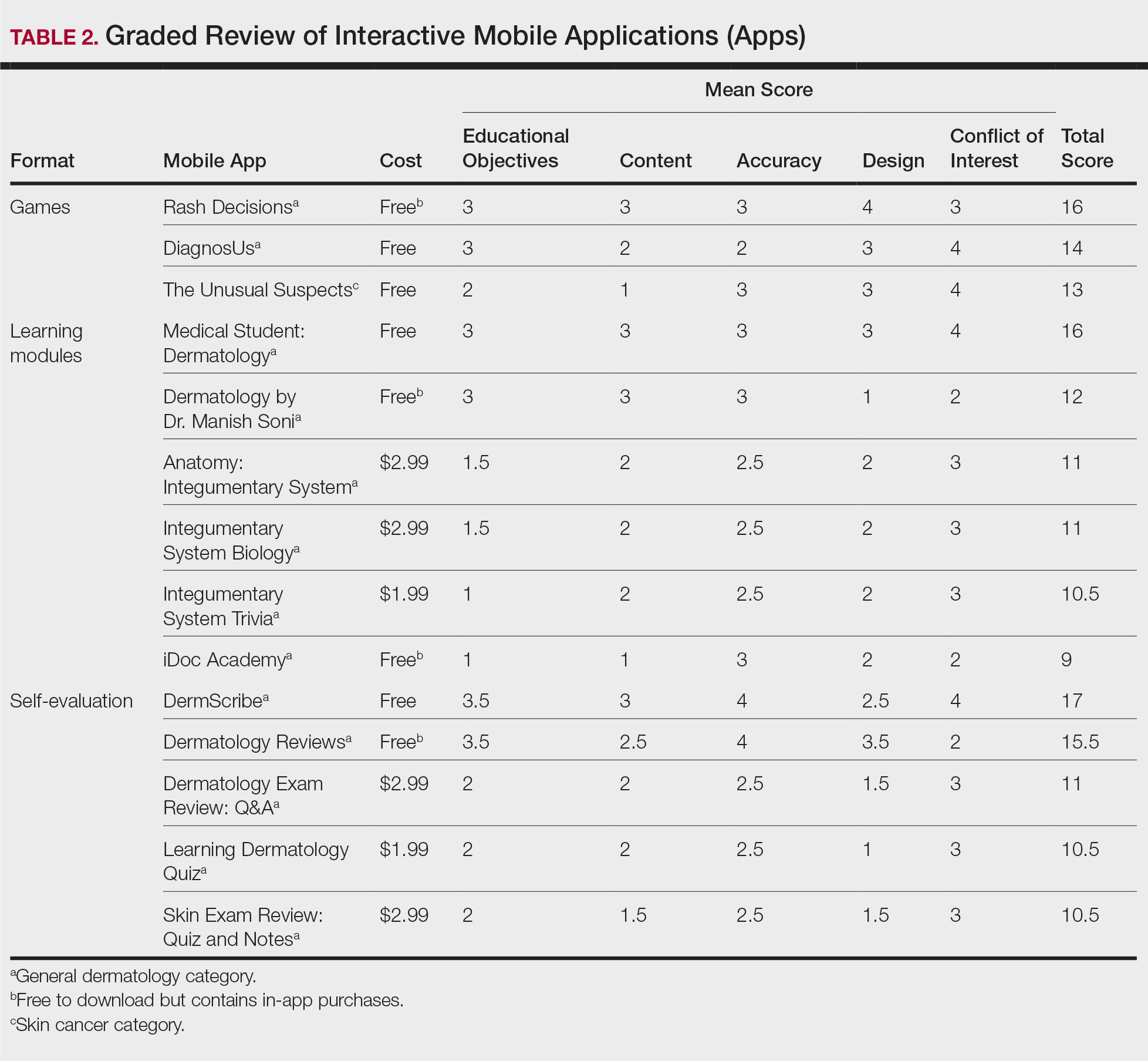

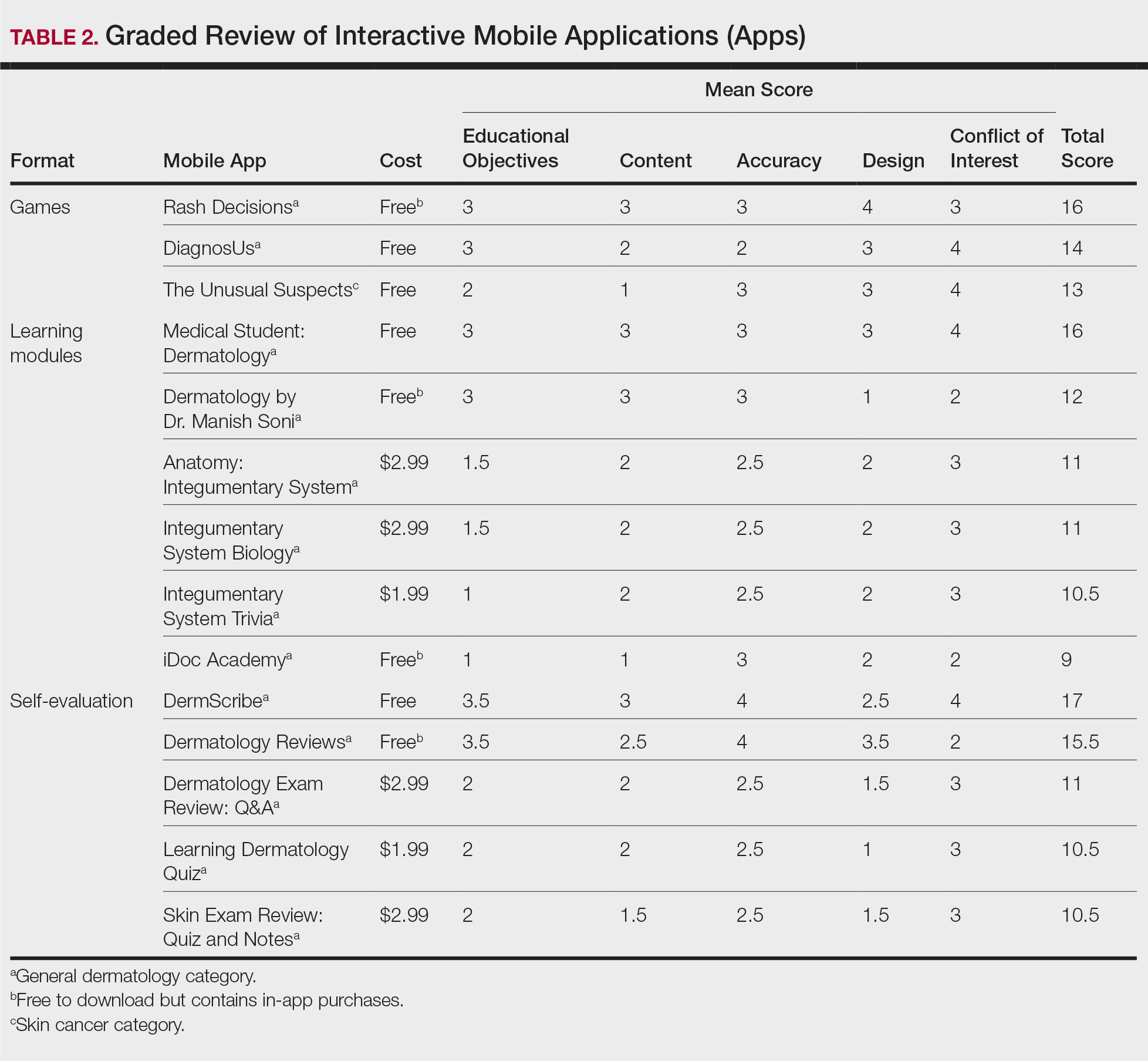

We modeled our study after a review of patient education apps by Masud et al6 and used their quantified grading rubric (scale of 1 to 4). We found their established criteria (educational objectives, content, accuracy, design, and conflict of interest) equally applicable to apps designed for professional education.6 Each app received 1 to 4 points per criterion: 1 point if it did not fulfill the criterion, 2 points if it minimally fulfilled the criterion, 3 points if it mostly fulfilled the criterion, and 4 points if it completely fulfilled the criterion. Two medical students (E.H. and N.C.), one at the preclinical stage and the other at the clinical stage of medical education, reviewed the apps using the rubric, then discussed and resolved any discrepancies in the points assigned. A dermatology resident (M.A.) independently reviewed the apps using the same rubric.

For each criterion, we calculated the mean of the student (consensus) score and the resident score. The sum of these means across the 5 criteria was the app’s final score, which determined its overall quality. Apps with a total score of 5 to 10 were considered poor and inadequate for education. A total score of 10.5 to 15 indicated that an app was somewhat adequate (ie, useful for education in some aspects but falling short in others). Apps considered adequate for education across all or most criteria received a total score of 15.5 to 20.
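The scoring arithmetic described in the two preceding paragraphs can be made concrete with a short sketch. This is an illustrative Python reconstruction under stated assumptions, not the authors’ actual tooling: the function names `final_score` and `classify` and the example ratings are hypothetical, and the student input is assumed to be the consensus score the two students reached after resolving discrepancies. Because each criterion’s score is the mean of two integers from 1 to 4, the total always falls on a multiple of 0.5 between 5 and 20, so the bands 5 to 10, 10.5 to 15, and 15.5 to 20 leave no gaps.

```python
"""Hypothetical sketch of the rubric scoring described in Methods (not the authors' code)."""

# Criteria from the Masud et al rubric, as listed in the text; each is rated 1 to 4.
CRITERIA = (
    "educational objectives",
    "content",
    "accuracy",
    "design",
    "conflict of interest",
)


def final_score(student: dict[str, int], resident: dict[str, int]) -> float:
    """Average the student (consensus) and resident ratings per criterion, then sum."""
    total = 0.0
    for criterion in CRITERIA:
        s, r = student[criterion], resident[criterion]
        if not (1 <= s <= 4 and 1 <= r <= 4):
            raise ValueError(f"ratings for {criterion!r} must be between 1 and 4")
        total += (s + r) / 2  # per-criterion mean; always a multiple of 0.5
    return total


def classify(total: float) -> str:
    """Map a final score (5 to 20, in steps of 0.5) to the study's quality bands."""
    if not 5 <= total <= 20:
        raise ValueError("final score must lie between 5 and 20")
    if total <= 10:
        return "poor"
    if total <= 15:
        return "somewhat adequate"
    return "adequate"


if __name__ == "__main__":
    # Hypothetical ratings for illustration only; not data from the study.
    student = {c: 3 for c in CRITERIA}
    resident = {**student, "educational objectives": 4}
    total = final_score(student, resident)
    print(total, classify(total))  # prints: 15.5 adequate
```

In this hypothetical example, a single 1-point disagreement on one criterion moves the total by 0.5, which is enough to cross from the somewhat adequate band (15) into the adequate band (15.5).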

Results

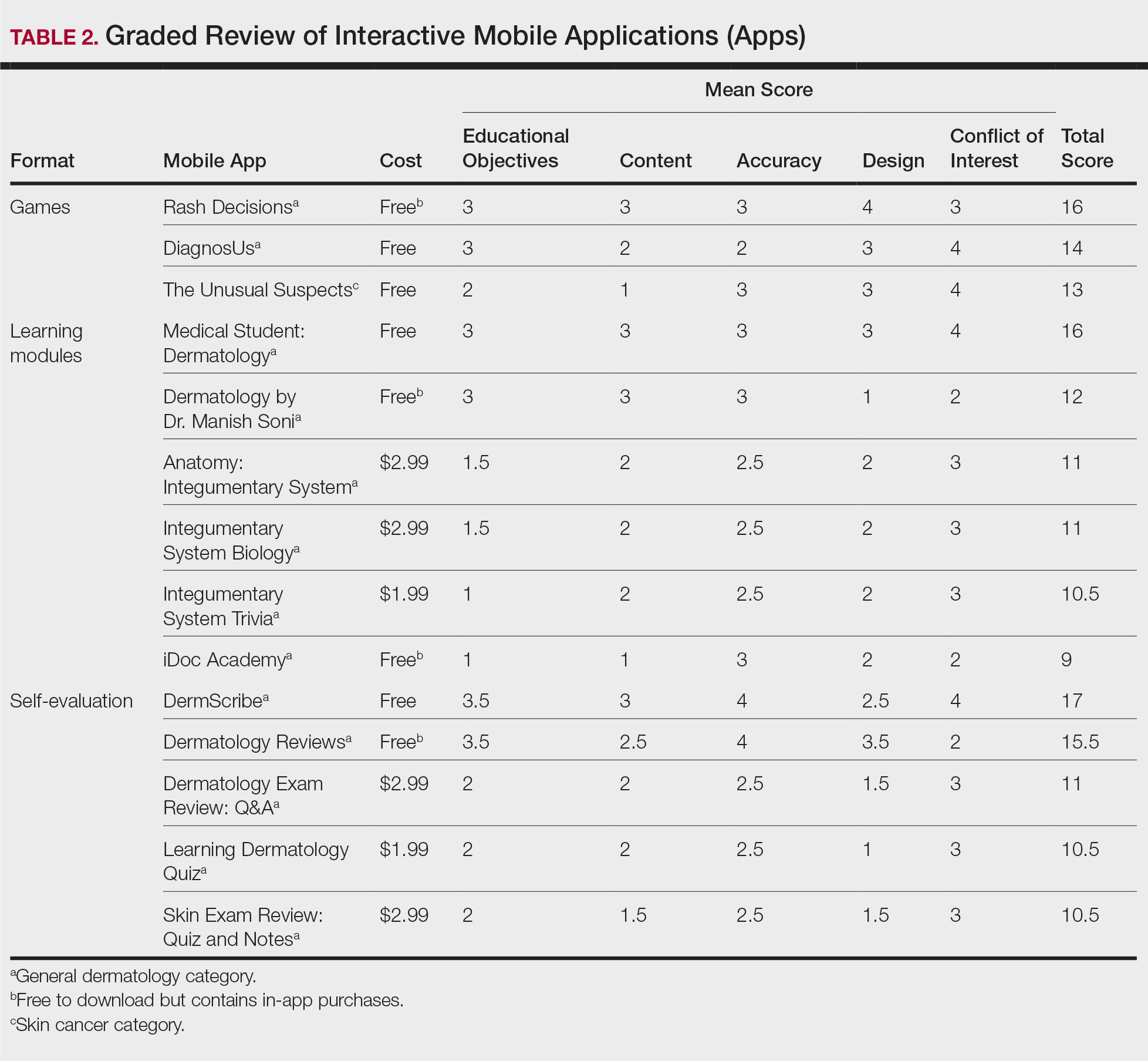

Our search generated 130 apps, of which 42 were eligible for review after exclusion criteria were applied. At the time of publication, 36 of these apps were still available. The possible range of scores based on the rubric was 5 to 20; the actual range was 7 to 20. Of the 36 apps, 2 (5.6%) were poor, 16 (44.4%) were somewhat adequate, and 18 (50%) were adequate. Formats included primary resources, such as clinical decision support tools, journals, references, and a podcast (Table 1), as well as interactive learning tools, such as games, learning modules, and apps for self-evaluation (Table 2). Thirty apps covered general dermatology; the others focused on skin cancer (n=5) or cosmetic dermatology (n=1). Regarding cost, 29 apps were free to download, whereas 7 charged a fee (mean price, $2.56).
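The proportions reported in the Results can be checked directly from the counts. The Python sketch below is only an arithmetic aid, assuming (as the text indicates) that all three breakdowns use the 36 apps still available as the denominator; the names `TOTAL_APPS` and `percentages` are illustrative.

```python
# Counts as reported in the Results; denominator is the 36 apps still available.
TOTAL_APPS = 36
quality = {"poor": 2, "somewhat adequate": 16, "adequate": 18}
topic = {"general dermatology": 30, "skin cancer": 5, "cosmetic dermatology": 1}
cost = {"free": 29, "paid": 7}


def percentages(counts: dict[str, int], total: int = TOTAL_APPS) -> dict[str, float]:
    """Return each category's share of the total, rounded to one decimal place."""
    return {name: round(100 * n / total, 1) for name, n in counts.items()}


# Each breakdown should partition the same 36 apps.
for label, counts in (("quality", quality), ("topic", topic), ("cost", cost)):
    assert sum(counts.values()) == TOTAL_APPS, f"{label} counts do not sum to {TOTAL_APPS}"

print(percentages(quality))
# prints: {'poor': 5.6, 'somewhat adequate': 44.4, 'adequate': 50.0}
```

All three breakdowns sum to 36, consistent with the counts given in the text.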

Comment

Beyond the convenience of carrying an educational tool in a white-coat pocket, dermatology learners have been shown to benefit from supplementing their curriculum with mobile apps, which sets the stage for formal integration of mobile apps into dermatology teaching.8 Before widespread adoption, however, mobile apps must be evaluated for content and utility, starting with an objective rubric.

Without official scientific standards in place, it was unsurprising that only half of the dermatology education apps in this study were classified as adequate. Among the formats offered (clinical decision support tools, journals, references, podcast, games, learning modules, and self-evaluation), some scored higher than others. The journal and podcast formats had the highest average score (16.5 out of 20).

One barrier to using these apps was that a subscription was required to access all available content in the journals and the podcast. Students and trainees can turn to library resources at their academic institutions to obtain journal subscriptions at no additional cost. Dermatology residents can use their complimentary membership in the American Academy of Dermatology to obtain a free subscription to AAD Dialogues in Dermatology (otherwise $179 annually for nonresident members and $320 annually for nonmembers).

Learning modules, on the other hand, were the lowest-rated format (average score, 11.3 out of 20); only Medical Student: Dermatology qualified as adequate (total score, 16). This finding is worrisome given that students and residents might turn to learning modules for quick, targeted lessons on specific topics.

The lowest-scoring app, a clinical decision support tool called Naturelize, received a total score of 7. Although it listed the indications and contraindications for different types of dermal fillers at various locations on the face, it had a clear conflict of interest, an oversimplified design, and little evidence-based content. These shortcomings mirror the current state of cosmetic dermatology training in residency, in which trainees report feeling inadequately prepared for aesthetic procedures and comparative effectiveness research is lacking.9-11

At the opposite end of the spectrum, MyDermPath+, a reference app, received a total score of 20. The app cited credible authors with a medical degree (MD) and had an easy-to-use, well-designed interface that included a reference guide, a differential builder, and a quiz covering a range of topics within dermatology. Because it was a free download without in-app purchases or advertisements, there was no evidence of conflict of interest. That a dermatopathology app ranked as the top dermatology education mobile app might reflect an increased emphasis on dermatopathology education in residency as well as the transition to digitized slides.5

The second-highest-scoring apps (total score, 19) were Dermatology Database and VisualDx, both references covering a wide range of dermatology topics. Dermatology Database was a comprehensive search tool for diseases, drugs, procedures, and terms; it was simple and entirely free to use but did not cite references. VisualDx, as its name suggests, offered quality clinical images, complete guides with references, and a unique differential builder. An annual subscription costs $399.99, but gaining free access through a participating academic institution was simple.

Games were a unique mobile app format; however, 2 of the 3 games scored in the somewhat adequate range. DiagnosUs, which tested users’ ability to differentiate skin cancer and psoriasis from dermatitis on clinical images, earned 2 points in both the content and accuracy categories; it would benefit from more comprehensive content and from professional verification of the true diagnoses. The Unusual Suspects tested the ABCDE algorithm in a short learning module, followed by a simple timed game that involved identifying melanoma. Although the design was novel and interactive, the game was limited to the same 5 melanoma tumors overlaid on pictures of normal skin. Its narrow scope earned 1 point for content, the repetitiveness of the game earned 3 points for design, and the lack of real clinical images earned 2 points for educational objectives. Although game-format mobile apps can challenge users’ knowledge with built-in feedback or reward systems, improvements are needed to ensure that these apps are as educational as they are engaging.

AAD Dialogues in Dermatology was the only podcast app; it provided expert interviews along with disclosures, transcripts, commentary, and references. More than half of its content could not be accessed without a subscription, which earned it 2.5 points in the conflict of interest category. Several flaws also resulted in a design score of 2.5, including inconsistent availability of transcripts, poor sound quality on some episodes, difficulty distinguishing new episodes from those already played, and a glitch that removed the episode duration. Still, the app was a valuable and comprehensive resource with clear objectives and cited references. With improvements in content, affordability, and user experience, apps in unique formats such as games and podcasts might appeal to kinesthetic and auditory learners.

Cost is an important factor to consider when discussing mobile apps for students and residents. Given the rising prices of board examinations and preparation materials, supplementary study tools should not carry an exorbitant price tag. We therefore limited our evaluation to apps that were free or cost less than $5 to download. Even so, subscriptions and other in-app purchases were an obstacle in one-third of apps, ranging from $4.99 to unlock additional content in Rash Decisions to $69.99 to access most topics in Fitzpatrick’s Color Atlas. The highest-rated app in our study, MyDermPath+, previously cost $19.99 to download but became free through a grant from the Sulzberger Foundation.12 An initial investment in developing quality dermatology education apps might therefore pay off in the end.

To evaluate the apps from the perspective of this study’s target audience, 2 medical students (one in the preclinical stage and the other in the clinical stage of medical education) and a dermatology resident graded them. This type of study has limitations, including evaluators’ differing learning styles, which might influence which apps they found most useful to their education. Interestingly, some apps earned a higher resident score than student score. In particular, RightSite (a reference that helps with anatomically correct labeling) and Mohs Surgery Appropriate Use Criteria (a clinical decision support tool for determining whether to perform Mohs surgery) each had a 3-point discrepancy (data not shown). A resident might benefit from these practical apps in day-to-day practice, whereas a student would be less likely to find them useful as learning tools.

Still, by defining adequate teaching value through specific categories (educational objectives, content, accuracy, design, and conflict of interest), we attempted to minimize the effect of personal preference on grading. Although we acknowledge a degree of subjectivity, we found that using a previously published rubric with defined criteria was crucial to minimizing bias.

Conclusion

Further studies should evaluate additional apps available for Apple’s iPad (tablet), as well as those on other operating systems, including Google’s Android. To ensure that mobile apps are adequate educational tools, they should, at a minimum, be peer reviewed before publication or before widespread use by current and future providers. To maximize free access to valuable resources available in the palm of their hand, students and trainees should contact the library at their academic institution.

1. Craddock MF, Blondin HM, Youssef MJ, et al. Online education improves pediatric residents' understanding of atopic dermatitis. Pediatr Dermatol. 2018;35:64-69.

2. Lacy FA, Coman GC, Holliday AC, et al. Assessment of smartphone application for teaching intuitive visual diagnosis of melanoma. JAMA Dermatol. 2018;154:730-731.

3. Flaten HK, St Claire C, Schlager E, et al. Growth of mobile applications in dermatology--2017 update. Dermatol Online J. 2018;24:13.

4. Liu R-F, Wang F-Y, Yen H, et al. A new mobile learning module using smartphone wallpapers in identification of medical fungi for medical students and residents. Int J Dermatol. 2018;57:458-462.

5. Shahriari N, Grant-Kels J, Murphy MJ. Dermatopathology education in the era of modern technology. J Cutan Pathol. 2017;44:763-771.

6. Masud A, Shafi S, Rao BK. Mobile medical apps for patient education: a graded review of available dermatology apps. Cutis. 2018;101:141-144.

7. Mercer JM. An array of mobile apps for dermatologists. J Cutan Med Surg. 2014;18:295-297.

8. Tongdee E, Markowitz O. Mobile app rankings in dermatology. Cutis. 2018;102:252-256.

9. Kirby JS, Adgerson CN, Anderson BE. A survey of dermatology resident education in cosmetic procedures. J Am Acad Dermatol. 2013;68:e23-e28.

10. Waldman A, Sobanko JF, Alam M. Practice and educational gaps in cosmetic dermatologic surgery. Dermatol Clin. 2016;34:341-346.

11. Nielson CB, Harb JN, Motaparthi K. Education in cosmetic procedural dermatology: resident experiences and perceptions. J Clin Aesthet Dermatol. 2019;12:E70-E72.

12. Hanna MG, Parwani AV, Pantanowitz L, et al. Smartphone applications: a contemporary resource for dermatopathology. J Pathol Inform. 2015;6:44.

With today’s technology, it is easier than ever to access web-based tools that enrich traditional dermatology education. The literature supports the use of these innovative platforms to enhance learning at the student and trainee levels. A controlled study of pediatric residents showed that online modules effectively supplemented clinical experience with atopic dermatitis.1 In a randomized diagnostic study of medical students, practice with an image-based web application (app) that teaches rapid recognition of melanoma proved more effective than learning a rule-based algorithm.2 Given the visual nature of dermatology, pattern recognition is an essential skill that is fostered through experience and is only made more accessible with technology.

With the added benefit of convenience and accessibility, mobile apps can supplement experiential learning. Mirroring the overall growth of mobile apps, the number of available dermatology apps has increased.3 Dermatology mobile apps serve purposes ranging from quick reference tools to comprehensive modules, journals, and question banks. At an academic hospital in Taiwan, both nondermatology and dermatology trainees’ examination performance improved after 3 weeks of using a smartphone-based wallpaper learning module displaying morphologic characteristics of fungi.4 With the expansion of virtual microscopy, mobile apps also have been created as a learning tool for dermatopathology, giving trainees the flexibility and autonomy to view slides on their own time.5 Nevertheless, the literature on dermatology mobile apps designed for the education of medical students and trainees is limited, demonstrating a need for further investigation.

Prior studies have reviewed dermatology apps for patients and practicing dermatologists.6-8 Herein, we focus on mobile apps targeting students and residents learning dermatology. General dermatology reference apps and educational aid apps have grown by 33% and 32%, respectively, from 2014 to 2017.3 As with any resource meant to educate future and current medical providers, there must be an objective review process in place to ensure accurate, unbiased, evidence-based teaching.

Well-organized, comprehensive information and a user-friendly interface are additional factors of importance when selecting an educational mobile app. When discussing supplemental resources, accessibility and affordability also are priorities given the high cost of a medical education at baseline. Overall, there is a need for a standardized method to evaluate the key factors of an educational mobile app that make it appropriate for this demographic. We conducted a search of mobile apps relating to dermatology education for students and residents.

Methods

We searched for publicly available mobile apps relating to dermatology education in the App Store (Apple Inc) from September to November 2019 using the search terms dermatology education, dermoscopy education, melanoma education, skin cancer education, psoriasis education, rosacea education, acne education, eczema education, dermal fillers education, and Mohs surgery education. We excluded apps that were not in English, were created for a conference, cost more than $5 to download, or did not include a specific dermatology education section. In this way, we hoped to evaluate apps that were relevant, accessible, and affordable.

We modeled our study after a review of patient education apps performed by Masud et al6 and utilized their quantified grading rubric (scale of 1 to 4). We found their established criteria—educational objectives, content, accuracy, design, and conflict of interest—to be equally applicable for evaluating apps designed for professional education.6 Each app earned a minimum of 1 point and a maximum of 4 points per criterion. One point was given if the app did not fulfill the criterion, 2 points for minimally fulfilling the criterion, 3 points for mostly fulfilling the criterion, and 4 points if the criterion was completely fulfilled. Two medical students (E.H. and N.C.)—one at the preclinical stage and the other at the clinical stage of medical education—reviewed the apps using the given rubric, then discussed and resolved any discrepancies in points assigned. A dermatology resident (M.A.) independently reviewed the apps using the given rubric.

The mean of the student score and the resident score was calculated for each category. The sum of the averages for each category was considered the final score for an app, determining its overall quality. Apps with a total score of 5 to 10 were considered poor and inadequate for education. A total score of 10.5 to 15 indicated that an app was somewhat adequate (ie, useful for education in some aspects but falling short in others). Apps that were considered adequate for education, across all or most criteria, received a total score ranging from 15.5 to 20.

Results

Our search generated 130 apps. After applying exclusion criteria, 42 apps were eligible for review. At the time of publication, 36 of these apps were still available. The possible range of scores based on the rubric was 5 to 20. The actual range of scores was 7 to 20. Of the 36 apps, 2 (5.6%) were poor, 16 (44.4%) were somewhat adequate, and 18 (50%) were adequate. Formats included primary resources, such as clinical decision support tools, journals, references, and a podcast (Table 1). Additionally, interactive learning tools included games, learning modules, and apps for self-evaluation (Table 2). Thirty apps covered general dermatology; others focused on skin cancer (n=5) and cosmetic dermatology (n=1). Regarding cost, 29 apps were free to download, whereas 7 charged a fee (mean price, $2.56).

Comment

In addition to the convenience of having an educational tool in their white-coat pocket, learners of dermatology have been shown to benefit from supplementing their curriculum with mobile apps, which sets the stage for formal integration of mobile apps into dermatology teaching in the future.8 Prior to widespread adoption, mobile apps must be evaluated for content and utility, starting with an objective rubric.

Without official scientific standards in place, it was unsurprising that only half of the dermatology education applications were classified as adequate in this study. Among the types of apps offered—clinical decision support tools, journals, references, podcast, games, learning modules, and self-evaluation—certain categories scored higher than others. App formats with the highest average score (16.5 out of 20) were journals and podcast.

One barrier to utilization of these apps was that a subscription to the journals and podcast was required to obtain access to all available content. Students and trainees can seek out library resources at their academic institutions to take advantage of journal subscriptions available to them at no additional cost. Dermatology residents can take advantage of their complimentary membership in the American Academy of Dermatology for a free subscription to AAD Dialogues in Dermatology (otherwise $179 annually for nonresident members and $320 annually for nonmembers).

On the other hand, learning module was the lowest-rated format (average score, 11.3 out of 20), with only Medical Student: Dermatology qualifying as adequate (total score, 16). This finding is worrisome given that students and residents might look to learning modules for quick targeted lessons on specific topics.

The lowest-scoring app, a clinical decision support tool called Naturelize, received a total score of 7. Although it listed the indications and contraindications for dermal filler types to be used in different locations on the face, there was a clear conflict of interest, oversimplified design, and little evidence-based education, mirroring the current state of cosmetic dermatology training in residency, in which trainees think they are inadequately prepared for aesthetic procedures and comparative effectiveness research is lacking.9-11

At the opposite end of the spectrum, MyDermPath+ was a reference app with a total score of 20. The app cited credible authors with a medical degree (MD) and had an easy-to-use, well-designed interface, including a reference guide, differential builder, and quiz for a range of topics within dermatology. As a free download without in-app purchases or advertisements, there was no evidence of conflict of interest. The position of a dermatopathology app as the top dermatology education mobile app might reflect an increased emphasis on dermatopathology education in residency as well as a transition to digitization of slides.5

The second-highest scoring apps (total score of 19 points) were Dermatology Database and VisualDx. Both were references covering a wide range of dermatology topics. Dermatology Database was a comprehensive search tool for diseases, drugs, procedures, and terms that was simple and entirely free to use but did not cite references. VisualDx, as its name suggests, offered quality clinical images, complete guides with references, and a unique differential builder. An annual subscription is $399.99, but the process to gain free access through a participating academic institution was simple.

Games were a unique mobile app format; however, 2 of 3 games scored in the somewhat adequate range. The game DiagnosUs, which tested users’ ability to differentiate skin cancer and psoriasis from dermatitis on clinical images, would benefit from more comprehensive content as well as professional verification of true diagnoses, which earned the app 2 points in both the content and accuracy categories. The Unusual Suspects tested the ABCDE algorithm in a short learning module, followed by a simple game that involved identification of melanoma in a timed setting. Although the design was novel and interactive, the game was limited to the same 5 melanoma tumors overlaid on pictures of normal skin. The narrow scope earned 1 point for content, the redundancy in the game earned 3 points for design, and the lack of real clinical images earned 2 points for educational objectives. Although game-format mobile apps have the capability to challenge the user’s knowledge with a built-in feedback or reward system, improvements should be made to ensure that apps are equally educational as they are engaging.

AAD Dialogues in Dermatology was the only app in the form of a podcast and provided expert interviews along with disclosures, transcripts, commentary, and references. More than half the content in the app could not be accessed without a subscription, earning 2.5 points in the conflict of interest category. Additionally, several flaws resulted in a design score of 2.5, including inconsistent availability of transcripts, poor quality of sound on some episodes, difficulty distinguishing new episodes from those already played, and a glitch that removed the episode duration. Still, the app was a valuable and comprehensive resource, with clear objectives and cited references. With improvements in content, affordability, and user experience, apps in unique formats such as games and podcasts might appeal to kinesthetic and auditory learners.

An important factor to consider when discussing mobile apps for students and residents is cost. With rising prices of board examinations and preparation materials, supplementary study tools should not come with an exorbitant price tag. Therefore, we limited our evaluation to apps that were free or cost less than $5 to download. Even so, subscriptions and other in-app purchases were an obstacle in one-third of apps, ranging from $4.99 to unlock additional content in Rash Decisions to $69.99 to access most topics in Fitzpatrick’s Color Atlas. The highest-rated app in our study, MyDermPath+, historically cost $19.99 to download but became free with a grant from the Sulzberger Foundation.12 An initial investment to develop quality apps for the purpose of dermatology education might pay off in the end.

To evaluate the apps from the perspective of the target demographic of this study, 2 medical students—one in the preclinical stage and the other in the clinical stage of medical education—and a dermatology resident graded the apps. Certain limitations exist in this type of study, including differing learning styles, which might influence the types of apps that evaluators found most impactful to their education. Interestingly, some apps earned a higher resident score than student score. In particular, RightSite (a reference that helps with anatomically correct labeling) and Mohs Surgery Appropriate Use Criteria (a clinical decision support tool to determine whether to perform Mohs surgery) each had a 3-point discrepancy (data not shown). A resident might benefit from these practical apps in day-to-day practice, but a student would be less likely to find them useful as a learning tool.

Still, by defining adequate teaching value using specific categories of educational objectives, content, accuracy, design, and conflict of interest, we attempted to minimize the effect of personal preference on the grading process. Although we acknowledge a degree of subjectivity, we found that utilizing a previously published rubric with defined criteria was crucial in remaining unbiased.

Conclusion

Further studies should evaluate additional apps available on Apple’s iPad (tablet), as well as those on other operating systems, including Google’s Android. To ensure the existence of mobile apps as adequate education tools, they should be peer reviewed prior to publication or before widespread use by future and current providers at the minimum. To maximize free access to highly valuable resources available in the palm of their hand, students and trainees should contact the library at their academic institution.

With today’s technology, it is easier than ever to access web-based tools that enrich traditional dermatology education. The literature supports the use of these innovative platforms to enhance learning at the student and trainee levels. A controlled study of pediatric residents showed that online modules effectively supplemented clinical experience with atopic dermatitis.1 In a randomized diagnostic study of medical students, practice with an image-based web application (app) that teaches rapid recognition of melanoma proved more effective than learning a rule-based algorithm.2 Given the visual nature of dermatology, pattern recognition is an essential skill that is fostered through experience and is only made more accessible with technology.

With the added benefit of convenience and accessibility, mobile apps can supplement experiential learning. Mirroring the overall growth of mobile apps, the number of available dermatology apps has increased.3 Dermatology mobile apps serve purposes ranging from quick reference tools to comprehensive modules, journals, and question banks. At an academic hospital in Taiwan, both nondermatology and dermatology trainees’ examination performance improved after 3 weeks of using a smartphone-based wallpaper learning module displaying morphologic characteristics of fungi.4 With the expansion of virtual microscopy, mobile apps also have been created as a learning tool for dermatopathology, giving trainees the flexibility and autonomy to view slides on their own time.5 Nevertheless, the literature on dermatology mobile apps designed for the education of medical students and trainees is limited, demonstrating a need for further investigation.

Prior studies have reviewed dermatology apps for patients and practicing dermatologists.6-8 Herein, we focus on mobile apps targeting students and residents learning dermatology. General dermatology reference apps and educational aid apps have grown by 33% and 32%, respectively, from 2014 to 2017.3 As with any resource meant to educate future and current medical providers, there must be an objective review process in place to ensure accurate, unbiased, evidence-based teaching.

Well-organized, comprehensive information and a user-friendly interface are additional factors of importance when selecting an educational mobile app. When discussing supplemental resources, accessibility and affordability also are priorities given the high cost of a medical education at baseline. Overall, there is a need for a standardized method to evaluate the key factors of an educational mobile app that make it appropriate for this demographic. We conducted a search of mobile apps relating to dermatology education for students and residents.

Methods

We searched for publicly available mobile apps relating to dermatology education in the App Store (Apple Inc) from September to November 2019 using the search terms dermatology education, dermoscopy education, melanoma education, skin cancer education, psoriasis education, rosacea education, acne education, eczema education, dermal fillers education, and Mohs surgery education. We excluded apps that were not in English, were created for a conference, cost more than $5 to download, or did not include a specific dermatology education section. In this way, we hoped to evaluate apps that were relevant, accessible, and affordable.

We modeled our study after a review of patient education apps performed by Masud et al6 and utilized their quantified grading rubric (scale of 1 to 4). We found their established criteria—educational objectives, content, accuracy, design, and conflict of interest—to be equally applicable for evaluating apps designed for professional education.6 Each app earned a minimum of 1 point and a maximum of 4 points per criterion. One point was given if the app did not fulfill the criterion, 2 points for minimally fulfilling the criterion, 3 points for mostly fulfilling the criterion, and 4 points if the criterion was completely fulfilled. Two medical students (E.H. and N.C.)—one at the preclinical stage and the other at the clinical stage of medical education—reviewed the apps using the given rubric, then discussed and resolved any discrepancies in points assigned. A dermatology resident (M.A.) independently reviewed the apps using the given rubric.

The mean of the student score and the resident score was calculated for each category. The sum of the averages for each category was considered the final score for an app, determining its overall quality. Apps with a total score of 5 to 10 were considered poor and inadequate for education. A total score of 10.5 to 15 indicated that an app was somewhat adequate (ie, useful for education in some aspects but falling short in others). Apps that were considered adequate for education, across all or most criteria, received a total score ranging from 15.5 to 20.
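The grading arithmetic described above can be sketched in a few lines of Python. This is a minimal illustration, not the authors' tooling. It assumes that each rater's score for each criterion is an integer from 1 to 4 and that the "student score" is the consensus score the two students reached. The function names are hypothetical, and the example scores are invented for illustration; they are not study data.

```python
# Sketch of the grading arithmetic described in Methods (hypothetical helper names).
CRITERIA = (
    "educational objectives",
    "content",
    "accuracy",
    "design",
    "conflict of interest",
)


def total_score(student: dict[str, int], resident: dict[str, int]) -> float:
    """Sum over the five criteria of the mean of the student and resident scores."""
    for scores in (student, resident):
        for criterion in CRITERIA:
            if not 1 <= scores[criterion] <= 4:
                raise ValueError(f"{criterion}: score must be 1 to 4")
    return sum((student[c] + resident[c]) / 2 for c in CRITERIA)


def classify(total: float) -> str:
    """Map a total score (5 to 20) to the study's quality bands."""
    if not 5 <= total <= 20:
        raise ValueError("total must be between 5 and 20")
    if total <= 10:
        return "poor"
    if total <= 15:
        return "somewhat adequate"
    return "adequate"


# Invented example scores, not study data.
student = {c: 3 for c in CRITERIA}
resident = {c: 4 for c in CRITERIA}
total = total_score(student, resident)
print(total, classify(total))  # 17.5 adequate
```

Each per-criterion mean is a whole or half point, so totals change in steps of 0.5. As a result, the bands of 5 to 10, 10.5 to 15, and 15.5 to 20 cover every possible total without gaps.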

Results

Our search generated 130 apps. After applying exclusion criteria, 42 apps were eligible for review. At the time of publication, 36 of these apps were still available. The possible range of scores based on the rubric was 5 to 20. The actual range of scores was 7 to 20. Of the 36 apps, 2 (5.6%) were poor, 16 (44.4%) were somewhat adequate, and 18 (50%) were adequate. Formats included primary resources, such as clinical decision support tools, journals, references, and a podcast (Table 1). Additionally, interactive learning tools included games, learning modules, and apps for self-evaluation (Table 2). Thirty apps covered general dermatology; others focused on skin cancer (n=5) and cosmetic dermatology (n=1). Regarding cost, 29 apps were free to download, whereas 7 charged a fee (mean price, $2.56).
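The breakdowns reported above can be checked for internal consistency. The following sketch confirms that each breakdown accounts for the same 36 apps and recomputes the quality-band percentages. All counts come from the text; the helper name is hypothetical.

```python
def percentages(counts: dict[str, int]) -> dict[str, float]:
    """Return each category's share of the total, in percent, rounded to 1 decimal."""
    total = sum(counts.values())
    return {name: round(100 * n / total, 1) for name, n in counts.items()}


# Counts as reported in Results.
quality = {"poor": 2, "somewhat adequate": 16, "adequate": 18}
topic = {"general dermatology": 30, "skin cancer": 5, "cosmetic dermatology": 1}
price = {"free": 29, "paid": 7}

# Every breakdown should account for the same 36 apps.
assert sum(quality.values()) == sum(topic.values()) == sum(price.values()) == 36
print(percentages(quality))
# {'poor': 5.6, 'somewhat adequate': 44.4, 'adequate': 50.0}
```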

Comment

In addition to the convenience of having an educational tool in their white-coat pocket, learners of dermatology have been shown to benefit from supplementing their curriculum with mobile apps, which sets the stage for formal integration of mobile apps into dermatology teaching in the future.8 Prior to widespread adoption, mobile apps must be evaluated for content and utility, starting with an objective rubric.

Without official scientific standards in place, it was unsurprising that only half of the dermatology education applications were classified as adequate in this study. Among the types of apps offered—clinical decision support tools, journals, references, podcast, games, learning modules, and self-evaluation—certain categories scored higher than others. App formats with the highest average score (16.5 out of 20) were journals and podcast.

One barrier to utilization of these apps was that a subscription to the journals and podcast was required to obtain access to all available content. Students and trainees can seek out library resources at their academic institutions to take advantage of journal subscriptions available to them at no additional cost. Dermatology residents can take advantage of their complimentary membership in the American Academy of Dermatology for a free subscription to AAD Dialogues in Dermatology (otherwise $179 annually for nonresident members and $320 annually for nonmembers).

On the other hand, learning module was the lowest-rated format (average score, 11.3 out of 20), with only Medical Student: Dermatology qualifying as adequate (total score, 16). This finding is worrisome given that students and residents might look to learning modules for quick targeted lessons on specific topics.

The lowest-scoring app, a clinical decision support tool called Naturelize, received a total score of 7. Although it listed the indications and contraindications for dermal filler types to be used in different locations on the face, it had a clear conflict of interest, an oversimplified design, and little evidence-based education. These shortcomings mirror the current state of cosmetic dermatology training in residency, in which trainees feel inadequately prepared for aesthetic procedures and comparative effectiveness research is lacking.9-11

At the opposite end of the spectrum, MyDermPath+ was a reference app with a total score of 20. The app cited credible authors with a medical degree (MD) and had an easy-to-use, well-designed interface, including a reference guide, differential builder, and quiz for a range of topics within dermatology. Because it was a free download without in-app purchases or advertisements, there was no evidence of conflict of interest. The position of a dermatopathology app as the top dermatology education mobile app might reflect an increased emphasis on dermatopathology education in residency as well as a transition to digitization of slides.5

The second-highest scoring apps (total score of 19 points) were Dermatology Database and VisualDx. Both were references covering a wide range of dermatology topics. Dermatology Database was a comprehensive search tool for diseases, drugs, procedures, and terms that was simple and entirely free to use but did not cite references. VisualDx, as its name suggests, offered quality clinical images, complete guides with references, and a unique differential builder. An annual subscription is $399.99, but the process to gain free access through a participating academic institution was simple.

Games were a unique mobile app format; however, 2 of 3 games scored in the somewhat adequate range. The game DiagnosUs, which tested users’ ability to differentiate skin cancer and psoriasis from dermatitis on clinical images, would benefit from more comprehensive content as well as professional verification of true diagnoses, which earned the app 2 points in both the content and accuracy categories. The Unusual Suspects tested the ABCDE algorithm in a short learning module, followed by a simple game that involved identification of melanoma in a timed setting. Although the design was novel and interactive, the game was limited to the same 5 melanoma tumors overlaid on pictures of normal skin. The narrow scope earned 1 point for content, the redundancy in the game earned 3 points for design, and the lack of real clinical images earned 2 points for educational objectives. Although game-format mobile apps have the capability to challenge the user’s knowledge with a built-in feedback or reward system, improvements should be made to ensure that these apps are as educational as they are engaging.

AAD Dialogues in Dermatology was the only app in the form of a podcast and provided expert interviews along with disclosures, transcripts, commentary, and references. More than half the content in the app could not be accessed without a subscription, earning 2.5 points in the conflict of interest category. Additionally, several flaws resulted in a design score of 2.5, including inconsistent availability of transcripts, poor quality of sound on some episodes, difficulty distinguishing new episodes from those already played, and a glitch that removed the episode duration. Still, the app was a valuable and comprehensive resource, with clear objectives and cited references. With improvements in content, affordability, and user experience, apps in unique formats such as games and podcasts might appeal to kinesthetic and auditory learners.

An important factor to consider when discussing mobile apps for students and residents is cost. With rising prices of board examinations and preparation materials, supplementary study tools should not come with an exorbitant price tag. Therefore, we limited our evaluation to apps that were free or cost less than $5 to download. Even so, subscriptions and other in-app purchases were an obstacle in one-third of apps, ranging from $4.99 to unlock additional content in Rash Decisions to $69.99 to access most topics in Fitzpatrick’s Color Atlas. The highest-rated app in our study, MyDermPath+, historically cost $19.99 to download but became free with a grant from the Sulzberger Foundation.12 An initial investment to develop quality apps for the purpose of dermatology education might pay off in the end.

To evaluate the apps from the perspective of the target demographic of this study, 2 medical students—one in the preclinical stage and the other in the clinical stage of medical education—and a dermatology resident graded the apps. Certain limitations exist in this type of study, including differing learning styles, which might influence the types of apps that evaluators found most impactful to their education. Interestingly, some apps earned a higher resident score than student score. In particular, RightSite (a reference that helps with anatomically correct labeling) and Mohs Surgery Appropriate Use Criteria (a clinical decision support tool to determine whether to perform Mohs surgery) each had a 3-point discrepancy (data not shown). A resident might benefit from these practical apps in day-to-day practice, but a student would be less likely to find them useful as a learning tool.

Still, by defining adequate teaching value using specific categories of educational objectives, content, accuracy, design, and conflict of interest, we attempted to minimize the effect of personal preference on the grading process. Although we acknowledge a degree of subjectivity, we found that utilizing a previously published rubric with defined criteria was crucial in remaining unbiased.

Conclusion

Further studies should evaluate additional apps available on Apple’s iPad (tablet), as well as those on other operating systems, including Google’s Android. To ensure that mobile apps are adequate educational tools, they should, at minimum, be peer reviewed before publication or before widespread use by future and current providers. To maximize free access to highly valuable resources available in the palm of their hand, students and trainees should contact the library at their academic institution.

- Craddock MF, Blondin HM, Youssef MJ, et al. Online education improves pediatric residents' understanding of atopic dermatitis. Pediatr Dermatol. 2018;35:64-69.

- Lacy FA, Coman GC, Holliday AC, et al. Assessment of smartphone application for teaching intuitive visual diagnosis of melanoma. JAMA Dermatol. 2018;154:730-731.

- Flaten HK, St Claire C, Schlager E, et al. Growth of mobile applications in dermatology--2017 update. Dermatol Online J. 2018;24:13.

- Liu R-F, Wang F-Y, Yen H, et al. A new mobile learning module using smartphone wallpapers in identification of medical fungi for medical students and residents. Int J Dermatol. 2018;57:458-462.

- Shahriari N, Grant-Kels J, Murphy MJ. Dermatopathology education in the era of modern technology. J Cutan Pathol. 2017;44:763-771.

- Masud A, Shafi S, Rao BK. Mobile medical apps for patient education: a graded review of available dermatology apps. Cutis. 2018;101:141-144.

- Mercer JM. An array of mobile apps for dermatologists. J Cutan Med Surg. 2014;18:295-297.

- Tongdee E, Markowitz O. Mobile app rankings in dermatology. Cutis. 2018;102:252-256.

- Kirby JS, Adgerson CN, Anderson BE. A survey of dermatology resident education in cosmetic procedures. J Am Acad Dermatol. 2013;68:e23-e28.

- Waldman A, Sobanko JF, Alam M. Practice and educational gaps in cosmetic dermatologic surgery. Dermatol Clin. 2016;34:341-346.

- Nielson CB, Harb JN, Motaparthi K. Education in cosmetic procedural dermatology: resident experiences and perceptions. J Clin Aesthet Dermatol. 2019;12:E70-E72.

- Hanna MG, Parwani AV, Pantanowitz L, et al. Smartphone applications: a contemporary resource for dermatopathology. J Pathol Inform. 2015;6:44.

Practice Points

- Mobile applications (apps) are a convenient way to learn dermatology, but there is no objective method to assess their quality.

- To determine which apps are most useful for education, we performed a graded review of dermatology apps targeted to students and residents.

- By applying a rubric to 36 affordable apps, we identified 18 (50%) with adequate teaching value.

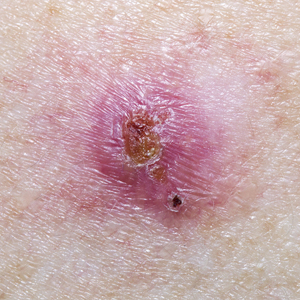

Reliability of Biopsy Margin Status for Basal Cell Carcinoma: A Retrospective Study

Basal cell carcinoma (BCC) is the most common type of skin cancer and is frequently encountered in both dermatology and primary care settings.1 When these neoplasms are biopsied to confirm the diagnosis, pathology reports may indicate positive or negative margin status. No guidelines exist for reporting biopsy margin status for BCC, resulting in varied reporting practices among dermatopathologists. Furthermore, the terminology used to describe margin status can be ambiguous and differs among pathologists; language such as “approaches the margin” or “margins appear free” may be used, with nonuniform interpretation between pathologists and providers, leading to variability in patient management.2

When interpreting a negative margin status on a pathology report, one must question whether the BCC extends beyond the margin in unexamined sections of the specimen, which could result from an irregular tumor growth pattern or from tissue processing. It has been estimated that less than 2% of the peripheral surgical margin is ultimately examined when serial cross-sections are prepared histologically (the bread loaf technique). However, this estimate depends on several variables, including the number and thickness of sections and the amount of tissue discarded during processing.3 Importantly, a false-negative margin report could lead both the clinician and the patient to believe that the neoplasm has been completely removed, which could have serious consequences.
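A simplified geometric model can illustrate why the examined fraction depends on the number and thickness of sections. This sketch rests on our own assumption and is not the method of the cited study. It treats each examined histologic section as a slice of fixed thickness and counts the peripheral margin as sampled only within those slices along the specimen's length. The parameter names and example values (five 4-µm sections across a 10-mm specimen) are hypothetical.

```python
def fraction_margin_examined(
    n_sections: int, section_thickness_um: float, specimen_length_mm: float
) -> float:
    """Simplified estimate of the share of the peripheral margin seen on slides.

    Assumes the margin is sampled only within the examined sections, each of
    the given thickness, laid out along the specimen length (a bread loaf model).
    """
    if n_sections < 0 or section_thickness_um <= 0 or specimen_length_mm <= 0:
        raise ValueError("section count must be non-negative and sizes positive")
    examined_um = n_sections * section_thickness_um
    specimen_um = specimen_length_mm * 1000  # convert mm to µm
    return min(1.0, examined_um / specimen_um)


# Hypothetical example: five 4-µm sections across a 10-mm specimen.
share = fraction_margin_examined(5, 4, 10)
print(f"{share:.1%}")  # 0.2%
```

Under this model, doubling the number of sections examined doubles the estimate. This is consistent with the point that laboratories cutting more levels sample more of the margin.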

Our study sought to determine the reliability of negative biopsy margin status for BCC. We examined BCC biopsy specimens initially determined to have uninvolved margins on routine tissue processing and determined the proportion with truly negative margins after complete tissue block sectioning of the initial biopsy specimen. We felt this technique was a more accurate measurement of true margin status than examination of a re-excision specimen. We also identified any factors that were predictive of positive true margins.

Methods

We conducted a retrospective study evaluating tissue samples collected at Geisinger Health System (Danville, Pennsylvania) from January to December 2016. Specimens were queried via the electronic database system at our institution (CoPath). We included BCC biopsy specimens with negative histologic margins on initial assessment that subsequently had block exhaust levels routinely ordered. These levels are cut every 100 to 150 µm, generating approximately 8 glass slides. We excluded all tumors that did not fit these criteria as well as those in patients younger than 18 years. Data were collected from specimen pathology reports and from the note of the corresponding clinician office visit in the institution’s electronic medical record (Epic). Appropriate statistical calculations were performed. The study was approved by our institutional review board, as is required for all research involving human participants; this review ensured proper handling and storage of patients’ protected health information.

Results

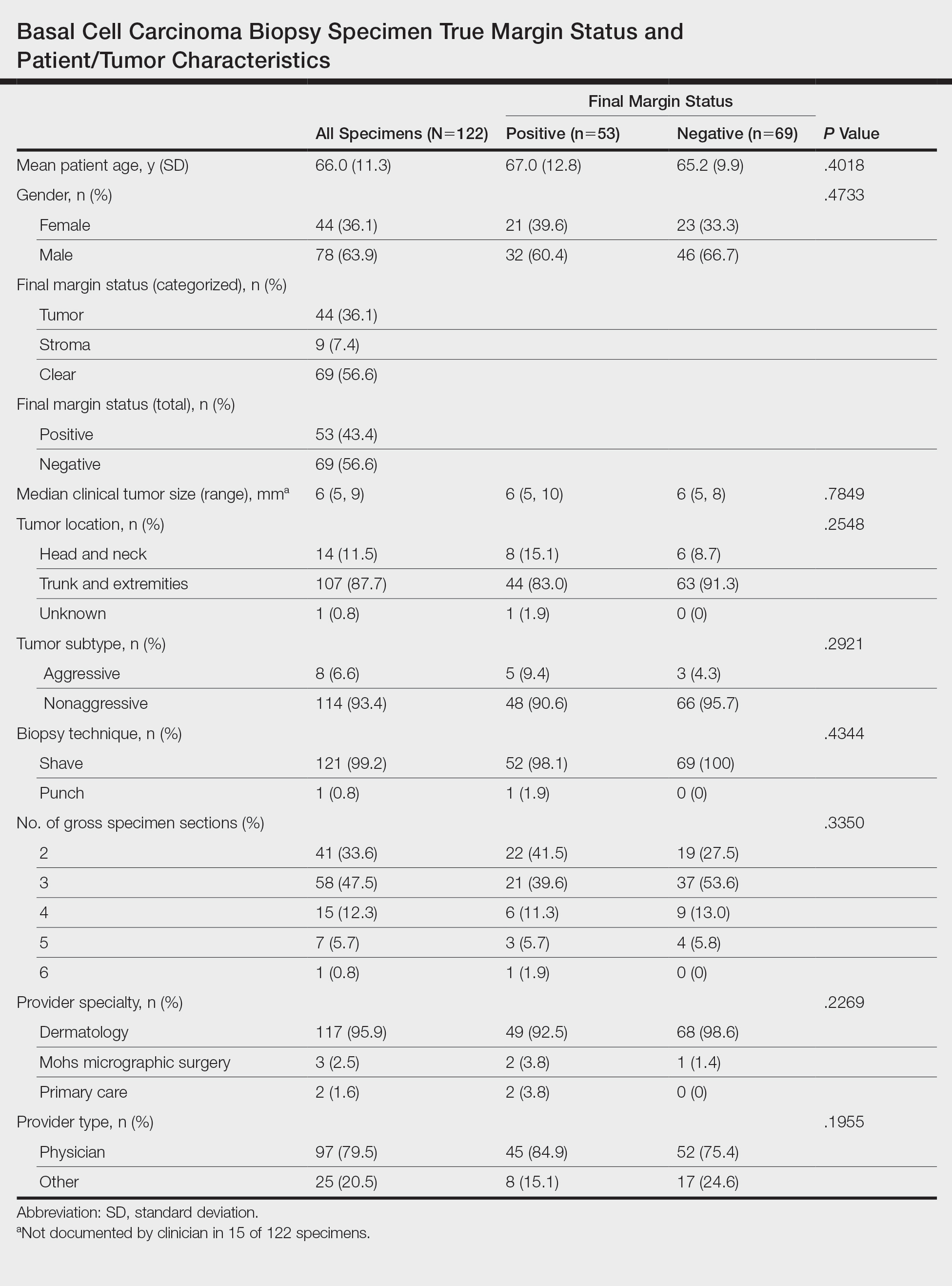

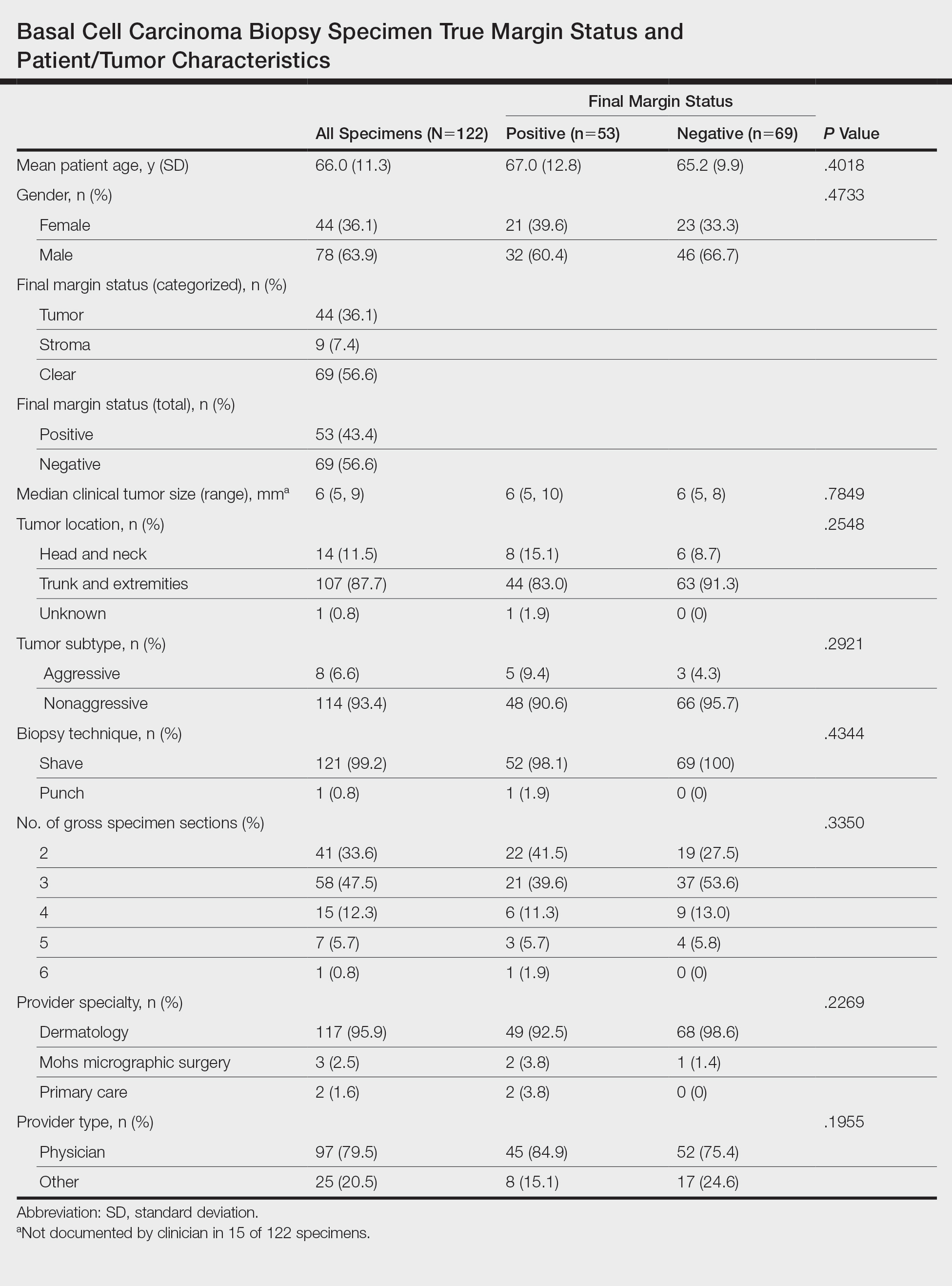

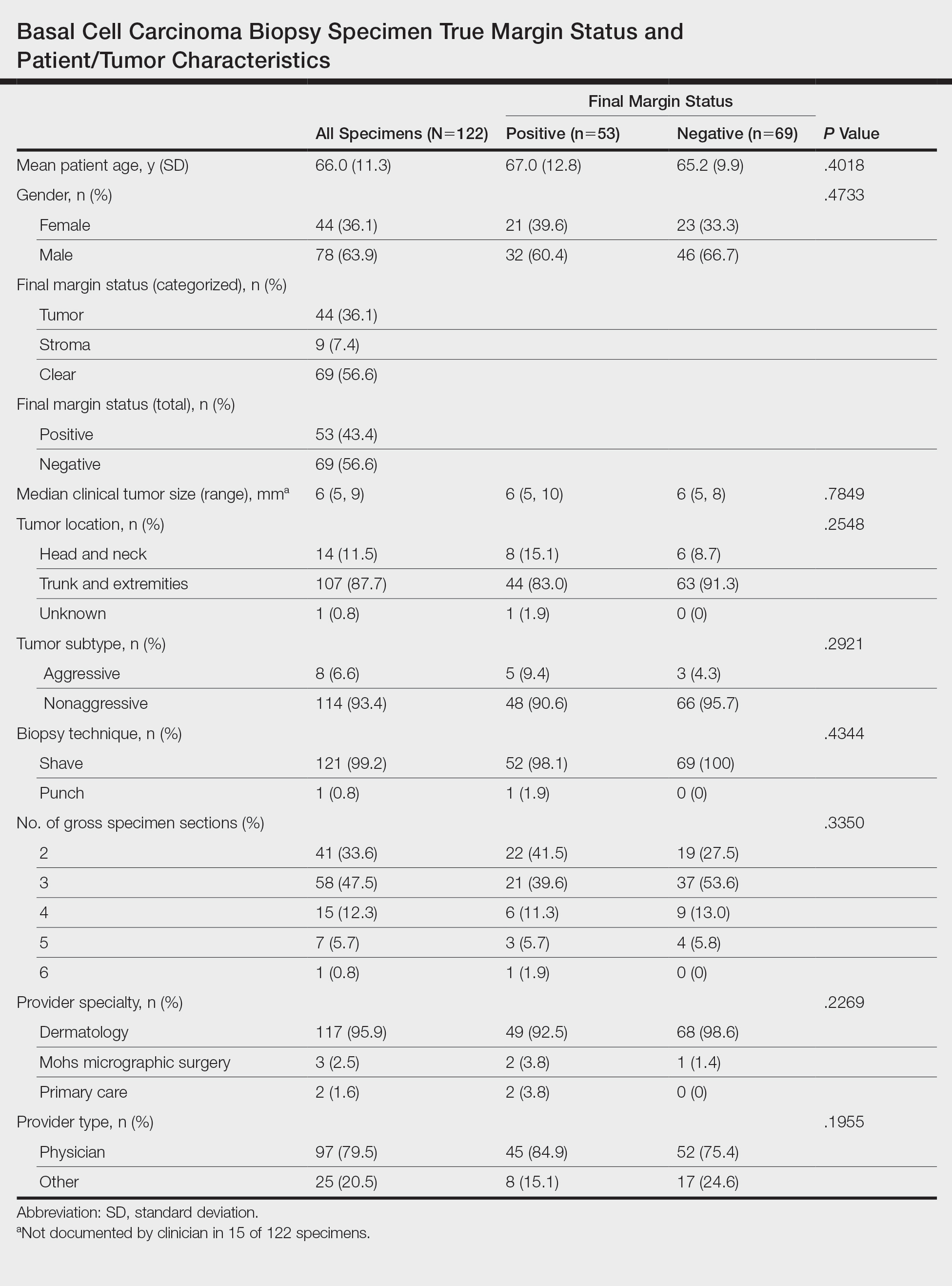

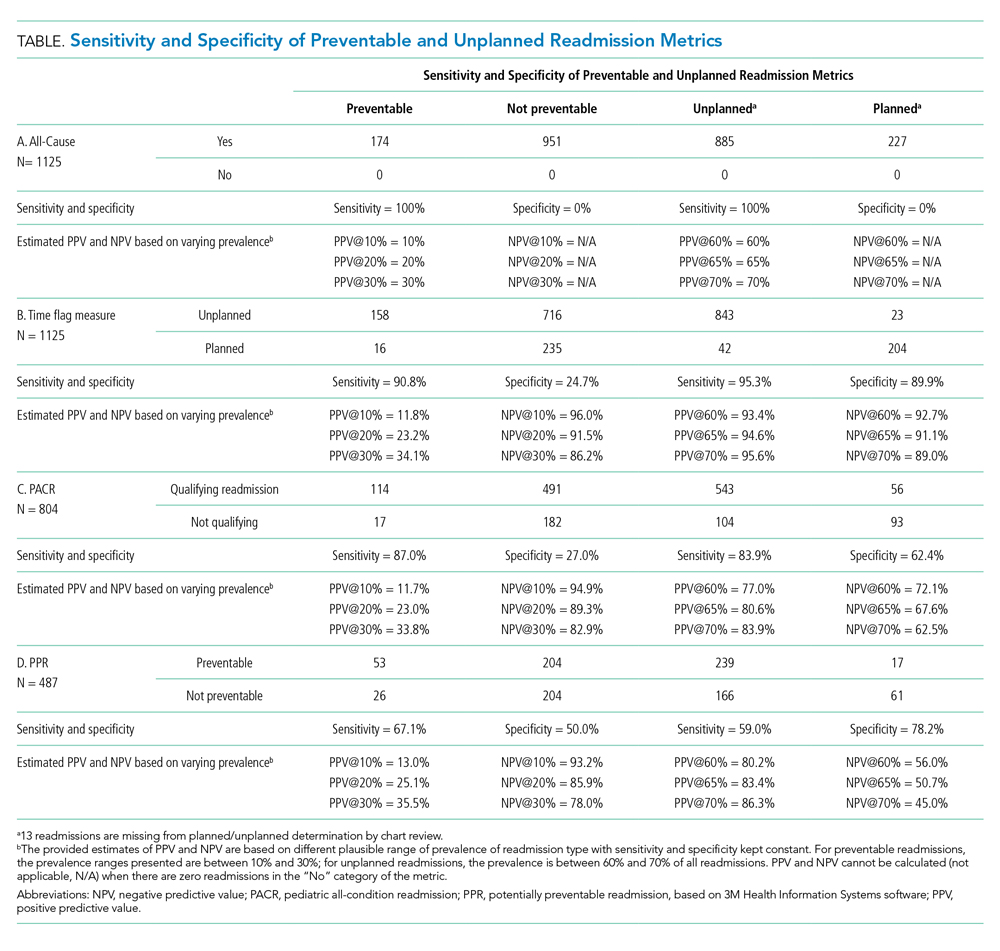

After appropriate exclusions, the search yielded 122 BCC biopsy specimens with negative initial margins from 104 patients: 121 (99.2%) shave biopsies and 1 (0.8%) punch biopsy. Of these 122 specimens, 53 (43.4%) had a truly positive margin, defined as the presence of tumor or stroma at the lateral or deep tissue edge after complete tissue block sectioning. The remaining 69 (56.6%) specimens had clear margins and were categorized as truly negative after complete tissue block sectioning. Specimens with positive and negative final margin status did not differ significantly with respect to patient age; gender; biopsy technique; number of gross specimen sections; or tumor characteristics, including location, size, and subtype (Table; P>.05).
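These counts can also be expressed as the negative predictive value (NPV) of routine margin assessment, using complete block exhaustion as the reference standard. This framing and the helper name are ours and are offered only as a sketch. The counts are those reported above.

```python
def margin_summary(true_negative: int, false_negative: int) -> dict[str, float]:
    """Summarize initially negative margins against complete block exhaustion.

    true_negative: margins still clear after block exhaustion
    false_negative: tumor or stroma found at a margin after block exhaustion
    """
    n = true_negative + false_negative
    if n == 0:
        raise ValueError("no specimens")
    return {
        "n": n,
        "npv_pct": round(100 * true_negative / n, 1),
        "false_negative_pct": round(100 * false_negative / n, 1),
    }


print(margin_summary(true_negative=69, false_negative=53))
# {'n': 122, 'npv_pct': 56.6, 'false_negative_pct': 43.4}
```

The study included only specimens with initially negative margins. This design therefore supports an NPV estimate but not sensitivity or specificity, which would also require specimens that were initially reported as positive.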

We also examined the type of treatment performed, which varied and included curettage, electrodesiccation and curettage, excision, and Mohs micrographic surgery. Clinicians, who were not made aware of the exhaust level protocol, chose not to pursue further treatment in 6 (4.9%) of the cases because of negative biopsy margins. Four (66.7%) of the 6 providers were physicians, and 2 (33.3%) were advanced practitioners. All of the providers practiced within the Department of Dermatology.

Comment

Our findings support those of prior smaller studies on this topic. A prospective study by Schnebelen et al4 examined 27 BCC biopsy specimens and found that 8 (30%) had been erroneously classified as negative on routine examination. Like ours, that study determined true margin status by assessing the margins at complete tissue block exhaustion.4 Willardson et al5 also demonstrated the poor predictive value of margin status, based on the presence of residual BCC in subsequent excisions: 34 (24%) of 143 cases with negative biopsy margins contained residual tumor in the corresponding excision.5
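The following sketch places the three proportions side by side using the counts quoted in this section. One caveat applies: Willardson et al measured residual tumor in re-excisions rather than at block exhaustion, so their rate is not strictly comparable with the other two. The helper name is hypothetical.

```python
# (study, initially negative specimens, later found positive), as quoted in the text.
STUDIES = [
    ("Schnebelen et al", 27, 8),
    ("Willardson et al", 143, 34),
    ("Present study", 122, 53),
]


def false_negative_rate(n_negative: int, n_positive_later: int) -> float:
    """Percentage of initially negative biopsies later shown to have tumor."""
    return 100 * n_positive_later / n_negative


for name, n, positive in STUDIES:
    print(f"{name}: {positive}/{n} = {false_negative_rate(n, positive):.0f}%")
# Schnebelen et al: 8/27 = 30%
# Willardson et al: 34/143 = 24%
# Present study: 53/122 = 43%
```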

Our study revealed that almost half of BCC biopsy specimens that had negative histologic margins with routine sectioning had truly positive margins on complete block exhaustion. This finding was independent of multiple factors, including tumor subtype, indicating that even nonaggressive tumors are prone to false-negative margin reports. We also found that reports of negative margins persuaded some clinicians to forgo definitive treatment. This study serves to remind clinicians of the limitations of margin assessment and provides impetus for dermatopathologists to consider modifying how margin status is reported.

Limitations of this study include a small number of cases and limited generalizability. Institutions that routinely examine more levels of each biopsy specimen may be less likely to erroneously categorize a positive margin as negative. Furthermore, despite exhausting the tissue block, we still may have underestimated the number of cases with truly positive margins, as this method inherently does not allow for complete margin examination.

Acknowledgments

We thank the Geisinger Department of Dermatopathology and the Geisinger Biostatistics & Research Data Core (Danville, Pennsylvania) for their assistance with our project.

- Lukowiak TM, Aizman L, Perz A, et al. Association of age, sex, race, and geographic region with variation of the ratio of basal cell to squamous cell carcinomas in the United States. JAMA Dermatol. 2020;156:1149-1276.

- Abide JM, Nahai F, Bennett RG. The meaning of surgical margins. Plast Reconstr Surg. 1984;73:492-497.

- Kimyai-Asadi A, Goldberg LH, Jih MH. Accuracy of serial transverse cross-sections in detecting residual basal cell carcinoma at the surgical margins of an elliptical excision specimen. J Am Acad Dermatol. 2005;53:469-473.

- Schnebelen AM, Gardner JM, Shalin SC. Margin status in shave biopsies of nonmelanoma skin cancers: is it worth reporting? Arch Pathol Lab Med. 2016;140:678-681.

- Willardson HB, Lombardo J, Raines M, et al. Predictive value of basal cell carcinoma biopsies with negative margins: a retrospective cohort study. J Am Acad Dermatol. 2018;79:42-46.

Basal cell carcinoma (BCC) is the most common type of skin cancer frequently encountered in both dermatology and primary care settings.1 When biopsies of these neoplasms are performed to confirm the diagnosis, pathology reports may indicate positive or negative margin status. No guidelines exist for reporting biopsy margin status for BCC, resulting in varied reporting practices among dermatopathologists. Furthermore, the terminology used to describe margin status can be ambiguous and differs among pathologists; language such as “approaches the margin” or “margins appear free” may be used, with nonuniform interpretation between pathologists and providers, leading to variability in patient management.2

When interpreting a negative margin status on a pathology report, one must question if the BCC extends beyond the margin in unexamined sections of the specimen, which could be the result of an irregular tumor growth pattern or tissue processing. It has been estimated that less than 2% of the peripheral surgical margin is ultimately examined when serial cross-sections are prepared histologically (the bread loaf technique). However, this estimation would depend on several variables, including the number and thickness of sections and the amount of tissue discarded during processing.3 Importantly, reports of a false-negative margin could lead both the clinician and patient to believe that the neoplasm has been completely removed, which could have serious consequences.

Our study sought to determine the reliability of negative biopsy margin status for BCC. We examined BCC biopsy specimens initially determined to have uninvolved margins on routine tissue processing and determined the proportion with truly negative margins after complete tissue block sectioning of the initial biopsy specimen. We felt this technique was a more accurate measurement of true margin status than examination of a re-excision specimen. We also identified any factors that were predictive of positive true margins.

Methods

We conducted a retrospective study evaluating tissue samples collected at Geisinger Health System (Danville, Pennsylvania) from January to December 2016. Specimens were queried via the electronic database system at our institution (CoPath). We included BCC biopsy specimens with negative histologic margins on initial assessment that subsequently had block exhaust levels routinely ordered. These levels are cut every 100 to 150 µm, generating approximately 8 glass slides. We excluded all tumors that did not fit these criteria as well as those in patients younger than 18 years. Data collection was performed utilizing specimen pathology reports in addition to the note from the corresponding clinician office visit from the institution’s electronic medical record (Epic). Appropriate statistical calculations were performed. This study was approved by an institutional review board at our institution, which is required for all research involving human participants. This served to ensure the proper review and storage of patients’ protected health information.

Results

The search yielded a total of 122 specimens from 104 patients after appropriate exclusions. We examined a total of 122 BCC biopsy specimens with negative initial margins: 121 (99.2%) shave biopsies and 1 (0.8%) punch biopsy. Of 122 specimens with negative initial margins, 53 (43.4%) were found to have a truly positive margin based on the presence of either tumor or stroma at the lateral or deep tissue edge after complete tissue block sectioning. Sixty-nine (56.6%) specimens had clear margins and were categorized as truly negative after complete tissue block sectioning. Specimens with positive and negative final margin status did not differ significantly with respect to patient age; gender; biopsy technique; number of gross specimen sections; or tumor characteristics, including location, size, and subtype (Table)(P>.05).

We also examined the type of treatment performed, which varied and included curettage, electrodesiccation and curettage, excision, and Mohs micrographic surgery. Clinicians, who were not made aware of the exhaust level protocol, chose not to pursue further treatment in 6 (4.9%) of the cases because of negative biopsy margins. Four (66.7%) of the 6 providers were physicians, and 2 (33.3%) were advanced practitioners. All of the providers practiced within the Department of Dermatology.

Comment

Our findings support prior smaller studies investigating this topic. A prospective study by Schnebelen et al4 examined 27 BCC biopsy specimens and found that 8 (30%) were erroneously classified as negative on routine examination. This study similarly determined true margin status by assessing the margins at complete tissue block exhaustion.4 Willardson et al5 also demonstrated the poor predictive value of margin status based on the presence of residual BCC in subsequent excisions. They found that 34 (24%) of 143 cases with negative biopsy margins contained residual tumor in the corresponding excision.5

Our study revealed that almost half of BCC biopsy specimens that had negative histologic margins with routine sectioning had truly positive margins on complete block exhaustion. This finding was independent of multiple factors, including tumor subtype, indicating that even nonaggressive tumors are prone to false-negative margin reports. We also found that reports of negative margins persuaded some clinicians to forgo definitive treatment. This study serves to remind clinicians of the limitations of margin assessment and provides impetus for dermatopathologists to consider modifying how margin status is reported.

Limitations of this study include a small number of cases and limited generalizability. Institutions that routinely examine more levels of each biopsy specimen may be less likely to erroneously categorize a positive margin as negative. Furthermore, despite exhausting the tissue block, we still may have underestimated the number of cases with truly positive margins, as this method inherently does not allow for complete margin examination.

Acknowledgments

We thank the Geisinger Department of Dermatopathology and the Geisinger Biostatistics & Research Data Core (Danville, Pennsylvania) for their assistance with our project.

Basal cell carcinoma (BCC) is the most common type of skin cancer frequently encountered in both dermatology and primary care settings.1 When biopsies of these neoplasms are performed to confirm the diagnosis, pathology reports may indicate positive or negative margin status. No guidelines exist for reporting biopsy margin status for BCC, resulting in varied reporting practices among dermatopathologists. Furthermore, the terminology used to describe margin status can be ambiguous and differs among pathologists; language such as “approaches the margin” or “margins appear free” may be used, with nonuniform interpretation between pathologists and providers, leading to variability in patient management.2

When interpreting a negative margin status on a pathology report, one must question if the BCC extends beyond the margin in unexamined sections of the specimen, which could be the result of an irregular tumor growth pattern or tissue processing. It has been estimated that less than 2% of the peripheral surgical margin is ultimately examined when serial cross-sections are prepared histologically (the bread loaf technique). However, this estimation would depend on several variables, including the number and thickness of sections and the amount of tissue discarded during processing.3 Importantly, reports of a false-negative margin could lead both the clinician and patient to believe that the neoplasm has been completely removed, which could have serious consequences.

Our study sought to determine the reliability of negative biopsy margin status for BCC. We examined BCC biopsy specimens initially determined to have uninvolved margins on routine tissue processing and determined the proportion with truly negative margins after complete tissue block sectioning of the initial biopsy specimen. We felt this technique was a more accurate measurement of true margin status than examination of a re-excision specimen. We also identified any factors that were predictive of positive true margins.

Methods

We conducted a retrospective study evaluating tissue samples collected at Geisinger Health System (Danville, Pennsylvania) from January to December 2016. Specimens were queried via the electronic database system at our institution (CoPath). We included BCC biopsy specimens with negative histologic margins on initial assessment that subsequently had block exhaust levels routinely ordered. These levels are cut every 100 to 150 µm, generating approximately 8 glass slides. We excluded all tumors that did not fit these criteria as well as those in patients younger than 18 years. Data collection was performed utilizing specimen pathology reports in addition to the note from the corresponding clinician office visit from the institution’s electronic medical record (Epic). Appropriate statistical calculations were performed. This study was approved by an institutional review board at our institution, which is required for all research involving human participants. This served to ensure the proper review and storage of patients’ protected health information.

Results

The search yielded a total of 122 specimens from 104 patients after appropriate exclusions. We examined a total of 122 BCC biopsy specimens with negative initial margins: 121 (99.2%) shave biopsies and 1 (0.8%) punch biopsy. Of 122 specimens with negative initial margins, 53 (43.4%) were found to have a truly positive margin based on the presence of either tumor or stroma at the lateral or deep tissue edge after complete tissue block sectioning. Sixty-nine (56.6%) specimens had clear margins and were categorized as truly negative after complete tissue block sectioning. Specimens with positive and negative final margin status did not differ significantly with respect to patient age; gender; biopsy technique; number of gross specimen sections; or tumor characteristics, including location, size, and subtype (Table)(P>.05).

We also examined the type of treatment performed, which varied and included curettage, electrodesiccation and curettage, excision, and Mohs micrographic surgery. Clinicians, who were not made aware of the exhaust level protocol, chose not to pursue further treatment in 6 (4.9%) of the cases because of negative biopsy margins. Four (66.7%) of the 6 providers were physicians, and 2 (33.3%) were advanced practitioners. All of the providers practiced within the Department of Dermatology.

Comment

Our findings support prior smaller studies investigating this topic. A prospective study by Schnebelen et al4 examined 27 BCC biopsy specimens and found that 8 (30%) were erroneously classified as negative on routine examination. This study similarly determined true margin status by assessing the margins at complete tissue block exhaustion.4 Willardson et al5 also demonstrated the poor predictive value of margin status based on the presence of residual BCC in subsequent excisions. They found that 34 (24%) of 143 cases with negative biopsy margins contained residual tumor in the corresponding excision.5
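To put the proportions compared above in perspective, the sketch below computes each study's proportion from the counts given in the text and attaches a Wilson 95% score interval. The interval method is our illustrative choice, not an analysis reported by any of the authors. The Willardson count also reflects residual tumor on re-excision rather than block exhaustion, so the three figures are not strictly comparable.

```python
import math


def wilson_interval(successes: int, n: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval for a binomial proportion (about 95% when z = 1.96)."""
    if n <= 0:
        raise ValueError("n must be positive")
    p = successes / n
    denom = 1 + z**2 / n
    center = (p + z**2 / (2 * n)) / denom
    half_width = z * math.sqrt(p * (1 - p) / n + z**2 / (4 * n**2)) / denom
    return center - half_width, center + half_width


# Counts as reported in the text: (cases with positive true margin or residual tumor, negative-margin cases)
studies = {
    "Current study (block exhaustion)": (53, 122),
    "Schnebelen et al (block exhaustion)": (8, 27),
    "Willardson et al (re-excision)": (34, 143),
}

for name, (k, n) in studies.items():
    lo, hi = wilson_interval(k, n)
    print(f"{name}: {k}/{n} = {k / n:.1%} (95% CI {lo:.1%} to {hi:.1%})")
```

With only 27 specimens, the Schnebelen interval is much wider than the others, which is one concrete way to read the "small number of cases" limitation.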

Our study revealed that almost half of BCC biopsy specimens that had negative histologic margins with routine sectioning had truly positive margins on complete block exhaustion. This finding was independent of multiple factors, including tumor subtype, indicating that even nonaggressive tumors are prone to false-negative margin reports. We also found that reports of negative margins persuaded some clinicians to forgo definitive treatment. This study serves to remind clinicians of the limitations of margin assessment and provides impetus for dermatopathologists to consider modifying how margin status is reported.

Limitations of this study include a small number of cases and limited generalizability. Institutions that routinely examine more levels of each biopsy specimen may be less likely to erroneously categorize a positive margin as negative. Furthermore, despite exhausting the tissue block, we still may have underestimated the number of cases with truly positive margins, as this method inherently does not allow for complete margin examination.

Acknowledgments

We thank the Geisinger Department of Dermatopathology and the Geisinger Biostatistics & Research Data Core (Danville, Pennsylvania) for their assistance with our project.

- Lukowiak TM, Aizman L, Perz A, et al. Association of age, sex, race, and geographic region with variation of the ratio of basal cell to squamous cell carcinomas in the United States. JAMA Dermatol. 2020;156:1149-1276.

- Abide JM, Nahai F, Bennett RG. The meaning of surgical margins. Plast Reconstr Surg. 1984;73:492-497.

- Kimyai-Asadi A, Goldberg LH, Jih MH. Accuracy of serial transverse cross-sections in detecting residual basal cell carcinoma at the surgical margins of an elliptical excision specimen. J Am Acad Dermatol. 2005;53:469-473.

- Schnebelen AM, Gardner JM, Shalin SC. Margin status in shave biopsies of nonmelanoma skin cancers: is it worth reporting? Arch Pathol Lab Med. 2016;140:678-681.

- Willardson HB, Lombardo J, Raines M, et al. Predictive value of basal cell carcinoma biopsies with negative margins: a retrospective cohort study. J Am Acad Dermatol. 2018;79:42-46.

Practice Points

- Clinicians must recognize the limitations of margin assessment of biopsy specimens and not rely on margin status to dictate treatment.

- Dermatopathologists should consider modifying how margin status is reported, either by omitting it or clarifying its limitations on the pathology report.

Reducing Inappropriate Laboratory Testing in the Hospital Setting: How Low Can We Go?

From the University of Toronto (Dr. Basuita, Corey L. Kamen, and Dr. Soong) and Sinai Health System (Corey L. Kamen, Cheryl Ethier, and Dr. Soong), Toronto, Ontario, Canada. Co-first authors are Manpreet Basuita, MD, and Corey L. Kamen, BSc.

Abstract

- Objective: Routine laboratory testing is common among medical inpatients; however, when ordered inappropriately, testing can represent low-value care. We examined the impact of an evidence-based intervention bundle on utilization.

- Participants/setting: This prospective cohort study took place at a tertiary academic medical center and included 6424 patients admitted to the general internal medicine service between April 2016 and March 2018.

- Intervention: An intervention bundle, whose first components were implemented in July 2016, included computer order entry restrictions on repetitive laboratory testing, education, and audit-feedback.

- Measures: Data were extracted from the hospital electronic health record. The primary outcome was the number of routine blood tests (complete blood count, creatinine, and electrolytes) ordered per inpatient day.

- Analysis: Descriptive statistics were calculated for demographic variables. We used statistical process control charts to compare the baseline period (April 2016-June 2017) and the intervention period (July 2017-March 2018) for the primary outcome.

- Results: The mean number of combined routine laboratory tests ordered per inpatient day decreased from 1.19 (SD, 0.21) to 1.11 (SD, 0.05), a relative reduction of 6.7% (P < 0.0001). Mean cost per case related to laboratory tests decreased from $17.24 in the pre-intervention period to $16.17 in the post-intervention period (relative reduction of 6.2%). This resulted in savings of $26,851 in the intervention year.

- Conclusion: A laboratory intervention bundle was associated with small reductions in testing and costs. A routine test performed less than once per inpatient day may not be clinically appropriate or possible.

Keywords: utilization; clinical costs; quality improvement; QI intervention; internal medicine; inpatient.

Routine laboratory blood testing is a commonly used diagnostic tool that physicians rely on to provide patient care. Although routine blood testing represents less than 5% of most hospital budgets, its routine use and physicians’ over-reliance on it make it a target of cost-reduction efforts.1-3 A variety of interventions have been proposed to reduce inappropriate laboratory testing, with varying results.1,4-6 Successful interventions include providing physicians with fee data for ordered laboratory tests, unbundling panels of tests, and multicomponent interventions.6 We conducted a multifaceted quality improvement study to develop and promote interventions that encourage appropriate blood test ordering practices.

Methods

Setting

This prospective cohort study took place at Mount Sinai Hospital, a 443-bed academic hospital affiliated with the University of Toronto, where more than 2400 learners rotate through annually. The study was approved by the Mount Sinai Hospital Research Ethics Board.

Participants

We included all inpatient admissions to the general internal medicine service between April 2016 and March 2018. We excluded admissions with a length of stay (LOS) longer than 365 days and admissions to a critical care unit. For patients admitted more than once, each admission was counted as a separate hospital inpatient visit.
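The inclusion and exclusion rules above can be expressed as a simple filter over admission records. This is a hypothetical sketch: the `Admission` fields, the "GIM" service code, and the exact calendar bounds chosen for "April 2016" and "March 2018" are our assumptions, not the study's actual data model.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class Admission:
    # Hypothetical record layout; field names and the "GIM" service code are illustrative.
    patient_id: str
    service: str
    admit_date: date
    los_days: float
    critical_care: bool


STUDY_START = date(2016, 4, 1)  # assumed reading of "April 2016"
STUDY_END = date(2018, 3, 31)   # assumed reading of "March 2018"


def eligible(a: Admission) -> bool:
    """Include GIM admissions in the study window; exclude LOS > 365 days or critical care."""
    return (
        a.service == "GIM"
        and STUDY_START <= a.admit_date <= STUDY_END
        and a.los_days <= 365
        and not a.critical_care
    )


admissions = [
    Admission("p1", "GIM", date(2016, 5, 3), 4.0, False),
    Admission("p1", "GIM", date(2017, 1, 10), 2.5, False),   # readmission: kept as its own visit
    Admission("p2", "GIM", date(2017, 2, 1), 400.0, False),  # excluded: LOS > 365 days
    Admission("p3", "GIM", date(2017, 3, 15), 6.0, True),    # excluded: critical care unit
]

cohort = [a for a in admissions if eligible(a)]
print(len(cohort), "visits from", len({a.patient_id for a in cohort}), "patient(s)")
```

Because each admission is a separate row, a readmitted patient contributes two visits to the cohort while counting once among unique patients.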

Intervention

Based on internal data, we targeted the 3 most frequently ordered routine blood tests: complete blood count (CBC), creatinine, and electrolytes. Trainee interviews revealed that habit, bundled order sets, and fear of “missing something” contributed to inappropriate routine blood test ordering. Based on these root causes, we used the Model for Improvement to iteratively develop a multimodal intervention that began in July 2016.7,8 This included a change to the computerized provider order entry (CPOE) system to nudge clinicians toward a restrictive ordering strategy by replacing the “Daily x3” blood test frequency with a “Daily x1” option on the pick list of order options. Clinicians could still order daily routine blood tests for any specified duration but had to do so by manually changing the default setting within the CPOE.

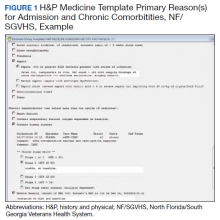

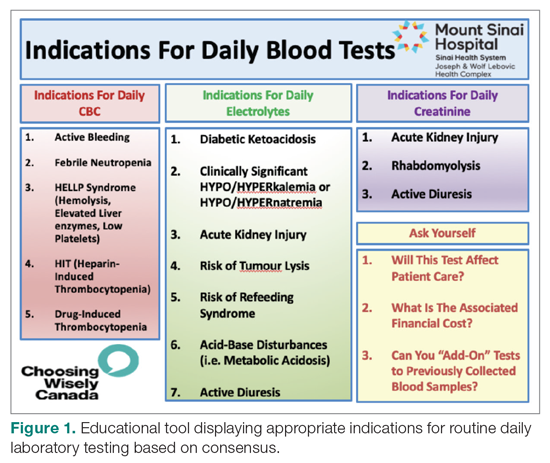

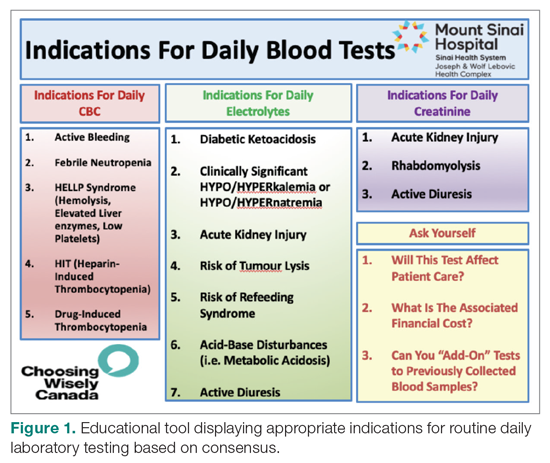

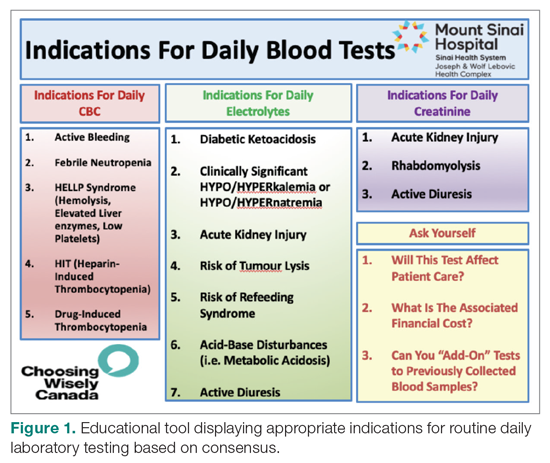

From July 2017 to March 2018, the research team educated residents on appropriate laboratory test ordering and provided audit and feedback data to the clinicians. Diagnostic uncertainty was addressed in teaching sessions. Attending physicians were surveyed on appropriate indications for daily laboratory testing for each of CBC, electrolytes, and creatinine. Appropriate indications (Figure 1) were displayed in visible clinical areas and incorporated into teaching sessions.9

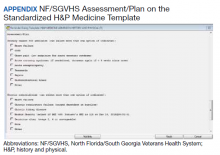

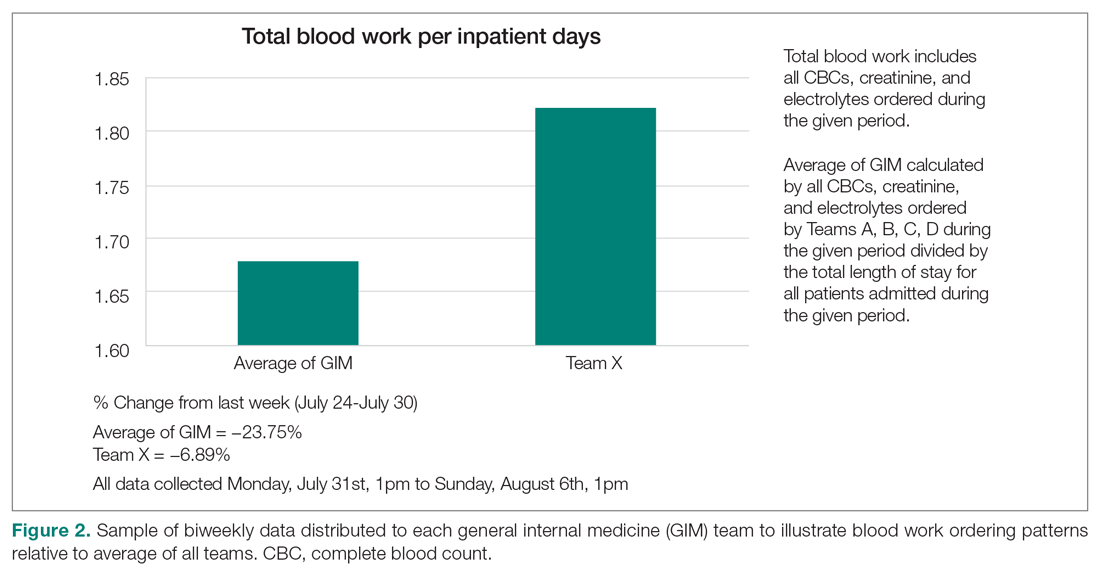

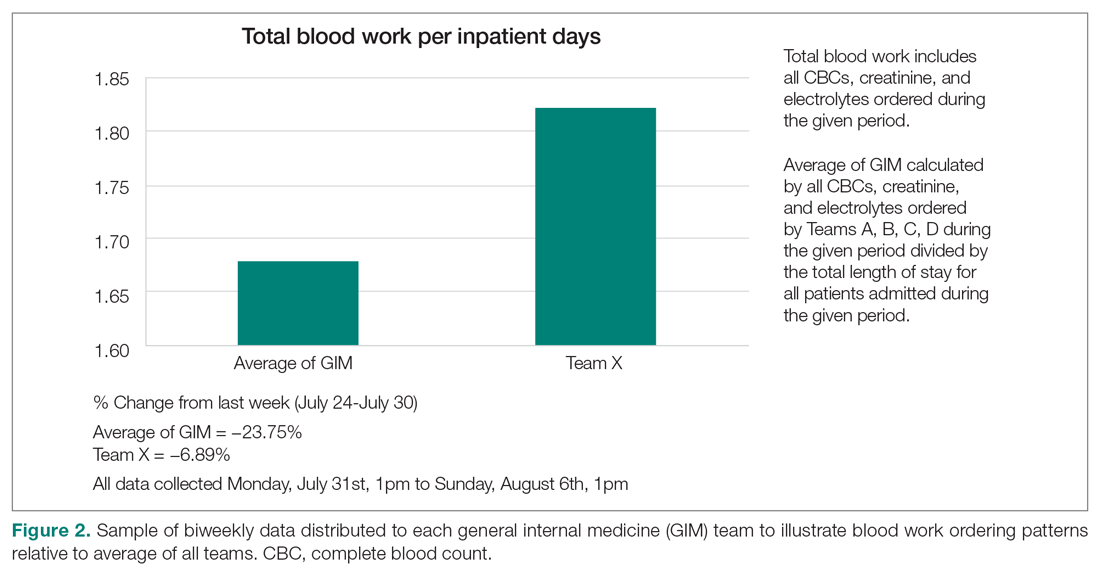

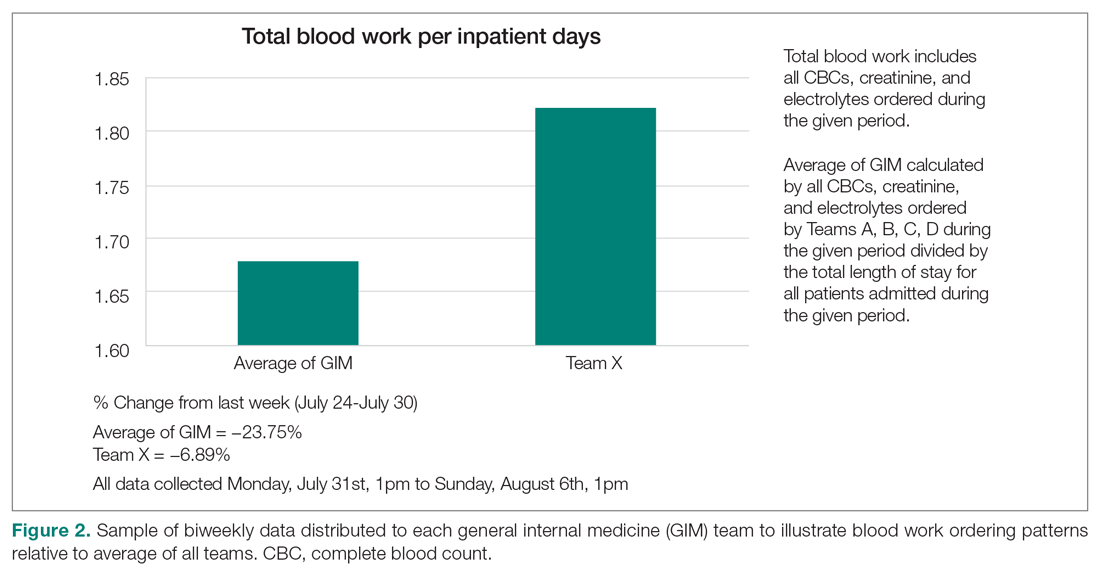

Clinician teams received real-time performance data on their routine blood test ordering patterns compared with an institutional benchmark. Bar graphs of blood work ordering rates (the total number of CBC, creatinine, and electrolyte tests ordered for all patients on a given team divided by those patients’ total LOS) were distributed to each internal medicine team via email every 2 weeks (Figure 2).1,10-12
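The team-level ordering rate used in the feedback bar graphs is a ratio of summed tests to summed inpatient days. The sketch below computes it for a synthetic team; the `PatientStay` record and the benchmark value are hypothetical, since the text reports neither the data layout nor the institutional benchmark.

```python
from dataclasses import dataclass


@dataclass
class PatientStay:
    # Hypothetical per-patient summary for one team's reporting window.
    cbc: int
    creatinine: int
    electrolytes: int
    los_days: float


def team_ordering_rate(stays: list[PatientStay]) -> float:
    """(CBC + creatinine + electrolyte tests for all of a team's patients) / (their total LOS)."""
    total_tests = sum(s.cbc + s.creatinine + s.electrolytes for s in stays)
    total_days = sum(s.los_days for s in stays)
    if total_days <= 0:
        raise ValueError("no inpatient days for this team")
    return total_tests / total_days


# Synthetic example team with two patients.
team_a = [
    PatientStay(cbc=3, creatinine=3, electrolytes=4, los_days=5.0),
    PatientStay(cbc=2, creatinine=2, electrolytes=2, los_days=4.0),
]
rate = team_ordering_rate(team_a)
benchmark = 1.2  # hypothetical; the text does not report the benchmark value
status = "above" if rate > benchmark else "at or below"
print(f"{rate:.2f} tests/inpatient day ({status} benchmark)")
```

Pooling tests and days before dividing weights each patient by LOS, which matches the parenthetical definition; averaging per-patient rates instead would give short stays disproportionate influence.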

Data Collection and Analysis

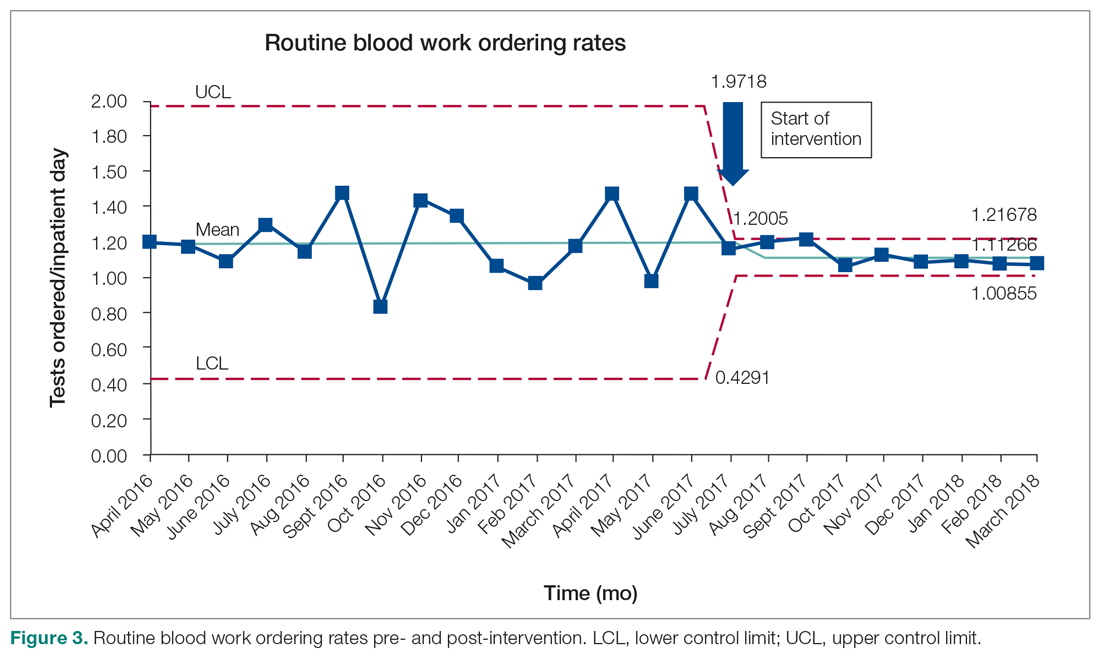

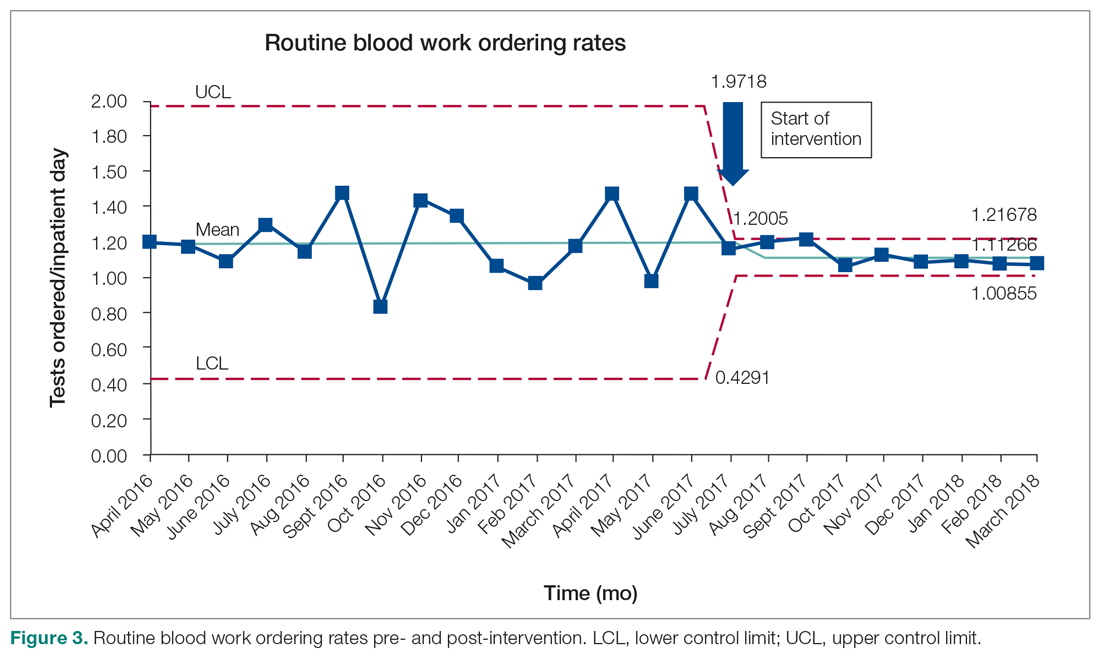

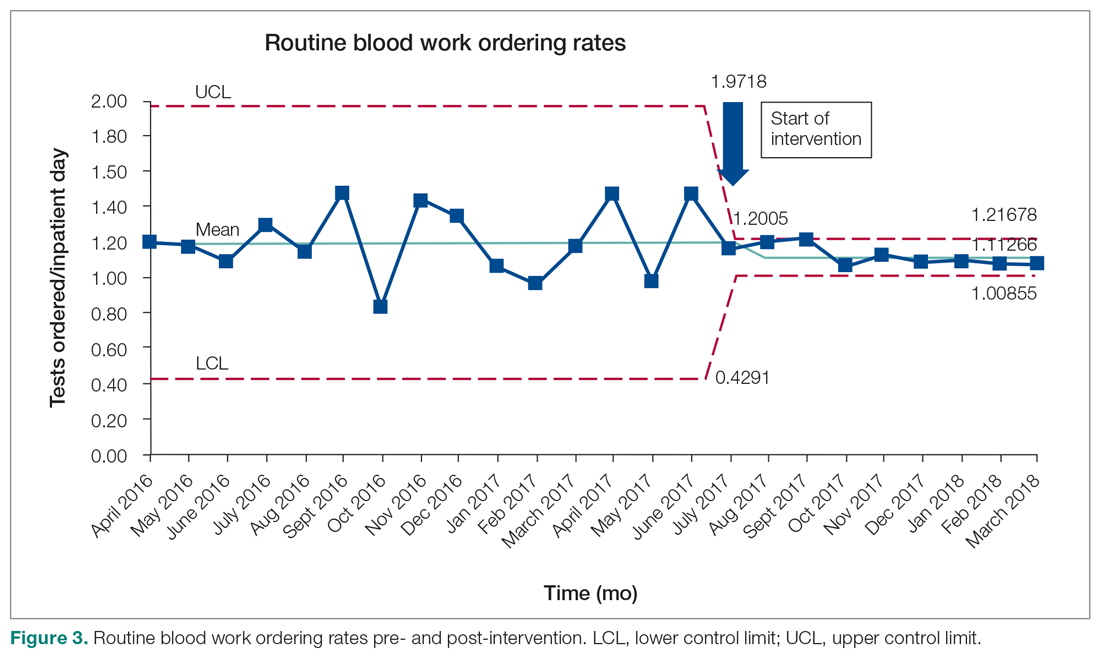

Data were extracted from the hospital electronic health record (EHR). The primary outcome was the number of routine blood tests (CBC, creatinine, and electrolytes) ordered per inpatient day. Descriptive statistics were calculated for demographic variables. We used statistical process control (SPC) charts to compare the baseline period (April 2016-June 2017) and the intervention period (July 2017-March 2018) for the primary outcome. SPC charts display process changes over time. Data are plotted in chronological order, with a central line representing the outcome mean, an upper line representing the upper control limit, and a lower line representing the lower control limit. The upper and lower control limits were set at 3σ, that is, 3 standard deviations above and below the mean. Six successive points on the same side of the mean suggest “special cause variation,” indicating that the observed results are unlikely to be due to secular trends. SPC charts are commonly used quality tools for process improvement as well as research.13-16 These charts were created using QI Macros SPC software for Excel V. 2012.07 (KnowWare International, Denver, CO).
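The two SPC elements described above, 3σ control limits around a baseline mean and a run of 6 successive points on one side of that mean, can be sketched as follows. This is a simplified illustration on synthetic data: it uses the plain sample standard deviation only because that is what the text states, whereas SPC tools (possibly including QI Macros) often estimate sigma differently, for example from the average moving range or from Poisson assumptions for rate charts. How points lying exactly on the center line are treated is also a convention choice.

```python
import statistics


def control_limits(baseline: list[float], k: float = 3.0) -> tuple[float, float, float]:
    """Return (lower limit, center line, upper limit) as mean +/- k sample SDs of the baseline."""
    center = statistics.mean(baseline)
    sd = statistics.stdev(baseline)
    return center - k * sd, center, center + k * sd


def find_shifts(values: list[float], center: float,
                run_length: int = 6) -> list[tuple[int, int, str]]:
    """Return (first_index, last_index, side) for each run of >= run_length consecutive
    points strictly on one side of the center line. A point exactly on the line ends
    the current run (one common convention)."""
    runs: list[tuple[int, int, str]] = []
    start, side = 0, None
    for i, v in enumerate(values + [center]):  # trailing sentinel on the line closes the last run
        s = "above" if v > center else "below" if v < center else None
        if s != side:
            if side is not None and i - start >= run_length:
                runs.append((start, i - 1, side))
            start, side = i, s
    return runs


# Synthetic data: 10 baseline periods, then 6 periods after a hypothetical intervention.
baseline = [10.0, 12.0, 11.0, 9.0, 10.0, 12.0, 11.0, 9.0, 10.0, 11.0]
post = [9.0, 8.0, 9.0, 9.0, 8.0, 9.0]
lcl, center, ucl = control_limits(baseline)
print(f"LCL={lcl:.2f}, center={center:.2f}, UCL={ucl:.2f}")
print(find_shifts(baseline + post, center))
```

In this synthetic series no post-intervention point falls outside the control limits, yet the 6 consecutive points below the baseline mean are still flagged as a shift, which is how a modest but sustained change can register on an SPC chart.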

The direct cost of each laboratory test was obtained from the hospital laboratory department (CBC, $7.54/test; electrolytes, $2.04/test; creatinine, $1.28/test; in Canadian dollars). These costs were summed, multiplied by the pre- to post-intervention difference in total blood tests per inpatient day, and then multiplied by 365 to arrive at an estimated annual cost savings.
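The sketch below follows the cost calculation literally as worded, using the reported unit costs and the reported pre- and post-intervention rates. Read this way, the result is the savings for one continuously occupied bed over a year (about $317 CAD). The reported annual total of $26,851 is larger, so the authors' calculation presumably also scales by the service's inpatient days or census, a step the text does not spell out; the function name and `days` parameter are our own.

```python
# Direct per-test costs in Canadian dollars, as reported in the text.
UNIT_COST_CAD = {"CBC": 7.54, "electrolytes": 2.04, "creatinine": 1.28}


def annual_savings(pre_rate: float, post_rate: float,
                   unit_costs: dict[str, float], days: int = 365) -> float:
    """Literal reading of the Methods: (sum of unit costs) x (tests saved per inpatient day) x 365."""
    combined_unit_cost = sum(unit_costs.values())
    tests_saved_per_day = pre_rate - post_rate
    return combined_unit_cost * tests_saved_per_day * days


# Reported pre- and post-intervention combined rates (tests per inpatient day).
per_bed = annual_savings(1.19, 1.11, UNIT_COST_CAD)
print(f"${per_bed:,.2f} CAD per occupied bed-year")
```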

Results

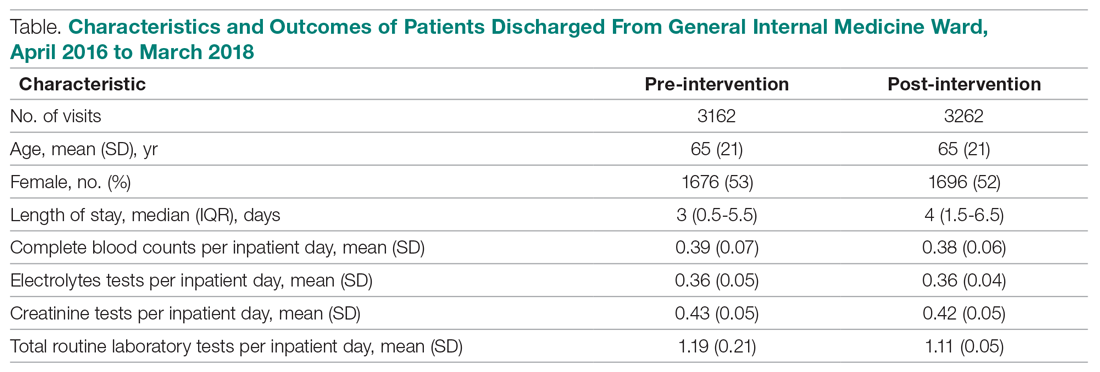

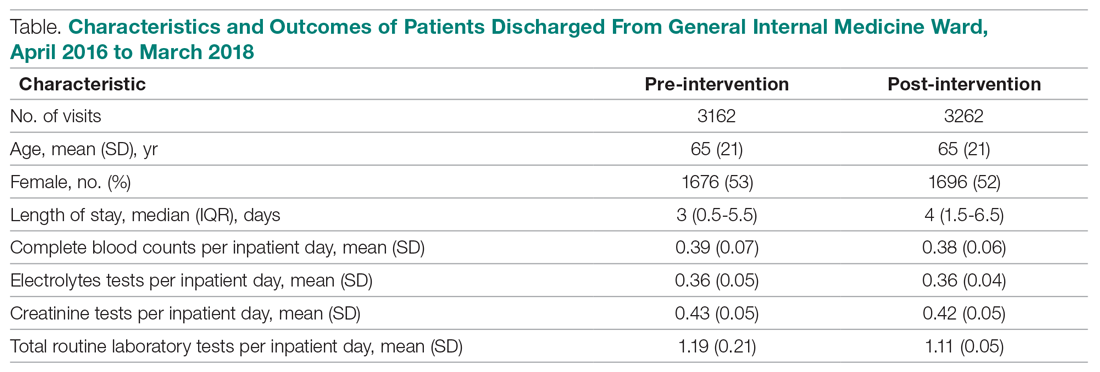

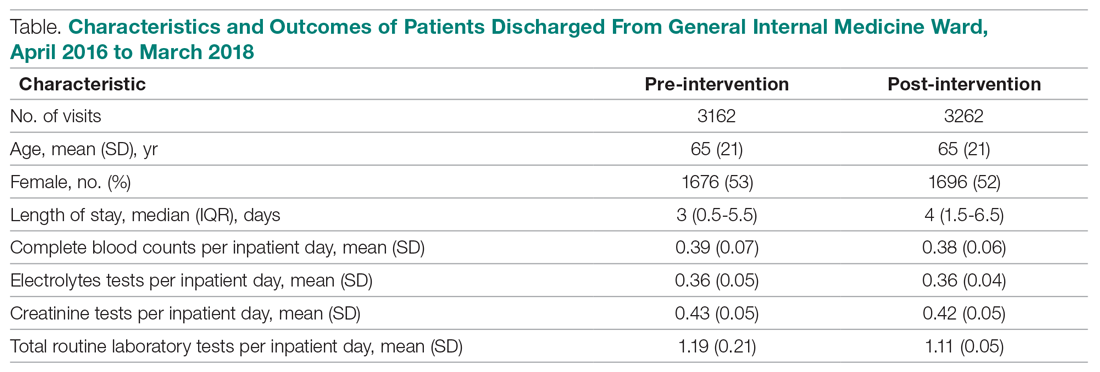

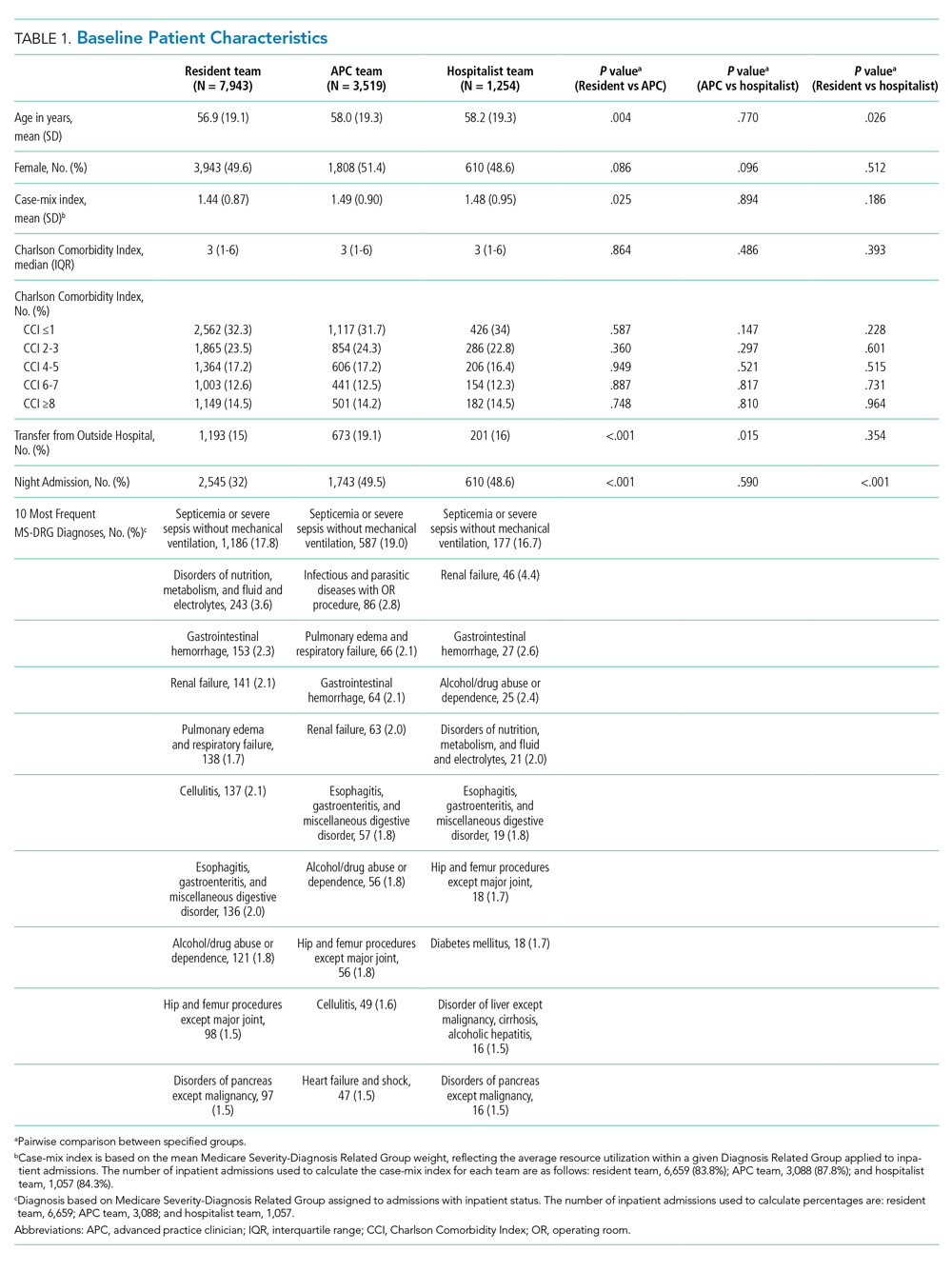

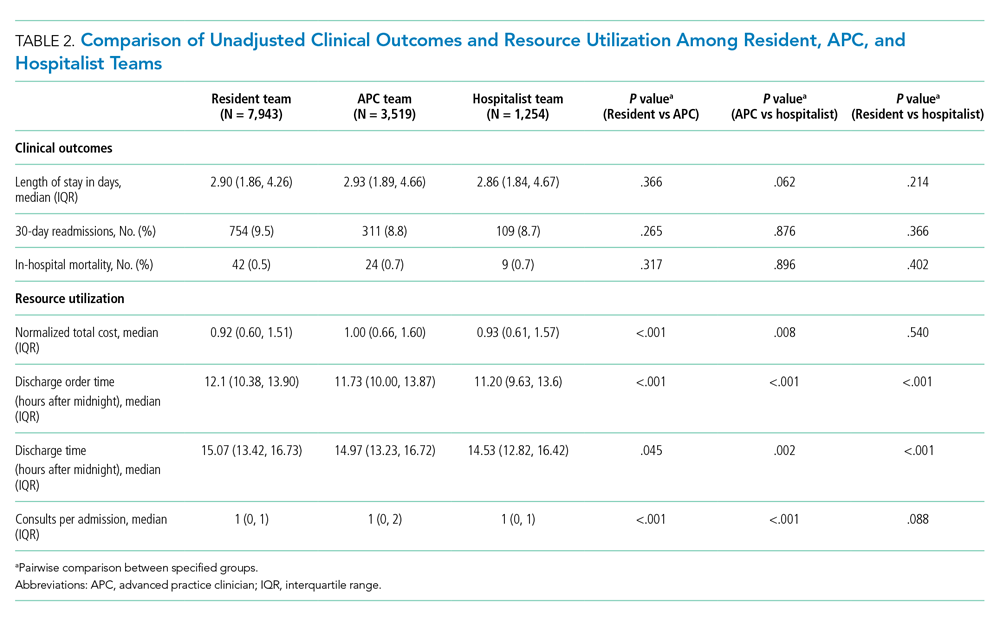

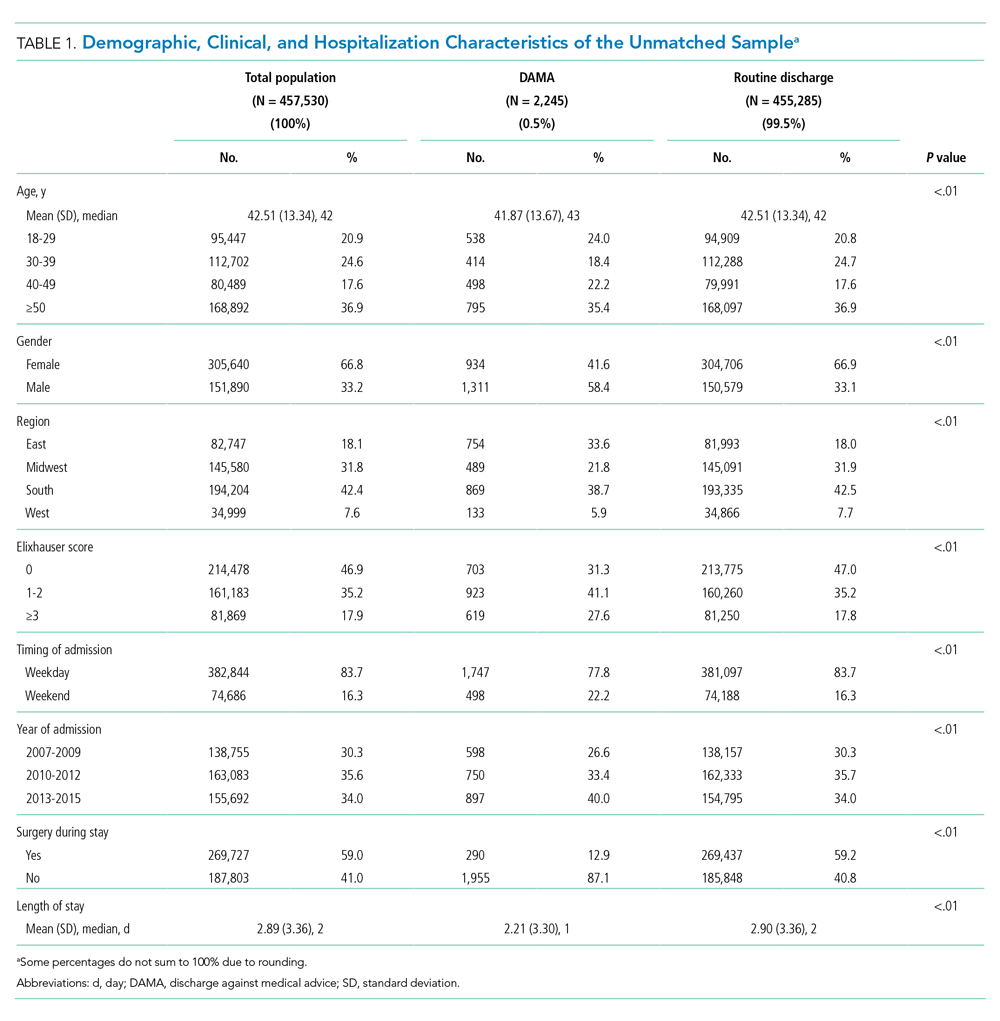

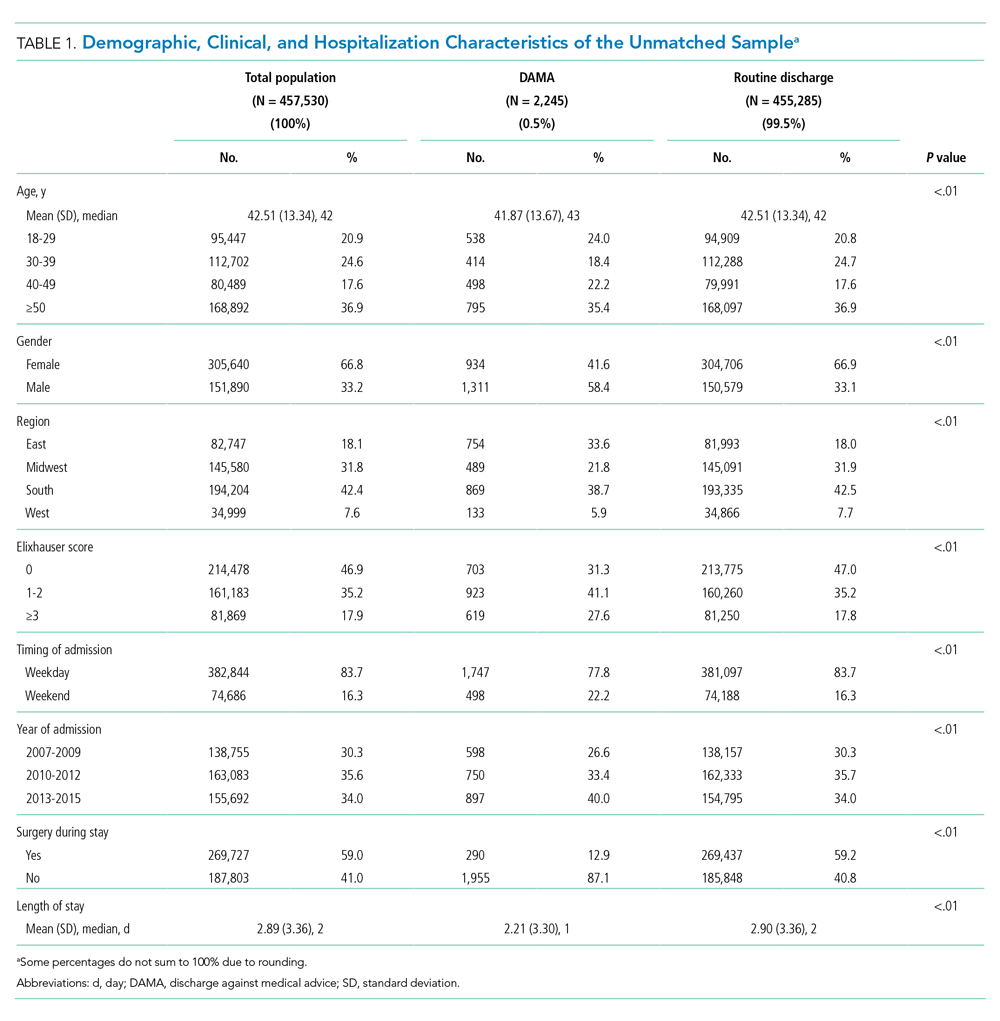

Over the study period, there were 6424 unique patient admissions on the general internal medicine service, with a median LOS of 3.5 days (Table).

The proportion of inpatient visits with at least 1 test completed was 80% for CBC (mean, 3.6 tests/visit), 79.3% for creatinine (mean, 3.5 tests/visit), and 81.6% for electrolytes (mean, 3.9 tests/visit). In total, 56,767 laboratory tests were ordered.

After the intervention, both the rate of routine blood test orders and the associated costs decreased, with a shift below the mean. The mean number of tests ordered (combined CBC, creatinine, and electrolytes) per inpatient day decreased from 1.19 (SD, 0.21) in the pre-intervention period to 1.11 (SD, 0.05) in the post-intervention period (P < 0.0001), a 6.7% relative reduction (Figure 3). We observed a 6.2% relative reduction in costs per inpatient day, translating to an estimated total savings of $26,851 over 1 year for the intervention period.
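The relative reductions quoted above follow directly from the reported before and after values. This short check is ours, not part of the authors' analysis.

```python
def relative_reduction(before: float, after: float) -> float:
    """Reduction expressed as a fraction of the baseline value."""
    return (before - after) / before


test_rr = relative_reduction(1.19, 1.11)    # combined routine tests per inpatient day
cost_rr = relative_reduction(17.24, 16.17)  # reported mean laboratory cost, CAD
print(f"tests: {test_rr:.1%}, cost: {cost_rr:.1%}")  # tests: 6.7%, cost: 6.2%
```

Because both reductions are expressed relative to their own baselines, the similar percentages reflect proportional changes rather than absolute differences of similar size.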

Discussion

Our study suggests that a multimodal intervention, including CPOE restrictions, resident education with posters, and audit and feedback, can reduce laboratory test ordering on general internal medicine wards. This finding is consistent with those of previous studies of comparable interventions, although those studies targeted different laboratory tests.1,2,5,6,10,17

Our study found smaller reductions in testing than a previous study, which reported relative reductions of 17% to 30%,18 and than another recent investigation conducted in a similar setting.17 In the latter study, reductions in laboratory testing occurred mostly in nonroutine tests, and no significant improvements were noted over the same duration in CBC, electrolytes, and creatinine, the 3 tests we studied.17 This may represent a ceiling effect in reducing laboratory testing: efforts to reduce CBC, electrolytes, and creatinine testing below 0.3 to 0.4 tests per inpatient day each (or a combined 1.16 tests per inpatient day) may not be clinically appropriate or possible. Institutions can use this information to target other areas of overuse, based on their rates of utilization, to maximize the benefits of a resource-intensive intervention.