Evaluation of Pharmacologic Interventions for Weight Management in a Veteran Population

The American Heart Association, the American College of Cardiology, and the Obesity Society define overweight as a body mass index (BMI) of 25 to 29.9 and obesity as a BMI ≥ 30. Morbid obesity is defined as a BMI ≥ 35 or 40.2,3 Based on these BMI cutoffs, the Endocrine Society recommends diet and lifestyle as the foundation of weight management and pharmacotherapy for those with a BMI ≥ 30 without comorbidities. In patients with a BMI ≥ 27, weight management medications may be considered if a patient has comorbid hypertension, T2DM, dyslipidemia, metabolic syndrome, obstructive sleep apnea, or nonalcoholic fatty liver disease. Patients with BMI > 40 are eligible for weight loss surgery.4
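
The BMI thresholds above reduce to simple comparisons. The following Python sketch is illustrative only. The function names and comorbidity labels are hypothetical, it encodes only the cutoffs stated in this paragraph (the morbid obesity cutoff is omitted because the text gives two values), and it is not a clinical decision tool or the method used at VHI.

```python
# Illustrative sketch of the BMI cutoffs described in the text.
# All names here are hypothetical; this is not a clinical decision tool.

# Comorbidities that, per the text, lower the pharmacotherapy threshold to BMI >= 27.
QUALIFYING_COMORBIDITIES = {
    "hypertension",
    "t2dm",
    "dyslipidemia",
    "metabolic syndrome",
    "obstructive sleep apnea",
    "nonalcoholic fatty liver disease",
}


def bmi(weight_kg: float, height_m: float) -> float:
    """Body mass index in kg/m^2."""
    return weight_kg / height_m ** 2


def bmi_category(value: float) -> str:
    """Map a BMI value to the overweight (25 to 29.9) and obesity (>= 30) categories."""
    if value >= 30:
        return "obesity"
    if value >= 25:
        return "overweight"
    return "below overweight range"


def pharmacotherapy_may_be_considered(value: float, comorbidities: set) -> bool:
    """BMI >= 30, or BMI >= 27 with at least 1 listed comorbidity."""
    if value >= 30:
        return True
    return value >= 27 and bool(QUALIFYING_COMORBIDITIES & comorbidities)
```

For example, a hypothetical patient with BMI 28 and comorbid hypertension would meet the BMI ≥ 27 criterion, whereas the same BMI without a listed comorbidity would not.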

Lifestyle and dietary interventions are the foundation of current Endocrine Society weight management guidelines.4 At a minimum, guidelines recommend enrolling motivated patients in a high-intensity lifestyle intervention of at least 14 sessions in the first 6 months, with a goal of losing 5 to 10% of baseline weight and maintaining a reduction of 3 to 5% from baseline.3 Medications are recommended as an adjunct to lifestyle and dietary changes. Most weight management medications act in the brain to enhance satiety signaling, which helps motivated patients adhere to their dietary interventions, assists those who have been unsuccessful in earlier weight loss attempts, and helps patients maintain their weight loss.3,4

Guidelines recommend 7 weight management medications: orlistat (both prescription strength and over-the-counter), liraglutide, phentermine, phentermine/topiramate, lorcaserin, and naltrexone/bupropion. Using medications to assist with weight loss increases the likelihood that patients will achieve 5 to 10% weight loss from baseline.5,6 Studies of the long-term effects of these medications have found improvements in blood pressure (BP), biomarkers for cardiovascular disease, and T2DM-related comorbidities.3,5,7

Positive effects on comorbidities have been found to be related to drug class and mechanism of action (MOA); those that also are approved for T2DM have demonstrated the most favorable cardiovascular effects.7 Other medications that work as stimulants or as modulators of serotonin pathways are associated with increased risks, prompting the US Food and Drug Administration (FDA) to remove some medications from the market.7,8 In January 2020, lorcaserin was taken off the market because of increased risk of cancer found in postmarketing surveillance.9 The benefit of weight loss must be weighed against the risk of medication use.

Monthly follow-up is recommended when weight management medications are started to assess safety and efficacy; medications should be discontinued if weight loss is inadequate in the first 3 months.1,3,4 Few studies have assessed the long-term use of weight management medications in a real-world setting. At Veteran Health Indiana (VHI), weight management medications are prescribed in outpatient clinics, including primary care, endocrinology, and gastrointestinal (GI) specialties. However, prescribing practices, outcomes, and adherence to guideline recommendations have not been studied. Data from this study will be used to better understand how VHI can serve its veterans through diet, lifestyle, and pharmacologic interventions.

Methods

We conducted a single-center, retrospective chart review of patients started on weight management medications at VHI. A patient list was generated based on prescription fills from June 1, 2017, to June 30, 2019. All data were obtained from the Computerized Patient Record System, and patients were not contacted. This study was approved by the Indiana University Health Institutional Review Board and the VHI Research and Development Committee.

At the time of study, orlistat, liraglutide, phentermine/topiramate, naltrexone/bupropion, and lorcaserin were the weight management medications available at VHI.

Patients were included in the study if they received a prescription for any 1 of the 5 available medications during the enrollment period. Patients were excluded if they received a prescription from or were treated by a civilian health care provider, if they never used the medication, or if their weight loss was attributed to a cancer diagnosis. These criteria yielded 86 patients, from whom 96 unique weight loss prescriptions were generated. Data were collected for each instance of medication use, so some patients were included more than once. In such cases, data collection for the failed medication ended when failure was documented, and new data points began when the new medication was prescribed; all data were collected per medication, not per patient. This method accounted for medication failure and provided weight loss results by medication choice within this institution.

The primary outcomes included total weight loss and weight loss as a percentage of baseline weight at 3, 6, 12, and > 12 months of therapy. Secondary outcomes included weight loss of 5% from baseline, rate of successful weight maintenance after initial weight loss of 5% from baseline, adverse drug reaction (ADR) monitoring, and use of weight management medications across clinics at VHI.
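
The outcome definitions above rest on simple percentage arithmetic. As a minimal sketch, assuming weights in kilograms and taking "maintenance" to mean a patient who reached ≥ 5% loss and was still at least 3% below baseline at 12 months (our assumed reading, not a definition stated by the study), the checks could be written in Python as follows. All function names are hypothetical.

```python
# Sketch of the outcome arithmetic; function names are hypothetical and the
# maintenance rule (>= 3% below baseline at 12 months) is an assumed reading.

def percent_loss(baseline_kg: float, current_kg: float) -> float:
    """Weight loss as a percentage of baseline (positive means weight was lost)."""
    return (baseline_kg - current_kg) * 100 / baseline_kg


def achieved_5_percent(baseline_kg: float, weights_kg: list) -> bool:
    """True if any recorded follow-up weight is at least 5% below baseline."""
    return any(percent_loss(baseline_kg, w) >= 5 for w in weights_kg)


def maintained_at_12_months(baseline_kg: float, weights_kg: list, weight_12mo_kg: float) -> bool:
    """Assumed rule: a 5% responder who is still >= 3% below baseline at 12 months."""
    return (
        achieved_5_percent(baseline_kg, weights_kg)
        and percent_loss(baseline_kg, weight_12mo_kg) >= 3
    )
```

Because follow-up in a retrospective review is irregular, the sketch checks all recorded weights for the 5% threshold rather than a single fixed visit; a stricter analysis might require the loss at a specific time point.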

Demographic data included race, age, sex, baseline weight, BMI, and comorbid medical conditions. Comorbidities were collected from the most recent primary care clinical note before medication initiation. Medication data included medications used to manage comorbidities. Data related to weight management medication included prescribing clinic, reason for medication discontinuation, and bariatric surgery, if applicable.

Efficacy outcome data included weight and BMI across therapy duration. Safety outcomes data included heart rate, BP, and ADRs that resulted in medication discontinuation as documented in the electronic health record (EHR).

We used descriptive statistics, including mean, standard deviation (SD), range, and percentage. For continuous data, Kruskal-Wallis tests were used because the data among the different medications were not normally distributed, with a prespecified α = 0.05. With the observed sample sizes and SDs in this study, post hoc power calculations showed that the study had 80% power at a 5% significance level to detect weight changes of 8.6 kg, 7.3 kg, and 12.4 kg at 3, 6, and 12 months, respectively, using nonparametric tests.
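
For readers unfamiliar with the Kruskal-Wallis test, the sketch below computes its H statistic from pooled ranks, with the standard correction for tied values. It is an illustration, not the study's analysis code (the software used is not reported): any group data passed to it are hypothetical, and no P value is computed. In practice, `scipy.stats.kruskal` returns both the tie-corrected statistic and its P value.

```python
from collections import Counter


def average_ranks(values: list) -> list:
    """1-based ranks of values; tied values share the mean of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j over the run of values tied with the one at sorted position i.
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        mean_rank = (i + j) / 2 + 1  # sorted positions i..j hold ranks i+1..j+1
        for k in range(i, j + 1):
            ranks[order[k]] = mean_rank
        i = j + 1
    return ranks


def kruskal_wallis_h(*groups: list) -> float:
    """Tie-corrected Kruskal-Wallis H for 2 or more groups (not all values identical)."""
    pooled = [x for g in groups for x in g]
    n = len(pooled)
    ranks = average_ranks(pooled)
    # Sum of (rank sum)^2 / group size, walking the pooled list group by group.
    total = 0.0
    start = 0
    for g in groups:
        rank_sum = sum(ranks[start:start + len(g)])
        total += rank_sum ** 2 / len(g)
        start += len(g)
    h = 12 / (n * (n + 1)) * total - 3 * (n + 1)
    # Tie correction: divide by 1 - sum(t^3 - t) / (n^3 - n) over tie groups of size t.
    ties = sum(t ** 3 - t for t in Counter(pooled).values())
    return h / (1 - ties / (n ** 3 - n))
```

H is then compared with a χ² distribution with k − 1 degrees of freedom, where k is the number of groups (here, the medications being compared).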

Results

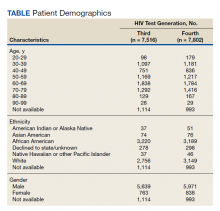

A total of 86 patients were identified based on prescription fills, which produced 99 unique instances of medication use. Of these 99 instances, 3 met exclusion criteria and were not included in the final analysis. Among included veterans, 16 were female and 80 were male (Table 1). Most were White (86%) and male (83%), and the mean age was 53 years. At baseline, the mean weight was 130 kg and the mean BMI was 41.

Comorbidities and Medication Use

Hypertension (66%), hyperlipidemia (64%), and psychiatric diagnoses (50%) were the most common comorbid conditions. T2DM (40%) and substance use (23%) were the most common comorbidities influencing medication choice. For this analysis, substance use evaluation included amphetamines and cocaine.

According to VHI system guidelines for weight management medications, phentermine/topiramate is the preferred first-line agent unless contraindicated, in which case naltrexone/bupropion is recommended. However, for patients with comorbid T2DM, liraglutide is preferred because of its beneficial effects on both weight loss and blood glucose control.2 Most patients at VHI were started on liraglutide (44%) or phentermine/topiramate (42%), which was in line with these recommendations. Our sample included ≥ 1 prescription for each medication available at our facility, although the number of patients on each medication was not equal. Of note, the one patient taking lorcaserin at the time of study discontinued therapy in response to recent FDA guidance.9

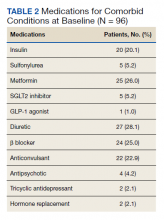

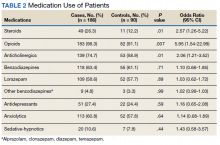

Medications for comorbid conditions could contribute to weight gain. In the patient sample, β blockers (n = 24) and anticonvulsants, including gabapentin and pregabalin (n = 22), were the most common. Other medications that could have contributed to weight gain included sulfonylureas (n = 5), antipsychotics (n = 4), tricyclic antidepressants (n = 2), and hormone replacement therapies (n = 2).

Primary Outcomes

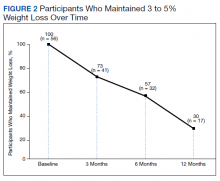

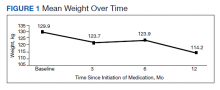

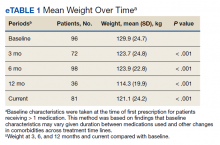

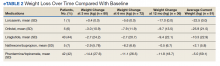

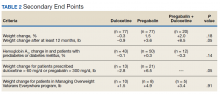

The mean weight of participants dropped from 129.9 to 114.2 kg over 12 months of weight management medication therapy, for an absolute difference of 15.8 kg (Figure 1 and eTable 1 available at doi:10.12788/fp.0117). Weight loss was recorded at 3, 6, 12, and > 12 months of weight management therapy. At each time point, weight loss was statistically significant (P < .001) compared with baseline (Table 2), even though not every patient had a weight recorded at each time point.

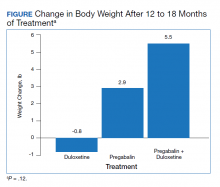

When classified by medication choice,

Secondary Outcomes

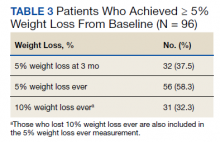

More than one-half of the patients analyzed lost 5 to 10% from baseline while taking weight management medication.

Among patients who lost at least 5% from baseline, we performed further analysis to assess weight maintenance of 3 to 5% from baseline for 12 months.

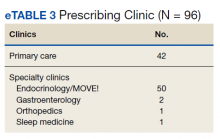

We found that most of our prescriptions (n = 50) were entered by the endocrinology department in conjunction with the MOVE! program (eTable 3 available at doi:10.12788/fp.0117). All 4 of our primary care clinics prescribed weight loss medication; however, 1 clinic prescribed the most. Other prescriptions came from community-based outpatient clinics or other specialties, including gastroenterology, orthopedics, and sleep medicine.

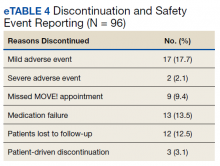

Nineteen (18%) patients experienced an adverse event (AE) that led to medication discontinuation, as recorded in their chart (eTable 4 available at doi:10.12788/fp.0117). The most common AEs were GI upset with liraglutide or orlistat and dull aching and pain with phentermine/topiramate. Two severe AEs occurred: 1 patient experienced a change in mental health status and a suicide attempt with naltrexone/bupropion, and 1 patient discontinued phentermine/topiramate because of a change in neurologic status.

Medications were most often stopped because of inadequate weight loss (n = 13), and most of these patients tried additional medications. In 1 case, medication failure led to sleeve gastrectomy. Other reasons for medication discontinuation included missed MOVE! appointments, loss to follow-up, and patient-elected discontinuation.

Discussion

This study evaluated the use and outcomes of weight management medications among veterans at VHI. The study aimed to better understand the efficacy and safety of these medications, to expose potential weaknesses in care, and to identify avenues to improve weight loss and maintenance.

Clinical trials of weight management medications reported weight loss of 8 to 10 kg over 56 weeks, with 21 to 63% of patients losing at least 5% of baseline weight.10-14 Our study found a higher average weight loss (−15.8 kg) than that reported in trials and a consistent percentage of patients (58.3%) who achieved at least 5% weight loss. It is promising that, when used in a noncontrolled setting, these medications produced weight loss consistent with results seen in large, controlled trials.

Pi-Sunyer and colleagues found that many patients continued to lose weight after the initial 5% loss, eventually reaching 10% weight loss.10 Additionally, Smith and colleagues found that nearly 68% of their participants who took lorcaserin were able to maintain 3 to 5% weight loss over 12 months.13 Sjöström and colleagues noted that many patients taking orlistat for an extended period began to regain weight, although at one-half the rate seen in the placebo group.12 In this study, fewer patients maintained their weight loss over 12 months, with only 30% of patients maintaining 3 to 5% weight loss from baseline. This difference in weight maintenance was likely due to the uncontrolled nature of this study. Once patients reach their initial weight loss goal, even the most motivated patients have trouble maintaining that weight.4 Despite the challenges of maintaining weight loss, the quality-of-life benefits patients gain and the potential reductions in health care spending support investing resources to improve these outcomes.2,14,15

Pi-Sunyer and colleagues reported high incidences of nausea (40%), vomiting (16%), diarrhea (21%), and constipation (20%) with liraglutide.10 Sjöström and colleagues reported 7% of patients experienced GI upset with orlistat.12 Comparatively, only 17% of our patients reported AEs that required discontinuation, including GI upset. One patient in our study discontinued naltrexone/bupropion because of a significant change in mental status and suicide attempt. Clinical trials did not report a greater risk of depression or suicidality compared with placebo; however, there is a warning on the labeling of naltrexone/bupropion for increased suicidality with the use of antidepressant agents.16,17 The neurologic AE that required discontinuation of phentermine/topiramate at our institution is unique based on published information.11,18

The data from this study reinforce the observation that weight maintenance is the most challenging aspect of weight loss. Although our data showed clinically meaningful weight loss from baseline, many patients regained weight, and some exceeded their baseline weight. Beyond providing these medications, this evidence suggests the need for close, continued follow-up throughout patients’ weight loss journey.

Limitations

First, because this is a retrospective chart review, data collection was influenced by and limited to information recorded in the EHR. AEs that resulted in medication discontinuation were assessed from the patient’s chart, which might be inaccurate if providers did not update the records. Follow-up was not always scheduled at regular intervals after medication initiation, resulting in varying sample sizes at each time point and potentially distorting true weight loss averages. Although not included in this analysis, it might be beneficial to evaluate adherence to recommendations for laboratory and weight monitoring at follow-up to identify where future monitoring can be improved. Second, the number of patients taking each medication was unbalanced. Specifically, we saw a change in weight with orlistat that exceeded what is consistently seen in larger, more controlled trials. Although this reflects real-world practice, results from small samples cannot be generalized to the larger population and might reflect outliers. Last, generalizability is limited because the veteran population is predominantly male and lacks ethnic diversity. This study also was carried out at a single, educational tertiary medical center, so the findings might not apply to all populations.

Conclusions

Despite the limitations discussed, this study shows that the use of weight management medications in a general veteran population produces initial weight loss consistent with previous studies. However, there is room for continued improvement in follow-up strategies to promote greater weight maintenance after initial weight loss. Considering the high health care costs, personal burden, and potential long-term complications associated with obesity, efforts to promote development of programs that support weight management and maintenance are imperative.

Acknowledgment

This material is the result of work supported with resources and the use of facilities at Veteran Health Indiana.

1. Centers for Disease Control and Prevention. Adult obesity facts. Accessed April 2020. https://www.cdc.gov/obesity/data/adult.html

2. The Management of Overweight and Obesity Working Group. VA/DoD Clinical Practice Guideline for Screening and Management of Overweight and Obesity. Accessed March 13, 2021. https://www.healthquality.va.gov/guidelines/CD/obesity/VADoDCPGManagementOfOverweightAndObesityFinal.pdf

3. Jensen MD, Ryan DH, Apovian CM, et al; American College of Cardiology/American Heart Association Task Force on Practice Guidelines; Obesity Society. 2013 AHA/ACC/TOS guideline for the management of overweight and obesity in adults: a report of the American College of Cardiology/American Heart Association Task Force on Practice Guidelines and the Obesity Society. J Am Coll Cardiol. 2014;63(25, pt B):2985-3023. doi:10.1016/j.jacc.2013.11.004

4. Apovian CM, Aronne LJ, Bessesen DH, et al; Endocrine Society. Pharmacological management of obesity: an Endocrine Society clinical practice guideline. J Clin Endocrinol Metab. 2015;100(2):342-362. doi:10.1210/jc.2014-3415

5. Rucker D, Padwal R, Li SK, Curioni C, Lau DCW. Long term pharmacotherapy for obesity and overweight: updated meta-analysis. BMJ. 2007;335(7631):1194-1199. doi:10.1136/bmj.39385.413113.25

6. Siebenhofer A, Winterholer S, Jeitler K, et al. Long-term effects of weight-reducing drugs in people with hypertension. Cochrane Database Syst Rev. 2021;1:CD007654. doi:10.1002/14651858.CD007654.pub5

7. Bramante CT, Raatz S, Bomber EM, Oberle MM, Ryder JR. Cardiovascular risks and benefits of medications used for weight loss. Front Endocrinol (Lausanne). 2020;10:883. doi:10.3389/fendo.2019.00883

8. Christensen R, Kristensen PK, Bartels EM, Bliddal H, Astrup A. Efficacy and safety of the weight-loss drug rimonabant: a meta-analysis of randomized trials. Lancet. 2007;370(9600):1706-1713. doi:10.1016/S0140-6736(07)61721-8

9. US Food and Drug Administration. FDA requests the withdrawal of the weight-loss drug Belviq, Belviq XR (lorcaserin) from the market. Accessed April 2020. https://www.fda.gov/drugs/drug-safety-and-availability/fda-requests-withdrawal-weight-loss-drug-belviq-belviq-xr-lorcaserin-market

10. Pi-Sunyer X, Astrup A, Fujioka K, et al; SCALE Obesity and Prediabetes NN8022-1839 Study Group. A randomized, controlled trial of 3.0 mg of liraglutide in weight management. N Engl J Med. 2015;373(1):11-22. doi:10.1056/NEJMoa1411892

11. Gadde KM, Allison DB, Ryan DH, et al. Effects of low-dose, controlled-release phentermine plus topiramate combination on weight and associated comorbidities in overweight and obese adults (CONQUER): a randomized, placebo-controlled, phase 3 trial. Lancet. 2011;377(9774):1341-1352. doi:10.1016/S0140-6736(11)60205-5

12. Sjöström L, Rissanen A, Andersen T, et al. Randomised placebo-controlled trial of orlistat for weight loss and prevention of weight regain in obese patients. European Multicentre Orlistat Study Group. Lancet. 1998;352(9123):167-172. doi:10.1016/s0140-6736(97)11509-4

13. Smith SR, Weissman NJ, Anderson CM, et al; Behavioral Modification and Lorcaserin for Overweight and Obesity Management (BLOOM) Study Group. Multicenter, placebo-controlled trial of lorcaserin for weight loss. N Engl J Med. 2010;363(3):245-256. doi:10.1056/NEJMoa0909809

14. Warkentin LM, Das D, Majumdar SR, Johnson JA, Padwal RS. The effect of weight loss on health-related quality of life: systematic review and meta-analysis of randomized trials. Obes Rev. 2014;15(3):169-182. doi:10.1111/obr.12113

15. Finkelstein EA, Trogdon JG, Cohen JW, Dietz W. Annual medical spending attributable to obesity: payer- and service-specific estimates. Health Aff (Millwood). 2009;28(5):w822-831. doi:10.1377/hlthaff.28.5.w822

16. Greenway FL, Fujioka K, Plodkowski RA, et al; COR-I Study Group. Effect of naltrexone plus bupropion on weight loss in overweight and obese adults (COR-I): a multicenter, randomized, double-blind, placebo-controlled phase 3 trial. Lancet. 2010;376(9741):595-605. doi:10.1016/S0140-6736(10)60888-4

17. Contrave. Prescribing information. Nalpropion Pharmaceuticals, Inc; 2019.

18. Qsymia. Prescribing information. VIVUS Inc; 2018.

The American Heart Association, the American College of Cardiology, and the Obesity Society define overweight as a body mass index (BMI) of 25 to 29.9 and obesity as a BMI ≥ 30. Morbid obesity is defined as a BMI ≥ 35 or 40.2,3 Based on these BMI cutoffs, the Endocrine Society recommends diet and lifestyle as the foundation of weight management and pharmacotherapy for those with a BMI ≥ 30 without comorbidities. In patients with a BMI ≥ 27, weight management medications may be considered if a patient has comorbid hypertension, T2DM, dyslipidemia, metabolic syndrome, obstructive sleep apnea, or nonalcoholic fatty liver disease. Patients with BMI > 40 are eligible for weight loss surgery.4

Lifestyle and dietary interventions are the foundation of current weight management guidelines from the Endocrine Society.4 At a minimum, guidelines recommended enrolling motivated patients in a high-intensity lifestyle intervention class of at least 14 sessions in the first 6 months to reach a goal weight loss of 5 to 10% from baseline and to maintain a reduction of 3 to 5% from baseline.3 Medications are recommended as an adjunct to lifestyle and dietary changes. Most weight management medications work in the brain to stimulate satiety signaling, which helps motivated patients adhere to their dietary interventions, assist those who have been unsuccessful in earlier weight loss attempts, and help maintain weight.3,4

Guidelines recommend 7 weight management medications, including orlistat (both prescription strength and over-the-counter), liraglutide, phentermine, phentermine/topiramate, lorcaserin, and naltrexone/bupropion. Using medications to assist with weight loss increases likelihood that patients will achieve 5 to 10% weight loss from baseline.5,6 Studies looking at long-term effects of these medications on weight loss have found improvements in blood pressure (BP), biomarkers for cardiovascular disease, and T2DM-related comorbidities.3,5,7

Positive effects on comorbidities have been found to be related to drug class and mechanism of action (MOA); those that also are approved for T2DM have demonstrated the most favorable cardiovascular effects.7 Other medications that work as stimulants or as modulators of serotonin pathways are associated with increased risks, prompting the US Food and Drug Administration (FDA) to remove some medications from the market.7,8 In January 2020, lorcaserin was taken off the market because of increased risk of cancer found in postmarketing surveillance.9 The benefit of weight loss must be weighed against the risk of medication use.

Monthly follow-up is recommended with weight management medications in the beginning to assess safety and efficacy; medications should be discontinued if weight loss is inadequate in the first 3 months.1,3,4 Limited studies have assessed the long-term use of weight management medications in a real-world setting. Medications are prescribed for weight management at Veteran Health Indiana (VHI) in outpatient clinics, including primary care, endocrinology, and gastrointestinal (GI) specialties. However, prescribing practices, outcomes, and adherence to guideline recommendations have not been studied. Data from this study will be used to better understand how VHI can serve its veterans through diet, lifestyle, and pharmacologic interventions.

Methods

We conducted a single-center, retrospective chart review for patients started on weight management medications at VHI. A patient list was generated based on prescription fills from June 1, 2017 to June 30, 2019. All data were obtained using the Computerized Patient Record System and patients were not contacted. This study was approved by the Indiana University Health Institutional Review Board and the VHI Research and Development Committee.

At the time of study, orlistat, liraglutide, phentermine/topiramate,

Patients were included in the study if they received a prescription of any 1 of the 5 available medications during the enrollment period. Patients were excluded if they received a prescription from or were treated by a civilian health care provider, if they never used the medication, or if their weight loss was attributed to a cancer diagnosis. These criteria produced 86 patients of whom 96 unique weight loss prescriptions were generated. Data were collected for each instance of medication use so that some patients were included multiple times. In this case, data collection for the failed medication ended when failure was documented, and new data points began when new medication was prescribed; all data collected were per medication, not per patient. This method was used to account for medication failure and provide accurate weight loss results based on medication choice within this institution.

The primary outcomes included total weight loss and weight loss as a percentage of baseline weight at 3, 6, 12, and > 12 months of therapy. Secondary outcomes included weight loss of 5% from baseline, rate of successful weight maintenance after initial weight loss of 5% from baseline, adverse drug reaction (ADR) monitoring, and use of weight management medications across clinics at VHI.

Demographic data included race, age, sex, baseline weight, BMI, and comorbid medical conditions. Comorbidities were collected based on the most recent primary care clinical note before initiating medication. Medication data collected included medications used to manage comorbidities. Data related to weight management medication included prescribing clinic, reason for medication discontinuation, or bariatric surgery intervention if applicable.

Efficacy outcome data included weight and BMI across therapy duration. Safety outcomes data included heart rate, BP, and ADRs that resulted in medication discontinuation as documented in the electronic health record (EHR).

We used descriptive statistics, including mean, standard deviation (SD), range, and percentage. For continuous data, Kruskal-Wallis tests were used because of nonparametric data distribution among the different medications with a prespecified α = 0.05. With the observed sample sizes and SDs in this study, post hoc poststudy power calculations showed that the study had 80% power at a 5% significance level to detect weight changes of 8.6 kg, 7.3 kg, and 12.4 kg at 3, 6, and 12 months, respectively, using nonparametric tests.

Results

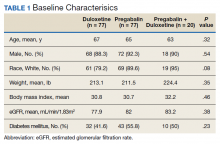

A total of 86 patients were identified based on prescription fills, which produced 99 unique instances of medication use. Of the 99 identified, 3 met exclusion criteria and were not included in the final analysis. Among included veterans, 16 were female and 80 were male (Table 1). Most of those included identified as White race (86%), male (83%), and mean age 53 years. At baseline, mean weight was 130 kg and mean BMI 41.

Comorbidities and Medication Use

Hypertension (66%), hyperlipidemia (64%), and psychiatric diagnoses (50%) were most common comorbid conditions. Substance use (23%) and T2DM (40%) were the most common comorbidities influencing medication choice. Substance use evaluation included amphetamines and cocaine for this analysis.

Phentermine/topiramate is the preferred first-line agent unless patients have contraindications for use, in which case naltrexone/bupropion is recommended, based on guidelines for weight management medications within the VHI system. However, for patients with comorbid T2DM, liraglutide is preferred because of its beneficial effects for both weight loss and blood glucose control.2 Most patients at VHI were started on liraglutide (44%) or phentermine/topiramate (42%), which was in line with recommendations. Our sample included ≥ 1 prescription for each medication available at our facility, although the number of patients on each medication was not equal. Of note, the one patient taking lorcaserin at the time of study discontinued therapy in response to recent FDA guidance.9

Medications for comorbid conditions could contribute to weight gain. Of the patient sample, β blockers (n = 24) and anticonvulsants, including gabapentin and pregabalin (n = 22) were the most common Other medications that could have contributed to weight gain included sulfonylureas (n = 5), antipsychotics (n = 4), tricyclic antidepressants (n = 2), and hormone replacement therapies (n = 2).

Primary Outcomes

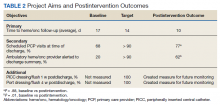

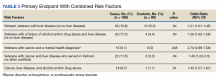

The mean weight of participants dropped from 129.9 to 114.2 kg over the 12 months of weight management medication therapy for a absolute difference of 15.8 kg (Figure 1 and eTable 1 available at doi:10.12788/fp.0117). Weight loss was recorded at 3, 6, 12, and > 12 months of weight management therapy. At each time point, weight loss was statistically significant (P < .001) compared with baseline (Table 2), even though not every patient had weight loss records at each time point.

When classified by medication choice,

Secondary Outcomes

More than one-half of the patients analyzed lost 5 to 10% from baseline while taking weight management medication.

Among patients who lost at least 5% from baseline, we performed further analysis to assess weight maintenance of 3 to 5% from baseline for 12 months.

We found that most of our prescriptions (n = 50) were entered by the endocrinology department in conjunction with the MOVE! program (eTable 3 available at doi:10.12788/fp.0117). All 4 of our primary care clinics prescribed weight loss medication; however, 1 clinic prescribed the most. Other prescriptions came from community-based outpatient clinics or other specialties, including gastroenterology, orthopedics, and sleep medicine.

Nineteen (18%) patients experienced an adverse event (AE) that led to medication discontinuation, which was recorded in their chart (eTable 4 available at doi:10.12788/fp.0117). Most common AEs were GI upset with liraglutide or orlistat or dull aching and pain with phentermine/topiramate. Two severe AEs occurred: One patient experienced a change in mental health status and suicide attempt with naltrexone/bupropion; and 1 patient discontinued phentermine/topiramate because of a change in neurologic status.

Primarily medications were stopped because of inadequate weight loss (n = 13), and most patients tried additional medications. However, 1 medication failure resulted in sleeve gastrectomy. Other reasons for medication discontinuation included missed MOVE! appointments, patient lost to follow-up, and patient-elected discontinuation.

Discussion

This study evaluated the use and outcomes of weight management medication among veterans at VHI. The study aimed to better understand the efficacy and safety of these medications while exposing potential weaknesses in care and to promote avenues to improve weight loss and maintenance.

Clinical trials for weight management medications reported weight loss of 8 to 10 kg over 56 weeks: 21 to 63% of patients losing at least 5% from baseline weight.10-14 The findings from our study found a higher average weight loss (−15.8 kg) than that reported in trials and a consistent percentage of patients (58.3%) who achieved at least 5% weight loss. It is promising to see that when used in a noncontrolled setting, these medications were able to produce weight loss consistent with results seen in large, controlled trials.

Pi-Sunyer and colleagues found continued weight loss after the initial 5% weight loss to an eventual 10% weight loss in many patients.10 Additionally, Smith and colleagues found that nearly 68% of their participants who took lorcaserin were able to maintain 3 to 5% weight loss over 12 months.13 Sjöström and colleagues acknowledged that many patients taking orlistat for an extended period began to gain weight, although at one-half the rate than that seen in the placebo group.12 This study found that fewer patients were able to maintain their weight loss over 12 months, with only 30% of patients maintaining 3 to 5% weight loss from baseline. This difference in weight maintenance likely was because of the uncontrolled nature of this study. Once patients reach their initial weight loss goal, even the most motivated patients will have trouble maintaining that weight.4 Despite the challenges associated with maintaining weight loss, the quality of life benefits patients gained and potential reductions in health care spending support using resources to improve these outcomes.2,14,15

Pi-Sunyer and colleagues reported high incidences of nausea (40%), vomiting (16%), diarrhea (21%), and constipation (20%) with liraglutide.10 Sjöström and colleagues reported that 7% of patients experienced GI upset with orlistat.12 By comparison, only 17% of our patients reported AEs that required discontinuation, including GI upset. One patient in our study discontinued naltrexone/bupropion because of a significant change in mental status and a suicide attempt. Clinical trials did not report a greater risk of depression or suicidality compared with placebo; however, the naltrexone/bupropion labeling carries a warning about increased suicidality with antidepressant use.16,17 The neurologic AE that required discontinuation of phentermine/topiramate at our institution appears to be unique based on published information.11,18

The data from this study reinforce the observation that weight maintenance is the most challenging aspect of weight loss. Although our data showed clinically meaningful weight loss from baseline, many patients regained their weight, and some exceeded their baseline weight. Beyond providing these medications, this evidence suggests the need for close, continued follow-up throughout patients' weight loss journeys.

Limitations

First, because this is a retrospective chart review, data collection was limited to information recorded in the EHR. AEs that resulted in medication discontinuation were identified from the patient's chart, which might be inaccurate if providers did not update the records. Follow-up was not always scheduled at regular intervals after medication initiation, resulting in varying sample sizes at each time point, which may have biased the weight loss averages. Although not included in this analysis, evaluating adherence to recommended laboratory and weight monitoring might help identify where future monitoring can be improved. Second, the number of patients taking each medication was unbalanced. For example, the weight change observed with orlistat exceeded what is consistently seen in larger, more controlled trials. Although this may reflect real-world use, results from small samples cannot be generalized to the larger population and might be driven by outliers. Last, generalizability is limited by the demographics of the veteran population, which is predominantly male and lacks ethnic diversity. This study also was conducted at a single tertiary teaching medical center, so the findings might not apply to all populations.

Conclusions

Despite the limitations discussed, this study shows that the use of weight management medications in a general veteran population produces initial weight loss consistent with previous studies. However, there is room for continued improvement in follow-up strategies to promote greater weight maintenance after initial weight loss. Considering the high health care costs, personal burden, and potential long-term complications associated with obesity, efforts to promote development of programs that support weight management and maintenance are imperative.

Acknowledgment

This material is the result of work supported with resources and the use of facilities at Veteran Health Indiana.

Positive effects on comorbidities have been found to be related to drug class and mechanism of action (MOA); agents also approved for T2DM have demonstrated the most favorable cardiovascular effects.7 Other medications that work as stimulants or as modulators of serotonin pathways are associated with increased risks, prompting the US Food and Drug Administration (FDA) to remove some medications from the market.7,8 In February 2020, the FDA requested withdrawal of lorcaserin from the market because of an increased risk of cancer found in postmarketing surveillance.9 The benefit of weight loss must be weighed against the risk of medication use.

Monthly follow-up is recommended when starting weight management medications to assess safety and efficacy, and medications should be discontinued if weight loss is inadequate after the first 3 months.1,3,4 Few studies have assessed the long-term use of weight management medications in a real-world setting. At Veteran Health Indiana (VHI), weight management medications are prescribed in outpatient clinics, including primary care, endocrinology, and gastrointestinal (GI) specialty clinics. However, prescribing practices, outcomes, and adherence to guideline recommendations at VHI have not been studied. Data from this study will be used to better understand how VHI can serve its veterans through diet, lifestyle, and pharmacologic interventions.

Methods

We conducted a single-center, retrospective chart review for patients started on weight management medications at VHI. A patient list was generated based on prescription fills from June 1, 2017 to June 30, 2019. All data were obtained using the Computerized Patient Record System and patients were not contacted. This study was approved by the Indiana University Health Institutional Review Board and the VHI Research and Development Committee.

At the time of study, orlistat, liraglutide, phentermine/topiramate, naltrexone/bupropion, and lorcaserin were available at VHI.

Patients were included in the study if they received a prescription for any 1 of the 5 available medications during the enrollment period. Patients were excluded if they received a prescription from or were treated by a civilian health care provider, if they never used the medication, or if their weight loss was attributed to a cancer diagnosis. These criteria yielded 86 patients with 96 unique weight management prescriptions. Data were collected for each instance of medication use, so some patients were included more than once. In such cases, data collection for the failed medication ended when failure was documented, and new data collection began when a new medication was prescribed; all data were collected per medication, not per patient. This method accounted for medication failure and provided accurate weight loss results by medication choice within this institution.

The primary outcomes included total weight loss and weight loss as a percentage of baseline weight at 3, 6, 12, and > 12 months of therapy. Secondary outcomes included weight loss of 5% from baseline, rate of successful weight maintenance after initial weight loss of 5% from baseline, adverse drug reaction (ADR) monitoring, and use of weight management medications across clinics at VHI.
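The outcome definitions above can be made concrete with a small calculation. The sketch below is a hypothetical illustration in Python, not the study's analysis code. It stores one record per medication instance, mirroring the per-prescription design, and computes weight loss as a percentage of baseline. It flags instances that reached at least 5% loss at any recorded time point and applies an assumed maintenance rule: still at least 3% below baseline at month 12. The class and function names and the sample weights are invented. Missing time points return `None` rather than being imputed, because follow-up was irregular.

```python
from dataclasses import dataclass, field
from typing import Dict, Optional


def percent_weight_loss(baseline_kg: float, current_kg: float) -> float:
    """Weight loss as a percentage of baseline weight (positive = weight lost)."""
    return (baseline_kg - current_kg) / baseline_kg * 100


@dataclass
class MedicationCourse:
    """One instance of medication use (hypothetical layout; a patient may have several)."""
    baseline_kg: float
    # Month of therapy -> recorded weight. Months without a recorded weight are absent.
    weights_kg: Dict[int, float] = field(default_factory=dict)


def reached_5_percent(course: MedicationCourse) -> bool:
    """True if any recorded weight is at least 5% below baseline."""
    return any(percent_weight_loss(course.baseline_kg, w) >= 5.0
               for w in course.weights_kg.values())


def maintained_at_12_months(course: MedicationCourse,
                            floor_pct: float = 3.0) -> Optional[bool]:
    """Assumed rule: after reaching >= 5% loss, still >= floor_pct below baseline at month 12.

    Returns None if the course is not evaluable (never reached 5%, or no month-12 weight).
    """
    if not reached_5_percent(course):
        return None
    weight_12 = course.weights_kg.get(12)
    if weight_12 is None:
        return None
    return percent_weight_loss(course.baseline_kg, weight_12) >= floor_pct


# Hypothetical courses, not study data.
courses = [
    MedicationCourse(120.0, {3: 113.0, 6: 110.0, 12: 115.0}),  # ~4.2% below baseline at 12 months
    MedicationCourse(100.0, {6: 94.0, 12: 99.0}),              # regained to 1% below baseline
    MedicationCourse(100.0, {3: 97.0, 6: 96.0}),               # never reached 5%
]
print([reached_5_percent(c) for c in courses])        # [True, True, False]
print([maintained_at_12_months(c) for c in courses])  # [True, False, None]
```

Returning `None` instead of `False` keeps instances without a 12-month weight out of the maintenance denominator. This is one reasonable choice for irregular follow-up, not necessarily the authors'.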

Demographic data included race, age, sex, baseline weight, BMI, and comorbid medical conditions. Comorbidities were collected from the most recent primary care clinical note before medication initiation. Medication data included medications used to manage comorbidities. Data related to weight management medication included prescribing clinic, reason for medication discontinuation, and bariatric surgery intervention, if applicable.

Efficacy outcome data included weight and BMI across therapy duration. Safety outcomes data included heart rate, BP, and ADRs that resulted in medication discontinuation as documented in the electronic health record (EHR).

We used descriptive statistics, including mean, standard deviation (SD), range, and percentage. Because continuous data were not normally distributed, Kruskal-Wallis tests were used to compare the different medications, with a prespecified α = .05. Given the observed sample sizes and SDs, post hoc power calculations showed that the study had 80% power at a 5% significance level to detect weight changes of 8.6 kg, 7.3 kg, and 12.4 kg at 3, 6, and 12 months, respectively, using nonparametric tests.
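The following sketch shows how the Kruskal-Wallis H statistic is computed:

1. Pool and rank all observations, with ties sharing an average rank.
2. Compare rank sums across groups.
3. Correct H for ties.
4. Take the p-value from the chi-square distribution with k − 1 degrees of freedom.

The article does not say which software was used. This pure-Python version is an illustrative assumption; in practice, `scipy.stats.kruskal` performs the same test. The inputs are toy values, not study data. The chi-square approximation is generally considered reliable only when each group has at least about 5 observations.

```python
import math
from typing import Dict, List, Sequence, Tuple


def average_ranks(values: Sequence[float]) -> List[float]:
    """1-based ranks; tied values share the mean of the positions they occupy."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        shared_rank = (i + j) / 2 + 1  # sorted positions i..j hold ranks i+1..j+1
        for k in range(i, j + 1):
            ranks[order[k]] = shared_rank
        i = j + 1
    return ranks


def chi2_sf(x: float, df: int) -> float:
    """Upper-tail chi-square probability for integer df, via closed-form series."""
    if x <= 0:
        return 1.0
    if df % 2 == 0:
        # Even df: exp(-x/2) * sum_{r=0}^{df/2 - 1} (x/2)^r / r!
        term = total = 1.0
        for r in range(1, df // 2):
            term *= (x / 2) / r
            total += term
        return math.exp(-x / 2) * total
    # Odd df: erfc(sqrt(x/2)) + 2*phi(sqrt(x)) * sum_{r=1}^{(df-1)/2} x^(r-1/2) / (1*3*...*(2r-1))
    chi = math.sqrt(x)
    phi = math.exp(-x / 2) / math.sqrt(2 * math.pi)
    term, total = chi, 0.0
    for r in range(1, (df - 1) // 2 + 1):
        if r > 1:
            term *= x / (2 * r - 1)
        total += term
    return math.erfc(chi / math.sqrt(2)) + 2 * phi * total


def kruskal_wallis(*groups: Sequence[float]) -> Tuple[float, float]:
    """Tie-corrected Kruskal-Wallis H and its chi-square (df = k - 1) p-value."""
    if len(groups) < 2 or any(len(g) == 0 for g in groups):
        raise ValueError("need at least two non-empty groups")
    pooled = [v for g in groups for v in g]
    n = len(pooled)
    ranks = average_ranks(pooled)
    sum_sq_over_n = 0.0
    start = 0
    for g in groups:
        rank_sum = sum(ranks[start:start + len(g)])
        sum_sq_over_n += rank_sum ** 2 / len(g)
        start += len(g)
    h = 12.0 / (n * (n + 1)) * sum_sq_over_n - 3 * (n + 1)
    # Tie correction: divide by 1 - sum(t^3 - t) / (n^3 - n) over runs of tied values.
    counts: Dict[float, int] = {}
    for v in pooled:
        counts[v] = counts.get(v, 0) + 1
    correction = 1 - sum(t ** 3 - t for t in counts.values()) / (n ** 3 - n)
    if correction == 0:
        raise ValueError("all observations are identical")
    h /= correction
    return h, chi2_sf(h, len(groups) - 1)


# Toy values only, for three hypothetical groups.
h, p = kruskal_wallis([1, 2, 3], [4, 5, 6], [7, 8, 9])
print(f"H = {h:.2f}, p = {p:.4f}")  # H = 7.20, p = 0.0273
```

Because H depends only on ranks, the test makes no normality assumption. That property is why it suits the skewed, unequal-sized medication groups described here.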

Results

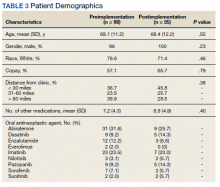

A total of 86 patients were identified based on prescription fills, producing 99 unique instances of medication use. Of these 99, 3 met exclusion criteria and were not included in the final analysis. Among included veterans, 16 were female and 80 were male (Table 1). Most were White (86%) and male (83%), and the mean age was 53 years. At baseline, mean weight was 130 kg and mean BMI was 41.

Comorbidities and Medication Use

Hypertension (66%), hyperlipidemia (64%), and psychiatric diagnoses (50%) were the most common comorbid conditions. Substance use (23%) and T2DM (40%) were the comorbidities that most commonly influenced medication choice. For this analysis, substance use included amphetamine and cocaine use.

Under VHI guidance for weight management medications, phentermine/topiramate is the preferred first-line agent unless contraindicated, in which case naltrexone/bupropion is recommended. For patients with comorbid T2DM, however, liraglutide is preferred because of its beneficial effects on both weight loss and blood glucose control.2 Most patients at VHI were started on liraglutide (44%) or phentermine/topiramate (42%), in line with these recommendations. Our sample included ≥ 1 prescription for each medication available at our facility, although the number of patients on each medication was not equal. Of note, the one patient taking lorcaserin at the time of study discontinued therapy in response to recent FDA guidance.9

Some medications for comorbid conditions could contribute to weight gain. In this sample, the most common were β-blockers (n = 24) and anticonvulsants, including gabapentin and pregabalin (n = 22). Other medications that could have contributed to weight gain included sulfonylureas (n = 5), antipsychotics (n = 4), tricyclic antidepressants (n = 2), and hormone replacement therapies (n = 2).

Primary Outcomes

The mean weight of participants dropped from 129.9 to 114.2 kg over 12 months of weight management medication therapy, an absolute difference of 15.8 kg (Figure 1 and eTable 1 available at doi:10.12788/fp.0117). Weights were recorded at 3, 6, 12, and > 12 months of therapy. At each time point, weight loss was statistically significant (P < .001) compared with baseline (Table 2), although not every patient had a weight recorded at each time point.

When classified by medication choice,

Secondary Outcomes

More than one-half of the patients analyzed lost 5 to 10% from baseline while taking weight management medication.

Among patients who lost at least 5% from baseline, we performed further analysis to assess weight maintenance of 3 to 5% from baseline for 12 months.

Most prescriptions (n = 50) were entered by the endocrinology department in conjunction with the MOVE! program (eTable 3 available at doi:10.12788/fp.0117). All 4 primary care clinics prescribed weight management medications, although 1 clinic prescribed the most. Other prescriptions came from community-based outpatient clinics or other specialties, including gastroenterology, orthopedics, and sleep medicine.

Nineteen (18%) patients experienced an adverse event (AE) that led to medication discontinuation, as recorded in their chart (eTable 4 available at doi:10.12788/fp.0117). The most common AEs were GI upset with liraglutide or orlistat and dull aching and pain with phentermine/topiramate. Two severe AEs occurred: 1 patient experienced a change in mental health status and a suicide attempt with naltrexone/bupropion, and 1 patient discontinued phentermine/topiramate because of a change in neurologic status.

1. Centers for Disease Control and Prevention. Adult obesity facts. Accessed April 2020. https://www.cdc.gov/obesity/data/adult.html

2. The Management of Overweight and Obesity Working Group. VA/DoD Clinical Practice Guideline for Screening and Management of Overweight and Obesity. Accessed March 13, 2021. https://www.healthquality.va.gov/guidelines/CD/obesity/VADoDCPGManagementOfOverweightAndObesityFinal.pdf

3. Jensen MD, Ryan DH, Apovian CM, et al; American College of Cardiology/American Heart Association Task Force on Practice Guidelines; Obesity Society. 2013 AHA/ACC/TOS guideline for the management of overweight and obesity in adults: a report of the American College of Cardiology/American Heart Association Task Force on Practice Guidelines and the Obesity Society. J Am Coll Cardiol. 2014;63(25, pt B):2985-3023. doi:10.1016/j.jacc.2013.11.004

4. Apovian CM, Aronne LJ, Bessesen DH, et al; Endocrine Society. Pharmacological management of obesity: an Endocrine Society clinical practice guideline. J Clin Endocrinol Metab. 2015;100(2):342-362. doi:10.1210/jc.2014-3415

5. Rucker D, Padwal R, Li SK, Curioni C, Lau DCW. Long term pharmacotherapy for obesity and overweight: updated meta-analysis. BMJ. 2007;335(7631):1194-1199. doi:10.1136/bmj.39385.413113.25

6. Siebenhofer A, Winterholer S, Jeitler K, et al. Long-term effects of weight-reducing drugs in people with hypertension. Cochrane Database Syst Rev. 2021;1:CD007654. doi:10.1002/14651858.CD007654.pub5

7. Bramante CT, Raatz S, Bomber EM, Oberle MM, Ryder JR. Cardiovascular risks and benefits of medications used for weight loss. Front Endocrinol (Lausanne). 2020;10:883. doi:10.3389/fendo.2019.00883

8. Christensen R, Kristensen PK, Bartels EM, Bliddal H, Astrup A. Efficacy and safety of the weight-loss drug rimonabant: a meta-analysis of randomized trials. Lancet. 2007;370(9600):1706-1713. doi:10.1016/S0140-6736(07)61721-8

9. US Food and Drug Administration. FDA requests the withdrawal of the weight-loss drug Belviq, Belviq XR (lorcaserin) from the market. Accessed April 2020. https://www.fda.gov/drugs/drug-safety-and-availability/fda-requests-withdrawal-weight-loss-drug-belviq-belviq-xr-lorcaserin-market

10. Pi-Sunyer X, Astrup A, Fujioka K, et al; SCALE Obesity and Prediabetes NN8022-1839 Study Group. A randomized, controlled trial of 3.0 mg of liraglutide in weight management. N Engl J Med. 2015;373(1):11-22. doi:10.1056/NEJMoa1411892

11. Gadde KM, Allison DB, Ryan DH, et al. Effects of low-dose, controlled-release phentermine plus topiramate combination on weight and associated comorbidities in overweight and obese adults (CONQUER): a randomized, placebo-controlled, phase 3 trial. Lancet. 2011;377(9774):1341-1352. doi:10.1016/S0140-6736(11)60205-5

12. Sjöström L, Rissanen A, Andersen T, et al. Randomised placebo-controlled trial of orlistat for weight loss and prevention of weight regain in obese patients. European Multicentre Orlistat Study Group. Lancet. 1998;352(9123):167-172. doi:10.1016/s0140-6736(97)11509-4

13. Smith SR, Weissman NJ, Anderson CM, et al; Behavioral Modification and Lorcaserin for Overweight and Obesity Management (BLOOM) Study Group. Multicenter, placebo-controlled trial of lorcaserin for weight loss. N Engl J Med. 2010;363(3):245-256. doi:10.1056/NEJMoa0909809

14. Warkentin LM, Das D, Majumdar SR, Johnson JA, Padwal RS. The effect of weight loss on health-related quality of life: systematic review and meta-analysis of randomized trials. Obes Rev. 2014;15(3):169-182. doi:10.1111/obr.12113

15. Finkelstein EA, Trogdon JG, Cohen JW, Dietz W. Annual medical spending attributable to obesity: payer- and service-specific estimates. Health Aff (Millwood). 2009;28(5):w822-831. doi:10.1377/hlthaff.28.5.w822

16. Greenway FL, Fujioka K, Plodkowski RA, et al; COR-I Study Group. Effect of naltrexone plus bupropion on weight loss in overweight and obese adults (COR-I): a multicenter, randomized, double-blind, placebo-controlled phase 3 trial. Lancet. 2010;376(9741):595-605. doi:10.1016/S0140-6736(10)60888-4

17. Contrave. Prescribing information. Nalpropion Pharmaceuticals, Inc; 2019.

18. Qsymia. Prescribing information. VIVUS Inc; 2018.

Outcomes Associated With Pharmacist-Led Consult Service for Opioid Tapering and Pharmacotherapy

In the late 1980s and early 1990s, an emphasis on better pain management led health care professionals (HCPs) to increase opioid prescribing to better manage patients' pain. In 1991, 76 million prescriptions were written for opioids in the United States, and by 2011, the number had nearly tripled to 219 million.1 Overdose rates increased as well, nearly tripling from 1999 to 2014.2 Of the 52,404 US deaths from drug overdoses in 2015, 63% involved an opioid.2

Opioid Safety Initiative

In response to the growing opioid epidemic, the US Department of Veterans Affairs (VA) created the Opioid Safety Initiative in 2014.3 This comprehensive, multifaceted initiative was designed to improve the care and safety of veterans managed with opioid therapy and to promote rational opioid prescribing and monitoring. In 2016, the Centers for Disease Control and Prevention (CDC) issued guidelines for opioid prescribing, and the following year the VA and the US Department of Defense (DoD) updated the VA/DoD Clinical Practice Guidelines for Opioid Therapy for Chronic Pain (VA/DoD guidelines).4,5 After the release of these guidelines, the use of opioid tapers expanded. However, in 2019, in response to public outcry over forced opioid tapering, the US Food and Drug Administration updated its opioid labeling requirements to provide clearer guidance on tapering opioids in opioid-tolerant patients.6,7

As a result, HCPs began to develop various strategies to balance the safety and efficacy of opioid use in patients with chronic pain. The West Palm Beach VA Medical Center (WPBVAMC) in Florida has a Pain Clinic that includes 2 pain management clinical pharmacy specialists (CPSs) with specialized training in pain management, who are uniquely qualified to assess and evaluate medication therapy in complex pain cases. These CPSs were involved in the face-to-face management of patients requiring specialized pain care and participated in a pain pharmacy electronic consult (eConsult) service that documented consultative pain management recommendations for patients who could be managed at the primary care level. This formalized process increased specialty pain care access for veterans whose pain was managed by primary care providers (PCPs).

The pain pharmacy eConsult service was initiated at the WPBVAMC in June 2013 to assist PCPs in managing outpatients with chronic pain. The eConsult service included evaluation of patients' electronic health records (EHRs) by CPSs. It also gave PCPs the option to engage a pharmacist who could provide recommendations for opioid dose conversion, opioid tapering, pain pharmacotherapy, or drug screen interpretation, without the need for an additional patient visit.

After the release of the 2016 CDC (and later the 2017 VA/DoD) guidelines recommending reduced morphine equivalent daily dose (MEDD) levels, the WPBVAMC had a large increase in pain eConsult requests for opioid tapering and opioid pharmacotherapy. A 3.4-fold increase in requests occurred in March, April, and May compared with the following 9 months, along with a nearly 4-fold increase in requests for opioid tapers during the same period. However, the impact of the completed eConsults was unclear. Therefore, the primary objective of this study was to assess the effect of CPS services for opioid tapering and opioid pharmacotherapy by quantifying the number of recommendations accepted/implemented by PCPs. Secondary objectives included evaluating harms associated with the recommendations (eg, increases in emergency department [ED] visits, hospitalizations, suicide attempts, or PCP visits) and provider satisfaction.

Methods

A retrospective chart review was completed to assess data of patients from the WPBVAMC and its associated community-based outpatient clinics (CBOCs). The project was approved by the WPBVAMC Scientific Advisory Committee as part of the facility’s performance improvement efforts.

Included patients had a pain pharmacy eConsult placed between April 1, 2016, and March 31, 2017. EHRs were reviewed, and only eConsults for opioid pharmacotherapy recommendations or opioid tapers were evaluated. eConsults were excluded if the request was discontinued, completed by an HCP other than the pain CPS, or placed for an opioid dose conversion, nonopioid pharmacotherapy, or drug screen interpretation.

Data for analyses were entered into Microsoft Excel 2016 and were securely saved and accessible to relevant researchers. Patient protected health information used during patient care remained confidential.

Demographic data were collected, including age, gender, race, pertinent medical comorbidities (eg, diabetes mellitus, sleep apnea), and mental health comorbidities. Pain scores were collected at baseline and 6 months postconsult. Pain medications used by patients were recorded at baseline and 6 months postconsult, including concomitant opioid and benzodiazepine use, MEDD, and other pain medications. The time the pain CPS needed to complete each eConsult and the total time from eConsult entry to HCP implementation of the initial recommendation were also collected. The number of actionable recommendations (eg, changes in drug therapy, urine drug screens [UDSs], and referrals to other services) also was recorded and reviewed 6 months postconsult to determine the number and percentage of recommendations implemented by the HCP. The EHR was examined for adverse events (AEs) within 6 months postconsult (eg, any documentation of a suicide attempt, calls to the Veterans Crisis Line, or death). Collected data also included new eConsults, the reason for opioid tapering as given by either the HCP or the patient, and an assessment of economic harms (the number of ED visits, hospitalizations, or unscheduled PCP visits with uncontrolled pain as the chief reason within 6 months postconsult). Last, PCPs were sent a survey to assess their satisfaction with the pain eConsult service.

Results

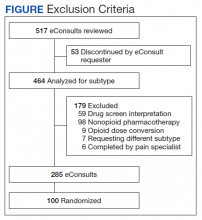

Of 517 eConsults received from April 1, 2016 to March 31, 2017, 285 (55.1%) met inclusion criteria (Figure). Using a random number generator, 100 eConsults were further reviewed for outcomes of interest.
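The screening and sampling steps reduce to one proportion and a simple random sample drawn without replacement. The following is a minimal Python sketch, assuming the 285 eligible eConsults are labeled with hypothetical sequential IDs 1 to 285 and using Python's standard `random` module; the study does not name the random number generator it used, and the seed value is an illustrative assumption.

```python
import random

TOTAL_ECONSULTS = 517
ELIGIBLE_ECONSULTS = 285
SAMPLE_SIZE = 100

def inclusion_rate(eligible: int, total: int) -> float:
    """Percentage of received eConsults that met inclusion criteria."""
    return 100 * eligible / total

def draw_sample(eligible_ids, k, seed=None):
    """Simple random sample of k IDs, drawn without replacement.

    Recording the seed lets a second reviewer regenerate the same sample.
    """
    rng = random.Random(seed)
    return sorted(rng.sample(eligible_ids, k))

# Hypothetical consult IDs; real records would be keyed by chart identifiers.
eligible_ids = list(range(1, ELIGIBLE_ECONSULTS + 1))
sample = draw_sample(eligible_ids, SAMPLE_SIZE, seed=2017)

print(f"{inclusion_rate(ELIGIBLE_ECONSULTS, TOTAL_ECONSULTS):.1f}%")  # 55.1%
print(len(sample))  # 100
```

Sampling without replacement guarantees that no chart is reviewed twice, and sorting the drawn IDs only makes the review list easier to work through.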

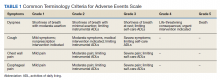

In this cohort, the mean age was 61 years, 87% were male, and 80% were White individuals. Most patients (83%) had ≥ 1 mental health comorbidity, and 53% had ≥ 2, with depressive symptoms, tobacco use, and/or posttraumatic stress disorder the most common diagnoses (Table 1). Eighty-seven percent of eConsults were for opioid tapers and the remaining 13% were for opioid pharmacotherapy.

The median pain score at time of consult was 6 on a 10-point scale, with no change at 6 months postconsult. However, 41% of patients overall had a median 3.3-point drop in pain score, 17% had no change in pain score, and 42% had a median 2.6-point increase in pain score.

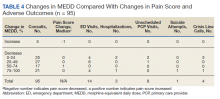

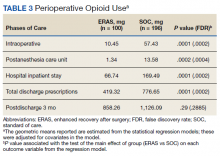

At the time of consult, 24% of patients had an opioid and a benzodiazepine prescribed concurrently. At the time of the initial request, the mean MEDD was 177.5 mg (median, 165; range, 0-577.5). At 6 months postconsult, the mean MEDD was 71 mg (median, 90; range, 0-450), with a mean MEDD decrease of 44%. Eighteen percent of patients had no change in MEDD, and 5% had an increase.
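Two different statistics can both be described as a percentage MEDD decrease. Applied to the cohort means above, (177.5 - 71) / 177.5 gives a 60% fall in the mean MEDD, whereas averaging each patient's own percentage decrease weights every patient's relative change equally and can produce a smaller figure; this is one way a mean decrease of 44% can coexist with a 60% fall in the cohort mean. The minimal Python sketch below contrasts the two. The 4-patient cohort is hypothetical, and skipping patients with a baseline MEDD of 0 (whose percentage change is undefined) is an assumption.

```python
def mean(values):
    return sum(values) / len(values)

def pct_decrease(before: float, after: float) -> float:
    """Percentage decrease from before to after (negative for an increase)."""
    return 100 * (before - after) / before

def pct_decrease_of_means(baseline, followup):
    """Percentage decrease in the cohort mean MEDD."""
    return pct_decrease(mean(baseline), mean(followup))

def mean_of_pct_decreases(baseline, followup):
    """Average of each patient's own percentage MEDD decrease.

    Assumption: patients with a baseline MEDD of 0 are skipped because
    their percentage change is undefined.
    """
    return mean([pct_decrease(b, f) for b, f in zip(baseline, followup) if b > 0])

# Cohort means reported in the text: 177.5 mg at consult, 71 mg at 6 months.
print(round(pct_decrease(177.5, 71), 1))  # 60.0

# Hypothetical cohort: one high-dose patient tapered off, three unchanged.
baseline = [400, 100, 100, 100]
followup = [0, 100, 100, 100]
print(round(pct_decrease_of_means(baseline, followup), 1))  # 57.1
print(round(mean_of_pct_decreases(baseline, followup), 1))  # 25.0
```

The change in the mean is dominated by high-dose patients, while the mean of individual changes is not, so stating which statistic is reported avoids apparent contradictions between figures.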

One concern was the number of patients whose pain management regimen consisted of either opioid monotherapy or a combination of opioids and skeletal muscle relaxants (SMRs). SMRs can increase opioid overdose risk and are not indicated for long-term use (except baclofen for spasticity). Thirty-five percent of patients were taking either opioid monotherapy or opioids and SMRs for chronic pain management at the time of consult, and 28% were doing so 6 months postconsult.

Electronic Consults

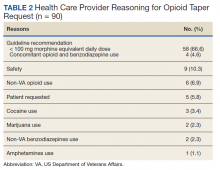

Table 2 describes the reasons eConsults were requested. The most common reason was to taper the dose to comply with the CDC 2016 guideline recommendation of an MEDD < 90 mg; the VA/DoD guideline later set this threshold at 100 mg.

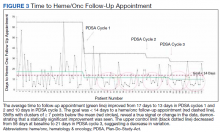

eConsults were completed a mean of 11.5 days after the PCP request, including nights and weekends. The CPS spent a mean of 66.8 minutes completing each eConsult. Once the eConsult was completed, PCPs took a mean of 9 days to initiate the primary recommendation. This 9-day mean excludes 11 eConsults with no accepted recommendations and 11 eConsults for which the PCP implemented the primary recommendation before the CPS completed the consult, most likely because of a phone call or direct contact with the CPS when the eConsult was ordered.

The CPS made a mean of 3.5 actionable recommendations per eConsult, and PCPs implemented a mean of 1.6 recommendations within 6 months. At least 1 recommendation was accepted/implemented for 89% of patients, and a mean of 55% of recommendations were accepted/implemented. For the remaining 11% of eConsults, PCPs accepted none of the final recommendations, and the reasons were not clearly documented.

Adverse Outcomes

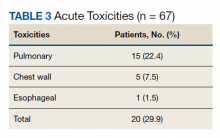

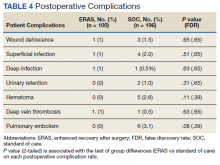

In the 6 months postconsult, 11 patients (7 men and 4 women) experienced 32 AEs (Table 3). Eight patients had 15 ED visits, 3 of which resulted in hospitalization; 8 patients had 9 unscheduled PCP visits; 1 patient reported suicidal ideation; and 2 patients made a total of 4 calls to the Veterans Crisis Line. There were also 2 deaths; however, both were due to end-stage disease (cirrhosis and amyotrophic lateral sclerosis) and were not believed to be related to eConsult recommendations.

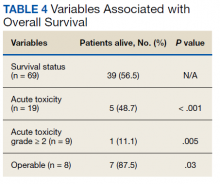

Eight patients had a history of substance use disorders (SUDs), and 8 had a history of a mood disorder or psychosis. One patient had both an SUD and a mood/psychosis-related mental health disorder, as well as a reported suicide attempt/ideation at an ED visit followed by a hospitalization. A similar number of AEs occurred in patients with MEDD decreases of 0 to 24% as in those who received more aggressive tapers of 75 to 100% (Table 4).
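Comparing AEs across taper intensities requires assigning each patient to a band of percentage MEDD decrease. The following is a minimal Python sketch of that tallying step. Only the 0 to 24% and 75 to 100% bands come from the text. The middle bands, the separate "increase" and "no baseline opioid" labels, the band boundaries, and the patient records are all hypothetical.

```python
from collections import defaultdict

def decrease_band(baseline_medd: float, followup_medd: float) -> str:
    """Assign a patient to a percentage-MEDD-decrease band (hypothetical scheme)."""
    if baseline_medd <= 0:
        return "no baseline opioid"  # percentage change is undefined
    pct = 100 * (baseline_medd - followup_medd) / baseline_medd
    if pct < 0:
        return "increase"
    if pct < 25:
        return "0-24%"
    if pct < 50:
        return "25-49%"
    if pct < 75:
        return "50-74%"
    return "75-100%"

def tally_by_band(patients):
    """Count patients and AEs per band from (baseline, followup, ae_count) tuples."""
    counts = defaultdict(lambda: {"patients": 0, "aes": 0})
    for baseline, followup, aes in patients:
        band = decrease_band(baseline, followup)
        counts[band]["patients"] += 1
        counts[band]["aes"] += aes
    return dict(counts)

# Hypothetical patients: (baseline MEDD mg, 6-month MEDD mg, number of AEs)
patients = [(200, 190, 2), (160, 0, 1), (120, 120, 0), (90, 100, 0)]
print(tally_by_band(patients))
```

Keeping dose increases in their own band prevents them from being counted as small decreases in the 0 to 24% group.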

Nine patients were reconsulted; only 1 reconsult was due to the PCP not implementing recommendations from the initial consult. No factors were found that correlated with the likelihood of a patient being reconsulted.

Surveys on PCP satisfaction with the eConsult service were completed by 29 of the 55 PCPs. Feedback was generally positive, with nearly 90% of respondents planning to use the service in the future and recommending it to other providers.

PCPs also were given the option to indicate the most important factor in their overall satisfaction with the eConsult service (time, access, safety, expectations, or confidence). Safety was the providers’ top choice, with time a close second.

Discussion

For most patients (89%), PCPs accepted at least 1 recommendation from the completed eConsult, and the mean MEDD decreased by 60% (from 177.5 mg to 71 mg), likely reducing patients’ risk of overdose or other opioid-related AEs. There also was a slight reduction in patients’ mean pain scores; however, 41% of patients had a decrease and 42% had an increase in pain score. There was no clear relationship between pain scores and MEDDs, likely lending credence to the idea that pain scores are largely subjective and an unreliable surrogate marker for assessing the effectiveness of analgesic regimens.

Eleven patients experienced AEs, including 1 patient whose PCP did not implement the recommendations. Eight of the 11 had multiple AEs. Notably, 7 of the 11 patients with an AE tested positive for unexpected substances on routine UDS or were arrested for driving while intoxicated (DWI); however, only 3 of the 7 had an active SUD diagnosis. With 25% of the AEs coming from patients with a history of SUD, it is important that any history of SUD be documented in the EHR. Maintaining this documentation can be especially difficult if patients switch VA medical centers or receive services outside the VA. Ideally, a thorough and accurate history and chart review should be completed before prescribing opioids.

Guidelines

While the PCPs were following VA/DoD and CDC recommendations for opioid tapering to < 100 or < 90 mg MEDD, respectively, these guidelines provide only weak evidence to support specific MEDD cutoffs. The CDC guidelines even state, “a single dosage threshold for safe opioid use could not be identified.”5 One of the largest issues with using MEDD as a cutoff is the lack of agreement on how to calculate it. In 2014, Nuckols and colleagues conducted a study comparing existing guidelines on the use of opioids for chronic pain. While 13 guidelines were considered eligible, most recommendations were supported only by observational data or expert recommendations, and there was no consensus on what constitutes a “morphine equivalent.”8 Currently, there is no universally accepted opioid-conversion method, which creates a substantial problem when calculating an MEDD.9 A survey of 8 online opioid dose conversion tools found variation ranging from -55% to +242%.10 In response to the large variations found in these analyses, Fudin and colleagues concluded that the studies “unequivocally disqualify the validity of embracing MEDD to assess risk in any meaningful statistical way.”11 Pharmacogenetics, drug tolerance, drug-drug interactions, body surface area, and organ function are patient-specific factors that are not considered when relying solely on an MEDD calculation. Tapering to the lowest functional dose rather than to a specific number or cutoff may be a more effective way to treat patients, and providers should use the guidelines as recommendations rather than hard-line mandates.
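An MEDD is a weighted sum: each opioid's total daily dose is multiplied by a conversion factor, and the products are added together. Because conversion tables disagree, the same regimen can fall on either side of a dosing threshold. The following is a minimal Python sketch, assuming the CDC 2016 oral conversion factors for table A (methadone and transdermal fentanyl, which use dose-dependent or unit-specific factors, are omitted). Table B is a purely hypothetical alternative, and the regimen is also hypothetical.

```python
# Conversion factors: oral morphine mg equivalent per mg of daily dose.
# TABLE_A: CDC 2016 factors for these oral opioids.
# TABLE_B: hypothetical alternative table, for illustration only.
TABLE_A = {"morphine": 1.0, "hydrocodone": 1.0, "oxycodone": 1.5, "hydromorphone": 4.0}
TABLE_B = {"morphine": 1.0, "hydrocodone": 1.0, "oxycodone": 1.0, "hydromorphone": 3.0}

def medd(regimen, factors):
    """Sum of total daily dose (mg) x conversion factor across all opioids."""
    return sum(dose * factors[drug] for drug, dose in regimen.items())

# Hypothetical regimen: total daily oral doses in mg.
regimen = {"oxycodone": 40, "hydromorphone": 8}
a = medd(regimen, TABLE_A)  # 40 x 1.5 + 8 x 4.0
b = medd(regimen, TABLE_B)  # 40 x 1.0 + 8 x 3.0
print(a, b)                          # 92.0 64.0
print(a >= 90, b >= 90)              # True False
print(f"{100 * (b - a) / a:+.0f}%")  # -30%
```

Under these assumed tables, the same patient is above a 90 mg threshold by one calculation and well below it by the other. The calculation also has no input for the patient-specific factors listed above.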

At 6 months, 6 patients were receiving no pain medications from the VA, and 24 patients had been tapered from their opioid to discontinuation. It is unclear whether these patients stopped taking opioids or switched their care to non-VA providers to receive medications, including opioids, privately. This is difficult to verify, though a prescription drug monitoring program (PDMP) could be used to assess patient adherence. Because many of the patients were tapered after aberrant behaviors were identified, a lack of continuity of care across health care systems may result in future patient harm.

The results of this analysis highlight the importance of checking PDMP databases and obtaining routine UDSs when prescribing opioids, since there can be serious safety concerns if patients are taking other prescribed or illicit medications. However, care must be taken: in 2 instances, a VA provider discontinued a patient’s chronic opioid prescription after a PDMP review showed that the patient had received non-VA opioids. In both cases, the quantities and doses received were small (counts of ≤ 12) and had been received more than 6 months before the PDMP check. While this constitutes a breach of the informed consent for long-term opioid use, if there are no other concerning behaviors, it may be more prudent to review the informed consent with the patient and discuss why the behavior is a breach, so that patients and PCPs continue to work as a team to manage chronic pain.

Limitations

The study population was one limitation of this project. While data suggest that chronic pain affects women more than men, this study’s population was only 13% female. Thirty percent of the women in this study had an AE compared with only 8% of the men. Additional limitations included the use of problem lists for comorbidities, as these lists may be inaccurate or outdated, and limiting AE monitoring to 6 months. Because some tapers were not initiated immediately and some taper schedules can last several months to years, AE counts may have been higher if patients had been followed longer. Many of the patients with AEs had increased ED visits or unscheduled primary care visits as their tapers progressed and their pain worsened, but these visits fell outside the 6-month data collection window. An additional weakness of this review was that it assessed pain scores but not functional status, which may be a better predictor of the effectiveness of a patient’s pain management regimen; functional status should be assessed in future studies to obtain more reliable data. Finally, PCP survey results also should be viewed with caution. The current survey had only 29 respondents, and the 2014 survey had only 10 respondents and did not include CBOC providers.

Conclusion

A pain eConsult service managed by CPSs specializing in pain management can assist patients and PCPs with opioid therapy recommendations in a safe and timely manner, reducing the risk of overdose secondary to high-dose opioid therapy with limited harm to patients.

1. National Institute on Drug Abuse. Increased drug availability is associated with increased use and overdose. Published June 9, 2020. Accessed February 19, 2021. https://www.drugabuse.gov/publications/research-reports/prescription-opioids-heroin/increased-drug-availability-associated-increased-use-overdose

2. Rudd RA, Seth P, David F, Scholl L. Increases in drug and opioid-involved overdose deaths - United States, 2010-2015. MMWR Morb Mortal Wkly Rep. 2016;65(50-51):1445-1452. Published December 30, 2016. doi:10.15585/mmwr.mm655051e1

3. US Department of Veterans Affairs, Office of Inspector General. Healthcare inspection – VA patterns of dispensing take-home opioids and monitoring patients on opioid therapy. Report 14-00895-163. Published May 14, 2014. Accessed February 2, 2021. https://www.va.gov/oig/pubs/VAOIG-14-00895-163.pdf

4. US Department of Veterans Affairs, US Department of Defense, Opioid Therapy for Chronic Pain Work Group. VA/DoD clinical practice guidelines for opioid therapy for chronic pain. Version 3.0. Published December 2017. Accessed February 2, 2021. https://www.va.gov/HOMELESS/nchav/resources/docs/mental-health/substance-abuse/VA_DoD-CLINICAL-PRACTICE-GUIDELINE-FOR-OPIOID-THERAPY-FOR-CHRONIC-PAIN-508.pdf

5. Dowell D, Haegerich TM, Chou R. CDC guideline for prescribing opioids for chronic pain - United States, 2016 [published correction appears in MMWR Recomm Rep. 2016;65(11):295]. MMWR Recomm Rep. 2016;65(1):1-49. Published March 18, 2016. doi:10.15585/mmwr.rr6501e1

6. US Food and Drug Administration. FDA identifies harm reported from sudden discontinuation of opioid pain medicines and requires label changes to guide prescribers on gradual, individualized tapering. Updated April 17, 2019. Accessed February 2, 2021. https://www.fda.gov/drugs/fda-drug-safety-podcasts/fda-identifies-harm-reported-sudden-discontinuation-opioid-pain-medicines-and-requires-label-changes

7. Dowell D, Haegerich T, Chou R. No shortcuts to safer opioid prescribing. N Engl J Med. 2019;380(24):2285-2287. doi:10.1056/NEJMp1904190

8. Nuckols TK, Anderson L, Popescu I, et al. Opioid prescribing: a systematic review and critical appraisal of guidelines for chronic pain. Ann Intern Med. 2014;160(1):38-47. doi:10.7326/0003-4819-160-1-201401070-00732

9. Rennick A, Atkinson T, Cimino NM, Strassels SA, McPherson ML, Fudin J. Variability in opioid equivalence calculations. Pain Med. 2016;17(5):892-898. doi:10.1111/pme.12920

10. Shaw K, Fudin J. Evaluation and comparison of online equianalgesic opioid dose conversion calculators. Pract Pain Manag. 2013;13(7):61-66.