Cost Comparison of 2 Video Laryngoscopes in a Large Academic Center

From the Department of Anesthesiology, Thomas Jefferson University and Hospitals, Sidney Kimmel Medical College, Philadelphia, PA, and Sidney Kimmel Medical College at Thomas Jefferson University, Philadelphia, PA.

Objective: To retrospectively examine hospital cost information for patients requiring endotracheal intubation with video laryngoscopy, and to provide a practical cost assessment of the McGRATH and GlideScope video laryngoscopes (VLs).

Methods: This study examined 52 hospital locations within a single, large university hospital, most of which were operating rooms. A total of 34 600 endotracheal intubations were performed over 24 months, of which 11 345 were video laryngoscopies. Electronic medical records containing demographic data and information related to endotracheal intubation procedures, with monthly breakdowns between GlideScope and McGRATH intubations, were reviewed. Cost information was calculated for equipment, blades, batteries, and repairs, and a subsequent analysis was performed to determine cost differences between the 2 instruments during the COVID-19 period.

Results: A total of 5501 video laryngoscopy procedures were performed using the McGRATH VL and 5305 were performed using the GlideScope VL. Costs over 24 months were $181 093 (55.5%) lower for the McGRATH than for the GlideScope. The mean (SD) monthly costs for GlideScope blades were $3837 ($1050) and $3236 ($538) for years 1 and 2, respectively, vs $1652 ($663) and $2933 ($585) for McGRATH blades (P < .001). Most of the total cost difference was attributed to equipment and blade purchases, which were $202 595 (65.0%) higher for the GlideScope. During the COVID-19 period, use of the McGRATH increased to 61% of all video laryngoscopy cases, compared to 37% for the GlideScope (P < .001). The blade cost for the COVID-19 period was $128 higher for the McGRATH, even though 293 more intubations were performed with that device.

Conclusions: Use of the McGRATH resulted in a cost savings of 55% compared to the GlideScope, and its use was highest during the COVID-19 period, which may be explained by its more portable and practical features.

Keywords: video laryngoscope; McGRATH; GlideScope; endotracheal intubation; hospital costs; COVID-19.

Hospitals have come to rely on video laryngoscopes (VLs) for tracheal intubation as necessary tools for better visualization of airways. Modern video laryngoscopy developed in the 2000s1 as a progression from direct laryngoscopy, which began in 1852 when Horace Green used a bent tongue spatula and sunlight to examine a child.2 VLs have seen many improvements and adaptations of their own, resulting in many different styles and types circulating around hospitals. The GlideScope (Verathon Inc, Bothell, WA) and the McGRATH (Medtronic, Minneapolis, MN) are examples of such instruments, which are now widely used in the US and are the 2 VLs of choice at our institution.

A few studies have compared VLs to direct laryngoscopes. In their systematic review, Lewis et al have shown the numerous benefits of using a VL over a direct laryngoscope. Some general conclusions were that the use of video laryngoscopy reduced the number of failed intubations, decreased laryngeal trauma, and provided improved visualizations.3 Other studies have compared the different types of VLs, including the McGRATH and the GlideScope, examining factors such as intubation time and display quality of the image. Two studies found that medical students were equally successful at using both the McGRATH and the GlideScope,4,5 while another study found that care providers using the GlideScope had quicker intubation times.6 Lastly, Savoldelli et al concluded that more providers preferred the McGRATH, which provided better laryngeal views,7 while their subsequent study showed more favorable learning curves of the Airtraq compared to the McGRATH and other VLs.8

Although there have been no reported differences in safety and effectiveness of the McGRATH and GlideScope devices, cost data on the use of these 2 popular laryngoscopes are lacking. Such information is important considering the increasing costs of medical technologies and the significant financial losses experienced by health care systems due to the COVID-19 crisis. The purpose of this retrospective cohort study was to compare the cost efficiency of the McGRATH MAC and GlideScope Core VLs at a large academic center.

Methods

This retrospective study was performed under exemption from the Thomas Jefferson University Institutional Review Board. The primary data sources consisted of hospital electronic patient records (EPIC) and cost information from the device manufacturers and hospital staff. The electronic patient data were provided by the EPIC Enterprise Analytics Business Intelligence group at Thomas Jefferson University Hospital (Center City Campus, Philadelphia, PA), while device costs were obtained from Verathon, Medtronic, and departmental staff responsible for purchasing equipment. Monthly data were obtained over a 24-month period (June 2018 through May 2020) when the McGRATH VL was placed into use in the department of anesthesiology. The 2 types of VLs were made available for use in a total of 52 locations, with the majority being hospital operating rooms.

The following variables were recorded: number of endotracheal intubations performed each month with breakdown between video laryngoscopy and flexible bronchoscopy airways, frequency of use for each type of laryngoscope, blades used, and equipment costs for use of each laryngoscope. Hospital cost estimates for both the McGRATH and GlideScope laryngoscopes included batteries, handles, blades, and the devices themselves. Cost data were also collected on frequency of device failure, maintenance, and replacement of parts and lost equipment.

Analysis

De-identified electronic medical records consisted of nominal and quantitative variables, with demographic data and information related to the endotracheal intubation procedure. All data were sorted chronologically by date and then coded to identify device type and allocate pertinent cost information. Descriptive statistics were reported as mean (SD) and sum for costs; frequency tables were generated for intubation procedures according to device type and time period. Data were analyzed using the χ2 test, the Student t test, and the Wilcoxon Mann-Whitney U test, with the threshold for statistical significance set at P = .05. SPSS version 26 and GraphPad Prism version 6 were used for all statistical analyses.
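
As an illustration of the Student t test named above, the following sketch applies a pooled two-sample t test to the summary statistics given in the Results for monthly video laryngoscopy procedures (mean [SD] of 221 [54] for the GlideScope and 229 [89] for the McGRATH). It assumes 24 monthly observations per device and a pooled-variance test; the source does not state which test produced the reported P = .71, so this is only a plausibility check, not the authors' actual procedure. The function names are hypothetical, and the P value is obtained by numerically integrating the t density with the standard library rather than with SPSS or GraphPad Prism.

```python
import math


def t_pdf(x, df):
    """Density of Student's t distribution with df degrees of freedom."""
    log_norm = (math.lgamma((df + 1) / 2) - math.lgamma(df / 2)
                - 0.5 * math.log(df * math.pi))
    return math.exp(log_norm - (df + 1) / 2 * math.log1p(x * x / df))


def two_sided_p(t, df, steps=2000):
    """Two-sided P value: 1 minus the area under the density on [-|t|, |t|].

    The area on [0, |t|] is computed with Simpson's rule (steps must be even)
    and doubled, using the symmetry of the t distribution.
    """
    upper = abs(t)
    if upper == 0.0:
        return 1.0
    h = upper / steps
    total = t_pdf(0.0, df) + t_pdf(upper, df)
    for i in range(1, steps):
        total += (4 if i % 2 else 2) * t_pdf(i * h, df)
    return 1.0 - 2.0 * (total * h / 3)


def pooled_t_test(mean1, sd1, n1, mean2, sd2, n2):
    """Pooled-variance two-sample t test from summary statistics."""
    df = n1 + n2 - 2
    pooled_var = ((n1 - 1) * sd1 ** 2 + (n2 - 1) * sd2 ** 2) / df
    se = math.sqrt(pooled_var * (1 / n1 + 1 / n2))
    t = (mean1 - mean2) / se
    return t, df, two_sided_p(t, df)


# Assumption: 24 monthly values per device (one per study month).
t_stat, df, p_value = pooled_t_test(229, 89, 24, 221, 54, 24)
print(f"t = {t_stat:.3f}, df = {df}, P = {p_value:.2f}")
```

Under these assumptions the computed P value is close to the reported .71, which is consistent with a comparison of monthly counts rather than of the 24-month totals.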

Results

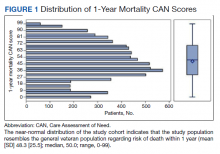

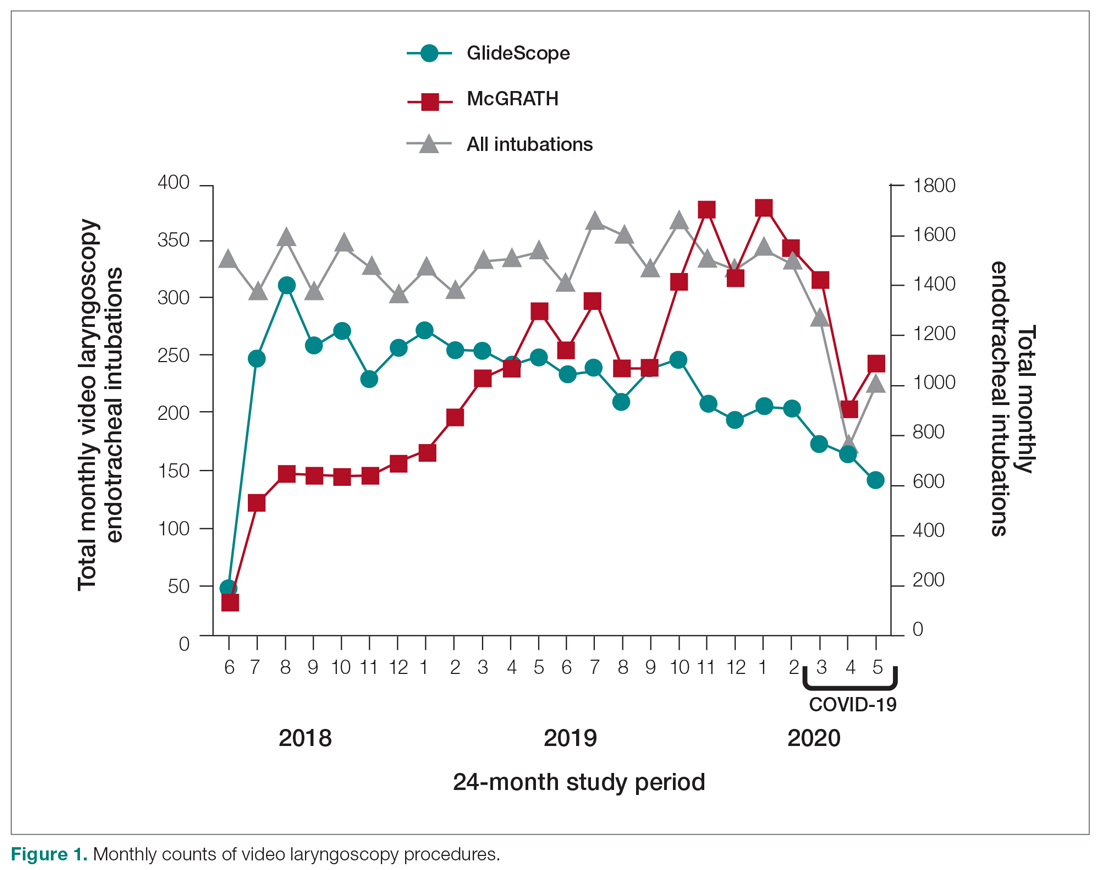

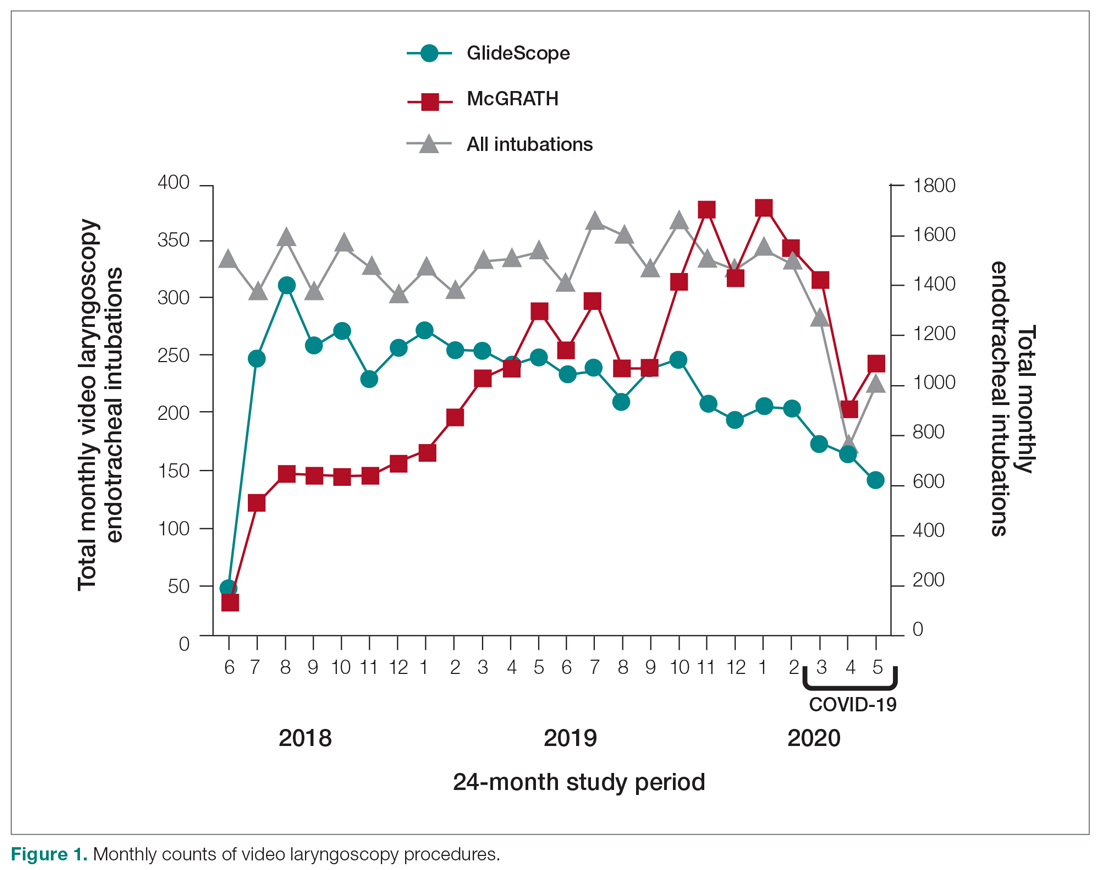

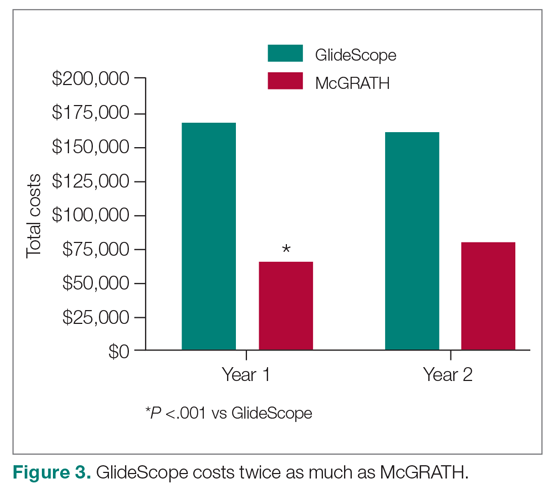

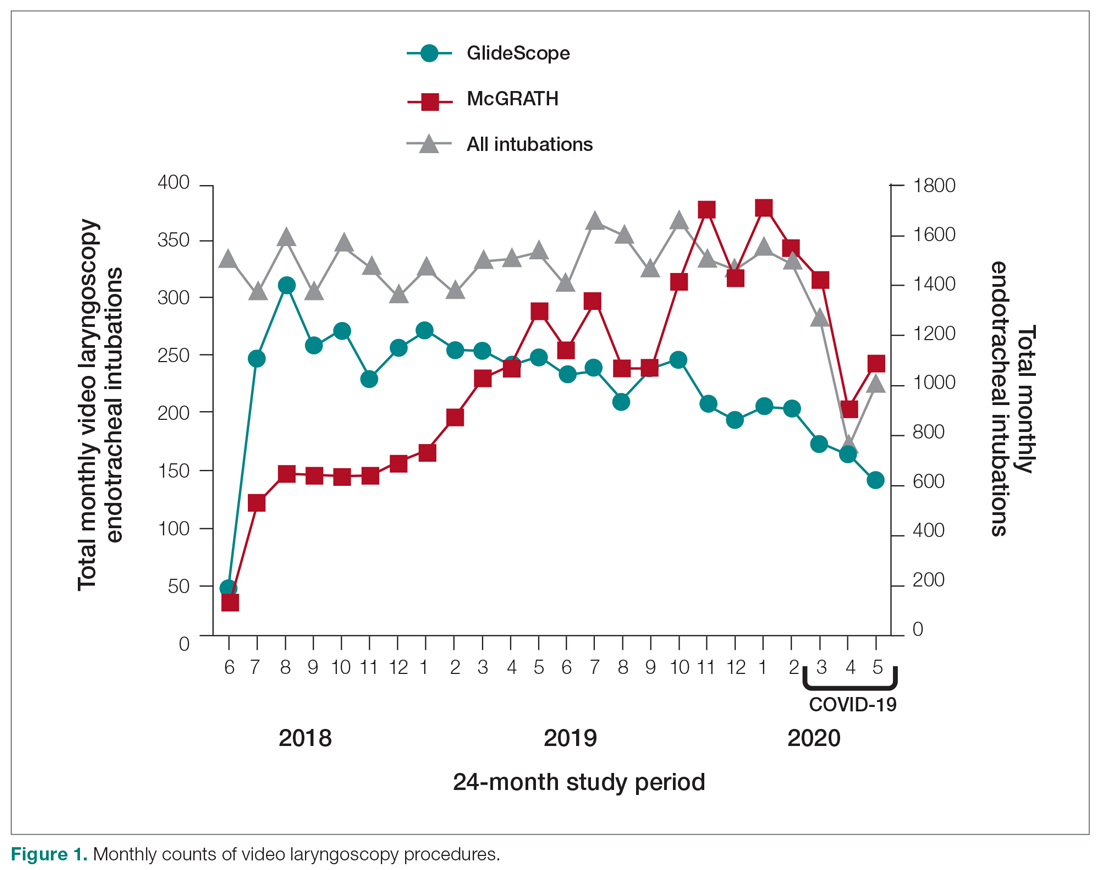

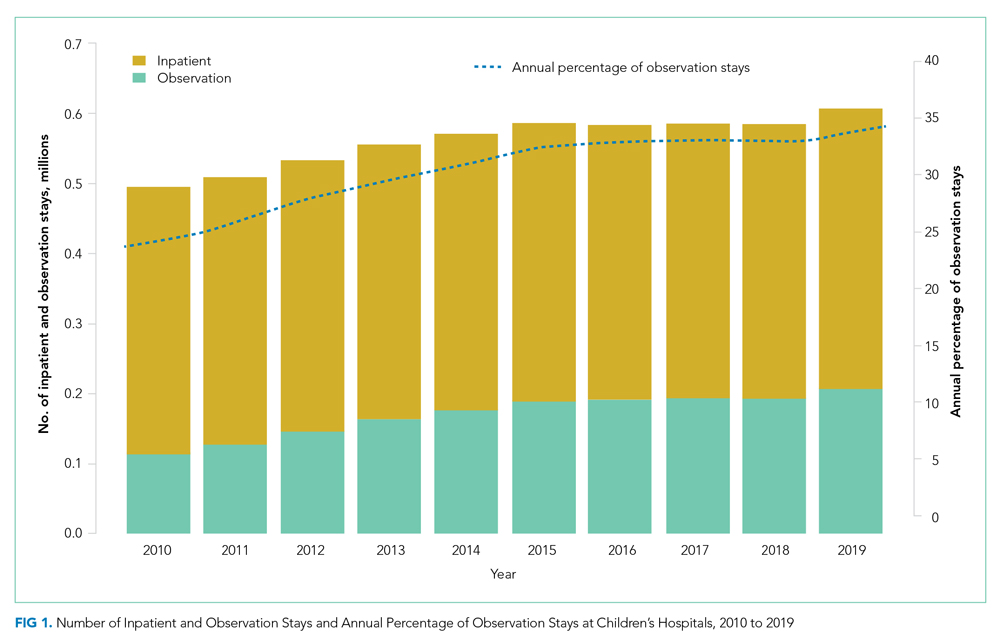

A total of 34 600 endotracheal intubations were performed over the 24-month study period, and 11 345 (32.8%) were video laryngoscopy procedures. Of all video laryngoscopy procedures, 5501 (48.5%) were performed using the McGRATH VL and 5305 (46.8%) were performed using the GlideScope VL. The remaining 539 (4.8%) cases were flexible bronchoscopy procedures and endotracheal intubations using other video laryngoscopy equipment. The mean (SD) monthly number of video laryngoscopy procedures for the 24 months was 221 (54) and 229 (89) for the GlideScope and McGRATH devices, respectively. Monthly endotracheal intubation distributions over 24 months trended upward for the McGRATH VL and downward for the GlideScope, but there was no statistically significant difference in overall use between the 2 instruments (P = .71; Figure 1).
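
The proportions in the paragraph above follow directly from the reported counts. The short sketch below recomputes them as an arithmetic check; the variable and function names are illustrative and not taken from the study's analysis files.

```python
# Counts reported for the 24-month study period.
total_intubations = 34_600
video_laryngoscopy = 11_345
mcgrath = 5_501
glidescope = 5_305
months = 24


def pct(part, whole):
    """Percentage of `whole` represented by `part`, rounded to 1 decimal."""
    return round(100 * part / whole, 1)


# Cases not attributed to either device (flexible bronchoscopy, other VLs).
other = video_laryngoscopy - mcgrath - glidescope

print("VL share of all intubations:", pct(video_laryngoscopy, total_intubations))
print("McGRATH share of VL cases:  ", pct(mcgrath, video_laryngoscopy))
print("GlideScope share of VL cases:", pct(glidescope, video_laryngoscopy))
print("Other cases:", other, f"({pct(other, video_laryngoscopy)}%)")

# Monthly means implied by the totals, for comparison with the reported
# 221 (GlideScope) and 229 (McGRATH).
print("GlideScope per month:", round(glidescope / months))
print("McGRATH per month:   ", round(mcgrath / months))
```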

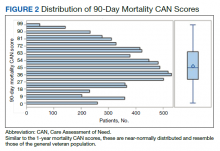

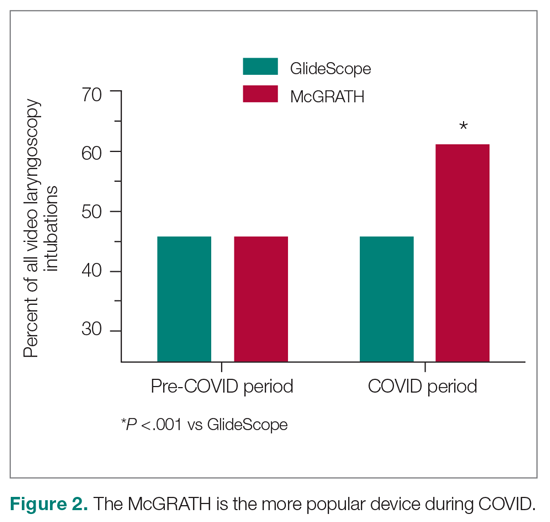

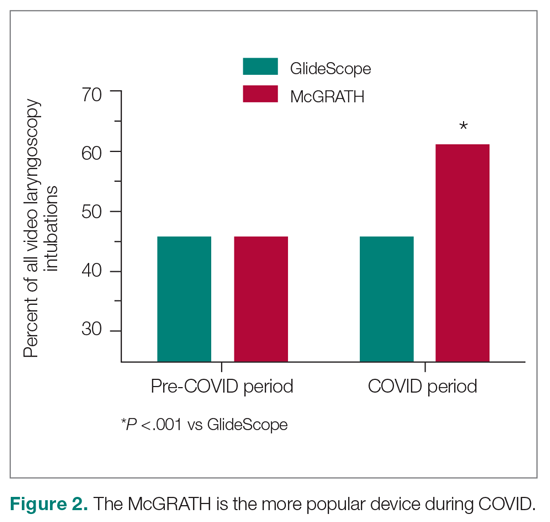

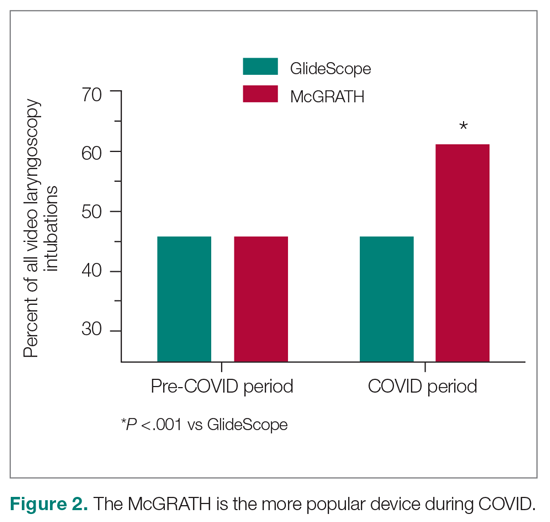

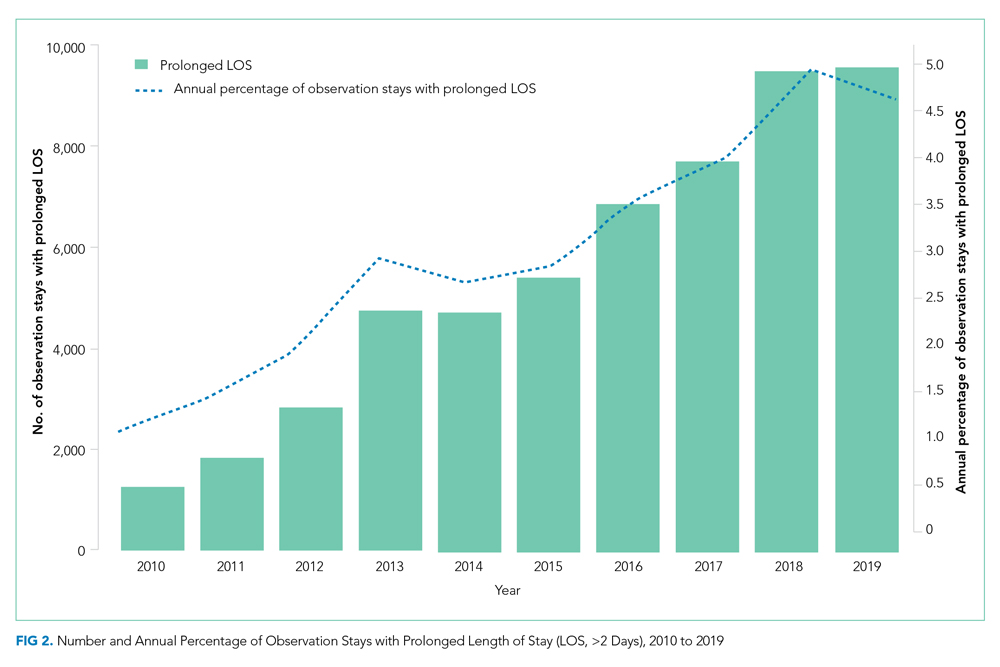

To examine the observed usage trends between the 2 VLs during the first and last 12 months, a univariate ANOVA was conducted with the 2 time periods entered as predictors in the model. Video laryngoscopy intubations were performed more frequently with the GlideScope during the first 12 months (P = .001); however, use of the McGRATH VL increased during the following 12 months compared to the GlideScope (P < .001). The GlideScope accounted for 54% of all VL intubations during the first 12 months, and the McGRATH accounted for 58% of all video laryngoscopy procedures for months 12 to 24. Additionally, the increase in video laryngoscopy procedures with the McGRATH during the last 3 months of the study period occurred despite an overall reduction in surgical volume due to the COVID-19 crisis, defined for this study as March 1, 2020, to May 31, 2020 (Figure 1). There was a statistically significant difference in the case distribution between the McGRATH and GlideScope VLs for that period (P < .001). Anesthesia personnel’s use of the McGRATH VL increased to 61% of all video laryngoscopy cases, compared to 37% for the GlideScope (Figure 2).

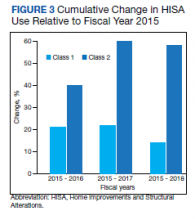

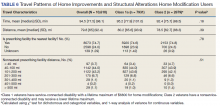

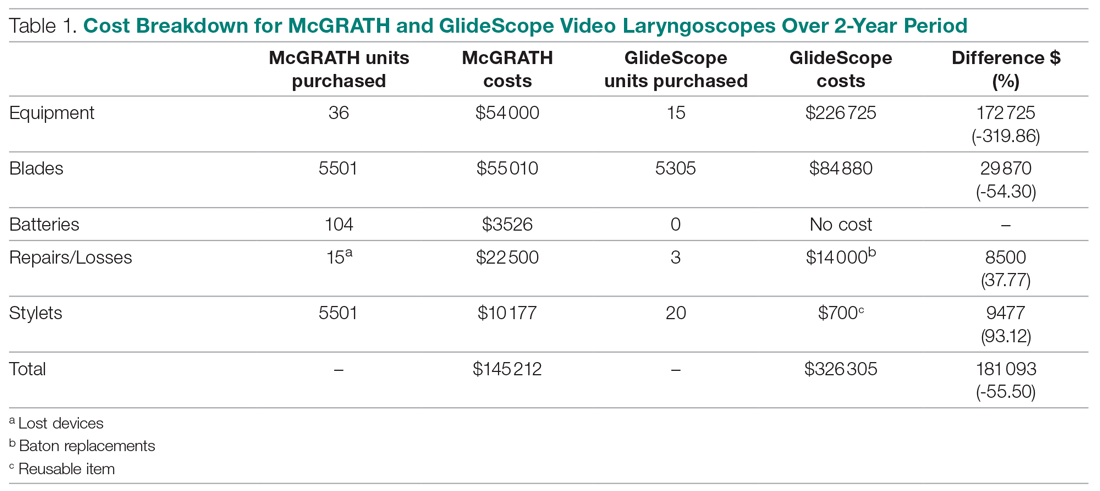

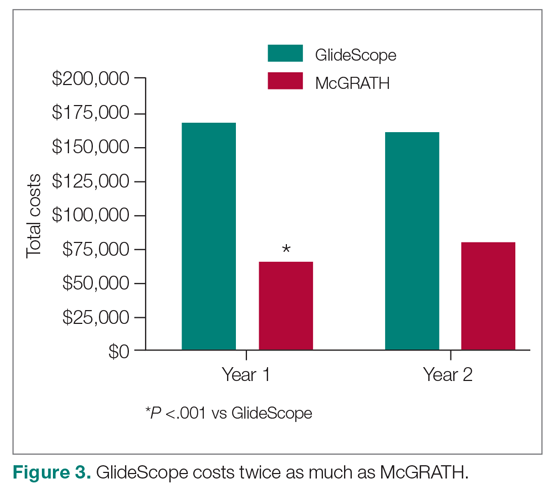

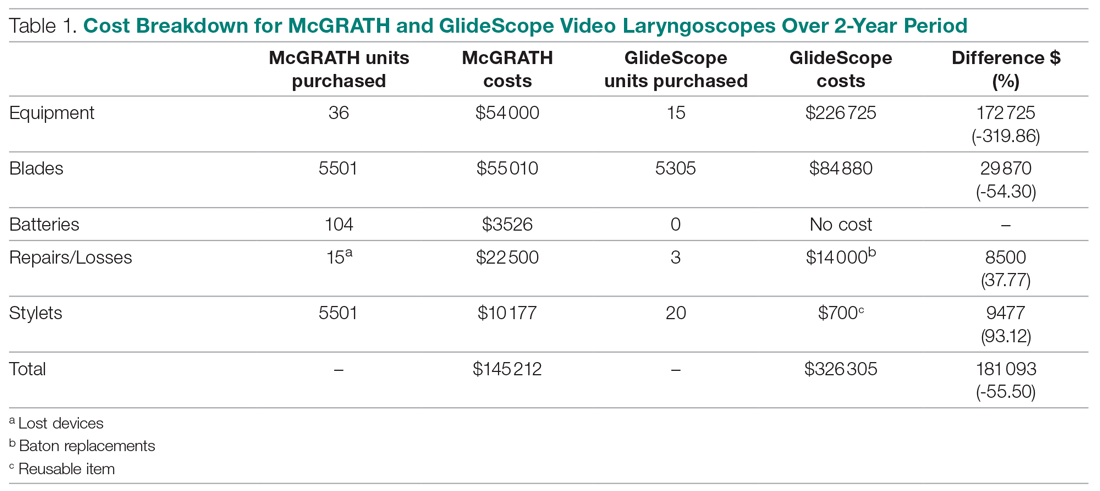

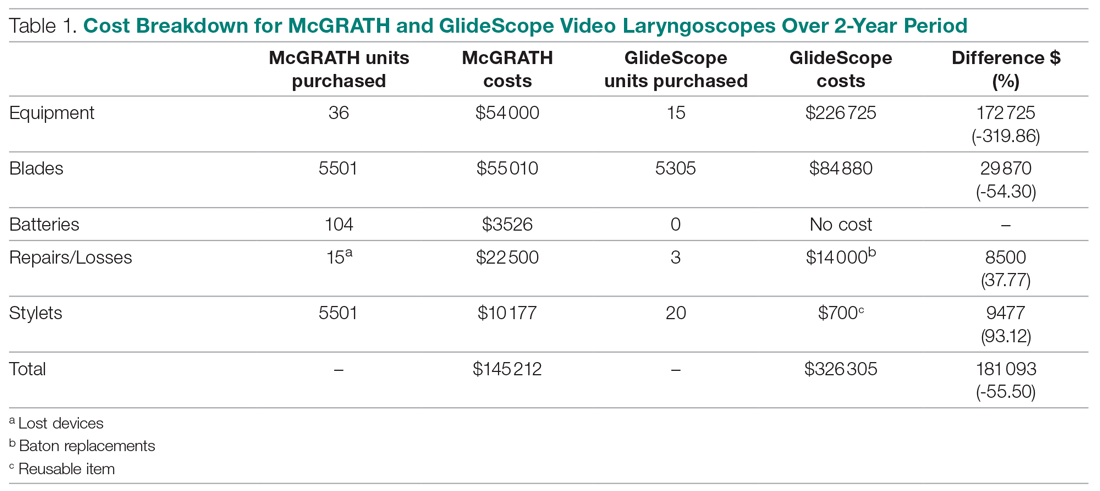

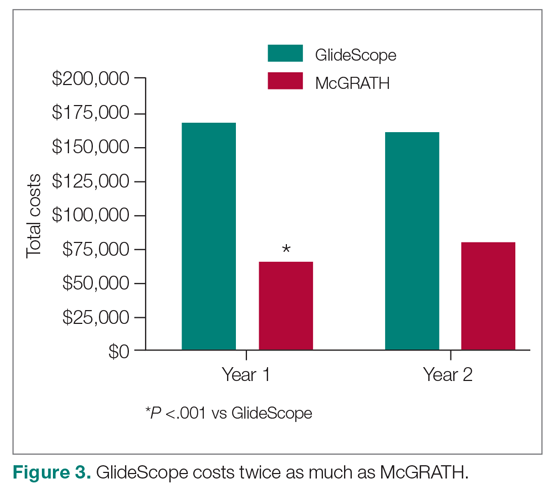

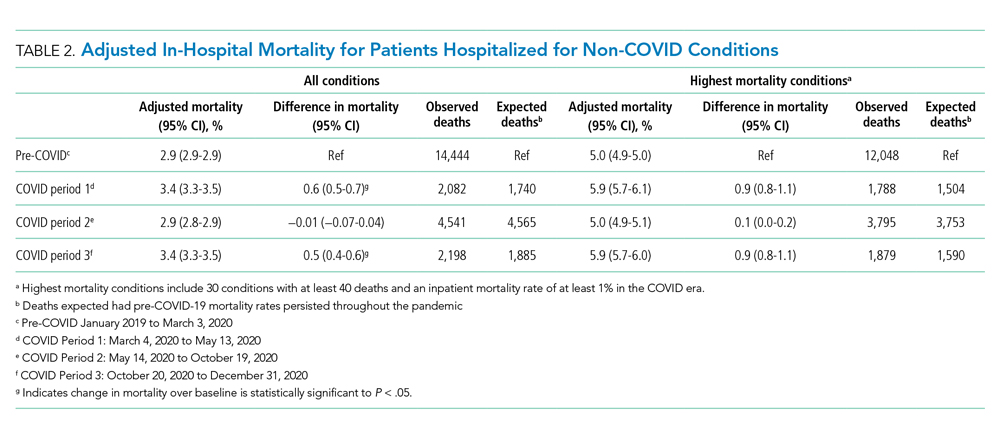

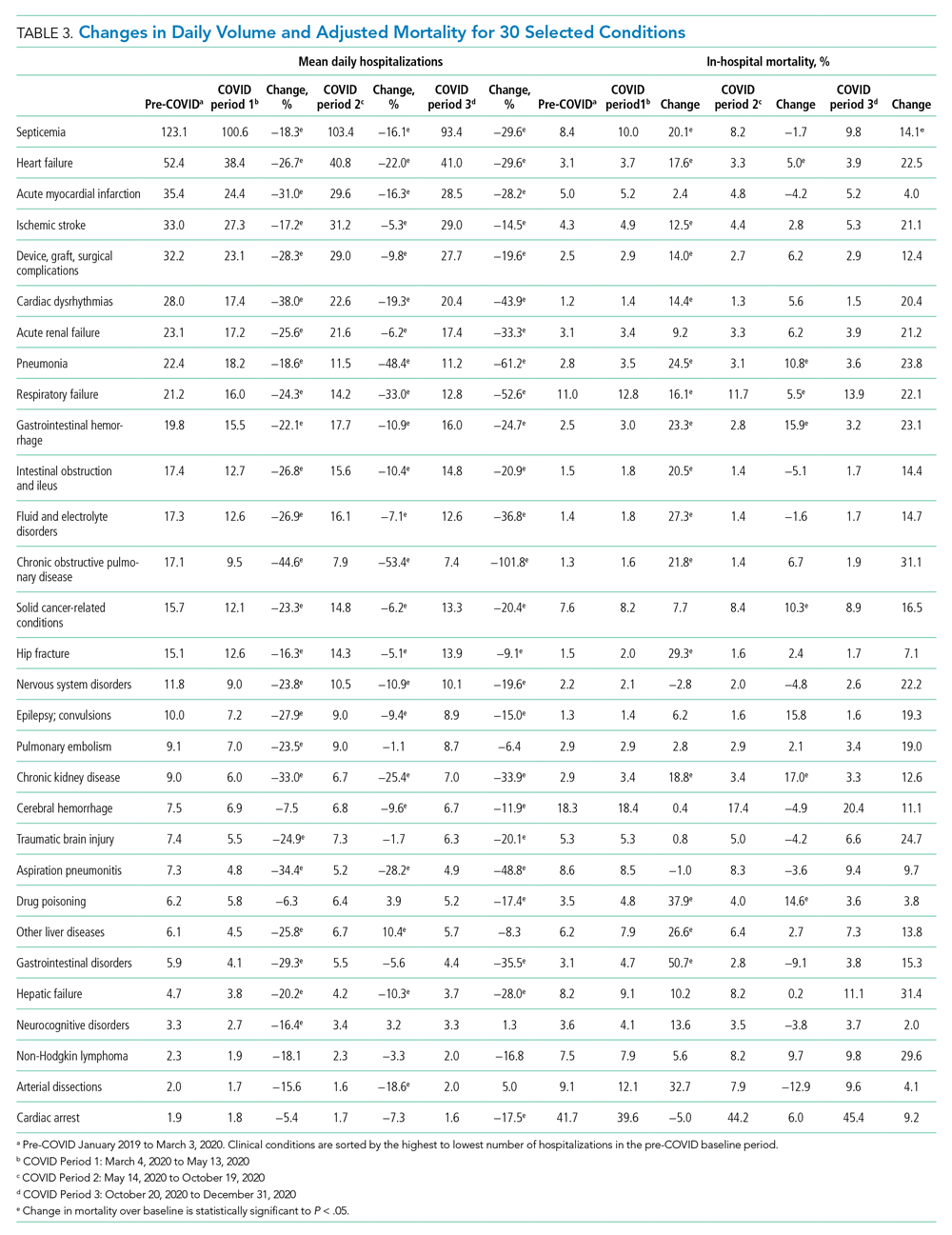

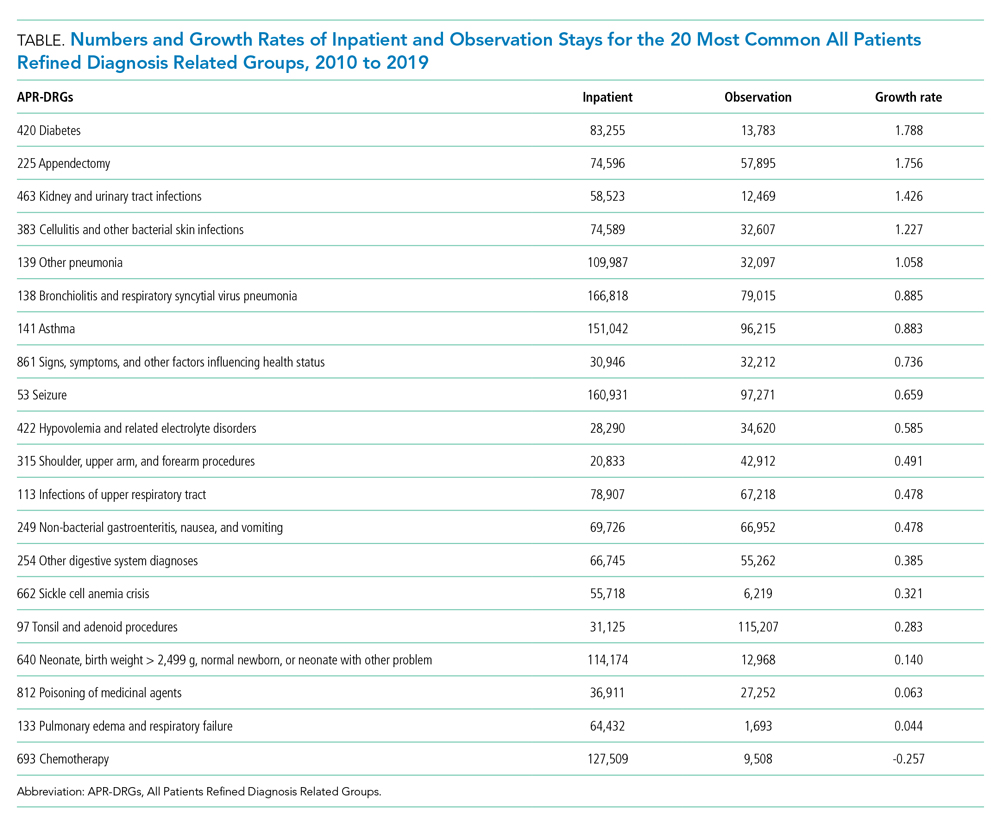

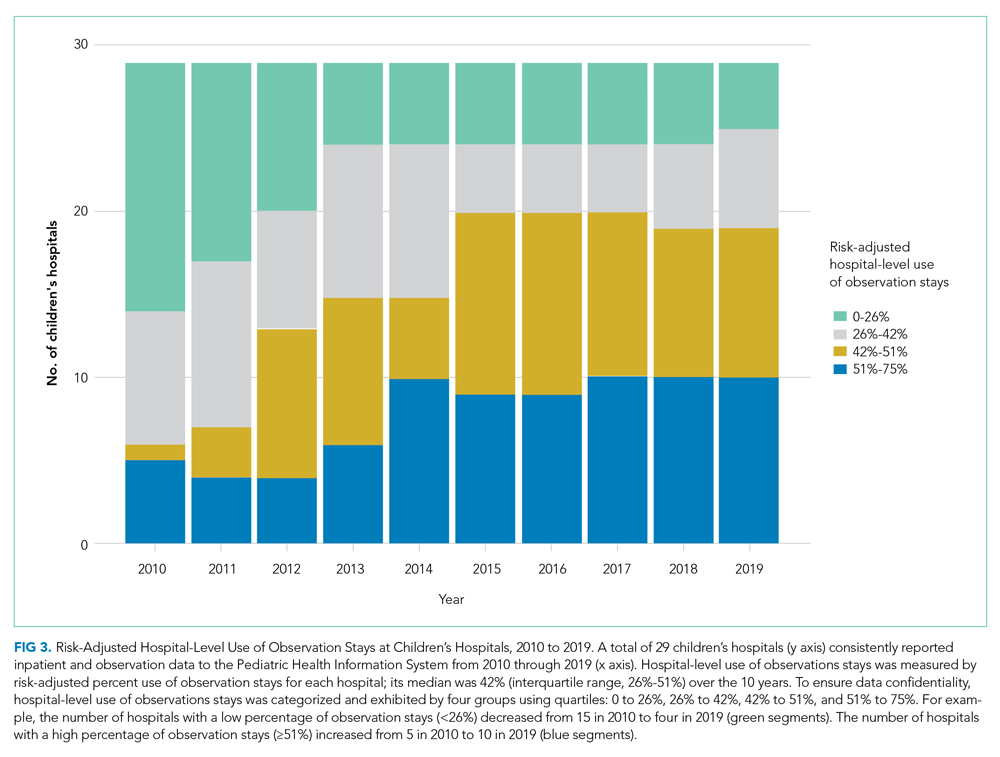

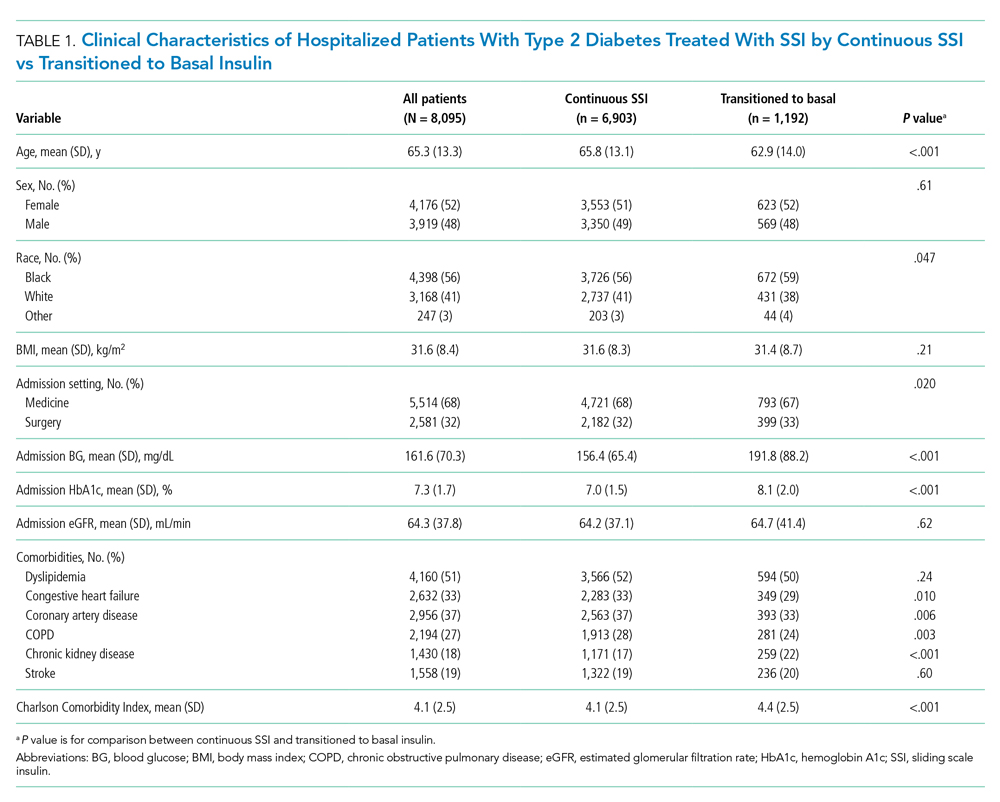

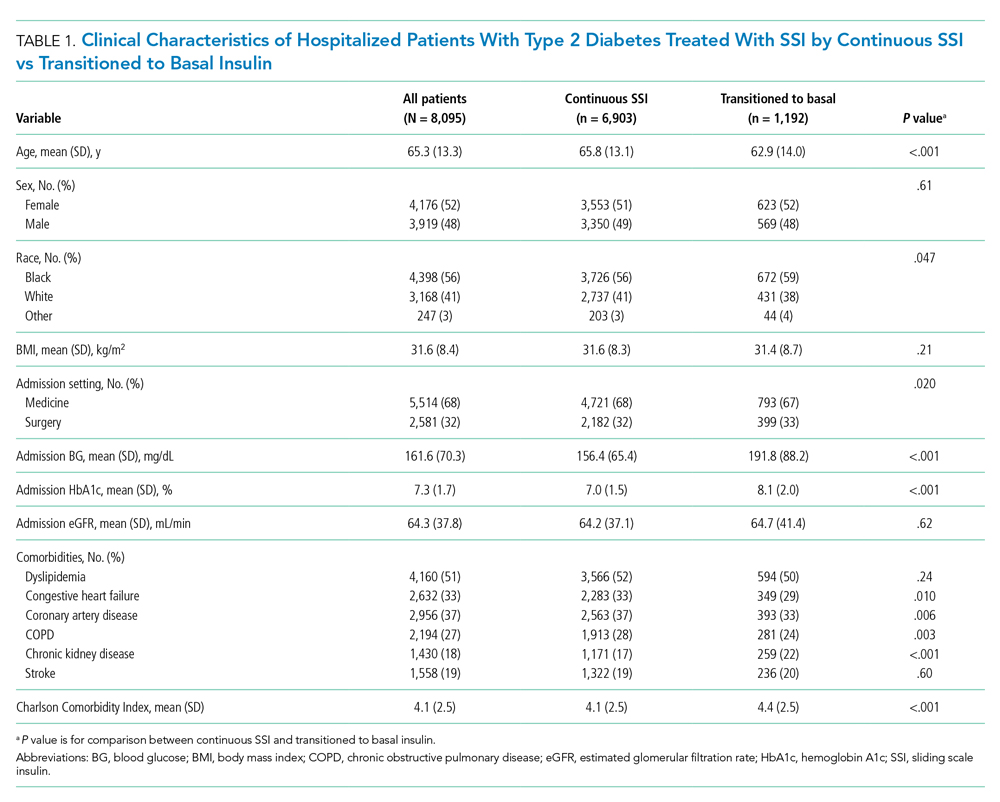

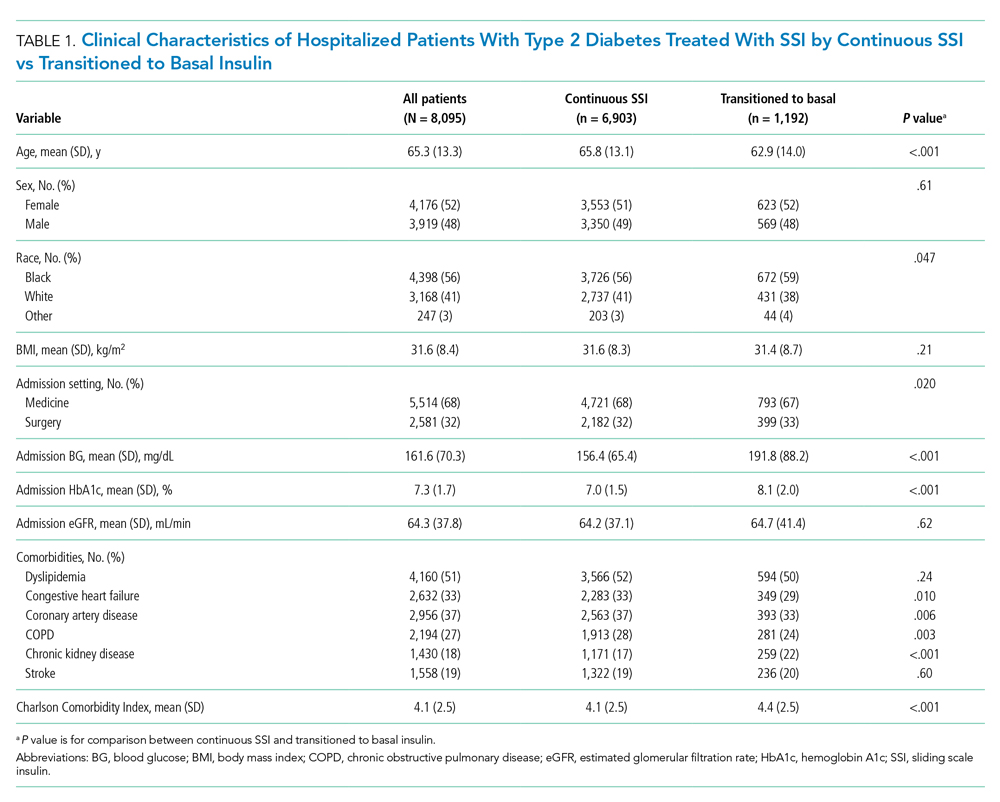

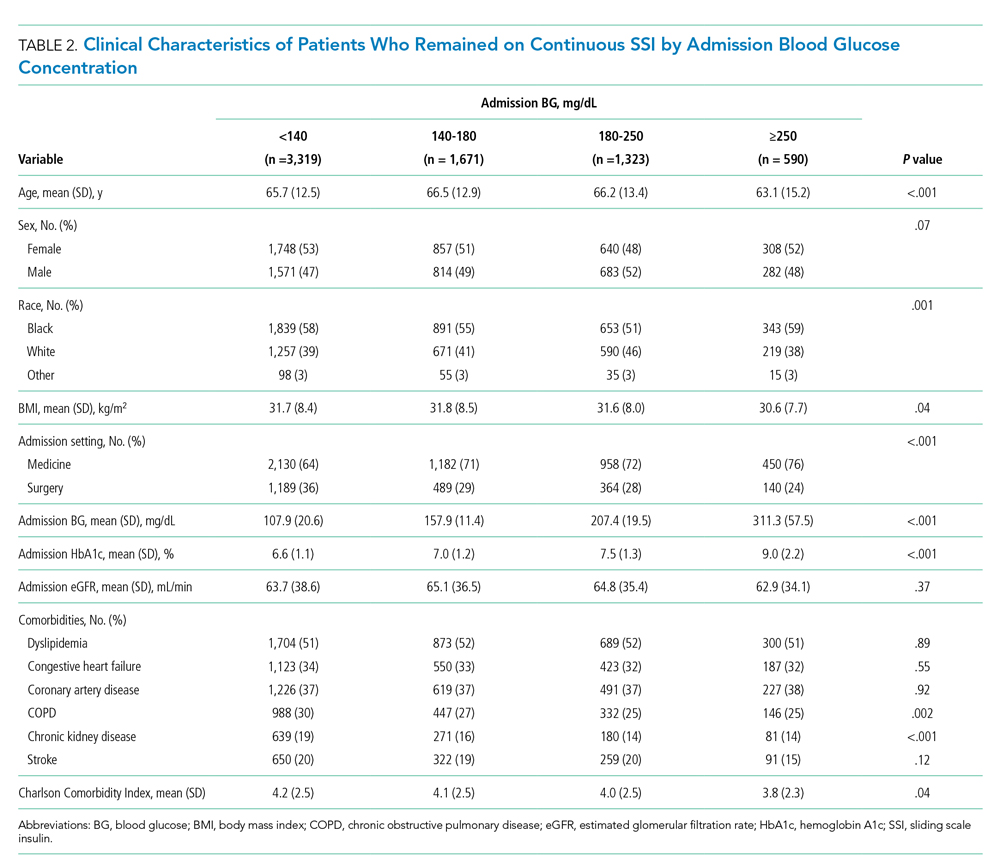

The total costs calculated for equipment, blades, and repairs are presented in Table 1, and yearly total costs are shown in Figure 3. Overall costs were $181 093 (55.5%) lower for the McGRATH VL than for the GlideScope over the 24-month period. The mean (SD) monthly costs for GlideScope VL blades were $3837 ($1050) and $3236 ($538) for years 1 and 2, respectively, vs $1652 ($663) and $2933 ($585) for the McGRATH VL blades. Most of the total cost difference was attributed to equipment and blade purchases, which were $202 595 (65.0%) higher for the GlideScope than for the McGRATH VL. Monthly blade costs alone were higher for the GlideScope over the 2-year period (P < .001); however, the McGRATH VL required use of disposable stylets at a cost of $10 177 for all endotracheal intubations, compared to $700 for the GlideScope device.
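
To put the monthly blade figures on the same scale as the 24-month totals, the sketch below multiplies each reported monthly mean by 12 and sums the 2 years per device. Because the published means are rounded, the results are approximations and may differ slightly from the exact amounts in Table 1; the dictionary layout and function name are illustrative only.

```python
MONTHS_PER_YEAR = 12

# Reported mean monthly blade costs in US dollars.
mean_monthly_blade_cost = {
    "GlideScope": {"year 1": 3837, "year 2": 3236},
    "McGRATH": {"year 1": 1652, "year 2": 2933},
}


def approx_two_year_blade_cost(device):
    """Approximate 24-month blade cost from the rounded monthly means."""
    yearly_means = mean_monthly_blade_cost[device]
    return sum(mean * MONTHS_PER_YEAR for mean in yearly_means.values())


glidescope_blades = approx_two_year_blade_cost("GlideScope")
mcgrath_blades = approx_two_year_blade_cost("McGRATH")

print("GlideScope blades, 24 months: about $", glidescope_blades)
print("McGRATH blades, 24 months:    about $", mcgrath_blades)
print("Approximate difference:       about $", glidescope_blades - mcgrath_blades)
```

The gap narrows in year 2, when mean monthly McGRATH blade costs rose as use of that device increased.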

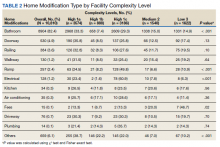

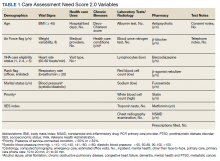

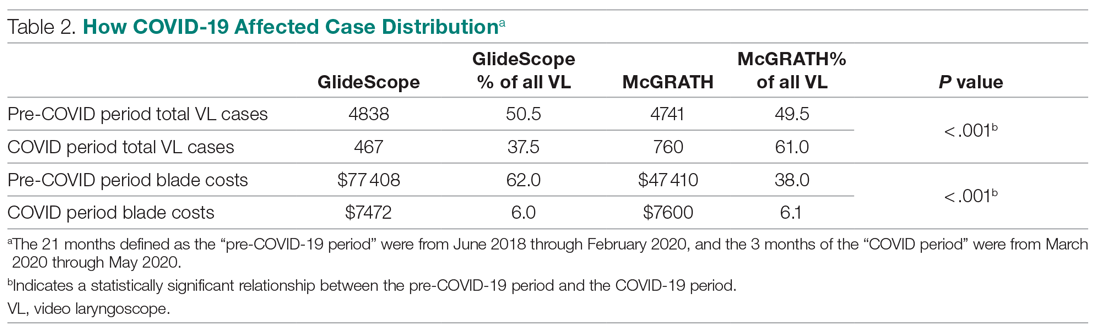

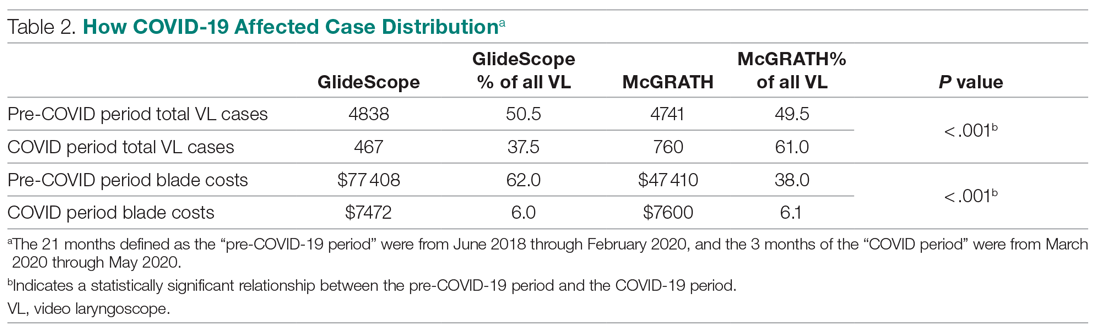

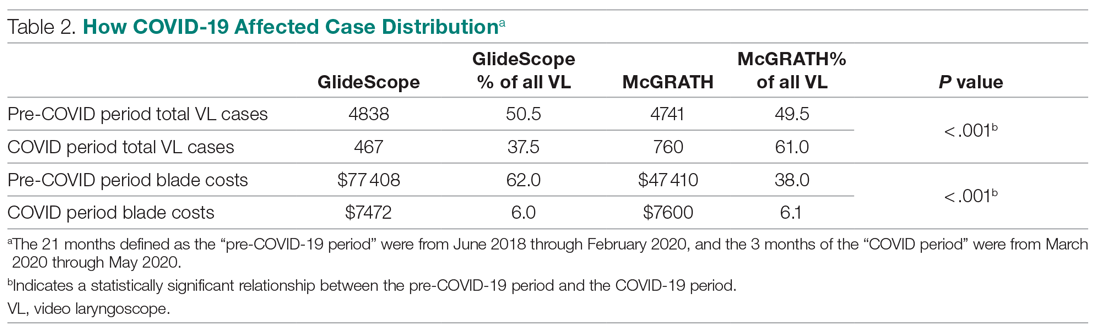

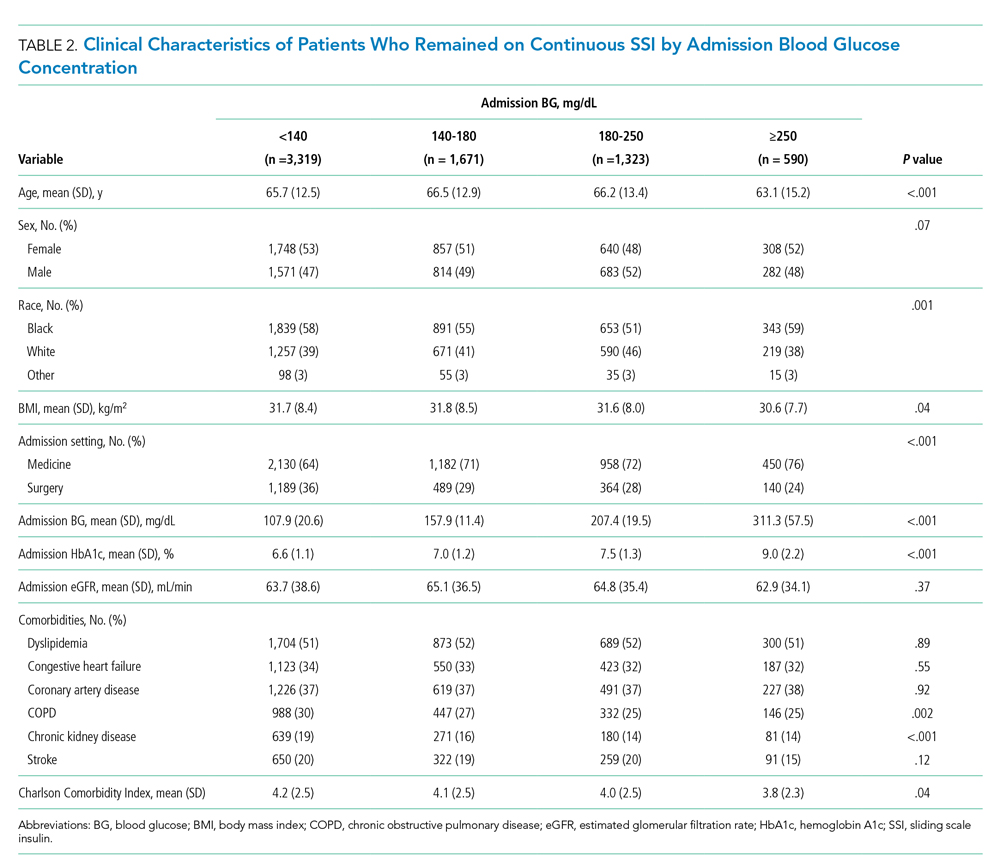

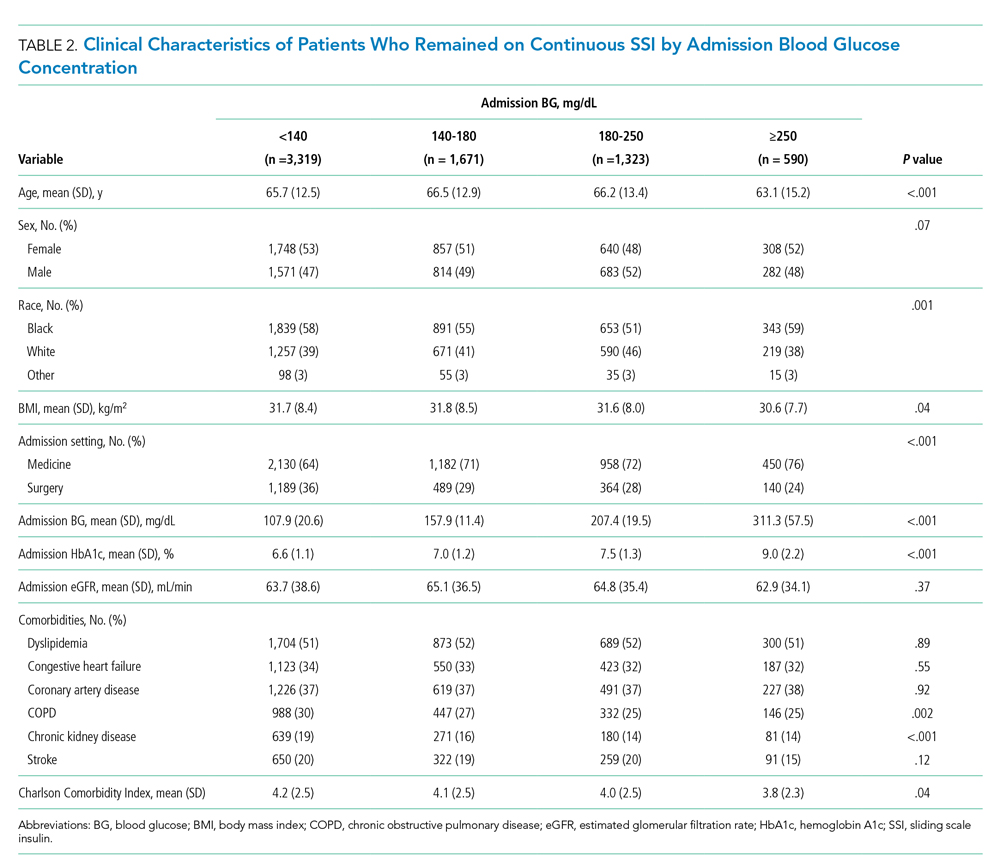

An analysis was performed to determine whether costs differed between the 2 instruments during the COVID-19 period. There was a statistically significant difference in the case distribution between the McGRATH and GlideScope VLs during that period (P < .001). The calculated blade cost for the COVID-19 period was $128 higher for the McGRATH, even though 293 more intubations were performed with that device (Table 2).

Discussion

We attempted to provide useful cost estimates by presenting pricing data reflecting the approximate cost that most large institutional anesthesia practices would incur for using those 2 specific devices and related peripherals. The main findings of our analysis showed that use of the McGRATH MAC VL resulted in a 55% cost savings compared to the GlideScope, with a similar number of cases performed with each device over the 24-month study period. We believe this represents a substantial savings to the department and institution, which has prompted internal review on the use of video laryngoscopy equipment. None of the McGRATH units failed; however, the GlideScope required 3 baton replacements.

Of note, use of the McGRATH MAC increased during the COVID-19 period, which may be explained by the fact that the operators found it to be a more portable device. Several physicians in the department commented that its smaller size made the McGRATH MAC more practical during the time when a plexiglass box was being used around the patient’s head to shield the intubator from aerosolized viral particles.

Although this study demonstrated the cost-saving value of the McGRATH over the GlideScope, a suggested next step would be to examine resource utilization related to video laryngoscopy use. More dynamic tracking of the use of these devices should facilitate the assessment of existing related resources and decision making, to optimize the benefits of this initiative. We would anticipate a reduced need for anesthesia personnel, such as technicians who assist with the management of this device, which could be significant. As new respiratory viruses are appearing each year, video laryngoscopy will continue to gain increasing use in operating rooms and acute care locations. The addition of protective barriers between patients and providers calls for use of the most practical and effective VL devices, to protect personnel who are at high risk of contamination from airway secretions and aerosolized particles.9,10

The COVID-19 pandemic has demonstrated the value of anesthesiology with regard to analyzing and finding solutions to effectively manage infected patients or those suspected of infection in the perioperative environment. Inexpensive products are often avoided because cheaper devices are assumed to be of lower quality. However, the association between cost and quality, and the assumption that a higher price is positively correlated with higher quality, is overall inconsistent in the medical literature.11 A more effective or higher quality treatment does not necessarily cost more and may actually end up costing less,12 as was the case in this study. We have been able to directly cut departmental expenses by using a more efficient and cost-effective device for intubations, without compromising safety and efficacy. Future studies should determine whether this significant reduction in costs from video laryngoscopy intubations with the McGRATH VL will be sustained across anesthesiology departments in the Jefferson Health Enterprise Hospitals, or other health systems, and should assess its impact on workflow and personnel resources.

This analysis was restricted to one of the campuses of the Jefferson Health Enterprise. However, this is the largest anesthesia practice, encompassing several locations, which should reflect the general practice patterns across other anesthesiology departments in this large institution. The costs for the devices and peripherals may vary across anesthesia practices depending on volume and contracts negotiated with the suppliers. It was not possible to estimate this variability, which could change the total costs by a few percentage points. We recognize that there may be other costs associated with securing the McGRATH VL to prevent loss from theft or misplacement, which were not included in the study. Lastly, the inability to obtain randomized samples for the 2 groups treated with each device opens up the possibility of selection bias. There were, however, multiple intubators who were free to select 1 of the devices for endotracheal intubation, which may have reduced the effect of selection bias.

Conclusion

This study demonstrated that, over a 24-month period, use of the McGRATH MAC VL resulted in a cost reduction of around 55% compared to using the GlideScope for endotracheal intubation procedures performed at a major academic center. Over the first 3 months of the COVID-19 crisis, which our study included, use of the McGRATH VL increased while GlideScope use decreased. This was most likely related to the portability and smaller size of the McGRATH, which better facilitated intubations of COVID-19 patients.

Acknowledgements: The authors thank Craig Smith, Senior Anesthesia Technician, for his assistance with the cost information and excellent record-keeping related to the use of video laryngoscopes.

Corresponding author: Marc C. Torjman, PhD, Professor, Department of Anesthesiology, Sidney Kimmel Medical College at Thomas Jefferson University, 111 South 11th St, Suite G-8290, Philadelphia, PA 19107; Marc.Torjman@Jefferson.edu.

Financial disclosures: Dr. Thaler has served as a consultant for Medtronic since September 2020. He has participated in 2 webinars on the routine use of video laryngoscopy.

Funding: This study was supported by the Department of Anesthesiology at Thomas Jefferson University.

1. Channa AB. Video laryngoscopes. Saudi J Anaesth. 2011;5(4):357-359.

2. Pieters BM, Eindhoven GB, Acott C, Van Zundert AAJ. Pioneers of laryngoscopy: indirect, direct and video laryngoscopy. Anaesth Intensive Care. 2015;43(suppl):4-11.

3. Lewis SR, Butler AR, Parker J, et al. Videolaryngoscopy versus direct laryngoscopy for adult patients requiring tracheal intubation. Cochrane Database Syst Rev. 2016;11(11):CD011136.

4. Kim W, Choi HJ, Lim T, Kang BS. Can the new McGrath laryngoscope rival the GlideScope Ranger portable video laryngoscope? A randomized manikin study. Am J Emerg Med. 2014;32(10):1225-1229.

5. Kim W, Choi HJ, Lim T, et al. Is McGrath MAC better than Glidescope Ranger for novice providers in the simulated difficult airway? A randomized manikin study. Resuscitation. 2014;85(suppl 1):S32.

6. Jeon WJ, Kim KH, Yeom JH, et al. A comparison of the Glidescope to the McGrath videolaryngoscope in patients. Korean J Anesthesiol. 2011;61(1):19-23.

7. Savoldelli GL, Schiffer E, Abegg C, et al. Comparison of the Glidescope, the McGrath, the Airtraq and the Macintosh laryngoscopes in simulated difficult airways. Anaesthesia. 2008;63(12):1358-1364.

8. Savoldelli GL, Schiffer E, Abegg C, et al. Learning curves of the Glidescope, the McGrath and the Airtraq laryngoscopes: a manikin study. Eur J Anaesthesiol. 2009;26(7):554-558.

9. Schumacher J, Arlidge J, Dudley D, et al. The impact of respiratory protective equipment on difficult airway management: a randomised, crossover, simulation study. Anaesthesia. 2020;75(10):1301-1306.

10. De Jong A, Pardo E, Rolle A, et al. Airway management for COVID-19: a move towards universal videolaryngoscope? Lancet Respir Med. 2020;8(6):555.

11. Hussey PS, Wertheimer S, Mehrotra A. The association between health care quality and cost: a systematic review. Ann Intern Med. 2013;158(1):27-34.

12. Mitton C, Dionne F, Peacock S, Sheps S. Quality and cost in healthcare: a relationship worth examining. Appl Health Econ Health Policy. 2006;5(4):201-208.

From the Department of Anesthesiology, Thomas Jefferson University and Hospitals, Sidney Kimmel Medical College, Philadelphia, PA, and Sidney Kimmel Medical College at Thomas Jefferson University, Philadelphia, PA.

Objective: Retrospective study examining hospital cost information of patients requiring endotracheal intubation with video laryngoscopy. Provide a practical cost assessment on use of the McGRATH and GlideScope video laryngoscopes (VLs).

Methods: This study examined 52 hospital locations within a single, large university hospital, with most of those locations being hospital operating rooms. A total of 34 600 endotracheal intubations performed over 24 months, of which 11 345 were video laryngoscopies. Electronic medical records containing demographic data and information related to endotracheal intubation procedures, with monthly breakdowns between GlideScope and McGRATH intubations, were reviewed. Cost information calculated for equipment, blades, batteries, repairs, and subsequent analysis performed to determine cost differences between those 2 instruments during the COVID-19 period.

Results: A total of 5501 video laryngoscopy procedures were performed using the McGRATH VL and 5305 were performed using the GlideScope VL. Costs over 24 months were $181 093 lower (55.5%) for McGRATH compared to GlideScope. The mean (SD) monthly costs for GlideScope blades were $3837 ($1050) and $3236 ($538) for years 1 and 2, respectively, vs $1652 ($663) and $2933 ($585) for McGRATH blades (P < .001). Most total cost differences were attributed to equipment and blade purchases, which were $202 595 (65.0%) higher for GlideScope. During the COVID-19 period, the use of the McGRATH increased to 61% of all video laryngoscopy cases, compared to 37% for GlideScope (P < .001). Blade cost difference for the COVID-19 period was $128 higher for the McGRATH even though 293 more intubations were performed with that device.

Conclusions: Use of the McGRATH resulted in a cost savings of 55% compared to the GlideScope, and its use was highest during the COVID-19 period, which may be explained by its more portable and practical features.

Keywords: video laryngoscope; McGRATH; GlideScope; endotracheal intubation; hospital costs; COVID-19.

Hospitals have come to rely on video laryngoscopes (VLs) for tracheal intubation as necessary tools for better visualization of airways. Modern video laryngoscopy developed in the 2000s1 as a progression from direct laryngoscopy, which began in 1852 when Horace Green used a bent tongue spatula and sunlight to examine a child.2 VLs have seen many improvements and adaptations of their own, resulting in many different styles and types circulating around hospitals. The GlideScope (Verathon Inc, Bothell, WA) and the McGRATH (Medtronic, Minneapolis, MN) are examples of such instruments, which are now widely used in the US and are the 2 VLs of choice at our institution.

A few studies have compared VLs to direct laryngoscopes. In their systematic review, Lewis et al have shown the numerous benefits of using a VL over a direct laryngoscope. Some general conclusions were that the use of video laryngoscopy reduced the number of failed intubations, decreased laryngeal trauma, and provided improved visualizations.3 Other studies have compared the different types of VLs, including the McGRATH and the GlideScope, examining factors such as intubation time and display quality of the image. Two studies found that medical students were equally successful at using both the McGRATH and the GlideScope,4,5 while another study found that care providers using the GlideScope had quicker intubation times.6 Lastly, Savoldelli et al concluded that more providers preferred the McGRATH, which provided better laryngeal views,7 while their subsequent study showed more favorable learning curves of the Airtraq compared to the McGRATH and other VLs.8

Although there have been no reported differences in safety and effectiveness of the McGRATH and GlideScope devices, cost data on the use of these 2 popular laryngoscopes are lacking. Such information is important considering the increasing costs of medical technologies and the significant financial losses experienced by health care systems due to the COVID-19 crisis. The purpose of this retrospective cohort study was to compare the cost efficiency of the McGRATH MAC and GlideScope Core VLs at a large academic center.

Methods

This retrospective study was performed under exemption from the Thomas Jefferson University Institutional Review Board. The primary data sources consisted of hospital electronic patient records (EPIC) and cost information from the device manufacturers and hospital staff. The electronic patient data were provided by the EPIC Enterprise Analytics Business Intelligence group at Thomas Jefferson University Hospital (Center City Campus, Philadelphia, PA), while device costs were obtained from Verathon, Medtronic, and departmental staff responsible for purchasing equipment. Monthly data were obtained over a 24-month period (June 2018 through May 2020) when the McGRATH VL was placed into use in the department of anesthesiology. The 2 types of VLs were made available for use in a total of 52 locations, with the majority being hospital operating rooms.

The following variables were recorded: number of endotracheal intubations performed each month with breakdown between video laryngoscopy and flexible bronchoscopy airways, frequency of use for each type of laryngoscope, blades used, and equipment costs for use of each laryngoscope. Hospital cost estimates for both the McGRATH and GlideScope laryngoscopes included batteries, handles, blades, and the devices themselves. Cost data were also collected on frequency of device failure, maintenance, and replacement of parts and lost equipment.

Analysis

De-identified electronic medical records consisted of nominal and quantitative variables, with demographic data and information related to the endotracheal intubation procedure. All data were in chronological order and sorted by date after which coding was applied, to identify device type and allocate pertinent cost information. Descriptive statistics were reported as mean (SD) and sum for costs; frequency tables were generated for intubation procedures according to device type and time periods. Data were analyzed using the χ2 test, the student t test, and the Wilcoxon Mann-Whitney U test, with a P value set at .05 for statistical significance. SPSS version 26 and GraphPad Prism version 6 were used for all statistical analyses.

Results

A total of 34 600 endotracheal intubations were performed over the 24-month study period, and 11 345 (32.8%) were video laryngoscopy procedures. Out of all video laryngoscopy procedures, 5501 (48.5%) were performed using the McGRATH VL and 5305 (46.8%) were conducted using the GlideScope VL. The difference of 539 (4.8%) cases accounts for flexible bronchoscopy procedures and endotracheal intubations using other video laryngoscopy equipment. The mean (SD) monthly number of video laryngoscopy procedures for the 24 months was 221 (54) and 229 (89) for the GlideScope and McGRATH devices, respectively. Monthly endotracheal intubation distributions over 24 months trended upward for the McGRATH VL and downward for the GlideScope, but there was no statistically significant (P = .71) difference in overall use between the 2 instruments (Figure 1).

To examine the observed usage trends between the 2 VL during the first and last 12 months, a univariate ANOVA was conducted with the 2 time periods entered as predictors in the model. Video laryngoscopy intubations were performed (P = .001) more frequently with the GlideScope during the first 12 months; however, use of the McGRATH VL increased (P < .001) during the following 12 months compared to GlideScope. The GlideScope accounted for 54% of all VL intubations during the first 12 months, with the McGRATH accounting for 58% of all video laryngoscopy procedures for months 12 to 24. Additionally, the increase in video laryngoscopy procedures with the McGRATH during the last 3 months of the study period was despite an overall reduction in surgical volume due to the COVID-19 crisis, defined for this study as March 1, 2020, to May 31, 2020 (Figure 1). There was a statistically significant (P < .001) difference in the case distribution between use of the McGRATH and GlideScope VL for that period. The anesthesia personnel’s use of the McGRATH VL increased to 61% of all video laryngoscopy cases, compared to 37% for the GlideScope (Figure 2).

The total costs calculated for equipment, blades, and repairs are presented in Table 1 and yearly total costs are shown in Figure 3. Overall costs were $181 093 lower (55.5%) for the McGRATH VL compared to the GlideScope over the 24-month period. The mean (SD) monthly costs for GlideScope VL blades were $3837 ($1050) and $3236 ($538) for years 1 and 2, respectively, vs $1652 ($663) and $2933 ($585) for the McGRATH VL blades. Most of the total cost differences were attributed to equipment and blade purchases, which were $202 595 (65.0%) higher for the GlideScope compared to the McGRATH VL. The monthly blade costs alone were higher (P < .001) for the GlideScope over the 2-year period; however, the McGRATH VL required use of disposable stylets at a cost of $10 177 for all endotracheal intubations, compared to $700 for the GlideScope device.

An analysis was performed to determine whether costs differed between those 2 instruments during the COVID-19 period. There was a statistically significant (P < .001) difference in the case distribution between use of the McGRATH and GlideScope VLs during that period. The calculated blade cost difference for the COVID period was $128 higher for the McGRATH even though 293 more intubations were performed with that device (Table 2).

Discussion

We attempted to provide useful cost estimates by presenting pricing data reflecting the approximate cost that most large institutional anesthesia practices would incur for using those 2 specific devices and related peripherals. The main findings of our analysis showed that use of the McGRATH MAC VL resulted in a 55% cost savings compared to the GlideScope, with a similar number of cases performed with each device over the 24-month study period. We believe this represents a substantial savings to the department and institution, which has prompted internal review on the use of video laryngoscopy equipment. None of the McGRATH units failed; however, the GlideScope required 3 baton replacements.

Of note, use of the McGRATH MAC increased during the COVID-19 period, which may be explained by the fact that the operators found it to be a more portable device. Several physicians in the department commented that its smaller size made the McGRATH MAC more practical during the time when a plexiglass box was being used around the patient’s head to shield the intubator from aerosolized viral particles.

Although this study demonstrated the cost-saving value of the McGRATH over the GlideScope, a suggested next step would be to examine resource utilization related to video laryngoscopy use. The more dynamic tracking of the use of these devices should facilitate the assessment of existing related resources and decision making, to optimize the benefits of this initiative. We would anticipate reduced use of anesthesia personnel, such as technicians to assist with the management of this device which could be significant. As new respiratory viruses are appearing each year, video laryngoscopy will continue to gain increasing use in operating rooms and acute care locations. The adding of protective barriers between patients and providers calls for use of the most practical and effective VL devices, to protect personnel who are at high risk of contamination from airway secretions and aerosolized particles.9,10

The COVID-19 pandemic has demonstrated the value of anesthesiology in regards to analyzing and finding solutions to effectively manage infected patients or those suspected of infection in the perioperative environment. Inexpensive products are often avoided because cheaper devices are associated with being of lower quality. However, the association with cost and quality—and the assumption that a higher price is positively correlated with higher quality—is overall inconsistent in the medical literature.11 A more effective or higher quality treatment does not necessarily cost more and may actually end up costing less,12 as was the case in this study. We have been able to directly cut departmental expenses by using a more efficient and cost-effective device for intubations, without compromising safety and efficacy. Future studies should determine whether this significant reduction in costs from video laryngoscopy intubations with the McGRATH VL will be sustained across anesthesiology departments in the Jefferson Health Enterprise Hospitals, or other health systems, as well as its impact on workflow and personnel resources.

This analysis was restricted to one of the campuses of the Jefferson Health Enterprise. However, this is the largest anesthesia practice, encompassing several locations, which should reflect the general practice patterns across other anesthesiology departments in this large institution. The costs for the devices and peripherals may vary across anesthesia practices depending on volume and contracts negotiated with the suppliers. It was not possible to estimate this variability, which could change the total costs by a few percentage points. We recognize that there may be other costs associated with securing the McGRATH VL to prevent loss from theft or misplacement, which were not included in the study. Lastly, the inability to obtain randomized samples for the 2 groups treated with each device opens up the possibility of selection bias. There were, however, multiple intubators who were free to select 1 of the devices for endotracheal intubation, which may have reduced the effect of selection bias.

Conclusion

This study demonstrated that, over a 24-month period, use of the McGRATH MAC VL resulted in a cost reduction of around 55% compared to using the GlideScope for endotracheal intubation procedures performed at a major academic center. Over the first 3 months of the COVID-19 crisis, which our study included, use of the McGRATH VL increased while GlideScope use decreased. This was most likely related to the portability and smaller size of the McGRATH, which better facilitated intubations of COVID-19 patients.

Acknowledgements: The authors thank Craig Smith, Senior Anesthesia Technician, for his assistance with the cost information and excellent record-keeping related to the use of video laryngoscopes.

Corresponding author: Marc C. Torjman, PhD, Professor, Department of Anesthesiology, Sidney Kimmel Medical College at Thomas Jefferson University, 111 South 11th St, Suite G-8290, Philadelphia, PA 19107; Marc.Torjman@Jefferson.edu.

Financial disclosures: Dr. Thaler has served as a consultant for Medtronic since September 2020. He has participated in 2 webinars on the routine use of video laryngoscopy.

Funding: This study was supported by the Department of Anesthesiology at Thomas Jefferson University.

Conclusions: Use of the McGRATH resulted in a cost savings of 55% compared to the GlideScope, and its use was highest during the COVID-19 period, which may be explained by its more portable and practical features.

Keywords: video laryngoscope; McGRATH; GlideScope; endotracheal intubation; hospital costs; COVID-19.

Hospitals have come to rely on video laryngoscopes (VLs) for tracheal intubation as necessary tools for better visualization of airways. Modern video laryngoscopy developed in the 2000s1 as a progression from direct laryngoscopy, which began in 1852 when Horace Green used a bent tongue spatula and sunlight to examine a child.2 VLs have seen many improvements and adaptations of their own, resulting in many different styles and types circulating around hospitals. The GlideScope (Verathon Inc, Bothell, WA) and the McGRATH (Medtronic, Minneapolis, MN) are examples of such instruments, which are now widely used in the US and are the 2 VLs of choice at our institution.

A few studies have compared VLs to direct laryngoscopes. In their systematic review, Lewis et al have shown the numerous benefits of using a VL over a direct laryngoscope. Some general conclusions were that the use of video laryngoscopy reduced the number of failed intubations, decreased laryngeal trauma, and provided improved visualizations.3 Other studies have compared the different types of VLs, including the McGRATH and the GlideScope, examining factors such as intubation time and display quality of the image. Two studies found that medical students were equally successful at using both the McGRATH and the GlideScope,4,5 while another study found that care providers using the GlideScope had quicker intubation times.6 Lastly, Savoldelli et al concluded that more providers preferred the McGRATH, which provided better laryngeal views,7 while their subsequent study showed more favorable learning curves of the Airtraq compared to the McGRATH and other VLs.8

Although there have been no reported differences in safety and effectiveness of the McGRATH and GlideScope devices, cost data on the use of these 2 popular laryngoscopes are lacking. Such information is important considering the increasing costs of medical technologies and the significant financial losses experienced by health care systems due to the COVID-19 crisis. The purpose of this retrospective cohort study was to compare the cost efficiency of the McGRATH MAC and GlideScope Core VLs at a large academic center.

Methods

This retrospective study was performed under exemption from the Thomas Jefferson University Institutional Review Board. The primary data sources consisted of hospital electronic patient records (EPIC) and cost information from the device manufacturers and hospital staff. The electronic patient data were provided by the EPIC Enterprise Analytics Business Intelligence group at Thomas Jefferson University Hospital (Center City Campus, Philadelphia, PA), while device costs were obtained from Verathon, Medtronic, and departmental staff responsible for purchasing equipment. Monthly data were obtained over a 24-month period (June 2018 through May 2020) when the McGRATH VL was placed into use in the department of anesthesiology. The 2 types of VLs were made available for use in a total of 52 locations, with the majority being hospital operating rooms.

The following variables were recorded: number of endotracheal intubations performed each month with breakdown between video laryngoscopy and flexible bronchoscopy airways, frequency of use for each type of laryngoscope, blades used, and equipment costs for use of each laryngoscope. Hospital cost estimates for both the McGRATH and GlideScope laryngoscopes included batteries, handles, blades, and the devices themselves. Cost data were also collected on frequency of device failure, maintenance, and replacement of parts and lost equipment.

Analysis

De-identified electronic medical records consisted of nominal and quantitative variables, with demographic data and information related to the endotracheal intubation procedure. All data were sorted chronologically by date, after which coding was applied to identify device type and allocate pertinent cost information. Descriptive statistics were reported as mean (SD) and sum for costs; frequency tables were generated for intubation procedures according to device type and time periods. Data were analyzed using the χ2 test, the Student t test, and the Wilcoxon Mann-Whitney U test, with a P value set at .05 for statistical significance. SPSS version 26 and GraphPad Prism version 6 were used for all statistical analyses.

Results

A total of 34 600 endotracheal intubations were performed over the 24-month study period, and 11 345 (32.8%) were video laryngoscopy procedures. Out of all video laryngoscopy procedures, 5501 (48.5%) were performed using the McGRATH VL and 5305 (46.8%) were conducted using the GlideScope VL. The difference of 539 (4.8%) cases accounts for flexible bronchoscopy procedures and endotracheal intubations using other video laryngoscopy equipment. The mean (SD) monthly number of video laryngoscopy procedures for the 24 months was 221 (54) and 229 (89) for the GlideScope and McGRATH devices, respectively. Monthly endotracheal intubation distributions over 24 months trended upward for the McGRATH VL and downward for the GlideScope, but there was no statistically significant (P = .71) difference in overall use between the 2 instruments (Figure 1).
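The proportions in the paragraph above can be re-derived from the reported counts. The following is a minimal sketch for readers who want to check the arithmetic; the variable names and the `pct` helper are illustrative and are not part of the study's analysis.

```python
# Counts as reported in the Results paragraph above.
total_intubations = 34_600
video_laryngoscopies = 11_345
mcgrath = 5_501
glidescope = 5_305


def pct(part, whole):
    """Return part/whole as a percentage rounded to 1 decimal place."""
    return round(100 * part / whole, 1)


# Cases not attributed to either device (flexible bronchoscopy, other VL equipment).
other = video_laryngoscopies - mcgrath - glidescope

print("VL share of all intubations:", pct(video_laryngoscopies, total_intubations))
print("McGRATH share of VL cases:", pct(mcgrath, video_laryngoscopies))
print("GlideScope share of VL cases:", pct(glidescope, video_laryngoscopies))
print("Other cases:", other, pct(other, video_laryngoscopies))
```

The remainder of 539 cases is obtained by subtraction, which is why the three shares sum to 100% only after rounding.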

To examine the observed usage trends between the 2 VLs during the first and last 12 months, a univariate ANOVA was conducted with the 2 time periods entered as predictors in the model. Video laryngoscopy intubations were performed more frequently with the GlideScope during the first 12 months (P = .001); however, use of the McGRATH VL increased during the following 12 months compared to the GlideScope (P < .001). The GlideScope accounted for 54% of all VL intubations during the first 12 months, with the McGRATH accounting for 58% of all video laryngoscopy procedures for months 12 to 24. Additionally, the increase in video laryngoscopy procedures with the McGRATH during the last 3 months of the study period occurred despite an overall reduction in surgical volume due to the COVID-19 crisis, defined for this study as March 1, 2020, to May 31, 2020 (Figure 1). There was a statistically significant (P < .001) difference in the case distribution between use of the McGRATH and GlideScope VLs for that period. The anesthesia personnel’s use of the McGRATH VL increased to 61% of all video laryngoscopy cases, compared to 37% for the GlideScope (Figure 2).

The total costs calculated for equipment, blades, and repairs are presented in Table 1 and yearly total costs are shown in Figure 3. Overall costs were $181 093 lower (55.5%) for the McGRATH VL compared to the GlideScope over the 24-month period. The mean (SD) monthly costs for GlideScope VL blades were $3837 ($1050) and $3236 ($538) for years 1 and 2, respectively, vs $1652 ($663) and $2933 ($585) for the McGRATH VL blades. Most of the total cost differences were attributed to equipment and blade purchases, which were $202 595 (65.0%) higher for the GlideScope compared to the McGRATH VL. The monthly blade costs alone were higher (P < .001) for the GlideScope over the 2-year period; however, the McGRATH VL required use of disposable stylets at a cost of $10 177 for all endotracheal intubations, compared to $700 for the GlideScope device.

An analysis was performed to determine whether costs differed between those 2 instruments during the COVID-19 period. There was a statistically significant (P < .001) difference in the case distribution between use of the McGRATH and GlideScope VLs during that period. The calculated blade cost difference for the COVID period was $128 higher for the McGRATH even though 293 more intubations were performed with that device (Table 2).

Discussion

We attempted to provide useful cost estimates by presenting pricing data reflecting the approximate cost that most large institutional anesthesia practices would incur for using those 2 specific devices and related peripherals. The main findings of our analysis showed that use of the McGRATH MAC VL resulted in a 55% cost savings compared to the GlideScope, with a similar number of cases performed with each device over the 24-month study period. We believe this represents a substantial savings to the department and institution, which has prompted internal review on the use of video laryngoscopy equipment. None of the McGRATH units failed; however, the GlideScope required 3 baton replacements.

Of note, use of the McGRATH MAC increased during the COVID-19 period, which may be explained by the fact that the operators found it to be a more portable device. Several physicians in the department commented that its smaller size made the McGRATH MAC more practical during the time when a plexiglass box was being used around the patient’s head to shield the intubator from aerosolized viral particles.

Although this study demonstrated the cost-saving value of the McGRATH over the GlideScope, a suggested next step would be to examine resource utilization related to video laryngoscopy use. More dynamic tracking of the use of these devices should facilitate the assessment of existing related resources and decision making, to optimize the benefits of this initiative. We would anticipate a reduced need for anesthesia personnel, such as technicians who assist with the management of this device, and this reduction could be significant. As new respiratory viruses are appearing each year, video laryngoscopy will continue to gain increasing use in operating rooms and acute care locations. The addition of protective barriers between patients and providers calls for use of the most practical and effective VL devices, to protect personnel who are at high risk of contamination from airway secretions and aerosolized particles.9,10

1. Channa AB. Video laryngoscopes. Saudi J Anaesth. 2011;5(4):357-359.

2. Pieters BM, Eindhoven GB, Acott C, Van Zundert AAJ. Pioneers of laryngoscopy: indirect, direct and video laryngoscopy. Anaesth Intensive Care. 2015;43(suppl):4-11.

3. Lewis SR, Butler AR, Parker J, et al. Videolaryngoscopy versus direct laryngoscopy for adult patients requiring tracheal intubation. Cochrane Database Syst Rev. 2016;11(11):CD011136.

4. Kim W, Choi HJ, Lim T, Kang BS. Can the new McGrath laryngoscope rival the GlideScope Ranger portable video laryngoscope? A randomized manikin study. Am J Emerg Med. 2014;32(10):1225-1229.

5. Kim W, Choi HJ, Lim T, et al. Is McGrath MAC better than Glidescope Ranger for novice providers in the simulated difficult airway? A randomized manikin study. Resuscitation. 2014;85(suppl 1):S32.

6. Jeon WJ, Kim KH, Yeom JH, et al. A comparison of the Glidescope to the McGrath videolaryngoscope in patients. Korean J Anesthesiol. 2011;61(1):19-23.

7. Savoldelli GL, Schiffer E, Abegg C, et al. Comparison of the Glidescope, the McGrath, the Airtraq and the Macintosh laryngoscopes in simulated difficult airways. Anaesthesia. 2008;63(12):1358-1364.

8. Savoldelli GL, Schiffer E, Abegg C, et al. Learning curves of the Glidescope, the McGrath and the Airtraq laryngoscopes: a manikin study. Eur J Anaesthesiol. 2009;26(7):554-558.

9. Schumacher J, Arlidge J, Dudley D, et al. The impact of respiratory protective equipment on difficult airway management: a randomised, crossover, simulation study. Anaesthesia. 2020;75(10):1301-1306.

10. De Jong A, Pardo E, Rolle A, et al. Airway management for COVID-19: a move towards universal videolaryngoscope? Lancet Respir Med. 2020;8(6):555.

11. Hussey PS, Wertheimer S, Mehrotra A. The association between health care quality and cost: a systematic review. Ann Intern Med. 2013;158(1):27-34.

12. Mitton C, Dionne F, Peacock S, Sheps S. Quality and cost in healthcare: a relationship worth examining. Appl Health Econ Health Policy. 2006;5(4):201-208.

Phototherapy: Safe and Effective for Challenging Skin Conditions in Older Adults

Identifying safe, effective, and affordable evidence-based dermatologic treatments for older adults can be challenging because of age-related changes in the skin, comorbidities, polypharmacy, mobility issues, and cognitive changes. Phototherapy has been shown to be an effective nonpharmacologic treatment option for multiple challenging dermatologic conditions1-8; however, few studies have specifically examined its effectiveness in older adults. The challenge for older patients with psoriasis and dermatitis is that the conditions can be difficult to control and often require multiple treatment modalities.9,10 Patients with psoriasis also have a higher risk for diabetes, dyslipidemia, and cardiovascular disease compared to other older patients,11,12 which poses treatment challenges and makes nonpharmacologic treatments even more appealing.

Recent studies show that phototherapy can help decrease the use of dermatologic medications. Foerster and colleagues2 found that adults with psoriasis who were treated with phototherapy significantly decreased their use of topical steroids (24.5% fewer patients required steroid creams and 31.1% fewer patients required psoriasis-specific topicals)(P<.01) while their use of non–psoriasis-specific medications did not change. Click and colleagues13 identified a decrease in medication costs, health care utilization, and risk for immunosuppression in patients treated with phototherapy when compared to those treated with biologics and apremilast. Methotrexate is a common dermatologic medication that is highly associated with increased risks in elderly patients because of impaired immune system function and the presence of comorbidities (eg, kidney disease, obesity, diabetes, fatty liver),14 which increase in prevalence with age. Combining phototherapy with methotrexate can substantially decrease the amount of methotrexate needed to achieve disease control,15 thereby decreasing the methotrexate-associated risks. Findings from these studies suggest that a safe, effective, cost-effective, and well-tolerated nonpharmacologic alternative, such as phototherapy, is highly desirable and should be optimized. Unfortunately, most studies that report the effectiveness of phototherapy are in younger populations.

This retrospective study aimed to (1) identify the most common dermatologic conditions treated with phototherapy in older adults, (2) examine the effectiveness and safety of phototherapy in older adults, and (3) compare the outcomes with similar studies in the United Kingdom16 and Turkey.17

Methods

Design, Setting, Sample, and Statistical Analysis

The institutional review boards of Kaiser Permanente Washington Health Research Institute, Seattle, and the University of Washington, Seattle, approved this study. It was conducted in a large US multispecialty health care system (Group Health, Seattle, Washington [now Kaiser Permanente Washington]) serving approximately 600,000 patients. Billing records were used to identify all patients treated with phototherapy between January 1, 2015, and December 31, 2015, all of whom received narrowband UVB (NB-UVB) phototherapy. All adults 65 years and older who received phototherapy treatment during the 12-month study period were included. Patients were included regardless of comorbidities and other dermatologic treatments to maintain as much uniformity as possible between the present study and 2 prior studies examining phototherapy in older adult populations in the United Kingdom16 and Turkey.17 Demographic and clinical factors were presented using frequencies (percentages) or means and medians as appropriate. Comparisons of dermatologic conditions and clearance levels used a Fisher exact test. The number of phototherapy treatments to clearance and total number of treatments were compared between groups of patients using independent sample t tests.

Phototherapy Protocol

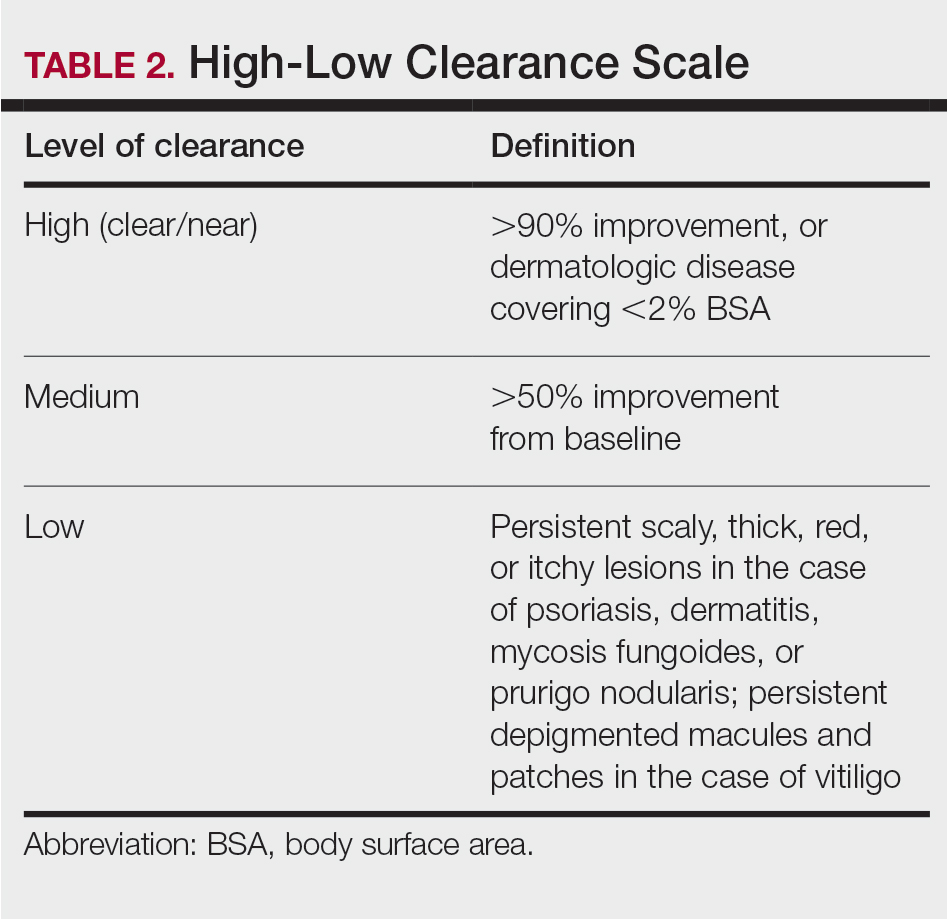

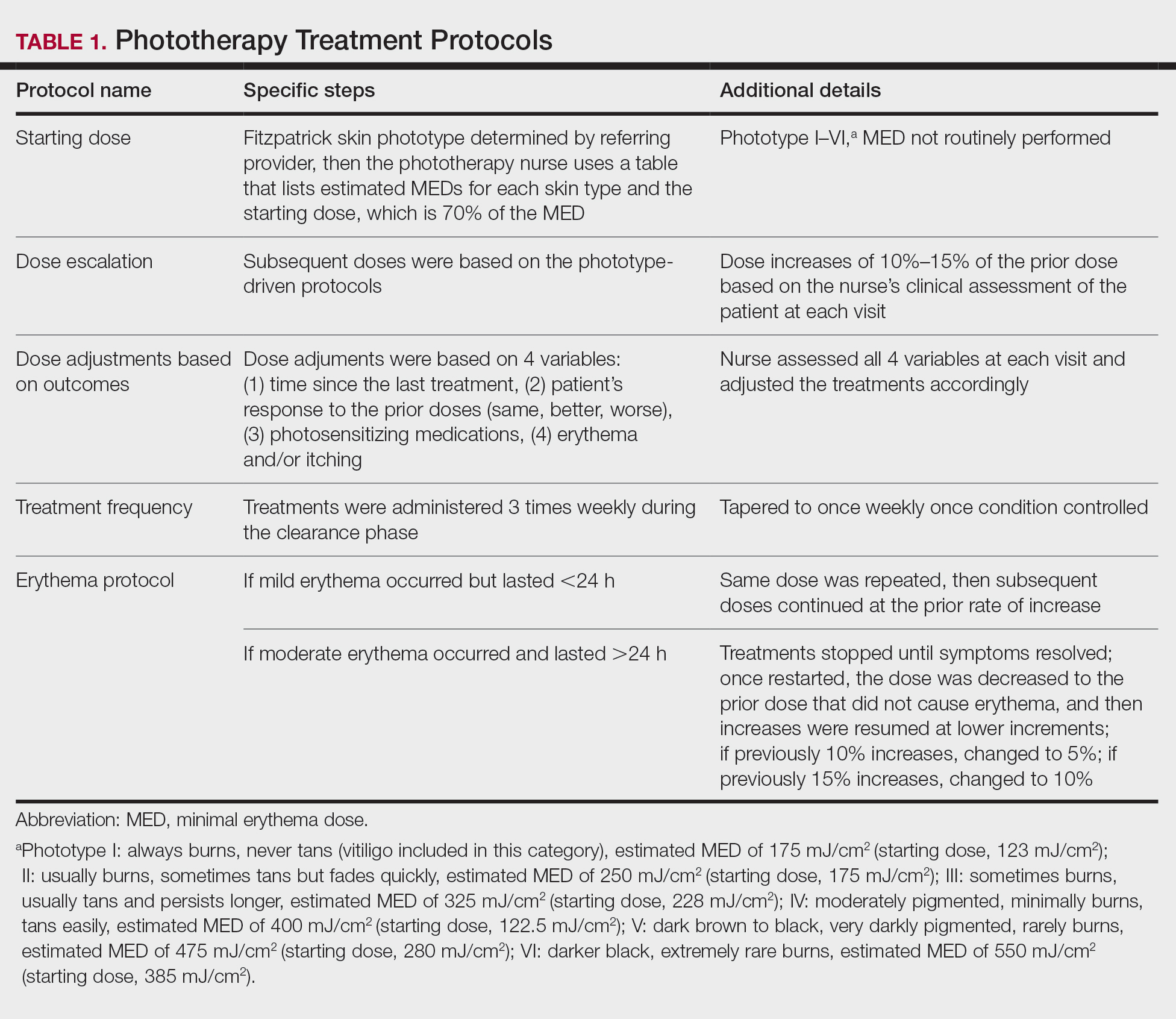

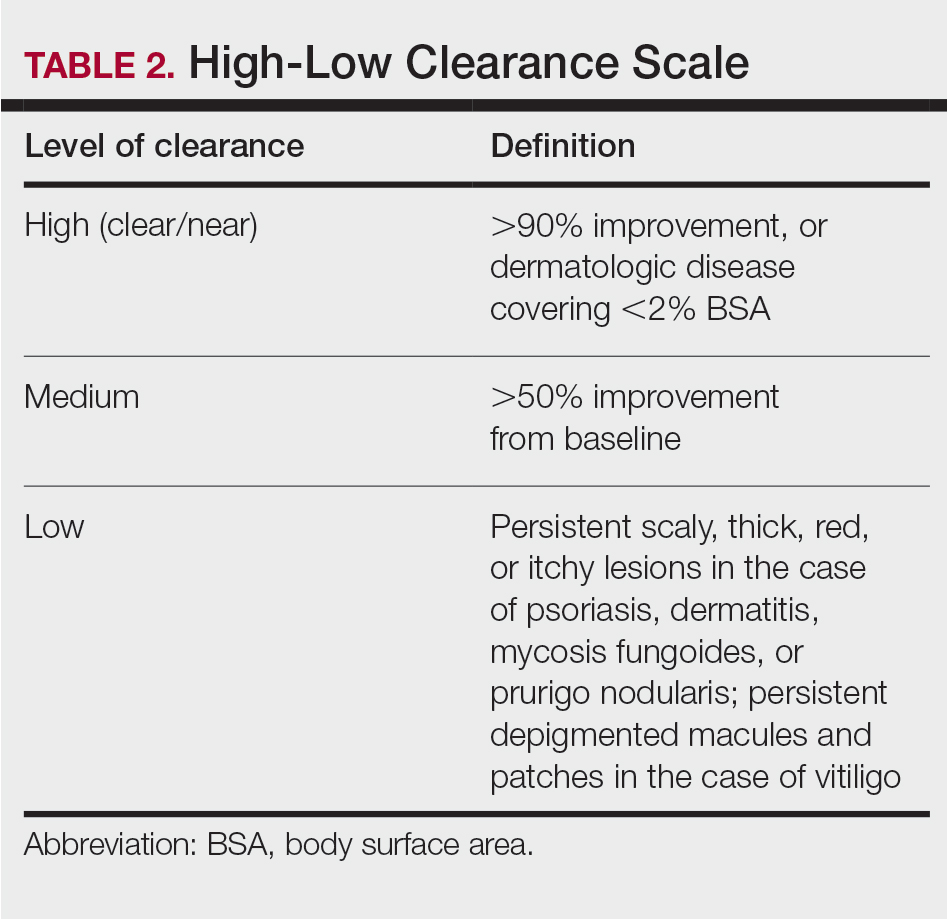

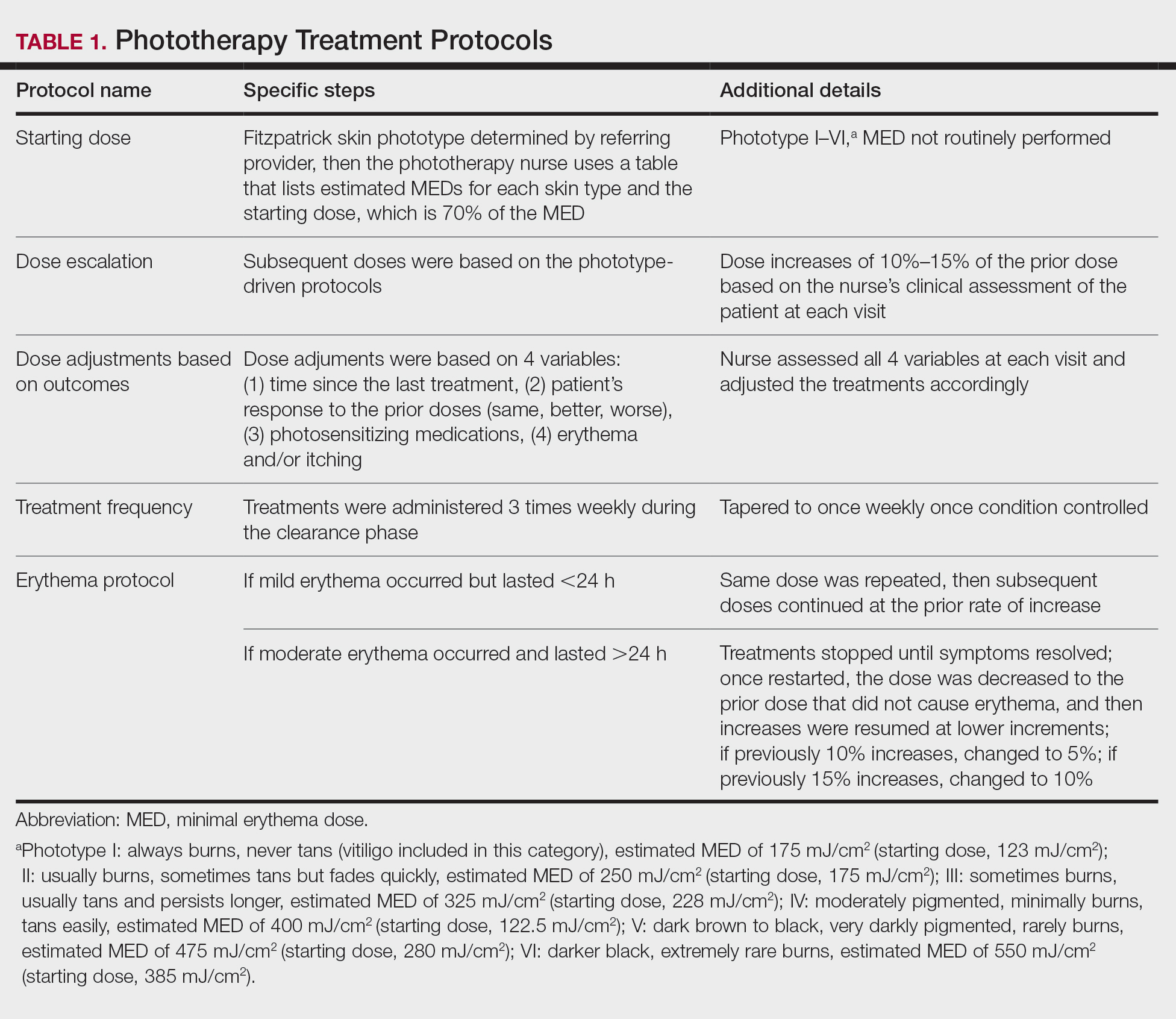

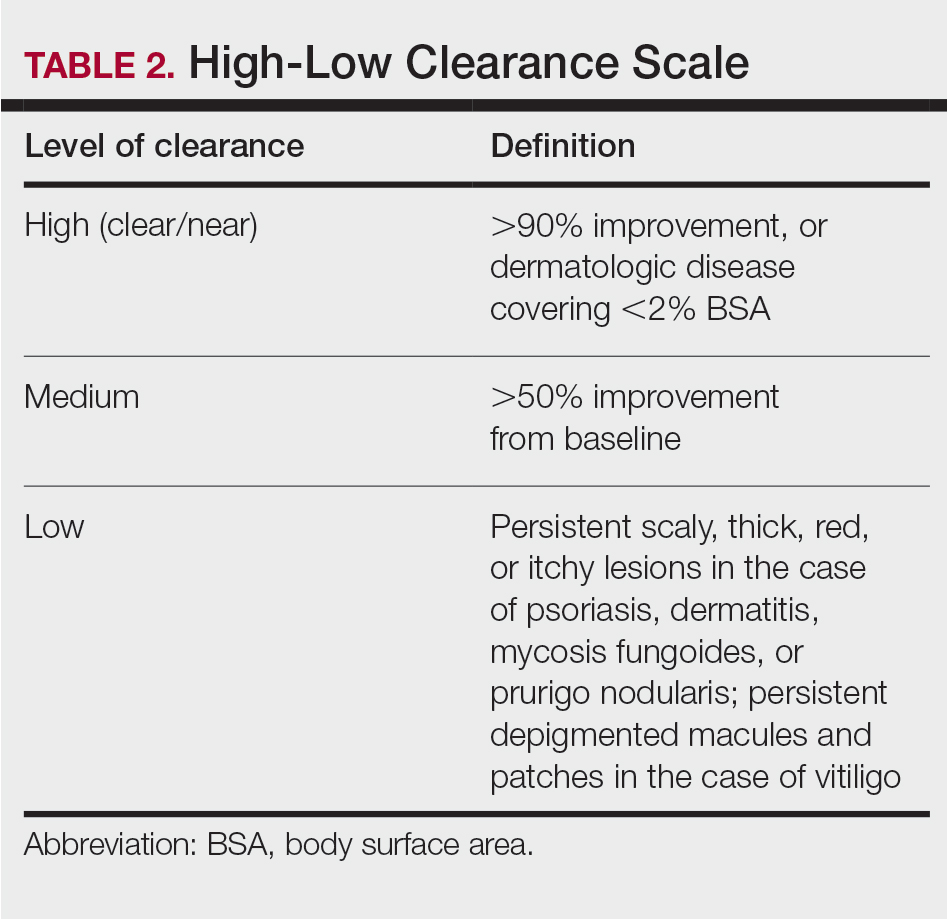

All patients received treatments administered by specially trained phototherapy nurses using a Daavlin UV Series (The Daavlin Company) or an Ultralite unit (Ultralite Enterprises, Inc), both with 48 lamps. All phototherapy nurses had been previously trained to provide treatments based on standardized protocols (Table 1) and to determine the patient’s level of disease clearance using a high to low clearance scale (Table 2). Daavlin’s treatment protocols were built into the software that accompanied the units and were developed based on the American Academy of Dermatology guidelines. The starting dose for an individual patient was determined based on the estimated

Results

Patients

Billing records identified 229 total patients who received phototherapy in 2015, of whom 52 (22.7%) were at least 65 years old. The median age was 70 years (range, 65–91 years). Twenty-nine (56%) were men and 35 (67%) had previously received phototherapy treatments.

Dermatologic Conditions Treated With Phototherapy

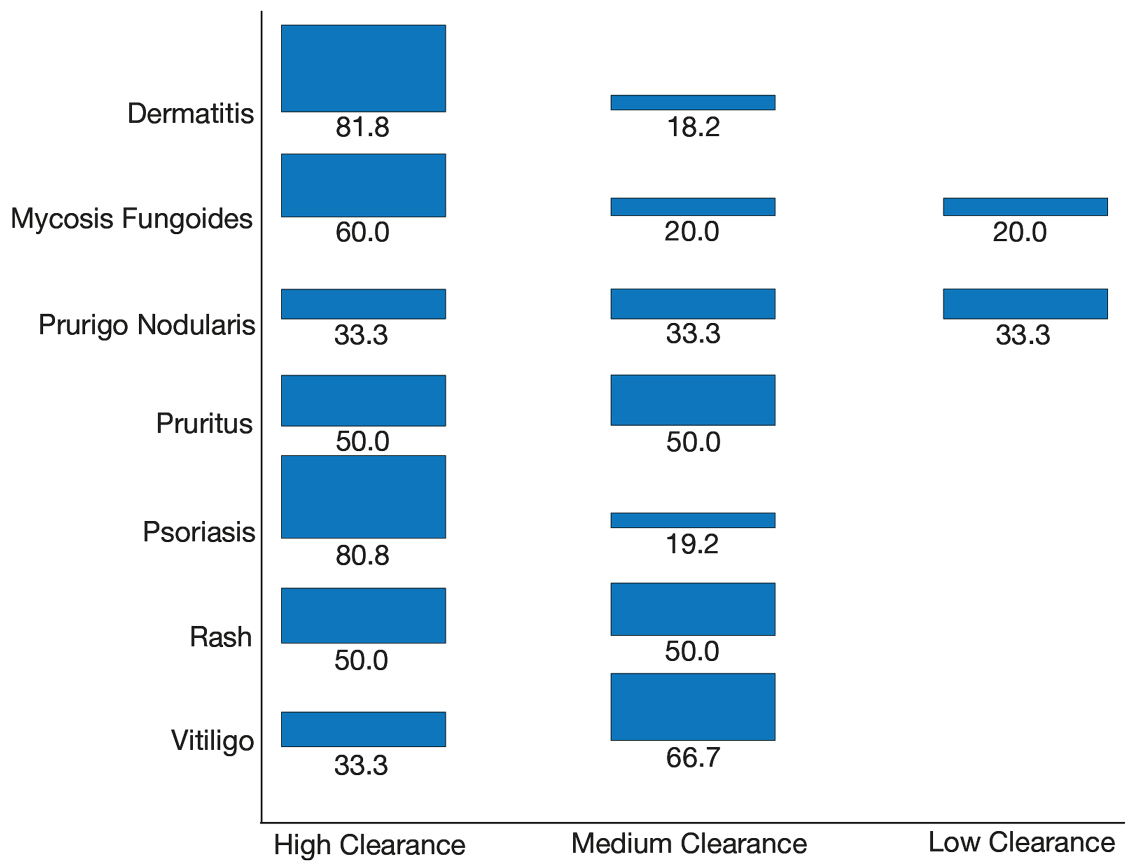

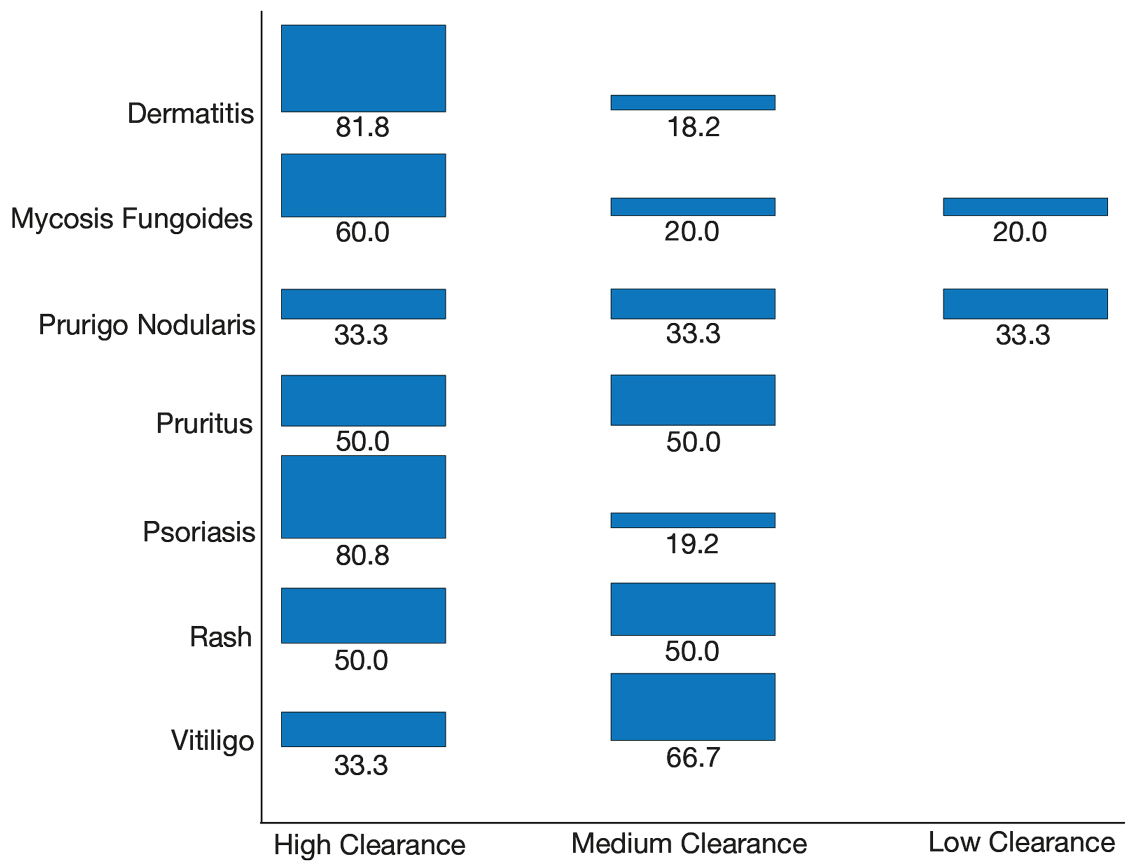

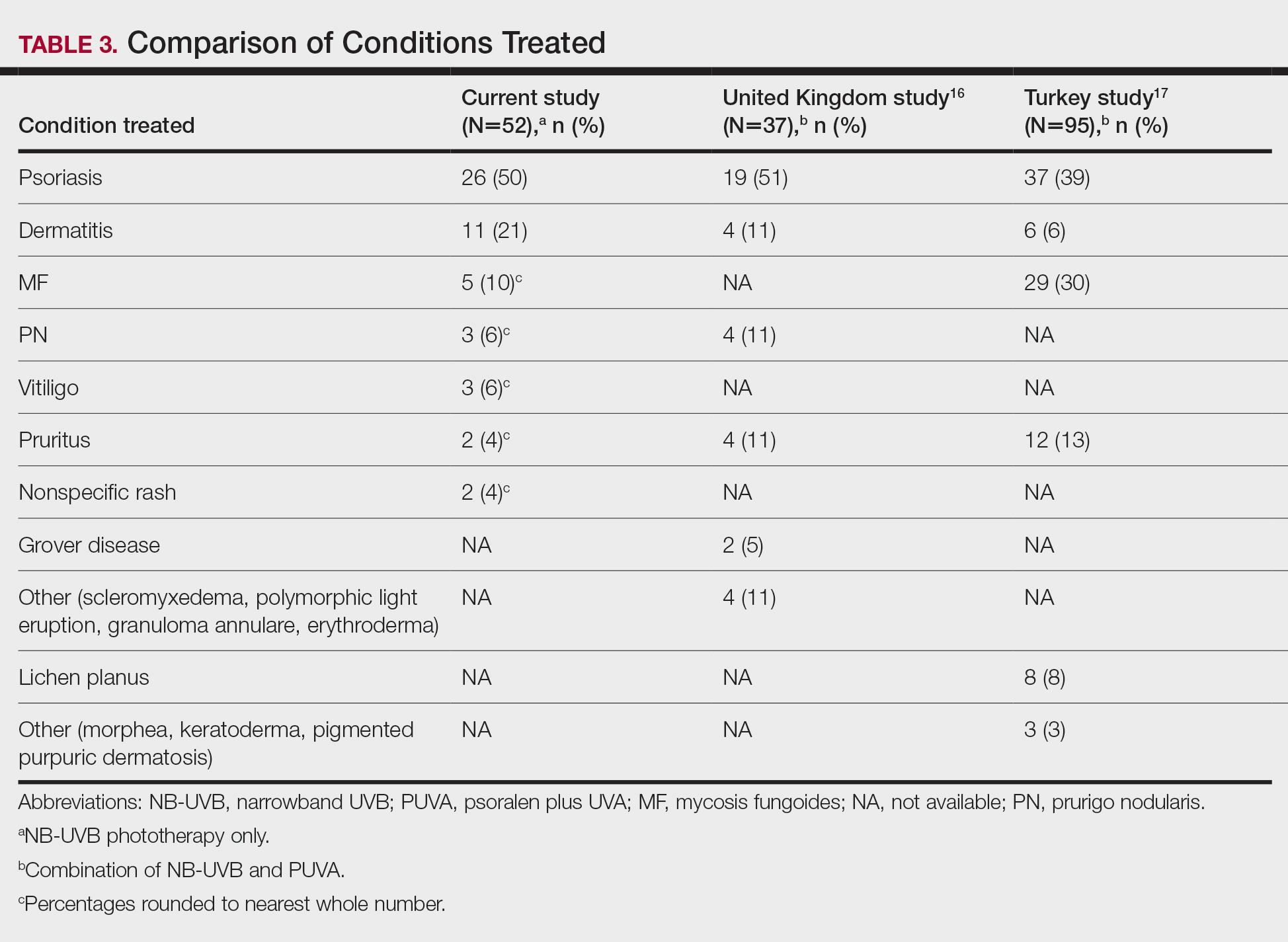

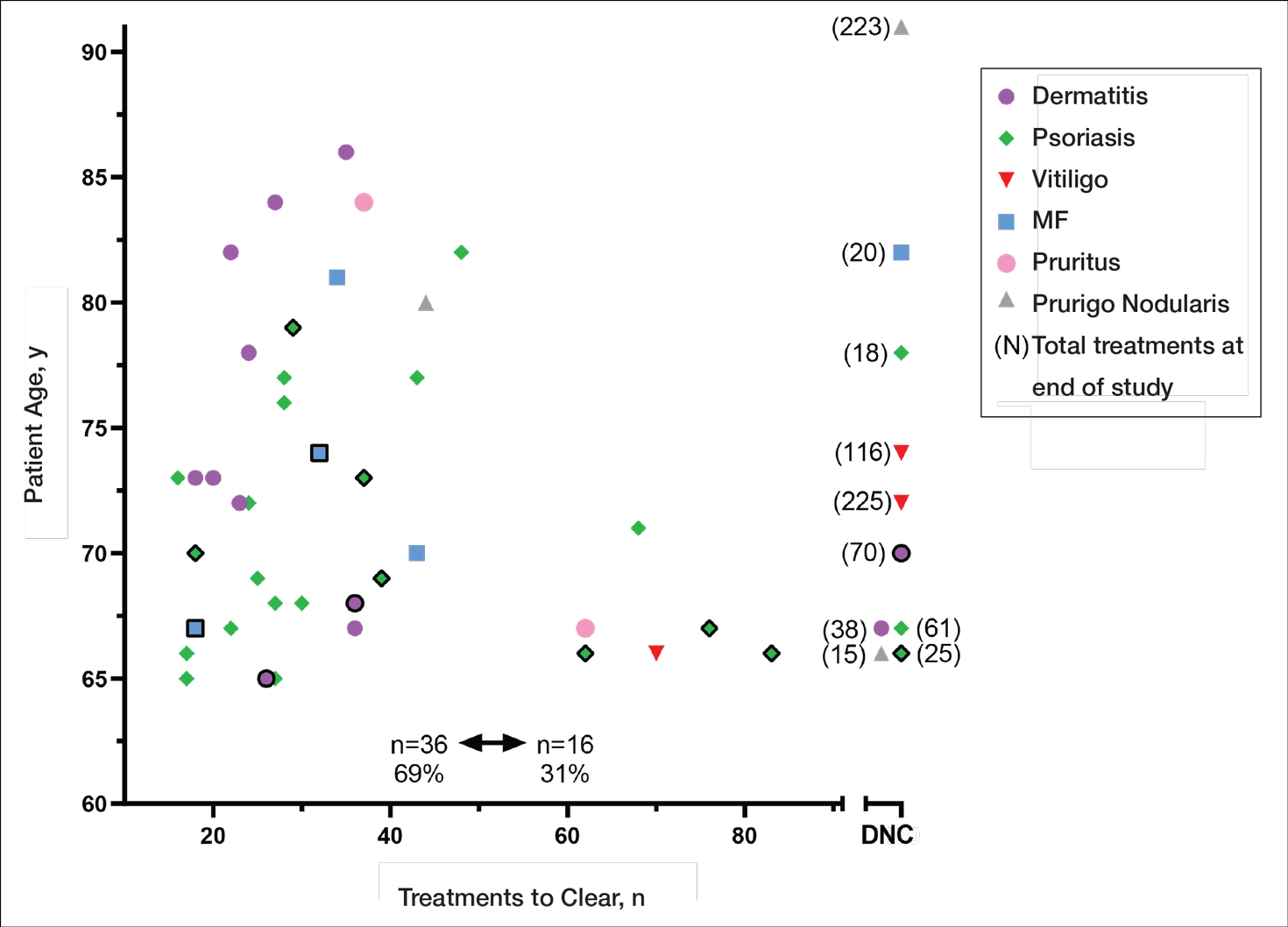

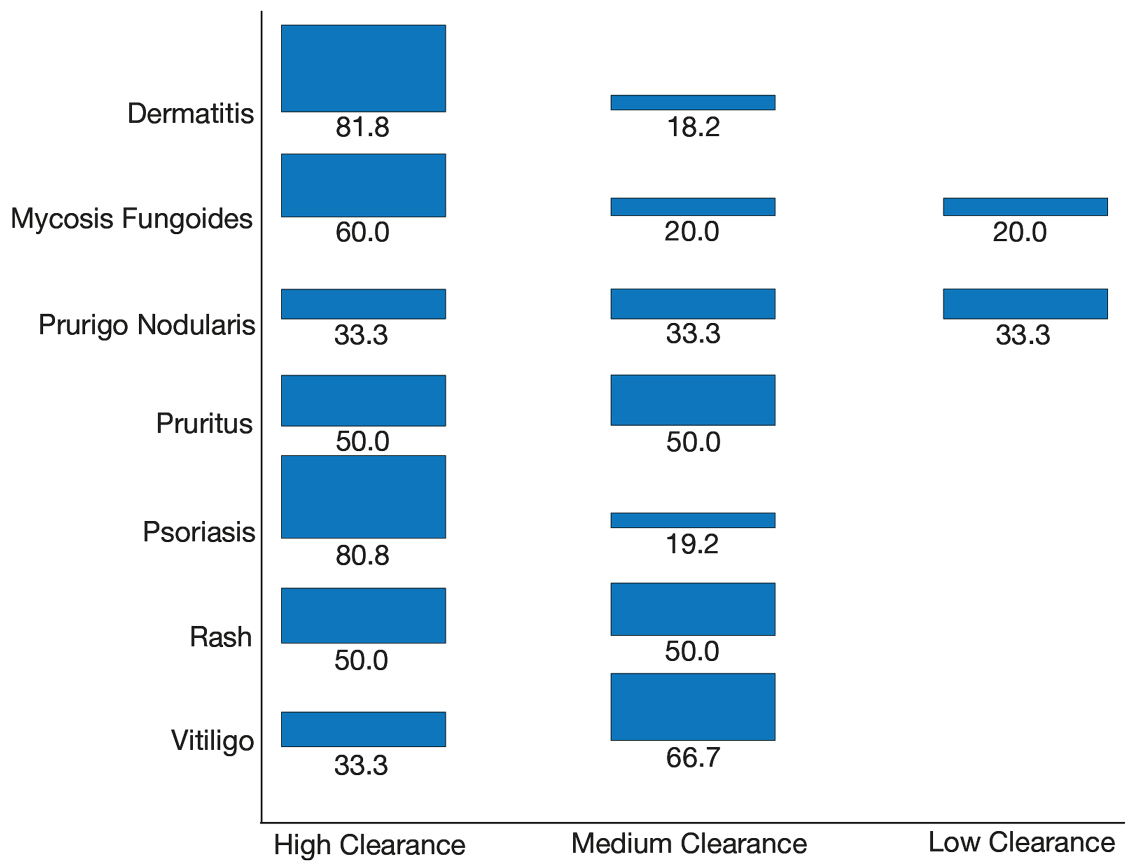

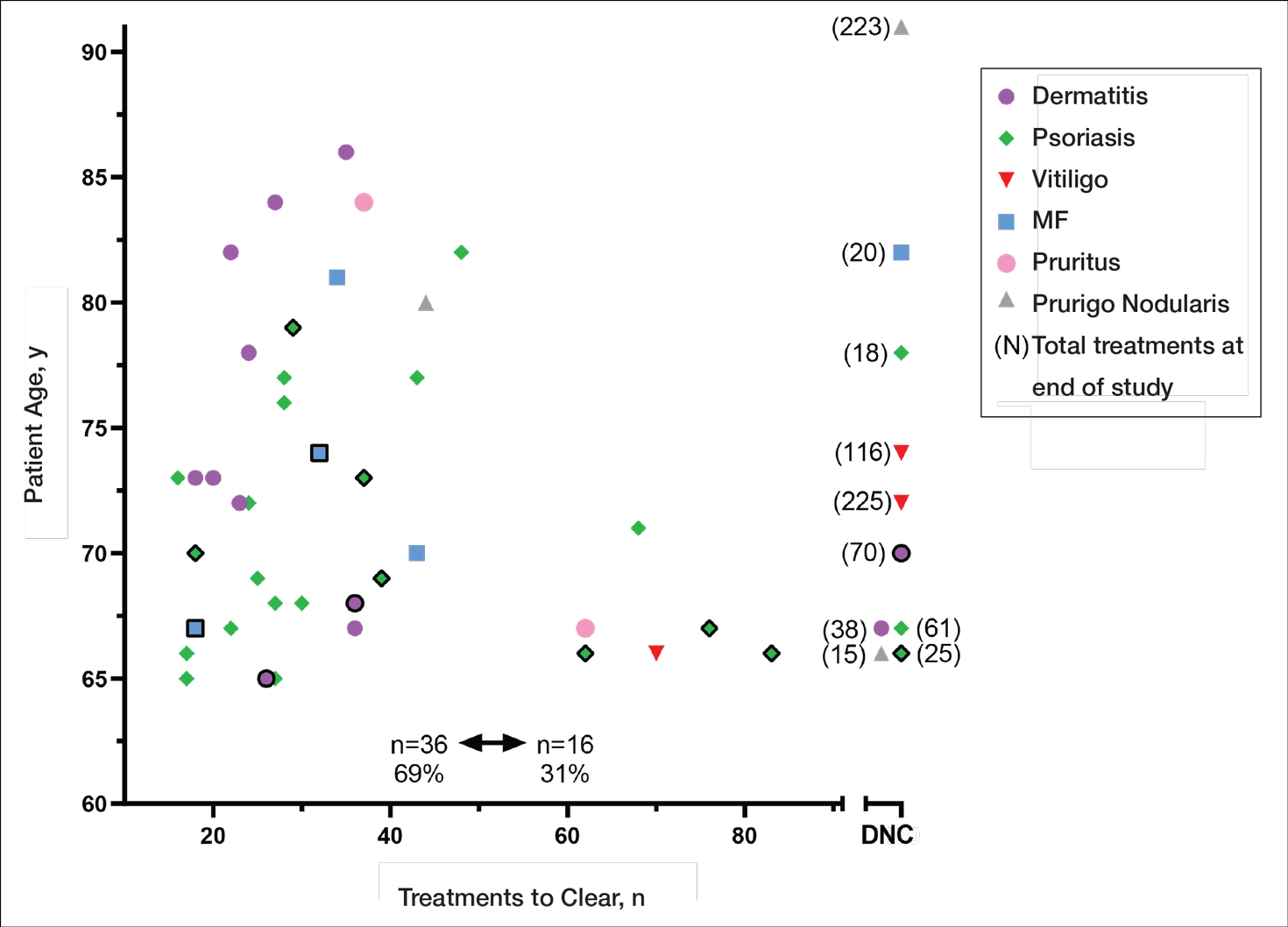

Our primary aim was to identify the most common dermatologic conditions treated with phototherapy in older adults. Psoriasis and dermatitis were the most common conditions treated in the sample (50% [26/52] and 21% [11/52], respectively), with mycosis fungoides being the third most common (10% [5/52]) and vitiligo tied with prurigo nodularis as fourth most common (6% [3/52])(Figure 1).

Effectiveness and Safety of Phototherapy

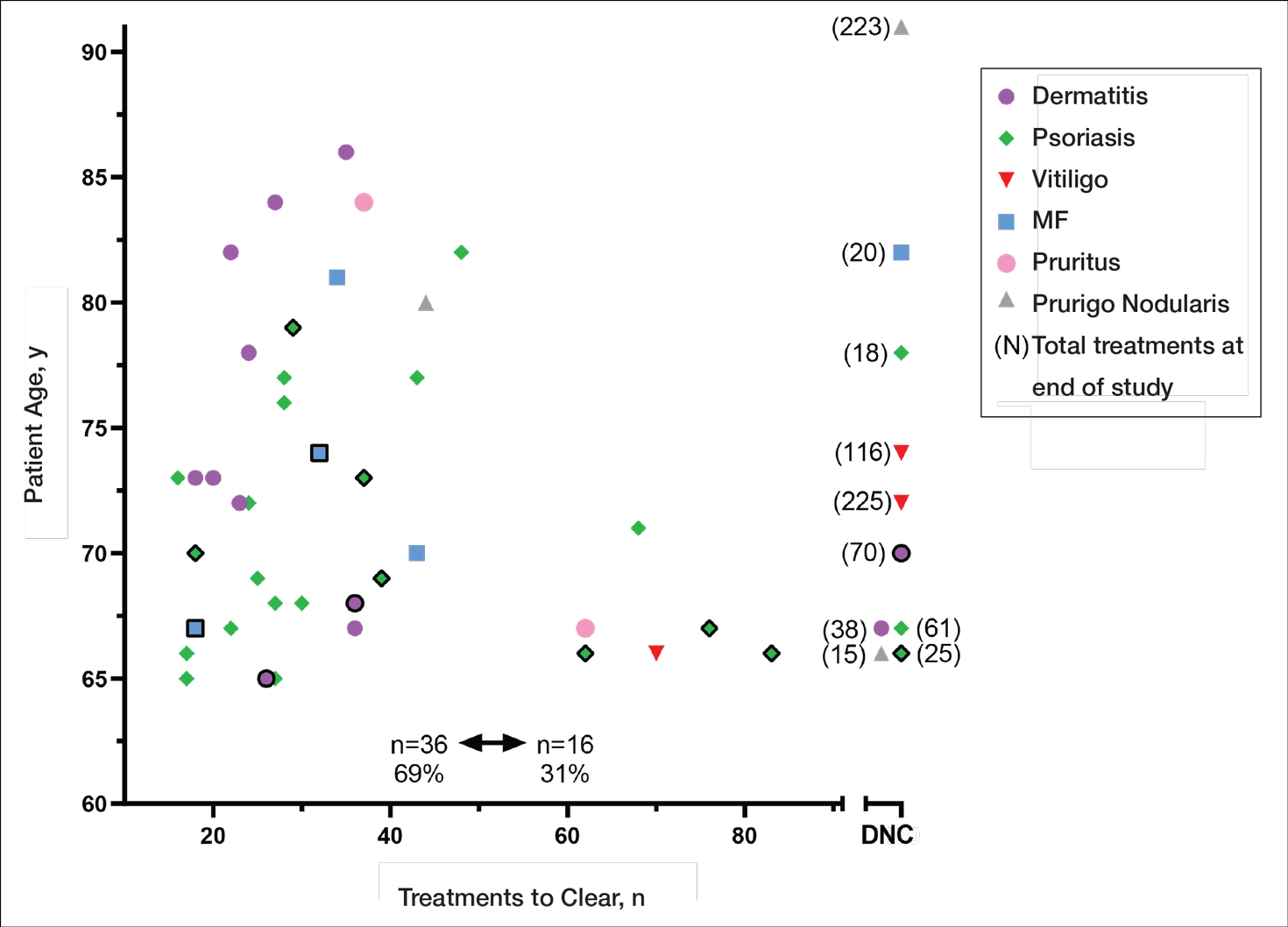

Our secondary aim was to examine the effectiveness and safety of phototherapy in older adults. Phototherapy was effective in this population, with 50 of 52 patients (96%) achieving a high or medium level of clearance. The degree of clearance for each of the dermatologic conditions is shown in Figure 2. Psoriasis and dermatitis achieved high clearance rates in 81% (21/26) and 82% (9/11) of patients, respectively. Overall, clearance rates did not differ significantly across conditions (Fisher exact test, P=.10). On average, it took patients 33 treatments to achieve a medium or high level of clearance. Psoriasis cleared more quickly, with an average of 30.4 treatments vs 36.1 treatments for other conditions, but the difference was not significant (t test, P=.26). Patients received an average of 98 total phototherapy treatments (median, 81); the totals were high because many patients were on maintenance therapy over several months. There was no relationship between a history of treatment with phototherapy and the total number of treatments needed to achieve clearance (t test, P=.40), but interestingly, those who had a history of phototherapy took approximately 5 more treatments to achieve clearance. The present study found that a slightly larger number of men were being treated for psoriasis (15 men vs 11 women), but there was no significant difference in response rate based on gender.
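To illustrate how a Fisher exact test operates on counts of this kind, the sketch below applies a two-sided test to a 2x2 table built only from the high-clearance figures reported above for psoriasis (21 of 26) and dermatitis (9 of 11). This is an illustrative two-condition comparison and an assumption on our part; it is not the across-condition test that produced the reported P=.10, and the function name is hypothetical.

```python
from math import comb


def fisher_exact_two_sided(a, b, c, d):
    """Two-sided Fisher exact P value for the 2x2 table [[a, b], [c, d]]."""
    row1, row2 = a + b, c + d
    col1 = a + c
    denom = comb(row1 + row2, col1)

    def prob(x):
        # Hypergeometric probability of x in the top-left cell, margins fixed.
        return comb(row1, x) * comb(row2, col1 - x) / denom

    lo = max(0, col1 - row2)
    hi = min(row1, col1)
    p_obs = prob(a)
    # Sum the probabilities of every table no more likely than the observed one.
    total = sum(p for p in (prob(x) for x in range(lo, hi + 1))
                if p <= p_obs * (1 + 1e-9))
    return min(1.0, total)


# Rows: psoriasis, dermatitis. Columns: high clearance, less than high clearance.
p_value = fisher_exact_two_sided(21, 26 - 21, 9, 11 - 9)
print(f"P = {p_value:.2f}")
```

Because the two high-clearance proportions are nearly identical (81% vs 82%), the observed table is the most probable one under the null hypothesis, so this illustrative comparison yields a P value of essentially 1.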

Side effects from phototherapy were minimal; 24 patients (46%) experienced grade 1 (mild) erythema at some point during their treatment course. Thirteen (25%) patients experienced grade 2 erythema, but this was a rare event for most patients. Only 1 (2%) patient experienced grade 3 erythema 1 time. Three (6%) patients experienced increased itching. Thirteen (25%) patients had no side effects. None developed severe erythema or blisters, and none discontinued phototherapy because of side effects. Over the course of the study year, we found a high degree of acceptance of phototherapy treatments by older patients: 22 (42%) completed therapy after achieving clearance, 10 (19%) were continuing ongoing treatments (maintenance), and 15 (29%) stopped because of life circumstances (eg, other health issues, moving out of the area). Only 4 (8%) stopped because of a lack of effectiveness, and 1 (2%) patient because the treatments were burdensome.
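The treatment-disposition counts above account for the full cohort of 52 patients, which can be confirmed with a short tally. The sketch below is only an arithmetic check; the dictionary labels are paraphrases of the categories in the text.

```python
cohort_size = 52

# Disposition of patients at the end of the study year, as reported above.
disposition = {
    "completed after clearance": 22,
    "continuing maintenance": 10,
    "stopped: life circumstances": 15,
    "stopped: lack of effectiveness": 4,
    "stopped: treatments burdensome": 1,
}

# Every patient should fall into exactly one category.
assert sum(disposition.values()) == cohort_size

# Whole-number percentages, as reported in the text.
percentages = {label: round(100 * n / cohort_size) for label, n in disposition.items()}

for label, share in percentages.items():
    print(f"{label}: {disposition[label]} ({share}%)")
```

The rounded percentages need not sum to exactly 100, since each is rounded independently.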

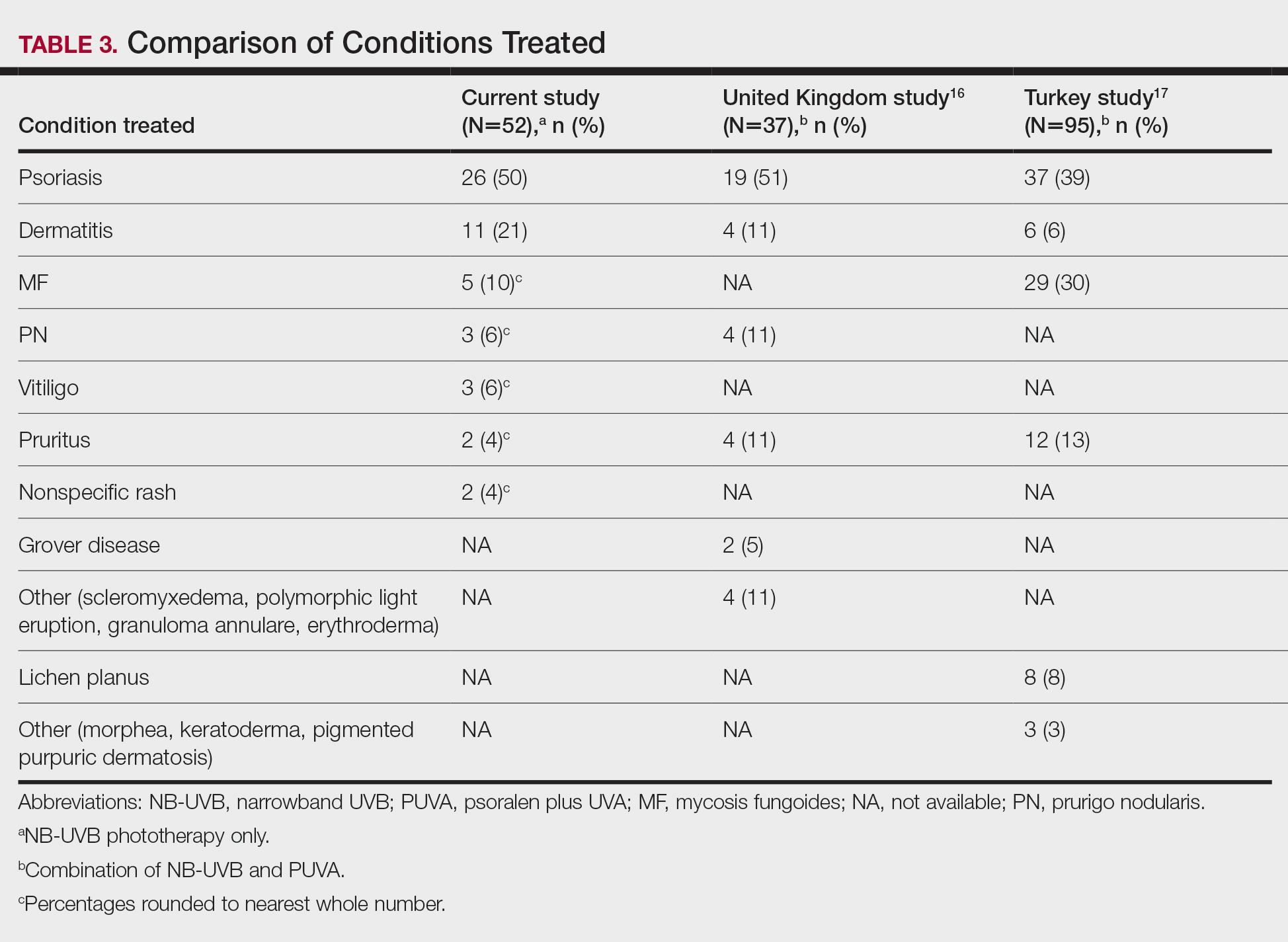

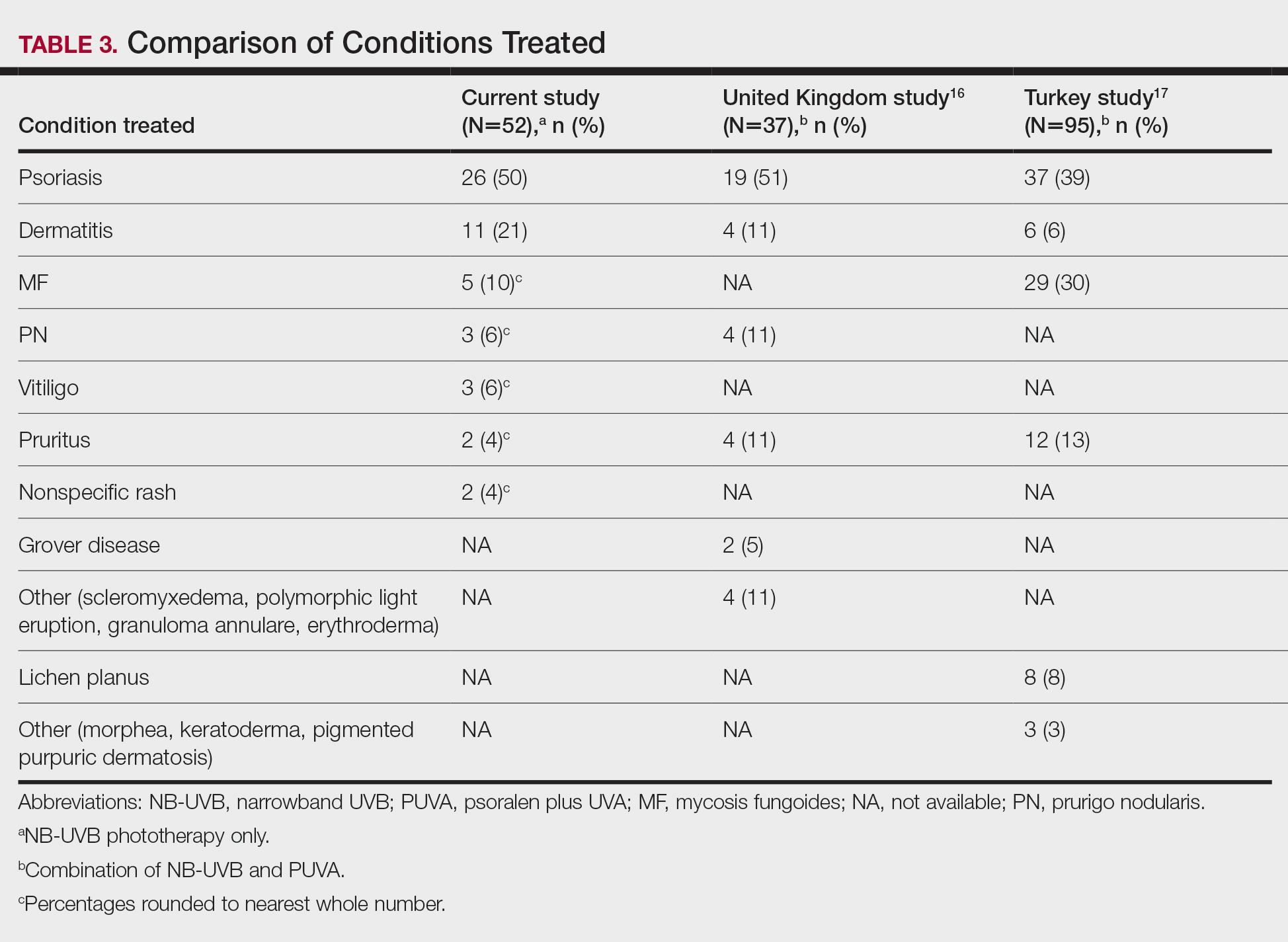

Comparison of Outcomes

Our third aim was to compare the outcomes with similar studies in the United Kingdom16 and Turkey.17 This study confirmed that phototherapy is being used in older adults (22.7% of this study’s total patients) and is an effective treatment for older patients experiencing a range of challenging inflammatory and proliferative skin diseases similar to studies in the general population. Prior phototherapy studies in elderly patients also found psoriasis to be the most common skin condition treated, with 1 study finding that 51% (19/37) of older phototherapy patients had psoriasis,16 while another reported 58% (37/95) of older phototherapy patients had psoriasis.17 These numbers are similar to those in our study, which showed 50% (26/52) of elderly phototherapy patients had psoriasis. Psoriasis is the main indication for treatment with NB-UVB phototherapy in the general population,19 and because the risk for psoriasis increases with age,20 it is not surprising that all 3 studies found psoriasis to be the most common indication in elderly phototherapy patients. Table 3 provides further details on conditions treated in all 3 studies.

Comment

Our study found that 94% of patients with psoriasis achieved clearance with an average of 30.4 treatments, which is comparable to the reported 91% response rate with an average of 30 treatments in the United Kingdom.16 The other similar study in Turkey17 reported 73.7% of psoriasis patients achieved a 75% or more improvement from baseline with an average of 42 treatments, which may reflect underlying differences in regional skin type. Of note, the scatter chart (Figure 3) shows that several patients in the present study’s analysis are listed as not clear, but many of those patients had low treatment numbers below the mean time to clearance. Thus, the present study’s response rate may have been underestimated.

In the general population, studies show that psoriasis treated with standardized phototherapy protocols typically clears with an average of 20.6 treatments.21 The levels of clearance were similar in our study’s older population, but more treatments were required to achieve those results, with an average of 10 more treatments needed (an additional 3.3 weeks). Similar results were found in this sample for dermatitis and mycosis fungoides, indicating comparable clearance rates and levels but a need for more treatments to achieve similar results compared to the general population.
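The "10 more treatments" and "3.3 weeks" figures follow from the two reported averages. The sketch below shows the arithmetic; the schedule of 3 sessions per week is an assumption inferred from the stated conversion and is not given explicitly in the text above.

```python
older_adult_mean = 30.4  # mean treatments to clear psoriasis in this study
general_pop_mean = 20.6  # mean treatments reported for the general population (ref 21)
sessions_per_week = 3    # assumption: inferred from "10 treatments = 3.3 weeks"

extra_treatments = older_adult_mean - general_pop_mean
extra_weeks = round(extra_treatments) / sessions_per_week

print(f"Extra treatments: {extra_treatments:.1f}")
print(f"Extra weeks at {sessions_per_week} sessions/week: {extra_weeks:.1f}")
```

Under a different treatment frequency the additional time would scale accordingly, for example 5 weeks at 2 sessions per week.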

Additionally, in the current study more patients experienced grade 1 (mild) erythema (46%) and grade 2 erythema (25%) at some point in their treatment compared with the United Kingdom16 (1.89%) and Turkey17 (35%) studies, though these side effects did not impact the clearance rate. Interestingly, the current study’s scatter chart (Figure 3) illustrates that this side effect did not seem to increase with aging in this population. If anything, the erythema response was more prevalent in the median or younger patients in the sample. Erythema may have been due to the frequent use of photosensitizing medications in older adults in the United States, some of which typically get discontinued in patients 75 years and older (eg, statins). Other potential causes might include the use of phototype vs minimal erythema dose–driven protocols, the standard utilization of protocols originally designed for psoriasis vs other condition-specific protocols, missed treatments leading to increased sensitivity, or possibly shielding mishaps (eg, not wearing a prescribed face shield). Given the number of potential causes and the possibility of overlapping factors, careful analysis is important. With NB-UVB phototherapy, near-erythemogenic doses are optimal to achieve effective treatments, but this delicate balance may be more problematic for older adults. Future studies are needed to fully determine the factors at play for this population. In the interim, it is important for phototherapy-trained nurses to consider this risk carefully in the older population. They must follow the prescribed protocols that guide them to query patients about their responses to the prior treatment (eg, erythema, tenderness, itching), photosensitizing medications, missed treatments, and placement of shielding, and then adjust the treatment dosing accordingly.

Limitations

This study had several limitations. Although clinical outcomes were recorded prospectively, the analysis was retrospective, unblinded, and not placebo controlled. It was conducted in a single organization (Group Health [now Kaiser Permanente Washington]) but analyzed data from 4 medical centers in different cities with diverse demographics and a variety of nursing staff providing the treatments. Although the vitiligo treatment protocol likely slowed the response rate for those patients with vitiligo, the numbers were small (ie, only 3 of 52 patients), so the researchers chose to include them in the current study. The sample population was relatively small, but when these data are evaluated alongside the studies in the United Kingdom16 and Turkey,17 they show a consistent picture illustrating the effectiveness and safety of phototherapy in the older population. Additional epidemiologic studies could help further describe the usefulness of this modality compared with other treatments for a variety of dermatoses in this age group. Supplementary analysis specifically examining the relationship between the number and type of photosensitizing medications, frequency of erythema, and time to clearance also could be useful.

Conclusion

Older adults with a variety of dermatoses respond well to phototherapy and should have the opportunity to use it, particularly considering the potential for increased complications and costs from other treatment modalities, such as commonly used immunosuppressive pharmaceuticals. However, the current study and the comparison studies indicate that it is important to carefully consider the slower clearance rates and the potential risk for increased erythema in this population and adjust patient education and treatment dosing accordingly.

Unfortunately, many dermatology centers do not offer phototherapy because of infrastructure limitations such as space and specially trained nursing staff. Increasing accessibility of phototherapy for older adults through home treatments may be an alternative, given its effectiveness in the general population.22,23 In addition, home phototherapy may be worth pursuing for the older population considering the challenges they may face with transportation to the clinic setting and their increased risk for serious illness if exposed to infections such as COVID-19. The COVID-19 pandemic has brought to light the need for reliable, safe, and effective treatments that can be utilized in the safety of patients’ homes; home phototherapy should therefore be considered as an option for older adults. Issues such as mobility and cognitive decline could pose some complicating factors, but with the help of a well-trained family member or caregiver, home phototherapy could be a viable option that improves accessibility for older patients. Future research opportunities include further examination of the slower but ultimately equivalent response to phototherapy in the older population, the influence of photosensitizing medications on phototherapy effects, and the impact of phototherapy on utilization of immunosuppressive pharmaceuticals in older adults.

References

- British Photodermatology Group. An appraisal of narrowband (TL-01) UVB phototherapy. British Photodermatology Group Workshop Report (April 1996). Br J Dermatol. 1997;137:327-330.

- Foerster J, Boswell K, West J, et al. Narrowband UVB treatment is highly effective and causes a strong reduction in the use of steroid and other creams in psoriasis patients in clinical practice. PLoS ONE. 2017;12:e0181813. doi:10.1371/journal.pone.0181813

- Fernández-Guarino M, Aboin-Gonzalez S, Barchino L, et al. Treatment of moderate and severe adult chronic atopic dermatitis with narrow-band UVB and the combination of narrow-band UVB/UVA phototherapy. Dermatol Ther. 2015;29:19-23.

- Ryu HH, Choe YS, Jo S, et al. Remission period in psoriasis after multiple cycles of narrowband ultraviolet B phototherapy. J Dermatol. 2014;41:622-627.

- Tintle S, Shemer A, Suárez-Fariñas M, et al. Reversal of atopic dermatitis with narrow-band UVB phototherapy and biomarkers for therapeutic response. J Allergy Clin Immunol. 2011;128:583-593.

- Gambichler T, Breuckmann F, Boms S, et al. Narrowband UVB phototherapy in skin conditions beyond psoriasis. J Am Acad Dermatol. 2005;52:660-670.

- Schneider LA, Hinrichs R, Scharffetter-Kochanek K. Phototherapy and photochemotherapy. Clin Dermatol. 2008;26:464-476.

- Martin JA, Laube S, Edwards C, et al. Rate of acute adverse events for narrow-band UVB and psoralen-UVA phototherapy. Photodermatol Photoimmunol Photomed. 2007;23:68-72.

- Mokos ZB, Jovic A, Ceovic R, et al. Therapeutic challenges in the mature patient. Clin Dermatol. 2018;36:128-139.

- Di Lernia V, Goldust M. An overview of the efficacy and safety of systemic treatments for psoriasis in the elderly. Exp Opin Biol Ther. 2018;18:897-903.

- Napolitano M, Balato N, Ayala F, et al. Psoriasis in elderly and non-elderly population: clinical and molecular features. G Ital Dermatol Venereol. 2016;151:587-595.

- Grozdev IS, Van Voorhees AS, Gottlieb AB, et al. Psoriasis in the elderly: from the Medical Board of the National Psoriasis Foundation. J Am Acad Dermatol. 2011;65:537-545.

- Click J, Alabaster A, Postlethwaite D, et al. Effect of availability of at-home phototherapy on the use of systemic medications for psoriasis. Photodermatol Photoimmunol Photomed. 2017;33:345-346.

- Piaserico S, Conti A, Lo Console F, et al. Efficacy and safety of systemic treatments for psoriasis in elderly. Acta Derm Venereol. 2014;94:293-297.

- Soliman A, Nofal E, Nofal A, et al. Combination therapy of methotrexate plus NB-UVB phototherapy is more effective than methotrexate monotherapy in the treatment of chronic plaque psoriasis. J Dermatol Treat. 2015;26:528-534.

- Powell JB, Gach JE. Phototherapy in the elderly. Clin Exp Dermatol. 2015;40:605-610.

- Bulur I, Erdogan HK, Aksu AE, et al. The efficacy and safety of phototherapy in geriatric patients: a retrospective study. An Bras Dermatol. 2018;93:33-38.

- Madigan LM, Al-Jamal M, Hamzavi I. Exploring the gaps in the evidence-based application of narrowband UVB for the treatment of vitiligo. Photodermatol Photoimmunol Photomed. 2016;32:66-80.

- Ibbotson SH. A perspective on the use of NB-UVB phototherapy vs. PUVA photochemotherapy. Front Med (Lausanne). 2018;5:184.

- Bell LM, Sedlack R, Beard CM, et al. Incidence of psoriasis in Rochester, Minn, 1980-1983. Arch Dermatol. 1991;127:1184-1187.

- Totonchy MB, Chiu MW. UV-based therapy. Dermatol Clin. 2014;32:399-413.

- Cameron H, Yule S, Dawe RS, et al. Review of an established UK home phototherapy service 1998-2011: improving access to a cost-effective treatment for chronic skin disease. Public Health. 2014;128:317-324.

- Matthews SW, Simmer M, Williams L, et al. Transition of patients with psoriasis from office-based phototherapy to nurse-supported home phototherapy: a pilot study. JDNA. 2018;10:29-41.

Identifying safe, effective, and affordable evidence-based dermatologic treatments for older adults can be challenging because of age-related changes in the skin, comorbidities, polypharmacy, mobility issues, and cognitive changes. Phototherapy has been shown to be an effective nonpharmacologic treatment option for multiple challenging dermatologic conditions1-8; however, few studies have specifically examined its effectiveness in older adults. The challenge for older patients with psoriasis and dermatitis is that the conditions can be difficult to control and often require multiple treatment modalities.9,10 Patients with psoriasis also have a higher risk for diabetes, dyslipidemia, and cardiovascular disease compared to other older patients,11,12 which poses treatment challenges and makes nonpharmacologic treatments even more appealing.

Recent studies show that phototherapy can help decrease the use of dermatologic medications. Foerster and colleagues2 found that adults with psoriasis who were treated with phototherapy significantly decreased their use of topical steroids (24.5% fewer patients required steroid creams and 31.1% fewer patients required psoriasis-specific topicals)(P<.01) while their use of non–psoriasis-specific medications did not change. Click and colleagues13 identified a decrease in medication costs, health care utilization, and risk for immunosuppression in patients treated with phototherapy when compared to those treated with biologics and apremilast. Methotrexate is a common dermatologic medication that is highly associated with increased risks in elderly patients because of impaired immune system function and the presence of comorbidities (eg, kidney disease, obesity, diabetes, fatty liver),14 which increase in prevalence with age. Combining phototherapy with methotrexate can substantially decrease the amount of methotrexate needed to achieve disease control,15 thereby decreasing the methotrexate-associated risks. Findings from these studies suggest that a safe, effective, cost-effective, and well-tolerated nonpharmacologic alternative, such as phototherapy, is highly desirable and should be optimized. Unfortunately, most studies that report the effectiveness of phototherapy are in younger populations.

This retrospective study aimed to (1) identify the most common dermatologic conditions treated with phototherapy in older adults, (2) examine the effectiveness and safety of phototherapy in older adults

Methods

Design, Setting, Sample, and Statistical Analysis

The institutional review boards of Kaiser Permanente Washington Health Research Institute, Seattle, and the University of Washington, Seattle, approved this study. It was conducted in a large US multispecialty health care system (Group Health, Seattle, Washington [now Kaiser Permanente Washington]) serving approximately 600,000 patients. Billing records were used to identify all patients treated with phototherapy between January 1, 2015, and December 31, 2015, all of whom received narrowband UVB (NB-UVB) phototherapy. All adults 65 years and older who received phototherapy treatment during the 12-month study period were included. Patients were included regardless of comorbidities and other dermatologic treatments to maintain as much uniformity as possible between the present study and 2 prior studies examining phototherapy in older adult populations in the United Kingdom16 and Turkey.17 Demographic and clinical factors were presented using frequencies (percentages) or means and medians as appropriate. Comparisons of dermatologic conditions and clearance levels used a Fisher exact test. The number of phototherapy treatments to clearance and total number of treatments were compared between groups of patients using independent sample t tests.

Phototherapy Protocol

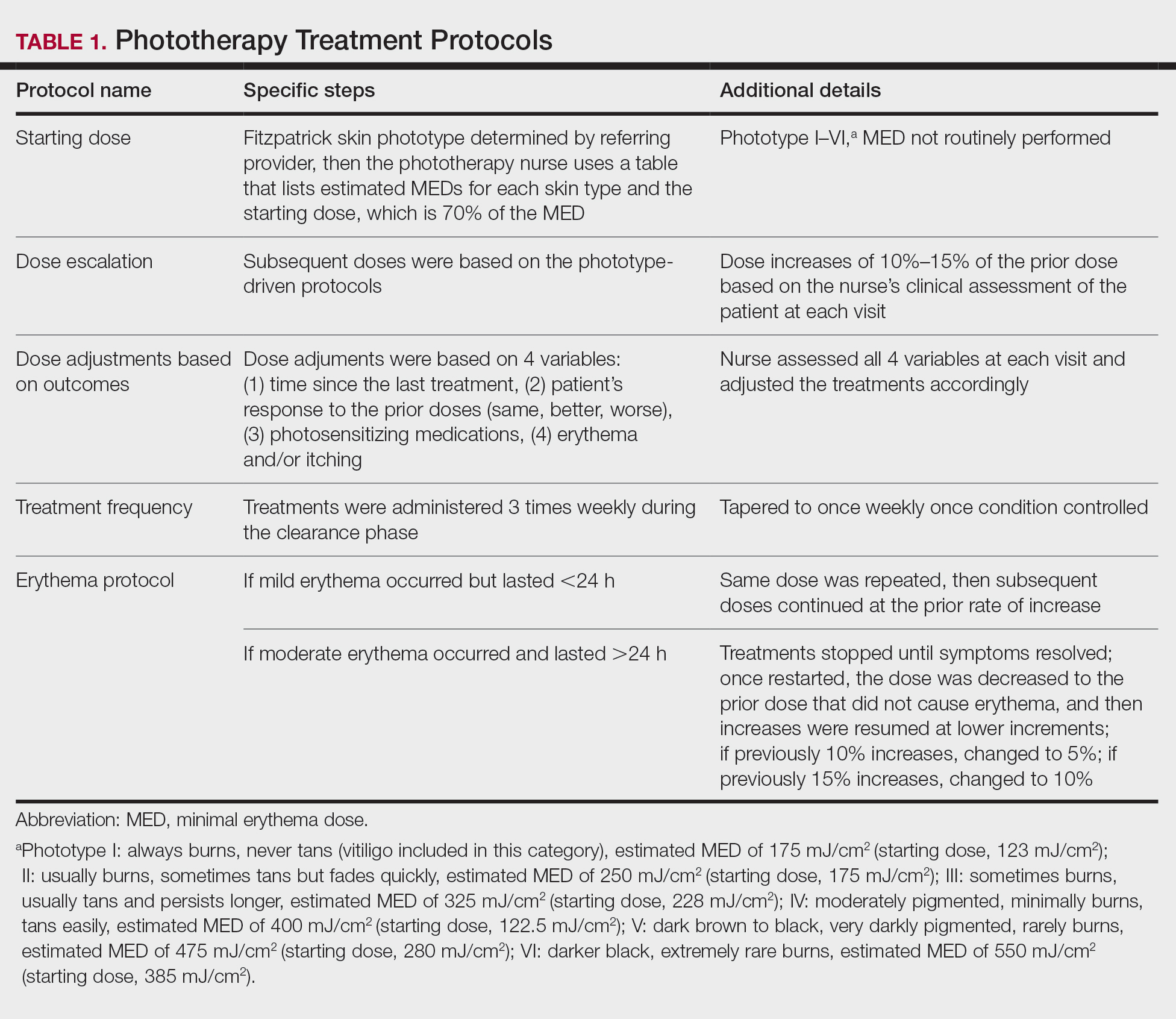

All patients received treatments administered by specially trained phototherapy nurses using a Daavlin UV Series (The Daavlin Company) or an Ultralite unit (Ultralite Enterprises, Inc), both with 48 lamps. All phototherapy nurses had been previously trained to provide treatments based on standardized protocols (Table 1) and to determine the patient’s level of disease clearance using a high to low clearance scale (Table 2). Daavlin’s treatment protocols were built into the software that accompanied the units and were developed based on the American Academy of Dermatology guidelines. The starting dose for an individual patient was determined based on the estimated

Results

Patients

Billing records identified 229 total patients who received phototherapy in 2015, of whom 52 (22.7%) were at least 65 years old. The median age was 70 years (range, 65–91 years). Twenty-nine (56%) were men and 35 (67%) had previously received phototherapy treatments.

Dermatologic Conditions Treated With Phototherapy

Our primary aim was to identify the most common dermatologic conditions treated with phototherapy in older adults. Psoriasis and dermatitis were the most common conditions treated in the sample (50% [26/52] and 21% [11/52], respectively), with mycosis fungoides being the third most common (10% [5/52]) and vitiligo tied with prurigo nodularis as fourth most common (6% [3/52])(Figure 1).

Effectiveness and Safety of Phototherapy

Our secondary aim was to examine the effectiveness and safety of phototherapy in older adults. Phototherapy was effective in this population, with 50 of 52 patients (96%) achieving a high or medium level of clearance. The degree of clearance for each of the dermatologic conditions is shown in Figure 2. Psoriasis and dermatitis achieved high clearance rates in 81% (21/26) and 82% (9/11) of patients, respectively. Overall, clearance rates did not differ significantly among conditions (Fisher exact test, P=.10). On average, it took patients 33 treatments to achieve medium or high rates of clearance. Psoriasis cleared more quickly, with an average of 30.4 treatments vs 36.1 treatments for other conditions, but the difference was not significant (t test, P=.26). Patients received an average of 98 total phototherapy treatments (median, 81), reflecting that many were on maintenance therapy over several months. There was no significant relationship between a history of treatment with phototherapy and the total number of treatments needed to achieve clearance (t test, P=.40), but interestingly, those who had a history of phototherapy took approximately 5 more treatments to achieve clearance. The present study found that a slightly larger number of men were being treated for psoriasis (15 men vs 11 women), but there was no significant difference in response rate based on gender.

Side effects from phototherapy were minimal; 24 patients (46%) experienced grade 1 (mild) erythema at some point during their treatment course. Thirteen (25%) patients experienced grade 2 erythema, but this was a rare event for most patients. Only 1 (2%) patient experienced grade 3 erythema 1 time. Three patients experienced increased itching (6%). Thirteen (25%) patients had no side effects. None developed severe erythema or blisters, and none discontinued phototherapy because of side effects. Over the course of the study year, we found a high degree of acceptance of phototherapy treatments by older patients: 22 (42%) completed therapy after achieving clearance, 10 (19%) were continuing ongoing treatments (maintenance), and 15 (29%) stopped because of life circumstances (eg, other health issues, moving out of the area). Only 4 (8%) stopped because of a lack of effectiveness, and 1 (2%) patient because the treatments were burdensome.
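The percentages in this paragraph can be reproduced from the reported counts. The short sketch below is a consistency check rather than part of the study's analysis: it assumes each percentage uses the full sample of 52 patients as its denominator and is rounded to the nearest whole number. The dictionary keys are illustrative labels.

```python
N_PATIENTS = 52  # older adults in the sample

# Counts as reported in the text. Side-effect categories can overlap,
# because a patient may appear in more than one erythema grade.
side_effects = {
    "grade 1 erythema": 24,
    "grade 2 erythema": 13,
    "grade 3 erythema": 1,
    "increased itching": 3,
    "no side effects": 13,
}

# Treatment dispositions are mutually exclusive and should sum to 52.
dispositions = {
    "completed after clearance": 22,
    "ongoing maintenance": 10,
    "stopped, life circumstances": 15,
    "stopped, lack of effectiveness": 4,
    "stopped, treatments burdensome": 1,
}


def percent(count, total):
    """Whole-number percentage of total (assumed rounding convention)."""
    return round(100 * count / total)


side_effect_pct = {k: percent(v, N_PATIENTS) for k, v in side_effects.items()}
disposition_pct = {k: percent(v, N_PATIENTS) for k, v in dispositions.items()}

for label, pct in {**side_effect_pct, **disposition_pct}.items():
    print(f"{label}: {pct}%")
print("Dispositions total:", sum(dispositions.values()))
```

The disposition counts account for all 52 patients, whereas the side-effect counts sum to more than 52, which is consistent with some patients experiencing more than one type of reaction during their treatment course.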

Comparison of Outcomes