Contralateral Constrictor Dose Predicts Swallowing Function After Radiation for Head and Neck Cancer

Radiation therapy can cause long-term dysphagia that seriously affects quality of life for survivors of head and neck (H&N) cancer.1-3 Numerous studies have linked pharyngeal constrictor dose to long-term dysphagia, but conclusions about the dose distribution that can be safely tolerated have been inconsistent. For example, a group from the Netherlands found that the mean doses to the superior pharyngeal constrictor muscle and the supraglottic larynx were each predictive of dysphagia.4 A subsequent Vanderbilt study refuted these findings, reporting that these structures were not predictive but that dose to the inferior pharyngeal constrictor muscle was.5 Other studies have connected late dysphagia with dose to the middle pharyngeal constrictor muscle, total larynx, oral cavity, contralateral submandibular gland, contralateral parotid gland, or a combination of these structures.6-14 NRG Oncology trials commonly evaluate dose to the “uninvolved pharynx,” which is the total pharyngeal constrictor muscle volume minus the planning target volume for the lowest-dose target volume. NRG H&N trials 3, 4, 5, 6, 8, and 9 all use uninvolved pharynx mean dose ≤ 45 Gy as a constraint to judge radiation plan quality.

Differences in methodology or patient population may explain the inconsistency of prior studies on dosimetric predictors of dysphagia, but it is possible that these studies did not evaluate the optimal metric for dysphagia. This study evaluates a novel organ at risk, the contralateral pharyngeal constrictor muscle, to determine whether dose to this structure is predictive of late swallowing function. The study also compares a constraint based on this structure to the NRG uninvolved pharynx constraint mentioned earlier.

Methods

This study is a retrospective review of patients treated at the Richard L. Roudebush Veterans Affairs (VA) Medical Center in Indianapolis, Indiana. Patients were identified by searching the VA Cancer Registry for patients treated for H&N squamous cell carcinoma between September 1, 2016, and August 30, 2019. Eligible sites included cancers of the nasopharynx, oropharynx, hypopharynx, larynx and oral cavity, as well as H&N cancer of an unknown primary site. Only patients treated with primary radiation with concurrent systemic therapy were included. Patients were excluded if they had prior surgery or radiation to the H&N.

The pharyngeal constrictor muscles were contoured per the techniques described by Bhide and colleagues.11 The contralateral constrictor was defined as the half of the constrictor volume contralateral to the primary site. For midline tumors, the side of the neck with a lower volume of lymph node metastases was judged to be the contralateral side.

One-year dysphagia was defined as having a gastrostomy tube (G-tube) in place or an abnormal modified barium swallow (MBS) ≥ 12 months after the completion of radiation. At the study institution, MBS is not routinely done after therapy but is ordered if a patient or clinician has concerns about swallowing function. MBS was considered abnormal if there was laryngeal penetration that reached the level of the glottis or was not ejected from the larynx.

Results

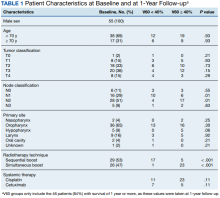

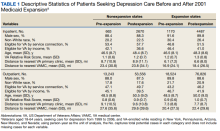

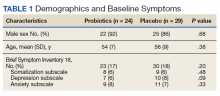

The VA Cancer Registry identified 113 patients treated for H&N cancer during the study period. Of these, 55 patients met the inclusion criteria. No patients were lost to follow-up. The median follow-up was 29 months. The median age was 67 years (range, 41-83) (Table 1).

All patients were treated with intensity-modulated radiotherapy (IMRT). Patients treated with a sequential boost had an initial dose of 54 Gy and/or 50 Gy, followed by a boost to a total of 70 Gy at 2 Gy per fraction. Patients treated with a simultaneous integrated boost (SIB) technique received 69.96 Gy in 33 fractions, with elective volumes treated to 54.45 Gy in 33 fractions. Both patients with nasopharyngeal cancer were treated with SIB plans and had an intermediate dose volume of 59.4 Gy.

Systemic therapy was weekly cisplatin in 41 patients (75%) and cetuximab in 14 (25%). Twenty percent of patients receiving cisplatin switched to an alternative agent during treatment, most commonly carboplatin.

Forty-nine patients (89%) had a G-tube placed before starting radiation. G-tubes were in place for an interval of 0 to 47 months (mean, 8.6); 12 (22%) had a G-tube > 12 months. After completion of radiation, 18 patients (33%) had an abnormal MBS. These were done 1 to 50 months (mean, 14.8) after completion of radiation. Abnormal MBS occurred ≥ 12 months after radiation in 9 patients, 5 of whom had their G-tube in place for less than a year.

Forty-six patients (84%) survived more than 1 year and could be evaluated for late swallowing function. One-year dysphagia was seen in 17 (37%) of these patients. Recurrence was seen in 20 patients (36%), with locoregional recurrence in 12 (60%) of these cases. Recurrence occurred at a range of 0 to 15 months (mean, 5.6). Neither recurrence (P = .69) nor locoregional recurrence (P = .11) was associated with increased 1-year dysphagia.

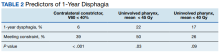

In patients who could be evaluated for long-term swallowing function, contralateral constrictor V60 ranged from 0% to 100% (median, 51%). V60 was < 40% in 18 patients (39%). With V60 < 40%, there was a 6% rate of 1-year dysphagia compared with 57% for V60 ≥ 40% (P < .001).
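The V60 metric used above is, in standard dose-volume notation, the percentage of a structure's volume that receives at least 60 Gy. A minimal sketch of how it could be computed and checked against the 40% threshold follows. The function names, the equal-voxel-volume assumption, and the sample doses are all hypothetical and are not taken from the study's planning system or data.

```python
# Sketch only: assumes every voxel has the same volume, so the percent of
# voxels at or above the threshold equals the percent of volume.

def v_dose_percent(voxel_doses_gy, threshold_gy=60.0):
    """Percent of a structure's voxels receiving at least threshold_gy."""
    if not voxel_doses_gy:
        raise ValueError("structure has no voxels")
    hot = sum(1 for dose in voxel_doses_gy if dose >= threshold_gy)
    return 100.0 * hot / len(voxel_doses_gy)


def meets_v60_constraint(voxel_doses_gy, limit_percent=40.0):
    """True when V60 is below the limit (V60 < 40% in this study)."""
    return v_dose_percent(voxel_doses_gy, 60.0) < limit_percent


# Hypothetical doses (Gy) for ten voxels of a contralateral constrictor.
contralateral = [35.0, 48.0, 52.0, 58.0, 59.5, 61.0, 63.0, 66.0, 55.0, 44.0]

v60 = v_dose_percent(contralateral)
print(f"V60 = {v60:.0f}%, meets constraint: {meets_v60_constraint(contralateral)}")
```

In this made-up example, three of the ten voxels receive at least 60 Gy, so the plan falls in the V60 < 40% group.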

Patients with contralateral constrictor V60 < 40% and V60 ≥ 40% both had a mean age of 65 years. χ² analysis did not show a difference in T stage or systemic treatment but did show that patients with V60 < 40% were more likely to have N1 disease (P = .01) and less likely to have N2 disease (P = .01) compared with patients with V60 ≥ 40%. The difference in 1-year dysphagia between N0 to N1 patients (27%) and N2 to N3 patients (46%) was not statistically significant (P = .19).

In patients who could be evaluated for long-term swallowing function, the uninvolved pharynx made up a median of 32% of the total constrictor volume (range, < 1%-62%). The uninvolved pharynx mean dose ranged from 28 to 68 Gy (median, 45 Gy). When the uninvolved pharynx mean dose was < 45 Gy, 1-year dysphagia was 22% compared with 52% with a dose ≥ 45 Gy (P = .03).

Air cavity editing was performed in 27 patients (49%). One-year survival was 93% with air cavity editing, and 75% without, which was not statistically significant. Locoregional recurrence occurred in 3 patients (11%) with air cavity editing, and 9 (32%) without, which was not statistically significant. In patients surviving at least 1 year, contralateral constrictor V60 averaged 33% with editing and 62% without editing (P < .001). One-year dysphagia was 12% with air cavity editing and 67% without editing (P < .001).

An SIB technique was done in 26 patients (47%). One-year survival was 85% (n = 22) with SIB and 83% (n = 24) with sequential boost, which was not statistically significant. Locoregional recurrence occurred in 19% with SIB, and 32% with sequential boost, which was not statistically significant. For SIB patients alive at 1 year, the median contralateral V60 was 28%, compared with 66% for patients treated with sequential technique. Seventeen patients (77%) with SIB had V60 < 40%. Nineteen (86%) of SIB plans also had air cavity editing. One patient (5%) with SIB had dysphagia at 1 year, compared with 16 (67%) sequential patients (P < .001).
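The SIB comparison above can be checked from the reported counts: 1 of 22 SIB patients and 16 of 24 sequential-boost patients had 1-year dysphagia. The sketch below applies a two-sided Fisher exact test to that 2 × 2 table. The choice of test is an assumption made for illustration, since the text does not state which test produced this P value, and the function name is hypothetical.

```python
from math import comb

# Sketch only: Fisher's exact test is assumed here; the article does not
# say which test was used for this comparison.

def fisher_exact_two_sided(a, b, c, d):
    """Two-sided Fisher exact P value for the table [[a, b], [c, d]]."""
    row1 = a + b            # size of the first group
    col1 = a + c            # total number of events
    n = a + b + c + d
    denom = comb(n, row1)

    def prob(x):
        # Hypergeometric probability of x events in the first group.
        return comb(col1, x) * comb(n - col1, row1 - x) / denom

    lo = max(0, row1 - (n - col1))
    hi = min(row1, col1)
    p_obs = prob(a)
    # Sum every table that is no more likely than the observed one.
    return sum(p for p in (prob(x) for x in range(lo, hi + 1))
               if p <= p_obs * (1 + 1e-9))


# Rows: SIB, sequential boost. Columns: dysphagia, no dysphagia.
sib_yes, sib_no = 1, 22 - 1
seq_yes, seq_no = 16, 24 - 16

p_value = fisher_exact_two_sided(sib_yes, sib_no, seq_yes, seq_no)
print(f"SIB: {100 * sib_yes / 22:.0f}%  sequential: {100 * seq_yes / 24:.0f}%")
print(f"two-sided P < .001: {p_value < 0.001}")
```

Under this assumed test, the result agrees with the reported P < .001.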

Discussion

This is the first study to link contralateral constrictor dose to long-term dysphagia in patients treated with radiation for H&N cancer. Editing the boost volume off air cavities was associated with lower contralateral constrictor V60 and with less long-term dysphagia. This may indicate that optimizing plans to meet a contralateral constrictor constraint can reduce rates of long-term dysphagia.

The most useful clinical predictors are those that identify a patient at low risk for toxicity. These constraints are useful because they reassure physicians that treatments will have a favorable risk/benefit ratio while identifying plans that may need modification before starting treatment.

The contralateral constrictor outperformed the uninvolved pharynx in identifying patients at low risk for long-term dysphagia. This difference could not be overcome by decreasing the threshold of the pharynx constraint, as 17% of patients with dysphagia had a mean dose of < 40 Gy to the uninvolved pharynx, which was not statistically significant.

An advantage of contralateral constrictor is that it is independent of planning target volume (PTV) size. The uninvolved pharynx structure depends on the PTV contour, so it may obscure a connection between PTV size and dysphagia.

In the context of a clinical trial, only measuring dose to the uninvolved pharynx may allow more plans to meet constraints, but even in NRG trials, physicians have some control over target volumes. For example, NRG HN009, a national trial for patients with H&N cancer, recommends editing the CTV_7000 (clinical target volume treated to 70 Gy) off air cavities but does not define how much the volume should be cropped or specify protocol violations if the volume is not cropped.15 Furthermore, constraints used in clinical trials are often adopted for use outside the trial, where physicians have extensive control over target volumes.

The broad range of uninvolved pharynx volume relative to total constrictor volume confounds predictions using this variable. For example, according to the NRG constraint, a patient with an uninvolved pharynx mean dose of 44 Gy will have a low risk of dysphagia even if this structure is only 1% of the total constrictor. The contralateral constrictor is always about 50% of the total constrictor volume, which means that predictions using this structure will not be confounded by the same variation in volume size.
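The volume problem described above can be made concrete with a toy calculation. In the sketch below, the uninvolved pharynx is taken as the constrictor voxels that lie outside the lowest-dose PTV, following the definition given in the introduction. The voxel indexing, the function name, and the doses are hypothetical, and voxels are assumed to have equal volume.

```python
# Sketch only: structures are represented as voxel indices, with equal
# voxel volumes assumed. None of these values come from the study data.

def uninvolved_pharynx(constrictor_doses_gy, ptv_voxels):
    """Return (mean dose in Gy, percent of total constrictor volume)
    for the constrictor voxels lying outside the PTV."""
    outside = [dose for voxel, dose in constrictor_doses_gy.items()
               if voxel not in ptv_voxels]
    if not outside:
        raise ValueError("no constrictor voxels outside the PTV")
    mean_dose = sum(outside) / len(outside)
    percent_of_total = 100.0 * len(outside) / len(constrictor_doses_gy)
    return mean_dose, percent_of_total


# Hypothetical plan: 99 of 100 constrictor voxels sit inside the PTV at
# 70 Gy, and the single voxel outside it receives 44 Gy.
constrictor = {voxel: 70.0 for voxel in range(99)}
constrictor[99] = 44.0
ptv = set(range(99))

mean_dose, percent_of_total = uninvolved_pharynx(constrictor, ptv)
print(f"mean dose {mean_dose:.0f} Gy over {percent_of_total:.0f}% of the constrictor")
print(f"meets mean dose <= 45 Gy: {mean_dose <= 45.0}")
```

This made-up plan meets the ≤ 45 Gy constraint even though 99% of the constrictor receives 70 Gy, which is the situation the paragraph above warns about.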

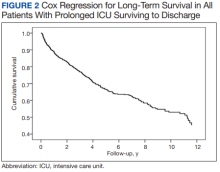

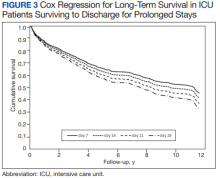

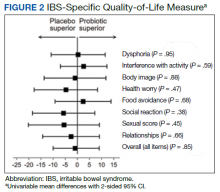

Figure 2 shows a representative patient who met the NRG uninvolved pharynx constraint but developed long-term dysphagia.

Pharyngoesophageal stricture is a common cause of dysphagia after IMRT for H&N cancer.16 Radiation has been shown to decrease pharyngeal function in patients with H&N cancer.17 Sparing one side of the pharynx may allow for better pharyngeal compliance throughout the length of the pharynx, possibly decreasing the rate of pharyngoesophageal stricture. Additionally, constraining the contralateral constrictor may preserve strength on this side, allowing it to compensate for weakness on the side of the primary cancer. An exercise sometimes used for dysphagia involves head rotation toward the affected side during swallowing. This technique has been shown to cause food to move to the unaffected side.18 Sparing the contralateral constrictor may help such techniques work better in patients with H&N cancer.

Few studies have commented specifically on dose to swallowing structures contralateral to the primary tumor. Two studies have proposed contralateral submandibular gland constraints for dysphagia (not xerostomia), but neither measured the dose to the contralateral constrictor muscle.9,10 Although the contralateral submandibular dose may correlate with dose to the constrictor on that side, the submandibular gland may have a less direct impact on swallowing than the constrictor muscle, and its limited dimensions may make constraints based on the gland less robust for cancers outside the oropharynx.

Another study reported improved quality of life in patients who were not treated with elective contralateral retropharyngeal radiation.19 Although it is likely that doses to the contralateral constrictor were lower in patients who did not receive elective radiation to this area, this study did not measure or constrain doses to the contralateral constrictors.

Limitations

This study is limited by its single-institution, retrospective design, its small sample size, and by all patients being male. The high correlation between air cavity editing and the use of SIB makes it impossible to assess the impact of each technique individually. Patients with contralateral constrictor V60 < 40% were less likely to have N2 disease, but N2 to N3 disease did not predict higher 1-year dysphagia, so the difference in N category cannot fully explain the difference in 1-year dysphagia. It is possible that unreported factors, such as CTV, may contribute significantly to swallowing function. Nevertheless, within the study population, contralateral constrictor dose was able to identify a group with a low rate of long-term dysphagia.

Conclusions

Contralateral constrictor dose is a promising predictor of late dysphagia for patients with H&N cancer treated with radiation with concurrent systemic therapy. Contralateral constrictor V60 < 40% was able to identify a group of patients with a low rate of 1-year dysphagia in this single-center retrospective study. The correlation between air cavity editing and contralateral constrictor V60 suggests that contralateral constrictor dose may depend partly on technique. Further studies are needed to see if the contralateral constrictor dose can be used to predict long-term dysphagia prospectively and in other patient populations.

1. Langendijk JA, Doornaert P, Verdonck-de Leeuw IM, et al. Impact of late treatment-related toxicity on quality of life among patients with head and neck cancer treated with radiotherapy. J Clin Oncol. 2008;26(22):3770-3776. doi:10.1200/JCO.2007.14.6647

2. Nguyen NP, Frank C, Moltz CC, et al. Impact of dysphagia on quality of life after treatment of head-and-neck cancer. Int J Radiat Oncol Biol Phys. 2005;61(3):772-778. doi:10.1016/j.ijrobp.2004.06.017

3. Ramaekers BLT, Joore MA, Grutters JPC, et al. The impact of late treatment-toxicity on generic health-related quality of life in head and neck cancer patients after radiotherapy. Oral Oncol. 2011;47(8):768-774. doi:10.1016/j.oraloncology.2011.05.012

4. Christianen MEMC, Schilstra C, Beetz I, et al. Predictive modelling for swallowing dysfunction after primary (chemo)radiation: results of a prospective observational study. Radiother Oncol. 2012;105(1):107-114. doi:10.1016/j.radonc.2011.08.009

5. Vlacich G, Spratt DE, Diaz R, et al. Dose to inferior pharyngeal constrictor predicts prolonged gastrostomy tube dependence with concurrent intensity-modulated radiation therapy and chemotherapy for locally-advanced head and neck cancer. Radiother Oncol. 2014;110(3):435-440. doi:10.1016/j.radonc.2013.12.007

6. Mogadas S, Busch CJ, Pflug C, et al. Influence of radiation dose to pharyngeal constrictor muscles on late dysphagia and quality of life in patients with locally advanced oropharyngeal carcinoma. Strahlenther Onkol. 2020;196(6):522-529. doi:10.1007/s00066-019-01572-0

7. Caglar HB, Tishler RB, Othus M, et al. Dose to larynx predicts for swallowing complications after intensity-modulated radiotherapy. Int J Radiat Oncol Biol Phys. 2008;72(4):1110-1118. doi:10.1016/j.ijrobp.2008.02.048

8. Schwartz DL, Hutcheson K, Barringer D, et al. Candidate dosimetric predictors of long-term swallowing dysfunction after oropharyngeal intensity-modulated radiotherapy. Int J Radiat Oncol Biol Phys. 2010;78(5):1356-1365. doi:10.1016/j.ijrobp.2009.10.002

9. Gensheimer MF, Nyflot M, Laramore GE, Laio JL, Parvathaneni U. Contribution of submandibular gland and swallowing structure sparing to post-radiation therapy peg dependence in oropharynx cancer patients treated with split-neck IMRT technique. Radiat Oncol. 2015;11(1):1-7. doi:10.1186/s13014-016-0726-3

10. Hedström J, Tuomi L, Finizia C, Olsson C. Identifying organs at risk for radiation-induced late dysphagia in head and neck cancer patients. Clin Transl Radiat Oncol. 2019;19:87-95. doi:10.1016/j.ctro.2019.08.005

11. Bhide SA, Gulliford S, Kazi R, et al. Correlation between dose to the pharyngeal constrictors and patient quality of life and late dysphagia following chemo-IMRT for head and neck cancer. Radiother Oncol. 2009;93(3):539-544. doi:10.1016/j.radonc.2009.09.017

12. Caudell JJ, Schaner PE, Desmond RA, Meredith RF, Spencer SA, Bonner JA. Dosimetric factors associated with long-term dysphagia after definitive radiotherapy for squamous cell carcinoma of the head and neck. Int J Radiat Oncol Biol Phys. 2010;76(2):403-409. doi:10.1016/j.ijrobp.2009.02.017

13. Levendag PC, Teguh DN, Voet P, et al. Dysphagia disorders in patients with cancer of the oropharynx are significantly affected by the radiation therapy dose to the superior and middle constrictor muscle: a dose-effect relationship. Radiother Oncol. 2007;85(1):64-73. doi:10.1016/j.radonc.2007.07.009

14. Eisbruch A, Schwartz M, Rasch C, et al. Dysphagia and aspiration after chemoradiotherapy for head-and-neck cancer: which anatomic structures are affected and can they be spared by IMRT? Int J Radiat Oncol Biol Phys. 2004;60(5):1425-1439. doi:10.1016/j.ijrobp.2004.05.050

15. Harari PM; NRG Oncology. Comparing high-dose cisplatin every three weeks to low-dose cisplatin weekly when combined with radiation for patients with advanced head and neck cancer. ClinicalTrials.gov identifier: NCT05050162. Updated November 25, 2022. Accessed December 7, 2022. https://clinicaltrials.gov/ct2/show/NCT05050162

16. Wang JJ, Goldsmith TA, Holman AS, Cianchetti M, Chan AW. Pharyngoesophageal stricture after treatment for head and neck cancer. Head Neck. 2011;34(7):967-973. doi:10.1002/hed.21842

17. Kendall KA, McKenzie SW, Leonard RJ, Jones CU. Timing of swallowing events after single-modality treatment of head and neck carcinoma with radiotherapy. Ann Otol Rhinol Laryngol. 2000;109(8, pt 1):767-775. doi:10.1177/000348940010900812

18. Ohmae Y, Ogura M, Kitahara S. Effects of head rotation on pharyngeal function during normal swallow. Ann Otol Rhinol Laryngol. 1998;107(4):344-348. doi:10.1177/000348949810700414

19. Spencer CR, Gay HA, Haughey BH, et al. Eliminating radiotherapy to the contralateral retropharyngeal and high level II lymph nodes in head and neck squamous cell carcinoma is safe and improves quality of life. Cancer. 2014;120(24):3994-4002. doi:10.1002/cncr.28938

Radiation therapy can cause long-term dysphagia that seriously affects quality of life for survivors of head and neck (H&N) cancer.1-3 Numerous studies have linked pharyngeal constrictor dose to long-term dysphagia, but conclusions about the dose distribution that can be safely tolerated have been inconsistent. For example, a group from the Netherlands found that the mean dose to the superior pharyngeal constrictor muscle and the supraglottic larynx were each predictive of dysphagia.4 A subsequent Vanderbilt study refuted these findings, reporting that these structures were not predictive but that dose to the inferior pharyngeal constrictor muscle was.5 Other studies have connected late dysphagia with dose to the middle pharyngeal constrictor muscle, total larynx, oral cavity, contralateral submandibular gland, contralateral parotid gland, or a combination of these structures.6-14 NRG Oncology trials commonly evaluate dose to the “uninvolved pharynx,” which is the total pharyngeal constrictor muscle volume minus the planning target volume for the lowest dose target volume. NRG H&N trials 3, 4, 5, 6, 8, and 9 all use uninvolved pharynx mean dose ≤ 45 Gy as a constraint to judge radiation plan quality.

Differences in methodology or patient population may explain the inconsistency of prior studies on dosimetric predictors of dysphagia, but it is possible that these studies did not evaluate the optimal metric for dysphagia. This study evaluates a novel organ at risk, the contralateral pharyngeal constrictor muscle, to determine whether dose to this structure is predictive of late swallowing function. The study also compares a constraint based on this structure to the NRG uninvolved pharynx constraint mentioned earlier.

Methods

This study is a retrospective review of patients treated at the Richard L. Roudebush Veterans Affairs (VA) Medical Center in Indianapolis, Indiana. Patients were identified by searching the VA Cancer Registry for patients treated for H&N squamous cell carcinoma between September 1, 2016, and August 30, 2019. Eligible sites included cancers of the nasopharynx, oropharynx, hypopharynx, larynx and oral cavity, as well as H&N cancer of an unknown primary site. Only patients treated with primary radiation with concurrent systemic therapy were included. Patients were excluded if they had prior surgery or radiation to the H&N.

The pharyngeal constrictor muscles were contoured per the techniques described by Bhide and colleagues.11 The contralateral constrictor was defined as the half of the constrictor volume contralateral to the primary site. For midline tumors, the side of the neck with a lower volume of lymph node metastases was judged to be the contralateral side.

One-year dysphagia was defined as having a gastronomy tube (G-tube) in place or an abnormal modified barium swallow (MBS) ≥ 12 months after the completion of radiation. At the study institution, MBS is not routinely done after therapy but is ordered if a patient or clinician has concerns about swallowing function. MBS was considered abnormal if there was laryngeal penetration that reached the level of the glottis or was not ejected from the larynx.

Results

The VA Cancer Registry identified 113 patients treated for H&N cancer during the study period. Of these, 55 patients met the inclusion criteria. No patients were lost to follow-up. The median follow-up was 29 months. The median age was 67 years (range, 41-83) (Table 1).

All patients were treated with intensity-modulated radiotherapy (IMRT). Patients treated with a sequential boost had an initial dose of 54 Gy and/or 50 Gy, followed by a boost to a total of 70 Gy at 2 Gy per fraction. Patients treated with a simultaneous integrated boost (SIB) technique received 69.96 Gy in 33 fractions, with elective volumes treated to 54.45 Gy in 33 fractions. Both patients with nasopharyngeal cancer were treated with SIB plans and had an intermediate dose volume of 59.4 Gy.

Systemic therapy was weekly cisplatin in 41 patients (75%) and cetuximab in 14 (25%). Twenty percent of patients receiving cisplatin switched to an alternative agent during treatment, most commonly carboplatin.

Forty-nine patients (89%) had a G-tube placed before starting radiation. G-tubes were in place for an interval of 0 to 47 months (mean, 8.6); 12 (22%) had a G-tube > 12 months. After completion of radiation, 18 patients (33%) had an abnormal MBS. These were done 1 to 50 months (mean, 14.8) after completion of radiation. Abnormal MBS occurred ≥ 12 months after radiation in 9 patients, 5 of whom had their G-tube in place for less than a year.

Forty-six patients (84%) survived more than 1 year and could be evaluated for late swallowing function. One-year dysphagia was seen in 17 (37%) of these patients. Recurrence was seen in 20 patients (36%), with locoregional recurrence in 12 (60%) of these cases. Recurrence occurred at a range of 0 to 15 months (mean, 5.6). Neither recurrence (P = .69) nor locoregional recurrence (P = .11) was associated with increased 1-year dysphagia.

In patients who could be evaluated for long-term swallowing function, contralateral constrictor V60 ranged from 0% to 100% (median, 51%). V60 was < 40% in 18 patients (39%). With V60 < 40%, there was a 6% rate of 1-year dysphagia compared with 57% for V60 ≥ 40% (P < .001).

Patients with contralateral constrictor V60 < 40 and V60 ≥ 40 both had a mean age of 65 years. χ2 analysis did not show a difference in T stage or systemic treatment but did show that patients with V60 < 40% were more likely to have N1 disease (P = .01), and less likely to have N2 disease (P = .01) compared with patients with V60 ≥ 40%. The difference in 1-year dysphagia between N0 to N1 patients (27%) and N2 to N3 patients (46%) was not statistically significant (P = .19).

In patients who could be evaluated for long-term swallowing function, the uninvolved pharynx volume median of the total constrictor volume was 32% (range, < 1%-62%). The uninvolved pharynx mean dose ranged from 28 to 68 Gy (median, 45). When the uninvolved pharynx mean dose was < 45 Gy, 1-year dysphagia was 22% compared with 52% with a dose ≥ 45 Gy (P = .03).

Air cavity editing was performed in 27 patients (49%). One-year survival was 93% with air cavity editing, and 75% without, which was not statistically significant. Locoregional recurrence occurred in 3 patients (11%) with air cavity editing, and 9 (32%) without, which was not statistically significant. In patients surviving at least 1 year, contralateral constrictor V60 averaged 33% with editing and 62% without editing (P < .001). One-year dysphagia was 12% with air cavity editing and 67% without editing (P < .001).

An SIB technique was done in 26 patients (47%). One-year survival was 85% (n = 22) with SIB and 83% (n = 24) with sequential boost, which was not statistically significant. Locoregional recurrence occurred in 19% with SIB, and 32% with sequential boost, which was not statistically significant. For SIB patients alive at 1 year, the median contralateral V60 was 28%, compared with 66% for patients treated with sequential technique. Seventeen patients (77%) with SIB had V60 < 40%. Nineteen (86%) of SIB plans also had air cavity editing. One patient (5%) with SIB had dysphagia at 1 year, compared with 16 (67%) sequential patients (P < .001).

Discussion

This is the first study to link contralateral constrictor dose to long-term dysphagia in patients treated with radiation for H&N cancer. Editing the boost volume off air cavities was associated with lower contralateral constrictor V60 and with less long-term dysphagia. This may indicate that optimizing plans to meet a contralateral constrictor constraint can reduce rates of long-term dysphagia.

The most useful clinical predictors are those that identify a patient at low risk for toxicity. These constraints are useful because they reassure physicians that treatments will have a favorable risk/benefit ratio while identifying plans that may need modification before starting treatment.

The contralateral constrictor outperformed the uninvolved pharynx in identifying patients at low risk for long-term dysphagia. This difference could not be overcome by decreasing the threshold of the pharynx constraint, as 17% of patients with dysphagia had a mean dose of < 40 Gy to the uninvolved pharynx, which was not statistically significant.

An advantage of contralateral constrictor is that it is independent of planning target volume (PTV) size. The uninvolved pharynx structure depends on the PTV contour, so it may obscure a connection between PTV size and dysphagia.

In the context of a clinical trial, only measuring dose to the uninvolved pharynx may allow more plans to meet constraints, but even in NRG trials, physicians have some control over target volumes. For example, NRG HN009, a national trial for patients with H&N cancer, recommends editing the CTV_7000 (clinical target volume treated to 70 Gy) off air cavities but does not define how much the volume should be cropped or specify protocol violations if the volume is not cropped.15 Furthermore, constraints used in clinical trials are often adopted for use outside the trial, where physicians have extensive control over target volumes.

The broad range of uninvolved pharynx volume relative to total constrictor volume confounds predictions using this variable. For example, according to the NRG constraint, a patient with an uninvolved pharynx mean dose of 44 Gy will have a low risk of dysphagia even if this structure is only 1% of the total constrictor. The contralateral constrictor is always about 50% of the total constrictor volume, which means that predictions using this structure will not be confounded by the same variation in volume size.

Figure 2 shows a representative patient who met the NRG uninvolved pharynx constraint but developed long-term dysphagia.

Pharyngoesophageal stricture is a common cause of dysphagia after IMRT for H&N cancer.16 Radiation has been shown to decrease pharyngeal function in patients with H&N cancer.17 Sparing one side of the pharynx may allow for better pharyngeal compliance throughout the length of the pharynx, possibly decreasing the rate of pharyngoesophageal stricture. Additionally, constraining the contralateral constrictor may preserve strength on this side, allowing it to compensate for weakness on the side of the primary cancer. An exercise sometimes used for dysphagia involves head rotation toward the affected side during swallowing. This technique has been shown to cause food to move to the unaffected side.18 Sparing the contralateral constrictor may help such techniques work better in patients with H&N cancer.

Few studies have commented specifically on dose to swallowing structures contralateral to the primary tumor. Two studies have proposed contralateral submandibular gland constraints for dysphagia (not xerostomia), but neither measured the dose to the contralateral constrictor muscle.9,10 Although the contralateral submandibular dose may correlate with dose to the constrictor on that side, the submandibular gland may have a less direct impact on swallowing than the constrictor muscle, and its limited dimensions may make constraints based on the gland less robust for cancers outside the oropharynx.

Another study reported improved quality of life in patients who were not treated with elective contralateral retropharyngeal radiation.19 Although it is likely that doses to the contralateral constrictor were lower in patients who did not receive elective radiation to this area, this study did not measure or constrain doses to the contralateral constrictors.

Limitations

This study is limited by its single institution, retrospective design, small sample size, and by all patients being male. The high correlation between air cavity editing and the use of SIB makes it impossible to assess the impact of each technique individually. Patients with contralateral constrictor V60 < 40% were less likely to have N2 disease, but N2 to N3 disease did not predict higher 1-year dysphagia, so the difference in N-category cannot fully explain the difference in 1-year dysphagia. It is possible that unreported factors, such as CTV, may contribute significantly to swallowing function. Nevertheless, within the study population, contralateral constrictor dose was able to identify a group with a low rate of long-term dysphagia.

Conclusions

Contralateral constrictor dose is a promising predictor of late dysphagia for patients with H&N cancer treated with radiation with concurrent systemic therapy. Contralateral constrictor V60 < 40% was able to identify a group of patients with a low rate of 1-year dysphagia in this single-center retrospective study. The correlation between air cavity editing and contralateral constrictor V60 suggests that contralateral constrictor dose may depend partly on technique. Further studies are needed to see if the contralateral constrictor dose can be used to predict long-term dysphagia prospectively and in other patient populations.

1. Langendijk JA, Doornaert P, Verdonck-de Leeuw IM, et al. Impact of late treatment-related toxicity on quality of life among patients with head and neck cancer treated with radiotherapy. J Clin Oncol. 2008;26(22):3770-3776. doi:10.1200/JCO.2007.14.6647

2. Nguyen NP, Frank C, Moltz CC, et al. Impact of dysphagia on quality of life after treatment of head-and-neck cancer. Int J Radiat Oncol Biol Phys. 2005;61(3):772-778. doi:10.1016/j.ijrobp.2004.06.017

3. Ramaekers BLT, Joore MA, Grutters JPC, et al. The impact of late treatment-toxicity on generic health-related quality of life in head and neck cancer patients after radiotherapy. Oral Oncol. 2011;47(8):768-774. doi:10.1016/j.oraloncology.2011.05.012

4. Christianen MEMC, Schilstra C, Beetz I, et al. Predictive modelling for swallowing dysfunction after primary (chemo)radiation: results of a prospective observational study. Radiother Oncol. 2012;105(1):107-114. doi:10.1016/j.radonc.2011.08.009

5. Vlachich G, Spratt DE, Diaz R, et al. Dose to inferior pharyngeal constrictor predicts prolonged gastrostomy tube dependence with concurrent intensity-modulated radiation therapy and chemotherapy for locally-advanced head and neck cancer. Radiother Oncol. 2014;110(3):435-440. doi:10.1016/j.radonc.2013.12.007

6. Mogadas S, Busch CJ, Pflug C, et al. Influence of radiation dose to pharyngeal constrictor muscles on late dysphagia and quality of life in patients with locally advanced oropharyngeal carcinoma. Strahlenther Onkol. 2020;196(6):522-529. doi:10.1007/s00066-019-01572-0

7. Caglar HB, Tishler RB, Othus M, et al. Dose to larynx predicts for swallowing complications after intensity-modulated radiotherapy. Int J Radiat Oncol Biol Phys. 2008;72(4):1110-1118. doi:10.1016/j.ijrobp.2008.02.048

8. Schwartz DL, Hutcheson K, Barringer D, et al. Candidate dosimetric predictors of long-term swallowing dysfunction after oropharyngeal intensity-modulated radiotherapy. Int J Radiat Oncol Biol Phys. 2010;78(5):1356-1365. doi:10.1016/j.ijrobp.2009.10.002

9. Gensheimer MF, Nyflot M, Laramore GE, Laio JL, Parvathaneni U. Contribution of submandibular gland and swallowing structure sparing to post-radiation therapy peg dependence in oropharynx cancer patients treated with split-neck IMRT technique. Radiat Oncol. 2015;11(1):1-7. doi:10.1186/s13014-016-0726-3

10. Hedström J, Tuomi L, Finizia C, Olsson C. Identifying organs at risk for radiation-induced late dysphagia in head and neck cancer patients. Clin Transl Radiat Oncol. 2019;19:87-95. doi:10.1016/j.ctro.2019.08.005

11. Bhide SA, Gulliford S, Kazi R, et al. Correlation between dose to the pharyngeal constrictors and patient quality of life and late dysphagia following chemo-IMRT for head and neck cancer. Radiother Oncol. 2009;93(3):539-544. doi:10.1016/j.radonc.2009.09.017

12. Caudell JJ, Schaner PE, Desmond RA, Meredith RF, Spencer SA, Bonner JA. Dosimetric factors associated with long-term dysphagia after definitive radiotherapy for squamous cell carcinoma of the head and neck. Int J Radiat Oncol Biol Phys. 2010;76(2):403-409. doi:10.1016/j.ijrobp.2009.02.017

13. Levendag PC, Teguh DN, Voet P, et al. Dysphagia disorders in patients with cancer of the oropharynx are significantly affected by the radiation therapy dose to the superior and middle constrictor muscle: a dose-effect relationship. Radiother Oncol. 2007;85(1):64-73. doi:10.1016/j.radonc.2007.07.009

14. Eisbruch A, Schwartz M, Rasch C, et al. Dysphagia and aspiration after chemoradiotherapy for head-and-neck cancer: which anatomic structures are affected and can they be spared by IMRT? Int J Radiat Oncol Biol Phys. 2004;60(5):1425-1439. doi:10.1016/j.ijrobp.2004.05.050

15. Harari PM; NRG Oncology. Comparing high-dose cisplatin every three weeks to low-dose cisplatin weekly when combined with radiation for patients with advanced head and neck cancer. ClinicalTrials.gov identifier: NCT05050162. Updated November 25, 2022. Accessed December 7, 2022. https://clinicaltrials.gov/ct2/show/NCT05050162

16. Wang JJ, Goldsmith TA, Holman AS, Cianchetti M, Chan AW. Pharyngoesophageal stricture after treatment for head and neck cancer. Head Neck. 2011;34(7):967-973. doi:10.1002/hed.21842

17. Kendall KA, McKenzie SW, Leonard RJ, Jones CU. Timing of swallowing events after single-modality treatment of head and neck carcinoma with radiotherapy. Ann Otol Rhinol Laryngol. 2000;109(8, pt 1):767-775. doi:10.1177/000348940010900812

18. Ohmae Y, Ogura M, Kitahara S. Effects of head rotation on pharyngeal function during normal swallow. Ann Otol Rhinol Laryngol. 1998;107(4):344-348. doi:10.1177/000348949810700414

19. Spencer CR, Gay HA, Haughey BH, et al. Eliminating radiotherapy to the contralateral retropharyngeal and high level II lymph nodes in head and neck squamous cell carcinoma is safe and improves quality of life. Cancer. 2014;120(24):3994-4002. doi:10.1002/cncr.28938

New Razor Technology Improves Appearance and Quality of Life in Men With Pseudofolliculitis Barbae

Pseudofolliculitis barbae (PFB), also known as razor bumps or shaving bumps,1 is a skin condition that consists of papules resulting from ingrown hairs.2 In more severe cases, papules become pustules, then abscesses, which can cause scarring.1,2 The condition can be distressing for patients, with considerable negative impact on their daily lives.3 The condition also is associated with shaving-related stinging, burning, pruritus, and cuts on the skin.4

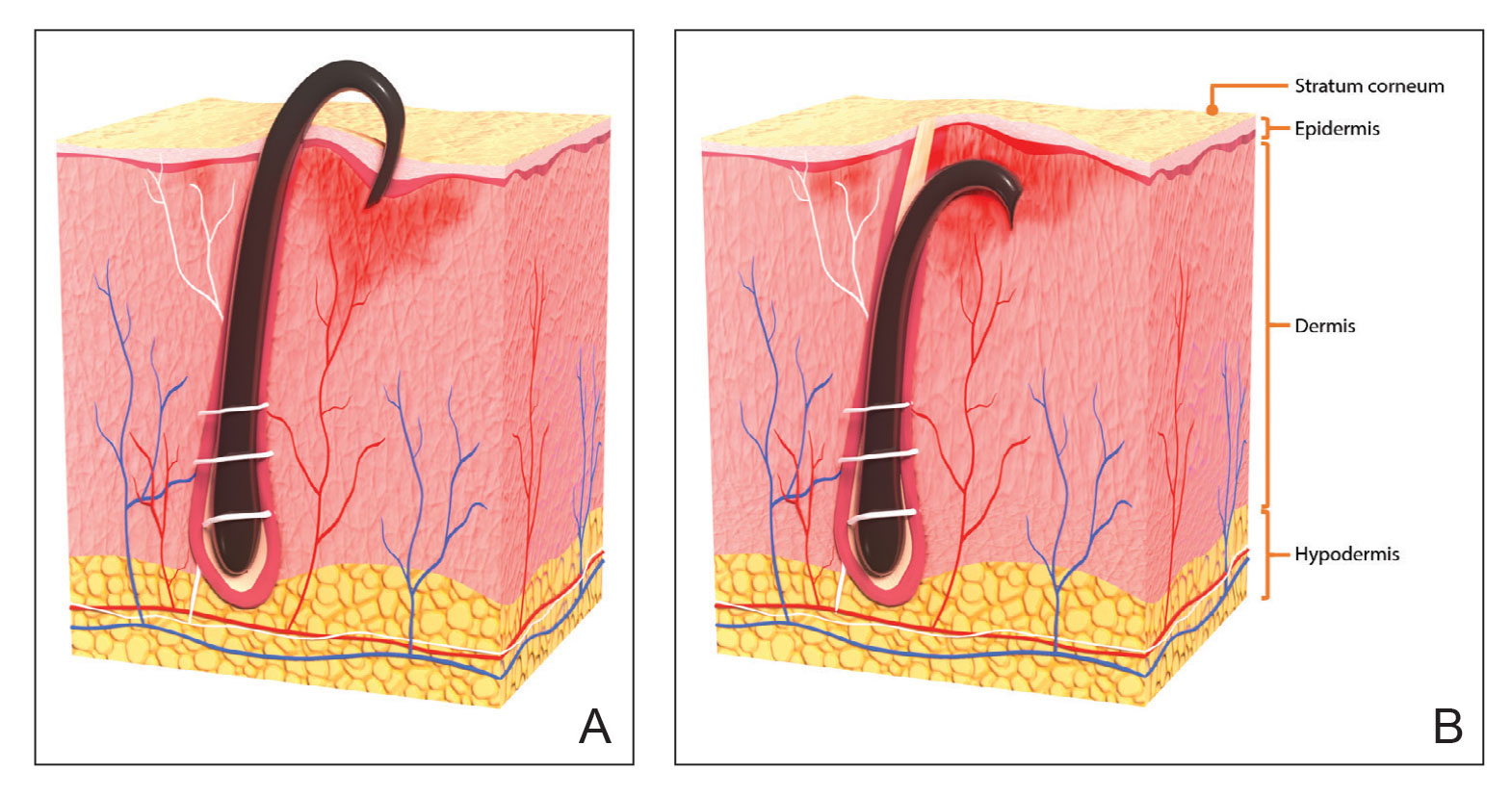

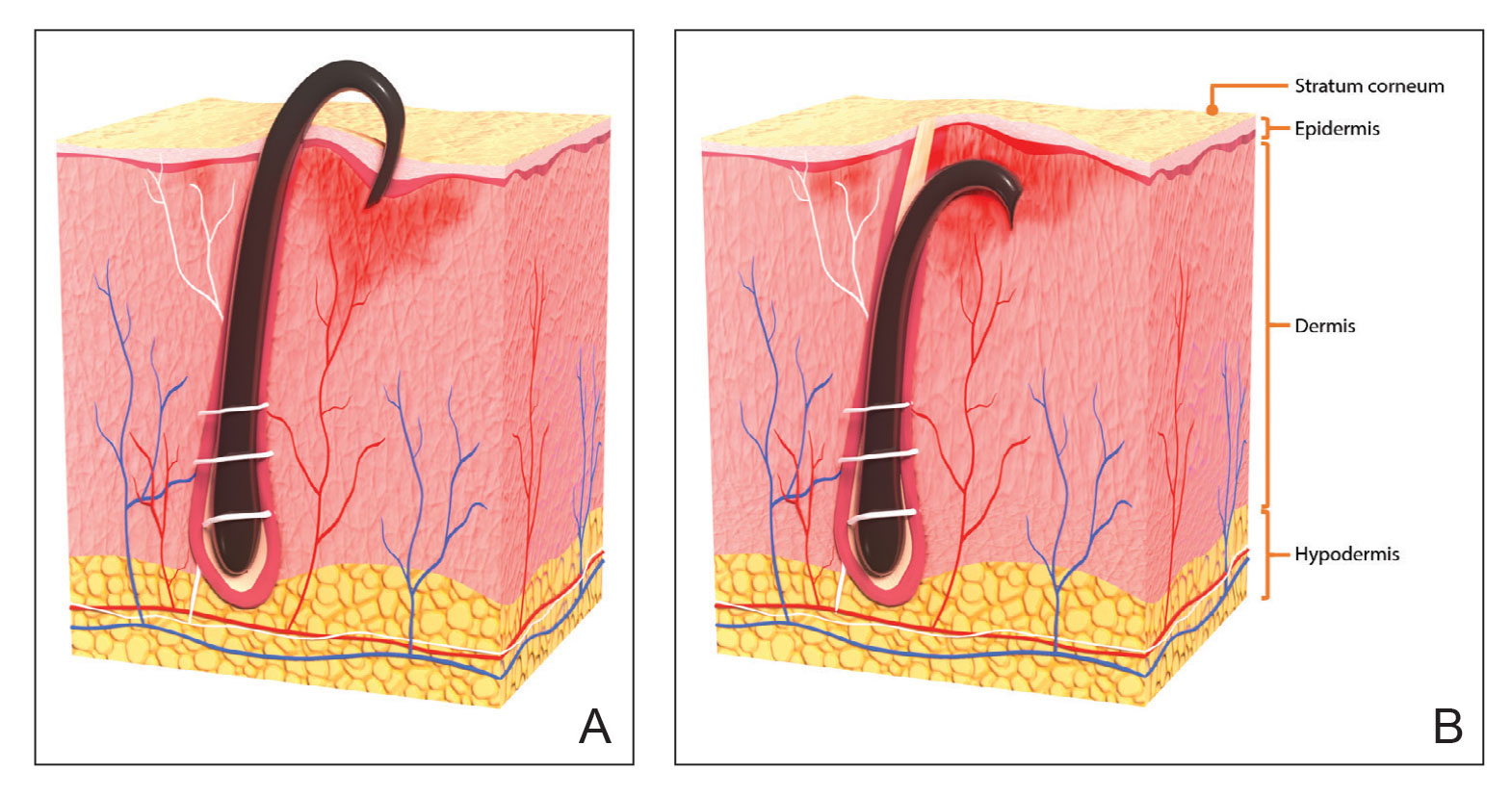

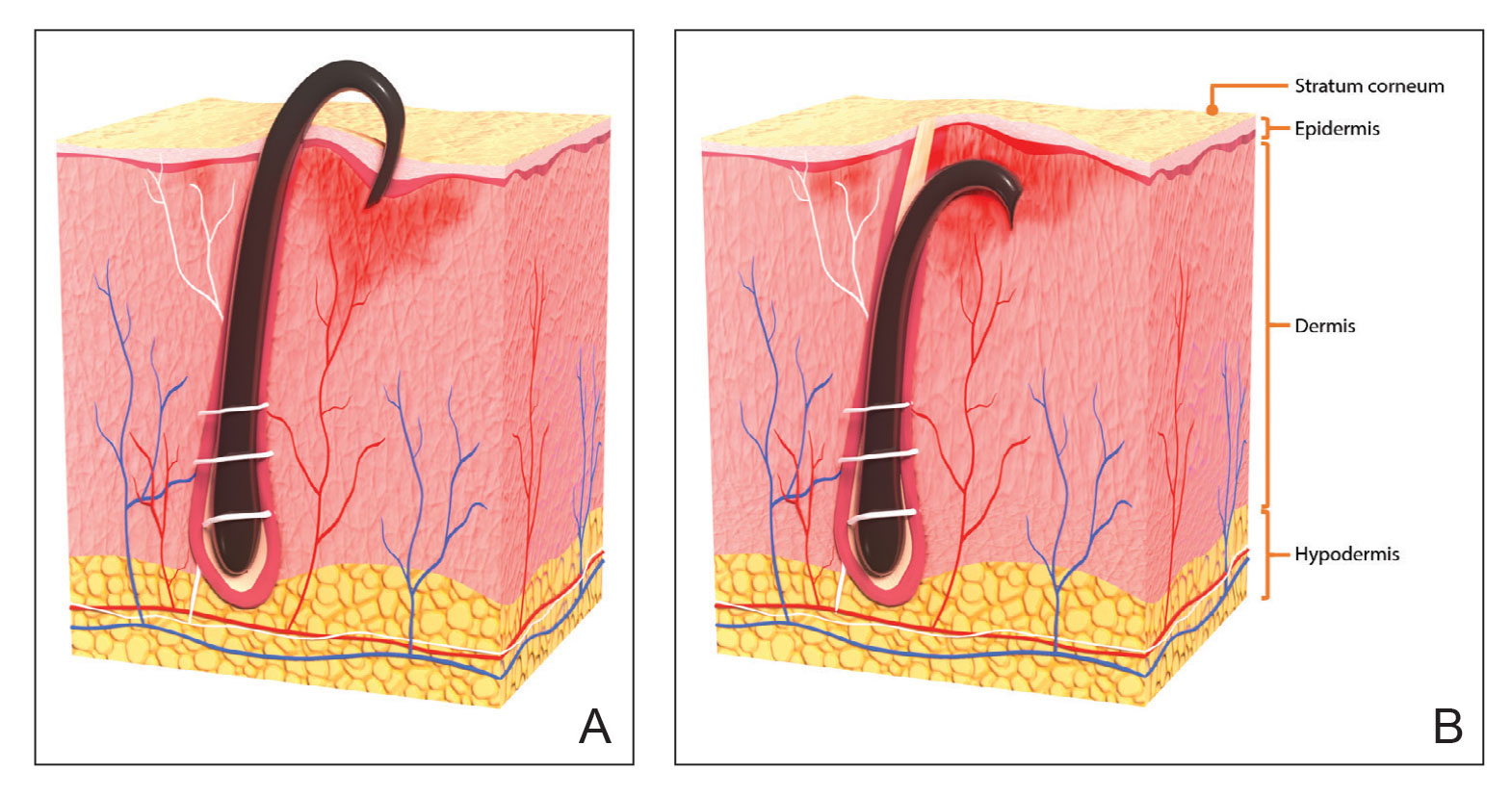

Pseudofolliculitis barbae is most common in men of African descent due to the curved nature of the hair follicle,2,5,6 with an estimated prevalence in this population of 45% to 83%,1,6 but it can affect men of other ethnicities.7 A genetic polymorphism in a gene encoding a keratin specific to the hair follicle also has been found to predispose some individuals to PFB.5 When hair from a curved or destabilized hair follicle is cut to form a sharp tip, it is susceptible to extrafollicular and/or transfollicular penetration,5,6,8 as illustrated in Figure 1.

With extrafollicular or transfollicular penetration, the hair shaft re-enters or retracts into the dermis, triggering an inflammatory response that may be exacerbated by subsequent shaving.2 Few studies have been published that aim to identify potential shaving solutions for individuals with PFB who elect to or need to continue shaving.

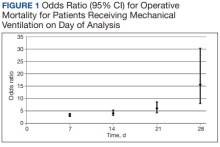

A new razor technology comprising 2 blades separated by a bridge feature has been designed specifically for men with razor bumps (SkinGuard [Procter & Gamble]). The SkinGuard razor redistributes shaving pressure so that there is less force from the blades on the skin and inflamed lesions than without the bridge, as seen in Figure 2. The razor has been designed to protect the skin from the blades, thereby minimizing the occurrence of new lesions and allowing existing lesions to heal.

![Test razor bridge feature (SkinGuard [Procter & Gamble]) minimizes the force of the razor blades on the skin. Copyright 2022 The Procter & Gamble Company.](https://cdn.mdedge.com/files/s3fs-public/Moran_2.jpg)

The primary purpose of this study was to assess the appearance of males with razor bumps and shaving irritation when using the new razor technology in a regular shaving routine. The secondary objective was to measure satisfaction with the shaving experience when using the new razor by assessing itching, burning, and stinging with the participant global severity assessment (PGSA) and by measuring the impact on quality of life (QOL).

Methods

Participants—Eligible participants were male, aged 20 to 60 years, and had clinically diagnosed PFB as well as symptoms of skin irritation from shaving. Participants were recruited from a dermatology clinic and via institutional review board–approved advertising.

Those eligible for inclusion in the study had a shaving routine that comprised shaving at least 3 times a week using a wet-shave, blade-razor technique accompanied by only a shave gel or foam. In addition, eligible participants had mild to moderate symptoms of skin irritation (a minimum of 10 razor bumps) from shaving based on investigator global severity assessment (IGSA) rating scales and were willing to shave at least 5 times a week during the study period. Participants could continue certain topical and systemic interventions for their skin.

Participants were excluded from the study if they had an underlying inflammatory disease that could manifest with a skin rash or were using any of these medications: topical benzoyl peroxide, topical clindamycin, topical retinoids, or oral antibiotics.

Study Design—A prospective, open-label study was conducted over a period of 12 weeks at a single site in the United States. Investigators instructed participants to shave 5 or more times per week with the test razor and to keep a daily shaving journal to track the number of shaves and compliance.

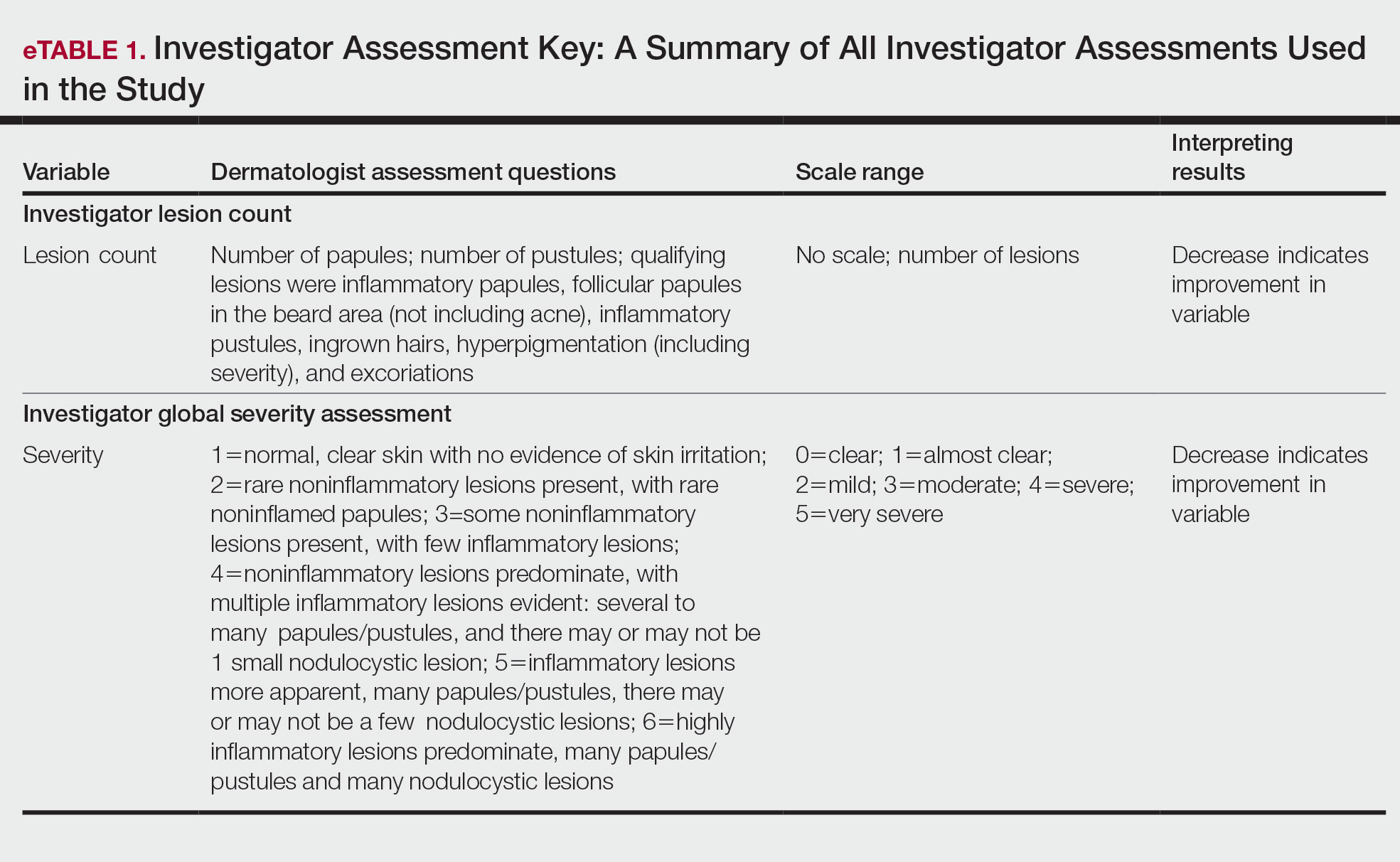

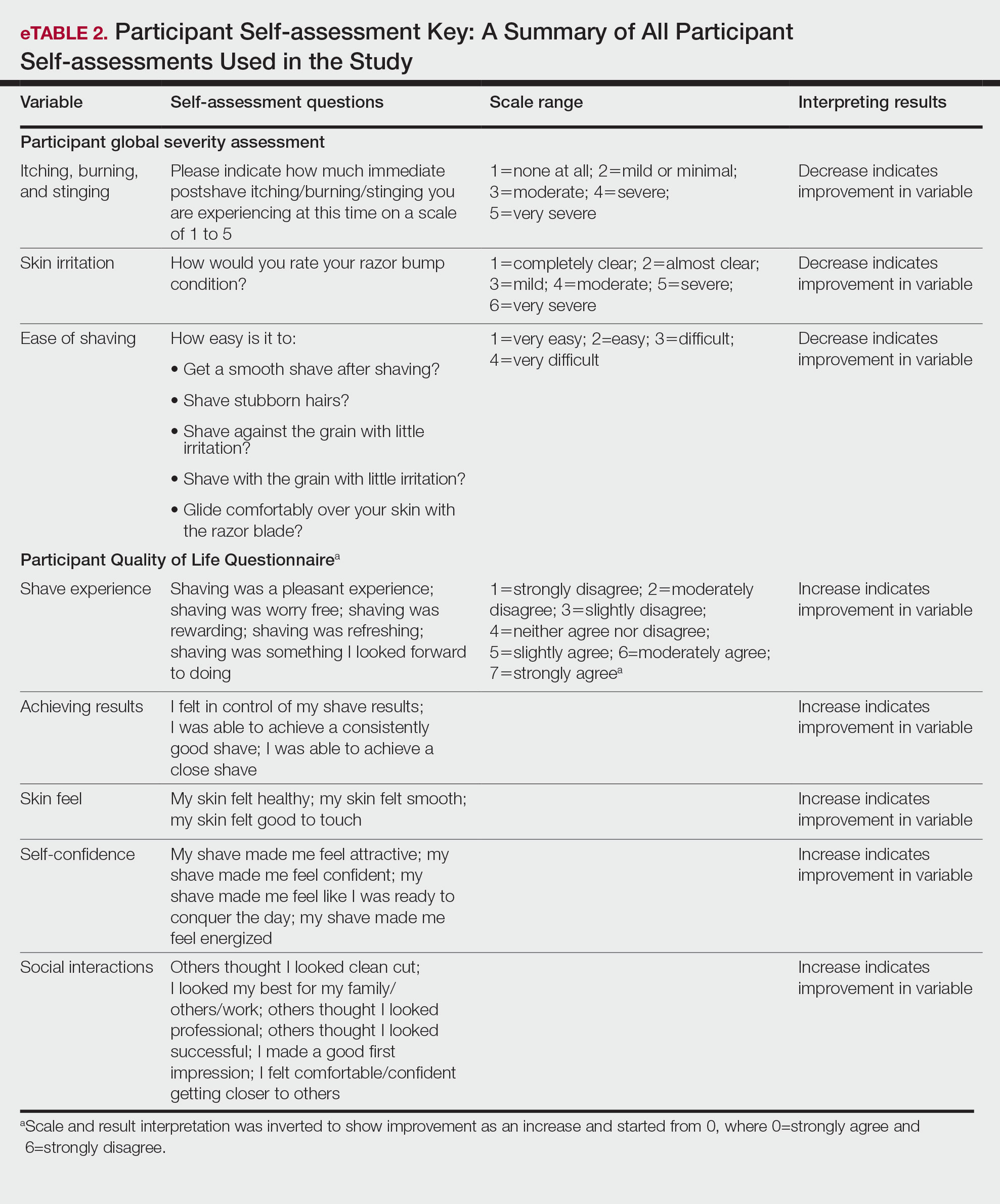

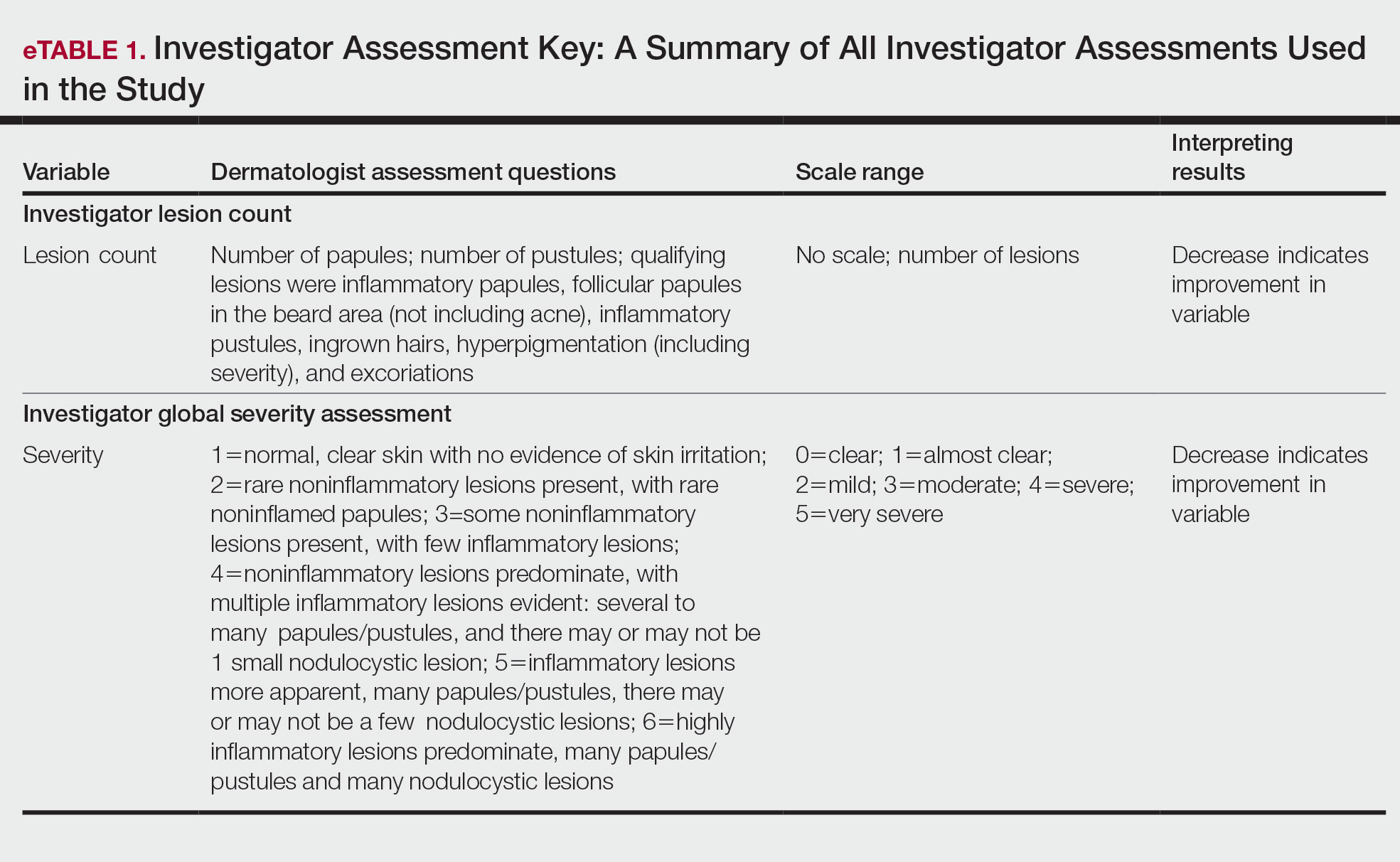

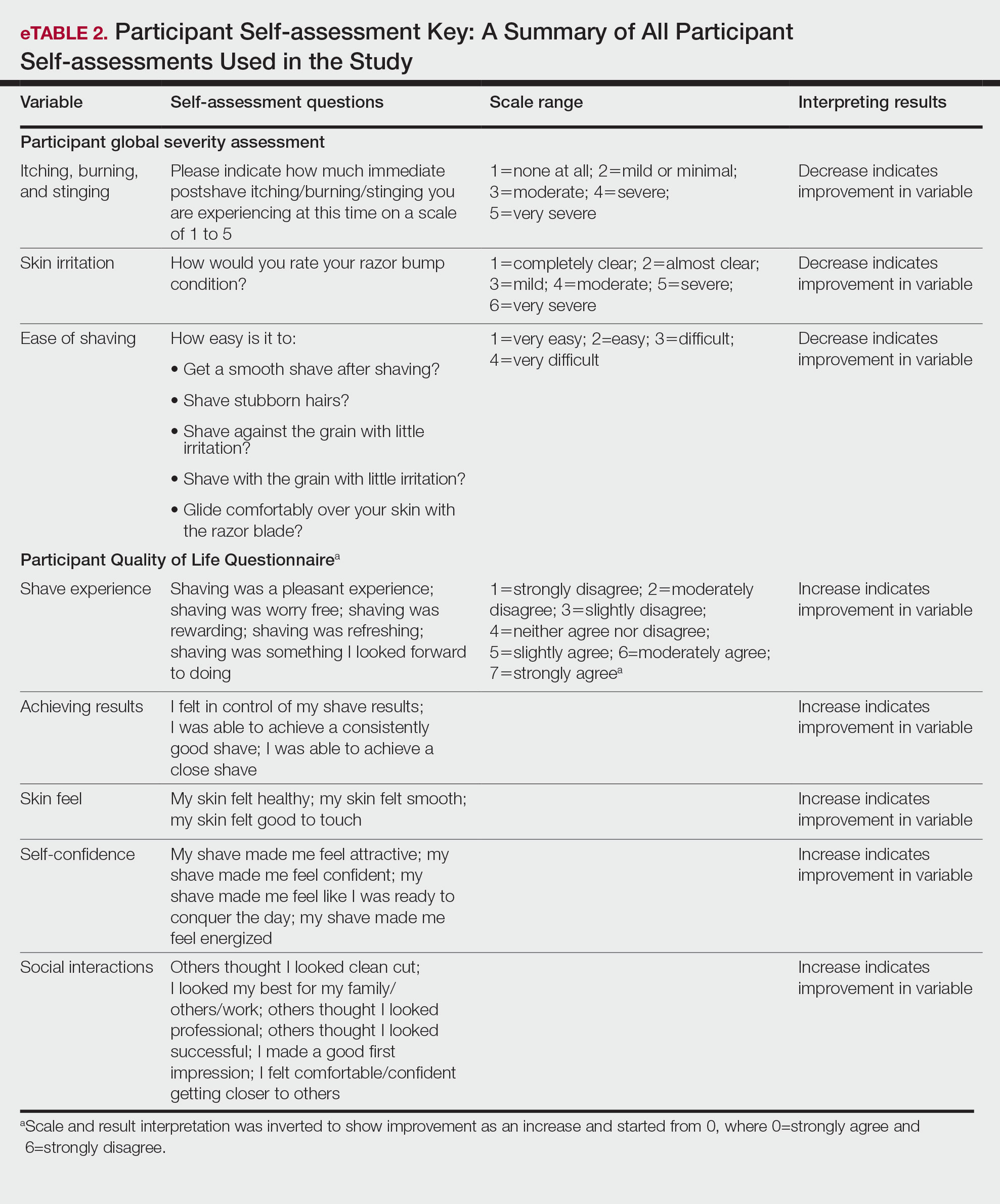

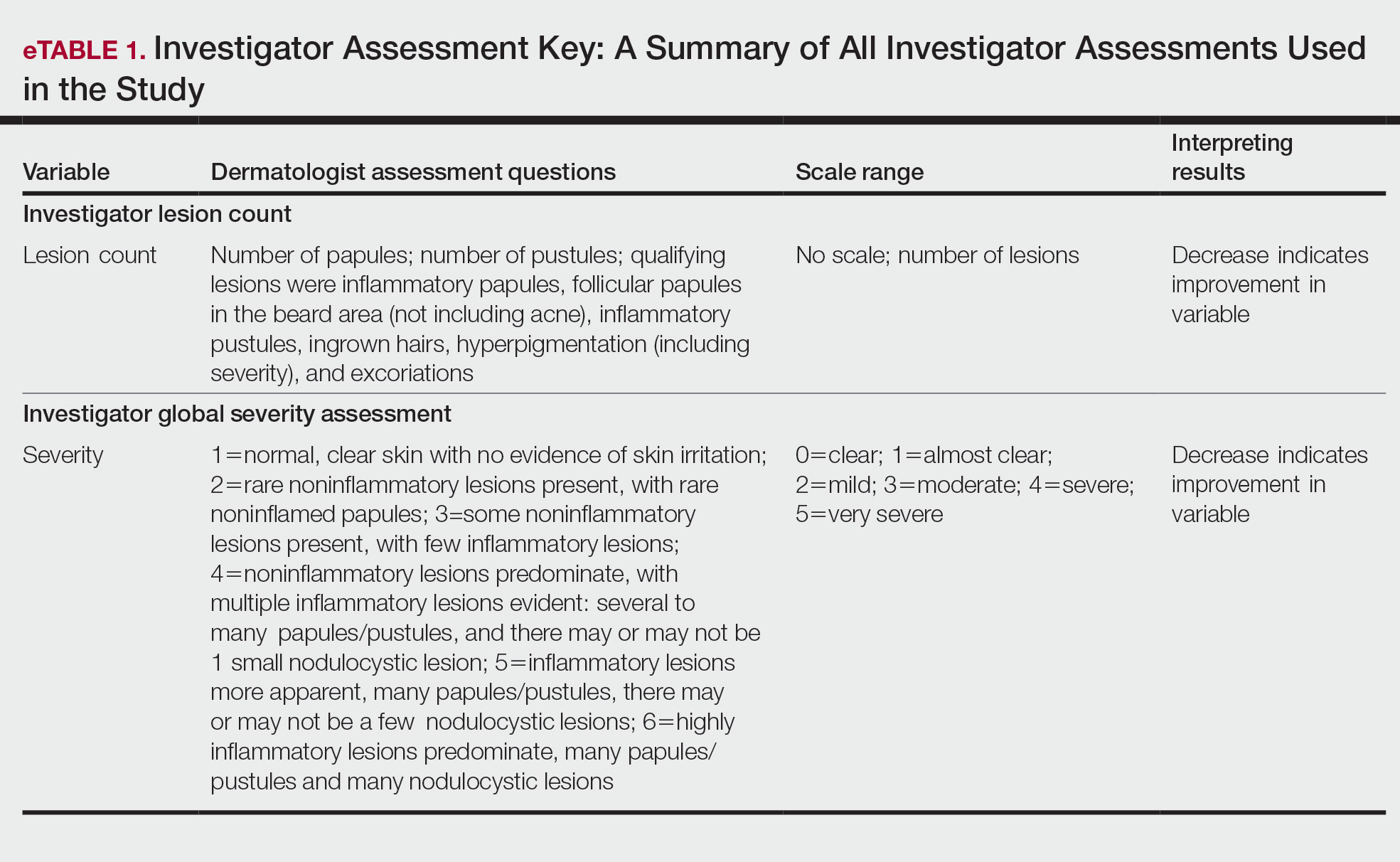

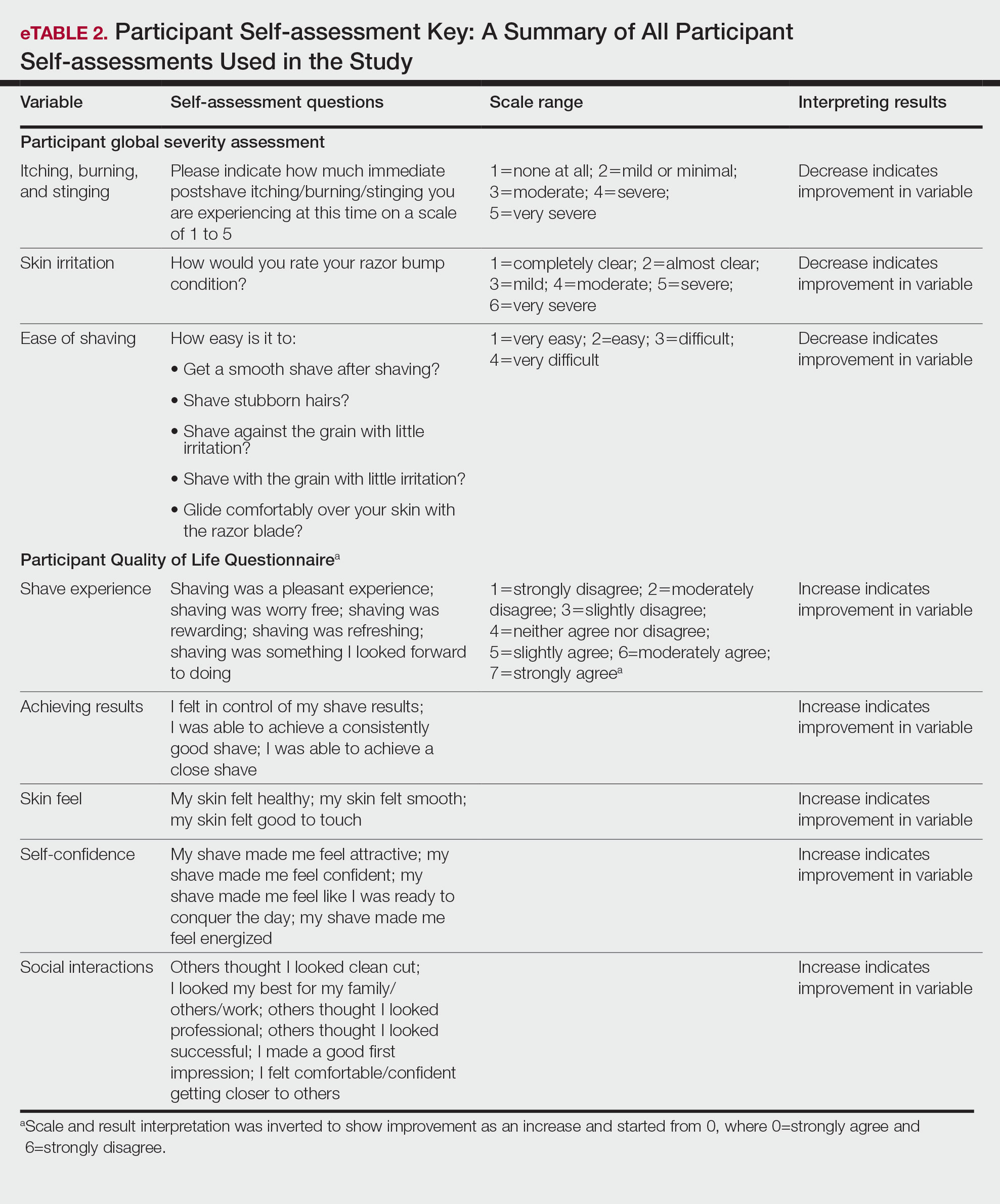

Participants were evaluated at the baseline screening visit, then at 4, 8, and 12 weeks. Evaluations included an investigator lesion count, the IGSA, and the PGSA. The PGSA was used to evaluate subjective clinical measurements (ie, indicate how much postshave burning/itching/stinging the participant was experiencing). The impact of shaving on daily life was evaluated at the baseline screening visit and at 12 weeks with the Participant Quality of Life Questionnaire, which comprises 22 QOL statements. eTable 1 summarizes the investigator assessments used in the study, and eTable 2 summarizes the participant self-assessments. Both tables include the scale details and results interpretation for each assessment.

The study was approved by the local institutional review board, and all participants provided written informed consent in accordance with Title 21 of the Code of Federal Regulations, Part 50.

Study Visits—At the baseline screening visit, participants provided written informed consent and completed a prestudy shave questionnaire concerning shaving preparations, techniques, and opinions. Participants also provided a medical history, including prior and concomitant medications, and were evaluated using the inclusion/exclusion criteria. Investigators explained adverse event reporting to the participants. Participants were provided with an adequate supply of test razors for the 12-week period.

Data Analysis—Means and SDs were calculated for the study measures assessed at each visit. Analyses were performed evaluating change from baseline in repeated-measures analysis of variance models. These models were adjusted for baseline levels of the outcome measure and visit number. The magnitude of change from baseline was evaluated against a null hypothesis of 0% change. This longitudinal model adjusted for any potential differing baseline levels among participants. Statistical significance was defined as P<.05. SAS version 9.4 (SAS Institute Inc) was used for all analyses.
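As an illustration of the "change from baseline against a null hypothesis of 0% change" idea, the sketch below computes per-participant percentage change and a one-sample t statistic. This is a deliberately simplified stand-in and not the repeated-measures analysis of variance model that was fitted in SAS for this study. The lesion counts are made-up numbers for demonstration only, and the function names are hypothetical.

```python
import math
from statistics import mean, stdev


def percent_change_from_baseline(baseline, follow_up):
    """Per-participant percentage change; negative values mean a reduction."""
    return [100.0 * (f - b) / b for b, f in zip(baseline, follow_up)]


def one_sample_t(values, null_value=0.0):
    """t statistic for the mean of `values` against `null_value`.

    Simplification: ignores the baseline and visit adjustments
    described in the text.
    """
    n = len(values)
    return (mean(values) - null_value) / (stdev(values) / math.sqrt(n))


# Hypothetical papule counts for 5 participants (not study data).
baseline_counts = [20, 15, 30, 12, 25]
week12_counts = [8, 6, 15, 6, 10]

changes = percent_change_from_baseline(baseline_counts, week12_counts)
mean_change = mean(changes)       # mean percentage change from baseline
t_statistic = one_sample_t(changes)  # compared with a t distribution, n - 1 df
```

A P value would then come from comparing `t_statistic` with a t distribution on n - 1 degrees of freedom. A model that adjusts for baseline level and visit number, as used in the study, would generally give different estimates.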

Results

In total, 21 individuals were enrolled, and 20 completed the study. One participant was removed after he was found to have a history of sarcoidosis, making him ineligible for the study. Participants who completed the study were non-Hispanic Black (n=10); non-Hispanic White (n=8); Asian (n=1); or White, American Indian (n=1). All participants adhered to the protocol and reported shaving at least 5 times a week for 12 weeks using the test razor. No study-related adverse events were reported.

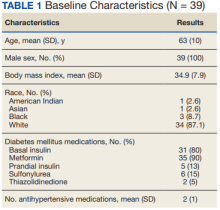

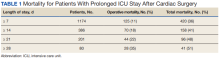

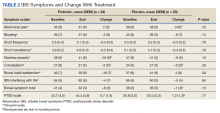

Papules and Pustules—Over the course of the 12-week study, the papule count decreased significantly from baseline. Results from the investigator lesion count (see eTable 1 for key) indicated that by week 12—adjusted for number of papules at baseline—the mean percentage reduction was estimated to be 59.6% (P<.0001). A significant decrease in papule count also was observed between the baseline visit and week 8 (57.2%; P<.0001). A nonsignificant decrease was observed at week 4 (18.9%; P=.17). Only 3 participants presented with pustules at baseline, and the pustule count remained low over the course of the study. No significant change was noted at week 12 vs baseline (P=.98). Notably, there was no increase in pustule count at the end of the study compared with baseline (Table 1).

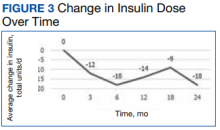

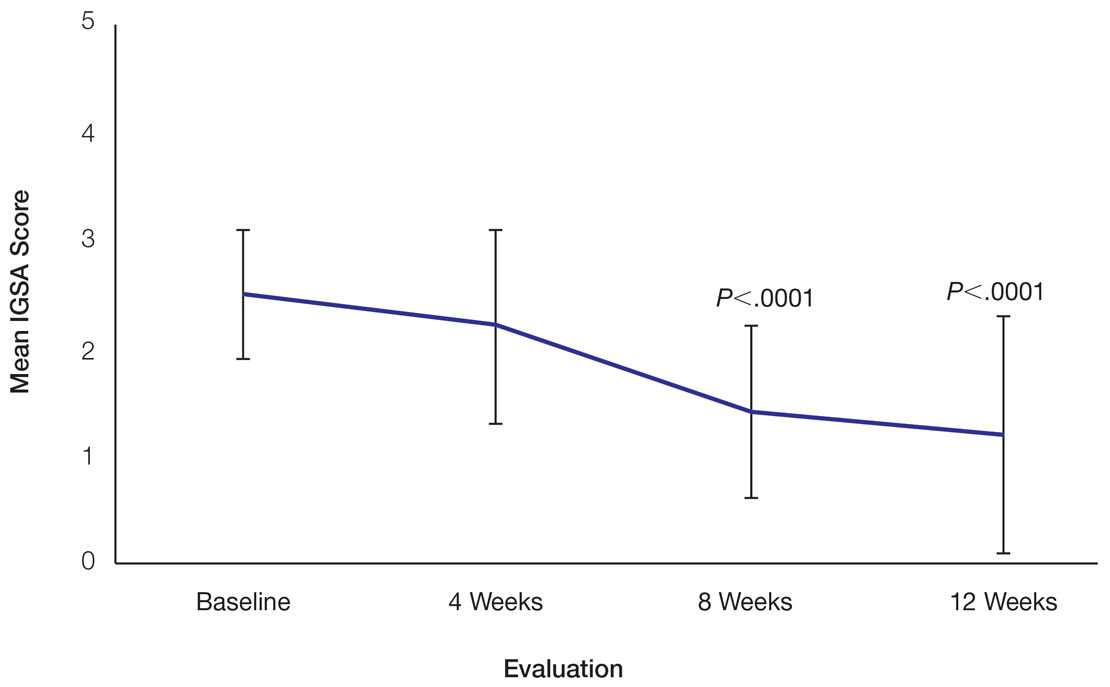

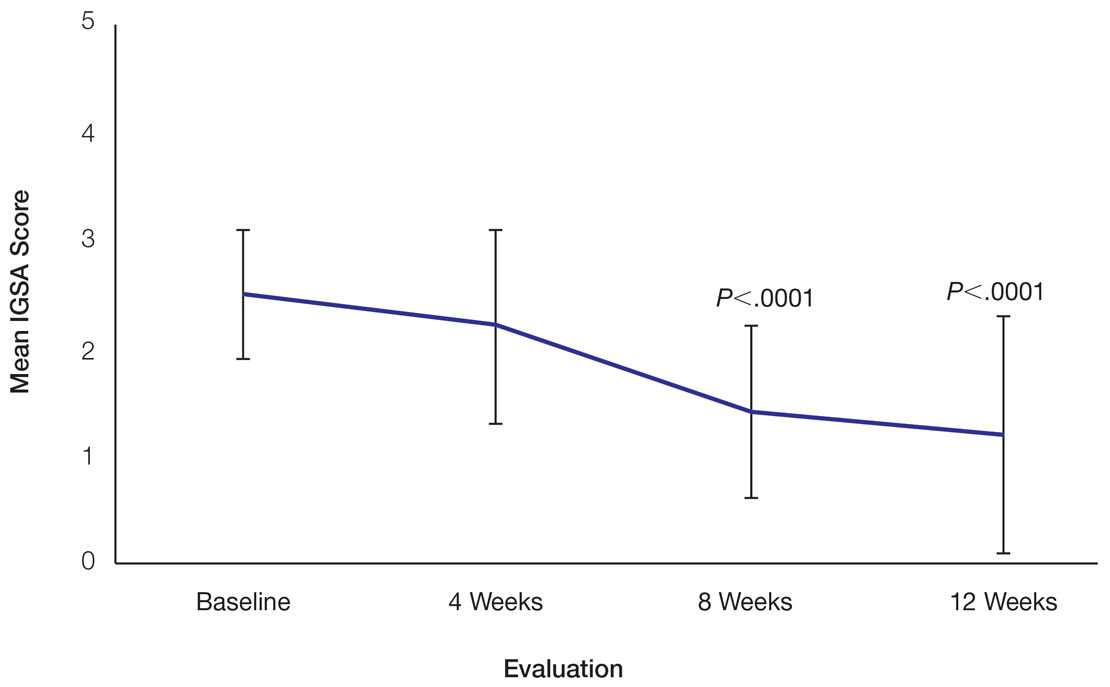

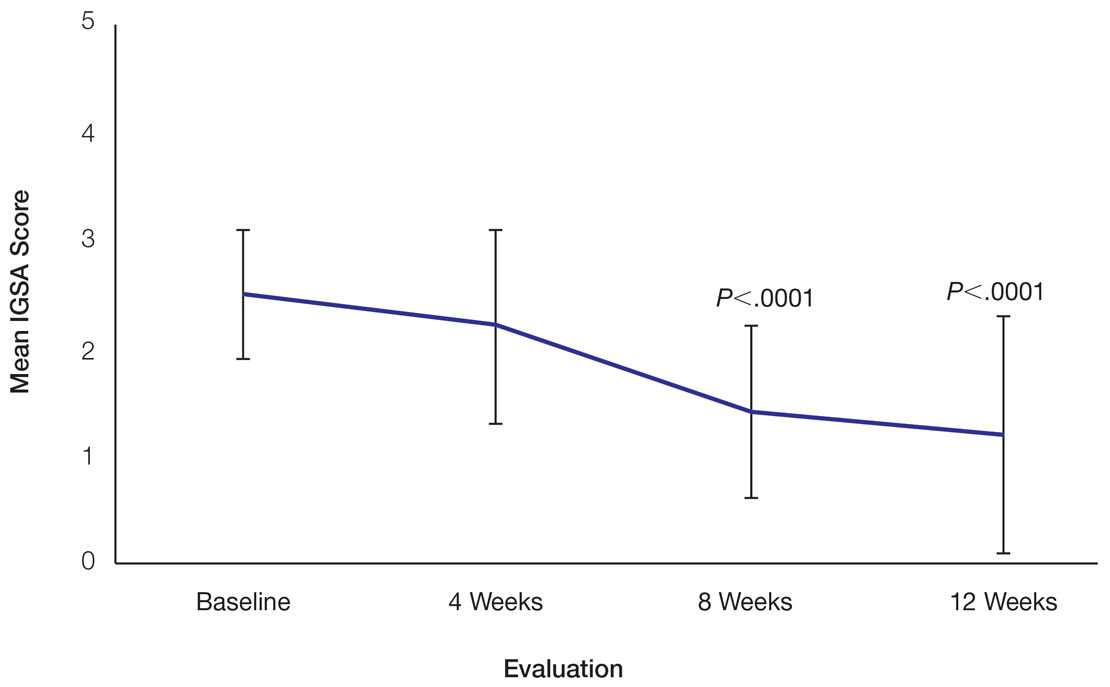

Skin Appearance—An improvement in the skin’s appearance over the course of the study from baseline was consistent with an improvement in the IGSA. The IGSA score significantly improved from a mean (SD) measurement of 2.5 (0.6) (indicating mild to moderate inflammation) at baseline to 1.4 (0.8) at week 8 (P<.0001) and 1.2 (1.1) (indicating mild inflammation to almost clear) at week 12 (P<.0001). The observed decrease in severity of skin condition and skin inflammation is shown in Figure 3.

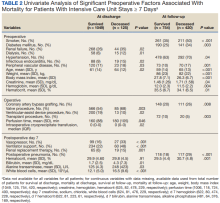

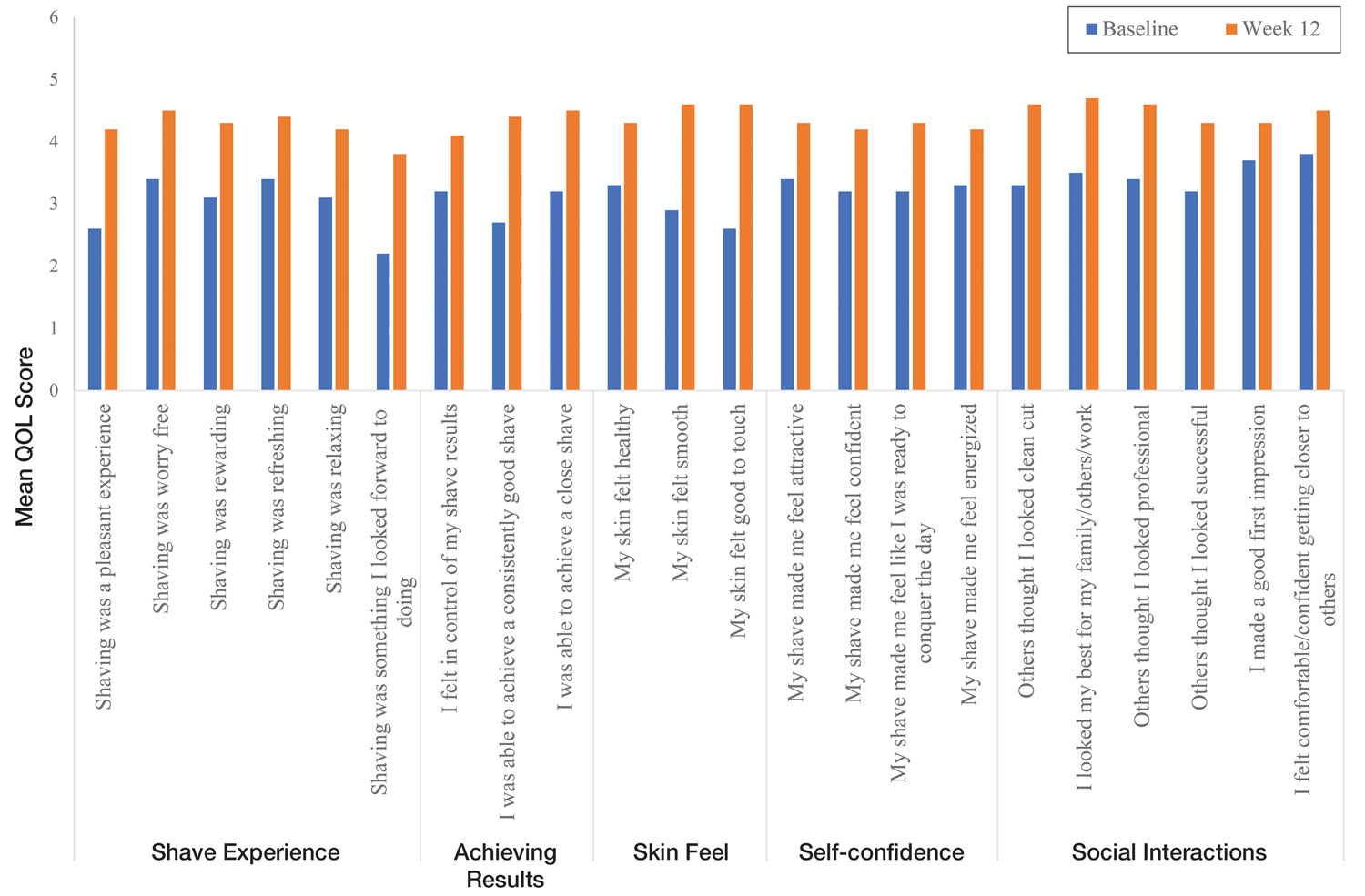

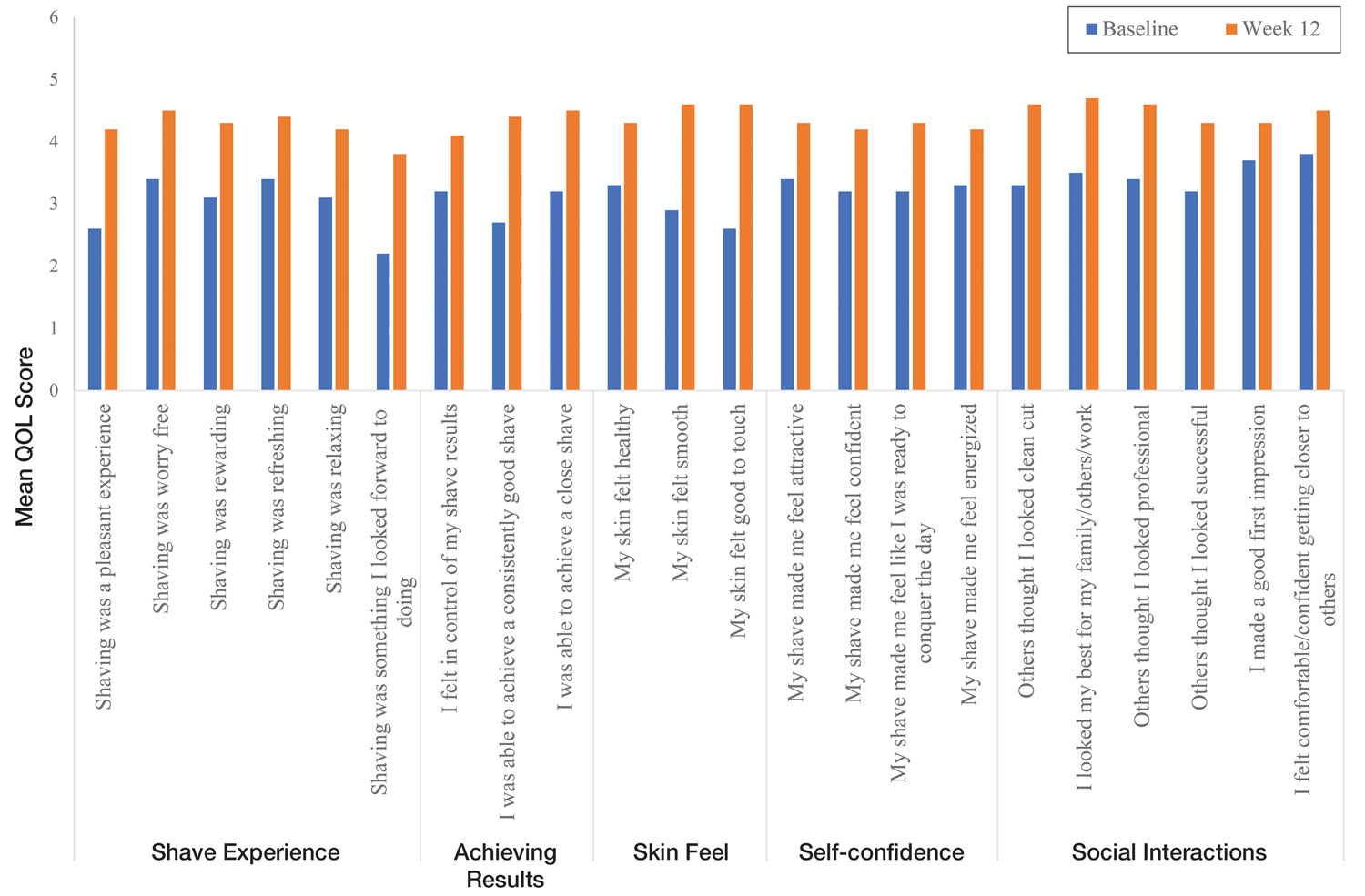

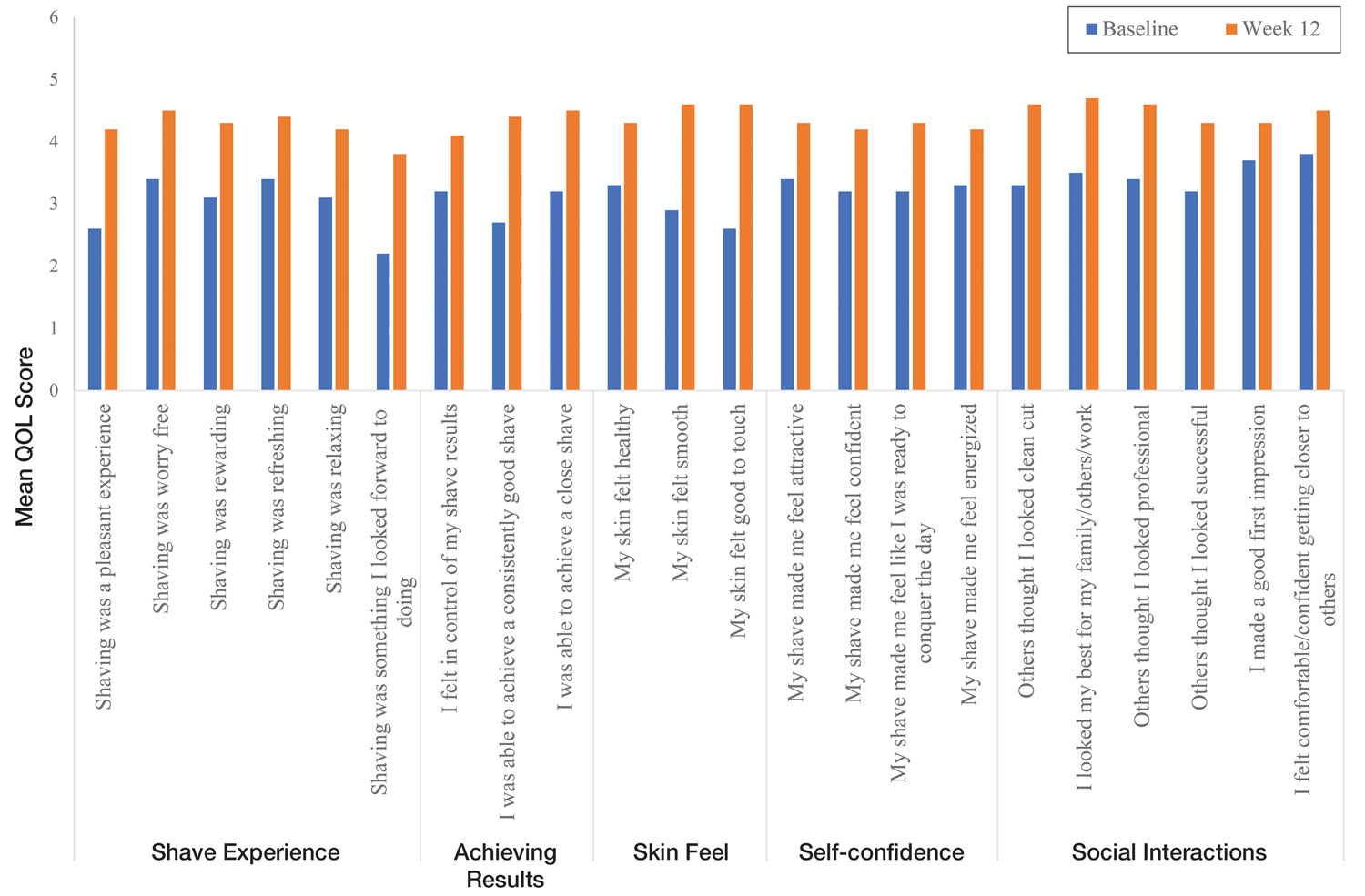

Significant improvements were observed in every category of the PGSA at week 12 vs baseline (P≤.0007) (Table 2). At week 12, there was a significant (P≤.05) increase from baseline in participant agreement for all 22 QOL metrics describing positive shave experience, achieving results, skin feel, self-confidence, and social interactions (Figure 4), which supports the positive impact of adopting a shaving regimen with the test razor. Notably, after using the test razor for 12 weeks, men reported that they were more likely to agree with the statements “my skin felt smooth,” “my skin felt good to touch,” and “I was able to achieve a consistently good shave.” Other meaningful increases occurred in “shaving was something I looked forward to doing,” “others thought I looked clean cut,” “I looked my best for my family/others/work,” and “I felt comfortable/confident getting closer to others.” All QOL statements are shown in Figure 4.

Comment

Improvement With Novel Razor Technology—For the first time, frequent use of a novel razor technology designed specifically for men with PFB was found to significantly improve skin appearance, shave satisfaction, and QOL after 12 weeks vs baseline in participants clinically diagnosed with PFB. In men with shave-related skin irritation and razor bumps who typically wet-shaved with a razor at least 3 times a week, use of the test razor with their regular shaving preparation product 5 or more times per week for 12 weeks was associated with significant improvements from baseline in investigator lesion count, IGSA, PGSA, and Participant Quality of Life Questionnaire measurements.

Study strengths included the quantification of the change in the number of lesions and the degree of severity by a trained investigator in a prospective clinical study along with an assessment of the impact on participant QOL. A lack of a control arm could be considered a limitation of the study; however, study end points were evaluated compared with baseline, with each participant serving as their own control. Spontaneous resolution of the condition with their standard routine was considered highly unlikely in these participants; therefore, in the absence of any other changes, improvements were attributed to regular use of the test product over the course of the study. The results presented here provide strong support for the effectiveness of the new razor technology in improving the appearance of men with razor bumps and shaving irritation.

Hair Removal Tools for the Management of PFB—Although various tools and techniques have been proposed in the past for men with PFB, the current test razor technology provided unique benefits, including improvements in appearance and severity of the condition as well as a positive impact on QOL. In 1979, Conte and Lawrence9 evaluated the effect of using an electric hair clipper and twice-daily use of a skin-cleansing pad on the occurrence of PFB. Participants (n=96) allowed their beards to grow out for 1 month, after which they started shaving with an electric clipper with a triple O head. The authors reported a favorable response in 95% (91/96) of cases. However, the electric clippers left 1 mm of beard at the skin level,9 which may not be acceptable for those who prefer a clean-shaven appearance.6

A prospective survey of 22 men of African descent with PFB found use of a safety razor was preferred over an electric razor.10 The single-arm study evaluated use of a foil-guarded shaver (single-razor blade) in the management of PFB based on investigator lesion counts and a participant questionnaire. Participants were asked to shave at least every other day and use a specially designed preshave brush. A mean reduction in lesion counts was observed at 2 weeks (29.6%), 4 weeks (38.1%), and 6 weeks (47.1%); statistical significance was not reported. At 6 weeks, 77.3% (17/22) of participants judged the foil-guarded shaver to be superior to other shaving devices in controlling their razor bumps, and 90.9% (20/22) indicated they would recommend the shaver to others with PFB. The authors hypothesized that the guard buffered the skin from the blade, which might otherwise facilitate the penetration of ingrowing hairs and cause trauma to existing lesions.

The mean reduction in lesion count from baseline observed at week 4 was greater in the study with the foil-guarded shaver and preshave brush (38% reduction)10 than in our study (19% reduction in papule count). Different methodologies, use of a preshave brush in the earlier study, and a difference in lesion severity at baseline may have contributed to this difference. The study with the foil-guarded shaver concluded after 6 weeks, and there was a 47.1% reduction in lesion counts vs baseline.10 In contrast, the current study continued for 12 weeks, and a 59.6% reduction in lesion counts was reported. Participants from both studies reported an improved shaving experience compared with their usual practice,10 though only the current study explored the positive impact of the new razor technology on participant QOL.

Preventing Hairs From Being Cut Too Close—The closeness of the shave is believed to be a contributory factor in the development and persistence of PFB6,8,11 based on a tendency for the distal portion of tightly curled hair shafts to re-enter the skin after shaving via transfollicular penetration.12 Inclusion of a buffer in the razor between the sharp blades and the skin has been proposed to prevent hairs from being cut too close and causing transfollicular penetration.12

In the test razor used in the current study, the bridge technology acted as the buffer to prevent hairs from being cut too close to the skin and to reduce blade contact with the skin (Figure 2). Having only 2 blades also reduced the closeness of the shave compared with 5-bladed technologies,13 as each hair can only be pulled and cut up to a maximum of 2 times per shaving stroke. Notably, this did not impact the participants’ QOL scores related to achieving a close shave or skin feeling smooth; both attributes were significantly improved at 12 weeks vs baseline (Figure 4).

By reducing blade contact with the skin, the bridge technology in the test razor was designed to prevent excessive force from being applied to the skin through the blades. Reduced blade loading minimizes contact with and impact on sensitive skin.14 Additional design features of the test razor to minimize the impact of shaving on the skin include treatment of the 2 blades with low-friction coatings, which allows the blades to cut through the beard hair with minimal force, helping to reduce the tug-and-pull effect that may otherwise result in irritation and inflammation.13,15 Lubrication strips before and after the blades in the test razor reduce friction between the blades and the skin to further protect the skin from the blades.15

Shaving With Multiblade Razors Does Not Exacerbate PFB—In a 1-week, split-face, randomized study of 45 Black men, shaving with a manual 3-bladed razor was compared with use of 3 different chemical depilatory formulations.16 Shaving every other day for 1 week with the manual razor resulted in more papule formation but less irritation than use of the depilatories. The authors concluded that a study with longer duration was needed to explore the impact of shaving on papule formation in participants with a history of PFB.16

In 2013, an investigator-blinded study of 90 African American men with PFB compared the impact of different shaving regimens on the signs and symptoms of PFB over a 12-week period.4 Participants were randomized to 1 of 3 arms: (1) shaving 2 to 3 times per week with a triple-blade razor and standard products (control group); (2) shaving daily with a 5-bladed razor and standard products; and (3) shaving daily with a 5-bladed razor and “advanced” specific pre- and postshave products. The researchers found that the mean papule measurement significantly decreased from baseline in the advanced (P=.01) and control (P=.016) groups. Between-group comparison revealed no significant differences in papule or pustule count among the 3 arms. For the investigator-graded severity, the change from baseline was significant for all 3 groups (P≤.04); however, the differences among groups were not significant. Importantly, these data demonstrated that PFB was not exacerbated by multiblade razors used as part of a daily shaving regimen.4

The findings of the current study were consistent with those of Daniel et al4 in that there was no exacerbation of the signs and symptoms of PFB associated with daily shaving. However, rather than requiring participants to change their entire shaving regimen, the present study only required a change of razor type. Moreover, the use of the new razor technology significantly decreased papule counts at week 12 vs the baseline measurement (P<.0001) and was associated with an improvement in subjective skin severity measurements. The participants in the present study reported significantly less burning, stinging, and itching after using the test product for 12 weeks (P<.0001).

Impact of Treatment on QOL—The current study further expanded on prior findings by combining these clinical end points with the QOL results to assess the test razor’s impact on participants’ lives. Results showed that over the course of 12 weeks, the new razor technology significantly improved the participants’ QOL in all questions related to shaving experience, achieving results, skin feel, self-confidence, and social interactions. The significant improvement in QOL included statements such as “shaving was a pleasant experience,” “I was able to achieve a consistently good shave,” and “my skin felt smooth.” Participants also reported improvements in meaningful categories such as “my shave made me feel attractive” and “I felt comfortable/confident getting closer to others.” As the current study showed, a shave regimen has the potential to change participants’ overall assessment of their QOL, a variable that must not be overlooked.

Conclusion

In men with clinically diagnosed PFB, regular shaving with a razor designed to protect the skin was found to significantly decrease lesion counts, increase shave satisfaction, and improve QOL after 12 weeks compared with their usual shaving practice (baseline measures). This razor technology provides another option to help manage PFB for men who wish to or need to continue shaving.

Acknowledgments—The clinical study was funded by the Procter & Gamble Company. Editorial writing assistance, supported financially by the Procter & Gamble Company, was provided by Gill McFeat, PhD, of McFeat Science Ltd (Devon, United Kingdom).

References

1. Alexander AM, Delph WI. Pseudofolliculitis barbae in the military. A medical, administrative and social problem. J Natl Med Assoc. 1974;66:459-464, 479.

2. Kligman AM, Strauss JS. Pseudofolliculitis of the beard. AMA Arch Derm. 1956;74:533-542.

3. Banta J, Bowen C, Wong E, et al. Perceptions of shaving profiles and their potential impacts on career progression in the United States Air Force. Mil Med. 2021;186:187-189.

4. Daniel A, Gustafson CJ, Zupkosky PJ, et al. Shave frequency and regimen variation effects on the management of pseudofolliculitis barbae. J Drugs Dermatol. 2013;12:410-418.

5. Winter H, Schissel D, Parry DA, et al. An unusual Ala12Thr polymorphism in the 1A alpha-helical segment of the companion layer-specific keratin K6hf: evidence for a risk factor in the etiology of the common hair disorder pseudofolliculitis barbae. J Invest Dermatol. 2004;122:652-657.

6. Perry PK, Cook-Bolden FE, Rahman Z, et al. Defining pseudofolliculitis barbae in 2001: a review of the literature and current trends. J Am Acad Dermatol. 2002;46(2 suppl understanding):S113-S119.

7. McMichael AJ. Hair and scalp disorders in ethnic populations. Dermatol Clin. 2003;21:629-644.

8. Ribera M, Fernández-Chico N, Casals M. Pseudofolliculitis barbae [in Spanish]. Actas Dermosifiliogr. 2010;101:749-757.

9. Conte MS, Lawrence JE. Pseudofolliculitis barbae. No ‘pseudoproblem.’ JAMA. 1979;241:53-54.

10. Alexander AM. Evaluation of a foil-guarded shaver in the management of pseudofolliculitis barbae. Cutis. 1981;27:534-537, 540-542.

11. Weiss AN, Arballo OM, Miletta NR, et al. Military grooming standards and their impact on skin diseases of the head and neck. Cutis. 2018;102:328, 331-333.

12. Alexis A, Heath CR, Halder RM. Folliculitis keloidalis nuchae and pseudofolliculitis barbae: are prevention and effective treatment within reach? Dermatol Clin. 2014;32:183-191.

13. Cowley K, Vanoosthuyze K, Ertel K, et al. Blade shaving. In: Draelos ZD, ed. Cosmetic Dermatology: Products and Procedures. 2nd ed. John Wiley & Sons; 2015:166-173.

14. Cowley K, Vanoosthuyze K. Insights into shaving and its impact on skin. Br J Dermatol. 2012;166(suppl 1):6-12.

15. Cowley K, Vanoosthuyze K. The biomechanics of blade shaving. Int J Cosmet Sci. 2016;38(suppl 1):17-23.

16. Kindred C, Oresajo CO, Yatskayer M, et al. Comparative evaluation of men’s depilatory composition versus razor in black men. Cutis. 2011;88:98-103.

Pseudofolliculitis barbae (PFB)(also known as razor bumps or shaving bumps)1 is a skin condition that consists of papules resulting from ingrown hairs.2 In more severe cases, papules become pustules, then abscesses, which can cause scarring.1,2 The condition can be distressing for patients, with considerable negative impact on their daily lives.3 The condition also is associated with shaving-related stinging, burning, pruritus, and cuts on the skin.4

Pseudofolliculitis barbae is most common in men of African descent due to the curved nature of the hair follicle,2,5,6 with an estimated prevalence in this population of 45% to 83%,1,6 but it can affect men of other ethnicities.7 A genetic polymorphism in a gene encoding a keratin specific to the hair follicle also has been found to predispose some individuals to PFB.5 When hair from a curved or destabilized hair follicle is cut to form a sharp tip, it is susceptible to extrafollicular and/or transfollicular penetration,5,6,8 as illustrated in Figure 1.

With extrafollicular or transfollicular penetration, the hair shaft re-enters or retracts into the dermis, triggering an inflammatory response that may be exacerbated by subsequent shaving.2 Few studies have been published that aim to identify potential shaving solutions for individuals with PFB who elect to or need to continue shaving.

A new razor technology comprising 2 blades separated by a bridge feature has been designed specifically for men with razor bumps (SkinGuard [Procter & Gamble]). The SkinGuard razor redistributes shaving pressure so that there is less force from the blades on the skin and inflamed lesions than without the bridge, as seen in Figure 2. The razor has been designed to protect the skin from the blades, thereby minimizing the occurrence of new lesions and allowing existing lesions to heal.

![Test razor bridge feature (SkinGuard [Procter & Gamble]) minimizes the force of the razor blades on the skin. Copyright 2022 The Procter & Gamble Company.](https://cdn.mdedge.com/files/s3fs-public/Moran_2.jpg)

The primary purpose of this study was to assess the appearance of males with razor bumps and shaving irritation when using the new razor technology in a regular shaving routine. The secondary objective was to measure satisfaction with the shaving experience when using the new razor by means of assessing itching, burning, and stinging using the participant global severity assessment (PGSA) and the impact on quality of life (QOL) measures.

Methods

Participants—Eligible participants were male, aged 20 to 60 years, and had clinically diagnosed PFB as well as symptoms of skin irritation from shaving. Participants were recruited from a dermatology clinic and via institutional review board–approved advertising.

Those eligible for inclusion in the study had a shaving routine that comprised shaving at least 3 times a week using a wet-shave, blade-razor technique accompanied by only a shave gel or foam. In addition, eligible participants had mild to moderate symptoms of skin irritation (a minimum of 10 razor bumps) from shaving based on investigator global severity assessment (IGSA) rating scales and were willing to shave at least 5 times a week during the study period. Participants could continue certain topical and systemic interventions for their skin.

Participants were excluded from the study if they had an underlying inflammatory disease that could manifest with a skin rash or were using any of these medications: topical benzoyl peroxide, topical clindamycin, topical retinoids, or oral antibiotics.
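For readers who screen candidates programmatically, the inclusion and exclusion criteria described above could be encoded roughly as follows. This is only an illustrative sketch, not the study's screening instrument: the `Candidate` fields, the `screen` function, and the severity labels `"mild"` and `"moderate"` are hypothetical names chosen for this example, and reading "mild to moderate symptoms (a minimum of 10 razor bumps)" as a severity label plus a bump count is an assumption.

```python
from dataclasses import dataclass

# Medications named as exclusions in the Methods text.
EXCLUDED_MEDICATIONS = frozenset({
    "topical benzoyl peroxide",
    "topical clindamycin",
    "topical retinoids",
    "oral antibiotics",
})


@dataclass
class Candidate:
    # All field names are hypothetical; they are not taken from the study protocol.
    sex: str
    age: int
    clinically_diagnosed_pfb: bool
    shaves_per_week: int
    wet_shaves_with_blade_razor: bool
    uses_only_gel_or_foam: bool
    igsa_severity: str  # assumed labels such as "mild" or "moderate"
    razor_bump_count: int
    willing_to_shave_5x_weekly: bool
    inflammatory_disease_with_rash: bool = False
    medications: frozenset = frozenset()


def screen(c: Candidate) -> list[str]:
    """Return the reasons a candidate is ineligible; an empty list means eligible."""
    reasons = []
    if c.sex != "male":
        reasons.append("not male")
    if not 20 <= c.age <= 60:
        reasons.append("age outside 20-60 years")
    if not c.clinically_diagnosed_pfb:
        reasons.append("no clinical PFB diagnosis")
    if c.shaves_per_week < 3:
        reasons.append("shaves fewer than 3 times a week")
    if not (c.wet_shaves_with_blade_razor and c.uses_only_gel_or_foam):
        reasons.append("routine is not wet blade shaving with only gel or foam")
    if c.igsa_severity not in {"mild", "moderate"} or c.razor_bump_count < 10:
        reasons.append("irritation not mild to moderate with at least 10 razor bumps")
    if not c.willing_to_shave_5x_weekly:
        reasons.append("unwilling to shave at least 5 times a week")
    if c.inflammatory_disease_with_rash:
        reasons.append("underlying inflammatory disease that could cause a rash")
    used = EXCLUDED_MEDICATIONS & c.medications
    if used:
        reasons.append("uses excluded medication: " + ", ".join(sorted(used)))
    return reasons
```

Returning a list of reasons rather than a single yes/no value keeps a record of why a candidate was screened out, which may be useful when reporting screen failures.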