Weekends Off on Clinical Rotations? Examining Clinical Opportunity Trends on Weekdays vs Weekends During Internal Medicine Clerkship Rotations in Veterans Health Administration Inpatient Wards

Background

The Accreditation Council for Graduate Medical Education (ACGME) mandates an 80-hour weekly work limit for residents.1 In contrast, decisions regarding undergraduate medical education (UME) are strongly influenced locally, with individual institutions setting academic policy for students. These differences in oversight reflect fundamental differences in residents’ and students’ roles in patient care, power, and responsibility. Considering rotation schedules, internal medicine (IM) clerkship directors have discussed the relative value of weekend vs weekday duty during inpatient rotations, a scheduling topic of interest to students as well, though these conversations are limited by a lack of knowledge regarding admission patterns. Addressing this information gap would inform policy decisions.

The Veterans Health Administration (VHA) is uniquely positioned to address questions about UME clinical experiences nationwide: annually, over 118,000 students representing 97% of US medical schools train at VHA facilities.2,3 We aim to compare the number and variety of patient encounter opportunities presenting during inpatient VHA IM rotations on weekdays versus weekends to inform policy decisions for UME rotation schedules.

Innovation

The VHA Corporate Data Warehouse will be queried for all admissions, diagnoses, and lengths of stay on inpatient IM services at the 420 VHA hospitals affiliated with US medical schools from 2016-2026. We will aggregate case data by day of week, floor, hospital, and Veterans Integrated Service Network (VISN), and determine the number of admissions by weekday (Monday-Friday) and weekend (Saturday-Sunday). Weekday vs weekend admission data will be compared using generalized mixed effects models for clustered longitudinal data. Heterogeneity across hospitals and VISNs will be explored to examine unique regional trends.
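As a minimal sketch of the weekday vs weekend aggregation step only (not the mixed effects modeling), the following Python example counts admissions by day type and divides by the number of calendar days of each type, since a week has five weekdays but only two weekend days. The function name, the date range, and the admission dates are hypothetical and invented for illustration; they are not VHA data.

```python
from collections import Counter
from datetime import date


def day_type(d):
    """Classify a date as 'weekday' (Mon-Fri) or 'weekend' (Sat-Sun)."""
    return "weekend" if d.weekday() >= 5 else "weekday"


def admissions_per_day_type(admission_dates, start, end):
    """Mean admissions per calendar day, split by weekday vs weekend.

    Normalizing by the number of days of each type avoids comparing
    a five-day total against a two-day total.
    """
    admissions = Counter(day_type(d) for d in admission_dates)
    calendar_days = Counter(
        day_type(date.fromordinal(n))
        for n in range(start.toordinal(), end.toordinal() + 1)
    )
    return {k: admissions[k] / calendar_days[k] for k in calendar_days}


# Hypothetical admissions for one ward over one week (Mon 1 Jan to Sun 7 Jan 2024).
example_admissions = (
    [date(2024, 1, 1)] * 3
    + [date(2024, 1, 2)] * 2
    + [date(2024, 1, 3)] * 2
    + [date(2024, 1, 4)] * 2
    + [date(2024, 1, 5)]
    + [date(2024, 1, 6)]
    + [date(2024, 1, 7)] * 2
)

rates = admissions_per_day_type(
    example_admissions, date(2024, 1, 1), date(2024, 1, 7)
)
print(rates)  # {'weekday': 2.0, 'weekend': 1.5}
```

In a full analysis, per-day counts like these would presumably be computed per floor, hospital, and VISN before being passed to the clustered models described above.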

Results

We have drafted strategies to query and curate relevant datasets, developed a preliminary analysis plan, and await data deployment from VHA data stewards.

Conclusions

We believe this will be the first VHA-wide evaluation of patient encounter trends on IM services to examine potential training experiences for medical students. This will increase understanding of the critical role VHA has in developing the nation’s healthcare workforce, and how patterns of opportunities for clinical education may be distributed over time, informing decisions about rotation schedules to maximize students’ abilities to interact with, learn from, and serve our nation’s veterans.

1. Dimitris KD, Taylor BC, Fankhauser RA. Resident work-week regulations: historical review and modern perspectives. J Surg Educ. 2008;65(4):290-296. doi:10.1016/j.jsurg.2008.05.011

2. Health professions education statistics. Veterans Health Administration. Accessed March 19, 2025. https://www.va.gov/oaa/docs/OAACurrentStats.pdf

3. Medical education at VA: It’s all about the Veterans. VA News. Updated August 16, 2021. Accessed March 19, 2025. https://news.va.gov/93370/medical-education-at-va-its-all-about-the-veterans/

Developing a Multi-Disciplinary Integrative Health Elective at the San Francisco VA

Background

Integrative health (IH) combines conventional and complementary medicine in a coordinated, evidence-based approach to treat the whole person. Nearly 40% of American adults have used complementary health approaches,1 yet IH exposure in medical training is limited. In 2022, the San Francisco VA Health Care Center (SFVA) launched a multidisciplinary clinical IH elective for University of California, San Francisco (UCSF) internal medicine and SFVA nurse practitioner residents. A general and targeted needs assessment, including faculty and learner feedback, showed that the elective was well received but relied on one-on-one patient-based teaching. This structure created variable learning experiences and high faculty burden. Our project aims to formalize and evaluate the IH elective curriculum to better address the needs of both faculty and learners.

Methods

We used Kern’s six-step framework for curriculum development. To reduce variability, we sought to formalize the core curricular content by: 1) reviewing existing elective components, comparing them to similar curricula nationwide, and outlining foundational knowledge based on the exam domains of the American Board of Integrative Medicine (ABOIM);2 2) creating eleven learning objectives across three themes: patient-centered care, systems-based practice, and IH-specific knowledge; and 3) developing IH subspecialty experience guides to standardize clinical teaching with suggested takeaways, guided reflection, and curated resources. To reduce faculty burden, we consolidated elective resources into a centralized e-learning hub. Trainees complete a pre/post self-assessment and an evaluation at the end of the elective.

Results

We identified key learning opportunities in each IH shadowing experience to enhance learners’ knowledge. We developed an IH e-Learning Hub to provide easy access to elective materials and IH clinical tools. Evaluations from the first two learners who completed the elective indicate that the learning objectives were met and that learners gained increased knowledge of lifestyle medicine, mind-body medicine, manual medicine, and botanicals/dietary supplements. Learners valued increased IH subspecialty familiarity and reported high likelihood of future practice change.

Discussion

The project is ongoing. Next steps include collecting faculty evaluations about their experience, continuing to create and refine experience guides, promoting clinical tools for learners’ future practice, and developing strategies to recruit more learners to the elective.

1. Nahin RL, Rhee A, Stussman B. Use of complementary health approaches overall and for pain management by US adults. JAMA. 2024;331(7):613-615. doi:10.1001/jama.2023.26775

2. Integrative medicine exam description. American Board of Physician Specialties. Updated July 2021. Accessed December 12, 2025. https://www.abpsus.org/integrative-medicine-description

Harm Reduction Integration in an Interprofessional Primary Care Training Clinic

Background

For people who use drugs (PWUD), harm reduction (HR) is an evidence-based, low-barrier approach to mitigating ongoing substance use risks and is considered a key pillar of the Department of Health and Human Services’ Overdose Prevention Strategy.1 Given their accessibility and continuity, primary care (PC) clinics are optimal sites for education about and provision of HR services.2,3

Aim

- Determining the impact of active and passive methods for HR supply.

- Recognizing the importance of clinician addiction education in the provision of HR services.

Methods

In January 2024, physician and nurse practitioner trainees in the West Haven Veterans Affairs (VA) Center of Education (CoE) in Interprofessional Primary Care received addiction care and HR strategy education. Initially, all patients presenting to the CoE completed a single-item substance use screening. Patients screening positive were offered HR supplies, including fentanyl and xylazine test strips (FTS, XTS), during the encounter (active distribution). Starting October 2024, HR kiosks were implemented in the clinic lobby, offering patients self-serve access to HR supplies (passive distribution). Test strip uptake was tracked through clinical encounter documentation and weekly kiosk inventory.

Results

Between January 2024 and June 2024, 92 FTS and 84 XTS were actively distributed. After implementation of the HR kiosk, 253 FTS and 164 XTS were distributed between October 2024 and February 2025. In the CoE, passive kiosk distribution of FTS and XTS was 275% and 195%, respectively, of active distribution during clinical encounters (increases of 175% and 95%).
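The arithmetic behind these percentages can be checked with a short Python sketch. It uses only the four counts reported above and distinguishes passive distribution as a percentage of active distribution from the percent increase over active distribution. The monthly rates at the end rest on an assumption that is not stated in the abstract: that the two periods span 6 and 5 full calendar months, respectively.

```python
def percent_of(new, old):
    """New count expressed as a percentage of the old count."""
    return 100 * new / old


def percent_increase(new, old):
    """Relative change from old to new, in percent."""
    return 100 * (new - old) / old


# Counts reported in the Results.
active = {"FTS": 92, "XTS": 84}     # January 2024 to June 2024
passive = {"FTS": 253, "XTS": 164}  # October 2024 to February 2025

for strip in active:
    ratio = percent_of(passive[strip], active[strip])
    change = percent_increase(passive[strip], active[strip])
    print(f"{strip}: {ratio:.0f}% of active, {change:.0f}% increase")

# ASSUMPTION: periods are inclusive full months (6 active, 5 passive).
ACTIVE_MONTHS, PASSIVE_MONTHS = 6, 5
monthly = {
    strip: (active[strip] / ACTIVE_MONTHS, passive[strip] / PASSIVE_MONTHS)
    for strip in active
}
for strip, (a, p) in monthly.items():
    print(f"{strip}: {a:.1f}/month active vs {p:.1f}/month passive")
```

Because the passive period is shorter under this assumption, comparing monthly rates rather than raw totals would make the difference between the two methods larger, not smaller.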

Conclusions

HR kiosk implementation was followed by substantially increased test strip uptake in the CoE, suggesting that passive distribution is an effective low-barrier method of increasing access to HR and substance use disorder (SUD) resources. Although this model may reduce stigma and logistical barriers when presenting for a healthcare encounter, it limits the ability to track and engage patients for more intensive services. While each approach has unique advantages and disadvantages, test strip demand via both methods highlights the significant need for HR resources in PC settings. Continuing education for PC clinicians on low-barrier SUD care and HR is critical to optimizing care for this population.

1. Haffajee RL, Sherry TB, Dubenitz JM, et al. Overdose prevention strategy. US Department of Health and Human Services (Issue Brief). Published October 27, 2021. Accessed December 11, 2025. https://aspe.hhs.gov/sites/default/files/documents/101936da95b69acb8446a4bad9179cc0/overdose-prevention-strategy.pdf

2. Substance Abuse and Mental Health Services Administration. Advisory: low barrier models of care for substance use disorders. SAMHSA Publication No. PEP23-02-00-005. Published December 2023. Accessed December 11, 2025. https://library.samhsa.gov/sites/default/files/advisory-low-barrier-models-of-care-pep23-02-00-005.pdf

3. Substance Abuse and Mental Health Services Administration. Harm reduction framework. Center for Substance Abuse Prevention, Substance Abuse and Mental Health Services Administration; 2023.

Building Trust: Enhancing Rural Women Veterans’ Healthcare Experiences Through Need-Supportive Patient-Centered Communication

Background

Rural women veterans often confront unique healthcare barriers, including geographic isolation, gender-related stigma, and limited provider cultural sensitivity, that undermine trust and engagement. In response, we co-designed an interprofessional communication curriculum to promote relational, patient-centered care grounded in psychological need support.

Innovation

Anchored in Self-Determination Theory (SDT), this curriculum equips nurses and social workers with need-supportive communication strategies that nurture autonomy, competence, and relatedness. It integrates two transformative learning methods for enhancing respectful and inclusive listening:

- Cultural humility reflections for veteran-centered care—personal narratives, storytelling, and power-awareness discussions to build lifelong reflective practices.

- Medical improv simulations—adaptive improvisational role plays for healthcare environments fostering presence, adaptability, empathy, trust-building, and real-time responsiveness.

Delivered via a multiday health professions learning lab, the training combines asynchronous workshops with in-person facilitated interactions. Core modules cover SDT foundations, need-supportive dialogue, veteran-centered cultural humility, and shared decision-making practices that uplift rural women veterans’ voices. Using Kirkpatrick’s Four-Level Model, we assess impact at multiple tiers:

- Reaction: Participant satisfaction and perceived training relevance.

- Learning: Pre/post assessments track SDT knowledge and communication skills gains.

- Behavior: Observed simulations and self-reported changes in communication practices.

- Results: Qualitative satisfaction metrics and care engagement trends among rural women veterans.

Results

A pilot cohort (N = 20) across two rural sites is pending implementation. Pre/post surveys will assess changes in confidence in applying need-supportive communication and identify the component most effective in building empathetic presence. Feedback measures will also indicate how the combined use of medical improv and cultural humility affects relational capacity and trust.

Discussion

This program operationalizes SDT within healthcare communications, integrating cultural humility and improvisation learning modalities to enhance care quality for rural women veterans, ultimately strengthening provider-patient connections. Using health professions learning lab environments can foster sustained behavioral impacts. Future iterations will expand to additional rural VA sites, co-designing with the voices of women veterans through focus groups.

Tai Chi Modification and Supplemental Movements Quality Improvement Program

Background

The original program consisted of 12 movements taught over 3 weeks, with 4 movements taught each week. Range of mobility was the main consideration in developing this HPE quality improvement project: veterans who wanted to participate in Tai Chi were unable to do so because of the range of movement that traditional Tai Chi requires.

Innovation

The HPE Quality Improvement program developed a 15-movement warm-up, 12 coordination movements consistent with the original program, and 18 supplemental Tai Chi movements that were not included in the original program. All of these movements remain below the shoulders and can be done standing or sitting. Four advanced exercises, including “hip over heel,” were included to target participants’ balance, if able, and to improve their hip strength and knee tendon/ligament strength. Tai Chi loses its potential to increase balance when performed in a sitting position.1 The movements drew upon Fu style Tai Chi, and the program developer was given permission from Tommy Kirchoff to use his DVD Healing Exercises. The HPE program consisted of four 30–60-minute weekly sessions of learning the movements, followed by another 4 weekly sessions of demonstrating the movements. Instructors were given written and visual documents to learn from and were evaluated by the developer during the last 4 weeks.

Results

Qualitative Data: Instructors noticed a difference in how they felt and appreciated having another option to offer veterans with mobility/standing issues. Patients reported improvement in mobility relating to bending, arm extension, arm raising, muscle strengthening, hip strengthening, and rotation.

Discussion

Future research should take measurements before and after implementation with patients to obtain quantitative data on balance, strength, and range of movement, including grip strength, stand up and go, and one-legged stands.

- Skelton DA, Mavroeidi A. How do muscle and bone strengthening and balance activities (MBSBA) vary across the life course, and are there particular ages where MBSBA are most important? J Frailty Sarcopenia Falls. 2018;3(2):74-84. Published 2018 Jun 1. doi:10.22540/JFSF-03-074

Improving Life-Sustaining Treatment Discussions and Order Quality in a Primary Care Clinic

Background

Veterans Health Administration Directive 1004.03(1) (Advance Care Planning) aims to establish a “system-wide, patient-centered and evidence-based approach to Advance Care Planning.”1 Life-sustaining treatment (LST) orders document patient preferences regarding interventions such as mechanical ventilation, CPR, dialysis, and artificial nutrition and hydration, and are considered part of an Advance Care Plan. From a bioethics perspective, these orders promote patient autonomy by formalizing patient preferences around LSTs in the medical record, particularly for when a patient lacks capacity and/or cannot make decisions on their own.2 Through consensus building, our team defined vague, inactionable, or incorrectly written LST orders as Potentially Problematic Orders (PPOs). PPOs that cause confusion at the bedside or lack clarity around preferences can pose serious risks to patient safety and autonomy by exposing patients to inappropriate initiation or withholding of LSTs. Improving the quality of LST orders and reducing the number of PPOs is a crucial element for safe and effective implementation of Directive 1004.03(1).

Aim

The aim of this quality improvement project was to reduce the number of PPOs in a VA Community-Based Outpatient Clinic (CBOC) by 75% by the end of 2025.

Methods

The Model for Improvement was used for this quality improvement project.3 One year of LST orders was audited, and thematic analysis identified 7 subtypes of PPO. PPO subtypes included clerical errors, potentially mismatched order sets (eg, a Comfort Care order with no associated DNR order), ill-defined or vague orders, and clinically impractical orders (eg, “consents to one shock during CPR”). We defined vague, ill-defined, and impractical orders as the most ethically and clinically challenging given the possibility of confusion or error at the bedside. Initial data were collected from October 2022 to October 2023, and post-intervention data were collected from February 2024 to September 2024. Interventions included process changes (clarifying role responsibility, documentation practices, patient education), regular auditing and feedback from a supervisor, and staff education.

Results

Post-intervention analysis demonstrated that the overall proportion of PPOs remained the same, with 25% of patient charts containing at least one PPO. However, the proportion of PPOs in the most ethically and clinically problematic categories (vague, ill-defined, and impractical orders) decreased from 14.7% to <1%.

Conclusions

We successfully reduced the most ethically and clinically challenging PPOs to <1% in our initial intervention. To reduce the overall proportion of PPOs, we plan enhancements in process automation, additional physical educational resources, and minor changes in audit criteria. Future projects will aim to address the remaining PPO error types and prepare this project for implementation in other CBOCs.

- US Department of Veterans Affairs, Veterans Health Administration. VHA Directive 1004.03(1): Advance care planning. Published December 12, 2023. Accessed December 11, 2025. https://www.va.gov/vhapublications/ViewPublication.asp?pub_ID=11610

- White DB, Curtis JR, Lo B, Luce JM. Decisions to limit life-sustaining treatment for critically ill patients who lack both decision-making capacity and surrogate decision-makers. Crit Care Med. 2006;34(8):2053-2059. doi:10.1097/01.CCM.0000227654.38708.C1

- Ogrinc GS, Headrick LA, Barton AJ, Dolansky MA, Madigosky WS, Miltner RS, Hall AG. Fundamentals of Health Care Improvement: A Guide to Improving Your Patients’ Care (4th edition). Joint Commission Resources and Institute for Healthcare Improvement; 2022.

A Health Educator’s Primer to Cost-Effectiveness in Health Professions Education

Background

Cost-effectiveness (CE) evaluations, for existing and anticipated programs, are common in healthcare but are rarely used in health professions education (HPE). A systematic review of HPE literature found not only few examples of CE evaluations, but also unclear and inconsistent methodology.1 One proposed reason HPE has been slow to adopt CE evaluations is uncertainty over terminology and how to adapt this methodology to HPE.2 CE evaluations present further challenges for HPE since educational outcomes are often not easily monetized. However, given the reality of constrained budgets and limited resources, CE evaluations can be a powerful tool for educators to strengthen arguments for proposed innovations, and for scholars seeking to conduct rigorous work that withstands critical review.

Innovation

This project aims to make CE evaluations more understandable to HPE educators, using a one-page infographic and glossary. This will provide a primer, operationalizing the steps involved in CE evaluations and addressing why and when CE evaluations might be considered in HPE. To improve comprehension, this is being developed collaboratively with health professions educators and an economist. This infographic will be submitted for publication, as a resource to facilitate educators’ scholarly work and conversations with fiscal administrators.

Results

The infographic includes 1) an overview of CE evaluations, 2) information about inputs required for CE evaluations, 3) guidance on interpreting results, 4) a glossary of key terminology, and 5) considerations for why educators might consider this type of analysis. A final draft will be pilot tested with a focus group to assess interdisciplinary accessibility.

Discussion

Discussions between health professions educators and an economist on this infographic uncovered concepts that were poorly understood or defined differently across disciplines, revealing specific knowledge gaps and misunderstandings. Key terms that were a source of misunderstanding were added to the glossary, creating a shared vocabulary. These discussions also helped clarify the steps and information necessary for conducting CE evaluations in HPE, particularly the choice of perspective for the analysis (educator, patient, learner, etc.). Overall, this collaboration aimed to make CE evaluations more approachable and understandable for HPE professionals through this infographic.

- Foo J, Cook DA, Walsh K, et al. Cost evaluations in health professions education: a systematic review of methods and reporting quality. Med Educ. 2019;53(12):1196-1208. doi:10.1111/medu.13936

- Maloney S, Reeves S, Rivers G, Ilic D, Foo J, Walsh K. The Prato Statement on cost and value in professional and interprofessional education. J Interprof Care. 2017;31(1):1-4. doi:10.1080/13561820.2016.1257255

Cost Analysis of Dermatology Residency Applications From 2021 to 2024 Using the Texas Seeking Transparency in Application to Residency Database

To the Editor:

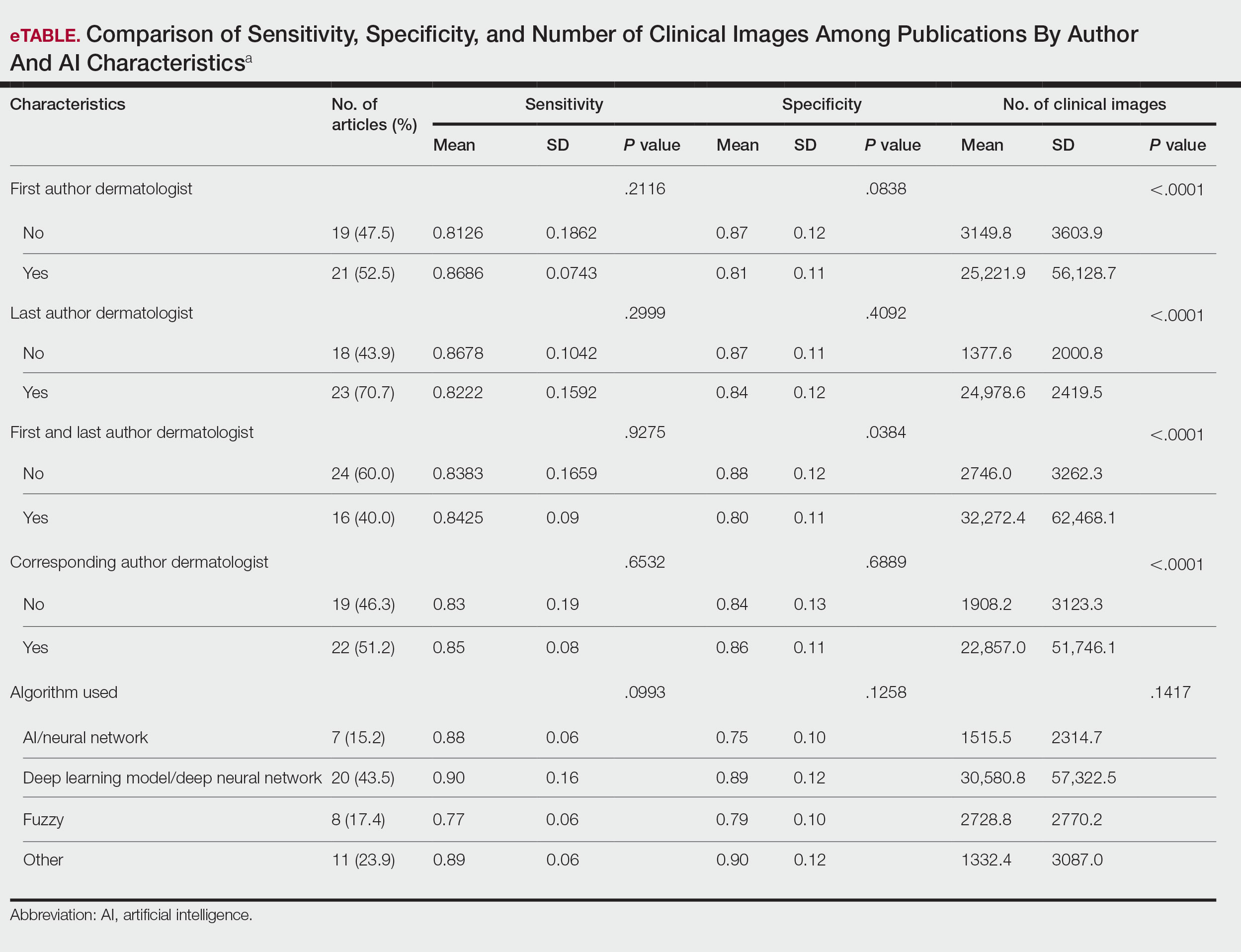

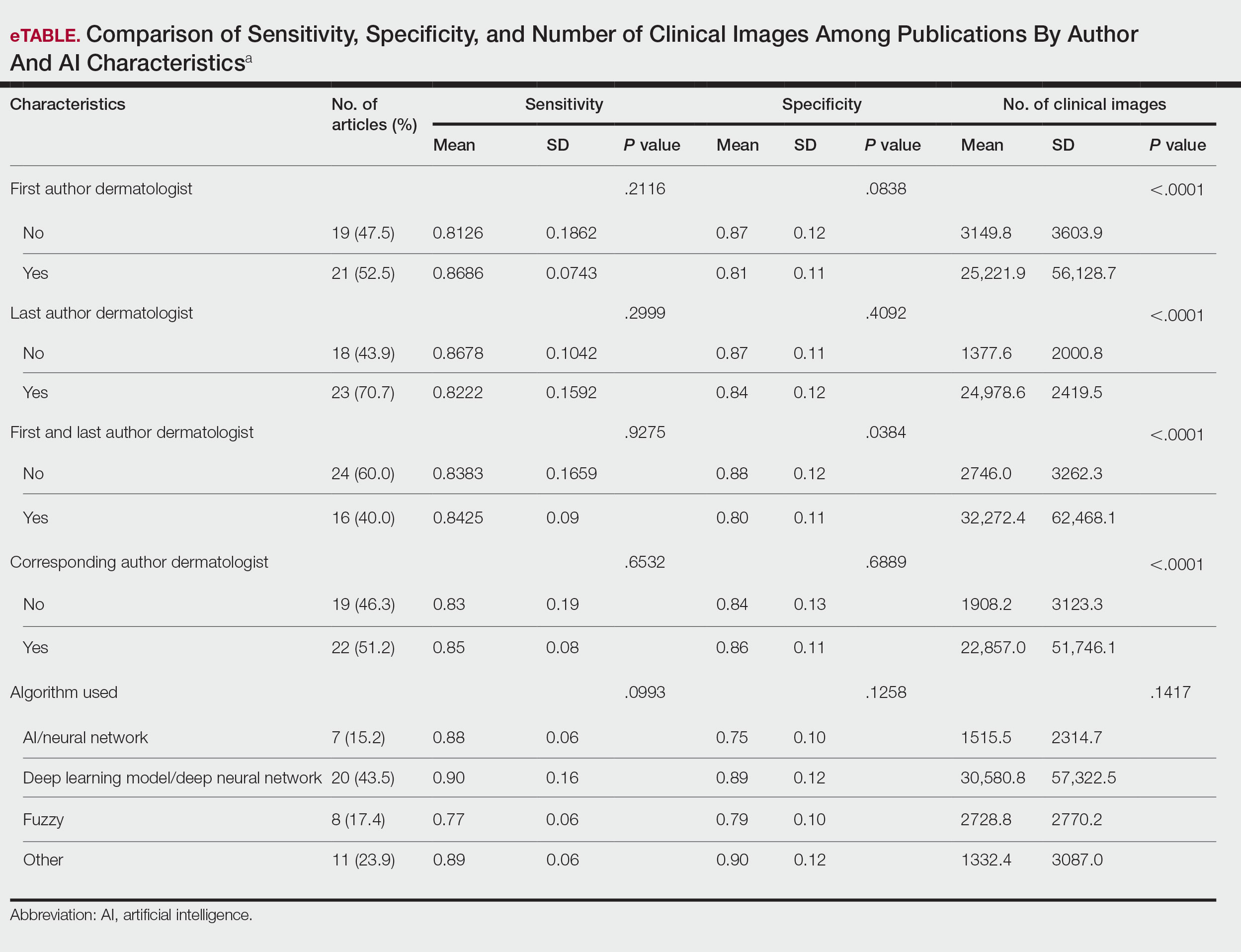

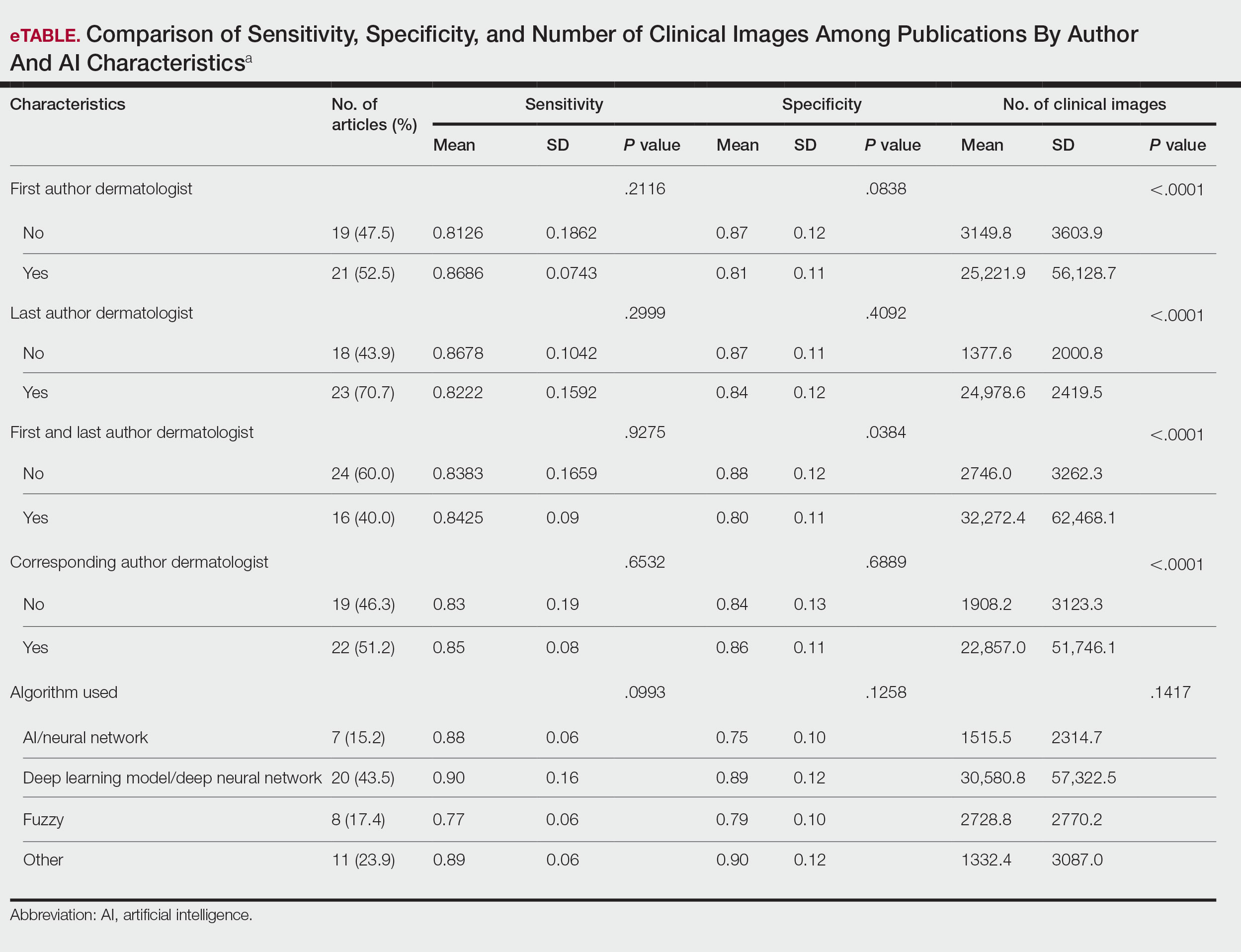

Residency applicants, especially in competitive specialties such as dermatology, face major financial barriers due to the high costs of applications, interviews, and away rotations.1 While several studies have examined application costs of other specialties, few have analyzed expenses associated with dermatology applications.1,2 There are no data examining costs following the start of the COVID-19 pandemic in 2020; thus, our study evaluated dermatology application cost trends from 2021 to 2024 and compared them to other specialties to identify strategies to reduce the financial burden on applicants.

Self-reported total application costs, application fees, interview expenses, and away rotation costs from 2021 to 2024 were collected from the Texas Seeking Transparency in Application to Residency (STAR) database powered by the UT Southwestern Medical Center (Dallas, Texas).3 The mean total application expenses per year were compared among specialties, and an analysis of variance was used to determine if the differences were statistically significant.

The number of applicants who recorded information in the Texas STAR database was 110 in 2021, 163 in 2022, 136 in 2023, and 129 in 2024.3 The total dermatology application expenses increased from $2805 in 2021 to $6231 in 2024; interview costs increased from $404 in 2021 to $911 in 2024; and away rotation costs increased from $850 in 2021 to $3812 in 2024 (all P<.05) (Table). There was no significant change in application fees during the study period ($2176 in 2021 to $2125 in 2024 [P=.58]). Dermatology had the fourth highest average total cost over the study period compared to all other specialties, increasing from $2250 in 2021 to $5250 in 2024, following orthopedic surgery ($2250 in 2021 to $6750 in 2024), plastic surgery ($2250 in 2021 to $9750 in 2024), and neurosurgery ($1750 in 2021 to $11,250 in 2024).
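To put the reported dollar figures on a common scale, the short sketch below computes the relative change from 2021 to 2024 for each dermatology cost category. The helper name `percent_change` and the dictionary keys are illustrative choices; the dollar values are those reported above, and the calculation is a simple unadjusted ratio rather than part of the study's analysis.

```python
def percent_change(start, end):
    """Relative change from start to end, expressed as a percentage."""
    return (end - start) / start * 100


# (2021 value, 2024 value) in US dollars, as reported in the text above.
reported_costs = {
    "total": (2805, 6231),
    "interview": (404, 911),
    "away_rotation": (850, 3812),
    "application_fees": (2176, 2125),
}

for category, (cost_2021, cost_2024) in reported_costs.items():
    change = percent_change(cost_2021, cost_2024)
    print(f"{category}: {change:+.1f}%")
```

On these figures, away rotation costs show by far the largest relative increase (roughly 3.5 times the 2021 value), while application fees are essentially flat.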

Our study found that dermatology residency application costs have increased significantly from 2021 to 2024, primarily driven by rising interview and away rotation expenses (both P<.05). This trend places dermatology among the most expensive fields to apply to for residency. A cross-sectional survey of dermatology residency program directors identified away rotations as one of the top 5 selection criteria, underscoring their importance in the matching process.4 In addition, a cross-sectional analysis of 345 dermatology residents found that 26.2% matched at institutions where they had mentors, including those they connected with through away rotations.5,6 Overall, the high cost of away rotations partially may reflect the competitive nature of the specialty, as building connections at programs may enhance the chances of matching. These costs also can vary based on geography, as rotating in high-cost urban centers can be more expensive than in rural areas; however, rural rotations may be less common due to limited program availability and applicant preferences. For example, nearly 50% of 2024 Electronic Residency Application Service applicants indicated a preference for urban settings, while fewer than 5% selected rural settings.7 Additionally, the high costs associated with applying to residency programs and completing away rotations can disproportionately impact students from rural backgrounds and underrepresented minorities, who may have fewer financial resources.

In our study, the lower application-related expenses in 2021 (during the pandemic) compared to those of 2024 (postpandemic) likely stem from the Association of American Medical Colleges’ recommendation to conduct virtual interviews during the pandemic.8 In 2024, some dermatology programs returned to in-person interviews, with some applicants consequently incurring higher costs related to travel, lodging, and other associated expenses.8 A cost-analysis study of 4153 dermatology applicants from 2016 to 2021 found that the average application costs were $1759 per applicant during the pandemic, when virtual interviews replaced in-person ones, whereas costs were $8476 per applicant during periods with in-person interviews and no COVID-19 restrictions.2 However, we did not observe a significant change in application fees over our study period, likely because the pandemic did not affect application numbers. A cross-sectional analysis of dermatology applicants during the pandemic similarly reported reductions in application-related expenses during the period when interviews were conducted virtually,9 supporting the trend observed in our study. Overall, our findings taken together with other studies highlight the pandemic’s role in reducing expenses and underscore the potential for exploring additional cost-saving measures.

Implementing strategies to reduce these financial burdens—including virtual interviews, increasing student funding for away rotations, and limiting the number of applications individual students can submit—could help alleviate socioeconomic disparities. The new signaling system for residency programs aims to reduce the number of applications submitted, as applicants typically receive interviews only from the limited number of programs they signal, reducing overall application costs. However, our data from the Texas STAR database suggest that application numbers remained relatively stable from 2021 to 2024, indicating that, despite signaling, many applicants still may apply broadly in hopes of improving their chances in an increasingly competitive field. Although a definitive solution to reducing the financial burden on dermatology applicants remains elusive, these strategies can raise awareness and encourage important dialogues.

Limitations of our study include the voluntary nature of the Texas STAR survey, leading to potential voluntary response bias, as well as the small sample size. Students who choose to submit cost data may differ systematically from those who do not; for example, students who match may be more likely to report their outcomes, while those who do not match may be less likely to participate, potentially introducing selection bias. General awareness of the Texas STAR survey also may vary across institutions and among students, further limiting the number of students who participate. Finally, 2021 was the only presignaling year included, making it difficult to assess longer-term trends. Despite these limitations, the Texas STAR database remains a valuable resource for analyzing general residency application expenses and trends, as it offers comprehensive data from more than 100 medical schools and includes many variables.3

In conclusion, our study found that total dermatology residency application costs increased significantly from 2021 to 2024 (P<.05), making dermatology one of the most expensive specialties to apply to. This study sets the foundation for future survey-based research for applicants and program directors on strategies to alleviate financial burdens.

- Mansouri B, Walker GD, Mitchell J, et al. The cost of applying to dermatology residency: 2014 data estimates. J Am Acad Dermatol. 2016;74:754-756. doi:10.1016/j.jaad.2015.10.049

- Gorgy M, Shah S, Arbuiso S, et al. Comparison of cost changes due to the COVID-19 pandemic for dermatology residency applications in the USA. Clin Exp Dermatol. 2022;47:600-602. doi:10.1111/ced.15001

- UT Southwestern. Texas STAR. 2024. Accessed November 5, 2025. https://www.utsouthwestern.edu/education/medical-school/about-the-school/student-affairs/texas-star.html

- Baldwin K, Weidner Z, Ahn J, et al. Are away rotations critical for a successful match in orthopaedic surgery? Clin Orthop Relat Res. 2009;467:3340-3345. doi:10.1007/s11999-009-0920-9

- Yeh C, Desai AD, Wilson BN, et al. Cross-sectional analysis of scholarly work and mentor relationships in matched dermatology residency applicants. J Am Acad Dermatol. 2022;86:1437-1439. doi:10.1016/j.jaad.2021.06.861

- Gorouhi F, Alikhan A, Rezaei A, et al. Dermatology residency selection criteria with an emphasis on program characteristics: a national program director survey. Dermatol Res Pract. 2014;2014:692760. doi:10.1155/2014/692760

- Association of American Medical Colleges. Decoding geographic and setting preferences in residency selection. January 18, 2024. Accessed October 27, 2025. https://www.aamc.org/services/eras-institutions/geographic-preferences

- Association of American Medical Colleges. Virtual interviews: tips for program directors. Updated May 14, 2020. https://med.stanford.edu/content/dam/sm/gme/program_portal/pd/pd_meet/2019-2020/8-6-20-Virtual_Interview_Tips_for_Program_Directors_05142020.pdf

- Williams GE, Zimmerman JM, Wiggins CJ, et al. The indelible marks on dermatology: impacts of COVID-19 on dermatology residency match using the Texas STAR database. Clin Dermatol. 2023;41:215-218. doi:10.1016/j.clindermatol.2022.12.001
