
Health Care‐Associated Candidemia

In the United States, candida now accounts for between 8% and 12% of all catheter‐associated blood stream infections (BSIs).1 Additionally, crude mortality rates in candidemia exceed 40%, and a recent systematic review demonstrated that the attributable mortality of candidemia ranges from 5% to 71%.2 Candidal BSIs also affect resource utilization. These infections independently increase length of stay and result in substantial excess costs.3 Most cases of candidemia arise in noncritically ill patients and thus may be managed by hospitalists.

Historically, the majority of candidal BSIs were caused by C. albicans. Presently, C. albicans accounts for only half of all yeast BSIs, and approximately 20% of these infections are caused by organisms such as C. glabrata and C. krusei.4 These 2 organisms have either variable or no susceptibility to agents, such as fluconazole, empirically employed against yeast. Parallel with the evolution in microbiology of candidemia has been recognition that inappropriate treatment of these infections independently increases mortality.5 These factors underscore the need for the clinician to treat suspected candidal BSIs aggressively in order to avoid the risks associated with inappropriate treatment.

Efforts to enhance rates of initial appropriate therapy for bacterial infections have encompassed the realization that health care‐associated infections (HAIs) represent a distinct syndrome.6, 7 Traditionally, infections were considered either community‐acquired or nosocomial in origin. However, with the spread of health care delivery beyond the hospital, multiple studies indicate that patients may now present to the emergency department with infections caused by pathogens such as methicillin‐resistant Staphylococcus aureus (MRSA) and P. aeruginosa, organisms that were previously thought limited to hospital‐acquired processes.6‐9 Furthermore, hospitalists often encounter subjects presenting to the hospital with suspected BSIs who have an active and ongoing interaction with the healthcare system.

The importance of candida as a health care‐associated pathogen in BSI remains unclear. We hypothesized that health care‐associated candidemia (HCAC) represented a distinct clinical entity. To test this hypothesis, we conducted a retrospective analysis of all cases of candidal BSI at our institution over a 3‐year period.

Methods

We reviewed the records of all patients diagnosed with candidemia at our hospital between January 1, 2004 and December 31, 2006. Our institutional review board approved this study. We included adult patients diagnosed with candidemia. The diagnosis of candidemia was based on the isolation of yeast from the blood in at least one blood culture. Blood cultures were processed with the BACTEC 9240 Blood Culture System (Becton Dickinson Microbiology Systems, Sparks, MD). We excluded subjects who were admitted to the hospital within one month of a known diagnosis of candidemia.

We defined a nosocomial candidal BSI as the diagnosis of candidemia based on cultures drawn after the patient had been hospitalized for >48 hours. We considered HCAC to be present based on previously employed criteria for identifying HAI.6‐9 Specifically, among patients with candidemia based on blood cultures obtained within 48 hours of hospitalization, a patient had to meet at least 1 of the following criteria: (1) receipt of intravenous therapy outside the hospital, (2) end stage renal disease necessitating hemodialysis (ESRD requiring HD), (3) hospitalization within the previous 30 days, (4) residence in a nursing home or long term care facility, or (5) an invasive outpatient procedure within 30 days of presentation. Community‐acquired candidemia was restricted to patients whose index culture was drawn within 48 hours of admission but who failed to meet the definition for HCAC.
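The three-way definition above can be read as a simple decision rule. The following is a minimal sketch of that rule only, not the abstraction tool used in the study; the `Admission` record and its field names are hypothetical and were chosen for illustration.

```python
from dataclasses import dataclass


@dataclass
class Admission:
    """Hypothetical record; field names are illustrative, not from the study."""
    hours_to_index_culture: float            # admission to first positive culture
    outpatient_iv_therapy: bool = False      # criterion 1
    esrd_on_hemodialysis: bool = False       # criterion 2
    hospitalized_prior_30_days: bool = False # criterion 3
    long_term_care_resident: bool = False    # criterion 4
    outpatient_invasive_procedure_30_days: bool = False  # criterion 5


def classify_candidemia(adm: Admission) -> str:
    """Apply the 48-hour cutoff first, then the five health care exposures."""
    if adm.hours_to_index_culture > 48:
        return "nosocomial"
    exposures = (
        adm.outpatient_iv_therapy,
        adm.esrd_on_hemodialysis,
        adm.hospitalized_prior_30_days,
        adm.long_term_care_resident,
        adm.outpatient_invasive_procedure_30_days,
    )
    # At least 1 exposure is enough to qualify as health care-associated.
    if any(exposures):
        return "health care-associated"
    return "community-acquired"


print(classify_candidemia(Admission(12, esrd_on_hemodialysis=True)))
```

Checking the time cutoff before the exposure criteria mirrors the order of the definitions: a culture drawn after 48 hours is nosocomial regardless of prior health care contact.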

The prevalence of the various forms of candidemia served as our primary endpoint. In addition, we compared patients with respect to demographic factors, comorbidities, and severity of illness. Severity of illness was calculated based on the Acute Physiology and Chronic Health Evaluation (APACHE) II score. We further noted rates of immune suppression in the cohort and defined this as treatment with corticosteroids (10 mg of prednisone or equivalent daily for more than 30 consecutive days), other immunosuppressants (eg, methotrexate), or chemotherapy. Those with acquired immune deficiency syndrome (AIDS) or another immunodeficiency syndrome were defined as immunosuppressed as well. We examined the distribution of yeast species across the 3 forms of candidemia. Finally, we assessed the prevalence of fluconazole resistance. Fluconazole susceptibilities were determined based on Etest (AB BIODISK, Solna, Sweden). An isolate was considered resistant to fluconazole if the minimum inhibitory concentration was >64 μg/mL.

We compared categorical variables with the Fisher's exact test. Continuous variables were analyzed with either the Student's t‐test or a Mann‐Whitney test, as appropriate. All tests were 2‐tailed and a P value of 0.05 was assumed to represent statistical significance. Analyses were performed with Stata 9.1 (Stata Corp., College Station, TX).
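For readers who want to see how a 2‐tailed Fisher's exact test operates on a 2 × 2 table, the sketch below implements it with the Python standard library. It is an illustration rather than the Stata procedure used in the study, and the example counts (30 of 55 and 55 of 168) are an assumption, back‐calculated from the immunosuppression percentages reported in Table 1.

```python
from math import comb


def fisher_exact_two_sided(a: int, b: int, c: int, d: int) -> float:
    """Two-sided Fisher's exact P value for the table [[a, b], [c, d]].

    Sums the hypergeometric probabilities of every table with the same
    margins that is no more likely than the observed table.
    """
    row1, row2 = a + b, c + d
    col1 = a + c
    denom = comb(row1 + row2, col1)

    def prob(x: int) -> float:
        # Probability that the top-left cell equals x given fixed margins.
        return comb(row1, x) * comb(row2, col1 - x) / denom

    lowest = max(0, col1 - row2)
    highest = min(row1, col1)
    p_observed = prob(a)
    # Small relative tolerance guards against floating-point ties.
    return sum(
        prob(x)
        for x in range(lowest, highest + 1)
        if prob(x) <= p_observed * (1 + 1e-9)
    )


# Assumed counts: immunosuppressed vs not, HCAC (n = 55) vs nosocomial (n = 168).
p_value = fisher_exact_two_sided(30, 25, 55, 113)
print(f"P = {p_value:.4f}")
```

Other two‐sided conventions exist (for example, doubling the smaller one‐sided tail), so a value from this sketch may differ slightly from what a given statistical package reports.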

Results

The final cohort included 223 subjects. The mean age of the patients was 59.6 ± 15.7 years and 49% were male. Nearly one quarter (n = 55) fulfilled our criteria for HCAC. The remainder met the definition for nosocomial candidemia. We observed no cases of community‐acquired candidemia. Most (n = 33) patients with HCAC had exposure to more than 1 health care‐related source and many were initially admitted to the medicine/hospitalist service as opposed to the intensive care unit (ICU). The most common criterion leading to categorization as HCAC was recent hospitalization (n = 30, 54.5% of all HCAC). The median time from recent hospitalization to admission was 17 days (range, 5‐28 days). Other common reasons for classification as HCAC included ESRD requiring HD (30.9%), residence in a nursing home (25.5%), and undergoing an invasive outpatient procedure (16.4%). More than 75% of subjects with HCAC (n = 42) had central venous catheters in place at presentation. Between 2004 and 2006, the proportion of all candidemia due to HCAC increased from 20.9% to 26.9%, but this difference was not statistically significant.

Patients with HCAC were similar to those with nosocomial candidemia (Table 1). There was no difference in either severity of illness or the frequency of neutropenia. The prevalence of most comorbidities did not differ between those with nosocomial candidemia and persons with HCAC. However, immunosuppression was more prevalent among patients with HCAC (prevalence ratio, 1.67; 95% CI, 1.13‐3.08; P = 0.004). In part this finding is expected given that our definition of HCAC includes exposure to agents which may lead to immunosuppression, such as chemotherapy. Of patients with HCAC, the majority (n = 38, 69.1%) were initially admitted to the general medicine service and not to the ICU.
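The prevalence ratio quoted above is the prevalence of immunosuppression in HCAC divided by that in nosocomial candidemia. The short sketch below reproduces the arithmetic; the counts are an assumption, back‐calculated from the percentages in Table 1 (54.5% of 55 and 32.7% of 168), and the function name is illustrative.

```python
def prevalence_ratio(cases_a: int, n_a: int, cases_b: int, n_b: int) -> float:
    """Ratio of the prevalence in group A to the prevalence in group B."""
    prevalence_a = cases_a / n_a
    prevalence_b = cases_b / n_b
    return prevalence_a / prevalence_b


# Assumed counts derived from the reported percentages, not from raw study data.
hcac_immunosuppressed, hcac_total = 30, 55
nosocomial_immunosuppressed, nosocomial_total = 55, 168

ratio = prevalence_ratio(
    hcac_immunosuppressed, hcac_total,
    nosocomial_immunosuppressed, nosocomial_total,
)
print(f"Prevalence ratio: {ratio:.2f}")  # prints "Prevalence ratio: 1.67"
```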

| Characteristic | Healthcare‐Associated Candidemia (n = 55) | Nosocomial Candidemia (n = 168) | P |
|---|---|---|---|
| Demographics | | | |
| Age, mean ± SD | 61.0 ± 12.9 | 59.1 ± 16.6 | 0.45 |
| Male, % | 60.0 | 45.8 | 0.08 |
| Severity of illness | | | |
| APACHE II score, mean ± SD | 15.9 ± 6.8 | 14.6 ± 6.3 | 0.21 |
| Co‐morbid illnesses | | | |
| Diabetes mellitus, % | 36.4 | 32.7 | 0.87 |
| Malignancy, % | 36.4 | 22.6 | 0.04 |
| ESRD on HD, % | 30.9 | 23.2 | 0.25 |
| AIDS, % | 7.2 | 6.0 | 0.73 |
| Immunosuppressed, % | 54.5 | 32.7 | 0.004 |
| White cell status | | | |
| ANC, 1000/mm3, mean ± SD | 10.7 ± 7.2 | 12.3 ± 8.0 | 0.20 |
| Neutropenic, % | 2.0 | 2.2 | 0.91 |

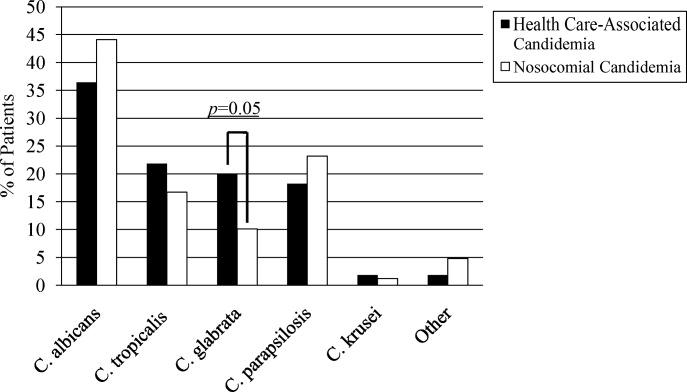

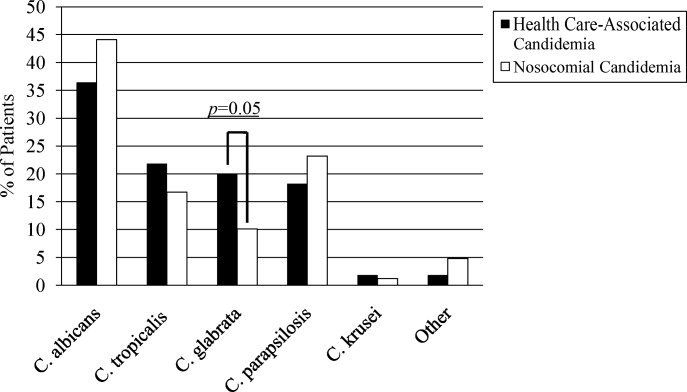

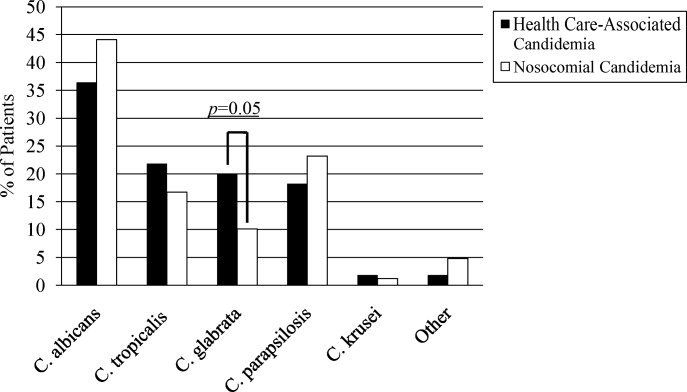

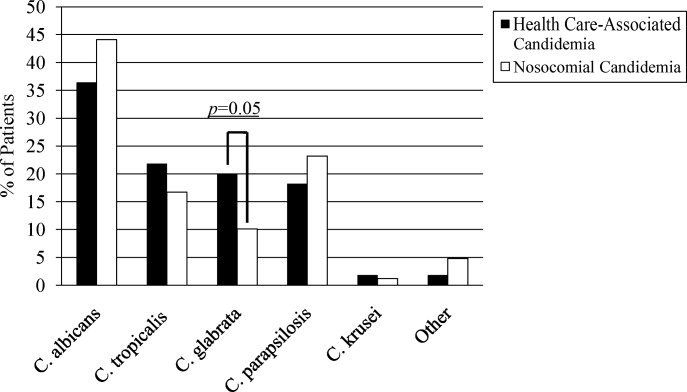

A variety of yeast species were recovered (Figure 1). Overall, nonalbicans candida were responsible for nearly 60% of all infections. Nonalbicans yeast were as likely to be recovered in HCAC as in nosocomial yeast infection. In both types of candidemia, C. krusei was a rare culprit, accounting for fewer than 2% of infections. C. glabrata, however, occurred more often in HCAC. Specifically, C. glabrata represented 1 in 5 cases of HCAC as opposed to approximately 10% of all nosocomial yeast BSIs (P = 0.05). In part reflecting this, fluconazole resistance was noted more often in HCAC (18.2% of patients vs. 7.7% among nosocomial candidemia, P = 0.036). There was no difference in the eventual diagnosis of deep‐seated yeast infections (ie, endocarditis, endophthalmitis, or osteomyelitis) between those with HCAC and persons with nosocomial candidemia (3 cases in each group).

Discussion

This analysis demonstrates that HCAC accounts for approximately a quarter of all candidemia. Our findings underscore that candidemia can present to the emergency department as an HAI and may potentially be initially cared for by a hospitalist. In addition, patients with HCAC and nosocomial candidemia share many attributes. Furthermore, nonalbicans yeast are as prevalent in HCAC as in nosocomial candidal infection. Nonetheless, there appear to be important differences in these syndromes. Immunosuppression appears to be more common in HCAC as does infection due to C. glabrata.

Others have explored the concept of HCAC. Kung et al.10 described community‐onset candidemia at a single center over a 10‐year period. They described 56 patients and noted that the majority had been recently hospitalized or had ongoing interaction with the healthcare system. Sofair et al.11 followed subjects presenting to emergency departments with candidemia. Overall, more than one‐third met criteria for community‐onset infection. In this analysis, though, Sofair et al.11 did not distinguish between community‐acquired processes and HCAC. From a population perspective, Chen et al.12 explored candidemia in Australia. Among over 1000 patients, they noted that 11.6% represented HCAC and, as we note, that select nonalbicans yeast occurred more often in HCAC than in nosocomial candidemia. Our project builds on and adds to these earlier efforts. First, we confirm the general observation that candida is no longer solely a nosocomial pathogen. Second, unlike several of these earlier reports, we examined a larger cohort of candidemia. Third, beyond the observations of Chen et al.,12 we note that currently, the proportion of candidal BSI classified as HCAC relative to nosocomial candidemia seems larger than reported in the past. Finally, a unique aspect of our report is that we employed explicit criteria to define HAI.

Our findings have several implications. First, hospitalists and emergency department physicians, along with others, must remain vigilant when approaching patients presenting to the hospital with signs and symptoms of BSI and multiple risk factors for candidal BSI. The fact that the patient has not been hospitalized should not preclude consideration of and treatment for candidemia. The current evidence does not support broad, empiric use of antifungal agents, as this would lead to excessive costs and potentially expose many patients to unnecessary antifungal coverage. On the other hand, given the association between delayed antifungal therapy and the risk for death in candidemia, failure to consider this infection in at‐risk subjects may have adverse consequences. Second, our observations emphasize the need for clinical risk stratification schemes and rapid diagnostic modalities. Such tools are urgently needed if physicians hope to target antifungal therapies more appropriately. Third, if the clinician opts to initiate therapy for possible HCAC, reliance on fluconazole alone may prove inadequate. As the generalizability of our conclusions is necessarily limited, we recommend that infection control practitioners review local epidemiologic data regarding HCAC so that physicians can have the best available guidance.

Our study has several important limitations. Its retrospective nature exposes it to several forms of bias. The single center design limits the generalizability of our findings. Prospective, multicenter studies are needed to validate our results. Additionally, no universally accepted criteria exist to define HAI syndromes. Nonetheless, the criteria we employed have been used by others. We also lacked data on exposure to recent broad spectrum antimicrobials. Selection pressure via exposure to such agents is a risk factor for candidemia, and without these data we cannot gauge its impact on our findings. Finally, we cannot control for the possibility that some patients were miscategorized. This could have arisen because of either (1) limitations inherent in the definition of HCAC or (2) a delay in the clinician's decision to obtain blood cultures. Some patients classified as nosocomial may actually have had HCAC or community‐acquired disease, but for some reason blood cultures were not drawn at the time of admission and were deferred until later. Although this is a difficult issue to address in any study of the epidemiology of infection, this misclassification bias must be considered a significant concern.

In summary, candidemia can be the cause of BSI presenting to the hospital. Moreover, HCAC represents a significant proportion of all candidemia. Although patients with HCAC and nosocomial candidemia share select characteristics, there appear to be some differences in the microbiology of these syndromes.

1. CDC. National Nosocomial Infections Surveillance (NNIS) System report, data summary from January 1990‐May 1999, issued June 1999. Am J Infect Control. 1999;27:520–532.
2. Attributable mortality of candidemia: a systematic review of matched cohort and case‐control studies. Eur J Clin Microbiol Infect Dis. 2006;25:419–425.
3. Excess mortality, hospital stay, and cost due to candidemia: a case‐control study using data from population‐based candidemia surveillance. Infect Control Hosp Epidemiol. 2005;26:540–547.
4. Shifting patterns in the epidemiology of nosocomial Candida infections. Chest. 2003;123:500S–503S.
5. Delaying the empiric treatment of candida bloodstream infection until positive blood culture results are obtained: a potential risk factor for hospital mortality. Antimicrob Agents Chemother. 2005;49:3640–3645.
6. Healthcare‐associated bloodstream infection: A distinct entity? Insights from a large U.S. database. Crit Care Med. 2006;34:2588–2595.
7. Health care‐associated bloodstream infections in adults: a reason to change the accepted definition of community‐acquired infections. Ann Intern Med. 2002;137:791–797.
8. Epidemiology of healthcare‐associated pneumonia (HCAP). Semin Respir Crit Care Med. 2009;30:10–15.
9. Health care associated pneumonia and community‐acquired pneumonia: a single‐center experience. Antimicrob Agents Chemother. 2007;51:3568–3573.
10. Community‐onset candidemia at a university hospital, 1995‐2005. J Microbiol Immunol Infect. 2007;40:355–363.
11. Epidemiology of community‐onset candidemia in Connecticut and Maryland. Clin Infect Dis. 2006;43:32–39.
12. Active surveillance for candidemia, Australia. Emerg Infect Dis. 2006;12:1508–1516.

In the United States, candida now accounts for between 8% and 12% of all catheter‐associated blood stream infections (BSIs).1 Additionally, crude mortality rates in candidemia exceed 40%, and a recent systematic review demonstrated that the attributable mortality of candidemia ranges from 5% to 71%.2 Candidal BSIs also affect resource utilization. These infections independently increase length of stay and result in substantial excess costs.3 Most cases of candidemia arise in noncritically ill patients and thus may be managed by hospitalists.

Historically, the majority of candidal BSIs were caused by C. albicans. Presently, C. albicans accounts for only half of all yeast BSIs, and approximately 20% of these infections are caused by organisms such as C. glabrata and C. krusei.4 These 2 organisms have either variable or no susceptibility to agents, such as fluconazole, empirically employed against yeast. Parallel with the evolution in microbiology of candidemia has been recognition that inappropriate treatment of these infections independently increases mortality.5 These factors underscore the need for the clinician to treat suspected candidal BSIs aggressively in order to avoid the risks associated with inappropriate treatment.

Efforts to enhance rates of initial appropriate therapy for bacterial infections have encompassed the realization that health care‐associated infections (HAIs) represent a distinct syndrome.6, 7 Traditionally, infections were considered either community‐acquired or nosocomial in origin. However, with the spread of health care delivery beyond the hospital, multiple studies indicate that patients may now present to the emergency department with infections caused by pathogens such as Methicillin‐resistant Staphylococcus aureus (MRSA) and P. aeruginosaorganisms that were previously thought limited to hospital‐acquired processes.69 Furthermore, hospitalists often encounter subjects presenting to the hospital with suspected BSIs who have an active and ongoing interaction with the healthcare system.

The importance of candida as a health care‐associated pathogen in BSI remains unclear. We hypothesized that health care‐associated candidemia (HCAC) represented a distinct clinical entity. In order to confirm our theory, we conducted a retrospective analysis of all cases of candidal BSI at our institution over a 3‐year period.

Methods

We reviewed the records of all patients diagnosed with candidemia at our hospital between January 1, 2004 and December 31, 2006. Our institutional review board approved this study. We included adult patients diagnosed with candidemia. The diagnosis of candidemia was based on the isolation of yeast from the blood in at least one blood culture. We employ the BACTEC 9240 blood Culture System (Becton Dickinson Microbiology Systems, Sparks, MD). We excluded subjects who were admitted to the hospital within one month of a known diagnosis of candidemia.

We defined a nosocomial candidal BSI as the diagnosis of candidemia based on cultures drawn after the patient had been hospitalized for >48 hours. We considered HCAC to be present based on previously employed criteria for identifying HAI.69 Specifically, for patients with candidemia based on blood cultures obtained within 48 hours of hospitalization, a patient had to meet at least 1 of the following criteria: (1) receipt of intravenous therapy outside the hospital, (2) end stage renal disease necessitating hemodialysis (ESRD requiring HD), (3) hospitalization within previous 30 days, (4) residence in a nursing home or long term care facility, or (5) underwent an invasive procedure as an outpatient within 30 days of presentation. Community‐acquired candidemia was restricted to patients whose index culture was drawn within 48 hours of admission but who failed to meet the definition for HCAC.

The prevalence of the various forms of candidemia served as our primary endpoint. In addition, we compared patients with respect to demographic factors, comorbidities, and severity of illness. Severity of illness was calculated based on the Acute Physiology and Chronic Health Evaluation (APACHE) II score. We further noted rates of immune suppression in the cohort and defined this as treatment with corticosteroids (10 mg of prednisone or equivalent daily for more than 30 consecutive days), other immunosuppressants (eg, methotrexate), or chemotherapy. Those with acquired immune deficiency syndrome (AIDS) or another immunodeficiency syndrome were defined as immunosuppressed as well. We examined the distribution of yeast species across the 3 forms of candidemia. Finally, we assessed the prevalence of fluconazole resistance. Fluconazole susceptibilities were determined based on Etest (AB BIODISK, Solna, Sweden). An isolate was considered resistant to fluconazole if the minimum inhibitory concentration was >64 g/mL.

We compared categorical variables with the Fisher's exact test. Continuous variables were analyzed with either the Student's t‐test or a Mann‐Whitney test, as appropriate. All tests were 2 tailed and a P value of 0.05 was assumed to represent statistical significance. Analyses were performed with Stata 9.1 (Stata Corp., College Station, TX).

Results

The final cohort included 223 subjects. The mean age of the patients was 59.6 15.7 years and 49% were male. Nearly one quarter (n = 55) fulfilled our criteria for HCAC. The remainder met the definition for nosocomial candidemia. We observed no cases of community‐acquired candidemia. Most (n = 33) patients with HCAC had exposure to more than 1 health care‐related source and many were initially admitted to the medicine/hospitalist service as opposed to the intensive care unit (ICU). The most common criteria leading to categorization as HCAC was recent hospitalization (n = 30, 54.5% of all HCAC). The median time from recent hospitalization to admission was 17 days (Range: 5‐28 days). Other common reasons for classification as HCAC included ESRD requiring HD (30.9%), residence in a nursing home (25.5%), and undergoing an invasive outpatient procedure (16.4%). More than 75% of subjects with HCAC (n = 42) had central venous catheters in place at presentation. Between 2004 and 2006, the proportion of all candidemia due to HCAC increased from 20.9% to 26.9%, but this difference was not statistically significant.

Patients with HCAC were similar to those with nosocomial candidemia (Table 1). There was no difference in either severity of illness or the frequency of neutropenia. The prevalence of most comorbidities did not differ between those with nosocomial candidemia and persons with HCAC. However, immunosuppression was more prevalent among patients with HCAC (prevalence ratio, 1.67; 95% CI, 1.13‐3.08; P = 0.004). In part this finding is expected given that our definition of HCAC includes exposure to agents which may lead to immunosuppression, such as chemotherapy. Of patients with HCAC, the majority (n = 38, 69.1%) were initially admitted to the general medicine service and not to the ICU.

| Characteristic | Healthcare‐Associated Candidemia (n = 55) | Nosocomial Candidemia (n = 168) | P |

|---|---|---|---|

| |||

| Demographics | |||

| Age, mean SD | 61.0 12.9 | 59.1 16.6 | 0.45 |

| Male, % | 60.0 | 45.8 | 0.08 |

| Severity of illness | |||

| APACHE II score, mean SD | 15.9 6.8 | 14.6 6.3 | 0.21 |

| Co‐morbid illnesses | |||

| Diabetes mellitus, % | 36.4 | 32.7 | 0.87 |

| Malignancy, % | 36.4 | 22.6 | 0.04 |

| ESRD on HD, % | 30.9 | 23.2 | 0.25 |

| AIDS, % | 7.2 | 6.0 | 0.73 |

| Immunosupressed, % | 54.5 | 32.7 | 0.004 |

| White cell status | |||

| ANC, 1000/mm3, mean SD | 10.7 7.2 | 12.3 8.0 | 0.20 |

| Neutropenic, % | 2.0 | 2.2 | 0.91 |

A multitude of various yeast species were recovered (Figure 1). Overall, nonalbicans candida were responsible for nearly 60% of all infections. Nonalbicans yeast were as likely to be recovered in HCAC as in nosocomial yeast infection. Among both types of Candidemia, C. krusei was a rare culprit accounting for fewer than 2% of infections. C. glabrata, however, occurred more often in HCAC. Specifically, C. glabrata represented 1 in 5 cases of HCAC as opposed to approximately 10% of all nosocomial yeast BSIs (P = 0.05). In part reflecting this, fluconazole resistance was noted more often in HCAC (18.2% of patients vs. 7.7% among nosocomial candidemia, P = 0.036). There was no difference in the eventual diagnosis of deep‐seeded yeast infections (ie, endocarditis, endopthlamitis, or osteomyelitis) between those with HCAC and persons with nosocomial candidemia (3 cases in each group).

Discussion

This analysis demonstrates that HCAC accounts for approximately a quarter of all candidemia. Our findings underscore that candidemia can present to the emergency department as an HAI and may potentially be initially cared for by a hospitalist. In addition, patients with HCAC and nosocomial candidemia share many attributes. Furthermore, nonalbicans yeast are as prevalent in HCAC as in nosocomial candidal infection. Nonetheless, there appear to be important differences in these syndromes. Immunosuppression appears to be more common in HCAC as does infection due to C. glabrata.

Others have explored the concept of HCAC. Kung et al.10 described community‐onset candidemia at a single center over a 10‐year period. They described 56 patients and noted that the majority had been recently hospitalized or had ongoing interaction with the healthcare system. Sofair et al.11 followed subjects presenting to emergency departments with candidemia. Overall, more than one‐third met criteria for community‐onset infection. In this analysis, though, Sofair et al.11 did not distinguish between community‐acquired processes and HCAC. From a population perspective, Chen et al.12 explored candidemia in Australia. Among over 1000 patients, the noted that 11.6% represented HCAC and, as we note, that select nonalbicans yeast occurred more often in HCAC than in nosocomial candidemia. Our project builds on and adds to these earlier efforts. First, we confirm the general observation that candidemia is no longer solely a nosocomial pathogen. Second, unlike several of these earlier reports we examined a larger cohort of candidemia. Third, beyond the observations of Chen et al.,12 we note that currently, the proportion of Candidal BSI classified as HACA relative to nosocomial candidemia seems larger than reported in the past. Finally, a unique aspect of our report is that we employed express criteria to define HAI.

Our findings have several implications. First, hospitalists and emergency department physicians, along with others, must remain vigilant when approaching patients presenting to the hospital with signs and symptoms of BSI and multiple risk factors for candidal BSI. The fact that the patient has not been hospitalized should not preclude consideration of and treatment for candidemia. The current evidence does not support broad, empiric use of antifungal agents, as this would lead to excessive costs and potentially expose many patients to unnecessary antifungal coverage. On the other hand, given the association between delayed antifungal therapy and the risk for death in candidemia, failure to consider this infection in at‐risk subjects may have adverse consequences. Second, our observations emphasize the need for clinical risk stratification schemes and rapid diagnostic modalities. Such tools are urgently needed if physicians hope to target antifungal therapies more appropriately. Third, if the clinician opts to initiate therapy for possible HCAC, reliance on fluconazole alone may prove inadequate. As the generalizability of our conclusions is necessarily limited, we recommend that infection control practitioners review local epidemiologic data regarding HCAC so that physicians can have the best available guidance.

Our study has several important limitations. Its retrospective nature exposes it to several forms of bias. The single center design limits the generalizability of our findings. Prospective, multicenter studies are needed to validate our results. Additionally, no universally accepted criteria exist to define HAI syndromes. Nonetheless, the criteria we employed have been used by others. We also lacked data on exposure to recent broad spectrum antimicrobials. Selection pressure via exposure to such agents is a risk factor for candidemia and without this data we cannot gauge the impact of this on our findings. Finally, we cannot control for the possibility that some patients were miscategorized. This could have arisen because of: (1) either limitations inherent in the definition of HCAC or (2) because the clinician delayed the decision to obtain blood cultures. Some patients classified as nosocomial may actually have had HCAC or community‐acquired diseasebut for some reason blood cultures were not drawn at time of admission but were deferred until later. Although a difficult issue to address in any study of the epidemiology of infection, the significance of this misclassification bias must be considered a significant concern.

In summary, Candidemia can be the cause of BSI presenting to the hospital. Moreover, HCAC represents a significant proportion of all Candidemia. Although patients with HCAC and nosocomial candidemia share select characteristics, there appear to be some differences in the microbiology of these syndromes.

In the United States, candida now accounts for between 8% and 12% of all catheter‐associated blood stream infections (BSIs).1 Additionally, crude mortality rates in candidemia exceed 40%, and a recent systematic review demonstrated that the attributable mortality of candidemia ranges from 5% to 71%.2 Candidal BSIs also affect resource utilization. These infections independently increase length of stay and result in substantial excess costs.3 Most cases of candidemia arise in noncritically ill patients and thus may be managed by hospitalists.

Historically, the majority of candidal BSIs were caused by C. albicans. Presently, C. albicans accounts for only half of all yeast BSIs, and approximately 20% of these infections are caused by organisms such as C. glabrata and C. krusei.4 These 2 organisms have either variable or no susceptibility to agents, such as fluconazole, empirically employed against yeast. Parallel with the evolution in microbiology of candidemia has been recognition that inappropriate treatment of these infections independently increases mortality.5 These factors underscore the need for the clinician to treat suspected candidal BSIs aggressively in order to avoid the risks associated with inappropriate treatment.

Efforts to enhance rates of initial appropriate therapy for bacterial infections have encompassed the realization that health care‐associated infections (HAIs) represent a distinct syndrome.6, 7 Traditionally, infections were considered either community‐acquired or nosocomial in origin. However, with the spread of health care delivery beyond the hospital, multiple studies indicate that patients may now present to the emergency department with infections caused by pathogens such as Methicillin‐resistant Staphylococcus aureus (MRSA) and P. aeruginosaorganisms that were previously thought limited to hospital‐acquired processes.69 Furthermore, hospitalists often encounter subjects presenting to the hospital with suspected BSIs who have an active and ongoing interaction with the healthcare system.

The importance of candida as a health care‐associated pathogen in BSI remains unclear. We hypothesized that health care‐associated candidemia (HCAC) represented a distinct clinical entity. In order to confirm our theory, we conducted a retrospective analysis of all cases of candidal BSI at our institution over a 3‐year period.

Methods

We reviewed the records of all patients diagnosed with candidemia at our hospital between January 1, 2004 and December 31, 2006. Our institutional review board approved this study. We included adult patients diagnosed with candidemia. The diagnosis of candidemia was based on the isolation of yeast from the blood in at least one blood culture. We employ the BACTEC 9240 blood Culture System (Becton Dickinson Microbiology Systems, Sparks, MD). We excluded subjects who were admitted to the hospital within one month of a known diagnosis of candidemia.

We defined a nosocomial candidal BSI as the diagnosis of candidemia based on cultures drawn after the patient had been hospitalized for >48 hours. We considered HCAC to be present based on previously employed criteria for identifying HAI.69 Specifically, for patients with candidemia based on blood cultures obtained within 48 hours of hospitalization, a patient had to meet at least 1 of the following criteria: (1) receipt of intravenous therapy outside the hospital, (2) end stage renal disease necessitating hemodialysis (ESRD requiring HD), (3) hospitalization within previous 30 days, (4) residence in a nursing home or long term care facility, or (5) underwent an invasive procedure as an outpatient within 30 days of presentation. Community‐acquired candidemia was restricted to patients whose index culture was drawn within 48 hours of admission but who failed to meet the definition for HCAC.

The prevalence of the various forms of candidemia served as our primary endpoint. In addition, we compared patients with respect to demographic factors, comorbidities, and severity of illness. Severity of illness was calculated based on the Acute Physiology and Chronic Health Evaluation (APACHE) II score. We further noted rates of immune suppression in the cohort and defined this as treatment with corticosteroids (10 mg of prednisone or equivalent daily for more than 30 consecutive days), other immunosuppressants (eg, methotrexate), or chemotherapy. Those with acquired immune deficiency syndrome (AIDS) or another immunodeficiency syndrome were defined as immunosuppressed as well. We examined the distribution of yeast species across the 3 forms of candidemia. Finally, we assessed the prevalence of fluconazole resistance. Fluconazole susceptibilities were determined based on Etest (AB BIODISK, Solna, Sweden). An isolate was considered resistant to fluconazole if the minimum inhibitory concentration was >64 μg/mL.

We compared categorical variables with Fisher's exact test. Continuous variables were analyzed with either Student's t‐test or the Mann‐Whitney test, as appropriate. All tests were 2‐tailed and a P value of 0.05 was assumed to represent statistical significance. Analyses were performed with Stata 9.1 (Stata Corp., College Station, TX).

Results

The final cohort included 223 subjects. The mean age of the patients was 59.6 ± 15.7 years and 49% were male. Nearly one quarter (n = 55) fulfilled our criteria for HCAC. The remainder met the definition for nosocomial candidemia. We observed no cases of community‐acquired candidemia. Most patients with HCAC (n = 33) had exposure to more than 1 health care‐related source, and many were initially admitted to the medicine/hospitalist service as opposed to the intensive care unit (ICU). The most common criterion leading to categorization as HCAC was recent hospitalization (n = 30, 54.5% of all HCAC). The median time from recent hospitalization to admission was 17 days (range, 5‐28 days). Other common reasons for classification as HCAC included ESRD requiring HD (30.9%), residence in a nursing home (25.5%), and undergoing an invasive outpatient procedure (16.4%). More than 75% of subjects with HCAC (n = 42) had central venous catheters in place at presentation. Between 2004 and 2006, the proportion of all candidemia due to HCAC increased from 20.9% to 26.9%, but this difference was not statistically significant.

Patients with HCAC were similar to those with nosocomial candidemia (Table 1). There was no difference in either severity of illness or the frequency of neutropenia. The prevalence of most comorbidities did not differ between those with nosocomial candidemia and those with HCAC. However, immunosuppression was more prevalent among patients with HCAC (prevalence ratio, 1.67; 95% CI, 1.13‐3.08; P = 0.004). In part, this finding is expected given that our definition of HCAC includes exposure to agents that may lead to immunosuppression, such as chemotherapy. Of patients with HCAC, the majority (n = 38, 69.1%) were initially admitted to the general medicine service and not to the ICU.

| Characteristic | Healthcare‐Associated Candidemia (n = 55) | Nosocomial Candidemia (n = 168) | P |
|---|---|---|---|
| Demographics | | | |
| Age, mean ± SD | 61.0 ± 12.9 | 59.1 ± 16.6 | 0.45 |
| Male, % | 60.0 | 45.8 | 0.08 |
| Severity of illness | | | |
| APACHE II score, mean ± SD | 15.9 ± 6.8 | 14.6 ± 6.3 | 0.21 |
| Co‐morbid illnesses | | | |
| Diabetes mellitus, % | 36.4 | 32.7 | 0.87 |
| Malignancy, % | 36.4 | 22.6 | 0.04 |
| ESRD on HD, % | 30.9 | 23.2 | 0.25 |
| AIDS, % | 7.2 | 6.0 | 0.73 |
| Immunosuppressed, % | 54.5 | 32.7 | 0.004 |
| White cell status | | | |
| ANC, 1000/mm3, mean ± SD | 10.7 ± 7.2 | 12.3 ± 8.0 | 0.20 |
| Neutropenic, % | 2.0 | 2.2 | 0.91 |
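As a worked check on the immunosuppression comparison in Table 1, the sketch below recomputes the prevalence ratio and a 2‐tailed Fisher's exact P value in Python. It assumes cell counts back‐calculated from the reported percentages (30 of 55 for HCAC and 55 of 168 for nosocomial candidemia); these counts are a reconstruction rather than figures reported in the table, and the function names are illustrative. The original analysis was performed in Stata, so small differences from the published P value are possible.

```python
from math import comb


def prevalence_ratio(a, n1, c, n2):
    """Ratio of the prevalence a/n1 to the prevalence c/n2."""
    return (a / n1) / (c / n2)


def fisher_exact_two_tailed(a, b, c, d):
    """2-tailed Fisher's exact P value for the 2x2 table [[a, b], [c, d]]."""
    row1, row2, col1 = a + b, c + d, a + c
    total_tables = comb(row1 + row2, col1)

    def prob(x):
        # Hypergeometric probability of x "yes" subjects in row 1.
        return comb(row1, x) * comb(row2, col1 - x) / total_tables

    p_observed = prob(a)
    lowest, highest = max(0, col1 - row2), min(row1, col1)
    # Sum every table that is no more likely than the observed one.
    return sum(
        prob(x)
        for x in range(lowest, highest + 1)
        if prob(x) <= p_observed * (1 + 1e-9)
    )


# Assumed counts (immunosuppressed yes/no), reconstructed from percentages.
hcac_yes, hcac_no = 30, 25      # HCAC, n = 55
noso_yes, noso_no = 55, 113     # nosocomial, n = 168

pr = prevalence_ratio(hcac_yes, 55, noso_yes, 168)
p_value = fisher_exact_two_tailed(hcac_yes, hcac_no, noso_yes, noso_no)
print(f"prevalence ratio = {pr:.2f}, P = {p_value:.3f}")
```

With these assumed counts the prevalence ratio rounds to 1.67, which agrees with the value reported in the text.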

A variety of yeast species were recovered (Figure 1). Overall, nonalbicans candida were responsible for nearly 60% of all infections. Nonalbicans yeast were as likely to be recovered in HCAC as in nosocomial yeast infection. In both types of candidemia, C. krusei was a rare culprit, accounting for fewer than 2% of infections. C. glabrata, however, occurred more often in HCAC. Specifically, C. glabrata represented 1 in 5 cases of HCAC as opposed to approximately 10% of all nosocomial yeast BSIs (P = 0.05). In part reflecting this, fluconazole resistance was noted more often in HCAC (18.2% of patients vs. 7.7% among nosocomial candidemia, P = 0.036). There was no difference in the eventual diagnosis of deep‐seated yeast infections (ie, endocarditis, endophthalmitis, or osteomyelitis) between those with HCAC and those with nosocomial candidemia (3 cases in each group).

Discussion

This analysis demonstrates that HCAC accounts for approximately a quarter of all candidemia. Our findings underscore that candidemia can present to the emergency department as an HAI and may potentially be initially cared for by a hospitalist. In addition, patients with HCAC and nosocomial candidemia share many attributes. Furthermore, nonalbicans yeast are as prevalent in HCAC as in nosocomial candidal infection. Nonetheless, there appear to be important differences in these syndromes. Immunosuppression appears to be more common in HCAC as does infection due to C. glabrata.

Others have explored the concept of HCAC. Kung et al.10 described community‐onset candidemia at a single center over a 10‐year period. They described 56 patients and noted that the majority had been recently hospitalized or had ongoing interaction with the health care system. Sofair et al.11 followed subjects presenting to emergency departments with candidemia. Overall, more than one‐third met criteria for community‐onset infection. In this analysis, though, Sofair et al.11 did not distinguish between community‐acquired processes and HCAC. From a population perspective, Chen et al.12 explored candidemia in Australia. Among over 1000 patients, they noted that 11.6% represented HCAC and, as we note, that select nonalbicans yeast occurred more often in HCAC than in nosocomial candidemia. Our project builds on and adds to these earlier efforts. First, we confirm the general observation that candida is no longer solely a nosocomial pathogen. Second, unlike several of these earlier reports, we examined a larger cohort of candidemia. Third, beyond the observations of Chen et al.,12 we note that currently, the proportion of candidal BSI classified as HCAC relative to nosocomial candidemia seems larger than reported in the past. Finally, a unique aspect of our report is that we employed explicit criteria to define HAI.

Our findings have several implications. First, hospitalists and emergency department physicians, along with others, must remain vigilant when approaching patients presenting to the hospital with signs and symptoms of BSI and multiple risk factors for candidal BSI. The fact that the patient has not been hospitalized should not preclude consideration of and treatment for candidemia. The current evidence does not support broad, empiric use of antifungal agents, as this would lead to excessive costs and potentially expose many patients to unnecessary antifungal coverage. On the other hand, given the association between delayed antifungal therapy and the risk for death in candidemia, failure to consider this infection in at‐risk subjects may have adverse consequences. Second, our observations emphasize the need for clinical risk stratification schemes and rapid diagnostic modalities. Such tools are urgently needed if physicians hope to target antifungal therapies more appropriately. Third, if the clinician opts to initiate therapy for possible HCAC, reliance on fluconazole alone may prove inadequate. As the generalizability of our conclusions is necessarily limited, we recommend that infection control practitioners review local epidemiologic data regarding HCAC so that physicians can have the best available guidance.

Our study has several important limitations. Its retrospective nature exposes it to several forms of bias. The single‐center design limits the generalizability of our findings. Prospective, multicenter studies are needed to validate our results. Additionally, no universally accepted criteria exist to define HAI syndromes. Nonetheless, the criteria we employed have been used by others. We also lacked data on recent exposure to broad‐spectrum antimicrobials. Selection pressure via exposure to such agents is a risk factor for candidemia, and without these data we cannot gauge its impact on our findings. Finally, we cannot control for the possibility that some patients were miscategorized. This could have arisen because of (1) limitations inherent in the definition of HCAC or (2) a delay in the clinician's decision to obtain blood cultures. Some patients classified as nosocomial may actually have had HCAC or community‐acquired disease, but for some reason blood cultures were not drawn at the time of admission and were deferred until later. Although this is a difficult issue to address in any study of the epidemiology of infection, this misclassification bias must be considered a significant concern.

In summary, candidemia can be the cause of BSI presenting to the hospital. Moreover, HCAC represents a significant proportion of all candidemia. Although patients with HCAC and nosocomial candidemia share select characteristics, there appear to be some differences in the microbiology of these syndromes.

- CDC. National Nosocomial Infections Surveillance (NNIS) System report, data summary from January 1990–May 1999, issued June 1999. Am J Infect Control. 1999;27:520–532.
- Attributable mortality of candidemia: a systematic review of matched cohort and case‐control studies. Eur J Clin Microbiol Infect Dis. 2006;25:419–425.
- Excess mortality, hospital stay, and cost due to candidemia: a case‐control study using data from population‐based candidemia surveillance. Infect Control Hosp Epidemiol. 2005;26:540–547.
- Shifting patterns in the epidemiology of nosocomial Candida infections. Chest. 2003;123:500S–503S.
- Delaying the empiric treatment of candida bloodstream infection until positive blood culture results are obtained: a potential risk factor for hospital mortality. Antimicrob Agents Chemother. 2005;49:3640–3645.
- Healthcare‐associated bloodstream infection: A distinct entity? Insights from a large U.S. database. Crit Care Med. 2006;34:2588–2595.
- Health care–associated bloodstream infections in adults: a reason to change the accepted definition of community‐acquired infections. Ann Intern Med. 2002;137:791–797.
- Epidemiology of healthcare‐associated pneumonia (HCAP). Semin Respir Crit Care Med. 2009;30:10–15.
- Health care associated pneumonia and community‐acquired pneumonia: a single‐center experience. Antimicrob Agents Chemother. 2007;51:3568–3573.
- Community‐onset candidemia at a university hospital, 1995‐2005. J Microbiol Immunol Infect. 2007;40:355–363.
- Epidemiology of community‐onset candidemia in Connecticut and Maryland. Clin Infect Dis. 2006;43:32–39.
- Active surveillance for candidemia, Australia. Emerg Infect Dis. 2006;12:1508–1516.

Myth: LBBB Masks Hyperkalemia

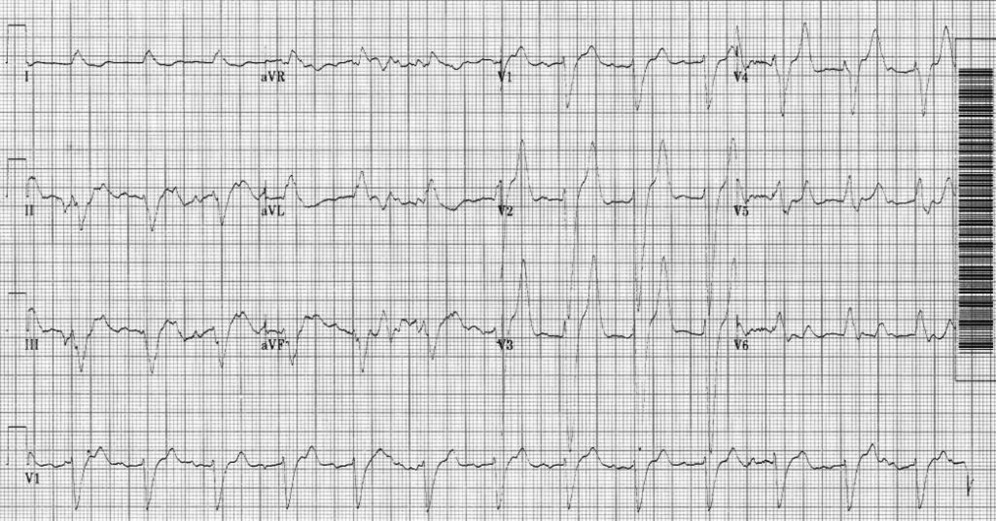

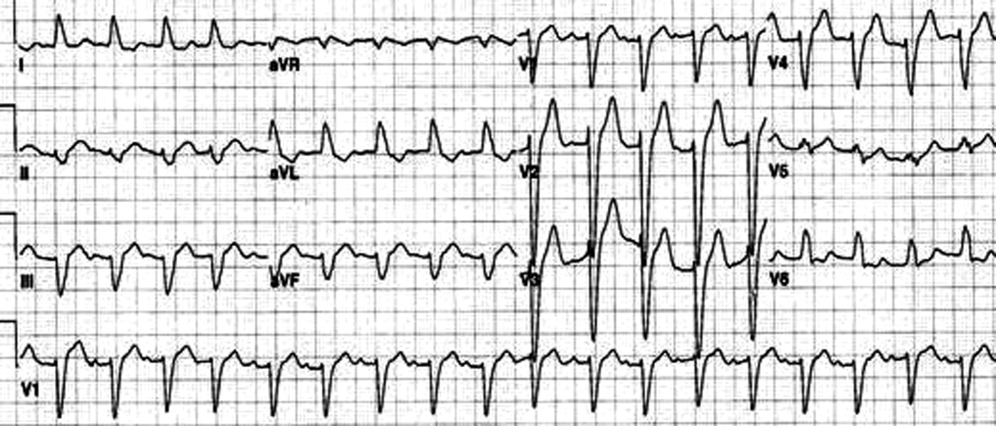

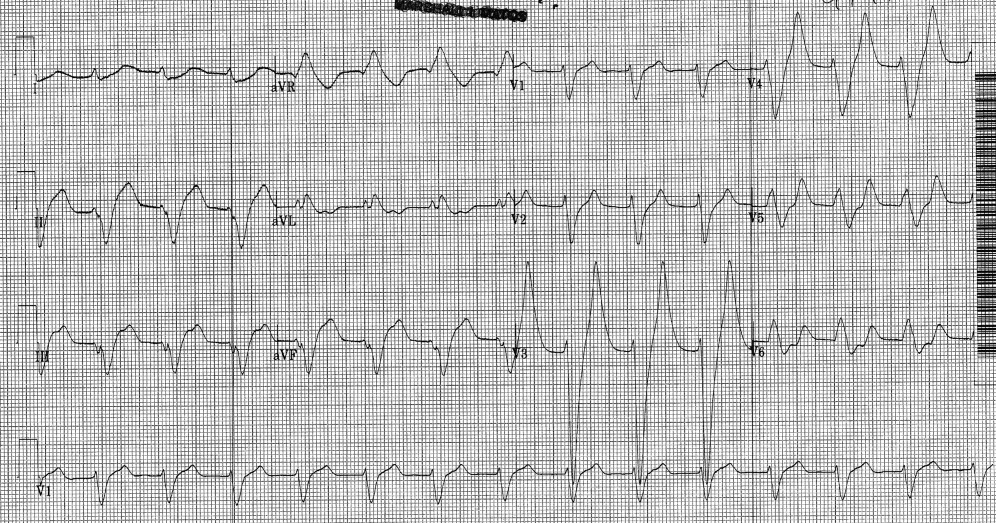

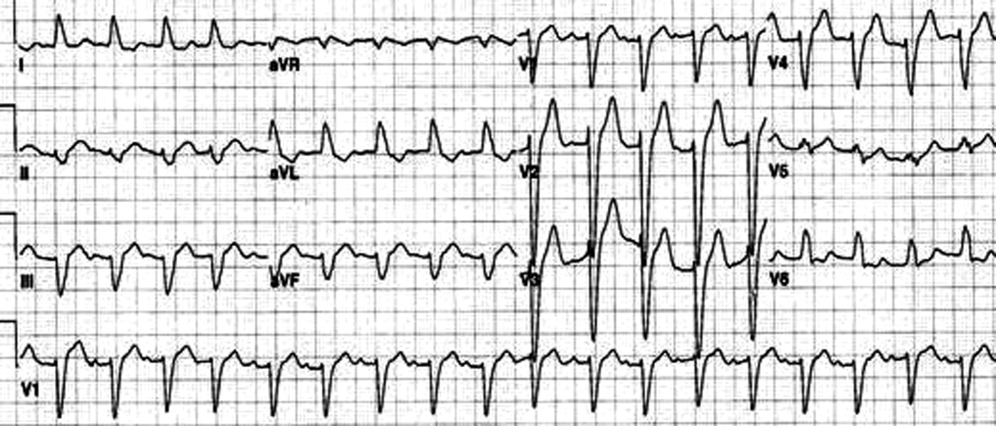

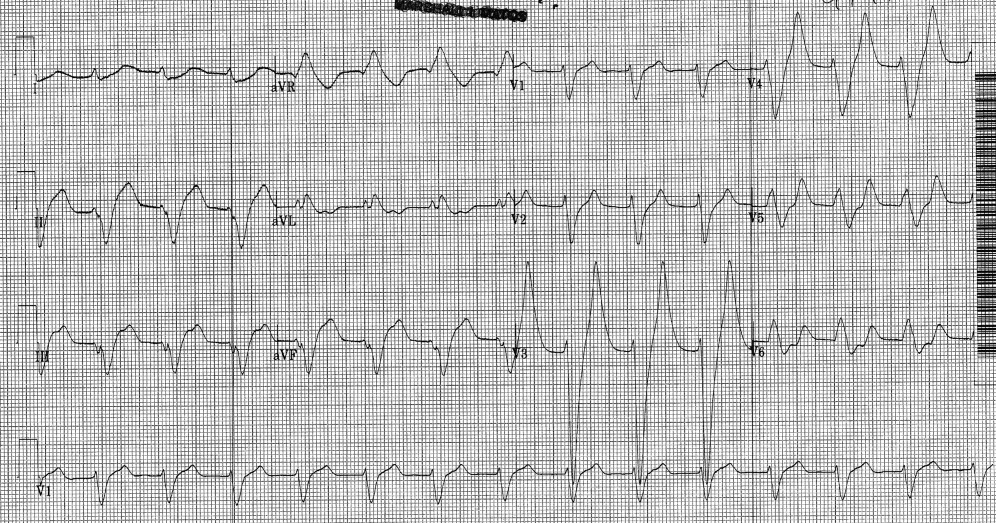

An 80‐year‐old man with end‐stage renal disease requiring maintenance hemodialysis presented to the emergency department (ED) with fever, generalized fatigue, and lethargy. The presenting electrocardiogram (ECG) revealed normal sinus rhythm at 82 beats per minute (bpm), a prolonged PR interval, and complete left bundle branch block (LBBB) with a wide QRS interval and tall T waves (Figure 1). A baseline ECG obtained 3 months earlier also showed LBBB (Figure 2). In view of the underlying LBBB, the changes in the presenting ECG were ignored.

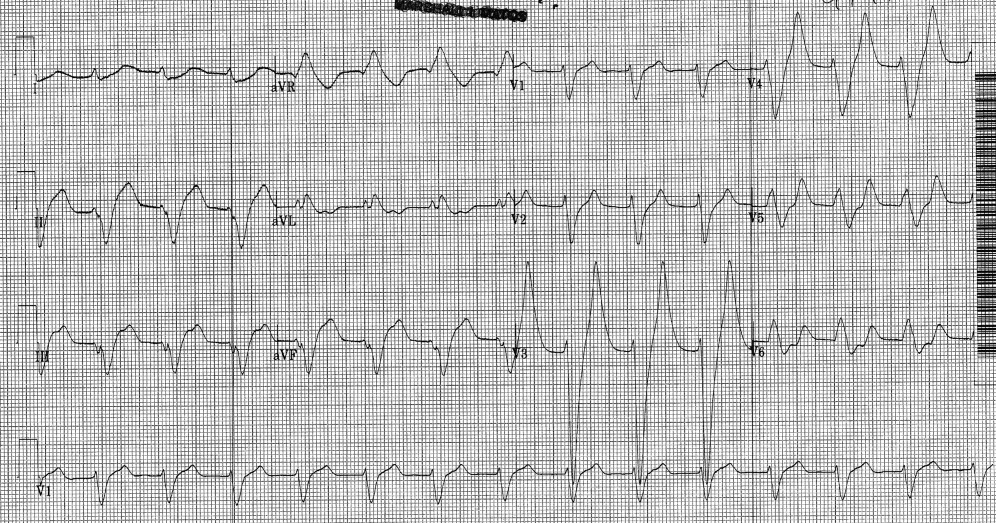

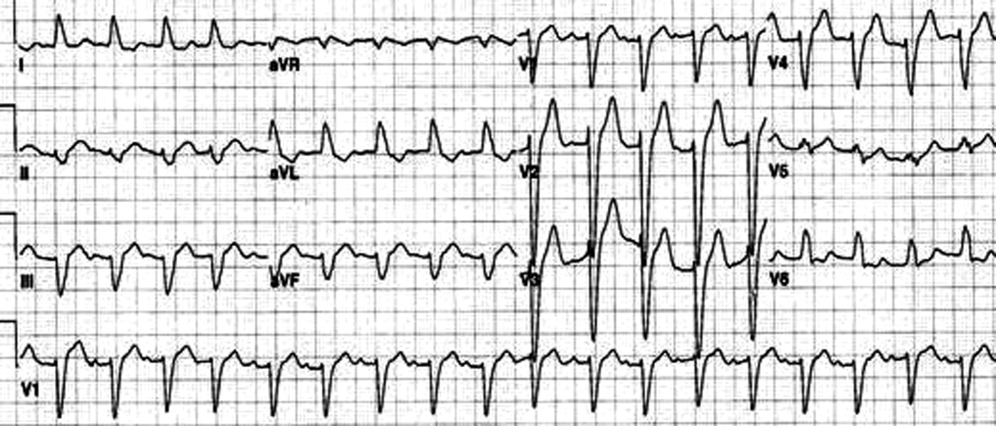

Hemodialysis was planned for the patient. A few hours later, a repeat ECG revealed a sine wave pattern suggestive of severe hyperkalemia (Figure 3). Laboratory results, which had become available by then, showed a serum potassium of 6.8 mmol/L. He was started on insulin, dextrose, and calcium gluconate, but he developed cardiorespiratory arrest and died.

Retrospectively, looking at the presenting ECG (Figure 1), it was found that the PR interval was longer, the QRS was broader, and the T waves were taller and more peaked than the baseline ECG (Figure 2).

Discussion

Hyperkalemia is a true medical emergency with potentially lethal consequences that must be treated accordingly.1, 2 It can be difficult to diagnose due to the paucity of distinctive signs and symptoms. Any ECG change due to hyperkalemia is an indication for stabilizing the myocardium with calcium infusion.

In patients with baseline LBBB, the altered sequence of repolarization often makes myocardial infarction difficult to diagnose accurately on ECG. Although it is thought that changes due to electrolyte imbalances will also be masked by the presence of LBBB, there is no evidence supporting this in the literature. The belief that LBBB masks changes due to hyperkalemia is therefore unfounded. In patients with suspected electrolyte imbalance and a baseline ECG showing LBBB, it is important to compare the baseline ECG with the presenting ECG. In our patient, the presenting ECG (Figure 1) might not look too impressive, but in comparison to the baseline ECG (Figure 2), the PR interval is longer, the QRS is wider, and the T waves are taller and more peaked. If the admitting physician had closely compared the presenting ECG (Figure 1) to the baseline ECG (Figure 2), the suspicion of hyperkalemia would have been high.

- Electrolyte disturbances. In: Marx JA, Hockberger RS, Walls RM, et al., eds. Rosen's Emergency Medicine: Concepts and Clinical Practice. 5th ed. Vol 2. St. Louis: Mosby; 2002:1730–1731.
- Hyperkalemia in hospitalized patients. Int Urol Nephrol. 2000;32:177–180.

Patient Knowledge of Hospital Medication

Inpatient medication errors represent an important patient safety issue. The magnitude of the problem is staggering, with 1 review finding almost 1 in every 5 medication doses in error, with 7% having potential for adverse drug events.1 While mistakes made at the ordering stage are frequently intercepted by pharmacist or nursing review, administration errors are particularly difficult to prevent.2 The patient, as the last link in the medication administration chain, represents the final individual capable of preventing an incorrect medication administration. It is perhaps surprising then that patients generally lack a formal role in detecting and preventing adverse medication administration events.3

There have been some ambitious attempts to improve patient education regarding hospital medications and involve selected patients in the medication administration process. Such initiatives may result in increased patient participation and satisfaction.4–7 There is also potential that increased patient knowledge of their hospital medications could promote the goal of medication safety, as the actively involved patient may be able to catch medication errors in the hospital.

Knowledge of prescribed medications is a prerequisite to patient involvement in prevention of inpatient medication errors and yet there is little research on patient knowledge of their hospital medications. Furthermore, as the experience of hospitalization may be disorienting and disempowering for patients, it remains to be seen if patient attitudes toward participation in inpatient medication safety are favorable. To that end, we conducted a pilot study in which we assessed current patient awareness of their in‐hospital medications and surveyed attitudes toward increased patient knowledge of hospital medications.

PATIENTS AND METHODS

We conducted a cross‐sectional study of 50 cognitively intact adult internal medicine inpatients at the University of Colorado Hospital, a tertiary‐care academic teaching hospital. This study was part of a larger project designed to examine potential for patient involvement in the medication reconciliation process. A professional research assistant approached eligible patients within 24 hours of admission. To be eligible, patients had to self‐identify as knowing their outpatient medications, speak English, and have been admitted from the community. Nursing home residents and patients with a past medical history of dementia were excluded. Enrollment was tracked during the first half of the study to estimate effect of inclusion/exclusion criteria. Thirty‐eight percent of hospital admissions to medicine services were excluded based on the specified criteria. Thirty‐four percent of eligible patients were approached and 50% of approached patients agreed to participate in the study. Patient knowledge of their outpatient medication regimen was compared to admitting physician medication reconciliation to assess accuracy of patient self‐report of outpatient medication knowledge.

After consenting to participate, study patients completed a structured list of their outpatient medications and a survey of attitudes about being shown their in‐hospital medications, hospital medication errors, and patient involvement in hospital safety. They then completed a list of the medications they believed to be prescribed to them in the hospital.

The primary outcomes were the proportions of as needed (PRN), scheduled, and total hospital medications omitted by the patient, compared to the inpatient medication administration record (MAR) (patient errors of omission). Secondary outcomes included the number of in‐hospital medications listed by the patient that did not appear on the inpatient MAR (patient errors of commission), as well as patient attitudes measured on a 5‐point Likert scale (1 indicated strongly disagree and 5 indicated strongly agree). Descriptive data included age, race, gender, and number of inpatient medications prescribed. Separate analysis of variance (ANOVA) models provided mean estimates of the primary outcomes and tested differences according to each of the patient characteristics: age in years (<65 or ≥65), self‐reported knowledge of hospital medications, and self‐reported desire to be involved in medication safety. Similar ANOVA models adjusted for number of medications were also examined to determine whether the relationship between the primary outcomes and patient characteristics was altered by the number of medications. The protocol was approved by the Colorado Multiple Institutional Review Board.
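To make the two outcome definitions concrete, the following Python sketch compares a patient‐generated medication list against the MAR using set differences. The function name, the exact‐match rule on lowercased drug names, and the sample medications are illustrative assumptions; the study's actual matching procedure is not described at this level of detail.

```python
def compare_to_mar(patient_list, mar):
    """Compare a patient's list of hospital medications with the MAR.

    Matching is by exact, case-insensitive drug name (an assumption).
    """
    patient = {name.strip().lower() for name in patient_list}
    record = {name.strip().lower() for name in mar}

    # Errors of omission: on the MAR but not named by the patient.
    omitted = record - patient
    # Errors of commission: named by the patient but not on the MAR.
    commission = patient - record

    proportion_omitted = len(omitted) / len(record) if record else 0.0
    return {
        "omitted": sorted(omitted),
        "commission": sorted(commission),
        "proportion_omitted": proportion_omitted,
    }


# Hypothetical example, not study data.
mar = ["Heparin", "Metoprolol", "Ceftriaxone", "Acetaminophen", "Ondansetron"]
patient = ["metoprolol", "acetaminophen", "aspirin"]

result = compare_to_mar(patient, mar)
print(result["omitted"])             # ['ceftriaxone', 'heparin', 'ondansetron']
print(result["commission"])          # ['aspirin']
print(result["proportion_omitted"])  # 0.6
```

In practice, brand and generic names would need to be reconciled before comparison, which this sketch does not attempt.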

RESULTS

Participants averaged 54 years of age (standard deviation [SD] = 17, range = 21‐89). Forty‐six percent (23/50) were male, and 74% (37/50) were non‐Hispanic white. According to the structured, patient‐completed outpatient medication list, patients in the study were taking an average of 5.3 outpatient prescription medications (range = 0‐17), 2.2 over‐the‐counter medications (range = 0‐8), and 0.2 herbal medications (range = 0‐7). The admitting physician's medication reconciliation list showed a similar number of outpatient prescription medications (average = 5.7) to the patient‐generated list. Fifty‐four percent of patient‐completed home medication lists included all of the prescription medications on the physician's medication reconciliation at admission. According to the inpatient MAR, study patients were prescribed an average of 11.3 scheduled and PRN hospital medications (range = 2‐26) at the time of study enrollment.

Patient Knowledge of Their Hospital Medication List

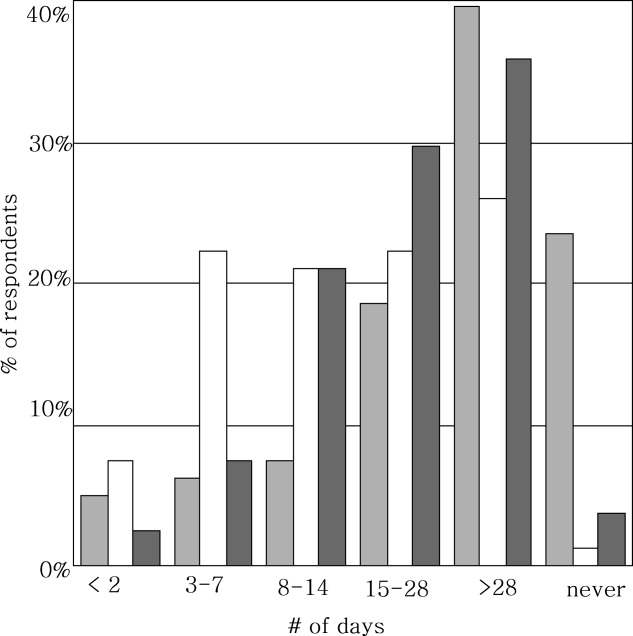

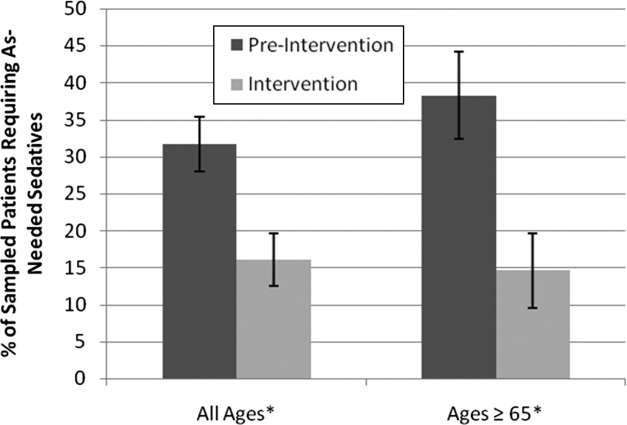

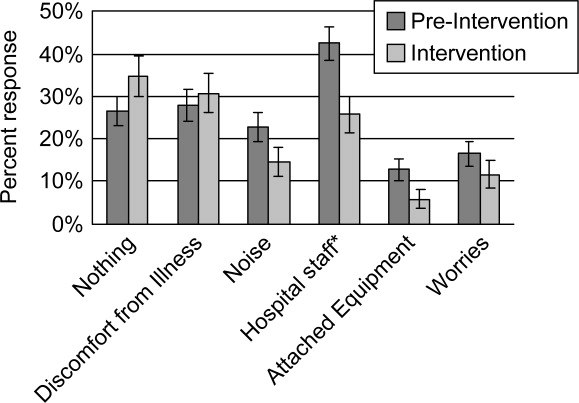

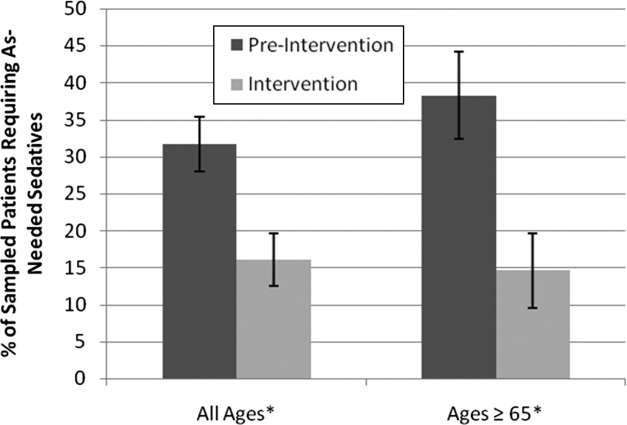

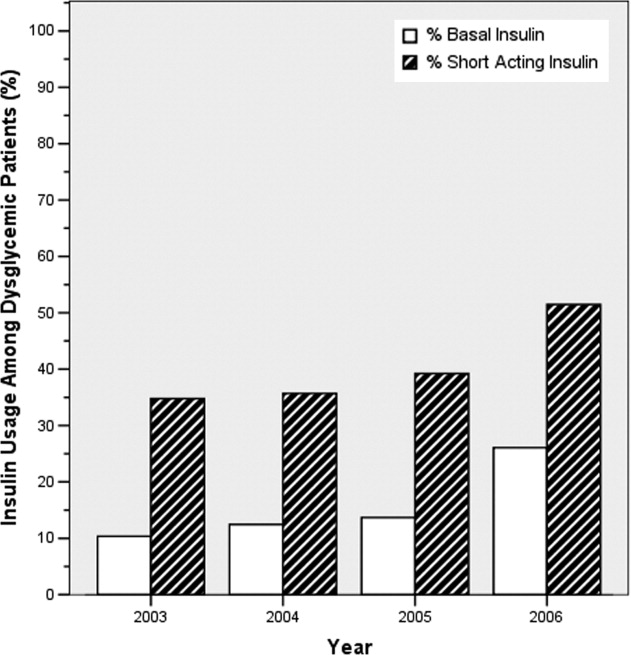

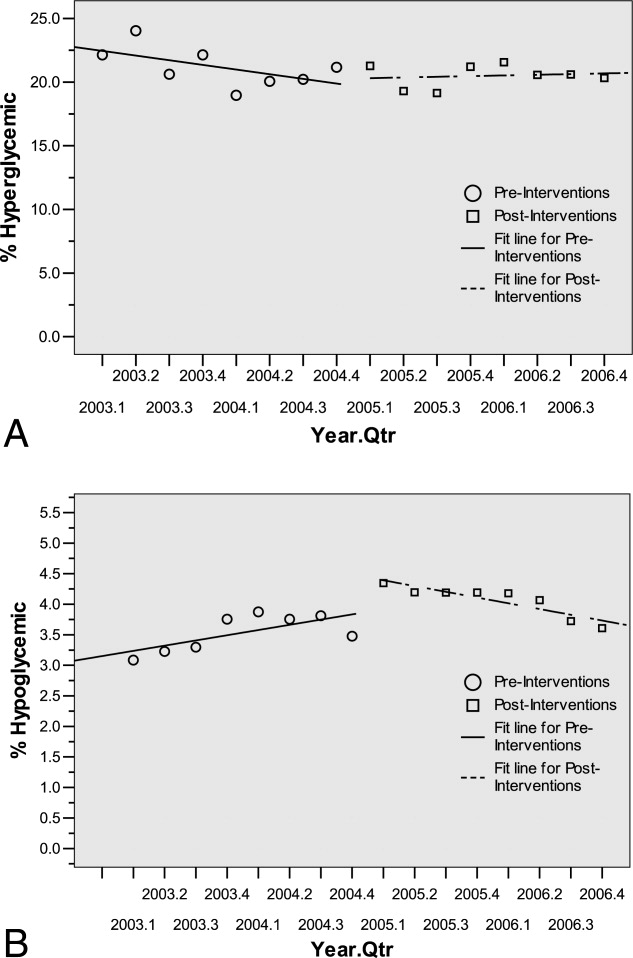

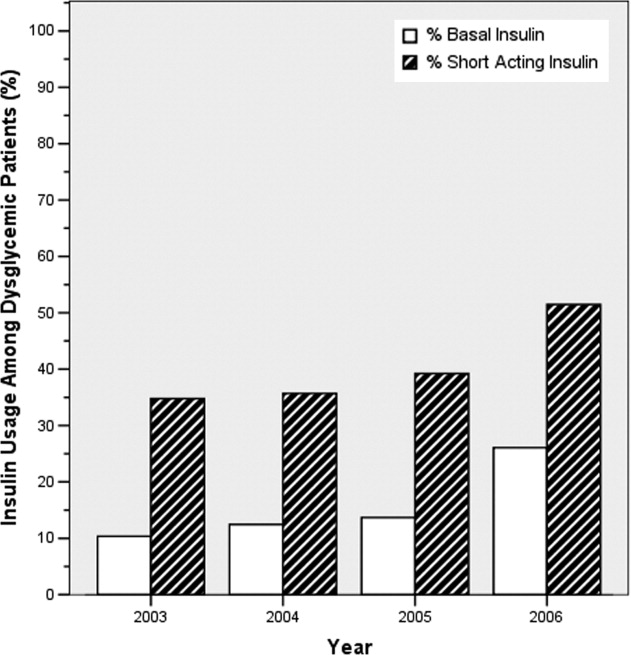

Ninety‐six percent (48/50) of study patients omitted 1 or more of their hospital medications. On average, patients omitted 6.8 medications (range = 0‐22) (Table 1). Among scheduled medications, patients most commonly omitted antibiotics (17%), cardiovascular medications (16%), and antithrombotics (15%) (Figure 1). Among PRN medications, patients most commonly omitted analgesics (33%) and gastrointestinal medications (29%) (Figure 2).

| | Total Medications | Scheduled Medications | PRN Medications |
|---|---|---|---|
| Percent of patients with at least 1 hospital medication they could not name (95% CI) | 96% (90‐100%) | 94% (87‐100%) | 80% (69‐92%) |
| Average number of hospital medications omitted by patient (range) | 6.8 (0‐22) | 5.2 (0‐15) | 1.6 (0‐7) |
| Percentage of hospital medications omitted by patient (95% CI) | 60% (52‐67%) | 60% (52‐67%) | 68% (57‐78%) |

Patients younger than 65 years omitted 60% of their PRN medications, whereas patients older than 65 years omitted 88% (P = 0.01). This difference remained even after adjustment for number of medications. There were no significant differences, based on age, in ability to name scheduled or total medications. Forty‐four percent of patients (22/50) believed they were receiving a medication in the hospital that was not actually prescribed.

Patient Attitudes Toward Increased Knowledge of Hospital Medications

Only 28% (14/50) of patients reported having seen their hospital medication list, although 78% (39/50) favored being given such a list, and 81% (39/48) reported that this would improve their satisfaction with care. Ninety percent (45/50) wanted to review their hospital medication list for accuracy and 94% (47/50) felt patient participation in reviewing hospital medications had potential to reduce errors. No associations were found between self‐reported knowledge of hospital medications or self‐reported desire to be involved in medication safety and the proportion of PRN, scheduled, or total medications omitted.

DISCUSSION

Overall, patients in the study were able to name fewer than one‐half of their hospital medications. Our study suggests that adult medicine inpatients believe learning about their hospital medications would increase their satisfaction and has potential to promote medication safety. At the same time, patients did not know many of their hospital medications and this would limit their ability to fully participate in the medication safety process. Study patients frequently committed both errors of omission (ie, they did not know which medications were prescribed), and errors of commission (ie, they believed they were prescribed medications that were not prescribed). Younger patients were aware of more of their PRN medications than older patients, potentially reflecting greater patient care involvement in younger generations. However, study patients, regardless of age, were able to name fewer than one‐half of their PRN hospital medications. The most common scheduled hospital medications that patients were unable to name come from medication classes which can be associated with significant adverse events, including antibiotics, cardiovascular medications, and antithrombotics.

We posit that without systematically educating patients about their hospital medications, significant deficits in patient knowledge are inevitable. Some might argue that patients should not be asked to know their hospital medications or identify medication errors while sick and vulnerable. Certainly, with multiple medication changes, formulary substitutions, and frequent modifications based on changes in clinical status, inpatient medication education could be time consuming and potentially introduce patient confusion or anxiety. Incorrect patient feedback could also introduce new errors. An educational program might use graded participation based on patient interest and ability. Models for this exist in the literature, even extending to patient medication self‐administration.5–7 In our sample of inpatients, the majority desired a more active role in learning about their hospital medications and believed that their involvement might prevent hospital medication errors from occurring.

Medication literacy, education, and active patient involvement in medication monitoring as a means to improve patient outcomes has received significant attention in the outpatient setting, with lessons applicable to the hospital.8, 9 More broadly, the Joint Commission has established a Hospital National Patient Safety Goal to encourage patients' active involvement in their own care as a patient safety strategy.10 Examples set forth by the Joint Commission include involving patients in infection control measures, marking of procedural sites, and reporting of safety concerns relating to treatment.

While this study identifies patient knowledge deficit as a barrier to utilizing patients as part of the hospital medication safety process, it does not test whether reducing this knowledge deficit would actually reduce medication error. Our study population was limited to cognitively intact adult medicine patients at a single institution, limiting the generalizability of our conclusions. Our enrollment process may have resulted in a study population with less serious illness, greater knowledge of their hospital medications, and greater interest in participating in medication safety, potentially overestimating patient knowledge of hospital medications. Finally, our small sample size limits the power to find differences in study comparisons.

Our findings are striking in that we found significant deficits in patient understanding of their hospital medications even among patients who believed they knew, or desired to know, what is being prescribed to them in the hospital. Without a system to incorporate the patient into hospital medication management, these patients will be disenfranchised from participating in inpatient medication safety. These results are a call to reexamine how we educate and involve patients regarding hospital medications. Mechanisms to allow patients to provide feedback to the medical team on their hospital medications might identify errors or improve patient satisfaction with their care. However, the systems and cultural changes needed to provide education on inpatient medications are considerable. Future research is needed to determine if increasing patient knowledge regarding their hospital medications would reduce medication errors in the inpatient setting and how this could be effectively implemented.

Acknowledgements

The authors thank Sue Felton, MA, Professional Research Assistant, for enrolling patients in this trial, and Traci Yamashita, MS, Professional Research Assistant, for statistical analysis.

1. Medication errors observed in 36 health care facilities. Arch Intern Med. 2002;162:1897–1903.
2. Incidence of adverse drug events and potential adverse drug events. Implications for prevention. ADE Prevention Study Group. JAMA. 1995;274:29–34.
3. Patient safety: what about the patient? Qual Saf Health Care. 2002;11:76–80.
4. Pharmacist involvement in a multidisciplinary inpatient medication education program. Am J Health Syst Pharm. 2003;60:1012–1018.
5. Self‐administration of medication by patients and family members during hospitalization. Patient Educ Couns. 1996;27:103–112.
6. Hospital inpatient self‐administration of medicine programmes: a critical literature review. Pharm World Sci. 2006;28:140–151.
7. Self‐administration of medication in hospital: patients' perspectives. J Adv Nurs. 2004;46:194–203.
8. Outpatient drug safety: new steps in an old direction. Pharmacoepidemiol Drug Saf. 2007;16:160–165.
9. Impact of health literacy on health outcomes in ambulatory care patients: a systematic review. Pharmacosociology. 2008;42:1272–1281.
10. Joint Commission. 2009. Standards Improvement Initiative. Available at: http://www.jointcommission.org/NR/rdonlyres/31666E86-E7F4-423E-9BE8-F05BD1CB0AA8/0/HAP_NPSG.pdf. Accessed June 2009.

Inpatient medication errors represent an important patient safety issue. The magnitude of the problem is staggering: 1 review found almost 1 in every 5 medication doses to be in error, with 7% having potential for adverse drug events.1 While mistakes made at the ordering stage are frequently intercepted by pharmacist or nursing review, administration errors are particularly difficult to prevent.2 The patient, as the last link in the medication administration chain, represents the final individual capable of preventing an incorrect medication administration. It is perhaps surprising, then, that patients generally lack a formal role in detecting and preventing adverse medication administration events.3

There have been some ambitious attempts to improve patient education regarding hospital medications and involve selected patients in the medication administration process. Such initiatives may result in increased patient participation and satisfaction.4–7 There is also potential that increased patient knowledge of their hospital medications could promote the goal of medication safety, as the actively involved patient may be able to catch medication errors in the hospital.

Knowledge of prescribed medications is a prerequisite to patient involvement in prevention of inpatient medication errors and yet there is little research on patient knowledge of their hospital medications. Furthermore, as the experience of hospitalization may be disorienting and disempowering for patients, it remains to be seen if patient attitudes toward participation in inpatient medication safety are favorable. To that end, we conducted a pilot study in which we assessed current patient awareness of their in‐hospital medications and surveyed attitudes toward increased patient knowledge of hospital medications.

PATIENTS AND METHODS

We conducted a cross‐sectional study of 50 cognitively intact adult internal medicine inpatients at the University of Colorado Hospital, a tertiary‐care academic teaching hospital. This study was part of a larger project designed to examine potential for patient involvement in the medication reconciliation process. A professional research assistant approached eligible patients within 24 hours of admission. To be eligible, patients had to self‐identify as knowing their outpatient medications, speak English, and have been admitted from the community. Nursing home residents and patients with a past medical history of dementia were excluded. Enrollment was tracked during the first half of the study to estimate effect of inclusion/exclusion criteria. Thirty‐eight percent of hospital admissions to medicine services were excluded based on the specified criteria. Thirty‐four percent of eligible patients were approached and 50% of approached patients agreed to participate in the study. Patient knowledge of their outpatient medication regimen was compared to admitting physician medication reconciliation to assess accuracy of patient self‐report of outpatient medication knowledge.

After consenting to participate, study patients completed a structured list of their outpatient medications and a survey of attitudes about being shown their in‐hospital medications, hospital medication errors, and patient involvement in hospital safety. They then completed a list of the medications they believed to be prescribed to them in the hospital.

The primary outcomes were the proportions of as needed (PRN), scheduled, and total hospital medications omitted by the patient, compared to the inpatient medication administration record (MAR) (patient errors of omission). Secondary outcomes included the number of in‐hospital medications listed by the patient that did not appear on the inpatient MAR (patient errors of commission), as well as patient attitudes measured on a 5‐point Likert scale (1 indicated strongly disagree and 5 indicated strongly agree). Descriptive data included age, race, gender, and number of inpatient medications prescribed. Separate analysis of variance (ANOVA) models provided mean estimates of the primary outcomes and tested differences according to each of the patient characteristics: age in years (<65 or ≥65), self‐reported knowledge of hospital medications, and self‐reported desire to be involved in medication safety. Similar ANOVA models adjusted for number of medications were also examined to determine whether the relationships between the primary outcomes and patient characteristics were altered by the number of medications. The protocol was approved by the Colorado Multiple Institutional Review Board.
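The outcome definitions above amount to a set comparison between the patient's list and the MAR. The following is a minimal illustrative sketch, not the study's actual procedure: the function and class names, the name-normalization rule, and the example medications are all hypothetical, and the study does not describe how free-text medication names were matched to MAR entries.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ListComparison:
    omissions: frozenset       # on the MAR, not named by the patient
    commissions: frozenset     # named by the patient, not on the MAR
    proportion_omitted: float  # omissions divided by MAR medications


def normalize(name: str) -> str:
    """Hypothetical matching rule: lowercase and collapse whitespace."""
    return " ".join(name.lower().split())


def compare_to_mar(patient_list, mar_list) -> ListComparison:
    """Compare a patient-completed list against the MAR for one patient."""
    patient = {normalize(n) for n in patient_list}
    mar = {normalize(n) for n in mar_list}
    omissions = mar - patient      # patient errors of omission
    commissions = patient - mar    # patient errors of commission
    # Assumption: a patient with no MAR medications counts as 0 omitted.
    proportion = len(omissions) / len(mar) if mar else 0.0
    return ListComparison(frozenset(omissions), frozenset(commissions), proportion)


# Hypothetical example data, not taken from the study.
mar = ["Metoprolol", "heparin", "ceftriaxone", "oxycodone"]
patient = ["metoprolol ", "aspirin"]
result = compare_to_mar(patient, mar)
print(sorted(result.omissions), sorted(result.commissions), result.proportion_omitted)
```

Running the same comparison separately on the scheduled and PRN subsets of the MAR would give the per-category proportions; averaging the per-patient proportions across patients is one plausible way to arrive at a study-level estimate, although the source does not state exactly how this was done.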

RESULTS

Participants averaged 54 years of age (standard deviation [SD] = 17, range = 21‐89). Forty‐six percent (23/50) were male, and 74% (37/50) were non‐Hispanic white. Based on a structured, patient‐completed outpatient medication list, patients in the study were taking an average of 5.3 outpatient prescription medications (range = 0‐17), 2.2 over‐the‐counter medications (range = 0‐8), and 0.2 herbal medications (range = 0‐7). The admitting physician's medication reconciliation list showed a similar number of outpatient prescription medications (average = 5.7) to the patient‐generated list. Fifty‐four percent of patient‐completed home medication lists included all of the prescription medications on the physician's medication reconciliation at admission. According to the inpatient MAR, study patients were prescribed an average of 11.3 scheduled and PRN hospital medications (range = 2‐26) at time of study enrollment.

Patient Knowledge of Their Hospital Medication List

Ninety‐six percent (48/50) of study patients omitted 1 or more of their hospital medications. On average, patients omitted 6.8 medications (range = 0‐22) (Table 1). Among scheduled medications, patients most commonly omitted antibiotics (17%), cardiovascular medications (16%), and antithrombotics (15%) (Figure 1). Among PRN medications, patients most commonly omitted analgesics (33%) and gastrointestinal medications (29%) (Figure 2).

| | Total Medications | Scheduled Medications | PRN Medications |
|---|---|---|---|
| Percent of patients with at least 1 hospital medication they could not name (95% CI) | 96% (90‐100%) | 94% (87‐100%) | 80% (69‐92%) |
| Average number of hospital medications omitted by patient (range) | 6.8 (0‐22) | 5.2 (0‐15) | 1.6 (0‐7) |
| Percentage of hospital medications omitted by patient (95% CI) | 60% (52‐67%) | 60% (52‐67%) | 68% (57‐78%) |

Patients younger than 65 years omitted 60% of their PRN medications, whereas patients older than 65 years omitted 88% (P = 0.01). This difference remained even after adjustment for number of medications. There were no significant differences, based on age, in ability to name scheduled or total medications. Forty‐four percent of patients (22/50) believed they were receiving a medication in the hospital that was not actually prescribed.

Patient Attitudes Toward Increased Knowledge of Hospital Medications

Only 28% (14/50) of patients reported having seen their hospital medication list, although 78% (39/50) favored being given such a list, and 81% (39/48) reported that this would improve their satisfaction with care. Ninety percent (45/50) wanted to review their hospital medication list for accuracy and 94% (47/50) felt patient participation in reviewing hospital medications had potential to reduce errors. No associations were found between self‐reported knowledge of hospital medications or self‐reported desire to be involved in medication safety and the proportion of PRN, scheduled, or total medications omitted.

DISCUSSION

Overall, patients in the study were able to name fewer than one‐half of their hospital medications. Our study suggests that adult medicine inpatients believe learning about their hospital medications would increase their satisfaction and has potential to promote medication safety. At the same time, patients did not know many of their hospital medications and this would limit their ability to fully participate in the medication safety process. Study patients frequently committed both errors of omission (ie, they did not know which medications were prescribed), and errors of commission (ie, they believed they were prescribed medications that were not prescribed). Younger patients were aware of more of their PRN medications than older patients, potentially reflecting greater patient care involvement in younger generations. However, study patients, regardless of age, were able to name fewer than one‐half of their PRN hospital medications. The most common scheduled hospital medications that patients were unable to name come from medication classes which can be associated with significant adverse events, including antibiotics, cardiovascular medications, and antithrombotics.
