
Antidepressants, TMS, and the risk of affective switch in bipolar depression

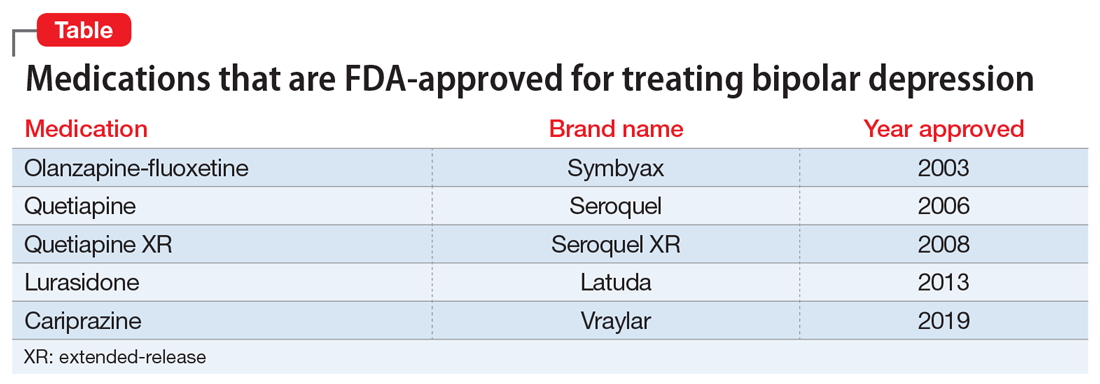

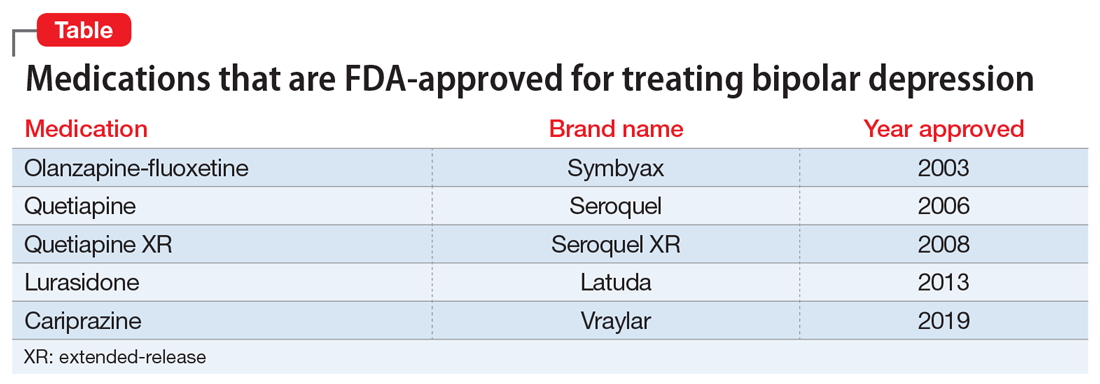

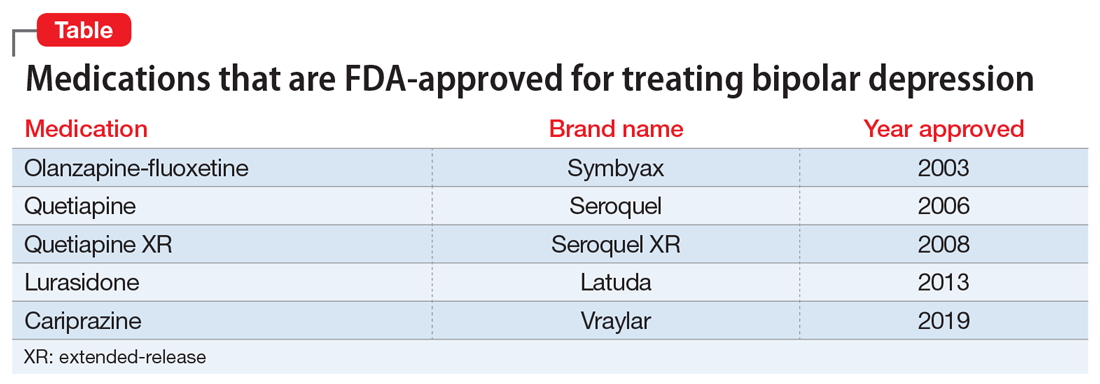

Because treatment resistance is a pervasive problem in bipolar depression, the use of neuromodulation treatments such as transcranial magnetic stimulation (TMS) is increasing for patients with this disorder.1-7 Patients with bipolar disorder tend to spend the majority of the time with depressive symptoms, which underscores the importance of providing effective treatment for bipolar depression, especially given the chronicity of this disease.2,3,5 Only a few medications are FDA-approved for treating bipolar depression (Table).

In this article, we describe the case of a patient with treatment-resistant bipolar depression undergoing adjunctive TMS treatment who experienced an affective switch from depression to mania. We also discuss evidence regarding the likelihood of treatment-emergent mania for antidepressants vs TMS in bipolar depression.

CASE

Ms. W, a 60-year-old White woman with a history of bipolar I disorder and attention-deficit/hyperactivity disorder (ADHD), presented for TMS evaluation during a depressive episode. She had experienced numerous manic episodes throughout her life, but as she got older, she noted that her depressive episodes had become more frequent. Over the course of her illness, she had completed adequate trials at therapeutic doses of many medications, including second-generation antipsychotics (SGAs) (aripiprazole, lurasidone, olanzapine, quetiapine), mood stabilizers (lamotrigine, lithium), and antidepressants (bupropion, venlafaxine, fluoxetine, mirtazapine, trazodone). A course of electroconvulsive therapy was not effective. Ms. W had a long-standing diagnosis of ADHD and had been treated with stimulants for >10 years, although it was unclear whether formal neuropsychological testing had been conducted to confirm this diagnosis. She had a history of >10 suicide attempts and multiple psychiatric hospitalizations.

At her initial evaluation for TMS, Ms. W reported that depressive symptoms had predominated for the past 2 years, including low mood, hopelessness, poor sleep, poor appetite, anhedonia, and suicidal ideation without a plan. At the time, she was taking clonazepam, 0.5 mg twice a day; lurasidone, 40 mg/d at bedtime; fluoxetine, 60 mg/d; trazodone, 50 mg/d at bedtime; and methylphenidate, 40 mg/d. She was also participating consistently in psychotherapy.

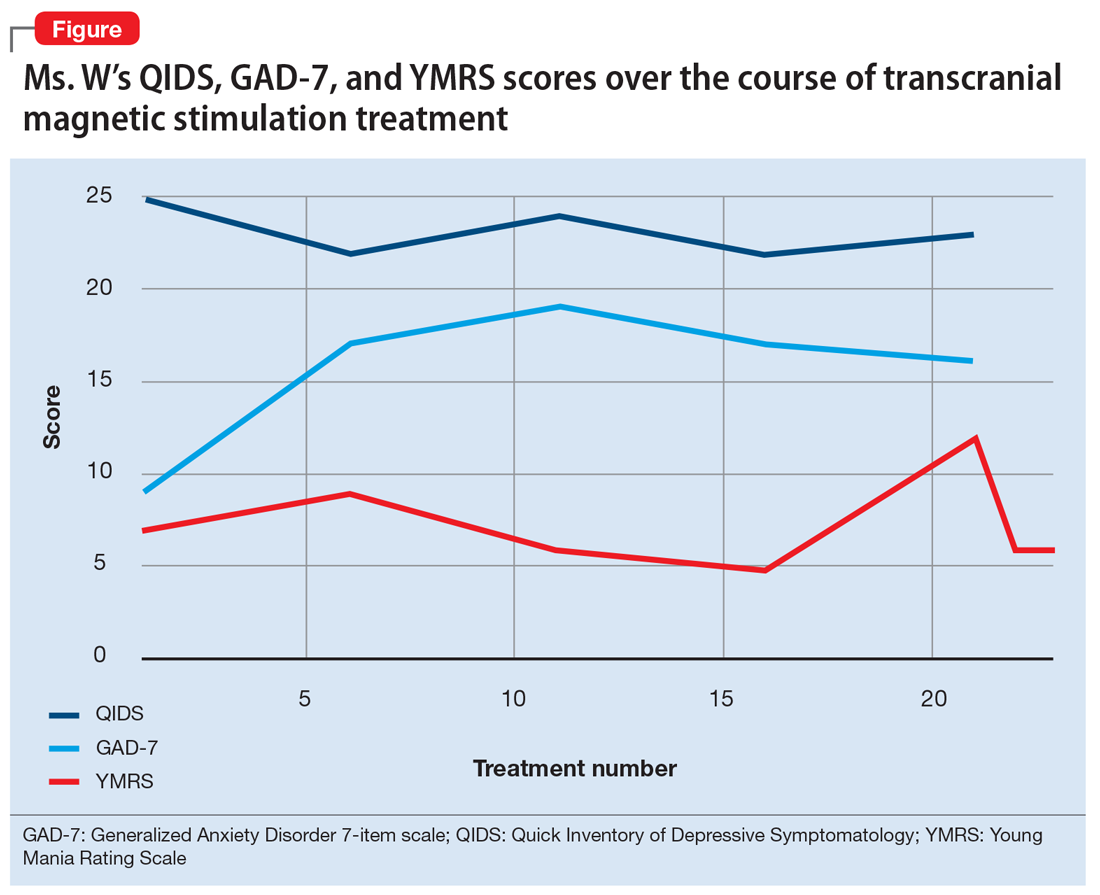

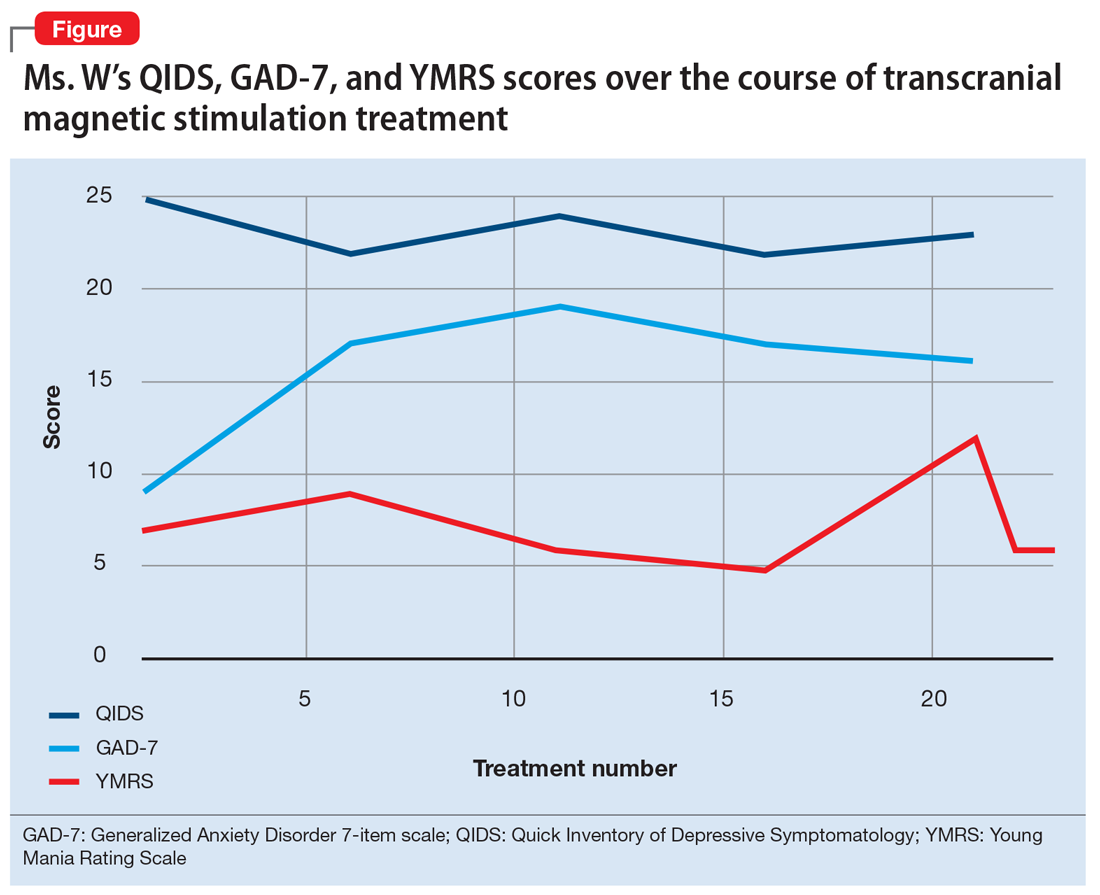

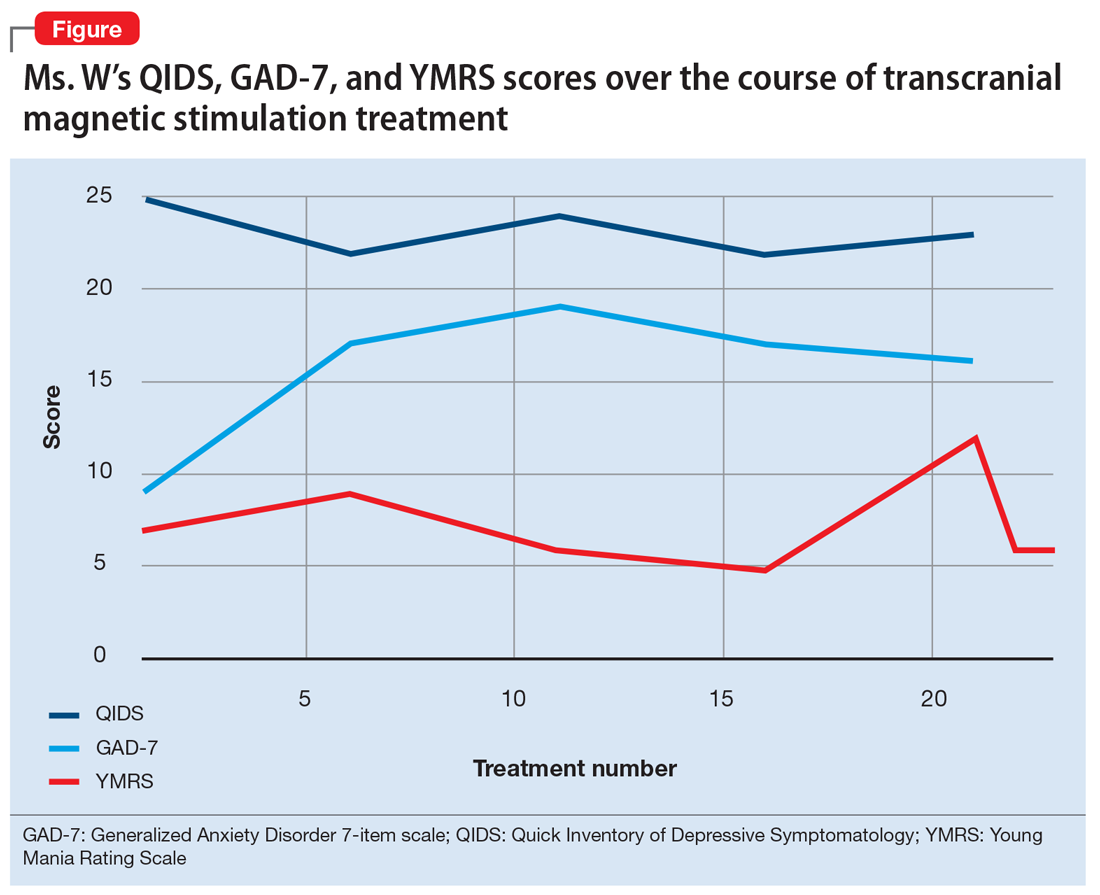

After Ms. W and her clinicians discussed alternatives, risks, benefits, and adverse effects, she provided written informed consent for adjunctive TMS treatment. The treatment plan called for 6 weeks of TMS (NeuroStar; Neuronetics, Malvern, PA), 1 treatment per day, 5 days a week, for a total of 30 treatments. Her clinical status was assessed weekly using the Quick Inventory of Depressive Symptomatology (QIDS) for depression, the Generalized Anxiety Disorder 7-item scale (GAD-7) for anxiety, and the Young Mania Rating Scale (YMRS) for mania. The Figure shows the trends in Ms. W’s QIDS, GAD-7, and YMRS scores over the course of TMS treatment.

Before TMS was initiated, her baseline scores were QIDS: 25, GAD-7: 9, and YMRS: 7, indicating very severe depression, mild anxiety, and the absence of mania. Ms. W’s psychotropic regimen remained unchanged throughout the course of TMS. After her motor threshold was determined, stimulation began at 80% of motor threshold and was titrated up to 95% during the first treatment, to 110% by the second treatment, and to 120% by the third treatment; 120% of motor threshold was used for all remaining treatments.
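The baseline interpretation and intensity titration described above can be made concrete with a short Python sketch. It assumes the 16-item QIDS (range 0-27) and the commonly published severity bands for the QIDS and GAD-7; those cut-offs are not stated in this article. The YMRS alert threshold of 12 is hypothetical, chosen only because that score prompted repeat ratings in this case, since published YMRS cut-offs vary. The names `severity`, `peak_intensity`, and the band tables are illustrative and do not come from any clinical software.

```python
# Sketch only: QIDS and GAD-7 bands follow commonly published cut-offs (an assumption,
# not taken from this article); the YMRS alert threshold is a hypothetical value.

QIDS_BANDS = [(5, "none"), (10, "mild"), (15, "moderate"), (20, "severe"), (27, "very severe")]
GAD7_BANDS = [(4, "minimal"), (9, "mild"), (14, "moderate"), (21, "severe")]
YMRS_ALERT = 12  # hypothetical: the score that prompted repeat ratings in this case

# Peak intensity (% of motor threshold) reached in sessions 1 and 2; later sessions use 120%.
TITRATION = {1: 95, 2: 110}
MAINTENANCE_INTENSITY = 120


def severity(score: int, bands: list[tuple[int, str]]) -> str:
    """Return the label of the first band whose upper bound is >= score."""
    if score < 0:
        raise ValueError("scale scores cannot be negative")
    for upper, label in bands:
        if score <= upper:
            return label
    raise ValueError(f"score {score} exceeds the scale maximum")


def peak_intensity(session: int) -> int:
    """Peak stimulation intensity (% of motor threshold) for a 1-based session number."""
    if session < 1:
        raise ValueError("sessions are numbered from 1")
    return TITRATION.get(session, MAINTENANCE_INTENSITY)


baseline = {"QIDS": 25, "GAD-7": 9, "YMRS": 7}
print(severity(baseline["QIDS"], QIDS_BANDS))    # very severe
print(severity(baseline["GAD-7"], GAD7_BANDS))   # mild
print(baseline["YMRS"] >= YMRS_ALERT)            # False: below the hypothetical alert threshold
print([peak_intensity(s) for s in range(1, 5)])  # [95, 110, 120, 120]
```

Keeping each scale's cut-offs in a single ordered table of (upper bound, label) pairs makes them easy to check against the instrument's own scoring instructions before any such helper is used.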

Initially, Ms. W reported some improvement in her depression, but this improvement was short-lived, and her QIDS scores remained elevated throughout treatment. By treatment #21, her QIDS and GAD-7 scores were still elevated, and her YMRS score had increased to 12. Because of this increase, the YMRS was repeated on the next 2 treatment days (#22 and #23); her score was 6 on both days. When Ms. W presented for treatment #25, she was disorganized and irritable, and she endorsed racing thoughts and decreased sleep. She was involuntarily hospitalized for mania, and TMS was discontinued. Unfortunately, she did not complete any clinical scales on that day. Upon admission to the hospital, Ms. W reported that at approximately the time of treatment #21, she had experienced a change in mood consisting of increased goal-directed activity, decreased need for sleep, racing thoughts, and increased frivolous spending. She was treated with lithium, 300 mg twice a day; lurasidone was increased to 80 mg/d at bedtime, and she continued clonazepam, trazodone, and methylphenidate at the previous doses. Over 14 days, Ms. W’s mania gradually resolved, and she was discharged home.


Mixed evidence on the risk of switching

Currently, several TMS devices are FDA-cleared for treating unipolar major depressive disorder, obsessive-compulsive disorder, and certain types of migraine. In March 2020, the FDA granted Breakthrough Device Designation for one TMS device, the NeuroStar Advanced Therapy System, for the treatment of bipolar depression.8 This designation created an expedited pathway for prioritized FDA review of the NeuroStar Advanced Therapy clinical trial program.

Few published clinical studies have evaluated TMS for patients with bipolar depression.9-15 As with any antidepressant treatment for bipolar depression, TMS carries a risk of affective switch from depression to mania. Most of the available literature on treating bipolar depression focuses on the risk that antidepressant medications will induce an affective switch. This risk depends on the class of antidepressant,16 and few studies have examined the risk of switch with TMS.

Interpretation of the available literature is limited by inconsistencies in how an affective switch is defined, variable durations of antidepressant treatment, the concurrent use of medications such as mood stabilizers, and confounders such as the natural course of switching in bipolar disorder.17 Overall, the evidence for treatment-emergent mania related to antidepressant use is mixed, and reported rates vary. In a systematic review and meta-analysis of >20 randomized controlled trials that included 1,316 patients with bipolar disorder who received antidepressants, Fornaro et al18 found that the incidence of treatment-emergent mania was 11.8%. It is generally recommended that if antidepressants are used to treat patients with bipolar disorder, they be given with a traditional mood stabilizer to prevent affective switches, although it is unproven that mood stabilizers can prevent such switches.19

In a literature review by Xia et al,20 the affective switch rate in patients with bipolar depression who were treated with TMS was 3.1%, which was not statistically different from the rate with sham treatment. However, most of the patients included in this analysis were receiving other medications concurrently, and the length of treatment was 2 weeks, which is shorter than a typical TMS course in clinical practice. In a more recent literature review, Rachid21 concluded that TMS may induce manic episodes in patients with bipolar depression when used as monotherapy or in combination with antidepressants. To reduce the risk of treatment-emergent mania, current recommendations advise using a mood stabilizer for a minimum of 2 weeks before initiating TMS.1

In our case, before starting TMS, Ms. W was receiving antidepressants (fluoxetine and trazodone), lurasidone (an SGA that is FDA-approved for bipolar depression), and methylphenidate, but no traditional mood stabilizer. Fluoxetine, trazodone, and methylphenidate may increase the risk of an affective switch.1,22 Further studies are needed to clarify whether mood stabilizers or SGAs can prevent the development of mania in patients with bipolar depression who undergo TMS treatment.20


Because bipolar depression poses a major clinical challenge,23,24 it is imperative to consider alternative treatments. One such strategy is TMS in conjunction with a traditional mood stabilizer, because this regimen may have a lower risk of treatment-emergent mania than antidepressants.1,25

Acknowledgment

The authors thank Dr. Sy Saeed for his expertise and guidance on this article.

Bottom Line

For patients with bipolar depression, treatment with transcranial magnetic stimulation in conjunction with a mood stabilizer may have lower rates of treatment-emergent mania than treatment with antidepressants.

Related Resources

- Transcranial magnetic stimulation: clinical applications for psychiatric practice. Bermudes RA, Lanocha K, Janicak PG, eds. American Psychiatric Association Publishing; 2017.

- Gold AK, Ornelas AC, Cirillo P, et al. Clinical applications of transcranial magnetic stimulation in bipolar disorder. Brain Behav. 2019;9(10):e01419. doi: 10.1002/brb3.1419

Drug Brand Names

Aripiprazole • Abilify

Bupropion • Wellbutrin

Cariprazine • Vraylar

Clonazepam • Klonopin

Fluoxetine • Prozac

Lamotrigine • Lamictal

Lithium • Eskalith, Lithobid

Lurasidone • Latuda

Methylphenidate • Ritalin, Concerta

Mirtazapine • Remeron

Olanzapine • Zyprexa

Olanzapine-fluoxetine • Symbyax

Quetiapine • Seroquel

Trazodone • Desyrel

Venlafaxine • Effexor

1. Aaronson ST, Croarkin PE. Transcranial magnetic stimulation for the treatment of other mood disorders. In: Bermudes RA, Lanocha K, Janicak PG, eds. Transcranial magnetic stimulation: clinical applications for psychiatric practice. American Psychiatric Association Publishing; 2017:127-156.

2. Geddes JR, Miklowitz DJ. Treatment of bipolar disorder. Lancet. 2013;381(9878):1672-1682.

3. Gitlin M. Treatment-resistant bipolar disorder. Mol Psychiatry. 2006;11(3):227-240.

4. Harrison PJ, Geddes JR, Tunbridge EM. The emerging neurobiology of bipolar disorder. Trends Neurosci. 2018;41(1):18-30.

5. Merikangas KR, Jin R, He JP, et al. Prevalence and correlates of bipolar spectrum disorder in the World Mental Health Survey Initiative. Arch Gen Psychiatry. 2011;68(3):241-251.

6. Myczkowski ML, Fernandes A, Moreno M, et al. Cognitive outcomes of TMS treatment in bipolar depression: safety data from a randomized controlled trial. J Affect Disord. 2018;235:20-26.

7. Tavares DF, Myczkowski ML, Alberto RL, et al. Treatment of bipolar depression with deep TMS: results from a double-blind, randomized, parallel group, sham-controlled clinical trial. Neuropsychopharmacology. 2017;42(13):2593-2601.

8. Neuronetics. FDA grants NeuroStar® Advanced Therapy System Breakthrough Device Designation to treat bipolar depression. Accessed February 2, 2021. https://www.globenewswire.com/news-release/2020/03/06/1996447/0/en/FDA-Grants-NeuroStar-Advanced-Therapy-System-Breakthrough-Device-Designation-to-Treat-Bipolar-Depression.html

9. Cohen RB, Brunoni AR, Boggio PS, et al. Clinical predictors associated with duration of repetitive transcranial magnetic stimulation treatment for remission in bipolar depression: a naturalistic study. J Nerv Ment Dis. 2010;198(9):679-681.

10. Connolly KR, Helmer A, Cristancho MA, et al. Effectiveness of transcranial magnetic stimulation in clinical practice post-FDA approval in the United States: results observed with the first 100 consecutive cases of depression at an academic medical center. J Clin Psychiatry. 2012;73(4):e567-e573.

11. Dell’Osso B, D’Urso N, Castellano F, et al. Long-term efficacy after acute augmentative repetitive transcranial magnetic stimulation in bipolar depression: a 1-year follow-up study. J ECT. 2011;27(2):141-144.

12. Dell’Osso B, Mundo E, D’Urso N, et al. Augmentative repetitive navigated transcranial magnetic stimulation (rTMS) in drug-resistant bipolar depression. Bipolar Disord. 2009;11(1):76-81.

13. Harel EV, Zangen A, Roth Y, et al. H-coil repetitive transcranial magnetic stimulation for the treatment of bipolar depression: an add-on, safety and feasibility study. World J Biol Psychiatry. 2011;12(2):119-126.

14. Nahas Z, Kozel FA, Li X, et al. Left prefrontal transcranial magnetic stimulation (TMS) treatment of depression in bipolar affective disorder: a pilot study of acute safety and efficacy. Bipolar Disord. 2003;5(1):40-47.

15. Tamas RL, Menkes D, El-Mallakh RS. Stimulating research: a prospective, randomized, double-blind, sham-controlled study of slow transcranial magnetic stimulation in depressed bipolar patients. J Neuropsychiatry Clin Neurosci. 2007;19(2):198-199.

16. Tundo A, Cavalieri P, Navari S, et al. Treating bipolar depression - antidepressants and alternatives: a critical review of the literature. Acta Neuropsychiatr. 2011;23(3):94-105.

17. Gijsman HJ, Geddes JR, Rendell JM, et al. Antidepressants for bipolar depression: a systematic review of randomized, controlled trials. Am J Psychiatry. 2004;161(9):1537-1547.

18. Fornaro M, Anastasia A, Novello S, et al. Incidence, prevalence and clinical correlates of antidepressant‐emergent mania in bipolar depression: a systematic review and meta‐analysis. Bipolar Disord. 2018;20(3):195-227.

19. Pacchiarotti I, Bond DJ, Baldessarini RJ, et al. The International Society for Bipolar Disorders (ISBD) task force report on antidepressant use in bipolar disorders. Am J Psychiatry. 2013;170(11):1249-1262.

20. Xia G, Gajwani P, Muzina DJ, et al. Treatment-emergent mania in unipolar and bipolar depression: focus on repetitive transcranial magnetic stimulation. Int J Neuropsychopharmacol. 2008;11(1):119-130.

21. Rachid F. Repetitive transcranial magnetic stimulation and treatment-emergent mania and hypomania: a review of the literature. J Psychiatr Pract. 2017;23(2):150-159.

22. Victorin A, Rydén E, Thase M, et al. The risk of treatment-emergent mania with methylphenidate in bipolar disorder. Am J Psychiatry. 2017;174(4):341-348.

23. Hidalgo-Mazzei D, Berk M, Cipriani A, et al. Treatment-resistant and multi-therapy-resistant criteria for bipolar depression: consensus definition. Br J Psychiatry. 2019;214(1):27-35.

24. Baldessarini RJ, Vázquez GH, Tondo L. Bipolar depression: a major unsolved challenge. Int J Bipolar Disord. 2020;8(1):1.

25. Phillips AL, Burr RL, Dunner DL. Repetitive transcranial magnetic stimulation in the treatment of bipolar depression: experience from a clinical setting. J Psychiatr Pract. 2020;26(1):37-45.

Because treatment resistance is a pervasive problem in bipolar depression, the use of neuromodulation treatments such as transcranial magnetic stimulation (TMS) is increasing for patients with this disorder.1-7 Patients with bipolar disorder tend to spend the majority of the time with depressive symptoms, which underscores the importance of providing effective treatment for bipolar depression, especially given the chronicity of this disease.2,3,5 Only a few medications are FDA-approved for treating bipolar depression (Table).

In this article, we describe the case of a patient with treatment-resistant bipolar depression undergoing adjunctive TMS treatment who experienced an affective switch from depression to mania. We also discuss evidence regarding the likelihood of treatment-emergent mania for antidepressants vs TMS in bipolar depression.

CASE

Ms. W, a 60-year-old White female with a history of bipolar I disorder and attention-deficit/hyperactivity disorder (ADHD), presented for TMS evaluation during a depressive episode. Throughout her life, she had experienced numerous manic episodes, but as she got older she noted an increasing frequency of depressive episodes. Over the course of her illness, she had completed adequate trials at therapeutic doses of many medications, including second-generation antipsychotics (SGAs) (aripiprazole, lurasidone, olanzapine, quetiapine), mood stabilizers (lamotrigine, lithium), and antidepressants (bupropion, venlafaxine, fluoxetine, mirtazapine, trazodone). A course of electroconvulsive therapy was not effective. Ms. W had a long-standing diagnosis of ADHD and had been treated with stimulants for >10 years, although it was unclear whether formal neuropsychological testing had been conducted to confirm this diagnosis. She had >10 suicide attempts and multiple psychiatric hospitalizations.

At her initial evaluation for TMS, Ms. W said she had depressive symptoms predominating for the past 2 years, including low mood, hopelessness, poor sleep, poor appetite, anhedonia, and suicidal ideation without a plan. At the time, she was taking clonazepam, 0.5 mg twice a day; lurasidone, 40 mg/d at bedtime; fluoxetine, 60 mg/d; trazodone, 50 mg/d at bedtime; and methylphenidate, 40 mg/d, and was participating in psychotherapy consistently.

After Ms. W and her clinicians discussed alternatives, risks, benefits, and adverse effects, she consented to adjunctive TMS treatment and provided written informed consent. The treatment plan was outlined as 6 weeks of daily TMS therapy (NeuroStar; Neuronetics, Malvern, PA), 1 treatment per day, 5 days a week. Her clinical status was assessed weekly using the Quick Inventory of Depressive Symptomatology (QIDS) for depression, Generalized Anxiety Disorder 7-item scale (GAD-7) for anxiety, and Young Mania Rating Scale (YMRS) for mania. The Figure shows the trends in Ms. W’s QIDS, GAD-7, and YMRS scores over the course of TMS treatment.

Prior to initiating TMS, her baseline scores were QIDS: 25, GAD-7: 9, and YMRS: 7, indicating very severe depression, mild anxiety, and the absence of mania. Ms. W’s psychotropic regimen remained unchanged throughout the course of her TMS treatment. After her motor threshold was determined, her TMS treatment began at 80% of motor threshold and was titrated up to 95% at the first treatment. By the second treatment, it was titrated up to 110%. By the third treatment, it was titrated up to 120% of motor threshold, which is the percentage used for the remaining treatments.

Initially, Ms. W reported some improvement in her depression, but this improvement was short-lived, and she continued to have elevated QIDS scores throughout treatment. By treatment #21, her QIDS and GAD-7 scores remained elevated, and her YMRS score had increased to 12. Due to this increase in YMRS score, the YMRS was repeated on the next 2 treatment days (#22 and #23), and her score was 6 on both days. When Ms. W presented for treatment #25, she was disorganized, irritable, and endorsed racing thoughts and decreased sleep. She was involuntarily hospitalized for mania, and TMS was discontinued. Unfortunately, she did not complete any clinical scales on that day. Upon admission to the hospital, Ms. W reported that at approximately the time of treatment #21, she had a fluctuation in her mood that consisted of increased goal-directed activity, decreased need for sleep, racing thoughts, and increased frivolous spending. She was treated with lithium, 300 mg twice a day. Lurasidone was increased to 80 mg/d at bedtime, and she continued clonazepam, trazodone, and methylphenidate at the previous doses. Over 14 days, Ms. W’s mania gradually resolved, and she was discharged home.

Continue to: Mixed evidence on the risk of switching

Mixed evidence on the risk of switching

Currently, several TMS devices are FDA-cleared for treating unipolar major depressive disorder, obsessive-compulsive disorder, and certain types of migraine. In March 2020, the FDA granted Breakthrough Device Designation for one TMS device, the NeuroStar Advanced Therapy System, for the treatment of bipolar depression.8 This designation created an expedited pathway for prioritized FDA review of the NeuroStar Advanced Therapy clinical trial program.

Few published clinical studies have evaluated using TMS to treat patients with bipolar depression.9-15 As with any antidepressant treatment for bipolar depression, there is a risk of affective switch from depression to mania when using TMS. Most of the literature available regarding the treatment of bipolar depression focuses on the risk of antidepressant medications to induce an affective switch. This risk depends on the class of the antidepressant,16 and there is a paucity of studies examining the risk of switch with TMS.

Interpretation of available literature is limited due to inconsistencies in the definition of an affective switch, the variable length of treatment with antidepressants, the use of concurrent medications such as mood stabilizers, and confounders such as the natural course of switching in bipolar disorder.17 Overall, the evidence for treatment-emergent mania related to antidepressant use is mixed, and the reported rate of treatment-emergent mania varies. In a systematic review and meta-analysis of >20 randomized controlled trials that included 1,316 patients with bipolar disorder who received antidepressants, Fornaro et al18 found that the incidence of treatment-emergent mania was 11.8%. It is generally recommended that if antidepressants are used to treat patients with bipolar disorder, they should be given with a traditional mood stabilizer to prevent affective switches, although whether mood stabilizers can prevent such switches is unproven.19

In a literature review by Xia et al,20 the affective switch rate in patients with bipolar depression who were treated with TMS was 3.1%, which was not statistically different from the affective switch rate with sham treatment.However, most of the patients included in this analysis were receiving other medications concurrently, and the length of treatment was 2 weeks, which is shorter than the average length of TMS treatment in clinical practice. In a recent literature review by Rachid,21 TMS was found to possibly induce manic episodes when used as monotherapy or in combination with antidepressants in patients with bipolar depression. To reduce the risk of treatment-emergent mania, current recommendations advise the use of a mood stabilizer for a minimum of 2 weeks before initiating TMS.1

In our case, Ms. W was receiving antidepressants (fluoxetine and trazodone), lurasidone (an SGA that is FDA-approved for bipolar depression), and methylphenidate before starting TMS treatment. Fluoxetine, trazodone, and methylphenidate may possibly contribute to an increased risk of an affective switch.1,22 Further studies are needed to clarify whether mood stabilizers or SGAs can prevent the development of mania in patients with bipolar depression who undergo TMS treatment.20

Continue to: Because bipolar depression poses...

Because bipolar depression poses a major clinical challenge,23,24 it is imperative to consider alternate treatments. When evaluating alternative treatment strategies, one may consider TMS in conjunction with a traditional mood stabilizer because this regimen may have a lower risk of treatment-emergent mania compared with antidepressants.1,25

Acknowledgment

The authors thank Dr. Sy Saeed for his expertise and guidance on this article.

Bottom Line

For patients with bipolar depression, treatment with transcranial magnetic stimulation in conjunction with a mood stabilizer may have lower rates of treatment-emergent mania than treatment with antidepressants.

Related Resources

- Transcranial magnetic stimulation: clinical applications for psychiatric practice. Bermudes RA, Lanocha K, Janicak PG, eds. American Psychiatric Association Publishing; 2017.

- Gold AK, Ornelas AC, Cirillo P, et al. Clinical applications of transcranial magnetic stimulation in bipolar disorder. Brain Behav. 2019;9(10):e01419. doi: 10.1002/brb3.1419

Drug Brand Names

Aripiprazole • Abilify

Bupropion • Wellbutrin

Cariprazine • Vraylar

Clonazepam • Klonopin

Fluoxetine • Prozac

Lamotrigine • Lamictal

Lithium • Eskalith, Lithobid

Lurasidone • Latuda

Methylphenidate • Ritalin, Concerta

Mirtazapine • Remeron

Olanzapine • Zyprexa

Olanzapine-fluoxetine • Symbyax

Quetiapine • Seroquel

Trazodone • Desyrel

Venlafaxine • Effexor

Because treatment resistance is a pervasive problem in bipolar depression, the use of neuromodulation treatments such as transcranial magnetic stimulation (TMS) is increasing for patients with this disorder.1-7 Patients with bipolar disorder tend to spend the majority of the time with depressive symptoms, which underscores the importance of providing effective treatment for bipolar depression, especially given the chronicity of this disease.2,3,5 Only a few medications are FDA-approved for treating bipolar depression (Table).

In this article, we describe the case of a patient with treatment-resistant bipolar depression undergoing adjunctive TMS treatment who experienced an affective switch from depression to mania. We also discuss evidence regarding the likelihood of treatment-emergent mania for antidepressants vs TMS in bipolar depression.

CASE

Ms. W, a 60-year-old White female with a history of bipolar I disorder and attention-deficit/hyperactivity disorder (ADHD), presented for TMS evaluation during a depressive episode. Throughout her life, she had experienced numerous manic episodes, but as she got older she noted an increasing frequency of depressive episodes. Over the course of her illness, she had completed adequate trials at therapeutic doses of many medications, including second-generation antipsychotics (SGAs) (aripiprazole, lurasidone, olanzapine, quetiapine), mood stabilizers (lamotrigine, lithium), and antidepressants (bupropion, venlafaxine, fluoxetine, mirtazapine, trazodone). A course of electroconvulsive therapy was not effective. Ms. W had a long-standing diagnosis of ADHD and had been treated with stimulants for >10 years, although it was unclear whether formal neuropsychological testing had been conducted to confirm this diagnosis. She had >10 suicide attempts and multiple psychiatric hospitalizations.

At her initial evaluation for TMS, Ms. W said she had depressive symptoms predominating for the past 2 years, including low mood, hopelessness, poor sleep, poor appetite, anhedonia, and suicidal ideation without a plan. At the time, she was taking clonazepam, 0.5 mg twice a day; lurasidone, 40 mg/d at bedtime; fluoxetine, 60 mg/d; trazodone, 50 mg/d at bedtime; and methylphenidate, 40 mg/d, and was participating in psychotherapy consistently.

After Ms. W and her clinicians discussed alternatives, risks, benefits, and adverse effects, she consented to adjunctive TMS treatment and provided written informed consent. The treatment plan was outlined as 6 weeks of daily TMS therapy (NeuroStar; Neuronetics, Malvern, PA), 1 treatment per day, 5 days a week. Her clinical status was assessed weekly using the Quick Inventory of Depressive Symptomatology (QIDS) for depression, Generalized Anxiety Disorder 7-item scale (GAD-7) for anxiety, and Young Mania Rating Scale (YMRS) for mania. The Figure shows the trends in Ms. W’s QIDS, GAD-7, and YMRS scores over the course of TMS treatment.

Prior to initiating TMS, her baseline scores were QIDS: 25, GAD-7: 9, and YMRS: 7, indicating very severe depression, mild anxiety, and the absence of mania. Ms. W’s psychotropic regimen remained unchanged throughout the course of her TMS treatment. After her motor threshold was determined, her TMS treatment began at 80% of motor threshold and was titrated up to 95% at the first treatment. By the second treatment, it was titrated up to 110%. By the third treatment, it was titrated up to 120% of motor threshold, which is the percentage used for the remaining treatments.

Initially, Ms. W reported some improvement in her depression, but this improvement was short-lived, and she continued to have elevated QIDS scores throughout treatment. By treatment #21, her QIDS and GAD-7 scores remained elevated, and her YMRS score had increased to 12. Due to this increase in YMRS score, the YMRS was repeated on the next 2 treatment days (#22 and #23), and her score was 6 on both days. When Ms. W presented for treatment #25, she was disorganized, irritable, and endorsed racing thoughts and decreased sleep. She was involuntarily hospitalized for mania, and TMS was discontinued. Unfortunately, she did not complete any clinical scales on that day. Upon admission to the hospital, Ms. W reported that at approximately the time of treatment #21, she had a fluctuation in her mood that consisted of increased goal-directed activity, decreased need for sleep, racing thoughts, and increased frivolous spending. She was treated with lithium, 300 mg twice a day. Lurasidone was increased to 80 mg/d at bedtime, and she continued clonazepam, trazodone, and methylphenidate at the previous doses. Over 14 days, Ms. W’s mania gradually resolved, and she was discharged home.

Continue to: Mixed evidence on the risk of switching

Mixed evidence on the risk of switching

Currently, several TMS devices are FDA-cleared for treating unipolar major depressive disorder, obsessive-compulsive disorder, and certain types of migraine. In March 2020, the FDA granted Breakthrough Device Designation for one TMS device, the NeuroStar Advanced Therapy System, for the treatment of bipolar depression.8 This designation created an expedited pathway for prioritized FDA review of the NeuroStar Advanced Therapy clinical trial program.

Few published clinical studies have evaluated using TMS to treat patients with bipolar depression.9-15 As with any antidepressant treatment for bipolar depression, there is a risk of affective switch from depression to mania when using TMS. Most of the literature available regarding the treatment of bipolar depression focuses on the risk of antidepressant medications to induce an affective switch. This risk depends on the class of the antidepressant,16 and there is a paucity of studies examining the risk of switch with TMS.

Interpretation of available literature is limited due to inconsistencies in the definition of an affective switch, the variable length of treatment with antidepressants, the use of concurrent medications such as mood stabilizers, and confounders such as the natural course of switching in bipolar disorder.17 Overall, the evidence for treatment-emergent mania related to antidepressant use is mixed, and the reported rate of treatment-emergent mania varies. In a systematic review and meta-analysis of >20 randomized controlled trials that included 1,316 patients with bipolar disorder who received antidepressants, Fornaro et al18 found that the incidence of treatment-emergent mania was 11.8%. It is generally recommended that if antidepressants are used to treat patients with bipolar disorder, they should be given with a traditional mood stabilizer to prevent affective switches, although whether mood stabilizers can prevent such switches is unproven.19

In a literature review by Xia et al,20 the affective switch rate in patients with bipolar depression who were treated with TMS was 3.1%, which was not statistically different from the affective switch rate with sham treatment.However, most of the patients included in this analysis were receiving other medications concurrently, and the length of treatment was 2 weeks, which is shorter than the average length of TMS treatment in clinical practice. In a recent literature review by Rachid,21 TMS was found to possibly induce manic episodes when used as monotherapy or in combination with antidepressants in patients with bipolar depression. To reduce the risk of treatment-emergent mania, current recommendations advise the use of a mood stabilizer for a minimum of 2 weeks before initiating TMS.1

In our case, Ms. W was receiving antidepressants (fluoxetine and trazodone), lurasidone (an SGA that is FDA-approved for bipolar depression), and methylphenidate before starting TMS treatment. Fluoxetine, trazodone, and methylphenidate may possibly contribute to an increased risk of an affective switch.1,22 Further studies are needed to clarify whether mood stabilizers or SGAs can prevent the development of mania in patients with bipolar depression who undergo TMS treatment.20


Because bipolar depression poses a major clinical challenge,23,24 it is imperative to consider alternative treatments. When evaluating such strategies, clinicians may consider TMS in conjunction with a traditional mood stabilizer, because this regimen may carry a lower risk of treatment-emergent mania than antidepressants.1,25

Acknowledgment

The authors thank Dr. Sy Saeed for his expertise and guidance on this article.

Bottom Line

For patients with bipolar depression, treatment with transcranial magnetic stimulation in conjunction with a mood stabilizer may have lower rates of treatment-emergent mania than treatment with antidepressants.

Related Resources

- Transcranial magnetic stimulation: clinical applications for psychiatric practice. Bermudes RA, Lanocha K, Janicak PG, eds. American Psychiatric Association Publishing; 2017.

- Gold AK, Ornelas AC, Cirillo P, et al. Clinical applications of transcranial magnetic stimulation in bipolar disorder. Brain Behav. 2019;9(10):e01419. doi: 10.1002/brb3.1419

Drug Brand Names

Aripiprazole • Abilify

Bupropion • Wellbutrin

Cariprazine • Vraylar

Clonazepam • Klonopin

Fluoxetine • Prozac

Lamotrigine • Lamictal

Lithium • Eskalith, Lithobid

Lurasidone • Latuda

Methylphenidate • Ritalin, Concerta

Mirtazapine • Remeron

Olanzapine • Zyprexa

Olanzapine-fluoxetine • Symbyax

Quetiapine • Seroquel

Trazodone • Desyrel

Venlafaxine • Effexor

1. Aaronson ST, Croarkin PE. Transcranial magnetic stimulation for the treatment of other mood disorders. In: Bermudes RA, Lanocha K, Janicak PG, eds. Transcranial magnetic stimulation: clinical applications for psychiatric practice. American Psychiatric Association Publishing; 2017:127-156.

2. Geddes JR, Miklowitz DJ. Treatment of bipolar disorder. Lancet. 2013;381(9878):1672-1682.

3. Gitlin M. Treatment-resistant bipolar disorder. Mol Psychiatry. 2006;11(3):227-240.

4. Harrison PJ, Geddes JR, Tunbridge EM. The emerging neurobiology of bipolar disorder. Trends Neurosci. 2018;41(1):18-30.

5. Merikangas KR, Jin R, He JP, et al. Prevalence and correlates of bipolar spectrum disorder in the World Mental Health Survey Initiative. Arch Gen Psychiatry. 2011;68(3):241-251.

6. Myczkowski ML, Fernandes A, Moreno M, et al. Cognitive outcomes of TMS treatment in bipolar depression: safety data from a randomized controlled trial. J Affect Disord. 2018;235:20-26.

7. Tavares DF, Myczkowski ML, Alberto RL, et al. Treatment of bipolar depression with deep TMS: results from a double-blind, randomized, parallel group, sham-controlled clinical trial. Neuropsychopharmacology. 2017;42(13):2593-2601.

8. Neuronetics. FDA grants NeuroStar® Advanced Therapy System Breakthrough Device Designation to treat bipolar depression. Accessed February 2, 2021. https://www.globenewswire.com/news-release/2020/03/06/1996447/0/en/FDA-Grants-NeuroStar-Advanced-Therapy-System-Breakthrough-Device-Designation-to-Treat-Bipolar-Depression.html

9. Cohen RB, Brunoni AR, Boggio PS, et al. Clinical predictors associated with duration of repetitive transcranial magnetic stimulation treatment for remission in bipolar depression: a naturalistic study. J Nerv Ment Dis. 2010;198(9):679-681.

10. Connolly KR, Helmer A, Cristancho MA, et al. Effectiveness of transcranial magnetic stimulation in clinical practice post-FDA approval in the United States: results observed with the first 100 consecutive cases of depression at an academic medical center. J Clin Psychiatry. 2012;73(4):e567-e573.

11. Dell’Osso B, D’Urso N, Castellano F, et al. Long-term efficacy after acute augmentative repetitive transcranial magnetic stimulation in bipolar depression: a 1-year follow-up study. J ECT. 2011;27(2):141-144.

12. Dell’Osso B, Mundo E, D’Urso N, et al. Augmentative repetitive navigated transcranial magnetic stimulation (rTMS) in drug-resistant bipolar depression. Bipolar Disord. 2009;11(1):76-81.

13. Harel EV, Zangen A, Roth Y, et al. H-coil repetitive transcranial magnetic stimulation for the treatment of bipolar depression: an add-on, safety and feasibility study. World J Biol Psychiatry. 2011;12(2):119-126.

14. Nahas Z, Kozel FA, Li X, et al. Left prefrontal transcranial magnetic stimulation (TMS) treatment of depression in bipolar affective disorder: a pilot study of acute safety and efficacy. Bipolar Disord. 2003;5(1):40-47.

15. Tamas RL, Menkes D, El-Mallakh RS. Stimulating research: a prospective, randomized, double-blind, sham-controlled study of slow transcranial magnetic stimulation in depressed bipolar patients. J Neuropsychiatry Clin Neurosci. 2007;19(2):198-199.

16. Tundo A, Cavalieri P, Navari S, et al. Treating bipolar depression - antidepressants and alternatives: a critical review of the literature. Acta Neuropsychiatr. 2011;23(3):94-105.

17. Gijsman HJ, Geddes JR, Rendell JM, et al. Antidepressants for bipolar depression: a systematic review of randomized, controlled trials. Am J Psychiatry. 2004;161(9):1537-1547.

18. Fornaro M, Anastasia A, Novello S, et al. Incidence, prevalence and clinical correlates of antidepressant‐emergent mania in bipolar depression: a systematic review and meta‐analysis. Bipolar Disord. 2018;20(3):195-227.

19. Pacchiarotti I, Bond DJ, Baldessarini RJ, et al. The International Society for Bipolar Disorders (ISBD) task force report on antidepressant use in bipolar disorders. Am J Psychiatry. 2013;170(11):1249-1262.

20. Xia G, Gajwani P, Muzina DJ, et al. Treatment-emergent mania in unipolar and bipolar depression: focus on repetitive transcranial magnetic stimulation. Int J Neuropsychopharmacol. 2008;11(1):119-130.

21. Rachid F. Repetitive transcranial magnetic stimulation and treatment-emergent mania and hypomania: a review of the literature. J Psychiatr Pract. 2017;23(2):150-159.

22. Victorin A, Rydén E, Thase M, et al. The risk of treatment-emergent mania with methylphenidate in bipolar disorder. Am J Psychiatry. 2017;174(4):341-348.

23. Hidalgo-Mazzei D, Berk M, Cipriani A, et al. Treatment-resistant and multi-therapy-resistant criteria for bipolar depression: consensus definition. Br J Psychiatry. 2019;214(1):27-35.

24. Baldessarini RJ, Vázquez GH, Tondo L. Bipolar depression: a major unsolved challenge. Int J Bipolar Disord. 2020;8(1):1.

25. Phillips AL, Burr RL, Dunner DL. Repetitive transcranial magnetic stimulation in the treatment of bipolar depression: experience from a clinical setting. J Psychiatr Pract. 2020;26(1):37-45.

More than just 3 dogs: Is burnout getting in the way of knowing our patients?

Do you ever leave work thinking “Why do I always feel so tired after my shift?” “How can I overcome this fatigue?” “Is this what I expected?” “How can I get over the dread of so much administrative work when I want more time for my patients?” As clinicians, we face these and many other questions every day. These questions are the result of feeling entrapped in a health care system that has forgotten that clinicians need enough time to get to know and connect with their patients. Burnout is real, and relying on wellness activities is not sufficient to overcome it. Instead, taking the time for some introspection and self-reflection can help to overcome these difficulties.

A valuable lesson

Ten months into my intern year as a psychiatry resident, while I was working a busy night shift at the psychiatry emergency unit, an 86-year-old man arrived alone, hopeless, and with persistent death wishes. He needed someone to hear and comfort him. Although he understood the nonnegotiable need to be hospitalized, he was extremely hesitant. But why? After all, he said he wanted to get better and feared going back home alone, yet he was unwilling to be admitted to the hospital for acute care.

I knew I had to address the reason behind my patient’s ambivalence by further exploring his history. Nonetheless, my physician-in-training mind was battling feelings of stress secondary to what at the time seemed to be a never-ending to-do list full of nurses’ requests and patient-related tasks. Despite an unconscious temptation to rush through the history to please my go, go, go! trainee mind, I do not regret having taken the time to ask and address the often-feared “why.” Why was my patient reluctant to accept my recommendation?

To my surprise, it turned out to be an important matter. He said, “I have 3 dogs back home I don’t want to leave alone. They are the only living memory of my wife, who passed away 5 months ago. They help me stay alive.” I was struck by a feeling of empathy, but also guilt for having almost rushed through the history and not being thorough enough to ask why.

Take time to explore ‘why’

Do we really recognize the importance of being inquisitive in our history-taking? What might seem a simple matter to us (in my patient’s case, his 3 dogs were his main support system) can be a significant cause of a patient’s distress. A patient’s hesitancy to accept our recommendations can stem from reasons that, unfortunately, we sometimes explore only partially or not at all. Asking why can open Pandora’s box. It can uncover feelings and emotions such as frustration, anger, anxiety, and sorrow. It can also reveal uncertainties regarding topics such as race, gender identity, sexual orientation, socioeconomic status, and religion. We should be driven by humble curiosity and tailor the interview by purposefully asking questions with the goal of learning and understanding our patients’ concerns. This practice serves to cultivate honest and trustworthy physician-patient relationships founded on empathy and respect.

If we know that obtaining an in-depth history is crucial for formulating a patient’s treatment plan, why do we sometimes fall into the trap of obtaining superficial ones, at times limiting ourselves to checklists? Reasons for not delving into our patients’ histories include (but are not limited to) an overload of patients, time constraints, a physician’s personal style, unconscious bias, suboptimal mentoring, and burnout. Of all these reasons, I worry the most about burnout. Physicians face seemingly insurmountable academic, institutional, and administrative demands. These constraints inarguably contribute to feeling rushed and, eventually, possibly burned out.

Using self-reflection to prevent burnout

Physician burnout, as well as attempts to define, identify, target, and prevent it, has been on the rise over the past decades. If burnout affects the physician-patient relationship, we should make efforts to mitigate it. We should try to rely on internal, rather than external, influences to improve our delivery of care. One way to do this is by really getting to know the patient in front of us: a father, mother, brother, sister, member of the community, etc. Understanding our patients’ needs and concerns promotes empathy and connectedness. Another way is to exercise self-reflection by asking ourselves: How do I feel about the care I delivered today? Did I make an effort to fully understand my patients’ concerns? Did I make each patient feel understood? Was I rushing through the day, or was I mindful of the person in front of me? Did I deliver the care I wish I had received?

Although there are innumerable ways to target physician burnout, these self-reflections are quick, simple exercises that can easily be woven into a clinician’s busy schedule. The goal is to be mindful of improving the quality of our interactions with patients, ultimately cultivating our own well-being by fostering a sense of fulfillment and satisfaction with our profession. I encourage clinicians to always go after the “why.” After all, why not? Thankfully, after some persuasion, my patient accepted voluntary admission and arranged with neighbors to take care of his 3 dogs.

A resident’s guide to lithium

Lithium has been used in psychiatry for more than half a century and is considered the gold standard for the treatment of acute mania and the maintenance treatment of bipolar disorder.1 Evidence supports its use to reduce suicidal behavior and as an adjunctive treatment for major depressive disorder.2 However, lithium has fallen out of favor because of its narrow therapeutic index and the introduction of newer psychotropic medications that have a quicker onset of action and do not require strict blood monitoring. For residents early in their training, keeping track of laboratory monitoring and medical screening can be confusing. Different institutions and countries have their own guidelines and recommendations for monitoring patients receiving lithium, which adds to the confusion.

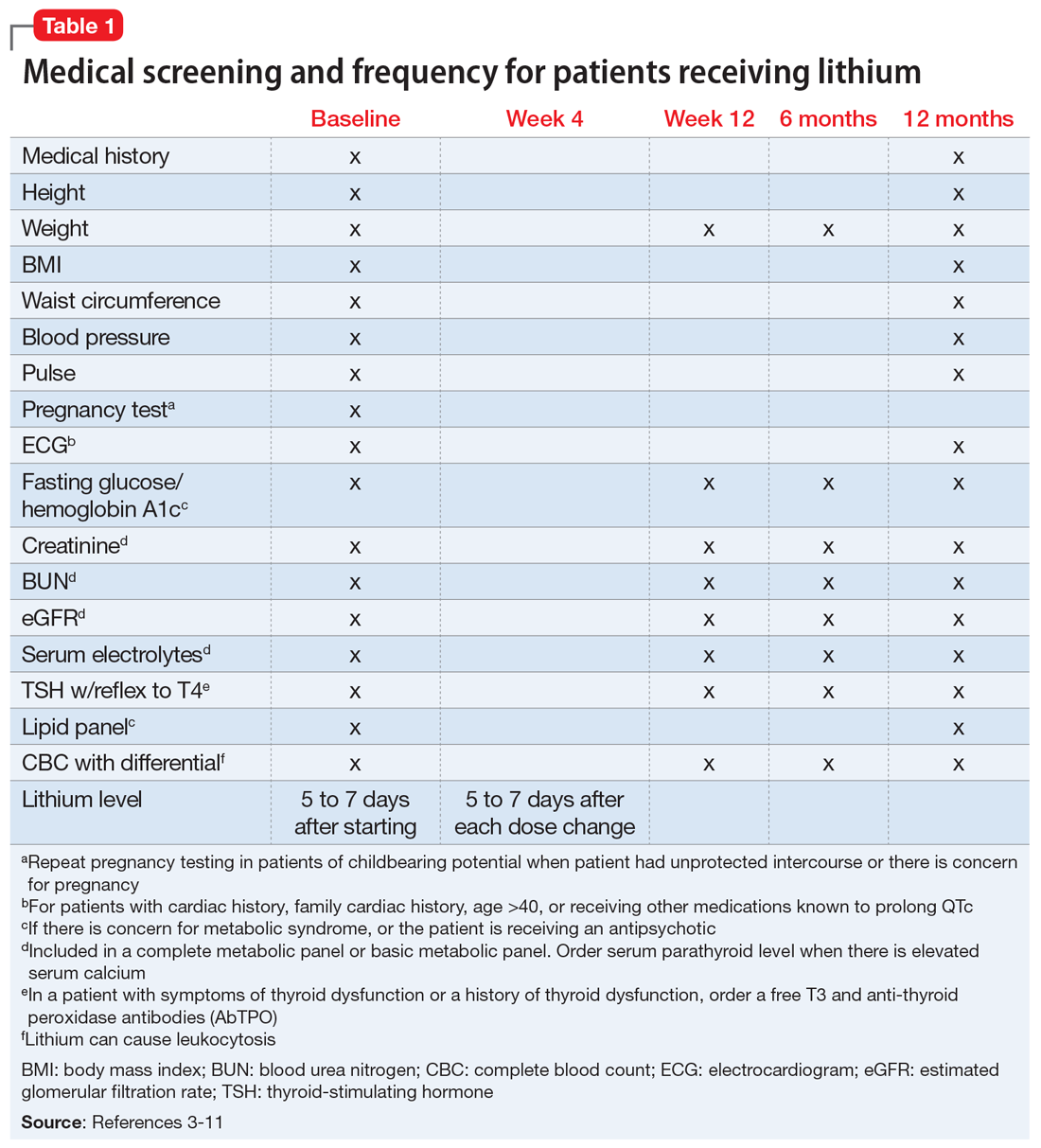

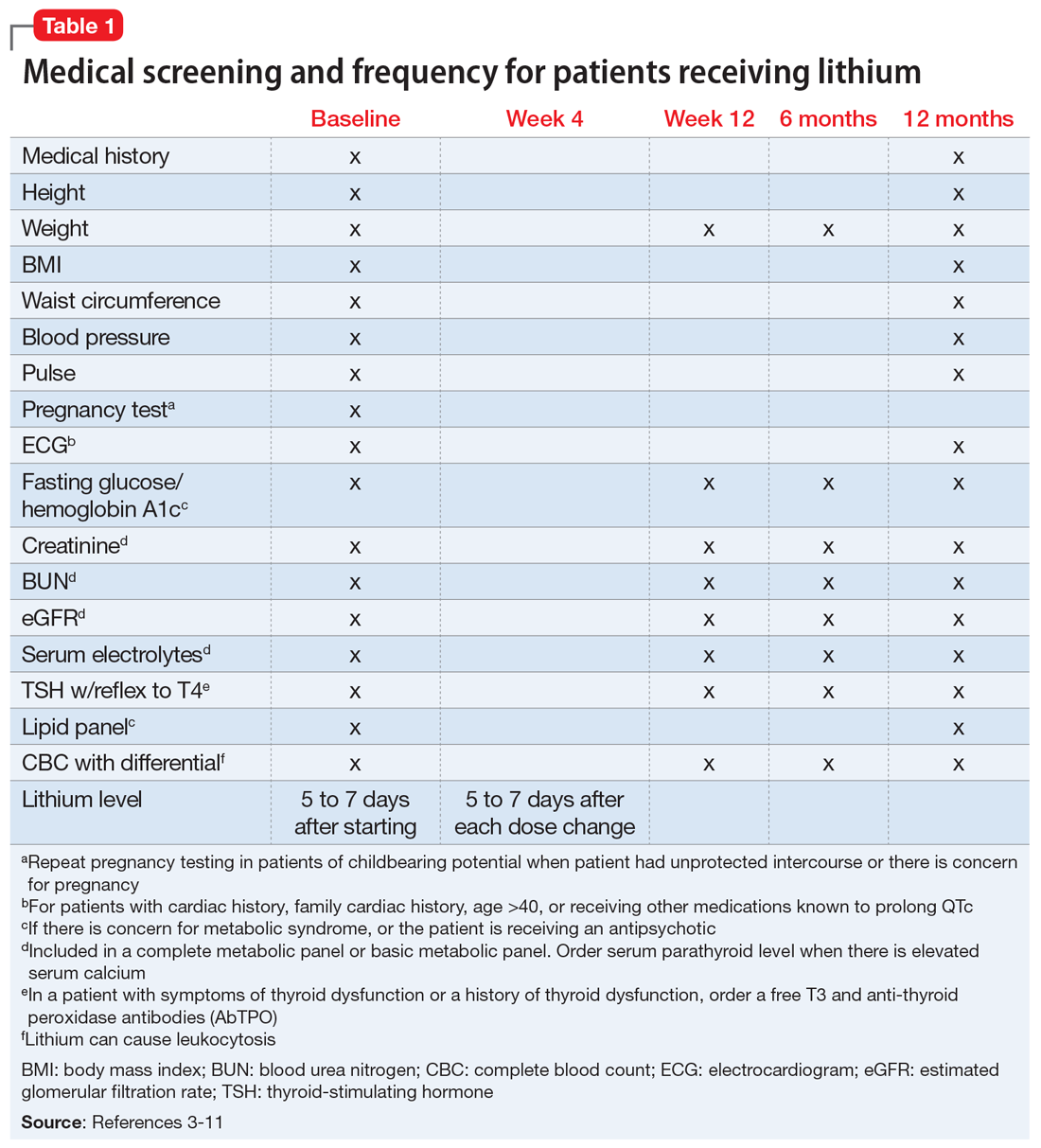

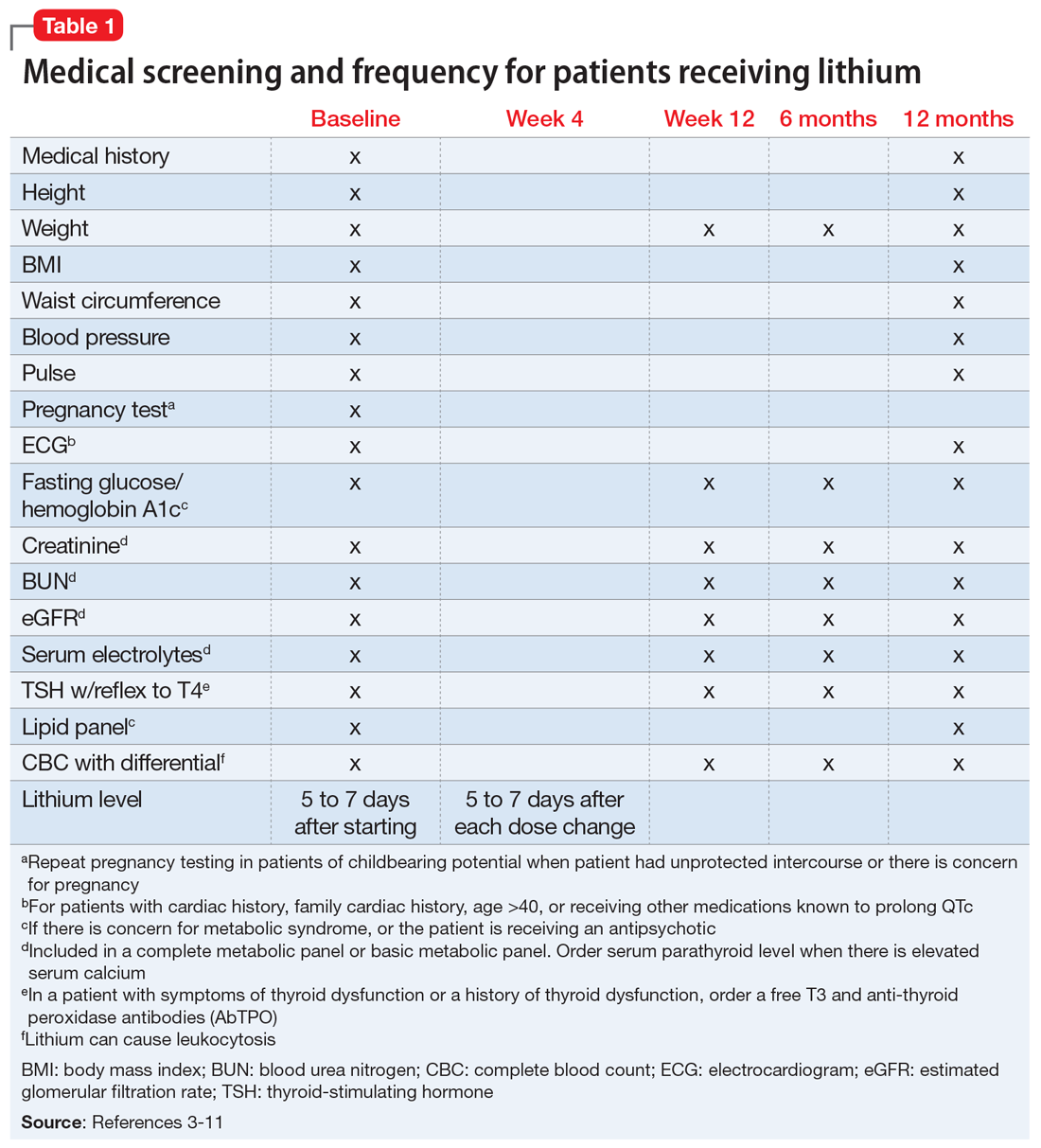

We completed a literature review to develop clear and concise recommendations for lithium monitoring for residents in our psychiatry residency program. These recommendations outline screening at baseline and after patients treated with lithium achieve stability. Table 1 outlines medical screening parameters, including bloodwork, that should be completed before initiating treatment, and how often such screening should be repeated.3-11 Table 2 incorporates these parameters into progress notes in the electronic medical record to keep track of laboratory values and when they were last drawn. Our aim is to help residents stay organized and prevent missed screenings.

How often should lithium levels be monitored?

After starting a patient on lithium, check the level within 5 to 7 days, and again 5 to 7 days after each dose change. Draw the lithium level 10 to 14 hours after the patient’s last dose (12 hours is best).1 Because of dosage changes, lithium levels usually are monitored more frequently during the first 3 months of treatment, until therapeutic levels are reached or symptoms are controlled. Once the patient is stable, monitor lithium levels every 3 months for the first year and every 6 months thereafter, taking into account the patient’s age, medical health, and how consistently they report symptoms and adverse effects.3,5 Continue monitoring levels every 3 months in older adults; in patients with renal dysfunction, thyroid dysfunction, hypercalcemia, or other significant medical comorbidities; and in patients taking medications that affect lithium levels, such as pain medications (nonsteroidal anti-inflammatory drugs can raise lithium levels), certain antihypertensives (angiotensin-converting enzyme inhibitors can raise lithium levels), and diuretics (thiazide diuretics can raise lithium levels; osmotic diuretics and carbonic anhydrase inhibitors can reduce lithium levels).1,3,5
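The timing rules in this section can be encoded as a small helper, for example to prefill a reminder in a progress-note template. The sketch below is a minimal illustration under stated assumptions, not a clinical tool: the function names are hypothetical, and `high_risk` is an assumed flag that stands in for the groups the text keeps on a 3-month schedule.

```python
from datetime import date, datetime, timedelta
from typing import Optional, Tuple

# Values taken from the text; all function names are hypothetical.
TROUGH_HOURS = (10, 12, 14)  # earliest, ideal, latest hours after the last dose
RECHECK_DAYS = (5, 7)        # days after starting lithium or changing the dose


def trough_draw_window(last_dose: datetime) -> Tuple[datetime, datetime, datetime]:
    """Return (earliest, ideal, latest) times to draw a trough lithium level."""
    earliest, ideal, latest = (last_dose + timedelta(hours=h) for h in TROUGH_HOURS)
    return earliest, ideal, latest


def recheck_window(start_or_change: date) -> Tuple[date, date]:
    """Return the date range for a level after starting lithium or a dose change."""
    first, last = (start_or_change + timedelta(days=d) for d in RECHECK_DAYS)
    return first, last


def months_until_next_level(months_on_lithium: int, stable: bool,
                            high_risk: bool) -> Optional[int]:
    """Suggested months until the next routine level, or None while still titrating.

    high_risk is an assumed flag for the groups the text keeps on a 3-month
    schedule: older adults; renal or thyroid dysfunction; hypercalcemia; other
    significant comorbidities; or interacting drugs (NSAIDs, ACE inhibitors,
    diuretics).
    """
    if not stable:
        return None  # still checking 5 to 7 days after each dose change
    if months_on_lithium < 12 or high_risk:
        return 3
    return 6
```

For instance, a last dose at 8 PM gives an ideal draw time of 8 AM the next morning, with an acceptable window from 6 AM to 10 AM.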

Lithium levels can vary by up to 0.5 mEq/L during transitions between manic, euthymic, and depressive states.12 On a consistent dosage, lithium levels decrease during mania because of hemodilution and increase during depression secondary to physiological effects specific to these episodes.13,14

Recommendations for plasma lithium levels (trough levels)

Mania. Lithium levels of 0.8 to 1.2 mEq/L often are needed to achieve symptom control during manic episodes.15 As levels approach 1.5 mEq/L, patients are at increased risk for intolerable adverse effects (eg, nausea and vomiting) and toxicity.16,17 Adverse effects at higher levels may result in patients abruptly discontinuing lithium. Patients who experience mania before a depressive episode at illness onset tend to have a better treatment response with lithium.18 Lithium monotherapy has been shown to be less effective for acute mania than antipsychotics or combination therapies.19 Consider combining lithium with valproate or an antipsychotic for patients who have tolerated lithium in the past and who plan to use lithium for maintenance treatment.20

Maintenance. In adults, the lithium level should be 0.60 to 0.80 mEq/L, but consider levels of 0.40 to 0.60 mEq/L in patients who have a good response to lithium but develop adverse effects at higher levels.21 For patients who do not respond to treatment, such as those with severe mania, maintenance levels can be increased to 0.76 to 0.90 mEq/L.22 These same recommendations for maintenance levels can be used for children and adolescents. In older adults, aim for maintenance levels of 0.4 to 0.6 mEq/L. For patients age 65 to 79, the maximum level is 0.7 to 0.8 mEq/L; in patients age >80, the level should not exceed 0.7 mEq/L. Lithium levels <0.4 mEq/L do not appear to be effective.21

Depression. Aim for a lithium level of 0.6 to 1.0 mEq/L for patients with depression.11
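The trough targets above can also be expressed as a lookup. This sketch assumes a small set of inputs (phase, age, and yes/no flags for poor tolerance and nonresponse); those inputs, the function names, treating "older adults" as age 65 or older, and applying the 0.7 mEq/L ceiling from age 80 onward are assumptions made for illustration. Because the text gives the ceiling for ages 65 to 79 as a range (0.7 to 0.8 mEq/L), the sketch returns the upper end of that range.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class TargetRange:
    """Trough serum lithium range in mEq/L."""
    low: float
    high: float


# Acute-phase ranges stated in the text.
ACUTE = {"mania": TargetRange(0.8, 1.2), "depression": TargetRange(0.6, 1.0)}


def maintenance_target(age: int, poor_tolerance: bool = False,
                       nonresponder: bool = False) -> TargetRange:
    """Maintenance range; children and adolescents use the adult values.

    Assumption: 'older adults' means age 65 or older.
    """
    if age >= 65 or poor_tolerance:
        return TargetRange(0.4, 0.6)
    if nonresponder:
        return TargetRange(0.76, 0.90)
    return TargetRange(0.6, 0.8)


def older_adult_ceiling(age: int) -> float | None:
    """Maximum level for older adults, or None below age 65.

    Ages 65 to 79: upper end of the stated 0.7 to 0.8 mEq/L range.
    Assumption: the 0.7 mEq/L limit stated for age >80 is applied from age 80.
    """
    if age < 65:
        return None
    return 0.8 if age < 80 else 0.7


def interpret(level: float, target: TargetRange) -> list[str]:
    """Compare a trough level (mEq/L) with a target range."""
    if level < 0.4:
        notes = ["below 0.4 mEq/L: unlikely to be effective"]
    elif level < target.low:
        notes = ["below target range"]
    elif level <= target.high:
        notes = ["within target range"]
    else:
        notes = ["above target range"]
    # Above the highest range cited (1.2 mEq/L); adverse-effect and toxicity
    # risk rises as levels approach 1.5 mEq/L.
    if level > 1.2:
        notes.append("above 1.2 mEq/L: watch for adverse effects and toxicity")
    return notes
```

For example, `interpret(0.7, maintenance_target(45))` returns `["within target range"]`, while the same level in an 85-year-old would sit at that patient's ceiling.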

Renal function monitoring frequency

Obtain a basic metabolic panel or comprehensive metabolic panel to establish baseline levels of creatinine, blood urea nitrogen (BUN), and estimated glomerular filtration rate (eGFR). Repeat testing at Week 12 and at 6 months to detect any changes. Renal function can be monitored every 6 to 12 months in stable patients but should be watched closely when a patient’s clinical status changes.3 A new, lower eGFR value after starting lithium therapy should be investigated with a repeat test in 2 weeks.23 Mild elevations in creatinine should be monitored, and further medical workup with a nephrologist is recommended for patients with a creatinine level ≥1.6 mg/dL.24 Note that creatinine can remain within normal limits even when glomerular function is considerably reduced. Creatinine levels also vary with body mass and diet: they can be low in patients with little muscle mass and elevated in patients who consume large amounts of protein.23,25
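The renal follow-up rules can be sketched as a function that returns the actions the text calls for after a single panel. The 1.6 mg/dL referral threshold and the 2-week repeat come from the text; `creatinine_uln` (the laboratory's upper reference limit) and `min_drop` (how large an eGFR decrease counts as "new lower", which the text does not define) are hypothetical inputs. Because creatinine can stay normal despite reduced glomerular function, an empty result should not be read as reassurance on its own.

```python
from __future__ import annotations

from datetime import date, timedelta

NEPHROLOGY_CREATININE_MG_DL = 1.6  # referral threshold stated in the text
REPEAT_AFTER = timedelta(weeks=2)  # repeat a new lower eGFR in 2 weeks


def renal_actions(creatinine_mg_dl: float, egfr: float, drawn_on: date,
                  prior_egfr: float | None = None,
                  creatinine_uln: float | None = None,
                  min_drop: float = 0.0) -> list[str]:
    """Return follow-up actions for one renal panel.

    creatinine_uln and min_drop are hypothetical inputs: the lab's upper
    reference limit, and the eGFR decrease treated as 'new lower'
    (with the default of 0.0, any decrease is flagged).
    """
    actions = []
    if creatinine_mg_dl >= NEPHROLOGY_CREATININE_MG_DL:
        actions.append("creatinine >= 1.6 mg/dL: refer for nephrology workup")
    elif creatinine_uln is not None and creatinine_mg_dl > creatinine_uln:
        actions.append("mild creatinine elevation: continue monitoring")
    if prior_egfr is not None and prior_egfr - egfr > min_drop:
        repeat_on = drawn_on + REPEAT_AFTER
        actions.append(f"new lower eGFR: repeat test on {repeat_on.isoformat()}")
    return actions
```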

Ordering a basic metabolic panel also allows electrolyte monitoring. Hyponatremia and dehydration can lead to elevated lithium levels and result in toxicity; hypokalemia might increase the risk of lithium-induced cardiac toxicity. Monitor calcium (corrected serum calcium) because hypercalcemia has been seen in patients treated with lithium.
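The text asks for corrected serum calcium but does not give a formula. A widely used albumin correction is corrected Ca (mg/dL) = measured total Ca (mg/dL) + 0.8 x (4.0 - albumin [g/dL]); the sketch below uses it, together with commonly cited limits of 135 mmol/L for sodium, 3.5 mmol/L for potassium, and 10.5 mg/dL for calcium. All three cutoffs are assumptions and should be replaced with the reporting laboratory's reference ranges.

```python
from __future__ import annotations


def corrected_calcium(total_ca_mg_dl: float, albumin_g_dl: float) -> float:
    """Albumin-corrected calcium (mg/dL), assuming a normal albumin of 4.0 g/dL."""
    return total_ca_mg_dl + 0.8 * (4.0 - albumin_g_dl)


def electrolyte_flags(sodium_mmol_l: float, potassium_mmol_l: float,
                      total_ca_mg_dl: float, albumin_g_dl: float, *,
                      na_low: float = 135.0, k_low: float = 3.5,
                      ca_high: float = 10.5) -> list[str]:
    """Flag the electrolyte findings the text links to lithium risk.

    Default cutoffs are assumed typical reference limits; use the lab's own.
    """
    flags = []
    if sodium_mmol_l < na_low:
        flags.append("low sodium: risk of raised lithium level and toxicity")
    if potassium_mmol_l < k_low:
        flags.append("low potassium: may raise risk of lithium cardiac toxicity")
    if corrected_calcium(total_ca_mg_dl, albumin_g_dl) > ca_high:
        flags.append("high corrected calcium: evaluate for hypercalcemia")
    return flags
```

The correction matters most when albumin is low: a measured calcium of 10.0 mg/dL with an albumin of 3.0 g/dL corrects to 10.8 mg/dL, above the assumed 10.5 mg/dL limit.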

Thyroid function monitoring frequency

Obtain levels of thyroid-stimulating hormone with reflex to free T4 at baseline, 12 weeks, and 6 months. Monitor thyroid function every 6 to 12 months in stable patients and when a patient’s clinical status changes, such as with new reports of medical or psychiatric symptoms and when there is concern for thyroid dysfunction.3
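Because thyroid testing and the renal panel follow the same cadence in this article (baseline, Week 12, 6 months, then every 6 to 12 months in stable patients), one schedule generator can cover both. The sketch below is a minimal illustration; `stable_interval_months`, `horizon_months`, and the helper names are hypothetical, and it does not capture the additional testing the text calls for when a patient's clinical status changes.

```python
from __future__ import annotations

import calendar
from datetime import date, timedelta


def add_months(d: date, months: int) -> date:
    """Same day of month `months` later, clamped to that month's last day."""
    month_index = d.month - 1 + months
    year = d.year + month_index // 12
    month = month_index % 12 + 1
    day = min(d.day, calendar.monthrange(year, month)[1])
    return date(year, month, day)


def lab_schedule(start: date, stable_interval_months: int = 12,
                 horizon_months: int = 36) -> list[date]:
    """Baseline, Week 12, 6 months, then every 6 to 12 months up to the horizon.

    Unscheduled tests for changes in clinical status are not included.
    """
    if stable_interval_months not in range(6, 13):
        raise ValueError("stable interval should be 6 to 12 months")
    dates = [start, start + timedelta(weeks=12), add_months(start, 6)]
    months = 6 + stable_interval_months
    while months <= horizon_months:
        dates.append(add_months(start, months))
        months += stable_interval_months
    return dates
```

For a baseline on 2024-01-15 with a 12-month stable interval, this yields draws on 2024-01-15, 2024-04-08, 2024-07-15, 2025-07-15, and 2026-07-15.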

Lithium and neurotoxicity

Lithium can have neurotoxic effects even at therapeutic levels, such as effects on fast-acting neurons that lead to dyscoordination or tremor.26 This is especially the case when lithium is combined with an antipsychotic,26,27 a combination used to treat bipolar I disorder with psychotic features. Older adults are at greater risk for neurotoxicity because of physiological changes associated with aging.28

Educate patients about adherence, diet, and exercise

Patients might stop taking their psychotropic medications when they start feeling better. Instruct patients to discuss discontinuation with the prescribing clinician before they stop any medication. Educate patients that rapidly discontinuing lithium therapy puts them at high risk of relapse29 and increases the risk of developing treatment-refractory symptoms.23,30 Emphasize the importance of staying hydrated and maintaining adequate sodium intake.17,31 Consuming excessive sodium can reduce lithium levels.17,32 Lithium levels can increase with excessive sweating, such as during exercise or time spent outdoors on hot days, because of sodium and volume loss.17,33

1. Tondo L, Alda M, Bauer M, et al. Clinical use of lithium salts: guide for users and prescribers. Int J Bipolar Disord. 2019;7(1):16. doi:10.1186/s40345-019-0151-2

2. Azab AN, Shnaider A, Osher Y, et al. Lithium nephrotoxicity. Int J Bipolar Disord. 2015;3(1):28. doi:10.1186/s40345-015-0028-y

3. American Psychiatric Association. Practice guideline for the treatment of patients with bipolar disorder (revision). Am J Psychiatry. 2002;159:1-50.

4. Yatham LN, Kennedy SH, Parikh SV, et al. Canadian Network for Mood and Anxiety Treatments (CANMAT) and International Society for Bipolar Disorders (ISBD) collaborative update of CANMAT guidelines for the management of patients with bipolar disorder: update 2013. Bipolar Disord. 2013;15:1-44. doi:10.1111/bdi.12025

5. National Collaborating Center for Mental Health (UK). Bipolar disorder: the NICE guideline on the assessment and management of bipolar disorder in adults, children and young people in primary and secondary care. The British Psychological Society and The Royal College of Psychiatrists; 2014.

6. Kupka R, Goossens P, van Bendegem M, et al. Multidisciplinaire richtlijn bipolaire stoornissen. Nederlandse Vereniging voor Psychiatrie (NVvP); 2015. Accessed August 10, 2020. http://www.nvvp.net/stream/richtlijn-bipolaire-stoornissen-2015

7. Malhi GS, Bassett D, Boyce P, et al. Royal Australian and New Zealand College of Psychiatrists clinical practice guidelines for mood disorders. Aust N Z J Psychiatry. 2015;49:1087-1206. doi:10.1177/0004867415617657

8. Nederlof M, Heerdink ER, Egberts ACG, et al. Monitoring of patients treated with lithium for bipolar disorder: an international survey. Int J Bipolar Disord. 2018;6(1):12. doi:10.1186/s40345-018-0120-1

9. Leo RJ, Sharma M, Chrostowski DA. A case of lithium-induced symptomatic hypercalcemia. Prim Care Companion J Clin Psychiatry. 2010;12(4):PCC.09l00917. doi:10.4088/PCC.09l00917yel

10. McHenry CR, Lee K. Lithium therapy and disorders of the parathyroid glands. Endocr Pract. 1996;2(2):103-109. doi:10.4158/EP.2.2.103

11. Stahl SM. The prescriber’s guide: Stahl’s essential psychopharmacology. 6th ed. Cambridge University Press; 2017.

12. Kukopulos A, Reginaldi D. Variations of serum lithium concentrations correlated with the phases of manic-depressive psychosis. Agressologie. 1978;19(D):219-222.

13. Rittmannsberger H, Malsiner-Walli G. Mood-dependent changes of serum lithium concentration in a rapid cycling patient maintained on stable doses of lithium carbonate. Bipolar Disord. 2013;15(3):333-337. doi:10.1111/bdi.12066

14. Hochman E, Weizman A, Valevski A, et al. Association between bipolar episodes and fluid and electrolyte homeostasis: a retrospective longitudinal study. Bipolar Disord. 2014;16(8):781-789. doi:10.1111/bdi.12248

15. Volkmann C, Bschor T, Köhler S. Lithium treatment over the lifespan in bipolar disorders. Front Psychiatry. 2020;11:377. doi:10.3389/fpsyt.2020.00377

16. Boltan DD, Fenves AZ. Effectiveness of normal saline diuresis in treating lithium overdose. Proc (Bayl Univ Med Cent). 2008;21(3):261-263. doi:10.1080/08998280.2008.11928407

17. Sadock BJ, Sadock VA, Ruiz P. Kaplan and Sadock’s synopsis of psychiatry. 11th ed. Wolters Kluwer; 2014.

18. Tighe SK, Mahon PB, Potash JB. Predictors of lithium response in bipolar disorder. Ther Adv Chronic Dis. 2011;2(3):209-226. doi:10.1177/2040622311399173

19. Cipriani A, Barbui C, Salanti G, et al. Comparative efficacy and acceptability of antimanic drugs in acute mania: a multiple-treatments meta-analysis. Lancet. 2011;378(9799):1306-1315. doi:10.1016/S0140-6736(11)60873-8

20. Smith LA, Cornelius V, Tacchi MJ, et al. Acute bipolar mania: a systematic review and meta-analysis of co-therapy vs monotherapy. Acta Psychiatr Scand. 2007;115(1):12-20. doi:10.1111/j.1600-0447.2006.00912.x

21. Nolen WA, Licht RW, Young AH, et al; ISBD/IGSLI Task Force on the treatment with lithium. What is the optimal serum level for lithium in the maintenance treatment of bipolar disorder? A systematic review and recommendations from the ISBD/IGSLI Task Force on treatment with lithium. Bipolar Disord. 2019;21(5):394-409. doi:10.1111/bdi.12805

22. Maj M, Starace F, Nolfe G, et al. Minimum plasma lithium levels required for effective prophylaxis in DSM III bipolar disorder: a prospective study. Pharmacopsychiatry. 1986;19(6):420-423. doi:10.1055/s-2007-1017280

23. Gupta S, Kripalani M, Khastgir U, et al. Management of the renal adverse effects of lithium. Adv Psychiatr Treat. 2013;19(6):457-466. doi:10.1192/apt.bp.112.010306

24. Gitlin M. Lithium and the kidney: an updated review. Drug Saf. 1999;20(3):231-243. doi:10.2165/00002018-199920030-00004

25. Jefferson JW. A clinician’s guide to monitoring kidney function in lithium-treated patients. J Clin Psychiatry. 2010;71(9):1153-1157. doi:10.4088/JCP.09m05917yel

26. Shah VC, Kayathi P, Singh G, et al. Enhance your understanding of lithium neurotoxicity. Prim Care Companion CNS Disord. 2015;17(3):10.4088/PCC.14l01767. doi:10.4088/PCC.14l01767

27. Netto I, Phutane VH. Reversible lithium neurotoxicity: review of the literature. Prim Care Companion CNS Disord. 2012;14(1):PCC.11r01197. doi:10.4088/PCC.11r01197

28. Mohandas E, Rajmohan V. Lithium use in special populations. Indian J Psychiatry. 2007;49(3):211-218. doi:10.4103/0019-5545.37325

29. Gupta S, Khastgir U. Drug information update. Lithium and chronic kidney disease: debates and dilemmas. BJPsych Bull. 2017;41(4):216-220. doi:10.1192/pb.bp.116.054031

30. Post RM. Preventing the malignant transformation of bipolar disorder. JAMA. 2018;319(12):1197-1198. doi:10.1001/jama.2018.0322

31. Timmer RT, Sands JM. Lithium intoxication. J Am Soc Nephrol. 1999;10(3):666-674.

32. Demers RG, Heninger GR. Sodium intake and lithium treatment in mania. Am J Psychiatry. 1971;128(1):100-104. doi:10.1176/ajp.128.1.100

33. Hedya SA, Avula A, Swoboda HD. Lithium toxicity. In: StatPearls. StatPearls Publishing; 2020.

Lithium has been used in psychiatry for more than half a century and is considered the gold standard for the treatment of acute mania and the maintenance treatment of bipolar disorder.1 Evidence supports its use to reduce suicidal behavior and as an adjunctive treatment for major depressive disorder.2 However, lithium has fallen out of favor because of its narrow therapeutic index and the introduction of newer psychotropic medications that have a quicker onset of action and do not require strict blood monitoring. For residents early in their training, keeping track of the required laboratory monitoring and medical screening can be confusing, and the fact that different institutions and countries have their own guidelines and recommendations for monitoring patients receiving lithium adds to the confusion.

We completed a literature review to develop clear and concise recommendations for lithium monitoring for residents in our psychiatry residency program. These recommendations outline screening at baseline and after patients treated with lithium achieve stability. Table 1 outlines medical screening parameters, including bloodwork, that should be completed before initiating treatment, and how often such screening should be repeated.3-11 Table 2 incorporates these parameters into progress notes in the electronic medical record to keep track of the laboratory values and when they were last drawn. Our aim is to help residents stay organized and prevent missed screenings.
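Table 2 is described as a progress-note template that records laboratory values and when they were last drawn. The following sketch illustrates that idea only; it does not reproduce Table 2, and the `LabEntry` fields, test names, and intervals are hypothetical. Each entry stores the date of the last draw and a repeat interval in months, and the rendered note marks any test that is due.

```python
from __future__ import annotations

from dataclasses import dataclass
from datetime import date


@dataclass
class LabEntry:
    """One row of a hypothetical lab-tracking section in a progress note."""
    name: str
    last_drawn: date | None  # None if never drawn
    interval_months: int


def months_between(earlier: date, later: date) -> int:
    """Whole calendar months elapsed from `earlier` to `later`."""
    months = (later.year - earlier.year) * 12 + (later.month - earlier.month)
    if later.day < earlier.day:
        months -= 1
    return months


def is_due(entry: LabEntry, today: date) -> bool:
    """A test is due if never drawn or if its interval has fully elapsed."""
    if entry.last_drawn is None:
        return True
    return months_between(entry.last_drawn, today) >= entry.interval_months


def note_lines(entries: list[LabEntry], today: date) -> list[str]:
    """Render one line per test, marking tests that are due."""
    lines = []
    for entry in entries:
        drawn = entry.last_drawn.isoformat() if entry.last_drawn else "never"
        flag = " (DUE)" if is_due(entry, today) else ""
        lines.append(f"{entry.name}: last drawn {drawn}{flag}")
    return lines
```

For instance, a lithium level last drawn on 2024-03-15 with a 3-month interval is marked as due on 2024-07-01.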
