Applications of ChatGPT and Large Language Models in Medicine and Health Care: Benefits and Pitfalls

The development of [artificial intelligence] is as fundamental as the creation of the microprocessor, the personal computer, the Internet, and the mobile phone. It will change the way people work, learn, travel, get health care, and communicate with each other.

Bill Gates 1

As the world emerges from the pandemic and the health care system faces new challenges, technology has become an increasingly important tool for health care professionals (HCPs). One such technology is the large language model (LLM), which has the potential to revolutionize the health care industry. ChatGPT, a popular LLM developed by OpenAI, has drawn particular attention in the medical community for performing at or near the passing threshold on the United States Medical Licensing Examination (USMLE).2 This article explores the benefits and potential pitfalls of using LLMs such as ChatGPT in medicine and health care.

Benefits

HCP burnout is a serious issue that can lead to lower productivity, more medical errors, and lower patient satisfaction.3 LLMs can relieve some of the administrative burden on HCPs: by assisting with billing, coding, insurance claims, and scheduling, tools like ChatGPT can free up time for what HCPs do best, providing quality patient care.4 ChatGPT can also assist with diagnosis by drawing on the large volume of medical information in its training data. Because it has learned relationships among medical conditions, symptoms, and treatment options, ChatGPT can suggest a differential diagnosis for the HCP to evaluate (Figure 1).

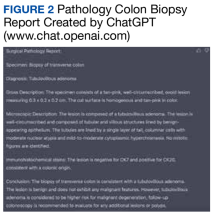

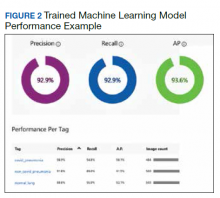

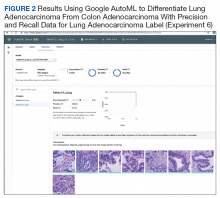

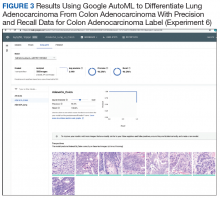

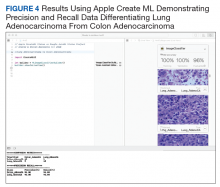

Image-based specialists such as radiologists, pathologists, and dermatologists can benefit from combining computer vision diagnostics with ChatGPT's report-generation abilities, which may streamline the diagnostic workflow and improve diagnostic accuracy (Figure 2).
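The combined workflow described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the authors' method or any product's API: it assumes a hypothetical image classifier that returns labeled findings with confidence scores, and it shows only how those findings might be turned into a constrained prompt from which an LLM drafts a report. The `Finding` class, the `build_report_prompt` function, and the 0.5 threshold are all hypothetical, and the call to an actual LLM is deliberately left out.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Finding:
    """One output of a hypothetical image classifier."""
    label: str          # e.g. "pleural effusion"
    probability: float  # model confidence in [0, 1]


def build_report_prompt(findings: List[Finding], threshold: float = 0.5,
                        modality: str = "chest X-ray") -> str:
    """Turn classifier findings into a prompt asking an LLM to draft a report.

    Findings at or above `threshold` are listed as positive; the rest are listed
    as not detected, so the draft is not left to guess about them.
    """
    positive = [f for f in findings if f.probability >= threshold]
    negative = [f for f in findings if f.probability < threshold]
    lines = [
        f"Draft a concise {modality} report for radiologist review.",
        "Use only the findings listed below; do not add any others.",
        "Positive findings:",
    ]
    # Fall back to "- none" when a category is empty.
    lines += [f"- {f.label} (confidence {f.probability:.2f})" for f in positive] or ["- none"]
    lines.append("Not detected:")
    lines += [f"- {f.label}" for f in negative] or ["- none"]
    return "\n".join(lines)


# Usage with made-up classifier output; the resulting prompt would then be
# sent to whichever LLM the institution uses (not shown here).
findings = [Finding("pleural effusion", 0.91), Finding("pneumothorax", 0.07)]
print(build_report_prompt(findings))
```

Listing below-threshold findings explicitly, rather than dropping them, is one way to keep a drafted report from silently omitting negatives; in this sketch the specialist still reviews and signs the final report.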

Although ChatGPT and other LLMs have potential benefits in mental health care, they are not a substitute for human interaction and personalized care. ChatGPT can retain information from earlier in a conversation, but it cannot provide the level of personalized, high-quality care that a professional therapist or HCP can. By augmenting the work of HCPs, however, ChatGPT and other LLMs could make mental health care more accessible and efficient. In addition to enabling screening in underserved areas, ChatGPT technology may improve the competence of physician assistants and nurse practitioners in delivering mental health care. Given the rising incidence of mental health problems among veterans, the relevance of ChatGPT-like tools is likely to grow over time.9

ChatGPT can also be integrated into health care organizations’ websites and mobile apps, giving patients instant access to medical information, self-care advice, and symptom checkers, and helping them schedule appointments and arrange transportation. These features can reduce the burden on health care staff and help patients stay informed and motivated to take an active role in their health. Health care organizations can also use ChatGPT to engage patients through medication renewal reminders and self-care assistance.4,6,10,11

The potential of artificial intelligence (AI) in medical education and research is immense. In a study by Gilson and colleagues, ChatGPT showed promising results as a medical education tool.12 ChatGPT can simulate clinical scenarios, provide real-time feedback, and help learners sharpen their diagnostic skills. It also offers new interactive and personalized learning opportunities for medical students and HCPs.13 For researchers, ChatGPT can streamline data analysis, administer surveys or questionnaires, facilitate the collection of data on preferences and experiences, and help draft scientific publications.14 Nevertheless, to fully unlock the potential of these AI models, additional tools that check for factual accuracy, plagiarism, and copyright infringement must be developed.15,16

AI Bill of Rights

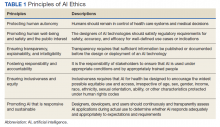

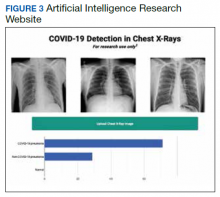

To protect the American public, the White House Office of Science and Technology Policy (OSTP) has released a Blueprint for an AI Bill of Rights that sets out 5 principles for guarding against the harmful effects of AI models: safe and effective systems; algorithmic discrimination protections; data privacy; notice and explanation; and human alternatives, consideration, and fallback (Figure 3).17

One of the biggest challenges with LLMs like ChatGPT is the prevalence of inaccurate information, so-called hallucinations.16 These inaccuracies arise because LLMs generate plausible-sounding text without any built-in way to distinguish real from fabricated information. To reduce hallucinations, researchers have proposed several methods, including training models on more diverse data, adversarial training, and human-in-the-loop approaches.21 In addition, medicine-specific models such as GatorTron, Med-PaLM, and Almanac have been developed to improve the factual accuracy of results.22-24 Unfortunately, only GatorTron is available to the public, through the NVIDIA developer program.25
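A human-in-the-loop safeguard of the kind mentioned above can be illustrated with a small review gate. This is a hypothetical sketch, not a description of GatorTron, Med-PaLM, Almanac, or any other cited system: it assumes each LLM draft arrives with a list of the sources it cites and that the institution maintains a set of approved sources. Drafts that cite nothing, or cite unapproved sources, are flagged for extra scrutiny, and no draft can be released until a clinician approves it. All class and function names are illustrative.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import List, Optional, Set


class Status(Enum):
    PENDING_REVIEW = "pending_review"
    APPROVED = "approved"
    REJECTED = "rejected"


@dataclass
class Draft:
    """An LLM-generated answer awaiting clinician review (hypothetical structure)."""
    text: str
    sources: List[str] = field(default_factory=list)  # identifiers of sources the draft cites
    status: Status = Status.PENDING_REVIEW
    reviewer: Optional[str] = None


def needs_extra_scrutiny(draft: Draft, approved_sources: Set[str]) -> bool:
    """Flag drafts that cite no sources or cite any source outside the approved set."""
    return not draft.sources or any(s not in approved_sources for s in draft.sources)


def review(draft: Draft, reviewer: str, approve: bool) -> Draft:
    """Record a clinician's decision on the draft."""
    draft.reviewer = reviewer
    draft.status = Status.APPROVED if approve else Status.REJECTED
    return draft


def release(draft: Draft) -> str:
    """Return the draft text only if a clinician has approved it."""
    if draft.status is not Status.APPROVED:
        raise PermissionError("draft has not been approved by a clinician")
    return draft.text


# Usage: an uncited draft is flagged and can be released only after approval.
approved = {"institutional-guideline-001"}
draft = Draft("Example draft answer.")
print(needs_extra_scrutiny(draft, approved))  # True: no sources cited
review(draft, reviewer="clinician-42", approve=True)
print(release(draft))  # Example draft answer.
```

The design choice here is that release is blocked by default: the LLM output stays a draft until a named reviewer signs off, which keeps the HCP, not the model, accountable for what reaches the patient.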

Despite these shortcomings, the future of LLMs in health care is promising. Although these models will not replace HCPs, they can reduce unnecessary burdens on them, help prevent burnout, and enable HCPs and patients to spend more time together. Establishing an official hospital AI oversight body to promote best practices could help ensure the trustworthy implementation of these new technologies.26

Conclusions

The use of ChatGPT and other LLMs has the potential to revolutionize health care. By assisting HCPs with administrative tasks, improving the accuracy and reliability of diagnoses, and engaging patients, ChatGPT can help health care organizations provide better care. While LLMs are not a substitute for human interaction and personalized care, they can augment the work of HCPs, making health care more accessible and efficient. AI technologies like ChatGPT also offer enormous potential in medical education and research. As the health care industry continues to evolve, it will be exciting to see how ChatGPT and other LLMs are used to improve patient outcomes and quality of care. To ensure that the benefits outweigh the risks, it is essential to develop trustworthy AI health care products and to establish oversight bodies to govern their implementation. By doing so, we can help HCPs focus on what matters most: providing high-quality care to patients.

Acknowledgments

This material is the result of work supported by resources and the use of facilities at the James A. Haley Veterans’ Hospital.

1. Gates B. The age of AI has begun. March 21, 2023. Accessed May 10, 2023. https://www.gatesnotes.com/the-age-of-ai-has-begun

2. Kung TH, Cheatham M, Medenilla A, et al. Performance of ChatGPT on USMLE: potential for AI-assisted medical education using large language models. PLOS Digit Health. 2023;2(2):e0000198. Published 2023 Feb 9. doi:10.1371/journal.pdig.0000198

3. Shanafelt TD, West CP, Sinsky C, et al. Changes in burnout and satisfaction with work-life integration in physicians and the general US working population between 2011 and 2020. Mayo Clin Proc. 2022;97(3):491-506. doi:10.1016/j.mayocp.2021.11.021

4. Goodman RS, Patrinely JR Jr, Osterman T, Wheless L, Johnson DB. On the cusp: considering the impact of artificial intelligence language models in healthcare. Med. 2023;4(3):139-140. doi:10.1016/j.medj.2023.02.008

5. Will ChatGPT transform healthcare? Nat Med. 2023;29(3):505-506. doi:10.1038/s41591-023-02289-5

6. Hopkins AM, Logan JM, Kichenadasse G, Sorich MJ. Artificial intelligence chatbots will revolutionize how cancer patients access information: ChatGPT represents a paradigm-shift. JNCI Cancer Spectr. 2023;7(2):pkad010. doi:10.1093/jncics/pkad010

7. Babar Z, van Laarhoven T, Zanzotto FM, Marchiori E. Evaluating diagnostic content of AI-generated radiology reports of chest X-rays. Artif Intell Med. 2021;116:102075. doi:10.1016/j.artmed.2021.102075

8. Lecler A, Duron L, Soyer P. Revolutionizing radiology with GPT-based models: current applications, future possibilities and limitations of ChatGPT. Diagn Interv Imaging. 2023;S2211-5684(23)00027-X. doi:10.1016/j.diii.2023.02.003

9. Germain JM. Is ChatGPT smart enough to practice mental health therapy? March 23, 2023. Accessed May 11, 2023. https://www.technewsworld.com/story/is-chatgpt-smart-enough-to-practice-mental-health-therapy-178064.html

10. Cascella M, Montomoli J, Bellini V, Bignami E. Evaluating the feasibility of ChatGPT in healthcare: an analysis of multiple clinical and research scenarios. J Med Syst. 2023;47(1):33. Published 2023 Mar 4. doi:10.1007/s10916-023-01925-4

11. Jungwirth D, Haluza D. Artificial intelligence and public health: an exploratory study. Int J Environ Res Public Health. 2023;20(5):4541. Published 2023 Mar 3. doi:10.3390/ijerph20054541

12. Gilson A, Safranek CW, Huang T, et al. How does ChatGPT perform on the United States Medical Licensing Examination? The implications of large language models for medical education and knowledge assessment. JMIR Med Educ. 2023;9:e45312. Published 2023 Feb 8. doi:10.2196/45312

13. Eysenbach G. The role of ChatGPT, generative language models, and artificial intelligence in medical education: a conversation with ChatGPT and a call for papers. JMIR Med Educ. 2023;9:e46885. Published 2023 Mar 6. doi:10.2196/46885

14. Macdonald C, Adeloye D, Sheikh A, Rudan I. Can ChatGPT draft a research article? An example of population-level vaccine effectiveness analysis. J Glob Health. 2023;13:01003. Published 2023 Feb 17. doi:10.7189/jogh.13.01003

15. Masters K. Ethical use of artificial intelligence in health professions education: AMEE Guide No. 158. Med Teach. 2023;1-11. doi:10.1080/0142159X.2023.2186203

16. Smith CS. Hallucinations could blunt ChatGPT’s success. IEEE Spectrum. March 13, 2023. Accessed May 11, 2023. https://spectrum.ieee.org/ai-hallucination

17. Executive Office of the President, Office of Science and Technology Policy. Blueprint for an AI Bill of Rights. Accessed May 11, 2023. https://www.whitehouse.gov/ostp/ai-bill-of-rights

18. Executive Office of the President. Executive Order 13960: promoting the use of trustworthy artificial intelligence in the federal government. Fed Regist. 2020;85(236):78939-78943.

19. US Department of Commerce, National Institute of Standards and Technology. Artificial Intelligence Risk Management Framework (AI RMF 1.0). Published January 2023. doi:10.6028/NIST.AI.100-1

20. Microsoft. Azure Cognitive Search—Cloud Search Service. Accessed May 11, 2023. https://azure.microsoft.com/en-us/products/search

21. Aiyappa R, An J, Kwak H, Ahn YY. Can we trust the evaluation on ChatGPT? March 22, 2023. Accessed May 11, 2023. https://arxiv.org/abs/2303.12767v1

22. Yang X, Chen A, Pournejatian N, et al. GatorTron: a large clinical language model to unlock patient information from unstructured electronic health records. Updated December 16, 2022. Accessed May 11, 2023. https://arxiv.org/abs/2203.03540v3

23. Singhal K, Azizi S, Tu T, et al. Large language models encode clinical knowledge. December 26, 2022. Accessed May 11, 2023. https://arxiv.org/abs/2212.13138v1

24. Zakka C, Chaurasia A, Shad R, Hiesinger W. Almanac: knowledge-grounded language models for clinical medicine. March 1, 2023. Accessed May 11, 2023. https://arxiv.org/abs/2303.01229v1

25. NVIDIA. GatorTron-OG. Accessed May 11, 2023. https://catalog.ngc.nvidia.com/orgs/nvidia/teams/clara/models/gatortron_og

26. Borkowski AA, Jakey CE, Thomas LB, Viswanadhan N, Mastorides SM. Establishing a hospital artificial intelligence committee to improve patient care. Fed Pract. 2022;39(8):334-336. doi:10.12788/fp.0299

The development of [artificial intelligence] is as fundamental as the creation of the microprocessor, the personal computer, the Internet, and the mobile phone. It will change the way people work, learn, travel, get health care, and communicate with each other.

Bill Gates 1

As the world emerges from the pandemic and the health care system faces new challenges, technology has become an increasingly important tool for health care professionals (HCPs). One such technology is the large language model (LLM), which has the potential to revolutionize the health care industry. ChatGPT, a popular LLM developed by OpenAI, has gained particular attention in the medical community for its ability to pass the United States Medical Licensing Exam.2 This article will explore the benefits and potential pitfalls of using LLMs like ChatGPT in medicine and health care.

Benefits

HCP burnout is a serious issue that can lead to lower productivity, increased medical errors, and decreased patient satisfaction.3 LLMs can alleviate some administrative burdens on HCPs, allowing them to focus on patient care. By assisting with billing, coding, insurance claims, and organizing schedules, LLMs like ChatGPT can free up time for HCPs to focus on what they do best: providing quality patient care.4 ChatGPT also can assist with diagnoses by providing accurate and reliable information based on a vast amount of clinical data. By learning the relationships between different medical conditions, symptoms, and treatment options, ChatGPT can provide an appropriate differential diagnosis (Figure 1).

Imaging medical specialists like radiologists, pathologists, dermatologists, and others can benefit from combining computer vision diagnostics with ChatGPT report creation abilities to streamline the diagnostic workflow and improve diagnostic accuracy (Figure 2).

Although using ChatGPT and other LLMs in mental health care has potential benefits, it is essential to note that they are not a substitute for human interaction and personalized care. While ChatGPT can remember information from previous conversations, it cannot provide the same level of personalized, high-quality care that a professional therapist or HCP can. However, by augmenting the work of HCPs, ChatGPT and other LLMs have the potential to make mental health care more accessible and efficient. In addition to providing effective screening in underserved areas, ChatGPT technology may improve the competence of physician assistants and nurse practitioners in delivering mental health care. With the increased incidence of mental health problems in veterans, the pertinence of a ChatGPT-like feature will only increase with time.9

ChatGPT can also be integrated into health care organizations’ websites and mobile apps, providing patients instant access to medical information, self-care advice, symptom checkers, scheduling appointments, and arranging transportation. These features can reduce the burden on health care staff and help patients stay informed and motivated to take an active role in their health. Additionally, health care organizations can use ChatGPT to engage patients by providing reminders for medication renewals and assistance with self-care.4,6,10,11

The potential of artificial intelligence (AI) in the field of medical education and research is immense. According to a study by Gilson and colleagues, ChatGPT has shown promising results as a medical education tool.12 ChatGPT can simulate clinical scenarios, provide real-time feedback, and improve diagnostic skills. It also offers new interactive and personalized learning opportunities for medical students and HCPs.13 ChatGPT can help researchers by streamlining the process of data analysis. It can also administer surveys or questionnaires, facilitate data collection on preferences and experiences, and help in writing scientific publications.14 Nevertheless, to fully unlock the potential of these AI models, additional models that perform checks for factual accuracy, plagiarism, and copyright infringement must be developed.15,16

AI Bill of Rights

In order to protect the American public, the White House Office of Science and Technology Policy (OSTP) has released a blueprint for an AI Bill of Rights that emphasizes 5 principles to protect the public from the harmful effects of AI models, including safe and effective systems; algorithmic discrimination protection; data privacy; notice and explanation; and human alternatives, considerations, and fallback (Figure 3).17

One of the biggest challenges with LLMs like ChatGPT is the prevalence of inaccurate information or so-called hallucinations.16 These inaccuracies stem from the inability of LLMs to distinguish between real and fake information. To prevent hallucinations, researchers have proposed several methods, including training models on more diverse data, using adversarial training methods, and human-in-the-loop approaches.21 In addition, medicine-specific models like GatorTron, medPaLM, and Almanac were developed, increasing the accuracy of factual results.22-24 Unfortunately, only the GatorTron model is available to the public through the NVIDIA developers’ program.25

Despite these shortcomings, the future of LLMs in health care is promising. Although these models will not replace HCPs, they can help reduce the unnecessary burden on them, prevent burnout, and enable HCPs and patients spend more time together. Establishing an official hospital AI oversight governing body that would promote best practices could ensure the trustworthy implementation of these new technologies.26

Conclusions

The use of ChatGPT and other LLMs in health care has the potential to revolutionize the industry. By assisting HCPs with administrative tasks, improving the accuracy and reliability of diagnoses, and engaging patients, ChatGPT can help health care organizations provide better care to their patients. While LLMs are not a substitute for human interaction and personalized care, they can augment the work of HCPs, making health care more accessible and efficient. As the health care industry continues to evolve, it will be exciting to see how ChatGPT and other LLMs are used to improve patient outcomes and quality of care. In addition, AI technologies like ChatGPT offer enormous potential in medical education and research. To ensure that the benefits outweigh the risks, developing trustworthy AI health care products and establishing oversight governing bodies to ensure their implementation is essential. By doing so, we can help HCPs focus on what matters most, providing high-quality care to patients.

Acknowledgments

This material is the result of work supported by resources and the use of facilities at the James A. Haley Veterans’ Hospital.

The development of [artificial intelligence] is as fundamental as the creation of the microprocessor, the personal computer, the Internet, and the mobile phone. It will change the way people work, learn, travel, get health care, and communicate with each other.

Bill Gates 1

As the world emerges from the pandemic and the health care system faces new challenges, technology has become an increasingly important tool for health care professionals (HCPs). One such technology is the large language model (LLM), which has the potential to revolutionize the health care industry. ChatGPT, a popular LLM developed by OpenAI, has gained particular attention in the medical community for its ability to pass the United States Medical Licensing Exam.2 This article will explore the benefits and potential pitfalls of using LLMs like ChatGPT in medicine and health care.

Benefits

HCP burnout is a serious issue that can lead to lower productivity, increased medical errors, and decreased patient satisfaction.3 LLMs can alleviate some administrative burdens on HCPs, allowing them to focus on patient care. By assisting with billing, coding, insurance claims, and organizing schedules, LLMs like ChatGPT can free up time for HCPs to focus on what they do best: providing quality patient care.4 ChatGPT also can assist with diagnoses by providing accurate and reliable information based on a vast amount of clinical data. By learning the relationships between different medical conditions, symptoms, and treatment options, ChatGPT can provide an appropriate differential diagnosis (Figure 1).

Imaging medical specialists like radiologists, pathologists, dermatologists, and others can benefit from combining computer vision diagnostics with ChatGPT report creation abilities to streamline the diagnostic workflow and improve diagnostic accuracy (Figure 2).

Although using ChatGPT and other LLMs in mental health care has potential benefits, it is essential to note that they are not a substitute for human interaction and personalized care. While ChatGPT can remember information from previous conversations, it cannot provide the same level of personalized, high-quality care that a professional therapist or HCP can. However, by augmenting the work of HCPs, ChatGPT and other LLMs have the potential to make mental health care more accessible and efficient. In addition to providing effective screening in underserved areas, ChatGPT technology may improve the competence of physician assistants and nurse practitioners in delivering mental health care. With the increased incidence of mental health problems in veterans, the pertinence of a ChatGPT-like feature will only increase with time.9

ChatGPT can also be integrated into health care organizations’ websites and mobile apps, providing patients instant access to medical information, self-care advice, symptom checkers, scheduling appointments, and arranging transportation. These features can reduce the burden on health care staff and help patients stay informed and motivated to take an active role in their health. Additionally, health care organizations can use ChatGPT to engage patients by providing reminders for medication renewals and assistance with self-care.4,6,10,11

The potential of artificial intelligence (AI) in the field of medical education and research is immense. According to a study by Gilson and colleagues, ChatGPT has shown promising results as a medical education tool.12 ChatGPT can simulate clinical scenarios, provide real-time feedback, and improve diagnostic skills. It also offers new interactive and personalized learning opportunities for medical students and HCPs.13 ChatGPT can help researchers by streamlining the process of data analysis. It can also administer surveys or questionnaires, facilitate data collection on preferences and experiences, and help in writing scientific publications.14 Nevertheless, to fully unlock the potential of these AI models, additional models that perform checks for factual accuracy, plagiarism, and copyright infringement must be developed.15,16

AI Bill of Rights

In order to protect the American public, the White House Office of Science and Technology Policy (OSTP) has released a blueprint for an AI Bill of Rights that emphasizes 5 principles to protect the public from the harmful effects of AI models, including safe and effective systems; algorithmic discrimination protection; data privacy; notice and explanation; and human alternatives, considerations, and fallback (Figure 3).17

One of the biggest challenges with LLMs like ChatGPT is the prevalence of inaccurate information or so-called hallucinations.16 These inaccuracies stem from the inability of LLMs to distinguish between real and fake information. To prevent hallucinations, researchers have proposed several methods, including training models on more diverse data, using adversarial training methods, and human-in-the-loop approaches.21 In addition, medicine-specific models like GatorTron, medPaLM, and Almanac were developed, increasing the accuracy of factual results.22-24 Unfortunately, only the GatorTron model is available to the public through the NVIDIA developers’ program.25

Despite these shortcomings, the future of LLMs in health care is promising. Although these models will not replace HCPs, they can help reduce the unnecessary burden on them, prevent burnout, and enable HCPs and patients spend more time together. Establishing an official hospital AI oversight governing body that would promote best practices could ensure the trustworthy implementation of these new technologies.26

Conclusions

The use of ChatGPT and other LLMs in health care has the potential to revolutionize the industry. By assisting HCPs with administrative tasks, improving the accuracy and reliability of diagnoses, and engaging patients, ChatGPT can help health care organizations provide better care to their patients. While LLMs are not a substitute for human interaction and personalized care, they can augment the work of HCPs, making health care more accessible and efficient. As the health care industry continues to evolve, it will be exciting to see how ChatGPT and other LLMs are used to improve patient outcomes and quality of care. In addition, AI technologies like ChatGPT offer enormous potential in medical education and research. To ensure that the benefits outweigh the risks, developing trustworthy AI health care products and establishing oversight governing bodies to ensure their implementation is essential. By doing so, we can help HCPs focus on what matters most, providing high-quality care to patients.

Acknowledgments

This material is the result of work supported by resources and the use of facilities at the James A. Haley Veterans’ Hospital.

1. Bill Gates. The age of AI has begun. March 21, 2023. Accessed May 10, 2023. https://www.gatesnotes.com/the-age-of-ai-has-begun

2. Kung TH, Cheatham M, Medenilla A, et al. Performance of ChatGPT on USMLE: Potential for AI-assisted medical education using large language models. PLOS Digit Health. 2023;2(2):e0000198. Published 2023 Feb 9. doi:10.1371/journal.pdig.0000198

3. Shanafelt TD, West CP, Sinsky C, et al. Changes in burnout and satisfaction with work-life integration in physicians and the general US working population between 2011 and 2020. Mayo Clin Proc. 2022;97(3):491-506. doi:10.1016/j.mayocp.2021.11.021

4. Goodman RS, Patrinely JR Jr, Osterman T, Wheless L, Johnson DB. On the cusp: considering the impact of artificial intelligence language models in healthcare. Med. 2023;4(3):139-140. doi:10.1016/j.medj.2023.02.008

5. Will ChatGPT transform healthcare? Nat Med. 2023;29(3):505-506. doi:10.1038/s41591-023-02289-5

6. Hopkins AM, Logan JM, Kichenadasse G, Sorich MJ. Artificial intelligence chatbots will revolutionize how cancer patients access information: ChatGPT represents a paradigm-shift. JNCI Cancer Spectr. 2023;7(2):pkad010. doi:10.1093/jncics/pkad010

7. Babar Z, van Laarhoven T, Zanzotto FM, Marchiori E. Evaluating diagnostic content of AI-generated radiology reports of chest X-rays. Artif Intell Med. 2021;116:102075. doi:10.1016/j.artmed.2021.102075

8. Lecler A, Duron L, Soyer P. Revolutionizing radiology with GPT-based models: current applications, future possibilities and limitations of ChatGPT. Diagn Interv Imaging. 2023;S2211-5684(23)00027-X. doi:10.1016/j.diii.2023.02.003

9. Germain JM. Is ChatGPT smart enough to practice mental health therapy? March 23, 2023. Accessed May 11, 2023. https://www.technewsworld.com/story/is-chatgpt-smart-enough-to-practice-mental-health-therapy-178064.html

10. Cascella M, Montomoli J, Bellini V, Bignami E. Evaluating the feasibility of ChatGPT in healthcare: an analysis of multiple clinical and research scenarios. J Med Syst. 2023;47(1):33. Published 2023 Mar 4. doi:10.1007/s10916-023-01925-4

11. Jungwirth D, Haluza D. Artificial intelligence and public health: an exploratory study. Int J Environ Res Public Health. 2023;20(5):4541. Published 2023 Mar 3. doi:10.3390/ijerph20054541

12. Gilson A, Safranek CW, Huang T, et al. How does ChatGPT perform on the United States Medical Licensing Examination? The implications of large language models for medical education and knowledge assessment. JMIR Med Educ. 2023;9:e45312. Published 2023 Feb 8. doi:10.2196/45312

13. Eysenbach G. The role of ChatGPT, generative language models, and artificial intelligence in medical education: a conversation with ChatGPT and a call for papers. JMIR Med Educ. 2023;9:e46885. Published 2023 Mar 6. doi:10.2196/46885

14. Macdonald C, Adeloye D, Sheikh A, Rudan I. Can ChatGPT draft a research article? An example of population-level vaccine effectiveness analysis. J Glob Health. 2023;13:01003. Published 2023 Feb 17. doi:10.7189/jogh.13.01003

15. Masters K. Ethical use of artificial intelligence in health professions education: AMEE Guide No.158. Med Teach. 2023;1-11. doi:10.1080/0142159X.2023.2186203

16. Smith CS. Hallucinations could blunt ChatGPT’s success. IEEE Spectrum. March 13, 2023. Accessed May 11, 2023. https://spectrum.ieee.org/ai-hallucination

17. Executive Office of the President, Office of Science and Technology Policy. Blueprint for an AI Bill of Rights. Accessed May 11, 2023. https://www.whitehouse.gov/ostp/ai-bill-of-rights

18. Executive office of the President. Executive Order 13960: promoting the use of trustworthy artificial intelligence in the federal government. Fed Regist. 2020;89(236):78939-78943.

19. US Department of Commerce, National institute of Standards and Technology. Artificial Intelligence Risk Management Framework (AI RMF 1.0). Published January 2023. doi:10.6028/NIST.AI.100-1

20. Microsoft. Azure Cognitive Search—Cloud Search Service. Accessed May 11, 2023. https://azure.microsoft.com/en-us/products/search

21. Aiyappa R, An J, Kwak H, Ahn YY. Can we trust the evaluation on ChatGPT? March 22, 2023. Accessed May 11, 2023. https://arxiv.org/abs/2303.12767v1

22. Yang X, Chen A, Pournejatian N, et al. GatorTron: a large clinical language model to unlock patient information from unstructured electronic health records. Updated December 16, 2022. Accessed May 11, 2023. https://arxiv.org/abs/2203.03540v3

23. Singhal K, Azizi S, Tu T, et al. Large language models encode clinical knowledge. December 26, 2022. Accessed May 11, 2023. https://arxiv.org/abs/2212.13138v1

24. Zakka C, Chaurasia A, Shad R, Hiesinger W. Almanac: knowledge-grounded language models for clinical medicine. March 1, 2023. Accessed May 11, 2023. https://arxiv.org/abs/2303.01229v1

25. NVIDIA. GatorTron-OG. Accessed May 11, 2023. https://catalog.ngc.nvidia.com/orgs/nvidia/teams/clara/models/gatortron_og

26. Borkowski AA, Jakey CE, Thomas LB, Viswanadhan N, Mastorides SM. Establishing a hospital artificial intelligence committee to improve patient care. Fed Pract. 2022;39(8):334-336. doi:10.12788/fp.0299

Establishing a Hospital Artificial Intelligence Committee to Improve Patient Care

In the past 10 years, artificial intelligence (AI) applications have exploded in numerous fields, including medicine. Myriad publications report that the use of AI in health care is increasing, and AI has shown utility in many medical specialties, eg, pathology, radiology, and oncology.1,2

In cancer pathology, AI has been able not only to detect various cancers but also to subtype and grade them. AI has also predicted survival, response to therapy, and underlying mutations from histopathologic images.3 AI applications in other medical fields are equally notable. For example, in imaging specialties such as radiology, ophthalmology, dermatology, and gastroenterology, AI is being used for image recognition, enhancement, and segmentation. In other specialties, AI is useful for predicting disease progression, survival, and response to therapy. Finally, AI may help with administrative tasks such as scheduling.

However, many obstacles stand in the way of successfully implementing AI programs in the clinical setting. These include clinical data limitations and the ethical use of data, trust in AI models, regulatory barriers, and lack of clinical buy-in due to insufficient basic understanding of AI.2 To address these barriers, we created a formal governing body at James A. Haley Veterans’ Hospital in Tampa, Florida. The hospital AI committee charter was officially approved on July 22, 2021. Our model could be used by both US Department of Veterans Affairs (VA) and non-VA hospitals throughout the country.

AI Committee

The vision of the AI committee is to improve outcomes and experiences for our veterans by developing trustworthy AI capabilities to support the VA mission. The mission is to build robust capacity in AI to create and apply innovative AI solutions and transform the VA by facilitating a learning environment that supports the delivery of world-class benefits and services to our veterans. Our vision and mission are aligned with the VA National AI Institute.4

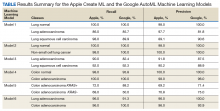

The AI Committee comprises 7 subcommittees: ethics, AI clinical product evaluation, education, data sharing and acquisition, research, 3D printing, and improvement and innovation. The role of the ethics subcommittee is to ensure the ethical and equitable implementation of clinical AI. We based the ethics subcommittee guidelines on the World Health Organization (WHO) guidance Ethics and Governance of Artificial Intelligence for Health.5 They include 6 basic principles: protecting human autonomy; promoting human well-being and safety and the public interest; ensuring transparency, explainability, and intelligibility; fostering responsibility and accountability; ensuring inclusiveness and equity; and promoting AI that is responsive and sustainable (Table 1).

As the name indicates, the role of the AI clinical product evaluation subcommittee is to evaluate commercially available clinical AI products. More than 400 US Food and Drug Administration–approved AI medical applications exist, and the list is growing rapidly. Most AI applications are in medical imaging like radiology, dermatology, ophthalmology, and pathology.6,7 Each clinical product is evaluated according to 6 principles: relevance, usability, risks, regulatory, technical requirements, and financial (Table 2).8 We are in the process of evaluating a few commercial AI algorithms for pathology and radiology, using these 6 principles.

Implementations

After a comprehensive evaluation, we implemented 2 ClearRead (Riverain Technologies) AI radiology solutions. ClearRead CT Vessel Suppress produces a secondary series of computed tomography (CT) images that suppresses vessels and other normal structures within the lungs to improve nodule detectability. ClearRead Xray Bone Suppress increases the visibility of soft tissue in standard chest X-rays by suppressing bone on the digital image, without the need for 2 exposures.

The role of the education subcommittee is to educate the staff about AI and how it can improve patient care. Every Friday, we email an AI article of the week to our practitioners. In addition, we publish a newsletter, and we organize an annual AI conference. The first conference in 2022 included speakers from the National AI Institute, Moffitt Cancer Center, the University of South Florida, and our facility.

The data sharing and acquisition subcommittee oversees the preparation of data for our clinical and research projects. The role of the research subcommittee is to coordinate and promote AI research, with the ultimate goal of improving patient care.

Other Technologies

Although 3D printing does not fall under the umbrella of AI, we have decided to include it in our future-oriented AI committee. We created an online 3D printing course to promote the technology throughout the VA. We 3D print organ models to help surgeons prepare for complicated operations. In addition, together with our colleagues from the University of Florida, we used 3D printing to address the shortage of swabs for COVID-19 testing. The VA Sunshine Healthcare Network (Veterans Integrated Services Network 8) has an active Innovation and Improvement Committee.9 Our improvement and innovation subcommittee serves as a coordinating body with the network committee.

Conclusions

Through the hospital AI committee, we believe that we may overcome many obstacles to successfully implementing AI applications in the clinical setting, including the ethical use of data, trust in the AI models, regulatory barriers, and lack of clinical buy-in due to insufficient basic AI knowledge.

Acknowledgments

This material is the result of work supported with resources and the use of facilities at the James A. Haley Veterans’ Hospital.

Artificial Intelligence: Review of Current and Future Applications in Medicine

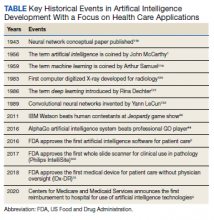

Artificial Intelligence (AI) was first described in 1956 and refers to machines having the ability to learn as they receive and process information, resulting in the ability to “think” like humans.1 AI’s impact in medicine is increasing; currently, at least 29 AI medical devices and algorithms are approved by the US Food and Drug Administration (FDA) in a variety of areas, including radiograph interpretation, managing glucose levels in patients with diabetes mellitus, analyzing electrocardiograms (ECGs), and diagnosing sleep disorders among others.2 Significantly, in 2020, the Centers for Medicare and Medicaid Services (CMS) announced the first reimbursement to hospitals for an AI platform, a model for early detection of strokes.3 AI is rapidly becoming an integral part of health care, and its role will only increase in the future (Table).

As knowledge in medicine expands exponentially, AI has great potential to assist with handling complex patient care data. The concept of exponential growth is not an intuitive one. As Bini described, with exponential growth, an increase in knowledge equal to that amassed over the past 10 years may now occur in perhaps only 1 year.1 Likewise, advances equivalent to those of the past year may take just a few months. This phenomenon is partly due to the law of accelerating returns, which states that advances feed on themselves, continually increasing the rate of further advances.4 The volume of medical data doubles every 2 to 5 years.5 Fortunately, the field of AI is also growing exponentially and can help health care practitioners (HCPs) keep pace, allowing the continued delivery of effective health care.
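The doubling-time figure is easier to grasp as a growth factor. The short calculation below assumes a simple exponential model, V(t) = V0 · 2^(t/T), where T is the doubling time in years. This model is an illustrative assumption, not a method from the cited sources. Under it, a 2-year doubling time implies a 32-fold increase over a decade, and a 5-year doubling time implies a 4-fold increase.

```python
def growth_factor(years: float, doubling_time_years: float) -> float:
    """How many times larger a quantity becomes after `years` if it
    doubles every `doubling_time_years` (simple exponential model)."""
    return 2 ** (years / doubling_time_years)


# Compare the two ends of the 2- to 5-year doubling range over 10 years
for doubling_time in (2, 5):
    factor = growth_factor(10, doubling_time)
    print(f"doubling every {doubling_time} y -> x{factor:.0f} over 10 years")
# prints "doubling every 2 y -> x32 over 10 years"
# then   "doubling every 5 y -> x4 over 10 years"
```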

In this report, we review common terminology, principles, and general applications of AI, followed by current and potential applications of AI for selected medical specialties. Finally, we discuss AI’s future in health care, along with potential risks and pitfalls.

AI Overview

AI refers to machine programs that can “learn” or think based on past experiences. This functionality contrasts with the simple rules-based programming that has been available in health care for years. An example of rules-based programming is the warfarindosing.org website developed by Barnes-Jewish Hospital at Washington University Medical Center, which guides initial warfarin dosing.6,7 The prescriber inputs detailed patient information, including age, sex, height, weight, tobacco history, medications, laboratory results, and genotype if available. The application then calculates recommended warfarin dosing regimens to avoid over- or underanticoagulation. Although the dosing algorithm may be complex, it depends entirely on preprogrammed rules. The program does not learn its conclusions and recommendations from patient data.

In contrast, one of the most common subsets of AI is machine learning (ML). ML describes a program that “learns from experience and improves its performance as it learns.”1 With ML, the computer is initially provided with a training data set—data with known outcomes or labels. Because the initial data are input from known samples, this type of AI is known as supervised learning.8-10 As an example, we recently reported using ML to diagnose various types of cancer from pathology slides.11 In one experiment, we captured images of colon adenocarcinoma and normal colon (these 2 groups represent the training data set). Unlike traditional programming, we did not define characteristics that would differentiate colon cancer from normal; rather, the machine learned these characteristics independently by assessing the labeled images provided. A second data set (the validation data set) was used to evaluate the program and fine-tune the ML training model’s parameters. Finally, the program was presented with new images of cancer and normal cases for final assessment of accuracy (test data set). Our program learned to recognize differences from the images provided and was able to differentiate normal and cancer images with > 95% accuracy.
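The training, validation, and test workflow described above can be sketched in a few lines. The sketch below is hypothetical. It uses synthetic 2-feature data in place of slide images (label 1 stands in for "cancer" and 0 for "normal"). It uses a k-nearest-neighbor classifier as a simple stand-in for the authors' model, which is not described here. The hyperparameter k is chosen on the validation set only, and accuracy is reported once on the untouched test set.

```python
import math
import random


def make_dataset(n_per_class, seed):
    """Hypothetical toy data: 2 numeric features per sample, label 1 = cancer, 0 = normal."""
    rng = random.Random(seed)
    data = []
    for label, center in ((0, (0.0, 0.0)), (1, (3.0, 3.0))):
        for _ in range(n_per_class):
            x = (rng.gauss(center[0], 1.0), rng.gauss(center[1], 1.0))
            data.append((x, label))
    rng.shuffle(data)
    return data


def knn_predict(train, x, k):
    """Majority vote among the k labeled training samples closest to x."""
    nearest = sorted(train, key=lambda item: math.dist(item[0], x))[:k]
    votes = sum(label for _, label in nearest)
    return 1 if votes * 2 > k else 0


def accuracy(train, examples, k):
    correct = sum(knn_predict(train, x, k) == y for x, y in examples)
    return correct / len(examples)


data = make_dataset(100, seed=0)
train, val, test = data[:120], data[120:160], data[160:]

# Validation set: used only to tune the model's parameter (here, k)
best_k = max((1, 3, 5, 7), key=lambda k: accuracy(train, val, k))
# Test set: new cases used once for the final accuracy estimate
test_acc = accuracy(train, test, best_k)
print(f"best k = {best_k}, test accuracy = {test_acc:.2f}")
```

Keeping the test set out of every tuning decision is what lets its accuracy stand in for performance on genuinely new cases.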

Advances in computer processing have allowed for the development of artificial neural networks (ANNs). Although there are several types of ANNs, the most common types used for image classification and segmentation are convolutional neural networks (CNNs).9,12-14 These programs are designed to work similarly to the human brain, specifically the visual cortex.15,16 As data are acquired, they are processed by successive layers in the program. Much like neurons in the brain, each layer determines whether to pass information on to the next.13,14 CNNs can be many layers deep, hence the term deep learning: “computational models that are composed of multiple processing layers to learn representations of data with multiple levels of abstraction.”1,13,17
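To make "layers" concrete, the sketch below computes one convolutional layer by hand on a hypothetical 5 × 5 grayscale patch. It applies a rectified linear unit (ReLU), one common way a layer "decides" which responses pass forward: positive responses are kept and negative ones are set to zero. The kernel weights are hand-set here for illustration; in a trained CNN they are learned from data, and real networks are built with dedicated libraries rather than plain Python.

```python
def conv2d(image, kernel):
    """'Valid' 2D convolution (strictly, cross-correlation, as most CNN libraries compute)."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    return [
        [
            sum(image[i + a][j + b] * kernel[a][b] for a in range(kh) for b in range(kw))
            for j in range(out_w)
        ]
        for i in range(out_h)
    ]


def relu(feature_map):
    """Pass positive responses to the next layer; zero out the rest."""
    return [[max(0.0, v) for v in row] for row in feature_map]


# Hypothetical patch: a bright vertical stripe (columns 2-3) on a dark background
image = [[0.0, 0.0, 1.0, 1.0, 0.0] for _ in range(5)]
# Hand-set vertical-edge detector (dark-to-bright gives a positive response)
edge_kernel = [[-1, 0, 1],
               [-1, 0, 1],
               [-1, 0, 1]]

feature_map = conv2d(image, edge_kernel)  # raw responses, including a negative one
layer1 = relu(feature_map)                # only positive responses move forward
for row in layer1:
    print(row)  # each row prints [3.0, 3.0, 0.0]
```

A deep network stacks many such layers, each operating on the previous layer's output, so later layers can respond to combinations of simpler features.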

ANNs can process larger volumes of data. This advance has led to the development of unstructured or unsupervised learning. With this type of learning, inputting defined features (ie, predetermined answers) for the training data set described above is no longer required.1,8,10,14 The advantage of unsupervised learning is that the program can be presented with raw data and extract meaningful interpretations without human input, often with less bias than may exist with supervised learning.1,18 If shown enough data, the program can extract relevant features and reach conclusions independently, without predefined definitions, potentially uncovering markers not previously known. For example, several studies have used unsupervised learning to search patient data to assess the readmission risk of patients with congestive heart failure.10,19,20 The AI independently compiled features that had not been previously defined and predicted which patients were at greater risk for readmission better than traditional methods did.
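A classic, minimal example of unsupervised learning is k-means clustering. The program receives unlabeled points and groups them by similarity alone. The sketch below is a stand-in chosen for simplicity. It is not the method used in the readmission studies cited above, and the 2-feature "patient" points are hypothetical. Initialization uses the farthest-first heuristic so the run is deterministic.

```python
import math


def kmeans(points, k, iterations=20):
    """Group unlabeled points into k clusters; no labels are ever supplied."""
    # Farthest-first initialization: start from the first point, then repeatedly
    # add the point farthest from every centroid chosen so far
    centroids = [points[0]]
    while len(centroids) < k:
        centroids.append(max(points, key=lambda p: min(math.dist(p, c) for c in centroids)))
    clusters = []
    for _ in range(iterations):
        # Assignment step: each point joins its nearest centroid
        clusters = [[] for _ in range(k)]
        for p in points:
            nearest = min(range(k), key=lambda c: math.dist(p, centroids[c]))
            clusters[nearest].append(p)
        # Update step: move each centroid to the mean of its members
        for c, members in enumerate(clusters):
            if members:  # keep the previous centroid if a cluster is empty
                centroids[c] = tuple(sum(dim) / len(members) for dim in zip(*members))
    return centroids, clusters


# Hypothetical unlabeled data: 2 standardized measurements per patient
points = [(1.0, 1.2), (0.8, 0.9), (1.1, 0.7), (0.9, 1.0),
          (5.0, 5.2), (5.3, 4.9), (4.8, 5.1), (5.1, 5.0)]
centroids, clusters = kmeans(points, k=2)
print([len(c) for c in clusters], centroids)
```

The grouping emerges from the data itself. A clinician would still need to interpret what each cluster means.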

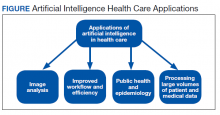

A more detailed description of the various terminologies and techniques of AI is beyond the scope of this review.9,10,17,21 However, in this basic overview, we describe 4 general areas in which AI affects health care (Figure).

Health Care Applications

Image analysis has seen the most AI health care applications.8,15 AI has shown potential in interpreting many types of medical images, including pathology slides, radiographs of various types, retina and other eye scans, and photographs of skin lesions. Many studies have demonstrated that AI can interpret these images as accurately as, or even better than, experienced clinicians.9,13,22-29 Studies have suggested that AI interpretation of radiographs may better distinguish patients infected with COVID-19 from those with other causes of pneumonia, and that AI interpretation of pathology slides may detect specific genetic mutations that previously could not be identified without additional molecular tests.11,14,23,24,30-32

The second area in which AI can affect health care is improving workflow and efficiency. AI has improved surgery scheduling, saving significant revenue, and has decreased patient wait times for appointments.1 AI can screen and triage radiographs, directing attention to critical patients. This use would be valuable in many busy clinical settings, such as during the recent COVID-19 pandemic.8,23 Similarly, AI can screen retina images to prioritize urgent conditions.25 AI has improved pathologists’ efficiency when used to detect breast metastases.33 Finally, AI may reduce medical errors, thereby improving patient safety.8,9,34
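Triage of this kind often amounts to reordering a reading worklist. The sketch below assumes a hypothetical model that assigns each study an urgency score between 0 and 1. It uses a priority queue so that the highest-scoring studies are read first, with ties broken by arrival order. The accession IDs and scores are invented for illustration. Every study stays on the list; only the reading order changes.

```python
import heapq
from dataclasses import dataclass, field


@dataclass(order=True)
class Study:
    sort_key: float                       # negated urgency so the most urgent pops first
    arrival: int                          # tie-breaker: earlier arrivals first
    accession: str = field(compare=False)


def build_worklist(studies):
    """studies: iterable of (accession, hypothetical AI urgency score in [0, 1]) in arrival order."""
    heap = []
    for arrival, (accession, urgency) in enumerate(studies):
        heapq.heappush(heap, Study(-urgency, arrival, accession))
    # Pop every study: nothing is dropped, only the reading order changes
    return [heapq.heappop(heap).accession for _ in range(len(heap))]


incoming = [("CXR-001", 0.10), ("CXR-002", 0.92), ("CXR-003", 0.35), ("CXR-004", 0.92)]
print(build_worklist(incoming))  # ['CXR-002', 'CXR-004', 'CXR-003', 'CXR-001']
```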

A third health care benefit of AI is in public health and epidemiology. AI can assist with clinical decision-making and diagnoses in low-income countries and areas with limited health care resources and personnel.25,29 AI can improve identification of infectious outbreaks, such as tuberculosis, malaria, dengue fever, and influenza.29,35-40 AI has been used to predict transmission patterns of the Zika virus and the current COVID-19 pandemic.41,42 Applications can stratify the risk of outbreaks based on multiple factors, including age, income, race, atypical geographic clusters, and seasonal factors like rainfall and temperature.35,36,38,43 AI has been used to assess morbidity and mortality, such as predicting disease severity with malaria and identifying treatment failures in tuberculosis.29

Finally, AI can dramatically affect health care through its ability to process large data sets or disconnected volumes of patient information, so-called big data.44-46 An example is the widespread use of electronic health records (EHRs), such as the Computerized Patient Record System used in Veterans Affairs medical centers (VAMCs). Much patient information exists as written text: HCP notes, laboratory and radiology reports, medication records, etc. Natural language processing (NLP) allows platforms to sort through extensive volumes of data on complex patients far faster than humans can, which has great potential to assist with diagnosis and treatment decisions.9

Medical literature is being produced faster than we can digest it. More than 200,000 cancer-related articles were published in 2019 alone.14 The NLP capabilities of AI have the potential to rapidly sort through this extensive medical literature and relate it to specific language in patient records to guide therapy.46 IBM Watson, a supercomputer based on ML and NLP, demonstrates this concept with many potential applications, only some of which relate to health care.1,9 Watson has an oncology component that assimilates multiple aspects of patient care, including clinical notes, pathology results, radiograph findings, staging, and a tumor’s genetic profile. It coordinates these inputs from the EHR and mines medical literature and research databases to recommend treatment options.1,46 AI can assess and compile far more patient data and therapeutic options than would be feasible for individual clinicians, thus providing customized patient care.47 Watson has partnered with numerous medical centers, including MD Anderson Cancer Center and Memorial Sloan Kettering Cancer Center, with variable success.44,47-49 Although the full potential of Watson appears not yet to have been realized, these AI-driven approaches will likely play an important role in leveraging the hidden value in the expanding volume of health care information.
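A transparent way to see how text in a patient record can be matched against literature is TF-IDF weighting with cosine similarity, a classic information-retrieval technique. The sketch below uses it as a simple stand-in. It is not how Watson or any specific product works, and the note and article titles are hypothetical. Terms shared by the note and a document raise that document's score, and terms common to many documents count for less.

```python
import math
import re
from collections import Counter


def tokenize(text):
    return re.findall(r"[a-z0-9]+", text.lower())


def fit_idf(docs_tokens):
    """Smoothed inverse document frequency learned from the document collection."""
    n = len(docs_tokens)
    df = Counter(t for tokens in docs_tokens for t in set(tokens))
    return lambda t: math.log((1 + n) / (1 + df[t])) + 1.0


def vectorize(tokens, idf):
    return {t: count * idf(t) for t, count in Counter(tokens).items()}


def cosine(u, v):
    dot = sum(w * v.get(t, 0.0) for t, w in u.items())
    norm = math.sqrt(sum(w * w for w in u.values())) * math.sqrt(sum(w * w for w in v.values()))
    return dot / norm if norm else 0.0


def rank(query, docs):
    """Return document indices ordered from most to least similar to the query."""
    docs_tokens = [tokenize(d) for d in docs]
    idf = fit_idf(docs_tokens)
    q = vectorize(tokenize(query), idf)
    scores = [cosine(q, vectorize(tokens, idf)) for tokens in docs_tokens]
    return sorted(range(len(docs)), key=lambda i: scores[i], reverse=True)


# Hypothetical article titles and a hypothetical snippet from a clinical note
docs = [
    "Adjuvant chemotherapy outcomes in stage III colon adenocarcinoma",
    "Warfarin dosing guided by genotype",
    "Immunotherapy response in metastatic melanoma",
]
note = "Colon adenocarcinoma, stage III, considering chemotherapy"
print(rank(note, docs))
```

Modern clinical NLP relies on learned language models rather than word counts, but the input and output are the same in spirit: free text goes in, and a ranked, relevant subset of the literature comes out.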

Medical Specialty Applications

Radiology

Currently, > 70% of FDA-approved AI medical devices are in the field of radiology.2 Most radiology departments have used AI-friendly digital imaging for years, such as the picture archiving and communication systems used by numerous health care systems, including VAMCs.2,15 The gray-scale images common in radiology lend themselves to standardization, although AI is not limited to black-and-white image interpretation.15

An abundance of literature describes AI interpretation of plain radiographs. One FDA-approved platform improved X-ray diagnosis of wrist fractures by emergency medicine clinicians.2,50 AI has been applied to chest X-ray (CXR) interpretation for many conditions, including pneumonia, tuberculosis, malignant lung lesions, and COVID-19.23,25,28,44,51-53 For example, Nam and colleagues suggested that AI is better at diagnosing malignant pulmonary nodules on CXRs than trained radiologists are.28

In addition to plain radiographs, AI has been applied to many other imaging technologies, including ultrasound, positron emission tomography, mammography, computed tomography (CT), and magnetic resonance imaging (MRI).15,26,44,48,54-56 A large study demonstrated that ML platforms significantly reduced the time to diagnose intracranial hemorrhage on CT and identified subtle hemorrhages missed by radiologists.55 Other studies have claimed that AI programs may be better than radiologists at detecting cancer on screening mammograms, and 3 FDA-approved devices focus on mammogram interpretation.2,15,54,57 There is also great interest in MRI applications to detect breast cancer and predict its prognosis based on imaging findings.21,56

Beyond accurate diagnosis, other studies of AI radiograph interpretation focus on patient screening, triage, shortening the time to final diagnosis, providing a rapid “second opinion,” and even monitoring disease progression and offering insights into prognosis.8,21,23,52,55,56,58 These features are helpful in busy urban centers but may play an even greater role in areas with limited access to health care or to trained specialists such as radiologists.52

Cardiology

Cardiology has the second-highest number of FDA-approved AI applications.2 Many cardiology AI platforms involve image analysis, as described in several recent reviews.45,59,60 AI has been applied to echocardiography to measure ejection fraction, detect valvular disease, and assess heart failure due to hypertrophic and restrictive cardiomyopathy and amyloidosis.45,48,59 Applications for cardiac CT and CT angiography have successfully quantified both calcified and noncalcified coronary artery plaque, evaluated the coronary lumen, assessed myocardial perfusion, and performed coronary artery calcium scoring.45,59,60 Likewise, AI applications for cardiac MRI have been used to quantify ejection fraction, large-vessel flow, and cardiac scar burden.45,59

For years, ECG devices have provided automated interpretations of limited accuracy based on preprogrammed parameters.48 However, AI allows ECG interpretation on par with that of trained cardiologists. Numerous such AI applications exist, and 2 FDA-approved devices perform ECG interpretation.2,61-64 One of these devices incorporates an AI-powered stethoscope to detect atrial fibrillation and heart murmurs.65

Pathology

The advancement of whole slide imaging, in which entire slides are scanned and digitized at high speed and resolution, creates great potential for AI applications in pathology.12,24,32,33,66 A landmark study demonstrating the potential of AI for assessing whole slide images examined sentinel lymph node metastases in patients with breast cancer.22 Multiple algorithms in the study performed as well as or better than pathologists in detecting metastases, especially when the pathologists worked under time constraints consistent with a normal working environment. Significantly, the most accurate and efficient diagnoses were achieved when pathologist and AI interpretations were used together.22,33

AI has shown promise in diagnosing many other entities, including cancers of the prostate (including Gleason scoring), lung, colon, breast, and skin.11,12,24,27,32,67 In addition, AI has shown great potential in scoring biomarkers important for prognosis and treatment, such as immunohistochemistry (IHC) labeling of Ki-67 and PD-L1.32 Pathologists can have difficulty classifying certain tumors or determining the site of origin of metastases, often relying on IHC with limited success. AI image analysis could help classify such difficult tumors and identify the site of origin of metastatic disease based on morphology alone.11

Oncology depends heavily on molecular pathology testing to dictate treatment options and determine prognosis. Preliminary studies suggest that AI interpretation of histology alone may be able to determine whether certain molecular mutations are present in tumors from various sites.11,14,24,32 One study combined histologic and genomic results for AI interpretation, which improved prognostic predictions.68 In addition, AI analysis of cellular features may help predict tumor recurrence or prognosis, as demonstrated for lung cancer and melanoma.67,69,70

Ophthalmology

AI applications for ophthalmology have focused on diabetic retinopathy, age-related macular degeneration, glaucoma, retinopathy of prematurity, age-related and congenital cataracts, and retinal vein occlusion.71-73 Diabetic retinopathy is a leading cause of blindness and has been studied with numerous platforms with good success, most of them using color fundus photography.71,72 One study showed AI could diagnose diabetic retinopathy and diabetic macular edema with specificities similar to those of ophthalmologists.74 In 2018, the FDA approved the AI platform IDx-DR. This diagnostic system classifies retinal images and recommends referral for patients determined to have “more than mild diabetic retinopathy” and reexamination within a year for other patients.8,75 Significantly, the platform’s recommendations do not require confirmation by a clinician.8

AI has been applied to other modalities in ophthalmology, such as optical coherence tomography (OCT), to diagnose retinal disease and to predict appropriate management of congenital cataracts.25,73,76 For example, an AI application using OCT has been shown to match or exceed the accuracy of retinal experts in diagnosing and triaging patients with a variety of retinal pathologies, including patients needing urgent referral.77

Dermatology

Multiple studies demonstrate that AI performs at least as well as experienced dermatologists in differentiating selected skin lesions.78-81 For example, Esteva and colleagues demonstrated that AI could differentiate keratinocyte carcinomas from benign seborrheic keratoses and malignant melanomas from benign nevi with accuracy equal to that of 21 board-certified dermatologists.78

AI is applicable to various imaging procedures common in dermatology, such as dermoscopy, very high-frequency ultrasound, and reflectance confocal microscopy.82 Several studies have demonstrated that AI interpretation of dermoscopic images of melanocytic lesions compares favorably with dermatologists’ evaluations.78-81,83

A limitation of these studies is that they differentiate among only a few diagnoses.82 Furthermore, dermatologists have sensory input, such as touch and visual examination under various conditions, that AI has yet to replicate.15,34,84 In addition, most AI devices use little or no clinical information.81 Dermatologists can also recognize rarer conditions on which AI models may have had limited or no training.34 Nevertheless, a recent study assessed AI for the diagnosis of 134 separate skin disorders with promising results, including diagnostic accuracy comparable to that of dermatologists and accurate treatment recommendations.84 As Topol points out, most skin lesions are diagnosed in the primary care setting, where AI used in conjunction with the clinical impression can have a greater impact, especially where specialists are in limited supply.48,78

Finally, dermatology lends itself to portable or smartphone applications (apps) in which the user photographs a lesion for analysis by AI algorithms that assess the need for further evaluation or make treatment recommendations.34,84,85 Although results from currently available apps are not encouraging, such apps may play a greater role as the technology advances.34,85

Oncology

Applications of AI in oncology include predicting prognosis for patients with cancer based on histologic and/or genetic information.14,68,86 Programs can predict the risk of complications before surgery for malignancies and the risk of recurrence afterward.44,87-89 AI can also assist in radiation therapy planning and predict treatment failure.90,91

AI has great potential in processing the large volumes of patient data in cancer genomics. Next-generation sequencing has allowed for the identification of millions of DNA sequences in a single tumor to detect genetic anomalies.92 Thousands of mutations can be found in individual tumor samples, and processing this information and determining its significance can be beyond human capability.14 We know little about the effects of various mutation combinations, and most tumors have a heterogeneous molecular profile among different cell populations.14,93 The presence or absence of various mutations can have diagnostic, prognostic, and therapeutic implications.93 AI has great potential to sort through these complex data and identify actionable findings.

More than 200,000 cancer-related articles were published in 2019, and publications in the field of cancer genomics are increasing exponentially.14,92,93 Patel and colleagues compared the results of IBM Watson for Genomics with those of a molecular tumor board.93 Watson for Genomics identified potentially significant mutations not identified by the tumor board in 32% of patients. Most of these mutations were related to new clinical trials not yet added to the tumor board watch list, illustrating the role AI is likely to play in processing the large volume of genetic data required to deliver personalized medicine.

Gastroenterology

AI has shown promise in predicting risk or outcomes based on clinical parameters in various common gastroenterology problems, including gastroesophageal reflux, acute pancreatitis, gastrointestinal bleeding, celiac disease, and inflammatory bowel disease.94,95 AI analysis of endoscopic images has demonstrated potential in assessing Barrett’s esophagus, gastric Helicobacter pylori infection, gastric atrophy, and gastric intestinal metaplasia.95 Applications have also been used to assess esophageal, gastric, and colonic malignancies, including depth of invasion, based on endoscopic images.95 Finally, studies have evaluated AI for assessing small colon polyps during colonoscopy, including differentiating between benign and premalignant polyps, with success comparable to that of gastroenterologists.94,95 AI has been shown to increase the speed and accuracy of gastroenterologists in detecting small polyps during colonoscopy.48 In a prospective randomized study, colonoscopies performed with an AI device identified significantly more small adenomatous polyps than colonoscopies performed without AI.96

Neurology

It has been suggested that AI technologies are well suited for neurology because many neurologic diseases present subtly.16 Viz LVO, the first AI stroke-diagnosis platform to receive reimbursement approval from the Centers for Medicare & Medicaid Services (CMS), analyzes CT scans to detect early ischemic strokes and alerts the medical team, thus shortening time to treatment.3,97 Many other AI platforms that use CT and MRI for early stroke detection, treatment, and prognosis are in use or in development.9,97

AI technologies have been applied to neurodegenerative diseases, such as Alzheimer and Parkinson diseases.16,98 For example, several studies have evaluated patient movements in Parkinson disease both to aid early diagnosis and to assess response to treatment.98 These evaluations used external cameras as well as wearable devices and smartphone apps.

AI has also been applied to seizure disorders to determine seizure type, localize the area of seizure onset, and address the challenges of identifying seizures in neonates.99,100 Other potential applications range from early detection and prognosis prediction in multiple sclerosis to restoring movement in patients with paralysis from a variety of conditions, such as spinal cord injury.9,101,102

Mental Health

Because of the interactive nature of mental health care, the field has been slower to develop AI applications.18 Given its heavy reliance on textual information (eg, clinic notes, mood rating scales, and documentation of conversations), successful AI applications in mental health will likely depend heavily on NLP.18 However, studies investigating AI in mental health have also incorporated data such as brain imaging, smartphone monitoring, and social media platforms, such as Facebook and Twitter.18,103,104

The risk of suicide is higher among veterans, and ML algorithms have had limited success in predicting suicide risk in both veteran and nonveteran populations.104-106 Although early models have low positive predictive values and low sensitivities, they still show promise as a useful tool in conjunction with traditional risk assessments.106 Kessler and colleagues suggest that combining multiple ML algorithms, rather than relying on a single one, might lead to greater success.105,106
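The two performance metrics mentioned above can be made concrete with their standard definitions, written here in terms of true positives (TP), false positives (FP), false negatives (FN), specificity (Sp), and outcome prevalence p. The Bayes form of positive predictive value (PPV) shows why, for an outcome as rare as suicide, even a highly specific model can produce a low PPV. The values in the last line are hypothetical, chosen only for illustration, and are not taken from the cited studies.

$$
\text{Se} = \frac{TP}{TP + FN}, \qquad \text{PPV} = \frac{TP}{TP + FP}
$$

$$
\text{PPV} = \frac{\text{Se}\,p}{\text{Se}\,p + (1 - \text{Sp})(1 - p)}
$$

$$
\text{Se} = 0.5,\ \text{Sp} = 0.99,\ p = 0.001:\qquad \text{PPV} = \frac{0.0005}{0.0005 + 0.00999} \approx 0.048
$$

In this hypothetical case, fewer than 1 in 20 patients flagged by the model would actually have the outcome, which is one reason such models are better suited to supplementing traditional risk assessments than to replacing them.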

AI may assist in diagnosing other mental health disorders, including major depressive disorder, attention deficit hyperactivity disorder (ADHD), schizophrenia, posttraumatic stress disorder, and Alzheimer disease.103,104,107 These investigations are in the early stages and have limited clinical applicability. However, 2 AI applications awaiting FDA approval relate to ADHD and opioid use.2 Furthermore, AI may not only assist with the prevention and diagnosis of ADHD but also help identify optimal treatment options.2,103

General and Personalized Medicine

Additional AI applications include diagnosing sepsis in patients in whom it is suspected, measuring liver iron concentration, and predicting in-hospital mortality at the time of admission, among others.2,108,109 AI can also guide end-of-life decisions, such as resuscitation status or whether to initiate mechanical ventilation.48

AI-driven smartphone apps can benefit both patients and clinicians. Examples include predicting nonadherence to anticoagulation therapy, monitoring heart rhythm for atrial fibrillation or for signs of hyperkalemia in patients with renal failure, and improving outcomes for patients with diabetes mellitus by decreasing glycemic variability and reducing hypoglycemia.8,48,110,111 The potential applications of AI in health care and personalized medicine are almost limitless.

Discussion