New science reveals the best way to take a pill

I want to tell you a story about forgetfulness and haste, and how the combination of the two can lead to frightening consequences. A few years ago, I was lying in bed about to turn out the light when I realized I’d forgotten to take “my pill.”

Like some 161 million other American adults, I was then a consumer of a prescription medication. Being conscientious, I got up, retrieved said pill, and tossed it back. Being lazy, I didn’t bother to grab a glass of water to help the thing go down. Instead, I promptly returned to bed, threw a pillow over my head, and prepared for sleep.

Within seconds, I began to feel a burning sensation in my chest. After about a minute, that burn became a crippling pain. Not wanting to alarm my wife, I went into the living room, where I spent the next 30 minutes doubled over in agony. Was I having a heart attack? I phoned my sister, a hospitalist in Texas. She advised me to take myself to the ED to get checked out.

If only I’d known then about “Duke.” He could have told me how critical body posture is when people swallow pills.

Who’s Duke?

Duke is a computer representation of a 34-year-old, anatomically normal human male created by computer scientists at the IT’IS Foundation, a nonprofit group based in Switzerland that works on a variety of projects in health care technology. Using Duke, Rajat Mittal, PhD, a professor of medicine at the Johns Hopkins University, Baltimore, created a computer model called “StomachSim” to explore the process of digestion.

Their research, published in the journal Physics of Fluids, turned up several surprising findings about the dynamics of swallowing pills – the most common way medication is used worldwide.

Dr. Mittal said he chose to study the stomach because the functions of most other organ systems, from the heart to the brain, have already attracted plenty of attention from scientists.

“As I was looking to initiate research in some new directions, the implications of stomach biomechanics on important conditions such as diabetes, obesity, and gastroparesis became apparent to me,” he said. “It was clear that bioengineering research in this arena lags other more ‘sexy’ areas such as cardiovascular flows by at least 20 years, and there seemed to be a great opportunity to do impactful work.”

Your posture may help a pill work better

Several well-known things affect a pill’s ability to disperse its contents into the gut and be used by the body, such as the stomach’s contents (a heavy breakfast, a mix of liquids like juice, milk, and coffee) and the motion of the organ’s walls. But Dr. Mittal’s group learned that Duke’s posture also played a major role.

The researchers ran Duke through computer simulations in varying postures: upright, leaning right, leaning left, and leaning back, while keeping all the other parts of their analyses (like the things mentioned above) the same.

They found that posture determined as much as 83% of how quickly a pill disperses into the intestines. The most efficient position was leaning right. The least was leaning left, which prevented the pill from reaching the antrum, or bottom section of the stomach, and thus kept all but traces of the dissolved drug from entering the duodenum, where the stomach joins the small intestine. (Interestingly, Jews who observe Passover are advised to recline to the left during the meal as a symbol of freedom and leisure.)

That makes sense if you think about the stomach’s shape, which looks kind of like a bean, curving from the left to the right side of the body. Because of gravity, your position will change where the pill lands.

How this could help people

Among the groups most likely to benefit from such studies, Dr. Mittal said, are the elderly – who both take a lot of pills and are more prone to trouble swallowing because of age-related changes in their esophagus – and the bedridden, who can’t easily shift their posture. The findings may also lead to improvements in the ability to treat people with gastroparesis, a condition in which the stomach loses the ability to empty properly and a particular problem for people with diabetes.

Future studies with Duke and similar simulations will look at how the GI system digests proteins, carbohydrates, and fatty meals, Dr. Mittal said.

In the meantime, Dr. Mittal offered the following advice: “Standing or sitting upright after taking a pill is fine. If you have to take a pill lying down, stay on your back or on your right side. Avoid lying on your left side after taking a pill.”

As for what happened to me, any gastroenterologist reading this has figured out that my condition was not heart-related. Instead, I likely was having a bout of pill esophagitis, irritation that can result from medications that aggravate the mucosa of the food tube. Although painful, esophagitis isn’t life-threatening. After about an hour, the pain began to subside, and by the next morning I was fine, with only a faint ache in my chest to remind me of my earlier torment. (Researchers noted an increase in the condition early in the COVID-19 pandemic, linked to the antibiotic doxycycline.)

And, in the interest of accuracy, my pill problem began above the stomach. Nothing in the Hopkins research suggests that the alignment of the esophagus plays a role in how drugs disperse in the gut – unless, of course, it prevents those pills from reaching the stomach in the first place.

A version of this article first appeared on WebMD.com.

COVID-19 linked to increased Alzheimer’s risk

A study of more than 6 million people aged 65 years or older found a 50%-80% increased risk for Alzheimer’s disease (AD) in the year after COVID-19; the risk was especially high for people older than 85 years and for women.

However, the investigators were quick to point out that the observational retrospective study offers no evidence that COVID-19 causes AD. There could be a viral etiology at play, or the connection could be related to inflammation in neural tissue from the SARS-CoV-2 infection. Or it could simply be that exposure to the health care system for COVID-19 increased the odds of detection of existing undiagnosed AD cases.

Whatever the case, these findings point to a potential spike in AD cases, which is a cause for concern, study investigator Pamela Davis, MD, PhD, a professor in the Center for Community Health Integration at Case Western Reserve University, Cleveland, said in an interview.

“COVID may be giving us a legacy of ongoing medical difficulties,” Dr. Davis said. “We were already concerned about having a very large care burden and cost burden from Alzheimer’s disease. If this is another burden that’s increased by COVID, this is something we’re really going to have to prepare for.”

The findings were published online in Journal of Alzheimer’s Disease.

Increased risk

Earlier research points to a potential link between COVID-19 and increased risk for AD and Parkinson’s disease.

For the current study, researchers analyzed anonymous electronic health records of 6.2 million adults aged 65 years or older who received medical treatment between February 2020 and May 2021 and had no prior diagnosis of AD. The database includes information on almost 30% of the entire U.S. population.

Overall, there were 410,748 cases of COVID-19 during the study period.

The overall risk for new diagnosis of AD in the COVID-19 cohort was close to double that of those who did not have COVID-19 (0.68% vs. 0.35%, respectively).
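As a quick check of the "close to double" figure, the two reported proportions can simply be divided. The sketch below is illustrative only: the function name `risk_ratio` is an assumption, and the result is a crude, unadjusted ratio, which is not the same quantity as the propensity-matched hazard ratio the study reports.

```python
def risk_ratio(risk_exposed: float, risk_unexposed: float) -> float:
    """Return the ratio of two risks given as proportions (0.0068 means 0.68%)."""
    if risk_unexposed <= 0:
        raise ValueError("risk_unexposed must be positive")
    return risk_exposed / risk_unexposed


# Proportions reported in the article: 0.68% with COVID-19 vs. 0.35% without.
crude_rr = risk_ratio(0.0068, 0.0035)
print(f"crude risk ratio: {crude_rr:.2f}")
```

A crude ratio like this ignores differences between the two groups (age, comorbidities, and so on), which is why the matched estimate is the figure to rely on.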

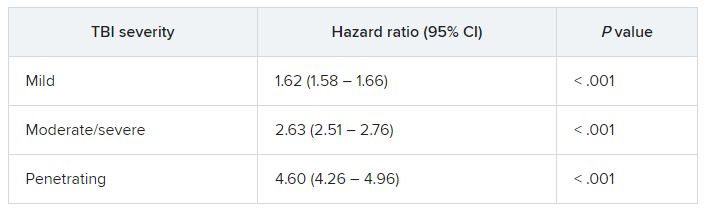

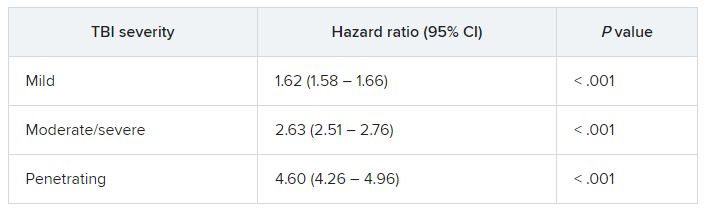

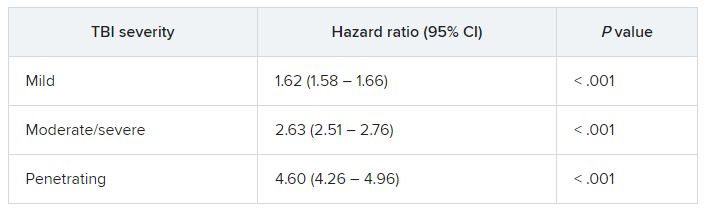

After propensity-score matching, those who had COVID-19 had a significantly higher risk for an AD diagnosis than those who were not infected (hazard ratio [HR], 1.69; 95% confidence interval [CI], 1.53-1.72).

Risk for AD was elevated in all age groups, regardless of gender or ethnicity. Researchers did not collect data on COVID-19 severity, and the medical codes for long COVID were not published until after the study had ended.

Those with the highest risk were individuals older than 85 years (HR, 1.89; 95% CI, 1.73-2.07) and women (HR, 1.82; 95% CI, 1.69-1.97).

“We expected to see some impact, but I was surprised that it was as potent as it was,” Dr. Davis said.

Association, not causation

Heather Snyder, PhD, Alzheimer’s Association vice president of medical and scientific relations, who commented on the findings for this article, called the study interesting but emphasized caution in interpreting the results.

“Because this study only showed an association through medical records, we cannot know what the underlying mechanisms driving this association are without more research,” Dr. Snyder said. “If you have had COVID-19, it doesn’t mean you’re going to get dementia. But if you have had COVID-19 and are experiencing long-term symptoms including cognitive difficulties, talk to your doctor.”

Dr. Davis agreed, noting that this type of study offers information on association, but not causation. “I do think that this makes it imperative that we continue to follow the population for what’s going on in various neurodegenerative diseases,” Dr. Davis said.

The study was funded by the National Institute on Aging, the National Institute on Alcohol Abuse and Alcoholism, the Clinical and Translational Science Collaborative of Cleveland, and the National Cancer Institute. Dr. Snyder reports no relevant financial conflicts.

A version of this article first appeared on Medscape.com.

FROM THE JOURNAL OF ALZHEIMER’S DISEASE

Abbreviated Delirium Screening Instruments: Plausible Tool to Improve Delirium Detection in Hospitalized Older Patients

Study 1 Overview (Oberhaus et al)

Objective: To compare the 3-Minute Diagnostic Confusion Assessment Method (3D-CAM) to the long-form Confusion Assessment Method (CAM) in detecting postoperative delirium.

Design: Prospective concurrent comparison of 3D-CAM and CAM evaluations in a cohort of postoperative geriatric patients.

Setting and participants: Eligible participants were patients aged 60 years or older undergoing major elective surgery at Barnes-Jewish Hospital (St. Louis, Missouri) who were enrolled in ongoing clinical trials (PODCAST, ENGAGES, SATISFY-SOS) between 2015 and 2018. Surgeries were at least 2 hours in length and required general anesthesia, planned extubation, and a minimum 2-day hospital stay. Investigators were extensively trained in administering 3D-CAM and CAM instruments. Participants were evaluated 2 hours after the end of anesthesia care on the day of surgery, then daily until follow-up was completed per clinical trial protocol or until the participant was determined by CAM to be nondelirious for 3 consecutive days. For each evaluation, both 3D-CAM and CAM assessors approached the participant together, but the evaluation was conducted such that the 3D-CAM assessor was masked to the additional questions ascertained by the long-form CAM assessment. The 3D-CAM and CAM assessors independently scored their respective assessments, each blinded to the results of the other.

Main outcome measures: Participants were concurrently evaluated for postoperative delirium by both 3D-CAM and long-form CAM assessments. Comparisons between 3D-CAM and CAM scores were made using Cohen κ with repeated measures, generalized linear mixed-effects model, and Bland-Altman analysis.

Main results: Sixteen raters performed 471 concurrent 3D-CAM and CAM assessments in 299 participants (mean [SD] age, 69 [6.5] years). Of these participants, 152 (50.8%) were men, 263 (88.0%) were White, and 211 (70.6%) underwent noncardiac surgery. Both instruments showed good intraclass correlation (0.98 for 3D-CAM, 0.84 for CAM) with good overall agreement (Cohen κ = 0.71; 95% CI, 0.58-0.83). The mixed-effects model indicated a significant disagreement between the 3D-CAM and CAM assessments (estimated difference in fixed effect, –0.68; 95% CI, –1.32 to –0.05; P = .04). The Bland-Altman analysis showed that the probability of a delirium diagnosis with the 3D-CAM was more than twice that with the CAM (probability ratio, 2.78; 95% CI, 2.44-3.23).

Conclusion: The high degree of agreement between 3D-CAM and long-form CAM assessments suggests that the former may be a pragmatic and easy-to-administer clinical tool to screen for postoperative delirium in vulnerable older surgical patients.
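For readers unfamiliar with the agreement statistic cited in Study 1, the following is a minimal sketch of plain Cohen κ for two raters making a yes/no call. The counts are hypothetical and chosen only for illustration (the source does not give the underlying 2×2 table), the function name is an assumption, and this simple form does not account for the repeated measures that the study's analysis handled.

```python
def cohen_kappa(both_pos: int, a_only: int, b_only: int, both_neg: int) -> float:
    """Cohen's kappa for two raters and a binary rating, from 2x2 cell counts."""
    n = both_pos + a_only + b_only + both_neg
    if n == 0:
        raise ValueError("at least one paired assessment is required")
    observed = (both_pos + both_neg) / n      # raw agreement
    a_pos = (both_pos + a_only) / n           # rater A's positive rate
    b_pos = (both_pos + b_only) / n           # rater B's positive rate
    expected = a_pos * b_pos + (1 - a_pos) * (1 - b_pos)  # agreement by chance
    if expected == 1:
        raise ValueError("kappa is undefined when chance agreement is 1")
    return (observed - expected) / (1 - expected)


# Hypothetical counts, NOT taken from the study.
kappa = cohen_kappa(both_pos=20, a_only=10, b_only=5, both_neg=65)
print(f"kappa = {kappa:.3f}")
```

Kappa discounts the agreement two raters would reach by chance alone, which is why it is lower than the raw agreement of 85% in this made-up example.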

Study 2 Overview (Shenkin et al)

Objective: To assess the accuracy of the 4 ‘A’s Test (4AT) for delirium detection in the medical inpatient setting and to compare the 4AT to the CAM.

Design: Prospective randomized diagnostic test accuracy study.

Setting and participants: This study was conducted in emergency departments and acute medical wards at 3 UK sites (Edinburgh, Bradford, and Sheffield) and enrolled acute medical patients aged 70 years or older without acute life-threatening illnesses and/or coma. Assessors administering the delirium evaluation were nurses or graduate clinical research associates who underwent systematic training in delirium and delirium assessment. Additional training was provided to those administering the CAM but not to those administering the 4AT as the latter is designed to be administered without special training. First, all participants underwent a reference standard delirium assessment using Diagnostic and Statistical Manual of Mental Disorders (Fourth Edition) (DSM-IV) criteria to derive a final definitive diagnosis of delirium via expert consensus (1 psychiatrist and 2 geriatricians). Then, the participants were randomized to either the 4AT or the comparator CAM group using computer-generated pseudo-random numbers, stratified by study site, with block allocation. All assessments were performed by pairs of independent assessors blinded to the results of the other assessment.

Main outcome measures: All participants were evaluated by the reference standard (DSM-IV criteria for delirium) and by either 4AT or CAM instruments for delirium. The accuracy of the 4AT instrument was evaluated by comparing its positive and negative predictive values, sensitivity, and specificity to the reference standard and analyzed via the area under the receiver operating characteristic curve. The diagnostic accuracy of 4AT, compared to the CAM, was evaluated by comparing positive and negative predictive values, sensitivity, and specificity using Fisher’s exact test. The overall performance of 4AT and CAM was summarized using Youden’s Index and the diagnostic odds ratio of sensitivity to specificity.

Results: All 843 individuals enrolled in the study were randomized and 785 were included in the analysis (23 withdrew, 3 lost contact, 32 indeterminate diagnosis, 2 missing outcome). Of the participants analyzed, the mean [SD] age was 81.4 [6.4] years, and 12.1% (95/785) had delirium by reference standard assessment, 14.3% (56/392) by 4AT, and 4.7% (18/384) by CAM. The 4AT group had an area under the receiver operating characteristic curve of 0.90 (95% CI, 0.84-0.96), a sensitivity of 76% (95% CI, 61%-87%), and a specificity of 94% (95% CI, 92%-97%). In comparison, the CAM group had a sensitivity of 40% (95% CI, 26%-57%) and a specificity of 100% (95% CI, 98%-100%).
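As a rough illustration of how Youden's Index summarizes these figures, the sketch below computes J = sensitivity + specificity − 1 from the rounded point estimates reported above. The function and variable names are illustrative, and because the inputs are rounded percentages the output is approximate and may not match the values published by the study authors.

```python
def youden_index(sensitivity: float, specificity: float) -> float:
    """Youden's J: 0 means no better than chance, 1 means a perfect test."""
    return sensitivity + specificity - 1.0


# Rounded point estimates as reported in the Results paragraph.
reported = {
    "4AT": (0.76, 0.94),
    "CAM": (0.40, 1.00),
}

# Round to 2 decimals, matching the precision of the inputs.
youden = {
    name: round(youden_index(sens, spec), 2)
    for name, (sens, spec) in reported.items()
}

for name, j in youden.items():
    print(f"{name}: J = {j:.2f}")
```

On these inputs the 4AT scores higher than the CAM because its gain in sensitivity outweighs its small loss in specificity.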

Conclusions: The 4AT is a pragmatic screening test for delirium in the acute medical setting that does not require special training to administer. The use of this instrument may help to improve delirium detection as a part of routine clinical care in hospitalized older adults.

Commentary

Delirium is an acute confusional state marked by fluctuating mental status, inattention, disorganized thinking, and altered level of consciousness. It is exceedingly common in older patients in both surgical and medical settings and is associated with increased morbidity, mortality, hospital length of stay, institutionalization, and health care costs. Delirium is frequently underdiagnosed in the hospitalized setting, perhaps due to a combination of its waxing and waning nature and a lack of pragmatic and easily implementable screening tools that can be readily administered by clinicians and nonclinicians alike.1 While the CAM is a well-validated instrument to diagnose delirium, it requires specific training in the rating of each of the cardinal features ascertained through a brief cognitive assessment and takes 5 to 10 minutes to complete. Taken together, given the high patient load for clinicians in the hospital setting, the validation and application of brief delirium screening instruments that can be reliably administered by nonphysicians and nonclinicians may enhance delirium detection in vulnerable patients and consequently improve their outcomes.

In Study 1, Oberhaus et al approach the challenge of underdiagnosed delirium in the postoperative setting by investigating whether the widely accepted long-form CAM and an abbreviated 3-minute version, the 3D-CAM, provide similar delirium detection in older surgical patients. The authors found that both instruments were reliable tests individually (high interrater reliability) and had good overall agreement. However, the 3D-CAM was more likely to yield a positive diagnosis of delirium compared to the long-form CAM, consistent with its purpose as a screening tool with a high sensitivity. It is important to emphasize that the 3D-CAM not only takes less time to administer but also requires less extensive training and clinical knowledge than the long-form CAM. Therefore, this instrument meets the prerequisite of a brief screening test that can be rapidly administered by nonclinicians and, if positive, followed by a more extensive confirmatory test performed by a clinician. Limitations of this study include the lack of a reference standard structured interview conducted by a physician-rater, which would have better determined the true diagnostic accuracy of both 3D-CAM and CAM assessments, and the use of convenience sampling at a single center, which reduces the generalizability of its findings.

In a similar vein, Shenkin et al in Study 2 attempt to evaluate the utility of the 4AT instrument in diagnosing delirium in older medical inpatients by testing the diagnostic accuracy of the 4AT against a reference standard (ie, DSM-IV–based evaluation by physicians) as well as comparing it to CAM. The 4AT takes less time (~2 minutes) and requires less knowledge and training to administer as compared to the CAM. The study showed that the abbreviated 4AT, compared to CAM, had a higher sensitivity (76% vs 40%) and lower specificity (94% vs 100%) in delirium detection. Thus, akin to the application of 3D-CAM in the postoperative setting, 4AT possesses key characteristics of a brief delirium screening test for older patients in the acute medical setting. In contrast to the Oberhaus et al study, a major strength of this study was the utilization of a reference standard that was validated by expert consensus. This allowed the 4AT and CAM assessments to be compared to a more objective standard, thereby directly testing their diagnostic performance in detecting delirium.

Application for Clinical Practice and System Implementation

The findings from both Study 1 and 2 suggest that using an abbreviated delirium instrument in both surgical and acute medical settings may provide a pragmatic and sensitive method to detect delirium in older patients. The brevity of administration of 3D-CAM (~3 minutes) and 4AT (~2 minutes), combined with their higher sensitivity for detecting delirium compared to CAM, make these instruments potentially effective rapid screening tests for delirium in hospitalized older patients. Importantly, the utilization of such instruments might be a feasible way to mitigate the issue of underdiagnosing delirium in the hospital.

Several additional aspects of these abbreviated delirium instruments increase their suitability for clinical application. Specifically, the 3D-CAM and 4AT require less extensive training and clinical knowledge to both administer and interpret the results than the CAM.2 For instance, a multistage, multiday training for CAM is a key factor in maintaining its diagnostic accuracy.3,4 In contrast, the 3D-CAM requires only a 1- to 2-hour training session, and the 4AT can be administered by a nonclinician without the need for instrument-specific training. Thus, implementation of these instruments can be particularly pragmatic in clinical settings in which the staff involved in delirium screening cannot undergo the substantial training required to administer CAM. Moreover, these abbreviated tests enable nonphysician care team members to assume the role of delirium screener in the hospital. Taken together, the adoption of these abbreviated instruments may facilitate brief screenings of delirium in older patients by caregivers who see them most often—nurses and certified nursing assistants—thereby improving early detection and prevention of delirium-related complications in the hospital.

The feasibility of using abbreviated delirium screening instruments in the hospital setting raises a system implementation question—if these instruments are designed to be administered by those with limited to no training, could nonclinicians, such as hospital volunteers, effectively take on delirium screening roles in the hospital? If volunteers are able to take on this role, the integration of hospital volunteers into the clinical team can greatly expand the capacity for delirium screening in the hospital setting. Further research is warranted to validate the diagnostic accuracy of 3D-CAM and 4AT by nonclinician administrators in order to more broadly adopt this approach to delirium screening.

Practice Points

- Abbreviated delirium screening tools such as 3D-CAM and 4AT may be pragmatic instruments to improve delirium detection in surgical and hospitalized older patients, respectively.

- Further studies are warranted to validate the diagnostic accuracy of 3D-CAM and 4AT by nonclinician administrators in order to more broadly adopt this approach to delirium screening.

Jared Doan, BS, and Fred Ko, MD

Geriatrics and Palliative Medicine, Icahn School of Medicine at Mount Sinai

1. Fong TG, Tulebaev SR, Inouye SK. Delirium in elderly adults: diagnosis, prevention and treatment. Nat Rev Neurol. 2009;5(4):210-220. doi:10.1038/nrneurol.2009.24

2. Marcantonio ER, Ngo LH, O’Connor M, et al. 3D-CAM: derivation and validation of a 3-minute diagnostic interview for CAM-defined delirium: a cross-sectional diagnostic test study. Ann Intern Med. 2014;161(8):554-561. doi:10.7326/M14-0865

3. Green JR, Smith J, Teale E, et al. Use of the confusion assessment method in multicentre delirium trials: training and standardisation. BMC Geriatr. 2019;19(1):107. doi:10.1186/s12877-019-1129-8

4. Wei LA, Fearing MA, Sternberg EJ, Inouye SK. The Confusion Assessment Method: a systematic review of current usage. J Am Geriatr Soc. 2008;56(5):823-830. doi:10.1111/j.1532-5415.2008.01674.x

Study 1 Overview (Oberhaus et al)

Objective: To compare the 3-Minute Diagnostic Confusion Assessment Method (3D-CAM) to the long-form Confusion Assessment Method (CAM) in detecting postoperative delirium.

Design: Prospective concurrent comparison of 3D-CAM and CAM evaluations in a cohort of postoperative geriatric patients.

Setting and participants: Eligible participants were patients aged 60 years or older undergoing major elective surgery at Barnes Jewish Hospital (St. Louis, Missouri) who were enrolled in ongoing clinical trials (PODCAST, ENGAGES, SATISFY-SOS) between 2015 and 2018. Surgeries were at least 2 hours in length and required general anesthesia, planned extubation, and a minimum 2-day hospital stay. Investigators were extensively trained in administering 3D-CAM and CAM instruments. Participants were evaluated 2 hours after the end of anesthesia care on the day of surgery, then daily until follow-up was completed per clinical trial protocol or until the participant was determined by CAM to be nondelirious for 3 consecutive days. For each evaluation, both 3D-CAM and CAM assessors approached the participant together, but the evaluation was conducted such that the 3D-CAM assessor was masked to the additional questions ascertained by the long-form CAM assessment. The 3D-CAM or CAM assessor independently scored their respective assessments blinded to the results of the other assessor.

Main outcome measures: Participants were concurrently evaluated for postoperative delirium by both 3D-CAM and long-form CAM assessments. Comparisons between 3D-CAM and CAM scores were made using Cohen κ with repeated measures, generalized linear mixed-effects model, and Bland-Altman analysis.

Main results: Sixteen raters performed 471 concurrent 3D-CAM and CAM assessments in 299 participants (mean [SD] age, 69 [6.5] years). Of these participants, 152 (50.8%) were men, 263 (88.0%) were White, and 211 (70.6%) underwent noncardiac surgery. Both instruments showed good intraclass correlation (0.98 for 3D-CAM, 0.84 for CAM) with good overall agreement (Cohen κ = 0.71; 95% CI, 0.58-0.83). The mixed-effects model indicated a significant disagreement between the 3D-CAM and CAM assessments (estimated difference in fixed effect, –0.68; 95% CI, –1.32 to –0.05; P = .04). The Bland-Altman analysis showed that the probability of a delirium diagnosis with the 3D-CAM was more than twice that with the CAM (probability ratio, 2.78; 95% CI, 2.44-3.23).

Conclusion: The high degree of agreement between 3D-CAM and long-form CAM assessments suggests that the former may be a pragmatic and easy-to-administer clinical tool to screen for postoperative delirium in vulnerable older surgical patients.

Study 2 Overview (Shenkin et al)

Objective: To assess the accuracy of the 4 ‘A’s Test (4AT) for delirium detection in the medical inpatient setting and to compare the 4AT to the CAM.

Design: Prospective randomized diagnostic test accuracy study.

Setting and participants: This study was conducted in emergency departments and acute medical wards at 3 UK sites (Edinburgh, Bradford, and Sheffield) and enrolled acute medical patients aged 70 years or older without acute life-threatening illnesses and/or coma. Assessors administering the delirium evaluation were nurses or graduate clinical research associates who underwent systematic training in delirium and delirium assessment. Additional training was provided to those administering the CAM but not to those administering the 4AT as the latter is designed to be administered without special training. First, all participants underwent a reference standard delirium assessment using Diagnostic and Statistical Manual of Mental Disorders (Fourth Edition) (DSM-IV) criteria to derive a final definitive diagnosis of delirium via expert consensus (1 psychiatrist and 2 geriatricians). Then, the participants were randomized to either the 4AT or the comparator CAM group using computer-generated pseudo-random numbers, stratified by study site, with block allocation. All assessments were performed by pairs of independent assessors blinded to the results of the other assessment.

Main outcome measures: All participants were evaluated by the reference standard (DSM-IV criteria for delirium) and by either 4AT or CAM instruments for delirium. The accuracy of the 4AT instrument was evaluated by comparing its positive and negative predictive values, sensitivity, and specificity to the reference standard and analyzed via the area under the receiver operating characteristic curve. The diagnostic accuracy of 4AT, compared to the CAM, was evaluated by comparing positive and negative predictive values, sensitivity, and specificity using Fisher’s exact test. The overall performance of 4AT and CAM was summarized using Youden’s Index and the diagnostic odds ratio of sensitivity to specificity.

Results: All 843 individuals enrolled in the study were randomized and 785 were included in the analysis (23 withdrew, 3 lost contact, 32 indeterminate diagnosis, 2 missing outcome). Of the participants analyzed, the mean age was 81.4 [6.4] years, and 12.1% (95/785) had delirium by reference standard assessment, 14.3% (56/392) by 4AT, and 4.7% (18/384) by CAM. The 4AT group had an area under the receiver operating characteristic curve of 0.90 (95% CI, 0.84-0.96), a sensitivity of 76% (95% CI, 61%-87%), and a specificity of 94% (95% CI, 92%-97%). In comparison, the CAM group had a sensitivity of 40% (95% CI, 26%-57%) and a specificity of 100% (95% CI, 98%-100%).

Conclusions: The 4AT is a pragmatic screening test for delirium in a medical space that does not require special training to administer. The use of this instrument may help to improve delirium detection as a part of routine clinical care in hospitalized older adults.

Commentary

Delirium is an acute confusional state marked by fluctuating mental status, inattention, disorganized thinking, and altered level of consciousness. It is exceedingly common in older patients in both surgical and medical settings and is associated with increased morbidity, mortality, hospital length of stay, institutionalization, and health care costs. Delirium is frequently underdiagnosed in the hospitalized setting, perhaps due to a combination of its waxing and waning nature and a lack of pragmatic and easily implementable screening tools that can be readily administered by clinicians and nonclinicians alike.1 While the CAM is a well-validated instrument to diagnose delirium, it requires specific training in the rating of each of the cardinal features ascertained through a brief cognitive assessment and takes 5 to 10 minutes to complete. Taken together, given the high patient load for clinicians in the hospital setting, the validation and application of brief delirium screening instruments that can be reliably administered by nonphysicians and nonclinicians may enhance delirium detection in vulnerable patients and consequently improve their outcomes.

In Study 1, Oberhaus et al approach the challenge of underdiagnosing delirium in the postoperative setting by investigating whether the widely accepted long-form CAM and an abbreviated 3-minute version, the 3D-CAM, provide similar delirium detection in older surgical patients. The authors found that both instruments were reliable tests individually (high interrater reliability) and had good overall agreement. However, the 3D-CAM was more likely to yield a positive diagnosis of delirium compared to the long-form CAM, consistent with its purpose as a screening tool with a high sensitivity. It is important to emphasize that the 3D-CAM takes less time to administer, but also requires less extensive training and clinical knowledge than the long-form CAM. Therefore, this instrument meets the prerequisite of a brief screening test that can be rapidly administered by nonclinicians, and if affirmative, followed by a more extensive confirmatory test performed by a clinician. Limitations of this study include a lack of a reference standard structured interview conducted by a physician-rater to better determine the true diagnostic accuracy of both 3D-CAM and CAM assessments, and the use of convenience sampling at a single center, which reduces the generalizability of its findings.

In a similar vein, Shenkin et al in Study 2 attempt to evaluate the utility of the 4AT instrument in diagnosing delirium in older medical inpatients by testing the diagnostic accuracy of the 4AT against a reference standard (ie, DSM-IV–based evaluation by physicians) as well as comparing it to CAM. The 4AT takes less time (~2 minutes) and requires less knowledge and training to administer as compared to the CAM. The study showed that the abbreviated 4AT, compared to CAM, had a higher sensitivity (76% vs 40%) and lower specificity (94% vs 100%) in delirium detection. Thus, akin to the application of 3D-CAM in the postoperative setting, 4AT possesses key characteristics of a brief delirium screening test for older patients in the acute medical setting. In contrast to the Oberhaus et al study, a major strength of this study was the utilization of a reference standard that was validated by expert consensus. This allowed the 4AT and CAM assessments to be compared to a more objective standard, thereby directly testing their diagnostic performance in detecting delirium.

Application for Clinical Practice and System Implementation

The findings from both Study 1 and 2 suggest that using an abbreviated delirium instrument in both surgical and acute medical settings may provide a pragmatic and sensitive method to detect delirium in older patients. The brevity of administration of 3D-CAM (~3 minutes) and 4AT (~2 minutes), combined with their higher sensitivity for detecting delirium compared to CAM, make these instruments potentially effective rapid screening tests for delirium in hospitalized older patients. Importantly, the utilization of such instruments might be a feasible way to mitigate the issue of underdiagnosing delirium in the hospital.

Several additional aspects of these abbreviated delirium instruments increase their suitability for clinical application. Specifically, the 3D-CAM and 4AT require less extensive training and clinical knowledge to both administer and interpret the results than the CAM.2 For instance, a multistage, multiday training for CAM is a key factor in maintaining its diagnostic accuracy.3,4 In contrast, the 3D-CAM requires only a 1- to 2-hour training session, and the 4AT can be administered by a nonclinician without the need for instrument-specific training. Thus, implementation of these instruments can be particularly pragmatic in clinical settings in which the staff involved in delirium screening cannot undergo the substantial training required to administer CAM. Moreover, these abbreviated tests enable nonphysician care team members to assume the role of delirium screener in the hospital. Taken together, the adoption of these abbreviated instruments may facilitate brief screenings of delirium in older patients by caregivers who see them most often—nurses and certified nursing assistants—thereby improving early detection and prevention of delirium-related complications in the hospital.

The feasibility of using abbreviated delirium screening instruments in the hospital setting raises a system implementation question—if these instruments are designed to be administered by those with limited to no training, could nonclinicians, such as hospital volunteers, effectively take on delirium screening roles in the hospital? If volunteers are able to take on this role, the integration of hospital volunteers into the clinical team can greatly expand the capacity for delirium screening in the hospital setting. Further research is warranted to validate the diagnostic accuracy of 3D-CAM and 4AT by nonclinician administrators in order to more broadly adopt this approach to delirium screening.

Practice Points

- Abbreviated delirium screening tools such as 3D-CAM and 4AT may be pragmatic instruments to improve delirium detection in surgical and hospitalized older patients, respectively.

- Further studies are warranted to validate the diagnostic accuracy of 3D-CAM and 4AT by nonclinician administrators in order to more broadly adopt this approach to delirium screening.

Jared Doan, BS, and Fred Ko, MD

Geriatrics and Palliative Medicine, Icahn School of Medicine at Mount Sinai

Study 1 Overview (Oberhaus et al)

Objective: To compare the 3-Minute Diagnostic Confusion Assessment Method (3D-CAM) to the long-form Confusion Assessment Method (CAM) in detecting postoperative delirium.

Design: Prospective concurrent comparison of 3D-CAM and CAM evaluations in a cohort of postoperative geriatric patients.

Setting and participants: Eligible participants were patients aged 60 years or older undergoing major elective surgery at Barnes Jewish Hospital (St. Louis, Missouri) who were enrolled in ongoing clinical trials (PODCAST, ENGAGES, SATISFY-SOS) between 2015 and 2018. Surgeries were at least 2 hours in length and required general anesthesia, planned extubation, and a minimum 2-day hospital stay. Investigators were extensively trained in administering 3D-CAM and CAM instruments. Participants were evaluated 2 hours after the end of anesthesia care on the day of surgery, then daily until follow-up was completed per clinical trial protocol or until the participant was determined by CAM to be nondelirious for 3 consecutive days. For each evaluation, both 3D-CAM and CAM assessors approached the participant together, but the evaluation was conducted such that the 3D-CAM assessor was masked to the additional questions ascertained by the long-form CAM assessment. The 3D-CAM or CAM assessor independently scored their respective assessments blinded to the results of the other assessor.

Main outcome measures: Participants were concurrently evaluated for postoperative delirium by both 3D-CAM and long-form CAM assessments. Comparisons between 3D-CAM and CAM scores were made using Cohen κ with repeated measures, generalized linear mixed-effects model, and Bland-Altman analysis.

Main results: Sixteen raters performed 471 concurrent 3D-CAM and CAM assessments in 299 participants (mean [SD] age, 69 [6.5] years). Of these participants, 152 (50.8%) were men, 263 (88.0%) were White, and 211 (70.6%) underwent noncardiac surgery. Both instruments showed good intraclass correlation (0.98 for 3D-CAM, 0.84 for CAM) with good overall agreement (Cohen κ = 0.71; 95% CI, 0.58-0.83). The mixed-effects model indicated a significant disagreement between the 3D-CAM and CAM assessments (estimated difference in fixed effect, –0.68; 95% CI, –1.32 to –0.05; P = .04). The Bland-Altman analysis showed that the probability of a delirium diagnosis with the 3D-CAM was more than twice that with the CAM (probability ratio, 2.78; 95% CI, 2.44-3.23).

Conclusion: The high degree of agreement between 3D-CAM and long-form CAM assessments suggests that the former may be a pragmatic and easy-to-administer clinical tool to screen for postoperative delirium in vulnerable older surgical patients.

Study 2 Overview (Shenkin et al)

Objective: To assess the accuracy of the 4 ‘A’s Test (4AT) for delirium detection in the medical inpatient setting and to compare the 4AT to the CAM.

Design: Prospective randomized diagnostic test accuracy study.

Setting and participants: This study was conducted in emergency departments and acute medical wards at 3 UK sites (Edinburgh, Bradford, and Sheffield) and enrolled acute medical patients aged 70 years or older without acute life-threatening illnesses and/or coma. Assessors administering the delirium evaluation were nurses or graduate clinical research associates who underwent systematic training in delirium and delirium assessment. Additional training was provided to those administering the CAM but not to those administering the 4AT as the latter is designed to be administered without special training. First, all participants underwent a reference standard delirium assessment using Diagnostic and Statistical Manual of Mental Disorders (Fourth Edition) (DSM-IV) criteria to derive a final definitive diagnosis of delirium via expert consensus (1 psychiatrist and 2 geriatricians). Then, the participants were randomized to either the 4AT or the comparator CAM group using computer-generated pseudo-random numbers, stratified by study site, with block allocation. All assessments were performed by pairs of independent assessors blinded to the results of the other assessment.

Main outcome measures: All participants were evaluated by the reference standard (DSM-IV criteria for delirium) and by either 4AT or CAM instruments for delirium. The accuracy of the 4AT instrument was evaluated by comparing its positive and negative predictive values, sensitivity, and specificity to the reference standard and analyzed via the area under the receiver operating characteristic curve. The diagnostic accuracy of 4AT, compared to the CAM, was evaluated by comparing positive and negative predictive values, sensitivity, and specificity using Fisher’s exact test. The overall performance of 4AT and CAM was summarized using Youden’s Index and the diagnostic odds ratio of sensitivity to specificity.

Results: All 843 individuals enrolled in the study were randomized and 785 were included in the analysis (23 withdrew, 3 lost contact, 32 indeterminate diagnosis, 2 missing outcome). Of the participants analyzed, the mean age was 81.4 [6.4] years, and 12.1% (95/785) had delirium by reference standard assessment, 14.3% (56/392) by 4AT, and 4.7% (18/384) by CAM. The 4AT group had an area under the receiver operating characteristic curve of 0.90 (95% CI, 0.84-0.96), a sensitivity of 76% (95% CI, 61%-87%), and a specificity of 94% (95% CI, 92%-97%). In comparison, the CAM group had a sensitivity of 40% (95% CI, 26%-57%) and a specificity of 100% (95% CI, 98%-100%).

Conclusions: The 4AT is a pragmatic screening test for delirium in a medical space that does not require special training to administer. The use of this instrument may help to improve delirium detection as a part of routine clinical care in hospitalized older adults.

Commentary

Delirium is an acute confusional state marked by fluctuating mental status, inattention, disorganized thinking, and altered level of consciousness. It is exceedingly common in older patients in both surgical and medical settings and is associated with increased morbidity, mortality, hospital length of stay, institutionalization, and health care costs. Delirium is frequently underdiagnosed in the hospitalized setting, perhaps due to a combination of its waxing and waning nature and a lack of pragmatic and easily implementable screening tools that can be readily administered by clinicians and nonclinicians alike.1 While the CAM is a well-validated instrument to diagnose delirium, it requires specific training in the rating of each of the cardinal features ascertained through a brief cognitive assessment and takes 5 to 10 minutes to complete. Taken together, given the high patient load for clinicians in the hospital setting, the validation and application of brief delirium screening instruments that can be reliably administered by nonphysicians and nonclinicians may enhance delirium detection in vulnerable patients and consequently improve their outcomes.

In Study 1, Oberhaus et al approach the challenge of underdiagnosing delirium in the postoperative setting by investigating whether the widely accepted long-form CAM and an abbreviated 3-minute version, the 3D-CAM, provide similar delirium detection in older surgical patients. The authors found that both instruments were reliable tests individually (high interrater reliability) and had good overall agreement. However, the 3D-CAM was more likely to yield a positive diagnosis of delirium compared to the long-form CAM, consistent with its purpose as a screening tool with a high sensitivity. It is important to emphasize that the 3D-CAM takes less time to administer, but also requires less extensive training and clinical knowledge than the long-form CAM. Therefore, this instrument meets the prerequisite of a brief screening test that can be rapidly administered by nonclinicians, and if affirmative, followed by a more extensive confirmatory test performed by a clinician. Limitations of this study include a lack of a reference standard structured interview conducted by a physician-rater to better determine the true diagnostic accuracy of both 3D-CAM and CAM assessments, and the use of convenience sampling at a single center, which reduces the generalizability of its findings.

In a similar vein, Shenkin et al in Study 2 attempt to evaluate the utility of the 4AT instrument in diagnosing delirium in older medical inpatients by testing the diagnostic accuracy of the 4AT against a reference standard (ie, DSM-IV–based evaluation by physicians) as well as comparing it to CAM. The 4AT takes less time (~2 minutes) and requires less knowledge and training to administer as compared to the CAM. The study showed that the abbreviated 4AT, compared to CAM, had a higher sensitivity (76% vs 40%) and lower specificity (94% vs 100%) in delirium detection. Thus, akin to the application of 3D-CAM in the postoperative setting, 4AT possesses key characteristics of a brief delirium screening test for older patients in the acute medical setting. In contrast to the Oberhaus et al study, a major strength of this study was the utilization of a reference standard that was validated by expert consensus. This allowed the 4AT and CAM assessments to be compared to a more objective standard, thereby directly testing their diagnostic performance in detecting delirium.

Application for Clinical Practice and System Implementation

The findings from both Study 1 and 2 suggest that using an abbreviated delirium instrument in both surgical and acute medical settings may provide a pragmatic and sensitive method to detect delirium in older patients. The brevity of administration of 3D-CAM (~3 minutes) and 4AT (~2 minutes), combined with their higher sensitivity for detecting delirium compared to CAM, make these instruments potentially effective rapid screening tests for delirium in hospitalized older patients. Importantly, the utilization of such instruments might be a feasible way to mitigate the issue of underdiagnosing delirium in the hospital.

Several additional aspects of these abbreviated delirium instruments increase their suitability for clinical application. Specifically, the 3D-CAM and 4AT require less extensive training and clinical knowledge to both administer and interpret the results than the CAM.2 For instance, a multistage, multiday training for CAM is a key factor in maintaining its diagnostic accuracy.3,4 In contrast, the 3D-CAM requires only a 1- to 2-hour training session, and the 4AT can be administered by a nonclinician without the need for instrument-specific training. Thus, implementation of these instruments can be particularly pragmatic in clinical settings in which the staff involved in delirium screening cannot undergo the substantial training required to administer CAM. Moreover, these abbreviated tests enable nonphysician care team members to assume the role of delirium screener in the hospital. Taken together, the adoption of these abbreviated instruments may facilitate brief screenings of delirium in older patients by caregivers who see them most often—nurses and certified nursing assistants—thereby improving early detection and prevention of delirium-related complications in the hospital.

The feasibility of using abbreviated delirium screening instruments in the hospital setting raises a system implementation question—if these instruments are designed to be administered by those with limited to no training, could nonclinicians, such as hospital volunteers, effectively take on delirium screening roles in the hospital? If volunteers are able to take on this role, the integration of hospital volunteers into the clinical team can greatly expand the capacity for delirium screening in the hospital setting. Further research is warranted to validate the diagnostic accuracy of 3D-CAM and 4AT by nonclinician administrators in order to more broadly adopt this approach to delirium screening.

Practice Points

- Abbreviated delirium screening tools such as 3D-CAM and 4AT may be pragmatic instruments to improve delirium detection in surgical and hospitalized older patients, respectively.

- Further studies are warranted to validate the diagnostic accuracy of 3D-CAM and 4AT by nonclinician administrators in order to more broadly adopt this approach to delirium screening.

Jared Doan, BS, and Fred Ko, MD

Geriatrics and Palliative Medicine, Icahn School of Medicine at Mount Sinai

1. Fong TG, Tulebaev SR, Inouye SK. Delirium in elderly adults: diagnosis, prevention and treatment. Nat Rev Neurol. 2009;5(4):210-220. doi:10.1038/nrneurol.2009.24

2. Marcantonio ER, Ngo LH, O’Connor M, et al. 3D-CAM: derivation and validation of a 3-minute diagnostic interview for CAM-defined delirium: a cross-sectional diagnostic test study. Ann Intern Med. 2014;161(8):554-561. doi:10.7326/M14-0865

3. Green JR, Smith J, Teale E, et al. Use of the confusion assessment method in multicentre delirium trials: training and standardisation. BMC Geriatr. 2019;19(1):107. doi:10.1186/s12877-019-1129-8

4. Wei LA, Fearing MA, Sternberg EJ, Inouye SK. The Confusion Assessment Method: a systematic review of current usage. J Am Geriatr Soc. 2008;56(5):823-830. doi:10.1111/j.1532-5415.2008.01674.x

New ESC guidelines for cutting CV risk in noncardiac surgery

The European Society of Cardiology guidelines on cardiovascular assessment and management of patients undergoing noncardiac surgery have seen extensive revision since the 2014 version.

They still have the same aim – to prevent surgery-related bleeding complications, perioperative myocardial infarction/injury (PMI), stent thrombosis, acute heart failure, arrhythmias, pulmonary embolism, ischemic stroke, and cardiovascular (CV) death.

Cochairpersons Sigrun Halvorsen, MD, PhD, and Julinda Mehilli, MD, presented highlights from the guidelines at the annual congress of the European Society of Cardiology and the document was simultaneously published online in the European Heart Journal.

The document classifies noncardiac surgery into three levels of 30-day risk of CV death, MI, or stroke. Low (< 1%) risk includes eye or thyroid surgery; intermediate (1%-5%) risk includes knee or hip replacement or renal transplant; and high (> 5%) risk includes aortic aneurysm, lung transplant, or pancreatic or bladder cancer surgery (see more examples below).

It classifies patients as low risk if they are younger than 65 without CV disease or CV risk factors (smoking, hypertension, diabetes, dyslipidemia, family history); intermediate risk if they are 65 or older or have CV risk factors; and high risk if they have CVD.
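The two classifications above can be sketched as simple lookup functions. This is a minimal illustration that assumes only the thresholds quoted in this article; the function names and parameters are hypothetical, and the sketch is not the guideline's own algorithm or a clinical tool.

```python
def surgery_risk_tier(risk_pct: float) -> str:
    """Map a 30-day risk of CV death, MI, or stroke (in percent) to a tier.

    Thresholds follow the article: low (< 1%), intermediate (1%-5%), high (> 5%).
    """
    if risk_pct < 0:
        raise ValueError("risk_pct must be non-negative")
    if risk_pct < 1.0:
        return "low"
    if risk_pct <= 5.0:
        return "intermediate"
    return "high"


def patient_risk_tier(age: int, has_cvd: bool, has_cv_risk_factors: bool) -> str:
    """Map patient characteristics to a tier as described in the article.

    has_cv_risk_factors is a hypothetical flag covering smoking, hypertension,
    diabetes, dyslipidemia, or family history.
    """
    if has_cvd:
        return "high"
    if age >= 65 or has_cv_risk_factors:
        return "intermediate"
    return "low"


if __name__ == "__main__":
    print(surgery_risk_tier(0.5))            # low
    print(surgery_risk_tier(3.0))            # intermediate
    print(patient_risk_tier(58, False, True))  # intermediate
```

Checking for established CVD first reflects the article's wording that any patient with CVD is high risk, regardless of age.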

In an interview, Dr. Halvorsen, professor in cardiology, University of Oslo, zeroed in on three important revisions:

First, recommendations for preoperative ECG and biomarkers are more specific, she noted.

The guidelines advise that before intermediate- or high-risk noncardiac surgery, in patients who have known CVD, CV risk factors (including age 65 or older), or symptoms suggestive of CVD:

- It is recommended to obtain a preoperative 12-lead ECG (class I).

- It is recommended to measure high-sensitivity cardiac troponin T (hs-cTn T) or high-sensitivity cardiac troponin I (hs-cTn I). It is also recommended to measure these biomarkers at 24 hours and 48 hours post surgery (class I).

- It should be considered to measure B-type natriuretic peptide or N-terminal of the prohormone BNP (NT-proBNP).

However, for low-risk patients undergoing low- and intermediate-risk noncardiac surgery, it is not recommended to routinely obtain preoperative ECG, hs-cTn T/I, or BNP/NT-proBNP concentrations (class III).

Troponins have a stronger class I recommendation, compared with the class IIa recommendation for BNP, because they are useful for preoperative risk stratification and for diagnosis of PMI, Dr. Halvorsen explained. “Patients receive painkillers after surgery and may have no pain,” she noted, but they may have PMI, which has a bad prognosis.

Second, the guidelines recommend that “all patients should stop smoking 4 weeks before noncardiac surgery [class I],” she noted. Clinicians should also “measure hemoglobin, and if the patient is anemic, treat the anemia.”

Third, the sections on antithrombotic treatment have been significantly revised. “Bridging – stopping an oral antithrombotic drug and switching to a subcutaneous or IV drug – has been common,” Dr. Halvorsen said, “but recently we have new evidence that in most cases that increases the risk of bleeding.”

“We are [now] much more restrictive with respect to bridging” with unfractionated heparin or low-molecular-weight heparin, she said. “We recommend against bridging in patients with low to moderate thrombotic risk,” and bridging should only be considered in patients with mechanical prosthetic heart valves or with very high thrombotic risk.

More preoperative recommendations

In the guideline overview session at the congress, Dr. Halvorsen highlighted some of the new recommendations for preoperative risk assessment.

If time allows, it is recommended to optimize guideline-recommended treatment of CVD and control of CV risk factors including blood pressure, dyslipidemia, and diabetes, before noncardiac surgery (class I).

Patients commonly have “murmurs, chest pain, dyspnea, and edema that may suggest severe CVD, but may also be caused by noncardiac disease,” she noted. The guidelines state that “for patients with a newly detected murmur and symptoms or signs of CVD, transthoracic echocardiography is recommended before noncardiac surgery (class I).”

“Many studies have been performed to try to find out if initiation of specific drugs before surgery could reduce the risk of complications,” Dr. Halvorsen noted. However, few have shown any benefit and “the question of presurgery initiation of beta-blockers has been greatly debated,” she said. “We have again reviewed the literature and concluded ‘Routine initiation of beta-blockers perioperatively is not recommended (class IIIA).’”

“We adhere to the guidelines on acute and chronic coronary syndrome recommending 6-12 months of dual antiplatelet treatment as a standard before elective surgery,” she said. “However, in case of time-sensitive surgery, the duration of that treatment can be shortened down to a minimum of 1 month after elective PCI and a minimum of 3 months after PCI and ACS.”

Patients with specific types of CVD

Dr. Mehilli, a professor at Landshut-Achdorf (Germany) Hospital, highlighted some new guideline recommendations for patients who have specific types of cardiovascular disease.

Coronary artery disease (CAD). “For chronic coronary syndrome, a cardiac workup is recommended only for patients undergoing intermediate risk or high-risk noncardiac surgery.”

“Stress imaging should be considered before any high risk, noncardiac surgery in asymptomatic patients with poor functional capacity and prior PCI or coronary artery bypass graft (new recommendation, class IIa).”

Mitral valve regurgitation. For patients undergoing scheduled noncardiac surgery, who remain symptomatic despite guideline-directed medical treatment for mitral valve regurgitation (including resynchronization and myocardial revascularization), consider a valve intervention – either transcatheter or surgical – before noncardiac surgery in eligible patients with acceptable procedural risk (new recommendation).

Cardiac implantable electronic devices (CIED). For high-risk patients with CIEDs undergoing noncardiac surgery with high probability of electromagnetic interference, a CIED checkup and necessary reprogramming immediately before the procedure should be considered (new recommendation).

Arrhythmias. “I want only to stress,” Dr. Mehilli said, “in patients with atrial fibrillation with acute or worsening hemodynamic instability undergoing noncardiac surgery, an emergency electrical cardioversion is recommended (class I).”

Peripheral artery disease (PAD) and abdominal aortic aneurysm. For these patients “we do not recommend a routine referral for a cardiac workup. But we recommend it for patients with poor functional capacity or with significant risk factors or symptoms (new recommendations).”

Chronic arterial hypertension. “We have modified the recommendation, recommending avoidance of large perioperative fluctuations in blood pressure, and we do not recommend deferring noncardiac surgery in patients with stage 1 or 2 hypertension,” she said.

Postoperative cardiovascular complications

The most frequent postoperative cardiovascular complication is PMI, Dr. Mehilli noted.

“In the BASEL-PMI registry, the incidence of this complication around intermediate or high-risk noncardiac surgery was up to 15% among patients older than 65 years or with a history of CAD or PAD, which makes this kind of complication really important to prevent, to assess, and to know how to treat.”

“It is recommended to have a high awareness for perioperative cardiovascular complications, combined with surveillance for PMI in patients undergoing intermediate- or high-risk noncardiac surgery” based on serial measurements of high-sensitivity cardiac troponin.

The guidelines define PMI as “an increase in the delta of high-sensitivity troponin more than the upper level of normal,” Dr. Mehilli said. “It’s different from the one used in a rule-in algorithm for non-STEMI acute coronary syndrome.”

Postoperative atrial fibrillation (AFib) is observed in 2%-30% of noncardiac surgery patients in different registries, particularly in patients undergoing intermediate or high-risk noncardiac surgery, she noted.

“We propose an algorithm on how to prevent and treat this complication. I want to highlight that in patients with hemodynamic unstable postoperative AF[ib], an emergency cardioversion is indicated. For the others, a rate control with the target heart rate of less than 110 beats per minute is indicated.”

In patients with postoperative AFib, long-term oral anticoagulation therapy should be considered in all patients at risk for stroke, considering the anticipated net clinical benefit of oral anticoagulation therapy as well as informed patient preference (new recommendations).

Routine use of beta-blockers to prevent postoperative AFib in patients undergoing noncardiac surgery is not recommended.

The document also covers the management of patients with kidney disease, diabetes, cancer, obesity, and COVID-19. In general, elective noncardiac surgery should be postponed after a patient has COVID-19, until he or she recovers completely, and coexisting conditions are optimized.

The guidelines are available from the ESC website in several formats: pocket guidelines, pocket guidelines smartphone app, guidelines slide set, essential messages, and the European Heart Journal article.

Noncardiac surgery risk categories

The guideline includes a table that classifies noncardiac surgeries into three groups, based on the associated 30-day risk of death, MI, or stroke:

- Low (< 1%): breast, dental, eye, thyroid, and minor gynecologic, orthopedic, and urologic surgery.

- Intermediate (1%-5%): carotid surgery, endovascular aortic aneurysm repair, gallbladder surgery, head or neck surgery, hernia repair, peripheral arterial angioplasty, renal transplant, major gynecologic, orthopedic, or neurologic (hip or spine) surgery, or urologic surgery.

- High (> 5%): aortic and major vascular surgery (including aortic aneurysm), bladder removal (usually as a result of cancer), limb amputation, lung or liver transplant, pancreatic surgery, or perforated bowel repair.

The guidelines were endorsed by the European Society of Anaesthesiology and Intensive Care. The guideline authors reported numerous disclosures.

A version of this article first appeared on Medscape.com.
