The Journal of Clinical Outcomes Management® is an independent, peer-reviewed journal offering evidence-based, practical information for improving the quality, safety, and value of health care.

Quality of Life and Population Health in Behavioral Health Care: A Retrospective, Cross-Sectional Study

From Milwaukee County Behavioral Health Services, Milwaukee, WI.

Abstract

Objectives: The goal of this study was to determine whether a single-item quality of life (QOL) measure could serve as a useful population health–level metric within the Quadruple Aim framework in a publicly funded behavioral health system.

Design: This was a retrospective, cross-sectional study that examined the correlation between the single-item QOL measure and several other key measures of the social determinants of health and a composite measure of acute service utilization for all patients receiving mental health and substance use services in a community behavioral health system.

Methods: Data were collected for 4488 patients who had at least 1 assessment between October 1, 2020, and September 30, 2021. Data on social determinants of health were obtained through patient self-report; acute service use data were obtained from electronic health records.

Results: Statistical analyses revealed results in the expected direction for all relationships tested. Patients with higher QOL were more likely to report “Good” or better self-rated physical health, be employed, have a private residence, and report recent positive social interactions, and were less likely to have received acute services in the previous 90 days.

Conclusion: A single-item QOL measure shows promise as a general, minimally burdensome whole-system metric that can function as a target for population health management efforts in a large behavioral health system. Future research should explore whether this QOL measure is sensitive to change over time and examine its temporal relationship with other key outcome metrics.

Keywords: Quadruple Aim, single-item measures, social determinants of health, acute service utilization metrics.

The Triple Aim for health care—improving the individual experience of care, increasing the health of populations, and reducing the costs of care—was first proposed in 2008.1 More recently, some have advocated for an expanded focus to include a fourth aim: the quality of staff work life.2 Since this seminal paper was published, many health care systems have endeavored to adopt and implement the Quadruple Aim3,4; however, the concepts representing each of the aims are not universally defined,3 nor are the measures needed to populate the Quadruple Aim always available within the health system in question.5

Although several assessment models and frameworks that provide guidance to stakeholders have been developed,6,7 it is ultimately up to organizations themselves to determine which measures they should deploy to best represent the different quadrants of the Quadruple Aim.6 Evidence suggests, however, that quality measurement, and the administrative time required to conduct it, can be both financially and emotionally burdensome to providers and health systems.8-10 Thus, it is incumbent on organizations to select a set of measures that are not only meaningful but as parsimonious as possible.6,11,12

Quality of life (QOL) is a potential candidate to assess the aim of population health. Brief health-related QOL questions have long been used in epidemiological surveys, such as the Behavioral Risk Factor Surveillance System survey.13 Such questions are also a key component of community health frameworks, such as the County Health Rankings developed by the University of Wisconsin Population Health Institute.14 Furthermore, Humana recently revealed that increasing the number of physical and mental health “Healthy Days” (which are among the Centers for Disease Control and Prevention’s Health-Related Quality of Life questions15) among the members enrolled in their insurance plan would become a major goal for the organization.16,17 Many of these measures, while brief, focus on QOL as a function of health, often as a self-rated construct (from “Poor” to “Excellent”) or in the form of days of poor physical or mental health in the past 30 days,15 rather than evaluating QOL itself; however, several authors have pointed out that health status and QOL are related but distinct concepts.18,19

Brief single-item assessments focused specifically on QOL have been developed and implemented within nonclinical20 and clinical populations, including individuals with cancer,21 adults with disabilities,22 individuals with cystic fibrosis,23 and children with epilepsy.24 Despite the long history of QOL assessment in behavioral health treatment,25 single-item measures have not been widely implemented in this population.

Milwaukee County Behavioral Health Services (BHS), a publicly funded, county-based behavioral health care system in Milwaukee, Wisconsin, provides inpatient and ambulatory treatment, psychiatric emergency care, withdrawal management, care management, crisis services, and other support services to individuals in Milwaukee County. In 2018, the community services arm of BHS began implementing a single QOL question from the World Health Organization’s WHOQOL-BREF26: “How would you rate your overall quality of life right now?” Responses are given on a 5-point rating scale from “Very Poor” to “Very Good.” Previous research by Atroszko and colleagues,20 which used a similar approach with the same item from the WHOQOL-BREF, reported correlations in the expected direction of the single-item QOL measure with perceived stress, depression, anxiety, loneliness, and daily hours of sleep. That study’s sample, however, comprised opportunistically recruited college students, not a clinical population. Further, the researchers did not examine the relationship of QOL with acute service utilization or other measures of the social determinants of health, such as housing, employment, or social connectedness.

The following study was designed to extend these results by focusing on a clinical population—individuals with mental health or substance use issues—being served in a large, publicly funded behavioral health system in Milwaukee, Wisconsin. The objective of this study was to determine whether a single-item QOL measure could be used as a brief, parsimonious measure of overall population health by examining its relationship with other key outcome measures for patients receiving services from BHS. This study was reviewed and approved by BHS’s Institutional Review Board.

Methods

All patients engaged in nonacute community services are offered a standardized assessment that includes, among other measures, items related to QOL, housing status, employment status, self-rated physical health, and social connectedness. This assessment is administered at intake, discharge, and every 6 months while patients are enrolled in services. Patients who received at least 1 assessment between October 1, 2020, and September 30, 2021, were included in the analyses. Patients receiving crisis, inpatient, or withdrawal management services alone (ie, those who did not receive any other community-based services) were not offered the standard assessment and thus were not included in the analyses. If patients had more than 1 assessment during this time period, QOL data from the last assessment were used. Data on housing (private residence status, defined as adults living alone or with others without supervision in a house or apartment), employment status, self-rated physical health, and social connectedness (measured by asking patients whether they had had positive interactions with family or friends in the past 30 days) were extracted from the same timepoint as well.
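The selection rule described above (keep only assessments inside the study window, then use each patient's last one) can be sketched in a few lines of Python. This is an illustrative sketch only, not the extraction code used in the study: the record layout, the field names `patient_id`, `assessed_on`, and `qol`, and the sample records are all hypothetical.

```python
from datetime import date

# Study window stated in the text.
WINDOW_START = date(2020, 10, 1)
WINDOW_END = date(2021, 9, 30)


def last_assessment_per_patient(assessments):
    """Return {patient_id: record}, keeping the latest in-window record.

    `assessments` is an iterable of dicts with the hypothetical keys
    'patient_id' and 'assessed_on' (a datetime.date).
    """
    latest = {}
    for record in assessments:
        if not (WINDOW_START <= record["assessed_on"] <= WINDOW_END):
            continue  # assessment falls outside the study window
        current = latest.get(record["patient_id"])
        if current is None or record["assessed_on"] > current["assessed_on"]:
            latest[record["patient_id"]] = record
    return latest


# Made-up records for illustration only.
records = [
    {"patient_id": "A", "assessed_on": date(2020, 11, 5), "qol": "Poor"},
    {"patient_id": "A", "assessed_on": date(2021, 5, 6), "qol": "Good"},
    {"patient_id": "B", "assessed_on": date(2020, 9, 30), "qol": "Good"},
]
selected = last_assessment_per_patient(records)
```

In this toy input, patient A contributes the May 2021 assessment and patient B is excluded because the only assessment predates the window.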

Also included in the analyses were rates of acute service utilization, in which any patient with at least 1 visit to BHS’s psychiatric emergency department, withdrawal management facility, or psychiatric inpatient facility in the 90 days prior to the date of the assessment received a code of “Yes,” and any patient who did not receive any of these services received a code of “No.” Chi-square analyses were conducted to determine the relationship between QOL rankings (“Very Poor,” “Poor,” “Neither Good nor Poor,” “Good,” and “Very Good”) and housing, employment, self-rated physical health, social connectedness, and 90-day acute service use. All acute service utilization data were obtained from BHS’s electronic health records system. All data used in the study were stored on a secure, password-protected server. All analyses were conducted with SPSS software (SPSS 28; IBM).
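As a rough illustration of the two steps above, the sketch below codes the 90-day acute service flag and computes a Pearson chi-square statistic for a QOL-by-outcome table. It is not the SPSS procedure used in the study. The function names are hypothetical, the counts are invented, and the boundary handling (the assessment date itself is excluded from the lookback window) is an assumption the text does not specify.

```python
from datetime import date, timedelta


def acute_use_flag(assessed_on, acute_visit_dates, lookback_days=90):
    """Return 'Yes' if any acute visit falls in the lookback window.

    Assumption: the window covers the `lookback_days` days before the
    assessment and excludes the assessment date itself.
    """
    window_start = assessed_on - timedelta(days=lookback_days)
    in_window = any(window_start <= d < assessed_on for d in acute_visit_dates)
    return "Yes" if in_window else "No"


def chi_square_statistic(table):
    """Pearson chi-square statistic and degrees of freedom for an r x c table."""
    row_totals = [sum(row) for row in table]
    col_totals = [sum(col) for col in zip(*table)]
    total = sum(row_totals)
    stat = 0.0
    for i, row in enumerate(table):
        for j, observed in enumerate(row):
            expected = row_totals[i] * col_totals[j] / total
            stat += (observed - expected) ** 2 / expected
    dof = (len(table) - 1) * (len(table[0]) - 1)
    return stat, dof


# Invented counts for illustration only (NOT the study's data).
# Rows: "Very Poor", "Poor", "Neither Good nor Poor", "Good", "Very Good".
# Columns: acute service use "Yes", "No".
example_table = [
    [20, 80],
    [15, 85],
    [10, 90],
    [5, 95],
    [3, 97],
]
statistic, degrees_of_freedom = chi_square_statistic(example_table)
```

With 5 QOL categories and a binary outcome, the test has 4 degrees of freedom. Converting the statistic to a P value requires the chi-square distribution, which a statistics package would supply.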

Results

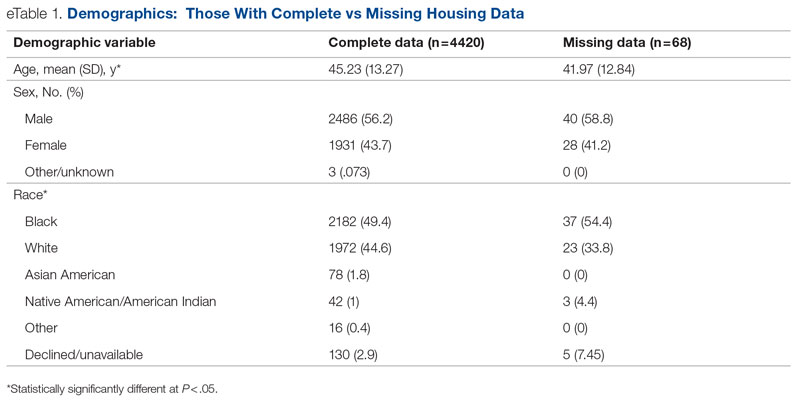

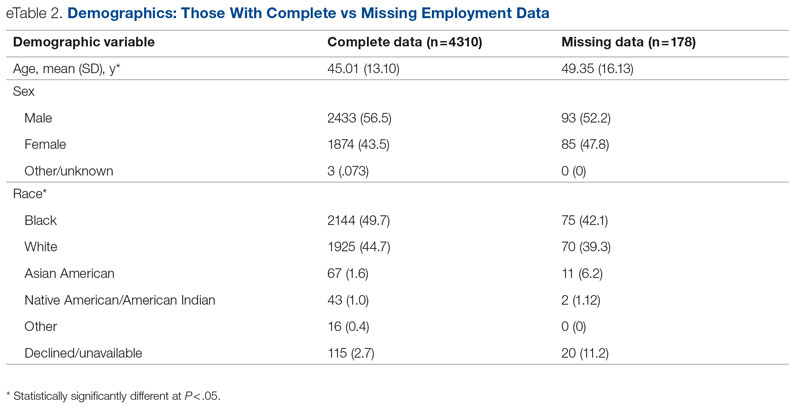

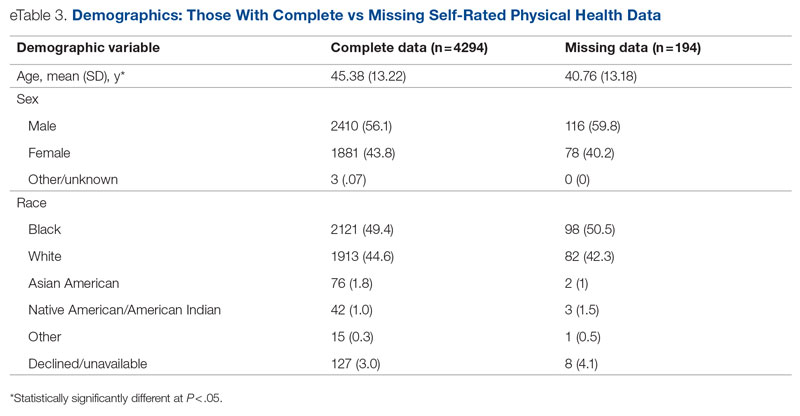

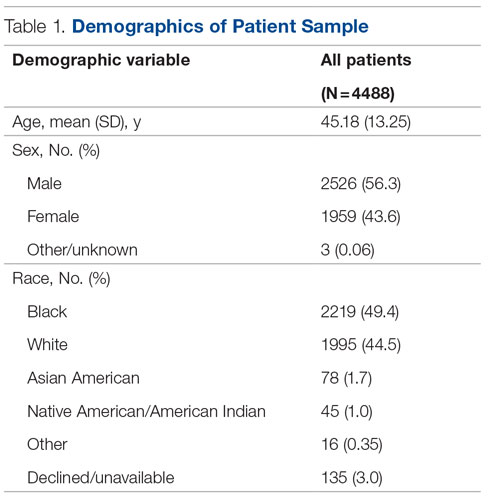

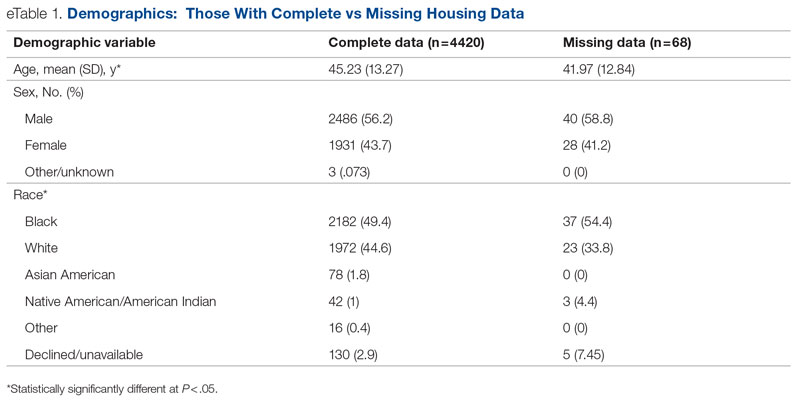

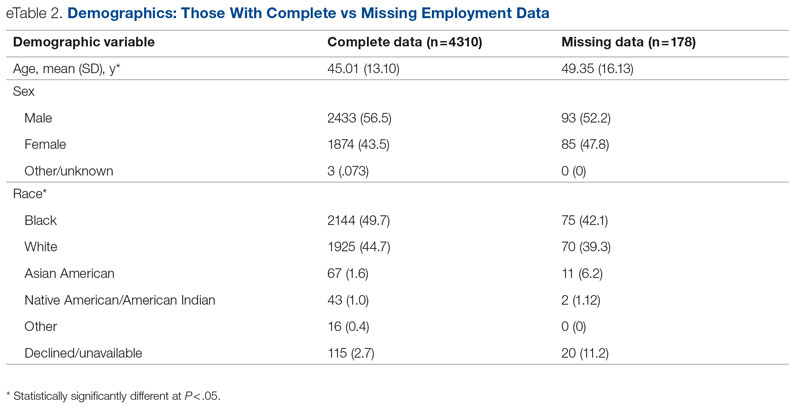

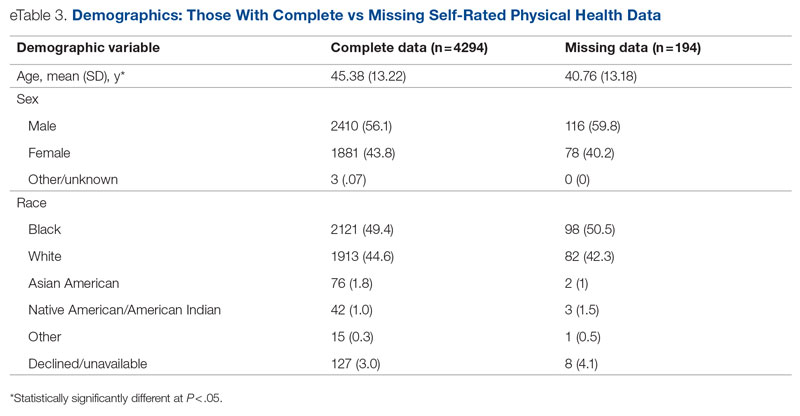

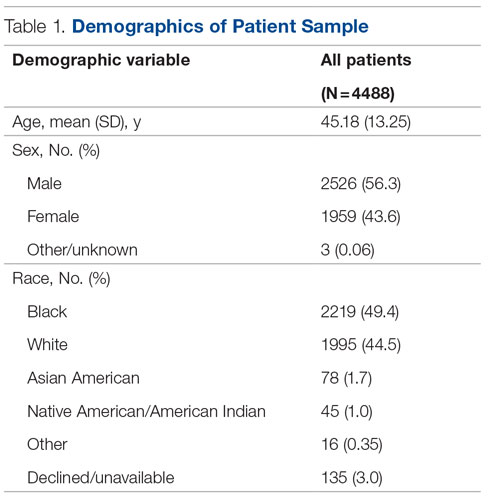

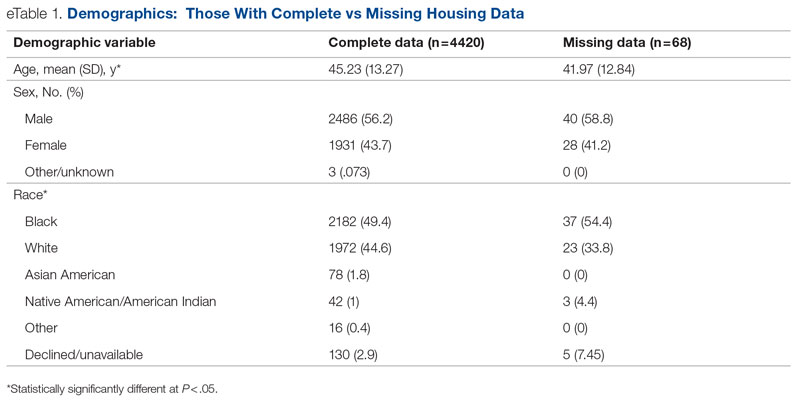

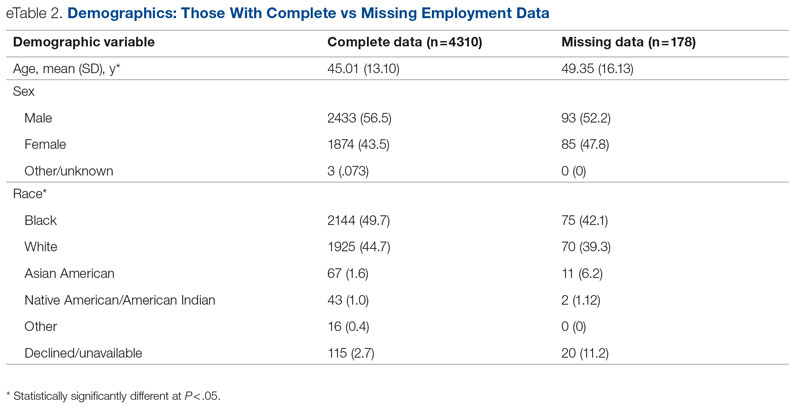

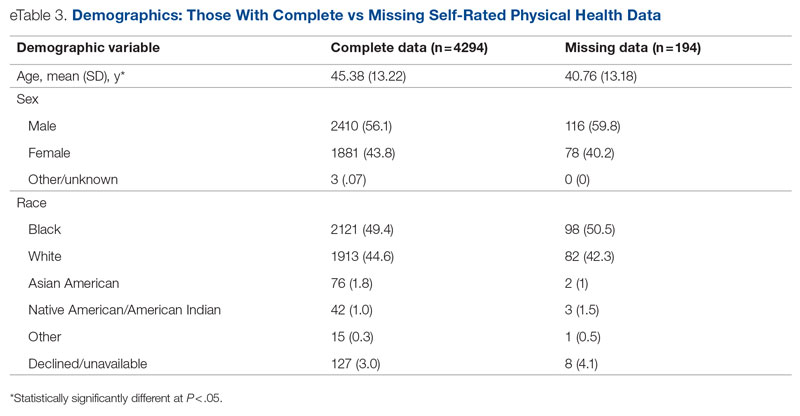

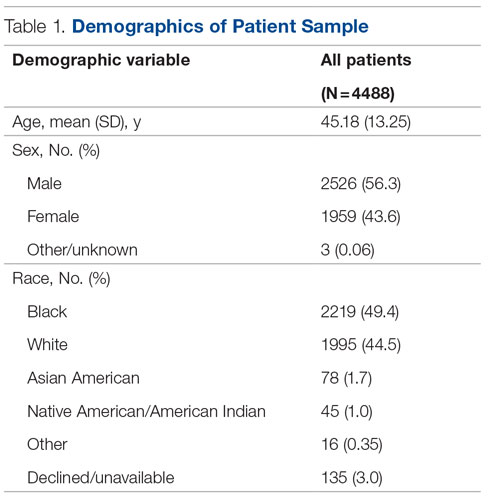

Data were available for 4488 patients who received an assessment between October 1, 2020, and September 30, 2021 (total numbers per item vary because some items had missing data; see supplementary eTables 1-3 for sample size per item). Demographics of the patient sample are listed in Table 1; the demographics of the patients who were missing data for specific outcomes are presented in eTables 1-3.

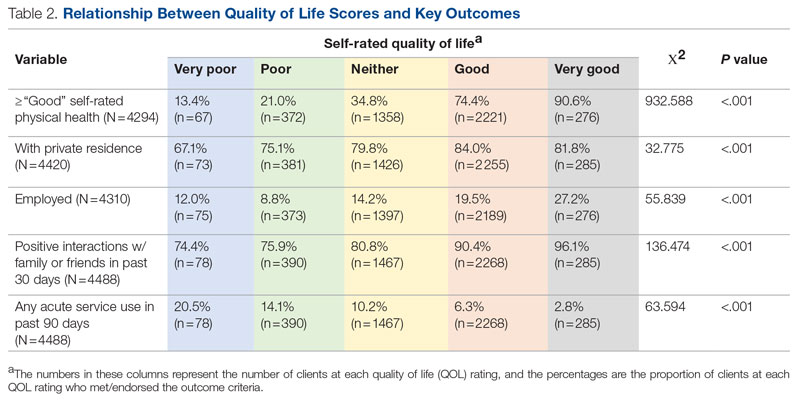

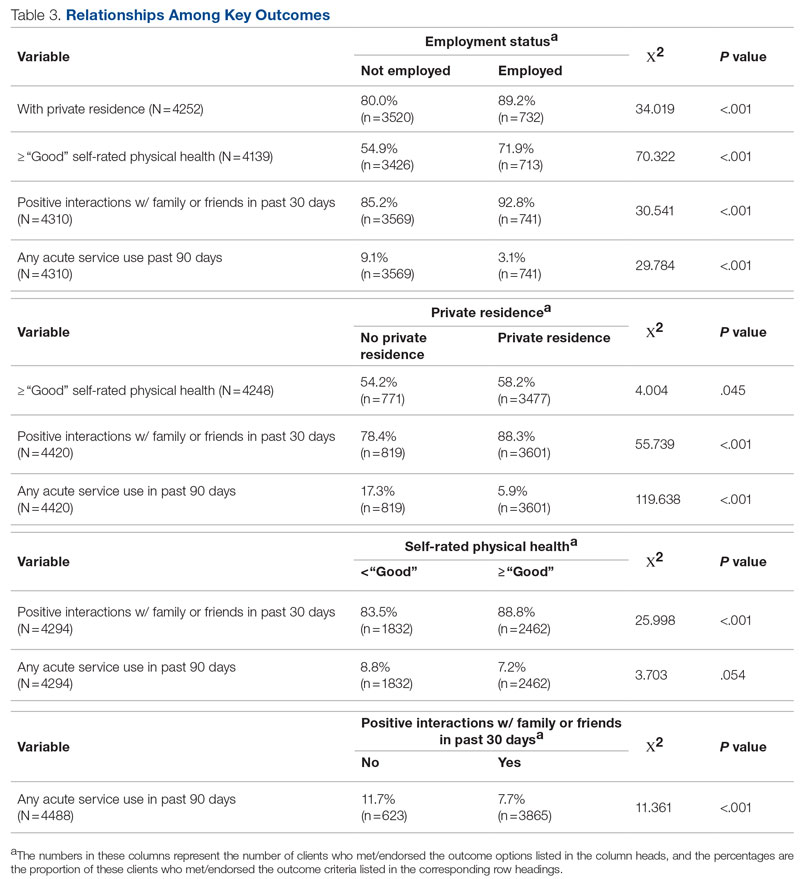

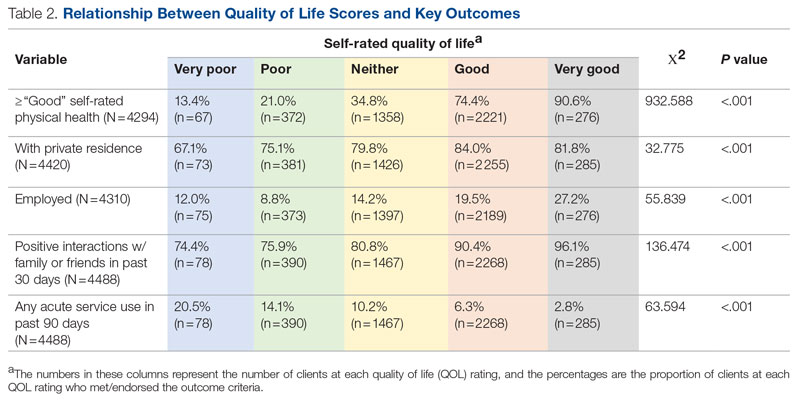

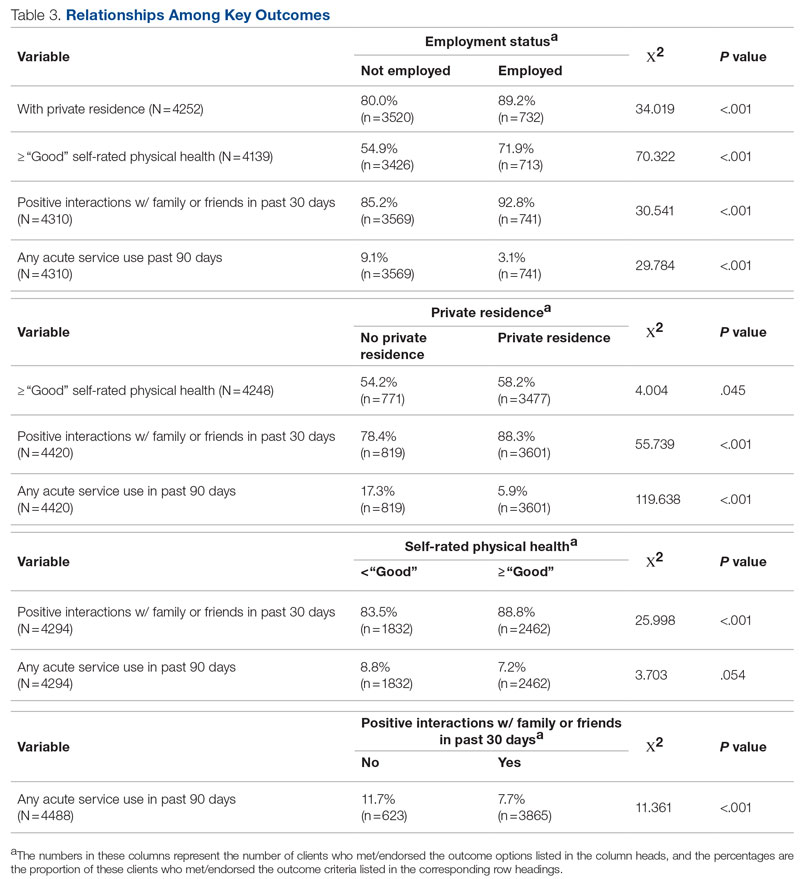

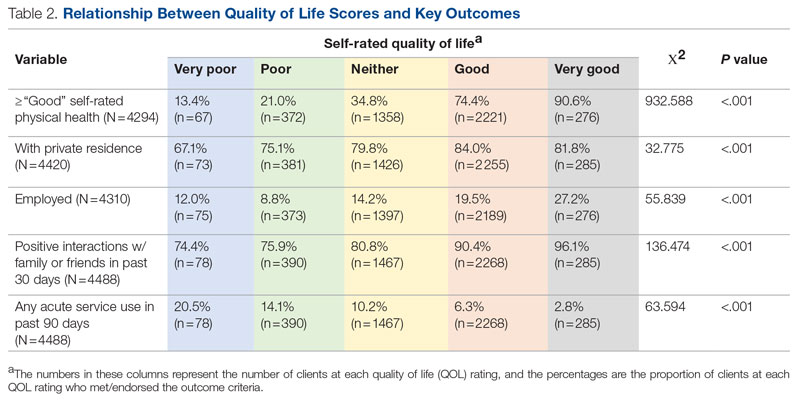

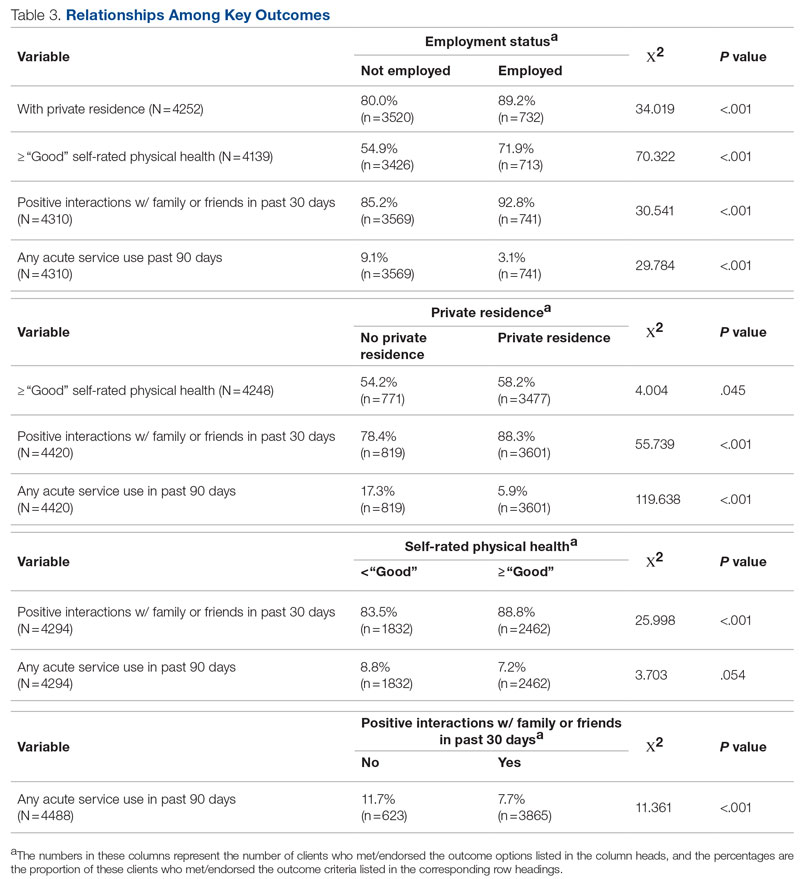

Statistical analyses revealed results in the expected direction for all relationships tested (Table 2). Higher self-reported QOL was associated with a greater likelihood of self-reported “Good” or better physical health; this likelihood was 576% higher among individuals who reported “Very Good” QOL than among those who reported “Very Poor” QOL. Similarly, when compared with individuals with “Very Poor” QOL, individuals who reported “Very Good” QOL were 21.91% more likely to report having a private residence, 126.7% more likely to report being employed, and 29.17% more likely to report having had positive social interactions with family and friends in the past 30 days. There was an inverse relationship between QOL and the likelihood that a patient had received at least 1 admission for an acute service in the previous 90 days, such that patients who reported “Very Good” QOL were 86.34% less likely to have had an admission than patients with “Very Poor” QOL (2.8% vs 20.5%, respectively). The relationships among the criterion variables used in this study are presented in Table 3.
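The percentages in this paragraph appear to be relative differences between two proportions rather than percentage-point differences; the 86.34% figure is consistent with that reading of the reported 2.8% and 20.5% rates. A minimal check, with a hypothetical helper name:

```python
def relative_difference_pct(rate, reference_rate):
    """Percent difference of `rate` relative to `reference_rate`."""
    return (rate - reference_rate) / reference_rate * 100


# Acute service use rates reported in the text:
# 2.8% for "Very Good" QOL vs 20.5% for "Very Poor" QOL.
change = relative_difference_pct(2.8, 20.5)
print(round(change, 2))  # -86.34
```

The corresponding absolute difference is 17.7 percentage points, so readers comparing the two framings should keep in mind which one is being quoted.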

Discussion

The results of this preliminary analysis suggest that self-rated QOL is related to key health, social determinants of health, and acute service utilization metrics. These data are important for several reasons. First, because QOL is a diagnostically agnostic measure, it can be used as a cross-cutting measure with clinically diverse populations receiving an array of different services. Second, at 1 item, the QOL measure is extremely brief and therefore minimally onerous to implement for both patients and administratively overburdened providers. Third, its correlation with other key metrics suggests that it can function as a broad population health measure for health care organizations because individuals with higher QOL will also likely have better outcomes in other key areas. This suggests that it has the potential to broadly represent the overall status of a population of patients, thus functioning as a type of “whole system” measure, which the Institute for Healthcare Improvement describes as “a small set of measures that reflect a health system’s overall performance on core dimensions of quality guided by the Triple Aim.”7 These whole system measures can help focus an organization’s strategic initiatives and efforts on the issues that matter most to the patients and community it serves.

The relationship of QOL to acute service utilization deserves special mention. As an administrative measure, utilization is not susceptible to the same response bias as the other self-reported variables. Furthermore, acute services are costly to health systems, and hospital readmissions are associated with payment reductions in the Centers for Medicare and Medicaid Services (CMS) Hospital Readmissions Reduction Program for hospitals that fail to meet certain performance targets.27 Thus, because of its alignment with federal mandates, improved QOL (and potentially concomitant decreases in acute service use) may have significant financial implications for health systems as well.

This study was limited by several factors. First, it was focused on a population receiving publicly funded behavioral health services with strict eligibility requirements, one of which stipulated that individuals must be at 200% or less of the Federal Poverty Level; therefore, the results might not be applicable to health systems with a more clinically or socioeconomically diverse patient population. Second, because these data are cross-sectional, it was not possible to determine whether QOL improved over time or whether changes in QOL covaried longitudinally with the other metrics under observation. For example, if patients’ QOL improved from the first to last assessment, did their employment or residential status improve as well, or were these patients more likely to be employed at their first assessment? Furthermore, if there was covariance, did changes in employment, housing status, and so on precede changes in QOL or vice versa? Multiple longitudinal observations would help to address these questions and will be the focus of future analyses.

Conclusion

This preliminary study suggests that a single-item QOL measure may be a valuable population health–level metric for health systems. It requires little administrative effort on the part of either the clinician or patient. It is also agnostic with regard to clinical issue or treatment approach and can therefore accommodate a range of diagnoses or patient-specific, idiosyncratic recovery goals. It is correlated with other key health, social determinants of health, and acute service utilization indicators and can therefore serve as a “whole system” measure because of its ability to broadly represent improvements in an entire population. Furthermore, QOL is patient-centered in that data are obtained through patient self-report, which is a high priority for CMS and other health care organizations.28 In summary, a single-item QOL measure holds promise for health care organizations looking to implement the Quadruple Aim and assess the health of the populations they serve in a manner that is simple, efficient, and patient-centered.

Acknowledgments: The author thanks Jennifer Wittwer for her thoughtful comments on the initial draft of this manuscript and Gary Kraft for his help extracting the data used in the analyses.

Corresponding author: Walter Matthew Drymalski, PhD; walter.drymalski@milwaukeecountywi.gov

Disclosures: None reported.

1. Berwick DM, Nolan TW, Whittington J. The triple aim: care, health, and cost. Health Aff (Millwood). 2008;27(3):759-769. doi:10.1377/hlthaff.27.3.759

2. Bodenheimer T, Sinsky C. From triple to quadruple aim: care of the patient requires care of the provider. Ann Fam Med. 2014;12(6):573-576. doi:10.1370/afm.1713

3. Hendrikx RJP, Drewes HW, Spreeuwenberg M, et al. Which triple aim related measures are being used to evaluate population management initiatives? An international comparative analysis. Health Policy. 2016;120(5):471-485. doi:10.1016/j.healthpol.2016.03.008

4. Whittington JW, Nolan K, Lewis N, Torres T. Pursuing the triple aim: the first 7 years. Milbank Q. 2015;93(2):263-300. doi:10.1111/1468-0009.12122

5. Ryan BL, Brown JB, Glazier RH, Hutchison B. Examining primary healthcare performance through a triple aim lens. Healthc Policy. 2016;11(3):19-31.

6. Stiefel M, Nolan K. A guide to measuring the Triple Aim: population health, experience of care, and per capita cost. Institute for Healthcare Improvement; 2012. Accessed November 1, 2022. https://nhchc.org/wp-content/uploads/2019/08/ihiguidetomeasuringtripleaimwhitepaper2012.pdf

7. Martin L, Nelson E, Rakover J, Chase A. Whole system measures 2.0: a compass for health system leaders. Institute for Healthcare Improvement; 2016. Accessed November 1, 2022. http://www.ihi.org:80/resources/Pages/IHIWhitePapers/Whole-System-Measures-Compass-for-Health-System-Leaders.aspx

8. Casalino LP, Gans D, Weber R, et al. US physician practices spend more than $15.4 billion annually to report quality measures. Health Aff (Millwood). 2016;35(3):401-406. doi:10.1377/hlthaff.2015.1258

9. Rao SK, Kimball AB, Lehrhoff SR, et al. The impact of administrative burden on academic physicians: results of a hospital-wide physician survey. Acad Med. 2017;92(2):237-243. doi:10.1097/ACM.0000000000001461

10. Woolhandler S, Himmelstein DU. Administrative work consumes one-sixth of U.S. physicians’ working hours and lowers their career satisfaction. Int J Health Serv. 2014;44(4):635-642. doi:10.2190/HS.44.4.a

11. Meyer GS, Nelson EC, Pryor DB, et al. More quality measures versus measuring what matters: a call for balance and parsimony. BMJ Qual Saf. 2012;21(11):964-968. doi:10.1136/bmjqs-2012-001081

12. Vital Signs: Core Metrics for Health and Health Care Progress. Washington, DC: National Academies Press; 2015. doi:10.17226/19402

13. Centers for Disease Control and Prevention. BRFSS questionnaires. Accessed November 1, 2022. https://www.cdc.gov/brfss/questionnaires/index.htm

14. County Health Rankings and Roadmaps. Measures & data sources. University of Wisconsin Population Health Institute. Accessed November 1, 2022. https://www.countyhealthrankings.org/explore-health-rankings/measures-data-sources

15. Centers for Disease Control and Prevention. Healthy days core module (CDC HRQOL-4). Accessed November 1, 2022. https://www.cdc.gov/hrqol/hrqol14_measure.htm

16. Cordier T, Song Y, Cambon J, et al. A bold goal: more healthy days through improved community health. Popul Health Manag. 2018;21(3):202-208. doi:10.1089/pop.2017.0142

17. Slabaugh SL, Shah M, Zack M, et al. Leveraging health-related quality of life in population health management: the case for healthy days. Popul Health Manag. 2017;20(1):13-22. doi:10.1089/pop.2015.0162

18. Karimi M, Brazier J. Health, health-related quality of life, and quality of life: what is the difference? Pharmacoeconomics. 2016;34(7):645-649. doi:10.1007/s40273-016-0389-9

19. Smith KW, Avis NE, Assmann SF. Distinguishing between quality of life and health status in quality of life research: a meta-analysis. Qual Life Res. 1999;8(5):447-459. doi:10.1023/a:1008928518577

20. Atroszko PA, Baginska P, Mokosinska M, et al. Validity and reliability of single-item self-report measures of general quality of life, general health and sleep quality. In: CER Comparative European Research 2015. Sciemcee Publishing; 2015:207-211.

21. Singh JA, Satele D, Pattabasavaiah S, et al. Normative data and clinically significant effect sizes for single-item numerical linear analogue self-assessment (LASA) scales. Health Qual Life Outcomes. 2014;12:187. doi:10.1186/s12955-014-0187-z

22. Siebens HC, Tsukerman D, Adkins RH, et al. Correlates of a single-item quality-of-life measure in people aging with disabilities. Am J Phys Med Rehabil. 2015;94(12):1065-1074. doi:10.1097/PHM.0000000000000298

23. Yohannes AM, Dodd M, Morris J, Webb K. Reliability and validity of a single item measure of quality of life scale for adult patients with cystic fibrosis. Health Qual Life Outcomes. 2011;9:105. doi:10.1186/1477-7525-9-105

24. Conway L, Widjaja E, Smith ML. Single-item measure for assessing quality of life in children with drug-resistant epilepsy. Epilepsia Open. 2017;3(1):46-54. doi:10.1002/epi4.12088

25. Barry MM, Zissi A. Quality of life as an outcome measure in evaluating mental health services: a review of the empirical evidence. Soc Psychiatry Psychiatr Epidemiol. 1997;32(1):38-47. doi:10.1007/BF00800666

26. Skevington SM, Lotfy M, O’Connell KA. The World Health Organization’s WHOQOL-BREF quality of life assessment: psychometric properties and results of the international field trial. Qual Life Res. 2004;13(2):299-310. doi:10.1023/B:QURE.0000018486.91360.00

27. Centers for Medicare & Medicaid Services. Hospital readmissions reduction program (HRRP). Accessed November 1, 2022. https://www.cms.gov/Medicare/Medicare-Fee-for-Service-Payment/AcuteInpatientPPS/Readmissions-Reduction-Program

28. Centers for Medicare & Medicaid Services. Patient-reported outcome measures. CMS Measures Management System. Published May 2022. Accessed November 1, 2022. https://www.cms.gov/files/document/blueprint-patient-reported-outcome-measures.pdf

From Milwaukee County Behavioral Health Services, Milwaukee, WI.

Abstract

Objectives: The goal of this study was to determine whether a single-item quality of life (QOL) measure could serve as a useful population health–level metric within the Quadruple Aim framework in a publicly funded behavioral health system.

Design: This was a retrospective, cross-sectional study that examined the correlation between the single-item QOL measure and several other key measures of the social determinants of health and a composite measure of acute service utilization for all patients receiving mental health and substance use services in a community behavioral health system.

Methods: Data were collected for 4488 patients who had at least 1 assessment between October 1, 2020, and September 30, 2021. Data on social determinants of health were obtained through patient self-report; acute service use data were obtained from electronic health records.

Results: Statistical analyses revealed results in the expected direction for all relationships tested. Patients with higher QOL were more likely to report “Good” or better self-rated physical health, be employed, have a private residence, and report recent positive social interactions, and were less likely to have received acute services in the previous 90 days.

Conclusion: A single-item QOL measure shows promise as a general, minimally burdensome whole-system metric that can function as a target for population health management efforts in a large behavioral health system. Future research should explore whether this QOL measure is sensitive to change over time and examine its temporal relationship with other key outcome metrics.

Keywords: Quadruple Aim, single-item measures, social determinants of health, acute service utilization metrics.

The Triple Aim for health care—improving the individual experience of care, increasing the health of populations, and reducing the costs of care—was first proposed in 2008.1 More recently, some have advocated for an expanded focus to include a fourth aim: the quality of staff work life.2 Since this seminal paper was published, many health care systems have endeavored to adopt and implement the Quadruple Aim3,4; however, the concepts representing each of the aims are not universally defined,3 nor are the measures needed to populate the Quadruple Aim always available within the health system in question.5

Although several assessment models and frameworks that provide guidance to stakeholders have been developed,6,7 it is ultimately up to organizations themselves to determine which measures they should deploy to best represent the different quadrants of the Quadruple Aim.6 Evidence suggests, however, that quality measurement, and the administrative time required to conduct it, can be both financially and emotionally burdensome to providers and health systems.8-10 Thus, it is incumbent on organizations to select a set of measures that are not only meaningful but as parsimonious as possible.6,11,12

Quality of life (QOL) is a potential candidate to assess the aim of population health. Brief health-related QOL questions have long been used in epidemiological surveys, such as the Behavioral Risk Factor Surveillance System survey.13 Such questions are also a key component of community health frameworks, such as the County Health Rankings developed by the University of Wisconsin Population Health Institute.14 Furthermore, Humana recently revealed that increasing the number of physical and mental health “Healthy Days” (which are among the Centers for Disease Control and Prevention’s Health-Related Quality of Life questions15) among the members enrolled in their insurance plan would become a major goal for the organization.16,17 Many of these measures, while brief, focus on QOL as a function of health, often as a self-rated construct (from “Poor” to “Excellent”) or in the form of days of poor physical or mental health in the past 30 days,15 rather than evaluating QOL itself; however, several authors have pointed out that health status and QOL are related but distinct concepts.18,19

Brief single-item assessments focused specifically on QOL have been developed and implemented within nonclinical20 and clinical populations, including individuals with cancer,21 adults with disabilities,22 individuals with cystic fibrosis,23 and children with epilepsy.24 Despite the long history of QOL assessment in behavioral health treatment,25 single-item measures have not been widely implemented in this population.

Milwaukee County Behavioral Health Services (BHS), a publicly funded, county-based behavioral health care system in Milwaukee, Wisconsin, provides inpatient and ambulatory treatment, psychiatric emergency care, withdrawal management, care management, crisis services, and other support services to individuals in Milwaukee County. In 2018 the community services arm of BHS began implementing a single QOL question from the World Health Organization’s WHOQOL-BREF26: On a 5-point rating scale of “Very Poor” to “Very Good,” “How would you rate your overall quality of life right now?” Previous research by Atroszko and colleagues,20 which used a similar approach with the same item from the WHOQOL-BREF, reported correlations in the expected direction of the single-item QOL measure with perceived stress, depression, anxiety, loneliness, and daily hours of sleep. This study’s sample, however, comprised opportunistically recruited college students, not a clinical population. Further, the researchers did not examine the relationship of QOL with acute service utilization or other measures of the social determinants of health, such as housing, employment, or social connectedness.

The following study was designed to extend these results by focusing on a clinical population—individuals with mental health or substance use issues—being served in a large, publicly funded behavioral health system in Milwaukee, Wisconsin. The objective of this study was to determine whether a single-item QOL measure could be used as a brief, parsimonious measure of overall population health by examining its relationship with other key outcome measures for patients receiving services from BHS. This study was reviewed and approved by BHS’s Institutional Review Board.

Methods

All patients engaged in nonacute community services are offered a standardized assessment that includes, among other measures, items related to QOL, housing status, employment status, self-rated physical health, and social connectedness. This assessment is administered at intake, discharge, and every 6 months while patients are enrolled in services. Patients who received at least 1 assessment between October 1, 2020, and September 30, 2021, were included in the analyses. Patients receiving crisis, inpatient, or withdrawal management services alone (ie, did not receive any other community-based services) were not offered the standard assessment and thus were not included in the analyses. If patients had more than 1 assessment during this time period, QOL data from the last assessment were used. Data on housing (private residence status, defined as adults living alone or with others without supervision in a house or apartment), employment status, self-rated physical health, and social connectedness (measured by asking people whether they have had positive interactions with family or friends in the past 30 days) were extracted from the same timepoint as well.

Also included in the analyses were rates of acute service utilization, in which any patient with at least 1 visit to BHS’s psychiatric emergency department, withdrawal management facility, or psychiatric inpatient facility in the 90 days prior to the date of the assessment received a code of “Yes,” and any patient who did not receive any of these services received a code of “No.” Chi-square analyses were conducted to determine the relationship between QOL rankings (“Very Poor,” “Poor,” “Neither Good nor Poor,” “Good,” and “Very Good”) and housing, employment, self-rated physical health, social connectedness, and 90-day acute service use. All acute service utilization data were obtained from BHS’s electronic health records system. All data used in the study were stored on a secure, password-protected server. All analyses were conducted with SPSS software (SPSS 28; IBM).

Results

Data were available for 4488 patients who received an assessment between October 1, 2020, and September 30, 2021 (total numbers per item vary because some items had missing data; see supplementary eTables 1-3 for sample size per item). Demographics of the patient sample are listed in Table 1; the demographics of the patients who were missing data for specific outcomes are presented in eTables 1-3.

Statistical analyses revealed results in the expected direction for all relationships tested (Table 2). As patients’ self-reported QOL improved, so did the likelihood of higher rates of self-reported “Good” or better physical health, which was 576% higher among individuals who reported “Very Good” QOL relative to those who reported “Very Poor” QOL. Similarly, when compared with individuals with “Very Poor” QOL, individuals who reported “Very Good” QOL were 21.91% more likely to report having a private residence, 126.7% more likely to report being employed, and 29.17% more likely to report having had positive social interactions with family and friends in the past 30 days. There was an inverse relationship between QOL and the likelihood that a patient had received at least 1 admission for an acute service in the previous 90 days, such that patients who reported “Very Good” QOL were 86.34% less likely to have had an admission compared to patients with “Very Poor” QOL (2.8% vs 20.5%, respectively). The relationships among the criterion variables used in this study are presented in Table 3.

Discussion

The results of this preliminary analysis suggest that self-rated QOL is related to key health, social determinants of health, and acute service utilization metrics. These data are important for several reasons. First, because QOL is a diagnostically agnostic measure, it is a cross-cutting measure to use with clinically diverse populations receiving an array of different services. Second, at 1 item, the QOL measure is extremely brief and therefore minimally onerous to implement for both patients and administratively overburdened providers. Third, its correlation with other key metrics suggests that it can function as a broad population health measure for health care organizations because individuals with higher QOL will also likely have better outcomes in other key areas. This suggests that it has the potential to broadly represent the overall status of a population of patients, thus functioning as a type of “whole system” measure, which the Institute for Healthcare Improvement describes as “a small set of measures that reflect a health system’s overall performance on core dimensions of quality guided by the Triple Aim.”7 These whole system measures can help focus an organization’s strategic initiatives and efforts on the issues that matter most to the patients and community it serves.

The relationship of QOL to acute service utilization deserves special mention. As an administrative measure, utilization is not susceptible to the same response bias as the other self-reported variables. Furthermore, acute services are costly to health systems, and hospital readmissions are associated with payment reductions in the Centers for Medicare and Medicaid Services (CMS) Hospital Readmissions Reduction Program for hospitals that fail to meet certain performance targets.27 Thus, because of its alignment with federal mandates, improved QOL (and potentially concomitant decreases in acute service use) may have significant financial implications for health systems as well.

This study was limited by several factors. First, it was focused on a population receiving publicly funded behavioral health services with strict eligibility requirements, one of which stipulated that individuals must be at 200% or less of the Federal Poverty Level; therefore, the results might not be applicable to health systems with a more clinically or socioeconomically diverse patient population. Second, because these data are cross-sectional, it was not possible to determine whether QOL improved over time or whether changes in QOL covaried longitudinally with the other metrics under observation. For example, if patients’ QOL improved from the first to last assessment, did their employment or residential status improve as well, or were these patients more likely to be employed at their first assessment? Furthermore, if there was covariance, did changes in employment, housing status, and so on precede changes in QOL or vice versa? Multiple longitudinal observations would help to address these questions and will be the focus of future analyses.

Conclusion

This preliminary study suggests that a single-item QOL measure may be a valuable population health–level metric for health systems. It requires little administrative effort on the part of either the clinician or patient. It is also agnostic with regard to clinical issue or treatment approach and can therefore accommodate a range of diagnoses and patient-specific, idiosyncratic recovery goals. It is correlated with other key health, social determinants of health, and acute service utilization indicators and can therefore serve as a “whole system” measure because of its ability to broadly represent improvements in an entire population. Furthermore, QOL is patient-centered in that data are obtained through patient self-report, which is a high priority for CMS and other health care organizations.28 In summary, a single-item QOL measure holds promise for health care organizations looking to implement the Quadruple Aim and assess the health of the populations they serve in a manner that is simple, efficient, and patient-centered.

Acknowledgments: The author thanks Jennifer Wittwer for her thoughtful comments on the initial draft of this manuscript and Gary Kraft for his help extracting the data used in the analyses.

Corresponding author: Walter Matthew Drymalski, PhD; walter.drymalski@milwaukeecountywi.gov

Disclosures: None reported.

1. Berwick DM, Nolan TW, Whittington J. The triple aim: care, health, and cost. Health Aff (Millwood). 2008;27(3):759-769. doi:10.1377/hlthaff.27.3.759

2. Bodenheimer T, Sinsky C. From triple to quadruple aim: care of the patient requires care of the provider. Ann Fam Med. 2014;12(6):573-576. doi:10.1370/afm.1713

3. Hendrikx RJP, Drewes HW, Spreeuwenberg M, et al. Which triple aim related measures are being used to evaluate population management initiatives? An international comparative analysis. Health Policy. 2016;120(5):471-485. doi:10.1016/j.healthpol.2016.03.008

4. Whittington JW, Nolan K, Lewis N, Torres T. Pursuing the triple aim: the first 7 years. Milbank Q. 2015;93(2):263-300. doi:10.1111/1468-0009.12122

5. Ryan BL, Brown JB, Glazier RH, Hutchison B. Examining primary healthcare performance through a triple aim lens. Healthc Policy. 2016;11(3):19-31.

6. Stiefel M, Nolan K. A guide to measuring the Triple Aim: population health, experience of care, and per capita cost. Institute for Healthcare Improvement; 2012. Accessed November 1, 2022. https://nhchc.org/wp-content/uploads/2019/08/ihiguidetomeasuringtripleaimwhitepaper2012.pdf

7. Martin L, Nelson E, Rakover J, Chase A. Whole system measures 2.0: a compass for health system leaders. Institute for Healthcare Improvement; 2016. Accessed November 1, 2022. http://www.ihi.org:80/resources/Pages/IHIWhitePapers/Whole-System-Measures-Compass-for-Health-System-Leaders.aspx

8. Casalino LP, Gans D, Weber R, et al. US physician practices spend more than $15.4 billion annually to report quality measures. Health Aff (Millwood). 2016;35(3):401-406. doi:10.1377/hlthaff.2015.1258

9. Rao SK, Kimball AB, Lehrhoff SR, et al. The impact of administrative burden on academic physicians: results of a hospital-wide physician survey. Acad Med. 2017;92(2):237-243. doi:10.1097/ACM.0000000000001461

10. Woolhandler S, Himmelstein DU. Administrative work consumes one-sixth of U.S. physicians’ working hours and lowers their career satisfaction. Int J Health Serv. 2014;44(4):635-642. doi:10.2190/HS.44.4.a

11. Meyer GS, Nelson EC, Pryor DB, et al. More quality measures versus measuring what matters: a call for balance and parsimony. BMJ Qual Saf. 2012;21(11):964-968. doi:10.1136/bmjqs-2012-001081

12. Vital Signs: Core Metrics for Health and Health Care Progress. Washington, DC: National Academies Press; 2015. doi:10.17226/19402

13. Centers for Disease Control and Prevention. BRFSS questionnaires. Accessed November 1, 2022. https://www.cdc.gov/brfss/questionnaires/index.htm

14. County Health Rankings and Roadmaps. Measures & data sources. University of Wisconsin Population Health Institute. Accessed November 1, 2022. https://www.countyhealthrankings.org/explore-health-rankings/measures-data-sources

15. Centers for Disease Control and Prevention. Healthy days core module (CDC HRQOL-4). Accessed November 1, 2022. https://www.cdc.gov/hrqol/hrqol14_measure.htm

16. Cordier T, Song Y, Cambon J, et al. A bold goal: more healthy days through improved community health. Popul Health Manag. 2018;21(3):202-208. doi:10.1089/pop.2017.0142

17. Slabaugh SL, Shah M, Zack M, et al. Leveraging health-related quality of life in population health management: the case for healthy days. Popul Health Manag. 2017;20(1):13-22. doi:10.1089/pop.2015.0162

18. Karimi M, Brazier J. Health, health-related quality of life, and quality of life: what is the difference? Pharmacoeconomics. 2016;34(7):645-649. doi:10.1007/s40273-016-0389-9

19. Smith KW, Avis NE, Assmann SF. Distinguishing between quality of life and health status in quality of life research: a meta-analysis. Qual Life Res. 1999;8(5):447-459. doi:10.1023/a:1008928518577

20. Atroszko PA, Baginska P, Mokosinska M, et al. Validity and reliability of single-item self-report measures of general quality of life, general health and sleep quality. In: CER Comparative European Research 2015. Sciemcee Publishing; 2015:207-211.

21. Singh JA, Satele D, Pattabasavaiah S, et al. Normative data and clinically significant effect sizes for single-item numerical linear analogue self-assessment (LASA) scales. Health Qual Life Outcomes. 2014;12:187. doi:10.1186/s12955-014-0187-z

22. Siebens HC, Tsukerman D, Adkins RH, et al. Correlates of a single-item quality-of-life measure in people aging with disabilities. Am J Phys Med Rehabil. 2015;94(12):1065-1074. doi:10.1097/PHM.0000000000000298

23. Yohannes AM, Dodd M, Morris J, Webb K. Reliability and validity of a single item measure of quality of life scale for adult patients with cystic fibrosis. Health Qual Life Outcomes. 2011;9:105. doi:10.1186/1477-7525-9-105

24. Conway L, Widjaja E, Smith ML. Single-item measure for assessing quality of life in children with drug-resistant epilepsy. Epilepsia Open. 2017;3(1):46-54. doi:10.1002/epi4.12088

25. Barry MM, Zissi A. Quality of life as an outcome measure in evaluating mental health services: a review of the empirical evidence. Soc Psychiatry Psychiatr Epidemiol. 1997;32(1):38-47. doi:10.1007/BF00800666

26. Skevington SM, Lotfy M, O’Connell KA. The World Health Organization’s WHOQOL-BREF quality of life assessment: psychometric properties and results of the international field trial. Qual Life Res. 2004;13(2):299-310. doi:10.1023/B:QURE.0000018486.91360.00

27. Centers for Medicare & Medicaid Services. Hospital readmissions reduction program (HRRP). Accessed November 1, 2022. https://www.cms.gov/Medicare/Medicare-Fee-for-Service-Payment/AcuteInpatientPPS/Readmissions-Reduction-Program

28. Centers for Medicare & Medicaid Services. Patient-reported outcome measures. CMS Measures Management System. Published May 2022. Accessed November 1, 2022. https://www.cms.gov/files/document/blueprint-patient-reported-outcome-measures.pdf

1. Berwick DM, Nolan TW, Whittington J. The triple aim: care, health, and cost. Health Aff (Millwood). 2008;27(3):759-769. doi:10.1377/hlthaff.27.3.759

2. Bodenheimer T, Sinsky C. From triple to quadruple aim: care of the patient requires care of the provider. Ann Fam Med. 2014;12(6):573-576. doi:10.1370/afm.1713

3. Hendrikx RJP, Drewes HW, Spreeuwenberg M, et al. Which triple aim related measures are being used to evaluate population management initiatives? An international comparative analysis. Health Policy. 2016;120(5):471-485. doi:10.1016/j.healthpol.2016.03.008

4. Whittington JW, Nolan K, Lewis N, Torres T. Pursuing the triple aim: the first 7 years. Milbank Q. 2015;93(2):263-300. doi:10.1111/1468-0009.12122

5. Ryan BL, Brown JB, Glazier RH, Hutchison B. Examining primary healthcare performance through a triple aim lens. Healthc Policy. 2016;11(3):19-31.

6. Stiefel M, Nolan K. A guide to measuring the Triple Aim: population health, experience of care, and per capita cost. Institute for Healthcare Improvement; 2012. Accessed November 1, 2022. https://nhchc.org/wp-content/uploads/2019/08/ihiguidetomeasuringtripleaimwhitepaper2012.pdf

7. Martin L, Nelson E, Rakover J, Chase A. Whole system measures 2.0: a compass for health system leaders. Institute for Healthcare Improvement; 2016. Accessed November 1, 2022. http://www.ihi.org:80/resources/Pages/IHIWhitePapers/Whole-System-Measures-Compass-for-Health-System-Leaders.aspx

8. Casalino LP, Gans D, Weber R, et al. US physician practices spend more than $15.4 billion annually to report quality measures. Health Aff (Millwood). 2016;35(3):401-406. doi:10.1377/hlthaff.2015.1258

9. Rao SK, Kimball AB, Lehrhoff SR, et al. The impact of administrative burden on academic physicians: results of a hospital-wide physician survey. Acad Med. 2017;92(2):237-243. doi:10.1097/ACM.0000000000001461

10. Woolhandler S, Himmelstein DU. Administrative work consumes one-sixth of U.S. physicians’ working hours and lowers their career satisfaction. Int J Health Serv. 2014;44(4):635-642. doi:10.2190/HS.44.4.a

11. Meyer GS, Nelson EC, Pryor DB, et al. More quality measures versus measuring what matters: a call for balance and parsimony. BMJ Qual Saf. 2012;21(11):964-968. doi:10.1136/bmjqs-2012-001081

12. Vital Signs: Core Metrics for Health and Health Care Progress. Washington, DC: National Academies Press; 2015. doi:10.17226/19402

13. Centers for Disease Control and Prevention. BRFSS questionnaires. Accessed November 1, 2022. https://www.cdc.gov/brfss/questionnaires/index.htm

14. County Health Rankings and Roadmaps. Measures & data sources. University of Wisconsin Population Health Institute. Accessed November 1, 2022. https://www.countyhealthrankings.org/explore-health-rankings/measures-data-sources

15. Centers for Disease Control and Prevention. Healthy days core module (CDC HRQOL-4). Accessed November 1, 2022. https://www.cdc.gov/hrqol/hrqol14_measure.htm

16. Cordier T, Song Y, Cambon J, et al. A bold goal: more healthy days through improved community health. Popul Health Manag. 2018;21(3):202-208. doi:10.1089/pop.2017.0142

17. Slabaugh SL, Shah M, Zack M, et al. Leveraging health-related quality of life in population health management: the case for healthy days. Popul Health Manag. 2017;20(1):13-22. doi:10.1089/pop.2015.0162

18. Karimi M, Brazier J. Health, health-related quality of life, and quality of life: what is the difference? Pharmacoeconomics. 2016;34(7):645-649. doi:10.1007/s40273-016-0389-9

19. Smith KW, Avis NE, Assmann SF. Distinguishing between quality of life and health status in quality of life research: a meta-analysis. Qual Life Res. 1999;8(5):447-459. doi:10.1023/a:1008928518577

20. Atroszko PA, Baginska P, Mokosinska M, et al. Validity and reliability of single-item self-report measures of general quality of life, general health and sleep quality. In: CER Comparative European Research 2015. Sciemcee Publishing; 2015:207-211.

21. Singh JA, Satele D, Pattabasavaiah S, et al. Normative data and clinically significant effect sizes for single-item numerical linear analogue self-assessment (LASA) scales. Health Qual Life Outcomes. 2014;12:187. doi:10.1186/s12955-014-0187-z

22. Siebens HC, Tsukerman D, Adkins RH, et al. Correlates of a single-item quality-of-life measure in people aging with disabilities. Am J Phys Med Rehabil. 2015;94(12):1065-1074. doi:10.1097/PHM.0000000000000298

23. Yohannes AM, Dodd M, Morris J, Webb K. Reliability and validity of a single item measure of quality of life scale for adult patients with cystic fibrosis. Health Qual Life Outcomes. 2011;9:105. doi:10.1186/1477-7525-9-105

24. Conway L, Widjaja E, Smith ML. Single-item measure for assessing quality of life in children with drug-resistant epilepsy. Epilepsia Open. 2017;3(1):46-54. doi:10.1002/epi4.12088

25. Barry MM, Zissi A. Quality of life as an outcome measure in evaluating mental health services: a review of the empirical evidence. Soc Psychiatry Psychiatr Epidemiol. 1997;32(1):38-47. doi:10.1007/BF00800666

26. Skevington SM, Lotfy M, O’Connell KA. The World Health Organization’s WHOQOL-BREF quality of life assessment: psychometric properties and results of the international field trial. Qual Life Res. 2004;13(2):299-310. doi:10.1023/B:QURE.0000018486.91360.00

27. Centers for Medicare & Medicaid Services. Hospital readmissions reduction program (HRRP). Accessed November 1, 2022. https://www.cms.gov/Medicare/Medicare-Fee-for-Service-Payment/AcuteInpatientPPS/Readmissions-Reduction-Program

28. Centers for Medicare & Medicaid Services. Patient-reported outcome measures. CMS Measures Management System. Published May 2022. Accessed November 1, 2022. https://www.cms.gov/files/document/blueprint-patient-reported-outcome-measures.pdf

Neurosurgery Operating Room Efficiency During the COVID-19 Era

From the Department of Neurological Surgery, Vanderbilt University Medical Center, Nashville, TN (Stefan W. Koester, Puja Jagasia, and Drs. Liles, Dambrino IV, Feldman, and Chambless), and the Department of Anesthesiology, Vanderbilt University Medical Center, Nashville, TN (Drs. Mathews and Tiwari).

ABSTRACT

Background: The COVID-19 pandemic has had broad effects on surgical care, including operating room (OR) staffing, personal protective equipment (PPE) utilization, and newly implemented anti-infective measures. Our aim was to assess neurosurgery OR efficiency before the COVID-19 pandemic, during peak COVID-19, and during current times.

Methods: Institutional perioperative databases at a single, high-volume neurosurgical center were queried for operations performed from December 2019 until October 2021. March 12, 2020, the day that the state of Tennessee declared a state of emergency, was chosen as the onset of the COVID-19 pandemic. The 90-day periods before and after this day were used to define the pre-COVID-19, peak-COVID-19, and post-peak restrictions time periods for comparative analysis. Outcomes included delay in first-start and OR turnover time between neurosurgical cases. Preset threshold times were used in analyses to adjust for normal leniency in OR scheduling (15 minutes for first start and 90 minutes for turnover). Univariate analysis used Wilcoxon rank-sum test for continuous outcomes, while chi-square test and Fisher’s exact test were used for categorical comparisons. Significance was defined as P < .05.

Results: First-start time was analyzed in 426 pre-COVID-19, 357 peak-restrictions, and 2304 post-peak-restrictions cases. The unadjusted mean delay length was found to be significantly different between the time periods, but the magnitude of increase in minutes was immaterial (mean [SD] minutes, 6 [18] vs 10 [21] vs 8 [20], respectively; P = .004). The adjusted average delay length and proportion of cases delayed beyond the 15-minute threshold were not significantly different. The proportion of cases that started early, as well as significantly early past a 15-minute threshold, have not been impacted. There was no significant change in turnover time during peak restrictions relative to the pre-COVID-19 period (88 [100] minutes vs 85 [95] minutes), and turnover time has since remained unchanged (83 [87] minutes).

Conclusion: Our center was able to maintain OR efficiency before, during, and after peak restrictions even while instituting advanced infection-control strategies. While there were significant changes, delays were relatively small in magnitude.

Keywords: operating room timing, hospital efficiency, socioeconomics, pandemic.

The COVID-19 pandemic has led to major changes in patient care both from a surgical perspective and in regard to inpatient hospital course. Safety protocols nationwide have been implemented to protect both patients and providers. Some elements of surgical care have drastically changed, including operating room (OR) staffing, personal protective equipment (PPE) utilization, and increased sterilization measures. Furloughs, layoffs, and reassignments due to the focus on nonelective and COVID-19–related cases challenged OR staffing and efficiency. Operating room staff with COVID-19 exposures or COVID-19 infections also caused last-minute changes in staffing. All of these scenarios can cause issues due to actual understaffing or due to staff members being pushed into highly specialized areas, such as neurosurgery, in which they have very little experience. A further obstacle to OR efficiency included policy changes involving PPE utilization, sterilization measures, and supply chain shortages of necessary resources such as PPE.

Neurosurgery in particular has been susceptible to COVID-19–related system-wide changes given operator proximity to the patient’s respiratory passages, frequency of emergent cases, and varying anesthetic needs, as well as the high level of specialization needed to perform neurosurgical care. Previous studies have shown a change in the makeup of neurosurgical patients seeking care, as well as in the acuity of neurological consult of these patients.1 A study in orthopedic surgery by Andreata et al demonstrated worsened OR efficiency, with significantly increased first-start and turnover times.2 In the COVID-19 era, OR quality and safety are crucially important to both patients and providers. Providing this safe and effective care in an efficient manner is important for optimal neurosurgical management in the long term.3 Moreover, the financial burden of implementing new protocols and standards can be compounded by additional financial losses due to reduced OR efficiency.

Methods

To analyze the effect of COVID-19 on neurosurgical OR efficiency, institutional perioperative databases at a single high-volume center were queried for operations performed from December 2019 until October 2021. March 12, 2020, was chosen as the onset of COVID-19 for analytic purposes, as this was the date when the state of Tennessee declared a state of emergency. The 90-day periods before and after this date were used as the pre-COVID-19 and peak COVID-19 periods, respectively, for comparative analysis. The peak COVID-19 period (also referred to as peak restrictions) thus covered the initial onset of COVID-19 and the surge of cases. For comparison purposes, the post-peak COVID-19 period (post-peak restrictions) was defined as the months following the first peak until October 2021 (approximately 17 months). COVID-19 burden was estimated from a single-institution census of COVID-19 cases confirmed by polymerase chain reaction (PCR), from which the average number of cases during each month was calculated. This number is a scaled trend; the true number of COVID-19 cases in our hospital was not reported.
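The period definitions above can be sketched in code. The following is a minimal illustration, not the authors' implementation: the function name, the handling of boundary dates (for example, assigning the onset date itself to the peak period), and the exact study end date are assumptions, since the text states only "October 2021."

```python
from datetime import date, timedelta

ONSET = date(2020, 3, 12)        # Tennessee state-of-emergency declaration (from the text)
WINDOW = timedelta(days=90)      # 90-day comparison window (from the text)
STUDY_END = date(2021, 10, 31)   # assumption: the text says only "October 2021"


def classify_period(op_date):
    """Return the study period for an operation date, or None if out of range.

    Boundary handling is an assumption: the onset date is counted as the
    first day of the peak period.
    """
    if ONSET - WINDOW <= op_date < ONSET:
        return "pre"
    if ONSET <= op_date < ONSET + WINDOW:
        return "peak"
    if ONSET + WINDOW <= op_date <= STUDY_END:
        return "post-peak"
    return None
```

Under these assumptions, the pre-COVID-19 window begins on December 13, 2019, and the post-peak period begins on June 10, 2020.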

Neurosurgical and neuroendovascular cases were included in the analysis. Outcomes included delay in first-start time and OR turnover time between neurosurgical cases; turnover time was defined as the time from one patient leaving the room until the next patient entered the room. Preset threshold times were used in analyses to adjust for normal leniency in OR scheduling (15 minutes for first start and 90 minutes for turnover, which is a standard for our single-institution perioperative center). Statistical analyses, including data aggregation, were performed using R, version 4.0.1 (R Foundation for Statistical Computing). Patients’ demographic and clinical characteristics were analyzed using an independent 2-sample t-test for interval variables and a chi-square test for categorical variables. Significance was defined as P < .05.
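As an illustration of how a leniency threshold might be applied, the sketch below summarizes a list of durations against a threshold. It is written in Python for convenience rather than in R, and it is not the authors' code. The function name is hypothetical, and defining the "adjusted" value as minutes beyond the threshold (floored at zero) is an assumption; the text does not specify the exact adjustment.

```python
FIRST_START_THRESHOLD_MIN = 15   # from the text
TURNOVER_THRESHOLD_MIN = 90      # from the text


def summarize_against_threshold(minutes, threshold):
    """Summarize durations (in minutes) against a leniency threshold.

    Assumption: the adjusted value for a case is the number of minutes
    beyond the threshold, with cases at or under the threshold counted as 0.
    """
    n = len(minutes)
    excess = [max(0, m - threshold) for m in minutes]
    return {
        "mean_raw": sum(minutes) / n,                            # unadjusted mean
        "proportion_over": sum(e > 0 for e in excess) / n,       # share past threshold
        "mean_excess": sum(excess) / n,                          # adjusted mean
    }


# Hypothetical first-start delays in minutes, for illustration only.
example_delays = [0, 5, 20, 45]
summary = summarize_against_threshold(example_delays, FIRST_START_THRESHOLD_MIN)
```

The same function applies to turnover times by passing `TURNOVER_THRESHOLD_MIN` as the threshold.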

Results

First-Start Time

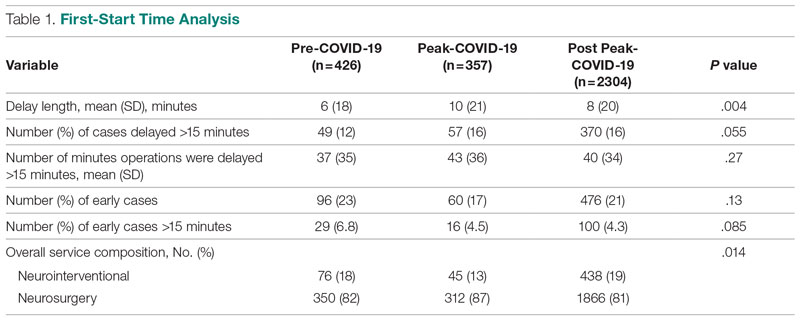

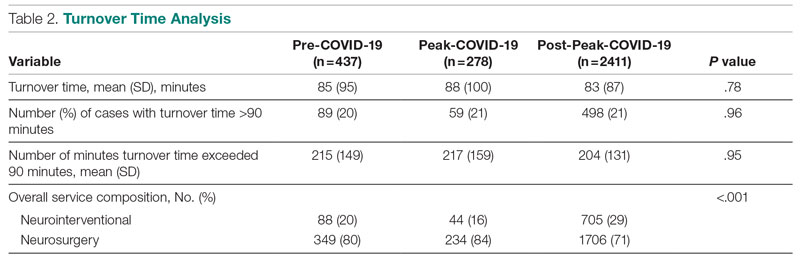

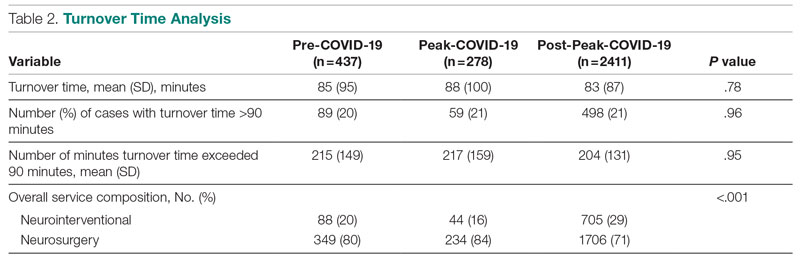

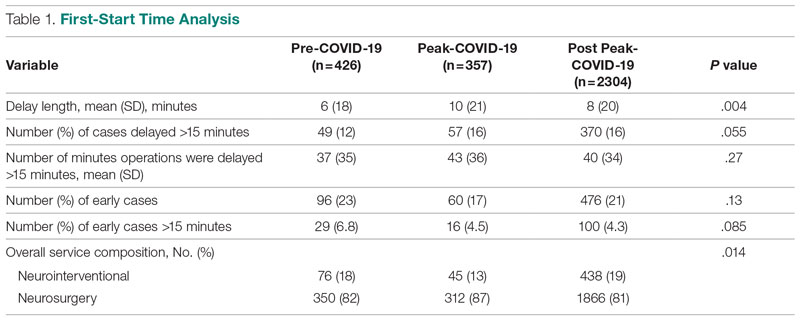

First-start time was analyzed in 426 pre-COVID-19, 357 peak-COVID-19, and 2304 post-peak-COVID-19 cases. The unadjusted mean delay length was significantly different between the time periods, but the magnitude of increase in minutes was immaterial (mean [SD] minutes, 6 [18] vs 10 [21] vs 8 [20], respectively; P = .004) (Table 1).

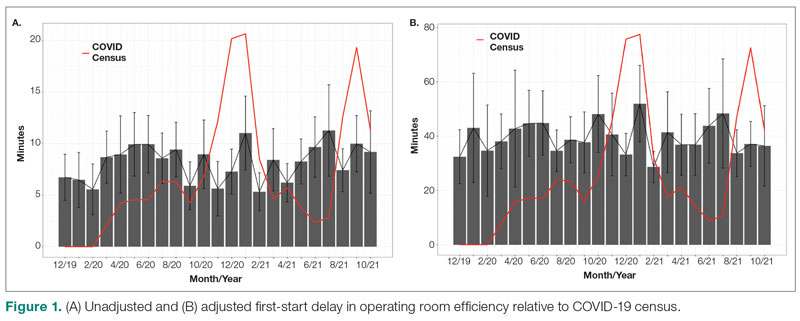

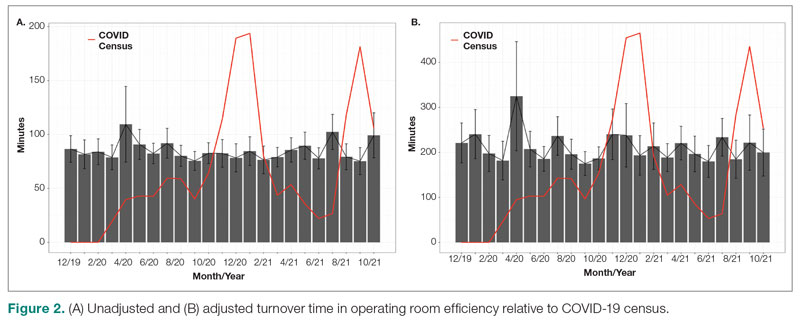

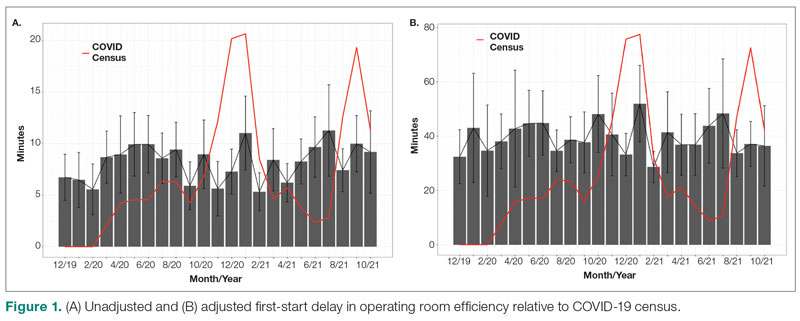

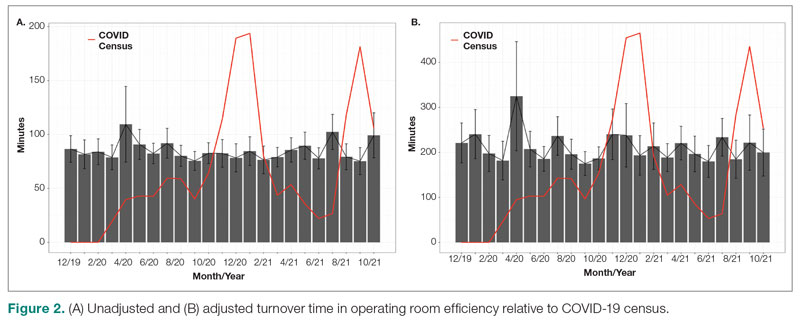

The adjusted average delay length and proportion of cases delayed beyond the 15-minute threshold were not significantly different, but they have been slightly higher since the onset of COVID-19. The proportions of cases that started early, and of cases that started significantly early (beyond a 15-minute threshold), have also trended down since the onset of the COVID-19 pandemic, but these differences were again not significant. The temporal relationship of first-start delay, both unadjusted and adjusted, from December 2019 to October 2021 is shown in Figure 1. The trend of increasing delay is loosely associated with the COVID-19 burden experienced by our hospital. The start of COVID-19 as well as both COVID-19 peaks have been associated with increased delays in our hospital.

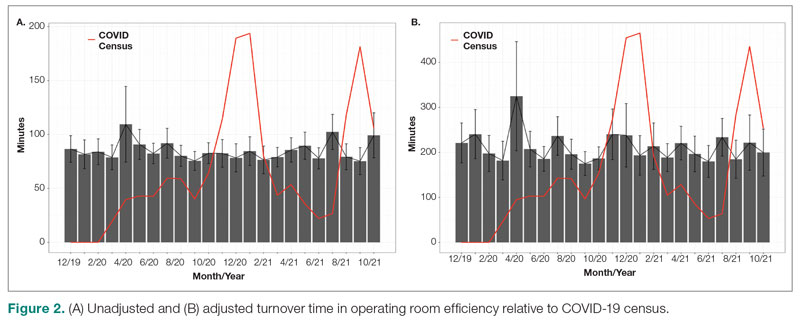

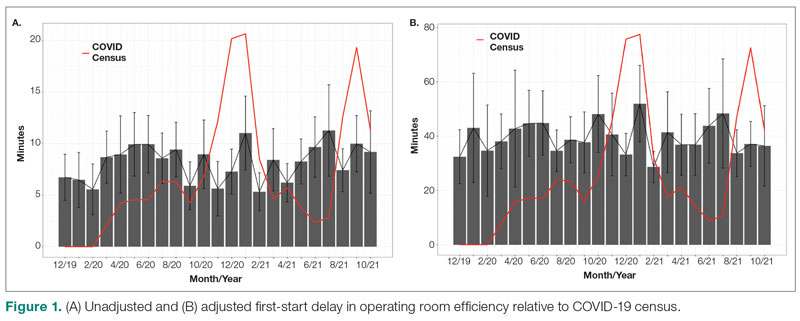

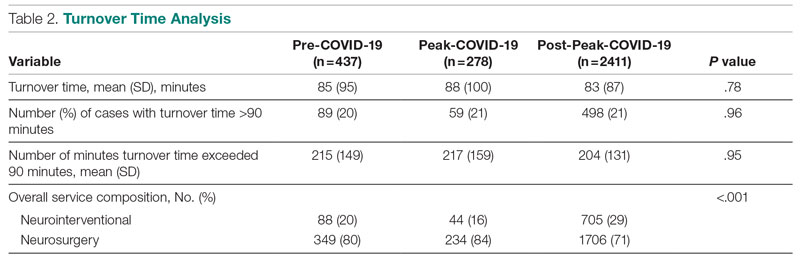

Turnover Time

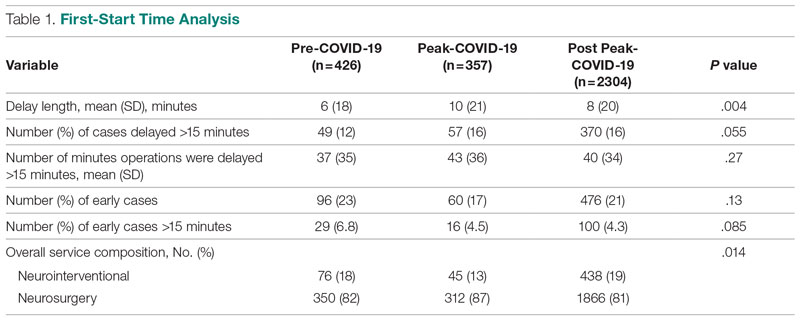

Turnover time was assessed in 437 pre-COVID-19, 278 peak-restrictions, and 2411 post-peak-restrictions cases. Turnover time during peak restrictions was not significantly different from pre-COVID-19 (mean [SD] minutes, 88 [100] vs 85 [95]) and has since remained relatively unchanged (83 [87], P = .78). A similar trend held for comparisons of proportion of cases with turnover time past 90 minutes and average times past the 90-minute threshold (Table 2). The temporal relationship between COVID-19 burden and turnover time, both unadjusted and adjusted, from December 2019 to October 2021 is shown in Figure 2. Both figures demonstrate a slight initial increase in turnover time delay at the start of COVID-19, which stabilized with little variation thereafter.
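For readers who want to check a 2-sample comparison from summary statistics alone, the sketch below computes an equal-variance (Student) t statistic from the means, SDs, and case counts reported above for the peak-restrictions and pre-COVID-19 periods. This is an illustrative calculation, not the authors' analysis: the equal-variance form and the function name are assumptions, and no P value is derived.

```python
import math


def pooled_t_statistic(mean1, sd1, n1, mean2, sd2, n2):
    """Equal-variance 2-sample t statistic and degrees of freedom
    computed from summary statistics (assumed form; see lead-in)."""
    df = n1 + n2 - 2
    pooled_var = ((n1 - 1) * sd1 ** 2 + (n2 - 1) * sd2 ** 2) / df
    standard_error = math.sqrt(pooled_var * (1 / n1 + 1 / n2))
    return (mean1 - mean2) / standard_error, df


# Turnover time, mean [SD] minutes and case counts as reported in the text:
# peak restrictions 88 [100], n = 278; pre-COVID-19 85 [95], n = 437.
t_stat, dof = pooled_t_statistic(88, 100, 278, 85, 95, 437)
```

With these inputs the statistic is well below 1 in magnitude, which is in line with the nonsignificant difference reported.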

Discussion

We analyzed the OR efficiency metrics of first-start and turnover time during the 90-day period before COVID-19 (pre-COVID-19), the 90 days following Tennessee declaring a state of emergency (peak COVID-19), and the time following this period (post-peak COVID-19) for all neurosurgical and neuroendovascular cases at Vanderbilt University Medical Center (VUMC). We found a significant difference in unadjusted mean delay length in first-start time between the time periods, but the magnitude of increase in minutes was immaterial (mean [SD] minutes for pre-COVID-19, peak COVID-19, and post-peak COVID-19: 6 [18] vs 10 [21] vs 8 [20], respectively; P = .004). No significant increase in turnover time between cases was found between these 3 time periods. Based on metrics from first-start delay and turnover time, our center was able to maintain OR efficiency before, during, and after peak COVID-19.

After the Centers for Disease Control and Prevention released guidelines recommending deferring elective procedures to conserve beds and PPE, VUMC made the decision to suspend all elective surgical procedures from March 18 to April 24, 2020. Prior research conducted during the COVID-19 pandemic has demonstrated more than 400 types of surgical procedures with negatively impacted outcomes when compared to surgical outcomes from the same time frame in 2018 and 2019.4 For more than 20 of these types of procedures, there was a significant association between procedure delay and adverse patient outcomes.4 Testing protocols for patients prior to surgery varied throughout the pandemic based on vaccination status and type of procedure. Before vaccines became widely available, all patients were required to obtain a PCR SARS-CoV-2 test within 48 to 72 hours of the scheduled procedure. If the patient’s procedure was urgent and testing was not feasible, the patient was treated as a SARS-CoV-2–positive patient, which required all health care workers involved in the case to wear gowns, gloves, surgical masks, and eye protection. Testing patients preoperatively likely helped to maintain OR efficiency since not all patients received test results prior to the scheduled procedure, leading to cancellations of cases and therefore more staff available for fewer cases.

After vaccines became widely available to the public, preoperative testing requirements were relaxed, and only patients who were not fully vaccinated or who were severely immunocompromised were required to test prior to procedures. However, only approximately 40% of the population in Tennessee was fully vaccinated in 2021, which reflects the patient population of VUMC.5 This means that many patients who received care at VUMC were still tested prior to procedures.

Adopting adequate safety protocols was found to be key for OR efficiency during the COVID-19 pandemic since performing surgery increased the risk of infection for each health care worker in the OR.6 VUMC protocols identified procedures that required enhanced safety measures to prevent infection of health care workers and avoid staffing shortages, which would decrease OR efficiency. Protocols mandated that only anesthesia team members were allowed to be in the OR during intubation and extubation of patients, which could be one factor leading to increased delays and decreased efficiency for some institutions. Methods for neurosurgeons to decrease risk of infection in the OR include postponing all nonurgent cases, reappraising the necessity for general anesthesia and endotracheal intubation, considering alternative surgical approaches that avoid the respiratory tract, and limiting the use of aerosol-generating instruments.7,8 VUMC’s success in implementing these protocols likely explains why our center was able to maintain OR efficiency throughout the COVID-19 pandemic.

A study conducted by Andreata et al showed a significantly increased mean first-case delay and a nonsignificant increased turnover time in orthopedic surgeries in Northern Italy when comparing surgeries performed during the COVID-19 pandemic to those performed prior to COVID-19.2 Other studies have indicated a similar trend in decreased OR efficiency during COVID-19 in other areas around the world.9,10 These findings are not consistent with our own findings for neurosurgical and neuroendovascular surgeries at VUMC, and any change at our institution was relatively immaterial. Factors that threatened to change OR efficiency—but did not result in meaningful changes in our institutional experience—include delays due to pending COVID-19 test results, safety procedures such as PPE donning, and planning difficulties to ensure the existence of teams with non-overlapping providers in the case of a surgeon being infected.2,11-13

Globally, many surgery centers halted all elective surgeries during the initial COVID-19 spike to prevent a PPE shortage and mitigate risk of infection of patients and health care workers.8,12,14 However, there is no centralized definition of which neurosurgical procedures are elective, so that decision was made on a surgeon or center level, which could lead to variability in efficiency trends.14 One study on neurosurgical procedures during COVID-19 found a 30% decline in all cases and a 23% decline in emergent procedures, showing that the decrease in volume was not only due to cancellation of elective procedures.15 This decrease in elective and emergent surgeries created a backlog of surgeries as well as a loss in health care revenue, and caused many patients to go without adequate health care.10 Looking forward, it is imperative that surgical centers study trends in OR efficiency from COVID-19 and learn how to better maintain OR efficiency during future pandemic conditions to prevent a backlog of cases, loss of health care revenue, and decreased health care access.

Limitations

Our data are from a single center and therefore may not be representative of experiences of other hospitals due to different populations and different impacts from COVID-19. However, given our center’s high volume and diverse patient population, we believe our analysis highlights important trends in neurosurgery practice. Notably, data for patient and OR timing are digitally generated and are entered manually by nurses in the electronic medical record, making them prone to errors and variability. In our experience, if any such error is present, we believe it is minimal.

Conclusion

The COVID-19 pandemic has had far-reaching effects on health care worldwide, including neurosurgical care. OR efficiency across the United States generally worsened given the stresses of supply chain issues, staffing shortages, and cancellations. At our institution, we were able to maintain OR efficiency during the known COVID-19 peaks until October 2021. Continually functional neurosurgical ORs are important in preventing delays in care and maintaining a steady revenue in order for hospitals and other health care entities to remain solvent. Further study of OR efficiency is needed for health care systems to prepare for future pandemics and other resource-straining events in order to provide optimal patient care.

Corresponding author: Campbell Liles, MD, Vanderbilt University Medical Center, Department of Neurological Surgery, 1161 21st Ave. South, T4224 Medical Center North, Nashville, TN 37232-2380; david.c.liles.1@vumc.org

Disclosures: None reported.

1. Koester SW, Catapano JS, Ma KL, et al. COVID-19 and neurosurgery consultation call volume at a single large tertiary center with a propensity-adjusted analysis. World Neurosurg. 2021;146:e768-e772. doi:10.1016/j.wneu.2020.11.017

2. Andreata M, Faraldi M, Bucci E, Lombardi G, Zagra L. Operating room efficiency and timing during coronavirus disease 2019 outbreak in a referral orthopaedic hospital in Northern Italy. Int Orthop. 2020;44(12):2499-2504. doi:10.1007/s00264-020-04772-x

3. Dexter F, Abouleish AE, Epstein RH, et al. Use of operating room information system data to predict the impact of reducing turnover times on staffing costs. Anesth Analg. 2003;97(4):1119-1126. doi:10.1213/01.ANE.0000082520.68800.79

4. Zheng NS, Warner JL, Osterman TJ, et al. A retrospective approach to evaluating potential adverse outcomes associated with delay of procedures for cardiovascular and cancer-related diagnoses in the context of COVID-19. J Biomed Inform. 2021;113:103657. doi:10.1016/j.jbi.2020.103657

5. Alcendor DJ. Targeting COVID-19 vaccine hesitancy in rural communities in Tennessee: implications for extending the COVID-19 pandemic in the South. Vaccines (Basel). 2021;9(11):1279. doi:10.3390/vaccines9111279

6. Perrone G, Giuffrida M, Bellini V, et al. Operating room setup: how to improve health care professionals safety during pandemic COVID-19: a quality improvement study. J Laparoendosc Adv Surg Tech A. 2021;31(1):85-89. doi:10.1089/lap.2020.0592

7. Iorio-Morin C, Hodaie M, Sarica C, et al. Letter: the risk of COVID-19 infection during neurosurgical procedures: a review of severe acute respiratory distress syndrome coronavirus 2 (SARS-CoV-2) modes of transmission and proposed neurosurgery-specific measures for mitigation. Neurosurgery. 2020;87(2):E178-E185. doi:10.1093/neuros/nyaa157