HCAHPS Patient Satisfaction Scores

Patient satisfaction surveys are widely used to empower patients to voice their concerns and point out areas of deficiency or excellence in the patient‐physician partnership and in the delivery of healthcare services.[1] In 2002, the Centers for Medicare & Medicaid Services (CMS) led an initiative to develop the Hospital Consumer Assessment of Healthcare Providers and Systems (HCAHPS) survey questionnaire.[2] This survey is sent to a randomly selected subset of patients after hospital discharge. The HCAHPS instrument assesses patient ratings of physician communication, nursing communication, pain control, responsiveness, room cleanliness and quietness, discharge process, and overall satisfaction. Over 4500 acute‐care facilities routinely use this survey.[3] HCAHPS scores are publicly reported, and patients can use these scores to compare hospitals and make informed choices about where to get care. At an institutional level, scores are used to identify and improve deficiencies in care delivery. Additionally, HCAHPS survey data have been analyzed in numerous research studies.[4, 5, 6]

Specialty hospitals are a subset of acute‐care hospitals that provide a narrower set of services than general medical hospitals (GMHs), predominantly in a few specialty areas such as cardiac disease and surgical fields. Many specialty hospitals advertise high rates of patient satisfaction.[7, 8, 9, 10, 11] However, specialty hospitals differ from GMHs in significant ways. Patients at specialty hospitals may be less severely ill[10, 12] and may have more generous insurance coverage.[13] Many specialty hospitals do not have an emergency department (ED), and their outcomes may reflect care of relatively stable patients.[14] A significant number of the specialty hospitals are physician‐owned, which may provide an opportunity for physicians to deliver more patient‐focused healthcare.[14] It is also thought that specialty hospitals can provide high‐quality care by designing their facilities and service structure entirely to meet the needs of a narrow set of medical conditions.

HCAHPS survey results provide an opportunity to compare satisfaction scores among various types of hospitals. We analyzed national HCAHPS data to compare satisfaction scores of specialty hospitals and GMHs and to identify factors that may be responsible for any difference.

METHODS

This was a cross‐sectional analysis of national HCAHPS survey data. The methods for administration and reporting of the HCAHPS survey have been described.[15] HCAHPS patient satisfaction data and hospital characteristics, such as location, presence of an ED, and for‐profit status, were obtained from the Hospital Compare database. Teaching hospital status was identified using the CMS 2013 Open Payment teaching hospital listing.[16]

For this study, we defined specialty hospitals as acute‐care hospitals that predominantly provide care in a medical or surgical specialty and do not provide care to general medical patients. Based on this definition, specialty hospitals include cardiac hospitals, orthopedic and spine hospitals, oncology hospitals, and hospitals providing multispecialty surgical and procedure‐based services. Children's hospitals, long‐term acute‐care hospitals, and psychiatry hospitals were excluded.

Specialty hospitals were identified using hospital name searches in the HCAHPS database, the American Hospital Association 2013 Annual Survey, the Physician Hospital Association hospitals directory, and through contact with experts. The specialty hospital status of hospitals was further confirmed by checking hospital websites or by directly contacting the hospital.

We analyzed 3‐year HCAHPS patient satisfaction data that included the reporting period from July 2007 to June 2010. HCAHPS data are reported for 12‐month periods at a time. Hospital information, such as address, presence of an ED, and for‐profit status, was obtained from the CMS Hospital Compare 2010 dataset. Teaching hospital status was identified using the CMS 2013 Open Payment teaching hospital listing.[16] For the purpose of this study, the score on the HCAHPS survey item "definitely recommend the hospital" was considered to represent overall satisfaction for the hospital. This is consistent with use of this measure in other sectors in the service industry.[17, 18] Other survey items were considered subdomains of satisfaction. For each hospital, the simple mean of satisfaction scores for overall satisfaction and each of the subdomains for the three 12‐month periods was calculated. Data were summarized using frequencies and mean ± standard deviation. The primary dependent variable was overall satisfaction. The main independent variables were specialty hospital status (yes or no), teaching hospital status (yes or no), for‐profit status (yes or no), and the presence of an ED (yes or no). Multiple linear regression analysis was used to adjust for the above‐noted independent variables. A P value <0.05 was considered significant. All analyses were performed using Stata 10.1 IC (StataCorp, College Station, TX).
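The hospital-level averaging and the mean ± standard deviation summary described above can be sketched as follows. This is a minimal Python illustration, not the authors' Stata code; the hospital identifiers, the scores, and the helper names `hospital_means` and `summarize` are all hypothetical.

```python
from statistics import mean, stdev

# Hypothetical "definitely recommend" percentages for three 12-month
# reporting periods. Hospital IDs and values are invented for illustration
# and are not HCAHPS data.
period_scores = {
    "hospital_a": [68.0, 70.0, 72.0],
    "hospital_b": [85.0, 86.0, 87.0],
    "hospital_c": [60.0, 62.0, 64.0],
}


def hospital_means(scores_by_hospital):
    """Return the simple (unweighted) mean of the period scores per hospital."""
    return {h: mean(s) for h, s in scores_by_hospital.items()}


def summarize(values):
    """Return (mean, sample standard deviation) of hospital-level means."""
    return mean(values), stdev(values)


overall = hospital_means(period_scores)
avg, sd = summarize(list(overall.values()))
print(f"overall satisfaction: {avg:.1f} ± {sd:.1f}")
```

Each hospital contributes one value regardless of how many patients responded, which is what "simple mean" implies here; a response-weighted mean would be a different design choice.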

RESULTS

We identified 188 specialty hospitals and 4638 GMHs within the HCAHPS dataset. Fewer specialty hospitals than GMHs had emergency care services (53.2% vs 93.6%, P<0.0001), and 47.9% of all specialty hospitals were in states that do not require a Certificate of Need, whereas only 25% of all GMHs were located in these states. For example, Texas, which has 7.2% of all GMHs across the nation, has 24.7% of all specialty hospitals. A majority of specialty hospitals were for profit, compared with a minority of GMHs (66.9% vs 14.5%).

In unadjusted analyses, specialty hospitals had significantly higher patient satisfaction scores compared with GMHs. Overall satisfaction, as measured by the proportion of patients who would definitely recommend the hospital, was 18.8 percentage points higher for specialty hospitals than for GMHs (86.6% vs 67.8%, P<0.0001). This was also true for subdomains of satisfaction including physician communication, nursing communication, and cleanliness (Table 1).

| Satisfaction Domains | GMH, Mean, n=4,638* | Specialty Hospital, Mean, n=188* | Unadjusted Mean Difference in Satisfaction (95% CI) | Mean Difference in Satisfaction Adjusted for Survey Response Rate (95% CI) | Mean Difference in Satisfaction for Full Adjusted Model (95% CI) |
|---|---|---|---|---|---|
| Nurses always communicated well | 75.0% | 84.4% | 9.4% (8.3‐10.5) | 4.0% (2.9‐5.0) | 5.0% (3.8‐6.2) |
| Doctors always communicated well | 80.0% | 86.5% | 6.5% (5.6‐7.6) | 3.8% (2.8‐4.8) | 4.1% (3.0‐5.2) |
| Pain always well controlled | 68.7% | 77.1% | 8.6% (7.7‐9.6) | 4.5% (3.5‐4.5) | 4.6% (3.5‐5.6) |
| Always received help as soon as they wanted | 62.9% | 78.6% | 15.7% (14.1‐17.4) | 7.8% (6.1‐9.4) | 8.0% (6.3‐9.7) |
| Room and bathroom always clean | 70.1% | 81.1% | 11.0% (9.6‐12.4) | 5.5% (4.0‐6.9) | 6.2% (4.7‐7.8) |
| Staff always explained about the medicines | 59.4% | 69.8% | 10.4% (9.2‐11.5) | 5.8% (4.7‐6.9) | 6.5% (5.3‐7.8) |
| Yes, were given information about what to do during recovery at home | 80.9% | 87.1% | 6.2% (5.5‐7.0) | 1.4% (0.7‐2.1) | 2.0% (1.1‐3.0) |
| Overall satisfaction (yes, patients would definitely recommend the hospital) | 67.8% | 86.6% | 18.8% (17.0‐20.6) | 8.5% (6.9‐10.2) | 8.6% (6.7‐10.5) |
| Survey response rate | 32.2% | 49.6% | 17.4% (16.0‐18.9) | | |

We next examined the effect of survey response rate. The survey response rate for specialty hospitals was on average 17.4 percentage points higher than that of GMHs (49.6% vs 32.2%, P<0.0001). When adjusted for survey response rate, the difference in overall satisfaction for specialty hospitals was reduced to 8.6% (6.7%‐10.5%, P<0.0001). Similarly, the differences in score for subdomains of satisfaction were more modest when adjusted for higher survey response rate. In the multiple regression models, specialty hospital status, survey response rate, for‐profit status, and the presence of an ED were independently associated with higher overall satisfaction, whereas teaching hospital status was not associated with overall satisfaction. Addition of for‐profit status and presence of an ED in the regression model did not change our results. Further, the satisfaction subdomain scores for specialty hospitals remained significantly higher than for GMHs in the regression models (Table 1).
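To illustrate why adjusting for survey response rate can shrink the specialty‐hospital coefficient, the sketch below fits two ordinary least squares models to a small synthetic dataset. Everything here is an assumption made for illustration: the data are constructed (satisfaction = 50 + 5 × specialty + 0.5 × response rate, with no noise), the `ols` helper is hypothetical, and the code is Python rather than the Stata used in the study.

```python
def ols(X, y):
    """Ordinary least squares via the normal equations (X'X) b = X'y.

    X is a list of rows; each row starts with 1.0 for the intercept.
    Returns the coefficient list b.
    """
    k = len(X[0])
    # Augmented matrix [X'X | X'y].
    A = [
        [sum(r[i] * r[j] for r in X) for j in range(k)]
        + [sum(r[i] * yi for r, yi in zip(X, y))]
        for i in range(k)
    ]
    # Gauss-Jordan elimination with partial pivoting.
    for c in range(k):
        p = max(range(c, k), key=lambda r: abs(A[r][c]))
        A[c], A[p] = A[p], A[c]
        pivot = A[c][c]
        A[c] = [v / pivot for v in A[c]]
        for r in range(k):
            if r != c:
                f = A[r][c]
                A[r] = [vr - f * vc for vr, vc in zip(A[r], A[c])]
    return [A[i][k] for i in range(k)]


# Synthetic hospitals: (specialty flag, survey response rate in %).
# Specialty hospitals are given higher response rates on purpose.
hospitals = [(0, 25), (0, 30), (0, 35), (0, 40),
             (1, 40), (1, 45), (1, 50), (1, 55)]
# Constructed outcome, not real HCAHPS scores.
satisfaction = [50 + 5 * s + 0.5 * rr for s, rr in hospitals]

# Model 1: specialty status only (unadjusted difference in means).
unadjusted = ols([[1.0, s] for s, _ in hospitals], satisfaction)
# Model 2: specialty status plus response rate (adjusted difference).
adjusted = ols([[1.0, s, rr] for s, rr in hospitals], satisfaction)

print(f"unadjusted specialty coefficient: {unadjusted[1]:.1f}")
print(f"adjusted specialty coefficient:   {adjusted[1]:.1f}")
```

By construction, the unadjusted coefficient (12.5) absorbs the 15‐point gap in response rate between the two groups, while the adjusted model recovers the built‐in value of 5. The real analysis would also report confidence intervals and include the other covariates.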

DISCUSSION

In this national study, we found that specialty hospitals had significantly higher overall satisfaction scores on the HCAHPS satisfaction survey. Similarly, significantly higher satisfaction was noted across all the satisfaction subdomains. We found that a large proportion of the difference between specialty hospitals and GMHs in overall satisfaction and subdomains of satisfaction could be explained by a higher survey response rate in specialty hospitals. After adjusting for survey response rate, the differences were comparatively modest, although they remained statistically significant. Adjustment for additional confounding variables did not change our results.

Studies have shown that specialty hospitals, when compared to GMHs, may treat more patients in their area of specialization, care for fewer sick and Medicaid patients, have greater physician ownership, and be less likely to have ED services.[11, 12, 13, 14] Two small studies comparing specialty hospitals to GMHs suggest that higher satisfaction with specialty hospitals was attributable to the presence of private rooms, quiet environment, accommodation for family members, and accessible, attentive, and well‐trained nursing staff.[10, 11] Although our analysis did not account for various other hospital and patient characteristics, we expect that these factors likely play a significant role in the observed differences in patient satisfaction.

Survey response rate can be an important determinant of the validity of survey results, and a response rate >70% is often considered desirable.[19, 20] However, the mean survey response rate for the HCAHPS survey was only 32.8% for all hospitals during the survey period. In the outpatient setting, a higher survey response rate has been shown to be associated with higher satisfaction rates.[21] In the hospital setting, a randomized study of an HCAHPS survey for 45 hospitals found that patient mix explained the nonresponse bias. However, this study did not examine the roles of severity of illness or insurance status, which may account for the differences in satisfaction seen between specialty hospitals and GMHs.[22] In contrast, we found that in the hospital setting, higher survey response rate was associated with higher patient satisfaction scores.

Our study has some limitations. First, it was not possible to determine from the dataset whether the higher response rate is a result of differences in patient population characteristics between specialty hospitals and GMHs or whether it represents the association between higher satisfaction and higher response rate noted by other investigators. Second, although we used various resources to identify all specialty hospitals, we may have missed some or misclassified others due to lack of a standardized definition.[10, 12, 13] However, the total number of specialty hospitals and their distribution across various states in the current study are consistent with previous studies, supporting our belief that few, if any, hospitals were misclassified.[13]

In summary, we found a significant difference in satisfaction rates reported on HCAHPS in a national study of patients attending specialty hospitals versus GMHs. However, the observed differences in satisfaction scores were sensitive to differences in survey response rates among hospitals. Teaching hospital status, for‐profit status, and the presence of an ED did not appear to further explain the differences. Additional studies incorporating other hospital and patient characteristics are needed to fully understand factors associated with differences in the observed patient satisfaction between specialty hospitals and GMHs. Additionally, strategies to increase HCAHPS survey response rates should be a priority.

- About Picker Institute. Available at: http://pickerinstitute.org/about. Accessed September 24, 2012.

- HCAHPS Hospital Survey. Centers for Medicare 45(4):1024–1040.

- , . Consumers' use of HCAHPS ratings and word‐of‐mouth in hospital choice. Health Serv Res. 2010;45(6 pt 1):1602–1613.

- , , . Improving patient satisfaction in hospital care settings. Health Serv Manage Res. 2011;24(4):163–169.

- Live the life you want. Arkansas Surgical Hospital website. Available at: http://www.arksurgicalhospital.com/ash. Accessed September 24, 2012.

- Patient satisfaction—top 60 hospitals. Hoag Orthopedic Institute website. Available at: http://orthopedichospital.com/2012/06/patient‐satisfaction‐top‐60‐hospital. Accessed September 24, 2012.

- Northwest Specialty Hospital website. Available at: http://www.northwestspecialtyhospital.com/our‐services. Accessed September 24, 2012.

- , , , et al. Specialty versus community hospitals: referrals, quality, and community benefits. Health Affairs. 2006;25(1):106–118.

- Study of Physician‐Owned Specialty Hospitals Required in Section 507(c)(2) of the Medicare Prescription Drug, Improvement, and Modernization Act of 2003, May 2005. Available at: http://www.cms.gov/Medicare/Fraud‐and‐Abuse/PhysicianSelfReferral/Downloads/RTC‐StudyofPhysOwnedSpecHosp.pdf. Accessed June 16, 2014.

- Specialty Hospitals: Information on National Market Share, Physician Ownership and Patients Served. GAO: 03–683R. Washington, DC: General Accounting Office; 2003:1–20. Available at: http://www.gao.gov/new.items/d03683r.pdf. Accessed September 24, 2012.

- , , , . Insurance status of patients admitted to specialty cardiac and competing general hospitals: are accusations of cherry picking justified? Med Care. 2008;46:467–475.

- Specialty Hospitals: Geographic Location, Services Provided and Financial Performance: GAO‐04–167. Washington, DC: General Accounting Office; 2003:1–41. Available at: http://www.gao.gov/new.items/d04167.pdf. Accessed September 24, 2012.

- Centers for Medicare 9(4):5–17.

- , , . The relationship between customer satisfaction and loyalty: cross‐industry differences. Total Qual Manage. 2000;11(4‐6):509–514.

- , . Survey response rate levels and trends in organizational research. Hum Relat. 2008;61:1139–1160.

- , . Survey, cohort and case‐control studies. In: Design of Studies for Medical Research. Hoboken, NJ: John Wiley 2005:118–120.

- , , , , . A demonstration of the impact of response bias on the results of patient satisfaction surveys. Health Serv Res. 2002;37(5):1403–1417.

- , , , et al. Effects of survey mode, patient mix and nonresponse on CAHPS hospital survey scores. Health Serv Res. 2009;44:501–518.

Patient satisfaction surveys are widely used to empower patients to voice their concerns and point out areas of deficiency or excellence in the patient‐physician partnership and in the delivery of healthcare services.[1] In 2002, the Centers for Medicare and Medicaid Service (CMS) led an initiative to develop the Hospital Consumer Assessment of Healthcare Providers and Systems (HCAHPS) survey questionnaire.[2] This survey is sent to a randomly selected subset of patients after hospital discharge. The HCAHPS instrument assesses patient ratings of physician communication, nursing communication, pain control, responsiveness, room cleanliness and quietness, discharge process, and overall satisfaction. Over 4500 acute‐care facilities routinely use this survey.[3] HCAHPS scores are publicly reported, and patients can utilize these scores to compare hospitals and make informed choices about where to get care. At an institutional level, scores are used as a tool to identify and improve deficiencies in care delivery. Additionally, HCAHPS survey data results have been analyzed in numerous research studies.[4, 5, 6]

Specialty hospitals are a subset of acute‐care hospitals that provide a narrower set of services than general medical hospitals (GMHs), predominantly in a few specialty areas such as cardiac disease and surgical fields. Many specialty hospitals advertise high rates of patient satisfaction.[7, 8, 9, 10, 11] However, specialty hospitals differ from GMHs in significant ways. Patients at specialty hospitals may be less severely ill[10, 12] and may have more generous insurance coverage.[13] Many specialty hospitals do not have an emergency department (ED), and their outcomes may reflect care of relatively stable patients.[14] A significant number of the specialty hospitals are physician‐owned, which may provide an opportunity for physicians to deliver more patient‐focused healthcare.[14] It is also thought that specialty hospitals can provide high‐quality care by designing their facilities and service structure entirely to meet the needs of a narrow set of medical conditions.

HCAHPS survey results provide an opportunity to compare satisfaction scores among various types of hospitals. We analyzed national HCAHPS data to compare satisfaction scores of specialty hospitals and GMHs and identify factors that may be responsible for this difference.

METHODS

This was a cross‐sectional analysis of national HCAHPS survey data. The methods for administration and reporting of the HCAHPS survey have been described.[15] HCAHPS patient satisfaction data and hospital characteristics, such as location, presence of an ED, and for‐profit status, were obtained from Hospital Compare database. Teaching hospital status was identified using the CMS 2013 Open Payment teaching hospital listing.[16]

For this study, we defined specialty hospitals as acute‐care hospitals that predominantly provide care in a medical or surgical specialty and do not provide care to general medical patients. Based on this definition, specialty hospitals include cardiac hospitals, orthopedic and spine hospitals, oncology hospitals, and hospitals providing multispecialty surgical and procedure‐based services. Children's hospitals, long‐term acute‐care hospitals, and psychiatry hospitals were excluded.

Specialty hospitals were identified using hospital name searches in the HCAHPS database, the American Hospital Association 2013 Annual Survey, the Physician Hospital Association hospitals directory, and through contact with experts. The specialty hospital status of hospitals was further confirmed by checking hospital websites or by directly contacting the hospital.

We analyzed 3‐year HCAHPS patient satisfaction data that included the reporting period from July 2007 to June 2010. HCAHPS data are reported for 12‐month periods at a time. Hospital information, such as address, presence of an ED, and for‐profit status were obtained from the CMS Hospital Compare 2010 dataset. Teaching hospital status was identified using the CMS 2013 Open Payment teaching hospital listing.[16] For the purpose of this study, scores on the HCAHPS survey item definitely recommend the hospital was considered to represent overall satisfaction for the hospital. This is consistent with use of this measure in other sectors in the service industry.[17, 18] Other survey items were considered subdomains of satisfaction. For each hospital, the simple mean of satisfaction scores for overall satisfaction and each of the subdomains for the three 12‐month periods was calculated. Data were summarized using frequencies and meanstandard deviation. The primary dependent variable was overall satisfaction. The main independent variables were specialty hospital status (yes or no), teaching hospital status (yes or no), for‐profit status (yes or no), and the presence of an ED (yes or no). Multiple linear regression analysis was used to adjust for the above‐noted independent variables. A P value0.05 was considered significant. All analyses were performed on Stata 10.1 IC (StataCorp, College Station, TX).

RESULTS

We identified 188 specialty hospitals and 4638 GMHs within the HCAHPS dataset. Fewer specialty hospitals had emergency care services when compared with GMHs (53.2% for specialty hospitals vs 93.6% for GMHs, P0.0001), and 47.9% of all specialty hospitals were in states that do not require a Certificate of Need, whereas only 25% of all GMHs were present in these states. For example, Texas, which has 7.2% of all GMHs across the nation, has 24.7% of all specialty hospitals. As compared to GMHs, a majority of specialty hospitals were for profit (14.5% vs 66.9%).

In unadjusted analyses, specialty hospitals had significantly higher patient satisfaction scores compared with GMHs. Overall satisfaction, as measured by the proportion of patients that will definitely recommend that hospital, was 18.8% higher for specialty hospitals than GMHs (86.6% vs 67.8%, P0.0001). This was also true for subdomains of satisfaction including physician communication, nursing communication, and cleanliness (Table 1).

| Satisfaction Domains | GMH, Mean, n=4,638* | Specialty Hospital, Mean, n=188* | Unadjusted Mean Difference in Satisfaction (95% CI) | Mean Difference in Satisfaction Adjusted for Survey Response Rate (95% CI) | Mean Difference in Satisfaction for Full Adjusted Model (95% CI) |

|---|---|---|---|---|---|

| |||||

| Nurses always communicated well | 75.0% | 84.4% | 9.4% (8.310.5) | 4.0% (2.9‐5.0) | 5.0% (3.8‐6.2) |

| Doctors always communicated well | 80.0% | 86.5% | 6.5% (5.67.6) | 3.8% (2.8‐4.8) | 4.1% (3.05.2) |

| Pain always well controlled | 68.7% | 77.1% | 8.6% (7.79.6) | 4.5% (3.5‐4.5) | 4.6% (3.5‐5.6) |

| Always received help as soon as they wanted | 62.9% | 78.6% | 15.7% (14.117.4) | 7.8% (6.19.4) | 8.0% (6.39.7) |

| Room and bathroom always clean | 70.1% | 81.1% | 11.0% (9.612.4) | 5.5% (4.06.9) | 6.2% (4.7‐7.8) |

| Staff always explained about the medicines | 59.4% | 69.8% | 10.4 (9.211.5) | 5.8% (4.7‐6.9) | 6.5% (5.37.8) |

| Yes, were given information about what to do during recovery at home | 80.9% | 87.1% | 6.2% (5.57.0) | 1.4% (0.7‐2.1) | 2.0% (1.13.0) |

| Overall satisfaction (yes, patients would definitely recommend the hospital) | 67.8% | 86.6% | 18.8%(17.020.6) | 8.5% (6.910.2) | 8.6% (6.710.5) |

| Survey response rate | 32.2% | 49.6% | 17.4% (16.018.9) | ||

We next examined the effect of survey response rate. The survey response rate for specialty hospitals was on average 17.4 percentage points higher than that of GMHs (49.6% vs 32.2%, P0.0001). When adjusted for survey response rate, the difference in overall satisfaction for specialty hospitals was reduced to 8.6% (6.7%10.5%, P0.0001). Similarly, the differences in score for subdomains of satisfaction were more modest when adjusted for higher survey response rate. In the multiple regression models, specialty hospital status, survey response rate, for‐profit status, and the presence of an ED were independently associated with higher overall satisfaction, whereas teaching hospital status was not associated with overall satisfaction. Addition of for‐profit status and presence of an ED in the regression model did not change our results. Further, the satisfaction subdomain scores for specialty hospitals remained significantly higher than for GMHs in the regression models (Table 1).

DISCUSSION

In this national study, we found that specialty hospitals had significantly higher overall satisfaction scores on the HCAHPS satisfaction survey. Similarly, significantly higher satisfaction was noted across all the satisfaction subdomains. We found that a large proportion of the difference between specialty hospitals and GMHs in overall satisfaction and subdomains of satisfaction could be explained by a higher survey response rate in specialty hospitals. After adjusting for survey response rate, the differences were comparatively modest, although remained statistically significant. Adjustment for additional confounding variables did not change our results.

Studies have shown that specialty hospitals, when compared to GMHs, may treat more patients in their area of specialization, care for fewer sick and Medicaid patients, have greater physician ownership, and are less likely to have ED services.[11, 12, 13, 14] Two small studies comparing specialty hospitals to GMHs suggest that higher satisfaction with specialty hospitals was attributable to the presence of private rooms, quiet environment, accommodation for family members, and accessible, attentive, and well‐trained nursing staff.[10, 11] Although our analysis did not account for various other hospital and patient characteristics, we expect that these factors likely play a significant role in the observed differences in patient satisfaction.

Survey response rate can be an important determinant of the validity of survey results, and a response rate >70% is often considered desirable.[19, 20] However, the mean survey response rate for the HCAHPS survey was only 32.8% for all hospitals during the survey period. In the outpatient setting, a higher survey response rate has been shown to be associated with higher satisfaction rates.[21] In the hospital setting, a randomized study of a HCAHPS survey for 45 hospitals found that patient mix explained the nonresponse bias. However, this study did not examine the roles of severity of illness or insurance status, which may account for the differences in satisfaction seen between specialty hospitals and GMHs.[22] In contrast, we found that in the hospital setting, higher survey response rate was associated with higher patient satisfaction scores.

Our study has some limitations. First, it was not possible to determine from the dataset whether higher response rate is a result of differences in the patient population characteristics between specialty hospitals and GMHs or it represents the association between higher satisfaction and higher response rate noted by other investigators. Although we used various resources to identify all specialty hospitals, we may have missed some or misclassified others due to lack of a standardized definition.[10, 12, 13] However, the total number of specialty hospitals and their distribution across various states in the current study are consistent with previous studies, supporting our belief that few, if any, hospitals were misclassified.[13]

In summary, we found significant difference in satisfaction rates reported on HCAHPS in a national study of patients attending specialty hospitals versus GMHs. However, the observed differences in satisfaction scores were sensitive to differences in survey response rates among hospitals. Teaching hospital status, for‐profit status, and the presence of an ED did not appear to further explain the differences. Additional studies incorporating other hospital and patient characteristics are needed to fully understand factors associated with differences in the observed patient satisfaction between specialty hospitals and GMHs. Additionally, strategies to increase survey HCAHPS response rates should be a priority.

Patient satisfaction surveys are widely used to empower patients to voice their concerns and point out areas of deficiency or excellence in the patient‐physician partnership and in the delivery of healthcare services.[1] In 2002, the Centers for Medicare and Medicaid Service (CMS) led an initiative to develop the Hospital Consumer Assessment of Healthcare Providers and Systems (HCAHPS) survey questionnaire.[2] This survey is sent to a randomly selected subset of patients after hospital discharge. The HCAHPS instrument assesses patient ratings of physician communication, nursing communication, pain control, responsiveness, room cleanliness and quietness, discharge process, and overall satisfaction. Over 4500 acute‐care facilities routinely use this survey.[3] HCAHPS scores are publicly reported, and patients can utilize these scores to compare hospitals and make informed choices about where to get care. At an institutional level, scores are used as a tool to identify and improve deficiencies in care delivery. Additionally, HCAHPS survey data results have been analyzed in numerous research studies.[4, 5, 6]

Specialty hospitals are a subset of acute‐care hospitals that provide a narrower set of services than general medical hospitals (GMHs), predominantly in a few specialty areas such as cardiac disease and surgical fields. Many specialty hospitals advertise high rates of patient satisfaction.[7, 8, 9, 10, 11] However, specialty hospitals differ from GMHs in significant ways. Patients at specialty hospitals may be less severely ill[10, 12] and may have more generous insurance coverage.[13] Many specialty hospitals do not have an emergency department (ED), and their outcomes may reflect care of relatively stable patients.[14] A significant number of the specialty hospitals are physician‐owned, which may provide an opportunity for physicians to deliver more patient‐focused healthcare.[14] It is also thought that specialty hospitals can provide high‐quality care by designing their facilities and service structure entirely to meet the needs of a narrow set of medical conditions.

HCAHPS survey results provide an opportunity to compare satisfaction scores among various types of hospitals. We analyzed national HCAHPS data to compare satisfaction scores of specialty hospitals and GMHs and identify factors that may be responsible for this difference.

METHODS

This was a cross‐sectional analysis of national HCAHPS survey data. The methods for administration and reporting of the HCAHPS survey have been described.[15] HCAHPS patient satisfaction data and hospital characteristics, such as location, presence of an ED, and for‐profit status, were obtained from Hospital Compare database. Teaching hospital status was identified using the CMS 2013 Open Payment teaching hospital listing.[16]

For this study, we defined specialty hospitals as acute‐care hospitals that predominantly provide care in a medical or surgical specialty and do not provide care to general medical patients. Based on this definition, specialty hospitals include cardiac hospitals, orthopedic and spine hospitals, oncology hospitals, and hospitals providing multispecialty surgical and procedure‐based services. Children's hospitals, long‐term acute‐care hospitals, and psychiatry hospitals were excluded.

Specialty hospitals were identified using hospital name searches in the HCAHPS database, the American Hospital Association 2013 Annual Survey, the Physician Hospital Association hospitals directory, and through contact with experts. The specialty hospital status of hospitals was further confirmed by checking hospital websites or by directly contacting the hospital.

We analyzed 3‐year HCAHPS patient satisfaction data that included the reporting period from July 2007 to June 2010. HCAHPS data are reported for 12‐month periods at a time. Hospital information, such as address, presence of an ED, and for‐profit status were obtained from the CMS Hospital Compare 2010 dataset. Teaching hospital status was identified using the CMS 2013 Open Payment teaching hospital listing.[16] For the purpose of this study, scores on the HCAHPS survey item definitely recommend the hospital was considered to represent overall satisfaction for the hospital. This is consistent with use of this measure in other sectors in the service industry.[17, 18] Other survey items were considered subdomains of satisfaction. For each hospital, the simple mean of satisfaction scores for overall satisfaction and each of the subdomains for the three 12‐month periods was calculated. Data were summarized using frequencies and meanstandard deviation. The primary dependent variable was overall satisfaction. The main independent variables were specialty hospital status (yes or no), teaching hospital status (yes or no), for‐profit status (yes or no), and the presence of an ED (yes or no). Multiple linear regression analysis was used to adjust for the above‐noted independent variables. A P value0.05 was considered significant. All analyses were performed on Stata 10.1 IC (StataCorp, College Station, TX).

RESULTS

We identified 188 specialty hospitals and 4638 GMHs within the HCAHPS dataset. Fewer specialty hospitals had emergency care services when compared with GMHs (53.2% for specialty hospitals vs 93.6% for GMHs, P<0.0001), and 47.9% of all specialty hospitals were in states that do not require a Certificate of Need, whereas only 25% of all GMHs were located in these states. For example, Texas, which has 7.2% of all GMHs across the nation, has 24.7% of all specialty hospitals. Unlike GMHs, a majority of specialty hospitals were for profit (66.9% of specialty hospitals vs 14.5% of GMHs).

In unadjusted analyses, specialty hospitals had significantly higher patient satisfaction scores compared with GMHs. Overall satisfaction, as measured by the proportion of patients that will definitely recommend that hospital, was 18.8 percentage points higher for specialty hospitals than GMHs (86.6% vs 67.8%, P<0.0001). This was also true for subdomains of satisfaction including physician communication, nursing communication, and cleanliness (Table 1).

| Satisfaction Domains | GMH, Mean, n=4,638* | Specialty Hospital, Mean, n=188* | Unadjusted Mean Difference in Satisfaction (95% CI) | Mean Difference in Satisfaction Adjusted for Survey Response Rate (95% CI) | Mean Difference in Satisfaction for Full Adjusted Model (95% CI) |
|---|---|---|---|---|---|
| Nurses always communicated well | 75.0% | 84.4% | 9.4% (8.3‐10.5) | 4.0% (2.9‐5.0) | 5.0% (3.8‐6.2) |
| Doctors always communicated well | 80.0% | 86.5% | 6.5% (5.6‐7.6) | 3.8% (2.8‐4.8) | 4.1% (3.0‐5.2) |
| Pain always well controlled | 68.7% | 77.1% | 8.6% (7.7‐9.6) | 4.5% (3.5‐4.5) | 4.6% (3.5‐5.6) |
| Always received help as soon as they wanted | 62.9% | 78.6% | 15.7% (14.1‐17.4) | 7.8% (6.1‐9.4) | 8.0% (6.3‐9.7) |
| Room and bathroom always clean | 70.1% | 81.1% | 11.0% (9.6‐12.4) | 5.5% (4.0‐6.9) | 6.2% (4.7‐7.8) |
| Staff always explained about the medicines | 59.4% | 69.8% | 10.4% (9.2‐11.5) | 5.8% (4.7‐6.9) | 6.5% (5.3‐7.8) |
| Yes, were given information about what to do during recovery at home | 80.9% | 87.1% | 6.2% (5.5‐7.0) | 1.4% (0.7‐2.1) | 2.0% (1.1‐3.0) |
| Overall satisfaction (yes, patients would definitely recommend the hospital) | 67.8% | 86.6% | 18.8% (17.0‐20.6) | 8.5% (6.9‐10.2) | 8.6% (6.7‐10.5) |
| Survey response rate | 32.2% | 49.6% | 17.4% (16.0‐18.9) | | |

We next examined the effect of survey response rate. The survey response rate for specialty hospitals was on average 17.4 percentage points higher than that of GMHs (49.6% vs 32.2%, P<0.0001). When adjusted for survey response rate, the difference in overall satisfaction for specialty hospitals was reduced to 8.6% (6.7%‐10.5%, P<0.0001). Similarly, the differences in score for subdomains of satisfaction were more modest when adjusted for higher survey response rate. In the multiple regression models, specialty hospital status, survey response rate, for‐profit status, and the presence of an ED were independently associated with higher overall satisfaction, whereas teaching hospital status was not associated with overall satisfaction. Addition of for‐profit status and presence of an ED in the regression model did not change our results. Further, the satisfaction subdomain scores for specialty hospitals remained significantly higher than for GMHs in the regression models (Table 1).

DISCUSSION

In this national study, we found that specialty hospitals had significantly higher overall satisfaction scores on the HCAHPS satisfaction survey. Similarly, significantly higher satisfaction was noted across all the satisfaction subdomains. We found that a large proportion of the difference between specialty hospitals and GMHs in overall satisfaction and subdomains of satisfaction could be explained by a higher survey response rate in specialty hospitals. After adjusting for survey response rate, the differences were comparatively modest, although they remained statistically significant. Adjustment for additional confounding variables did not change our results.

Studies have shown that specialty hospitals, when compared to GMHs, may treat more patients in their area of specialization, care for fewer severely ill patients and fewer Medicaid patients, have greater physician ownership, and are less likely to have ED services.[11, 12, 13, 14] Two small studies comparing specialty hospitals to GMHs suggest that higher satisfaction with specialty hospitals was attributable to the presence of private rooms, quiet environment, accommodation for family members, and accessible, attentive, and well‐trained nursing staff.[10, 11] Although our analysis did not account for various other hospital and patient characteristics, we expect that these factors likely play a significant role in the observed differences in patient satisfaction.

Survey response rate can be an important determinant of the validity of survey results, and a response rate >70% is often considered desirable.[19, 20] However, the mean survey response rate for the HCAHPS survey was only 32.8% for all hospitals during the survey period. In the outpatient setting, a higher survey response rate has been shown to be associated with higher satisfaction rates.[21] In the hospital setting, a randomized study of a HCAHPS survey for 45 hospitals found that patient mix explained the nonresponse bias. However, this study did not examine the roles of severity of illness or insurance status, which may account for the differences in satisfaction seen between specialty hospitals and GMHs.[22] In contrast, we found that in the hospital setting, higher survey response rate was associated with higher patient satisfaction scores.

Our study has some limitations. First, it was not possible to determine from the dataset whether higher response rate is a result of differences in the patient population characteristics between specialty hospitals and GMHs or it represents the association between higher satisfaction and higher response rate noted by other investigators. Although we used various resources to identify all specialty hospitals, we may have missed some or misclassified others due to lack of a standardized definition.[10, 12, 13] However, the total number of specialty hospitals and their distribution across various states in the current study are consistent with previous studies, supporting our belief that few, if any, hospitals were misclassified.[13]

In summary, we found a significant difference in satisfaction rates reported on HCAHPS in a national study of patients attending specialty hospitals versus GMHs. However, the observed differences in satisfaction scores were sensitive to differences in survey response rates among hospitals. Teaching hospital status, for‐profit status, and the presence of an ED did not appear to further explain the differences. Additional studies incorporating other hospital and patient characteristics are needed to fully understand factors associated with differences in the observed patient satisfaction between specialty hospitals and GMHs. Additionally, strategies to increase HCAHPS survey response rates should be a priority.

- About Picker Institute. Available at: http://pickerinstitute.org/about. Accessed September 24, 2012.

- HCAHPS Hospital Survey. Centers for Medicare 45(4):1024–1040.

- , . Consumers' use of HCAHPS ratings and word‐of‐mouth in hospital choice. Health Serv Res. 2010;45(6 pt 1):1602–1613.

- , , . Improving patient satisfaction in hospital care settings. Health Serv Manage Res. 2011;24(4):163–169.

- Live the life you want. Arkansas Surgical Hospital website. Available at: http://www.arksurgicalhospital.com/ash. Accessed September 24, 2012.

- Patient satisfaction—top 60 hospitals. Hoag Orthopedic Institute website. Available at: http://orthopedichospital.com/2012/06/patient‐satisfaction‐top‐60‐hospital. Accessed September 24, 2012.

- Northwest Specialty Hospital website. Available at: http://www.northwestspecialtyhospital.com/our‐services. Accessed September 24, 2012.

- , , , et al. Specialty versus community hospitals: referrals, quality, and community benefits. Health Affairs. 2006;25(1):106–118.

- Study of Physician‐Owned Specialty Hospitals Required in Section 507(c)(2) of the Medicare Prescription Drug, Improvement, and Modernization Act of 2003, May 2005. Available at: http://www.cms.gov/Medicare/Fraud‐and‐Abuse/PhysicianSelfReferral/Downloads/RTC‐StudyofPhysOwnedSpecHosp.pdf. Accessed June 16, 2014.

- Specialty Hospitals: Information on National Market Share, Physician Ownership and Patients Served. GAO: 03–683R. Washington, DC: General Accounting Office; 2003:1–20. Available at: http://www.gao.gov/new.items/d03683r.pdf. Accessed September 24, 2012.

- , , , . Insurance status of patients admitted to specialty cardiac and competing general hospitals: are accusations of cherry picking justified? Med Care. 2008;46:467–475.

- Specialty Hospitals: Geographic Location, Services Provided and Financial Performance: GAO‐04–167. Washington, DC: General Accounting Office; 2003:1–41. Available at: http://www.gao.gov/new.items/d04167.pdf. Accessed September 24, 2012.

- Centers for Medicare 9(4):5–17.

- , , . The relationship between customer satisfaction and loyalty: cross‐industry differences. Total Qual Manage. 2000;11(4‐6):509–514.

- , . Survey response rate levels and trends in organizational research. Hum Relat. 2008;61:1139–1160.

- , . Survey, cohort and case‐control studies. In: Design of Studies for Medical Research. Hoboken, NJ: John Wiley 2005:118–120.

- , , , , . A demonstration of the impact of response bias on the results of patient satisfaction surveys. Health Serv Res. 2002;37(5):1403–1417.

- , , , et al. Effects of survey mode, patient mix and nonresponse on CAHPS hospital survey scores. Health Serv Res. 2009;44:501–518.


Impact of Pocket Ultrasound Use

Applications of point‐of‐care ultrasonography (POC‐US) have grown rapidly over the past 20 years. POC‐US training is required by the Accreditation Council for Graduate Medical Education for several graduate medical education training programs, including emergency medicine residency and pulmonary/critical care fellowships.[1] Recent efforts have examined the utility of ultrasound in the education of medical students[2] and the diagnostic and procedural applications performed by residents.[3] One powerful application of POC‐US is the use of lung ultrasound to diagnose causes of respiratory failure at the bedside.[4] Although lung ultrasound has been shown to have superior diagnostic accuracy to chest x‐rays,[5] limited availability of expert physicians and ultrasound equipment have presented barriers to wider application. The advent of lower cost pocket ultrasounds may present a solution given the early reports of similar efficacy to traditional devices in the assessment of left ventricular dysfunction, acute decompensated heart failure,[6] and focused assessment with sonography for trauma.[7] We assessed the feasibility and diagnostic accuracy of residents trained in lung ultrasound with a pocket device for evaluating patients with dyspnea.

MATERIALS AND METHODS

Study Design

We performed a prospective, observational study of internal medicine residents performing lung ultrasound with a pocket ultrasound from September 2012 to August 2013 at Beth Israel Medical Center, an 856‐bed teaching hospital in New York City. This study was approved by the Committee of Scientific Affairs of Beth Israel Medical Center, which waived the requirement for informed consent (institutional review board #016‐10). Ten pocket ultrasounds (Vscan; GE Vingmed Ultrasound, Horten, Norway) were acquired through an educational grant from General Electric Company. Grant sponsors were not involved in any aspect of the study.

Recruitment and Training

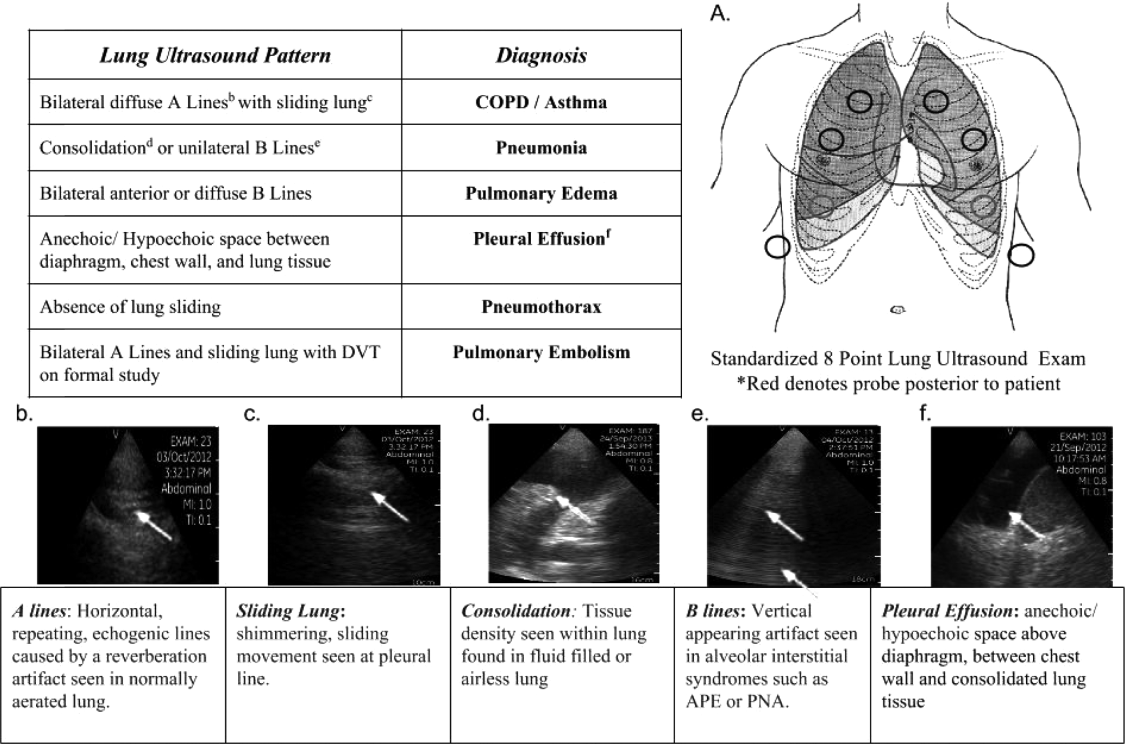

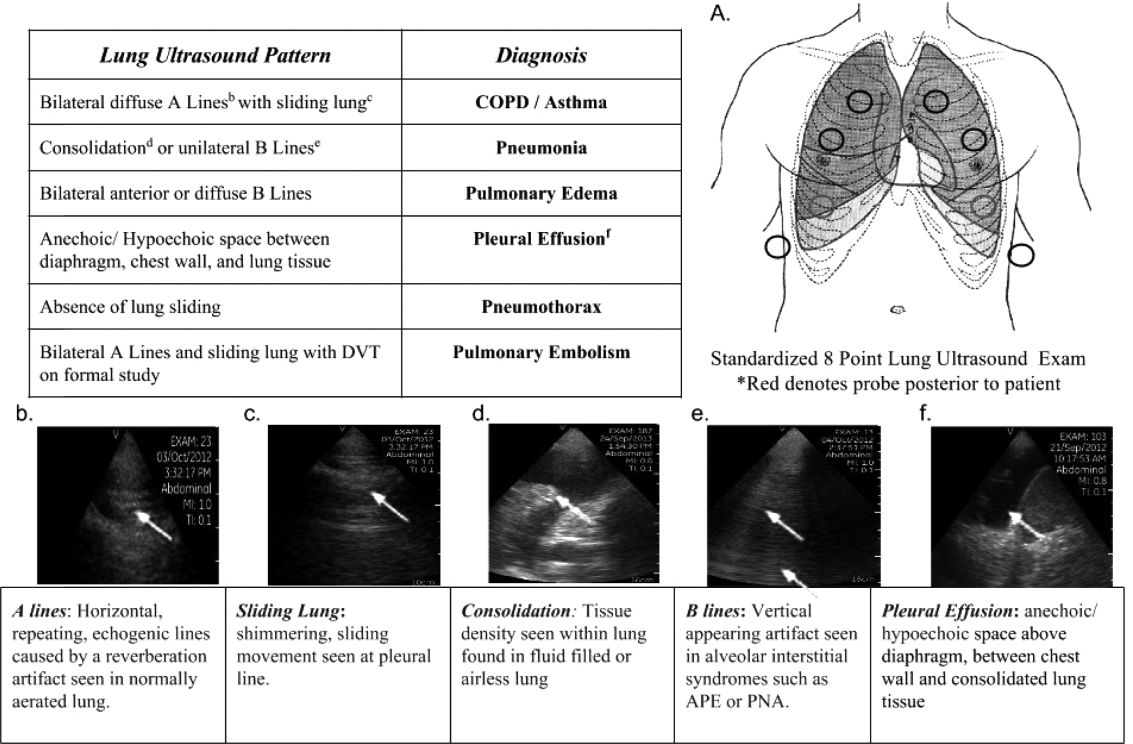

One hundred nineteen internal medicine residents were offered training on lung ultrasound in return for participating in the study. Initially, 10 residents from 3 postgraduate years with no previous lung ultrasound experience volunteered for the study and received a pocket ultrasound along with either focused or extended training. Focused and extended training groups both received 2 sessions of 90 minutes that included didactics covering image creation of the 5 main diagnostic lung ultrasound patterns and their pathological correlates. Sessions also included training in the operation of a pocket ultrasound along with bedside instruction in image acquisition using an 8‐point exam protocol (Figure 1A). All residents were required to demonstrate competency in this 8‐point protocol with proper image acquisition and interpretation of 3 lung ultrasound exams under direct supervision by an expert practitioner (P.K.). Only 5 residents completed the training due mostly to other commitments. Two extended training residents, both authors of this article, who plan to continue training in pulmonary and critical care medicine, volunteered for an additional 2‐week general critical care ultrasound elective. This elective included daily bedside supervised performance and interpretation of lung ultrasound patterns on at least 15 patients admitted during intensive care unit rounds.

Patient Selection

Patients admitted to a resident's service were considered for inclusion, at the resident's convenience, if the patient reported a chief complaint of dyspnea.

Diagnostic Protocol

Upon admission, residents recorded a clinical diagnosis of the cause of dyspnea based on a standard diagnostic evaluation including complete history, physical exam, and all relevant laboratory and imaging studies, including chest x‐ray and computed tomography (CT) scans. A diagnosis after lung ultrasound was then recorded based on the lung ultrasound findings integrated with all other clinical information available. Standard lung ultrasound patterns and diagnostic correlates are shown in Figure 1. Diagnoses of dyspnea were recorded as one of 7 possibilities: (1) exacerbation of chronic obstructive pulmonary disease or asthma (COPD/asthma), (2) acute pulmonary edema (APE), (3) pneumonia (PNA), (4) pulmonary embolus (PE), (5) pneumothorax (PTX), (6) pleural effusion (PLEFF), and (7) other (OTH), namely anemia, ascites, and dehydration.

Data Collection

Patient demographics, comorbidities, lung ultrasound findings, and both clinical and ultrasound diagnosis were recorded on a standardized form. A final diagnosis based on the attending physicians' diagnosis of dyspnea was determined through chart review by 3 investigators blinded to the clinical and ultrasound diagnoses. Discordant findings were resolved by consensus. Attending physicians were blinded to the lung ultrasound exam results.

Statistical Analysis

Sensitivity and specificity of the clinical and ultrasound diagnoses for focused and extended training groups were calculated for each diagnosis using final attending diagnosis as the gold standard. Causes of dyspnea were often deemed multifactorial, leading to more than 1 diagnosis recorded per patient exam. Overall diagnostic accuracy was calculated for each group using the reported clinical, ultrasound, and final diagnoses. Receiver operating characteristic (ROC) curve analysis was performed with Stata 12.1 (StataCorp, College Station, TX).
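As an illustration of the per‐diagnosis calculation described above, the following Python sketch tallies a 2×2 table for one diagnosis label against the reference (attending) diagnosis and derives sensitivity, specificity, and accuracy. It is not the authors' code. The function names and the five example exams are hypothetical, and the accuracy formula shown is one common definition rather than the one confirmed by the study. Each exam is represented as a set of labels because more than 1 diagnosis could be recorded per exam.

```python
def counts_for_diagnosis(predicted, reference, label):
    """Tally (tp, fp, fn, tn) for one diagnosis label.

    predicted and reference are lists of sets, one set of labels per exam.
    """
    tp = fp = fn = tn = 0
    for pred, ref in zip(predicted, reference):
        if label in pred and label in ref:
            tp += 1          # diagnosis recorded and confirmed
        elif label in pred:
            fp += 1          # diagnosis recorded but not confirmed
        elif label in ref:
            fn += 1          # diagnosis missed
        else:
            tn += 1          # diagnosis correctly not recorded
    return tp, fp, fn, tn

def diagnostic_metrics(tp, fp, fn, tn):
    """Return (sensitivity, specificity, accuracy) from 2x2 counts."""
    sensitivity = tp / (tp + fn)
    specificity = tn / (tn + fp)
    accuracy = (tp + tn) / (tp + fp + fn + tn)
    return sensitivity, specificity, accuracy

# Hypothetical exams (NOT study data): attending diagnosis as the reference.
reference = [{"PNA"}, {"APE", "PLEFF"}, {"COPD/asthma"}, {"PNA", "PLEFF"}, {"APE"}]
ultrasound = [{"PNA"}, {"APE"}, {"COPD/asthma"}, {"PNA", "PLEFF"}, {"APE", "PLEFF"}]

counts = counts_for_diagnosis(ultrasound, reference, "PLEFF")
sens, spec, acc = diagnostic_metrics(*counts)
print(counts, sens, round(spec, 3), acc)
```

Running the same tally once with the clinical diagnoses and once with the ultrasound diagnoses gives the paired columns of a sensitivity and specificity table.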

RESULTS

Five residents performed lung ultrasound on a convenience sample of 69 newly admitted patients. Patient baseline characteristics are shown in Table 1. Three residents made up the focused training group and examined 21 patients, resulting in 27 clinical diagnoses, 27 ultrasound diagnoses, and 31 final attending diagnoses. Two residents made up the extended training group and examined 48 patients, resulting in 61 clinical diagnoses, 60 ultrasound diagnoses, and 60 final attending diagnoses. Improvements in sensitivity and specificity using lung ultrasound were more pronounced for the extended training group and are shown for each diagnosis in Table 2.

| Characteristic | Value |
|---|---|
| Age, y, mean | 69 |
| Sex, male, % | 52.2 |
| BMI, mean, kg/m² | 25.7 |
| Comorbidities, % | |
| COPD | 43.3 |
| CHF | 23.9 |
| Hypertension | 59.4 |
| Diabetes mellitus | 29 |
| Atrial fibrillation | 18.9 |
| DVT/PE | 1.5 |
| Lung cancer | 5.9 |
| Finding on admission, % | |
| CXR available | 94 |
| Chest CT available | 22.4 |
| WBC >10.4 K/μL | 36.2 |
| BNP >400 pg/mL | 27.5 |
| Temperature >100.9°F | 6 |
| Heart rate >90 bpm | 47.8 |
| Desaturation* | 32 |

| Diagnosis | Focused Training Group, CLINDIAG (N=27), Sens, % | Focused Training Group, CLINDIAG (N=27), Spec, % | Focused Training Group, USDIAG (N=27), Sens, % | Focused Training Group, USDIAG (N=27), Spec, % | Extended Training Group, CLINDIAG (N=61), Sens, % | Extended Training Group, CLINDIAG (N=61), Spec, % | Extended Training Group, USDIAG (N=60), Sens, % | Extended Training Group, USDIAG (N=60), Spec, % |
|---|---|---|---|---|---|---|---|---|
| COPD/asthma | 60 | 96 | 60 | 96 | 55 | 96 | 91 | 96 |
| Pneumonia | 45 | 90 | 36 | 100 | 93 | 88 | 96 | 100 |
| Pulmonary edema | 100 | 85 | 100 | 86 | 89 | 96 | 89 | 100 |
| Pleural effusion | 57 | 100 | 86 | 96 | 57 | 96 | 100 | 96 |
| Other | 50 | 100 | 75 | 96 | 80 | 96 | 80 | 100 |

Overall diagnostic accuracy using lung ultrasound improved only for the extended training group (clinical 92% vs ultrasound 97%), whereas the focused training group's accuracy was unchanged (clinical 87% vs ultrasound 88%).

ROC analysis demonstrated a superior diagnostic performance of ultrasound when compared to clinical diagnosis (Table 3).

| Diagnosis | CLINDIAG AUC, N=69 | USDIAG AUC, N=69 | P Value |
|---|---|---|---|
| COPD/asthma | 0.73 | 0.85 | 0.06 |
| Pulmonary edema | 0.85 | 0.89 | 0.49 |
| Pneumonia | 0.77 | 0.88 | 0.01 |
| Pleural effusion | 0.76 | 0.96 | 0.002 |
| Other* | 0.78 | 0.69 | 0.01 |
| All causes, n=69 | 0.81 | 0.87 | 0.01 |
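To show how an area under the ROC curve (AUC) such as those in Table 3 relates to sensitivity and specificity, the sketch below computes the AUC for a yes/no diagnosis by pairwise comparison (the Mann‐Whitney form). It assumes each diagnosis is recorded as present (1) or absent (0). Under that assumption the ROC curve has a single operating point and the AUC equals the average of sensitivity and specificity. The source does not state how the Stata analysis was configured, so the function name and example data are hypothetical, and the P values comparing AUCs are not reproduced here.

```python
def auc_binary(predicted, reference):
    """AUC via pairwise comparison of reference-positive and reference-negative cases.

    A pair counts 1 if the positive case is rated higher, 0.5 if tied, 0 otherwise.
    """
    pos = [p for p, r in zip(predicted, reference) if r == 1]
    neg = [p for p, r in zip(predicted, reference) if r == 0]
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos) * len(neg))

# Hypothetical exams (NOT study data): 1 = diagnosis present, 0 = absent.
reference = [1, 1, 1, 1, 0, 0, 0, 0, 0, 0]   # attending (gold standard)
predicted = [1, 1, 1, 0, 1, 0, 0, 0, 0, 0]   # resident's diagnosis

auc = auc_binary(predicted, reference)

# Cross-check against sensitivity and specificity computed directly.
sens = sum(p for p, r in zip(predicted, reference) if r == 1) / reference.count(1)
spec = sum(1 - p for p, r in zip(predicted, reference) if r == 0) / reference.count(0)
print(round(auc, 3), round((sens + spec) / 2, 3))
```

For a graded score rather than a yes/no call, the same pairwise function still applies, but the shortcut through sensitivity and specificity no longer holds.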

DISCUSSION

In this prospective, observational study of residents performing lung ultrasound of patients with dyspnea, the diagnostic accuracy incorporating ultrasound increased compared to a standard diagnostic approach relying on history, physical exam, blood tests, and radiography. To our knowledge, this is the first study of residents independently performing lung ultrasound with a pocket ultrasound to diagnose dyspnea. ROC analysis shows improvements in diagnostic accuracy for causes such as PNA, pleural effusion, and COPD/asthma and demonstrates the feasibility and clinical utility of residents using pocket ultrasounds. The finding that improvements in sensitivity and specificity were larger in the extended training group highlights the need for sufficient training to demonstrate increased utility. Although a 2‐week critical care ultrasound elective may not be possible for all residents, perhaps training of intensity somewhere in between these 2 levels would be most feasible.

Challenges in diagnosing dyspnea have been well described, attributed to a lack of accurate history combined with often insensitive and nonspecific physical exam findings, blood tests, and radiographs.[8, 9] Further, patients often present with multiple contributing causes as was evidenced in this study.[10] Lack of initial, accurate diagnoses often leads to the provision of multiple, incorrect treatment regimens that may increase mortality.[11] The high accuracy of lung ultrasound in defining causes of respiratory failure suggests potential as a low‐cost solution.[12]

This study design differed from prior work in several respects. First, it included patients presenting with dyspnea to a hospital ward rather than with acute respiratory failure to an intensive care unit (ICU), suggesting its diagnostic potential in a broader population of patients and settings. Second, the lung ultrasound was integrated with traditional clinical information rather than relied upon alone, a situation mimicking real‐world application of POC‐US. Third, operators were residents with limited amounts of training rather than highly trained experts. Finally, the lung ultrasound exams were performed using a pocket ultrasound with imaging capability inferior to that of larger, more established ultrasound devices. Despite these constraints, the utility of lung ultrasound was still evident, particularly in the diagnosis or exclusion of pneumonia and PLEFF.

Limitations include reliance on a small cohort of highly motivated residents with an interest in pulmonary and critical care, 2 of whom are authors of this article, making reproducibility a concern. Although convenience sampling may more closely mimic real‐world practices of POC‐US, a bias toward less challenging patients is possible and may limit conclusions regarding utility. Over‐reading and feedback were not provided to residents to improve their performance of lung ultrasound exams. Also, because chest CT is considered the gold standard in most studies examining the diagnostic accuracy of lung ultrasound, all residents aware of these data may underestimate the potential impact of integrating lung ultrasound with all clinical findings. Finally, the high cost of pocket ultrasounds is a barrier to general use. Recent studies on the significant cost savings associated with POC‐US make a further analysis of cost‐benefit ratios mandatory before broad use can be recommended.[13]

CONCLUSIONS

Residents participating in lung ultrasound training with a pocket ultrasound device showed improved diagnostic accuracy in their evaluation of patients with dyspnea. Those who received extended training had greater improvements across all causes of dyspnea. Training residents to apply lung ultrasound in non‐ICU settings appears to be feasible. Further study with a larger cohort of internal medicine residents and perhaps training duration that lies in between the focused and extended training groups is warranted.

Acknowledgements

The authors thank Dr. David Lucido for guidance on statistical analysis and Stephane Gatesoupe and the Vscan team at General Electric.

Disclosure: Ten Vscan pocket ultrasounds (General Electric) were provided free of cost solely for the purpose of conducting the clinical research study. This represented their sole participation in any stage of the research. The authors have no conflicts of interest to disclose.

- , , , . Barriers to ultrasound training in critical care medicine fellowships: a survey of program directors. Crit Care Med. 2010;38(10):1978–1983.

- , , , et al. Comparison of effectiveness of hand‐carried ultrasound to bedside cardiovascular physical examination. Am J Cardiol. 2005;96(7):1002–1006.

- , , . Diagnosing pulmonary edema: lung ultrasound versus chest radiography. Eur J Emerg Med. 2013;20(5):356–360.

- , . Relevance of lung ultrasound in the diagnosis of acute respiratory failure: the BLUE protocol. Chest. 2008;134(1):117–125.

- , , , et al. Lung ultrasound in the diagnosis and follow‐up of community‐acquired pneumonia: a prospective, multicenter, diagnostic accuracy study. Chest. 2012;142(4):965–972.

- , , , , , . Evaluation of a new pocket echoscopic device for focused cardiac ultrasonography in an emergency setting. Crit Care. 2012;16(3):R82.

- , , , . Our new stethoscope in the emergency department: handheld ultrasound. Ulus Travma Acil Cerrahi Derg. 2011;17(6):488–492.

- , , . Discriminating causes of dyspnea through clinical examination. J Gen Intern Med. 1993;8(7):383–392.

- , , . Does this patient have community‐acquired pneumonia? Diagnosing pneumonia by history and physical examination. JAMA. 1997;278(17):1440–1445.

- , , , et al. Acute respiratory failure in the elderly: etiology, emergency diagnosis and prognosis. Crit Care. 2006;10(3):R82.

- , , , et al. Early interventions in severe sepsis and septic shock: a review of the evidence one decade later. Minerva Anestesiol. 2012;78(6):712–724.

- , , , . Ultrasound diagnosis of alveolar consolidation in the critically ill. Intensive Care Med. 2004;30(2):276–281.

- , , , , , . Ultrasound assessment of pulmonary embolism in patients receiving computerized tomography pulmonary angiography. Chest. 2014;145(4):818–823.

Applications of point‐of‐care ultrasonography (POC‐US) have grown rapidly over the past 20 years. POC‐US training is required by the Accreditation Council for Graduate Medical Education for several graduate medical education training programs, including emergency medicine residency and pulmonary/critical care fellowships.[1] Recent efforts have examined the utility of ultrasound in the education of medical students[2] and the diagnostic and procedural applications performed by residents.[3] One powerful application of POC‐US is the use of lung ultrasound to diagnose causes of respiratory failure at the bedside.[4] Although lung ultrasound has been shown to have superior diagnostic accuracy to chest x‐rays,[5] limited availability of expert physicians and ultrasound equipment have presented barriers to wider application. The advent of lower cost pocket ultrasounds may present a solution given the early reports of similar efficacy to traditional devices in the assessment of left ventricular dysfunction, acute decompensated heart failure,[6] and focused assessment with sonography for trauma.[7] We assessed the feasibility and diagnostic accuracy of residents trained in lung ultrasound with a pocket device for evaluating patients with dyspnea.

MATERIALS AND METHODS

Study Design

We performed a prospective, observational study of internal medicine residents performing lung ultrasound with a pocket ultrasound from September 2012 to August 2013 at Beth Israel Medical Center, an 856‐bed teaching hospital in New York City. This study was approved by the Committee of Scientific Affairs of Beth Israel Medical Center, which waived the requirement for informed consent (institutional review board #016‐10). Ten pocket ultrasounds (Vscan; GE Vingmed Ultrasound, Horten, Norway) were acquired through an educational grant from General Electric Company. Grant sponsors were not involved in any aspect of the study.

Recruitment and Training

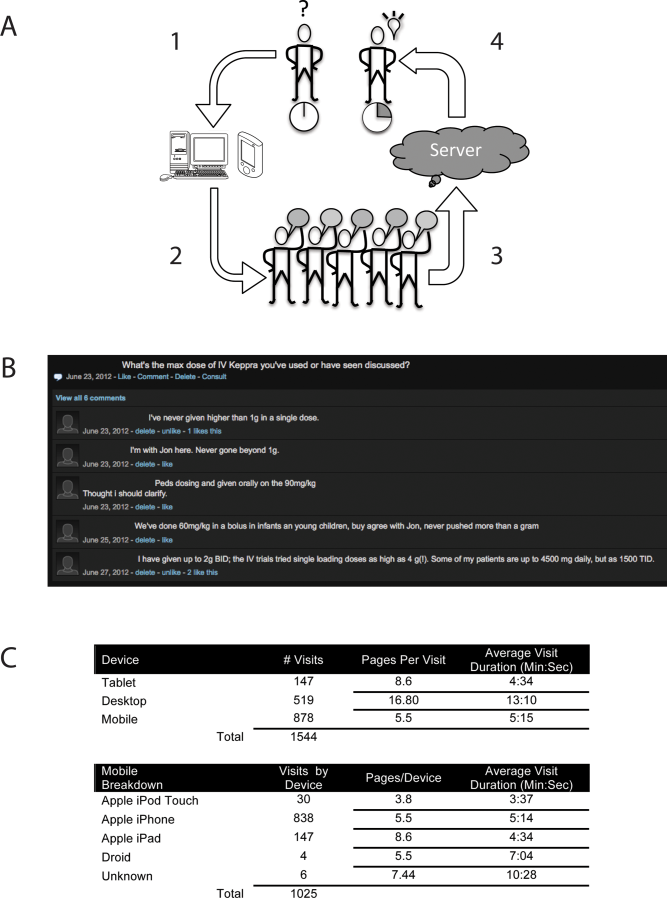

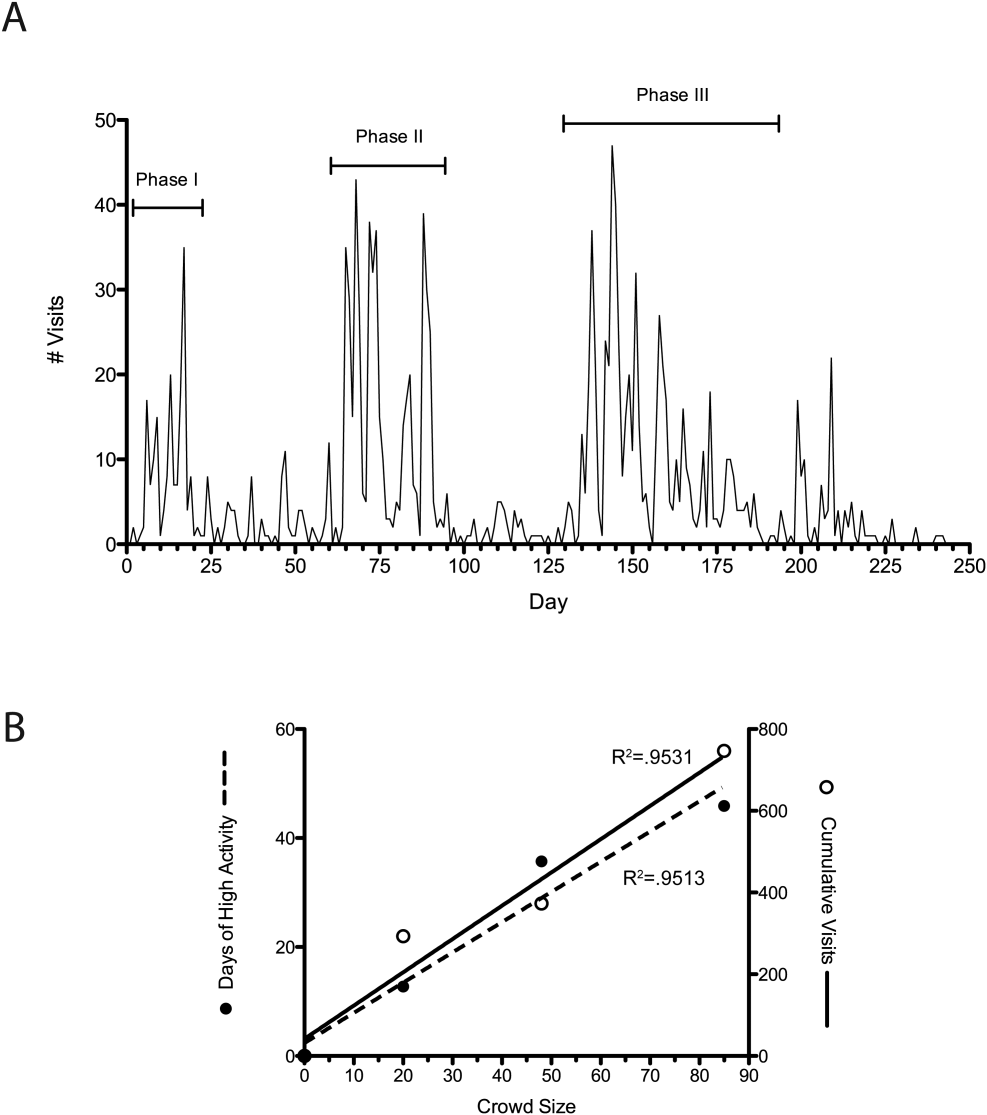

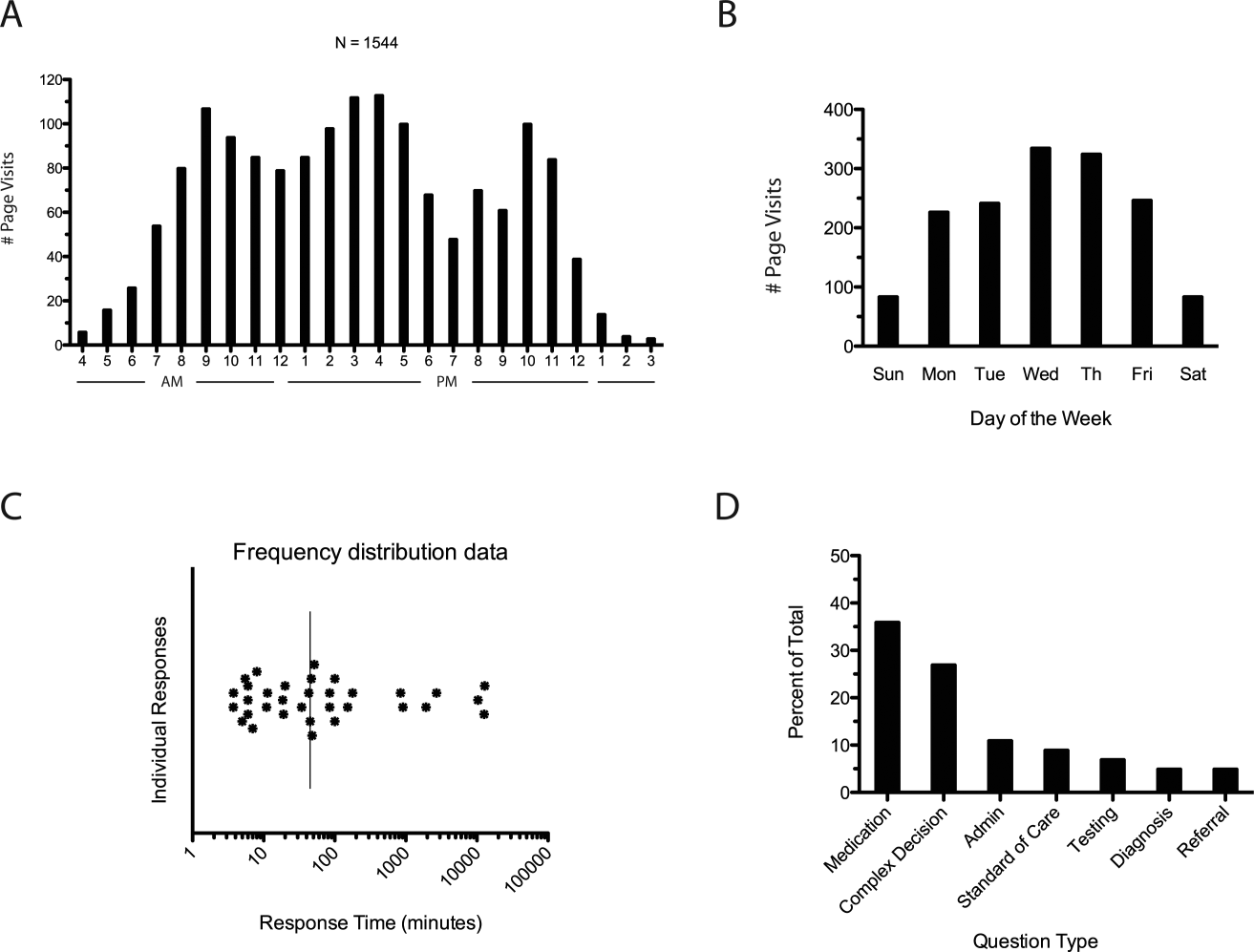

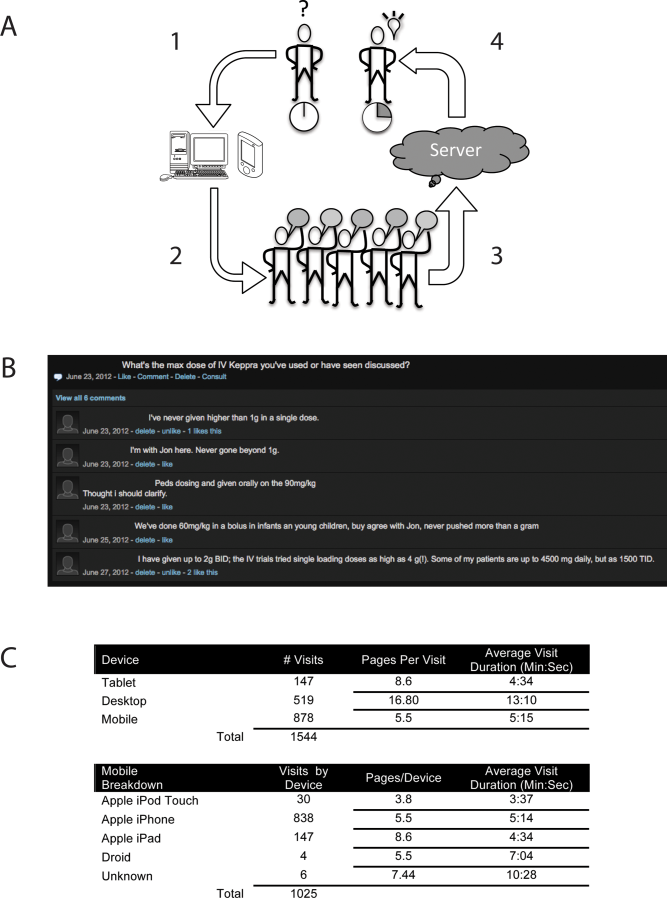

One hundred nineteen internal medicine residents were offered training on lung ultrasound in return for participating in the study. Initially, 10 residents from 3 postgraduate years with no previous lung ultrasound experience volunteered for the study and received a pocket ultrasound along with either focused or extended training. Focused and extended training groups both received 2 sessions of 90 minutes that included didactics covering image creation of the 5 main diagnostic lung ultrasound patterns and their pathological correlates. Sessions also included training in the operation of a pocket ultrasound along with bedside instruction in image acquisition using an 8‐point exam protocol (Figure 1A). All residents were required to demonstrate competency in this 8‐point protocol with proper image acquisition and interpretation of 3 lung ultrasound exams under direct supervision by an expert practitioner (P.K.). Only 5 residents completed the training due mostly to other commitments. Two extended training residents, both authors of this article, who plan to continue training in pulmonary and critical care medicine, volunteered for an additional 2‐week general critical care ultrasound elective. This elective included daily bedside supervised performance and interpretation of lung ultrasound patterns on at least 15 patients admitted during intensive care unit rounds.

Patient Selection

Patients admitted to a resident's service were considered for inclusion at their convenience if the patient reported a chief complaint of dyspnea.

Diagnostic Protocol

Upon admission, residents recorded a clinical diagnosis of dyspnea based on a standard diagnostic evaluation including complete history, physical exam, and all relevant laboratory and imaging studies, including chest x‐ray and computed tomography (CT) scans. A diagnosis of dyspnea after lung ultrasound was then recorded based on the lung ultrasound findings and integrated with all other clinical information available. Standard lung ultrasound patterns and diagnostic correlates are shown in Figure 1. Diagnoses of dyspnea were recorded as one of 7 possibilities; 1) exacerbation of chronic obstructive pulmonary disease or asthma (COPD/asthma), 2) acute pulmonary edema (APE), 3) pneumonia (PNA), 4) pulmonary embolus (PE), 5) pneumothorax (PTX), 6) pleural effusion (PLEFF), and 7) other (OTH), namely anemia, ascites, and dehydration.

Data Collection

Patient demographics, comorbidities, lung ultrasound findings, and both clinical and ultrasound diagnosis were recorded on a standardized form. A final diagnosis based on the attending physicians' diagnosis of dyspnea was determined through chart review by 3 investigators blinded to the clinical and ultrasound diagnoses. Discordant findings were resolved by consensus. Attending physicians were blinded to the lung ultrasound exam results.

Statistical Analysis

Sensitivity and specificity of the clinical and ultrasound diagnoses for focused and extended training groups were calculated for each diagnosis using final attending diagnosis as the gold standard. Causes of dyspnea were often deemed multifactorial, leading to more than 1 diagnosis recorded per patient exam. Overall diagnostic accuracy was calculated for each group using the reported clinical, ultrasound, and final diagnoses. Receiver operating curve (ROC) analysis was performed with Stata 12.1 (StataCorp, College Station, TX).

RESULTS

Five residents performed lung ultrasound on a convenience sample of 69 newly admitted patients. Patient baseline characteristics are shown in Table 1. Three residents made up the focused training group and examined 21 patients, resulting in 27 clinical diagnoses, 27 ultrasound diagnoses, and 31 final attending diagnoses. Two residents made up the extended training group and examined 48 patients, resulting in 61 clinical diagnoses, 60 ultrasound diagnoses, and 60 final attending diagnoses. Improvements in sensitivity and specificity using lung ultrasound were more pronounced for the extended training group and are shown for each diagnosis in Table 2.

Table 1. Patient Baseline Characteristics

| Characteristic | Value |
|---|---|
| Age, y, mean | 69 |
| Sex, male, % | 52.2 |
| BMI, mean, kg/m² | 25.7 |
| Comorbidities, % | |
| COPD | 43.3 |
| CHF | 23.9 |
| Hypertension | 59.4 |
| Diabetes mellitus | 29 |
| Atrial fibrillation | 18.9 |
| DVT/PE | 1.5 |
| Lung cancer | 5.9 |
| Finding on admission, % | |
| CXR available | 94 |
| Chest CT available | 22.4 |
| WBC >10.4 K/μL | 36.2 |
| BNP >400 pg/mL | 27.5 |
| Temperature >100.9°F | 6 |
| Heart rate >90 bpm | 47.8 |
| Desaturation* | 32 |

Table 2. Sensitivity and Specificity for Each Diagnosis by Training Group

Focused training group: CLINDIAG, N=27; USDIAG, N=27. Extended training group: CLINDIAG, N=61; USDIAG, N=20. CLINDIAG, clinical diagnosis; USDIAG, ultrasound diagnosis; Sens, sensitivity; Spec, specificity.

| Diagnosis | Focused CLINDIAG Sens, % | Focused CLINDIAG Spec, % | Focused USDIAG Sens, % | Focused USDIAG Spec, % | Extended CLINDIAG Sens, % | Extended CLINDIAG Spec, % | Extended USDIAG Sens, % | Extended USDIAG Spec, % |
|---|---|---|---|---|---|---|---|---|
| COPD/asthma | 60 | 96 | 60 | 96 | 55 | 96 | 91 | 96 |
| Pneumonia | 45 | 90 | 36 | 100 | 93 | 88 | 96 | 100 |
| Pulmonary edema | 100 | 85 | 100 | 86 | 89 | 96 | 89 | 100 |
| Pleural effusion | 57 | 100 | 86 | 96 | 57 | 96 | 100 | 96 |
| Other | 50 | 100 | 75 | 96 | 80 | 96 | 80 | 100 |

Overall diagnostic accuracy using lung ultrasound improved only for the extended training group (clinical 92% vs ultrasound 97%), whereas the focused training group's accuracy was essentially unchanged (clinical 87% vs ultrasound 88%).

ROC analysis demonstrated superior overall diagnostic performance of ultrasound compared with clinical diagnosis (area under the curve [AUC] for all causes 0.87 vs 0.81, P=0.01; Table 3).

Table 3. ROC Analysis of Clinical Versus Ultrasound Diagnosis

| Diagnosis | CLINDIAG AUC, N=69 | USDIAG AUC, N=69 | P Value |
|---|---|---|---|
| COPD/asthma | 0.73 | 0.85 | 0.06 |
| Pulmonary edema | 0.85 | 0.89 | 0.49 |
| Pneumonia | 0.77 | 0.88 | 0.01 |
| Pleural effusion | 0.76 | 0.96 | 0.002 |
| Other* | 0.78 | 0.69 | 0.01 |
| All causes, n=69 | 0.81 | 0.87 | 0.01 |
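As a reading aid for the AUC values, the sketch below shows how an area under the ROC curve can be obtained when a diagnosis is recorded as simply present or absent. It assumes (this is not stated in the article) that each diagnosis is treated as a binary rating, in which case the empirical ROC curve has a single operating point and the trapezoidal area reduces to the mean of sensitivity and specificity. The function name `binary_auc` and the data are hypothetical.

```python
from typing import Sequence


def binary_auc(predicted: Sequence[int], reference: Sequence[int]) -> float:
    """Trapezoidal AUC for a yes/no diagnosis (1 = present, 0 = absent).

    The ROC curve passes through (0, 0), (1 - specificity, sensitivity),
    and (1, 1); the area under it equals (sensitivity + specificity) / 2.
    """
    pairs = list(zip(predicted, reference))
    tp = sum(1 for p, r in pairs if p == 1 and r == 1)
    fn = sum(1 for p, r in pairs if p == 0 and r == 1)
    fp = sum(1 for p, r in pairs if p == 1 and r == 0)
    tn = sum(1 for p, r in pairs if p == 0 and r == 0)
    if tp + fn == 0 or tn + fp == 0:
        raise ValueError("need at least one positive and one negative reference case")
    sensitivity = tp / (tp + fn)
    false_positive_rate = fp / (fp + tn)
    # Area of the two trapezoids on either side of the operating point.
    left = 0.5 * false_positive_rate * sensitivity
    right = 0.5 * (1.0 - false_positive_rate) * (sensitivity + 1.0)
    return left + right


# Hypothetical ratings for 8 patients (not study data).
reference = [1, 1, 1, 0, 0, 0, 0, 0]
ultrasound = [1, 1, 0, 0, 1, 0, 0, 0]
auc = binary_auc(ultrasound, reference)
print(round(auc, 3))
```

Under this assumption, an AUC difference between clinical and ultrasound diagnosis reflects a change in the balance of sensitivity and specificity rather than a change across a range of thresholds.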

DISCUSSION

In this prospective, observational study of residents performing lung ultrasound on patients with dyspnea, diagnostic accuracy increased when ultrasound was incorporated, compared with a standard diagnostic approach relying on history, physical exam, blood tests, and radiography. To our knowledge, this is the first study of residents independently performing lung ultrasound with a pocket ultrasound to diagnose dyspnea. Receiver operating characteristic curve analysis shows improvements in diagnostic accuracy for causes such as PNA, pleural effusion, and COPD/asthma and demonstrates the feasibility and clinical utility of residents using pocket ultrasounds. The finding that improvements in sensitivity and specificity were larger in the extended training group highlights the need for sufficient training to demonstrate increased utility. Although a 2‐week critical care ultrasound elective may not be possible for all residents, training of an intensity somewhere between these 2 levels may be most feasible.

Challenges in diagnosing dyspnea have been well described and are attributed to a lack of accurate history combined with often insensitive and nonspecific physical exam findings, blood tests, and radiographs.[8, 9] Further, patients often present with multiple contributing causes, as was evident in this study.[10] The lack of an accurate initial diagnosis often leads to the provision of multiple, incorrect treatment regimens that may increase mortality.[11] The high accuracy of lung ultrasound in defining causes of respiratory failure suggests potential as a low‐cost solution.[12]

This study design differed from prior work in several respects. First, it included patients presenting with dyspnea to a hospital ward rather than with acute respiratory failure to an intensive care unit (ICU), suggesting diagnostic potential for lung ultrasound in a broader population of patients and settings. Second, the lung ultrasound was integrated with traditional clinical information rather than relied upon alone, a situation mimicking real‐world application of POC‐US. Third, operators were residents with limited amounts of training rather than highly trained experts. Finally, the lung ultrasound exams were performed using a pocket ultrasound with imaging capability inferior to that of larger, more established ultrasound devices. Despite these constraints, the utility of lung ultrasound was still evident, particularly in the diagnosis or exclusion of pneumonia and PLEFF.

Limitations include reliance on a small cohort of highly motivated residents with an interest in pulmonary and critical care, 2 of whom are authors of this article, making reproducibility a concern. Although convenience sampling may more closely mimic real‐world practices of POC‐US, a bias toward less challenging patients is possible and may limit conclusions regarding utility. Over‐reading and feedback were not provided to residents to improve their performance of lung ultrasound exams. Also, because chest CT is considered the gold standard in most studies examining the diagnostic accuracy of lung ultrasound, the fact that all residents were aware of these data may lead to an underestimate of the potential impact of integrating lung ultrasound with all clinical findings. Finally, the high cost of pocket ultrasounds is a barrier to general use. Recent studies on the significant cost savings associated with POC‐US make a further analysis of cost‐benefit ratios mandatory before broad use can be recommended.[13]

CONCLUSIONS

Residents participating in lung ultrasound training with a pocket ultrasound device showed improved diagnostic accuracy in their evaluation of patients with dyspnea. Those who received extended training had greater improvements across all causes of dyspnea. Training residents to apply lung ultrasound in non‐ICU settings appears to be feasible. Further study with a larger cohort of internal medicine residents, and perhaps with a training duration between those of the focused and extended training groups, is warranted.

Acknowledgements

The authors thank Dr. David Lucido for guidance on statistical analysis and Stephane Gatesoupe and the Vscan team at General Electric.

Disclosure: Ten Vscan pocket ultrasounds (General Electric) were provided free of cost solely for the purpose of conducting the clinical research study. This represented General Electric's sole participation in any stage of the research. The authors have no conflicts of interest to disclose.
