Decreased hospital LOS not associated with increase in 30-day readmission rates

Clinical question

Does decreased length of stay result in increased risk of 30-day readmission for hospitalized patients with acute medical illness?

Bottom line

Reduction in length of stay (LOS) is not associated with increased risk of 30-day readmission for patients with acute medical illness. Although this may suggest that decreased LOS does not affect quality of care, this finding may also be due to improved efficiencies in a previously inefficient Veterans Affairs (VA) system leading to earlier discharges and increased efforts at bettering transitions of care. LOE = 2b

Reference

Study design

Cohort (retrospective)

Funding source

Government

Allocation

Uncertain

Setting

Inpatient (any location)

Synopsis

To determine whether reductions in LOS adversely affect 30-day readmission rates, these investigators used a national VA administrative database to identify all acute medical admissions to VA hospitals from 1997 to 2010. Patients who died, were transferred to another acute care facility, or whose LOS was longer than 30 days were excluded from consideration. Readmissions were defined as those that were linked to the index admission and occurred within 30 days of discharge. The cohort consisted of more than 4 million admissions and was further subdivided into 5 high-volume diagnoses: heart failure, chronic obstructive pulmonary disease (COPD), acute myocardial infarction (AMI), community-acquired pneumonia, and gastrointestinal bleed. After adjusting for hospital and patient characteristics, LOS decreased during the 14-year period from 5.44 days to 3.98 days, and 30-day readmission rates decreased from 16.5% to 13.8%. Among the 5 high-volume conditions, LOS decreased the most for AMI (by almost 3 days) while readmission rates decreased the most for COPD (3.3%). Further analysis of all medical conditions showed that each additional day of stay was associated with a 3% increased rate of readmission. This was likely due to unmeasured severity of illness that affected both LOS and readmission. Of note, however, hospitals that had a mean LOS lower than the average LOS across all hospitals had higher readmission rates (6% increase for each day lower than the average). Despite this, the overall readmission rate decreased over time as LOS decreased. All-cause mortality at 30 days and 90 days also improved over time.
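
The adjusted figures in the synopsis can be restated as absolute and relative changes. The short Python sketch below does only that arithmetic on the four numbers reported above; the function name `change` is an illustrative choice and is not taken from the study.

```python
def change(start: float, end: float) -> tuple[float, float]:
    """Return (absolute change, relative change as a fraction of the start value)."""
    absolute = end - start
    return absolute, absolute / start


# Adjusted values reported in the synopsis (1997 vs 2010).
los_abs, los_rel = change(5.44, 3.98)            # length of stay, in days
readmit_abs, readmit_rel = change(16.5, 13.8)    # 30-day readmission rate, in percent

print(f"LOS: {los_abs:+.2f} days ({los_rel:+.1%})")
print(f"30-day readmission: {readmit_abs:+.1f} percentage points ({readmit_rel:+.1%})")
```

In other words, LOS fell by about 1.46 days (roughly 27% of its starting value), while the readmission rate fell by 2.7 percentage points (roughly 16% of its starting value).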

Femoral lines not associated with increased risk of bloodstream infections

Clinical question

Do central venous catheters in the femoral vein increase the risk of catheter-related bloodstream infections as compared with those placed in the subclavian or internal jugular veins?

Bottom line

The risk of catheter-related bloodstream infections (CRBIs) from nontunneled central venous catheters has decreased in the last decade. This review suggests that there is no difference in risk of CRBIs when comparing catheters placed in femoral sites with those placed in subclavian or internal jugular (IJ) sites, especially when looking at data from more recent studies. LOE = 1a

Reference

Study design

Meta-analysis (other)

Funding source

Unknown/not stated

Allocation

Uncertain

Setting

Inpatient (any location)

Synopsis

Current guidelines from the Centers for Disease Control and Prevention recommend avoiding the femoral vein for central access in adult patients because of a potentially higher risk of CRBI. Two independent investigators searched MEDLINE, EMBASE, the Cochrane Database of Systematic Reviews, and bibliographies of relevant articles, and also performed an Internet search, to find randomized controlled trials (RCTs) and cohort studies that examined the risk of CRBIs due to nontunneled central venous catheters placed in the femoral site as compared with those placed in the subclavian or IJ sites. Two RCTs, 8 cohort studies, and data from a Welsh infection control surveillance Web site were selected. Two authors independently extracted data from the selected studies. No formal quality assessment of the studies was performed. Data from the RCTs alone showed no difference in CRBIs between femoral sites and subclavian or IJ sites. Data from all the studies that compared femoral sites to subclavian sites showed no significant difference in the risk of CRBIs. For comparisons of femoral and IJ sites, the overall data favored the IJ site (relative risk of infection with femoral site placement = 1.90; 95% CI, 1.21-2.97; P = .005). However, 2 of the 9 included studies in this analysis were "statistical outliers," possibly due to unique circumstances in the hospitals in which they were performed, thus limiting their generalizability. When these 2 studies were removed from the analysis, there was no significant difference between femoral and IJ sites. For both comparisons (femoral vs subclavian and femoral vs IJ), there was an interaction between risk of infection and year of study publication, with earlier studies noting a greater risk of infection with femoral sites. Overall, these data confirm a decrease in incidence of CRBIs by more than 50% in the last 10 years. Additionally, the meta-analysis found no difference in the risk of deep venous thrombosis with femoral versus subclavian and IJ sites.
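
The relative risk, confidence interval, and P value quoted for the femoral versus IJ comparison can be checked against one another. The Python sketch below recovers an approximate two-sided P value from a ratio estimate and its 95% CI. It assumes the interval was computed on the log scale with a normal approximation, which is a common convention but is not stated in this summary, and the function name `p_from_ratio_ci` is hypothetical.

```python
import math


def p_from_ratio_ci(estimate: float, lower: float, upper: float,
                    z_crit: float = 1.96) -> tuple[float, float]:
    """Approximate (z, two-sided P) for a ratio measure from its 95% CI.

    Assumes the CI is symmetric on the log scale (normal approximation).
    """
    # Standard error of log(ratio), recovered from the width of the CI.
    se = (math.log(upper) - math.log(lower)) / (2 * z_crit)
    z = math.log(estimate) / se
    # Two-sided tail probability of the standard normal distribution.
    p = math.erfc(abs(z) / math.sqrt(2))
    return z, p


# Values reported in the synopsis for femoral vs IJ placement.
z, p = p_from_ratio_ci(1.90, 1.21, 2.97)
print(f"z = {z:.2f}, P = {p:.3f}")
```

Under these assumptions the recovered P value rounds to .005, in line with the reported figure.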

Norovirus now top cause of acute gastroenteritis in young U.S. children

Norovirus is now the leading cause of acute gastroenteritis requiring medical care among U.S. children younger than 5 years of age, according to a report published online March 20 in the New England Journal of Medicine.

Now that rotavirus vaccines have dramatically reduced the number of acute gastroenteritis cases attributable to that organism, norovirus infections have taken over the lead in causing the disorder in the young U.S. pediatric population. Norovirus is responsible for an estimated 1 million health care visits each year for this age group, at an estimated cost approaching $300 million, said Daniel C. Payne, Ph.D., of the National Center for Immunization and Respiratory Diseases, Centers for Disease Control and Prevention, and his associates.

"According to our estimation, by their fifth birthday, 1 in 278 U.S. children are hospitalized for norovirus infection, 1 in 14 are seen in the emergency department, and 1 in 6 are seen by outpatient care providers," the investigators noted.

They studied the epidemiology of the infection because now that candidate norovirus vaccines are in development, "there is a need to directly measure the pediatric health care burden of norovirus-associated gastroenteritis."

Dr. Payne and his colleagues analyzed data from the New Vaccine Surveillance Network, which collects information on the medical care of children residing near Rochester, N.Y.; Nashville, Tenn.; and Cincinnati – a catchment population exceeding 141,000 children under age 5.

The researchers prospectively assessed cases of acute gastroenteritis treated at hospitals, emergency departments, and outpatient clinics during two successive 12-month surveillance periods between October 2008 and September 2010. There were 1,077 cases the first year and 820 the second year; the data from these were compared with data from 806 age-matched children attending well-child visits, who served as a control group.

The disease burden of norovirus infection was "consistently high" during both years, accounting for 20%-22% of cases of acute gastroenteritis. Norovirus was detected in 4% of healthy controls in 2009. The overall rate of medical attention for the infection was highest – 47% – among children aged 6-18 months, Dr. Payne and his associates reported (N. Engl. J. Med. 2013;368:1121-30).

This study was supported by the CDC. Dr. Payne reported that he did not have any conflicts of interest relevant to this study. His coauthors reported ties to GlaxoSmithKline, Merck, and Luminex Molecular Diagnostics.

FROM THE NEW ENGLAND JOURNAL OF MEDICINE

Major Finding: By the time U.S. children turn 5, 1 in 278 is admitted to the hospital for a norovirus infection, 1 in 14 is seen in an emergency department, and 1 in 6 is seen by an outpatient health care provider, at a cost of $273 million annually.

Data Source: A prospective, population-based surveillance study of norovirus infections in children under age 5.

Disclosures: This study was supported by the CDC. Dr. Payne said that he did not have any conflicts of interest relevant to this study. His coauthors reported ties to GlaxoSmithKline, Merck, and Luminex Molecular Diagnostics.

FDA Recommends New Opioids Research Prove Abuse-Deterrent Properties

Inappropriate use of prescription opioids is a major public health challenge, prompting the U.S. Food and Drug Administration (FDA) to issue a draft guidance document aimed at helping industry create new formulations of opioids with abuse-deterrent properties.

Released in January, “Guidance for Industry: Abuse-Deterrent Opioids—Evaluation and Labeling” provides recommendations for conducting studies to prove that a particular formulation contains abuse-deterrent properties. It also explains how the FDA will review the results and determine which labeling claims to approve.

This announcement is “one component of our larger effort to prevent prescription drug abuse and misuse, while ensuring that patients in pain continue to have access to these important medicines,” Douglas Throckmorton, MD, deputy director for regulatory programs in the FDA’s Center for Drug Evaluation and Research, said during a teleconference.

According to the FDA guidance, opioid analgesics can be abused in a variety of ways:

- Swallowed whole;

- Crushed and swallowed;

- Crushed and snorted;

- Crushed and smoked; or

- Crushed, dissolved, and injected.

Because the science of abuse deterrence is relatively new, and the analytical, clinical, and statistical methods for evaluating formulation technologies are still evolving, the FDA plans to take a flexible and adaptive approach.

“Physicians should care about this because the government is regulating prescribing practices more directly than in the past, especially with pain drugs,” says Daniel Carpenter, PhD, a Harvard University government professor and author on FDA pharmaceutical regulation. “The FDA and federal agencies are going to be leaning more heavily upon physicians.”

To date, the majority of current abuse-deterrent technologies have not been effective in preventing the most widespread type of abuse—ingesting a number of pills or tablets to reach a state of euphoria.

Science points toward ways that formulations can help thwart abuse. For instance, adding an opioid antagonist can hinder, limit, or defeat euphoria. An antagonist can be sequestered and released only upon the product’s manipulation. In one such scenario, the substance acting as an antagonist could be clinically inactive when swallowed, but then would become active if the product is crushed and injected or snorted.

“The guidance describes advice for the development of abuse-deterrent opioids and does not describe practice guidelines,” says Christopher Kelly, an FDA spokesman. However, he adds, “[FDA] urges all prescribers of extended-release and long-acting opioids to participate in the training under the Risk Evaluation and Mitigation Strategy (REMS).” The first REMS-compliant training is expected to become available by March 1.

Such a strategy is intended to manage known or potential serious risks associated with a drug product. The FDA requires it to ensure that the benefits of a drug outweigh its risks.

Manufacturers of opioid analgesics have worked with the FDA to produce materials for the REMS program that would inform healthcare professionals about safe prescribing. Continuing-education providers also are designing accredited training. (For more information, listen to this NIH podcast about training to help providers prescribe painkillers properly.)

Prescribers are advised to complete a REMS-compliant program through an accredited continuing-education provider for their discipline. They should discuss the safe use, serious risks, storage, and disposal of opioids with patients and caregivers each time they prescribe these medicines. It’s also essential to stress the importance of reading the medication guide that patients will receive from the pharmacist when the drug is dispensed.

Whether the FDA’s industry guidance for the development of abuse-deterrent opioids will make a difference remains to be seen, according to Carpenter. The addictive potential of opioids has created “a kind of public health epidemic,” he says. “It’s not an infectious epidemic in the sense of the flu, but it’s socially and behaviorally infectious and very destructive.”

Creating better tamper-resistant drugs could impede someone from “taking a longer-acting version and breaking it down into a much more toxic soup for other purposes,” Carpenter says. However, he concedes that such formulations will not stop someone from swallowing too many pills, which is a very common form of pharmaceutical abuse.

The FDA is accepting public comment on the draft guidance, while encouraging further scientific and clinical research to advance the development and assessment of abuse-deterrent technologies.

Susan Kreimer is a freelance writer based in New York.

Inappropriate use of prescription opioids is a major public health challenge, prompting the U.S. Food and Drug Administration (FDA) to issue a draft guidance document aimed at helping industry create new formulations of opioids with abuse-deterrent properties.

Released in January, “Guidance for Industry: Abuse-Deterrent Opioids—Evaluation and Labeling” provides recommendations for conducting studies to prove that a particular formulation contains abuse-deterrent properties. It also explains how the FDA will review the results and determine which labeling claims to approve.

This announcement is “one component of our larger effort to prevent prescription drug abuse and misuse, while ensuring that patients in pain continue to have access to these important medicines,” Douglas Throckmorton, MD, deputy director for regulatory programs in the FDA’s Center for Drug Evaluation and Research, said during a teleconference.

According to the FDA guidance, opioid analgesics can be abused in a variety of ways:

- Swallowed whole;

- Crushed and swallowed;

- Crushed and snorted;

- Crushed and smoked; or

- Crushed, dissolved, and injected.

With the science of abuse deterrence being relatively new, the FDA plans to take a flexible and adaptive approach. That’s because the analytical, clinical, and statistical methods for evaluating formulation technologies are still evolving.

“Physicians should care about this because the government is regulating prescribing practices more directly than in the past, especially with pain drugs,” says Daniel Carpenter, PhD, a Harvard University government professor and author on FDA pharmaceutical regulation. “The FDA and federal agencies are going to be leaning more heavily upon physicians.”

To date, the majority of current abuse-deterrent technologies have not been effective in preventing the most widespread type of abuse—ingesting a number of pills or tablets to reach a state of euphoria.

—Daniel Carpenter, PhD, Harvard University government professor and author on FDA pharmaceutical regulation

Science points toward ways that formulations can help thwart abuse. For instance, adding an opioid antagonist can hinder, limit, or defeat euphoria. An antagonist can be sequestered and released only upon the product’s manipulation. In one such scenario, the substance acting as an antagonist could be clinically inactive when swallowed, but then would become active if the product is crushed and injected or snorted.

“The guidance describes advice for the development of abuse-deterrent opioids and does not describe practice guidelines,” says Christopher Kelly, an FDA spokesman. However, he adds, “[FDA] urges all prescribers of extended-release and long-acting opioids to participate in the training under the Risk Evaluation and Mitigation Strategy (REMS).” The first REMS-compliant training is expected to become available by March 1.

Such a strategy is intended to manage known or potential serious risks associated with a drug product. The FDA requires it to ensure that the benefits of a drug outweigh its risks.

Manufacturers of opioid analgesics have worked with the FDA to produce materials for the REMS program that would inform healthcare professionals about safe prescribing. Continuing-education providers also are designing accredited training. (For more information, listen to this NIH podcast about training to help providers prescribe painkillers properly.)

Prescribers are advised to complete a REMS-compliant program through an accredited continuing-education provider for their discipline. They should discuss the safe use, serious risks, storage, and disposal of opioids with patients and caregivers each time they prescribe these medicines. It’s also essential to stress the importance of reading the medication guide that patients will receive from the pharmacist when the drug is dispensed.

Whether the FDA’s industry guidance for the development of abuse-deterrent opioids will make a difference remains to be seen, according to Carpenter. The addictive potential of opioids has created “a kind of public health epidemic,” he says. “It’s not an infectious epidemic in the sense of the flu, but it’s socially and behaviorally infectious and very destructive.”

Creating better tamper-resistant drugs could impede someone from “taking a longer-acting version and breaking it down into a much more toxic soup for other purposes,” Carpenter says. However, he concedes that such formulations won’t stop someone from swallowing one or more pills too many, which remains a very common form of pharmaceutical abuse.

The FDA is accepting public comment on the draft guidance, while encouraging further scientific and clinical research to advance the development and assessment of abuse-deterrent technologies.

Susan Kreimer is a freelance writer based in New York.

Old gout drug learns new cardiac tricks

SAN FRANCISCO – The venerable antihyperuricemic agent allopurinol has shown early promise for two novel cardiovascular applications: prevention of atrial fibrillation in the setting of heart failure and reduction of left ventricular hypertrophy in patients with type 2 diabetes.

Allopurinol is a xanthine oxidase inhibitor and antigout drug. The rationale for the drug’s use in reducing the incidence of atrial fibrillation in patients with heart failure lies in the observation that serum uric acid has emerged as an independent marker of mortality and a predictor of new-onset atrial fibrillation in heart failure. Xanthine oxidase is not only a source of reactive oxygen species that adversely affect myocardial function, but it also catalyzes the conversion of xanthine to uric acid, Dr. Fernando E. Hernandez explained at the annual meeting of the American College of Cardiology.

He presented a retrospective cohort study involving 603 patients enrolled in the Miami Veterans Affairs heart failure clinic. The 103 on allopurinol, and the 500 who were not, matched up well in terms of baseline characteristics including age, prevalence of coronary artery disease, median left ventricular ejection fraction, left atrial size, and use of guideline-recommended ACE inhibitors and beta-blockers.

During up to 5 years of follow-up, the incidence of new-onset atrial fibrillation was 184 cases/1,000 person-years in the allopurinol users compared with 252/1,000 person-years in controls. In a Cox proportional hazards analysis adjusted for small differences in potential confounders, the use of allopurinol was independently associated with a 47% reduction in the risk of atrial fibrillation (P = .04), reported Dr. Hernandez of the University of Miami.
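For readers comparing the two sets of figures, the quoted incidence rates can be turned into a crude, unadjusted comparison. The short Python sketch below is only arithmetic on the numbers reported above, not a reconstruction of the investigators' Cox model; the variable names are illustrative. The crude reduction it prints is not expected to match the adjusted 47% figure, because the adjusted estimate accounts for differences in potential confounders.

```python
# Crude (unadjusted) comparison of the incidence rates quoted in the article.
# Both rates are new-onset atrial fibrillation cases per 1,000 person-years.
rate_allopurinol = 184.0
rate_control = 252.0

# Ratio of the two rates, and the relative reduction it implies.
crude_rate_ratio = rate_allopurinol / rate_control
crude_relative_reduction = 1.0 - crude_rate_ratio

# Absolute difference between the two rates.
absolute_difference = rate_control - rate_allopurinol

print(f"crude rate ratio: {crude_rate_ratio:.2f}")
print(f"crude relative reduction: {crude_relative_reduction:.0%}")
print(f"absolute difference: {absolute_difference:.0f} per 1,000 person-years")
```

The gap between this crude figure and the adjusted one is a reminder that the adjusted estimate depends on the model and the covariates chosen, which is one reason confirmation in randomized trials matters.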

This intriguing finding needs to be confirmed in randomized prospective trials, he noted.

In a separate presentation, Dr. Benjamin R. Szwejkowski noted that left ventricular hypertrophy (LVH) is common in patients with type 2 diabetes and contributes to their elevated risk of cardiovascular morbidity and mortality.

Based on their hypothesis that LVH is related in part to oxidative stress, and that reducing that stress via xanthine oxidase inhibition with allopurinol can cause LVH regression, the investigators conducted a randomized, double-blind, placebo-controlled clinical trial. Sixty-six patients with type 2 diabetes and echocardiographic evidence of LVH were randomized to allopurinol at 600 mg/day or placebo for 9 months.

The primary study endpoint was change in left ventricular mass between baseline and 9 months, as measured by cardiac MRI. Allopurinol resulted in a significant mean 2.65-g reduction in LV mass, while in the control group LV mass increased by 1.21 g. Similarly, LV mass indexed to body surface area fell significantly by 1.32 g/m² in the allopurinol group while increasing by 0.65 g/m² in the placebo arm, reported Dr. Szwejkowski of the University of Dundee (Scotland).
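The reported changes can be combined into a simple net difference between the two arms. The Python sketch below just subtracts the mean changes quoted above; it is not the trial's own between-group estimate, which would come with a confidence interval not given here, and the variable names are illustrative.

```python
# Mean change from baseline to 9 months, as quoted in the article.
# Negative values are reductions; positive values are increases.
lv_mass_change_allopurinol_g = -2.65
lv_mass_change_placebo_g = 1.21

lv_mass_index_change_allopurinol = -1.32  # grams per square meter of body surface area
lv_mass_index_change_placebo = 0.65       # grams per square meter of body surface area

# Net difference between arms (allopurinol minus placebo).
net_lv_mass_difference_g = lv_mass_change_allopurinol_g - lv_mass_change_placebo_g
net_lv_mass_index_difference = (
    lv_mass_index_change_allopurinol - lv_mass_index_change_placebo
)

print(f"net LV mass difference: {net_lv_mass_difference_g:.2f} g")
print(f"net LV mass index difference: {net_lv_mass_index_difference:.2f} g per square meter")
```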

"Allopurinol may be a useful therapy to reduce cardiovascular risk in type 2 diabetic patients with LVH," according to the cardiologist.

Flow-mediated dilatation didn’t change significantly over time in either study group.

Dr. Szwejkowski and Dr. Hernandez reported having no relevant financial conflicts.

AT ACC 13

Major finding: At the end of 5 years of allopurinol use, the incidence of new-onset atrial fibrillation was 184 cases/1,000 person-years in the allopurinol users compared with 252/1,000 person-years in controls.

Data source: A retrospective cohort study involving 603 patients with heart failure.

Disclosures: The study presenters reported having no relevant financial conflicts.

New concussion guidelines stress individualized approach

Any athlete with a possible concussion should be immediately removed from play pending an evaluation by a licensed health care provider trained in assessing concussions and traumatic brain injury, according to a new guideline from the American Academy of Neurology.

The guideline for evaluating and managing athletes with concussion was published online in the journal Neurology on March 18 (doi:10.1212/WNL.0b013e31828d57dd) in conjunction with the annual meeting of the AAN. The guideline replaces the Academy’s 1997 recommendations, which stressed using a grading system to try to predict concussion outcomes.

The new guideline takes a more individualized and conservative approach, especially for younger athletes. The new approach comes as many states have enacted legislation regulating when young athletes can return to play following a concussion.

"If in doubt, sit it out," Dr. Jeffrey S. Kutcher, coauthor of the guideline and a neurologist at the University of Michigan in Ann Arbor, said in a statement. "Being seen by a trained professional is extremely important after a concussion. If headaches or other symptoms return with the start of exercise, stop the activity and consult a doctor. You only get one brain; treat it well."

The new guideline calls for athletes to stay off the field until they are asymptomatic off medication. High school athletes and younger players with a concussion should be managed more conservatively since they take longer to recover than older athletes, according to the AAN.

But there is not enough evidence to support complete rest after a concussion. Activities that do not worsen symptoms and don’t pose a risk of another concussion can be part of the management of the injury, according to the guideline.

"We’ve moved away from the concussion grading systems we first established in 1997 and are now recommending concussion and return to play be assessed in each athlete individually," Dr. Christopher C. Giza, the co–lead guideline author and a neurologist at Mattel Children’s Hospital at the University of California, Los Angeles, said in a statement. "There is no set timeline for safe return to play."

The AAN expert panel recommends that sideline providers use symptom checklists such as the Standardized Assessment of Concussion to help identify suspected concussion and that the scores be shared with the physicians involved in the athletes’ care off the field. But these checklists should not be the only tool used in making a diagnosis, according to the guidelines. Also, the checklist scores may be more useful if they are compared against preinjury individual scores, especially in younger athletes and those with prior concussions.

CT imaging should not be used to diagnose a suspected sport-related concussion, according to the guideline. But imaging might be used to rule out more serious traumatic brain injuries, such as intracranial hemorrhage in athletes with a suspected concussion who also have a loss of consciousness, posttraumatic amnesia, persistently altered mental status, focal neurologic deficit, evidence of skull fracture, or signs of clinical deterioration.

Athletes are at greater risk of concussion if they have a history of concussion. The first 10 days after a concussion pose the greatest risk for a repeat injury.

The AAN advises physicians to be on the lookout for ongoing symptoms that are linked to a longer recovery, such as continued headache or fogginess. Athletes with a history of concussions and younger players also tend to have a longer recovery.

The guideline also includes level C recommendations stating that health care providers "might" develop individualized graded plans for returning to physical and cognitive activity. They might also provide cognitive restructuring counseling in an effort to shorten the duration of symptoms and the likelihood of developing chronic post-concussion syndrome, according to the guideline.

The guideline also included a number of recommendations on areas for future research, including studies of pre–high school age athletes to determine the natural history of concussion and recovery time for this age group, as well as the best assessment tools. The expert panel also called for clinical trials of different postconcussion management strategies and return-to-play protocols.

The guidelines were developed by a multidisciplinary expert committee that included representatives from neurology, athletic training, neuropsychology, epidemiology and biostatistics, neurosurgery, physical medicine and rehabilitation, and sports medicine. Many of the authors reported serving as consultants for professional sports associations, receiving honoraria and funding for travel for lectures on sports concussion, receiving research support from various foundations and organizations, and providing expert testimony in legal cases involving traumatic brain injury or concussion.

One of the most important statements in the new guideline is that providers should not rely on a single diagnostic test when evaluating an athlete, said Dr. Barry Jordan, the assistant medical director and attending neurologist at the Burke Rehabilitation Hospital in White Plains, N.Y. Dr. Jordan, who is an expert on sports concussions, said he’s seen too many providers using a single computerized screening tool to assess whether an athlete is well enough to return to play.

The new guideline calls on providers to combine screening checklists with clinical findings when making the determination about whether an athlete is well enough to return to the field. Dr. Jordan said this comprehensive approach is the way to go. And physicians who are knowledgeable about concussions must be involved with that evaluation, he said.

The new guideline is an important update reflecting the movement away from grading concussions to a more individualized approach. "You can't grade the severity until the concussion is over," he said.

Dr. Jordan said the AAN guideline is "clear and easy to follow" and will result in better care if followed.

Dr. Barry Jordan is the director of the Brain Injury Program at Burke Rehabilitation Hospital in White Plains, N.Y. He works with several sports organizations including the New York State Athletic Commission, U.S.A. Boxing, and the National Football League Players Association. He also writes a bimonthly column for Clinical Neurology News called “On the Sidelines.”


The AAN advises physicians to be on the lookout for ongoing symptoms that are linked to a longer recovery, such as continued headache or fogginess. Athletes with a history of concussions and younger players also tend to have a longer recovery.

The guideline also includes level C recommendations stating that health care providers "might" develop individualized graded plans for returning to physical and cognitive activity. They might also provide cognitive restructuring counseling in an effort to shorten the duration of symptoms and reduce the likelihood of developing chronic post-concussion syndrome, according to the guideline.

The guideline also included a number of recommendations on areas for future research, including studies of pre–high school age athletes to determine the natural history of concussion and recovery time for this age group, as well as the best assessment tools. The expert panel also called for clinical trials of different postconcussion management strategies and return-to-play protocols.

The guidelines were developed by a multidisciplinary expert committee that included representatives from neurology, athletic training, neuropsychology, epidemiology and biostatistics, neurosurgery, physical medicine and rehabilitation, and sports medicine. Many of the authors reported serving as consultants for professional sports associations, receiving honoraria and funding for travel for lectures on sports concussion, receiving research support from various foundations and organizations, and providing expert testimony in legal cases involving traumatic brain injury or concussion.

FROM NEUROLOGY

Sleep in Hospitalized Adults

Lack of sleep is a common problem in hospitalized patients and is associated with poorer health outcomes, especially in older patients.[1, 2, 3] Prior studies highlight a multitude of factors that can result in sleep loss in the hospital,[3, 4, 5, 6] and noise is 1 of the most common causes of sleep disruption.[7, 8, 9]

In addition to external factors, such as hospital noise, there may be inherent characteristics that predispose certain patients to greater sleep loss when hospitalized. One such measure is the construct of perceived control or the psychological measure of how much individuals expect themselves to be capable of bringing about desired outcomes.[10] Among older patients, low perceived control is associated with increased rates of physician visits, hospitalizations, and death.[11, 12] In contrast, patients who feel more in control of their environment may experience positive health benefits.[13]

Yet, when patients are placed in a hospital setting, they experience a significant reduction in control over their environment along with an increase in dependency on medical staff and therapies.[14, 15] For example, hospitalized patients are restricted in their personal decisions, such as what clothes they can wear and what they can eat and are not in charge of their own schedules, including their sleep time.

Although prior studies suggest that perceived control over sleep is related to actual sleep among community‐dwelling adults,[16, 17] no study has examined this relationship in hospitalized adults. Therefore, the aim of our study was to examine the possible association between perceived control, noise levels, and sleep in hospitalized middle‐aged and older patients.

METHODS

Study Design

We conducted a prospective cohort study of subjects recruited from a large ongoing study of admitted patients at the University of Chicago inpatient general medicine service.[18] Because we were interested in middle‐aged and older adults who are most sensitive to sleep disruptions, patients who were age 50 years and over, ambulatory, and living in the community were eligible for the study.[19] Exclusion criteria were cognitive impairment (telephone version of the Mini‐Mental State Exam <17 out of 22), preexisting sleeping disorders identified via patient charts, such as obstructive sleep apnea and narcolepsy, transfer from the intensive care unit (ICU), and admission to the hospital more than 72 hours prior to enrollment.[20] These inclusion and exclusion criteria were selected to identify a patient population with minimal sleep disturbances at baseline. Patients under isolation were excluded because they are not visited as frequently by the healthcare team.[21, 22] Most general medicine rooms were double occupancy but efforts were made to make patient rooms single when possible or required (ie, isolation for infection control). The study was approved by the University of Chicago Institutional Review Board.

Subjective Data Collection

Baseline levels of perceived control over sleep, or the amount of control patients believe they have over their sleep, were assessed using 2 different scales. The first tool was the 8‐item Sleep Locus of Control (SLOC) scale,[17] which ranges from 8 to 48, with higher values corresponding to a greater internal locus of control over sleep. An internal sleep locus of control indicates that patients believe they are primarily responsible for their own sleep, as opposed to an external locus of control, which indicates a belief that good sleep is due to luck or chance. For example, patients were asked how strongly they agree or disagree with statements such as "If I take care of myself, I can avoid insomnia" and "People who never get insomnia are just plain lucky" (see Supporting Information, Appendix 2, in the online version of this article). The second tool was the 9‐item Sleep Self‐Efficacy (SSE) scale,[23] which ranges from 9 to 45, with higher values corresponding to greater confidence patients have in their ability to sleep. One of the items asks, "How confident are you that you can lie in bed feeling physically relaxed?" (see Supporting Information, Appendix 1, in the online version of this article). Both instruments have been validated in an outpatient setting.[23] These surveys were given immediately on enrollment in the study to measure baseline perceived control.

Baseline sleep habits were also collected on enrollment using the Epworth Sleepiness Scale,[24, 25] a standard validated survey that assesses excess daytime sleepiness in various common situations. For each day in the hospital, patients were asked to report in‐hospital sleep quality using the Karolinska Sleep Log.[26] The Karolinska Sleep Quality Index (KSQI) is calculated from 4 items on the Karolinska Sleep Log (sleep quality, sleep restlessness, slept throughout the night, ease of falling asleep). The questions are on a 5‐point scale and the 4 items are averaged for a final score out of 5 with a higher number indicating better subjective sleep quality. The item "How much was your sleep disturbed by noise?" on the Karolinska Sleep Log was used to assess the degree to which noise was a disruptor of sleep. This question was also on a 5‐point scale with higher scores indicating greater disruptiveness of noise. Patients were also asked how disruptive noise from roommates was on a nightly basis using this same scale.
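As a minimal sketch of the scoring described above, the KSQI can be computed as the mean of the 4 item responses. The item keys, the 1 to 5 coding, and the assumption that every item is already oriented so that a higher value means better sleep are illustrative choices and are not taken from the Karolinska Sleep Log itself.

```python
# Hypothetical item keys; the study does not specify variable names.
KSQI_ITEMS = (
    "sleep_quality",
    "sleep_restlessness",
    "slept_throughout_night",
    "ease_of_falling_asleep",
)


def ksqi(responses):
    """Average the 4 Karolinska Sleep Log items into one score out of 5."""
    scores = [responses[item] for item in KSQI_ITEMS]
    for score in scores:
        # Assumption: each item is coded 1 to 5, with higher meaning better sleep.
        if not 1 <= score <= 5:
            raise ValueError("each item is assumed to be coded 1 to 5")
    return sum(scores) / len(scores)


# Made-up responses for one patient night.
example_night = {
    "sleep_quality": 4,
    "sleep_restlessness": 3,
    "slept_throughout_night": 5,
    "ease_of_falling_asleep": 4,
}
print(ksqi(example_night))  # 4.0
```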

Objective Data Collection

Wrist activity monitors (Actiwatch 2; Respironics, Inc., Murrysville, PA)[27, 28, 29, 30] were used to measure patient sleep. Actiware 5 software (Respironics, Inc.)[31] was used to estimate quantitative measures of sleep time and efficiency. Sleep time is defined as the total duration of time spent sleeping at night and sleep efficiency is defined as the fraction of time, reported as a percentage, spent sleeping by actigraphy out of the total time patients reported they were sleeping.
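The sleep efficiency definition above reduces to a simple ratio. The sketch below assumes both durations are expressed in minutes; the function and parameter names are hypothetical and do not reflect how Actiware computes its own output.

```python
def sleep_efficiency(actigraphy_sleep_min, reported_sleep_min):
    """Percentage of self-reported sleep time that actigraphy scored as sleep."""
    if reported_sleep_min <= 0:
        raise ValueError("reported sleep time must be positive")
    return 100.0 * actigraphy_sleep_min / reported_sleep_min


# Made-up night: 300 minutes asleep by actigraphy out of 400 minutes reported.
print(sleep_efficiency(300, 400))  # 75.0
```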

Sound levels in patient rooms were recorded using Larson Davis 720 Sound Level Monitors (Larson Davis, Inc., Provo, UT). These monitors store functional average sound pressure levels in A‐weighted decibels called the Leq over 1‐hour intervals. The Leq is the average sound level over the given time interval. Minimum (Lmin) and maximum (Lmax) sound levels are also stored. The LD SLM Utility Program (Larson Davis, Inc.) was used to extract the sound level measurements recorded by the monitors.
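Because decibels are logarithmic, an equivalent continuous level such as the Leq is conventionally an energy average rather than an arithmetic mean of decibel readings. The sketch below shows that conventional calculation for combining several equal-length intervals, for example hourly Leq values into one overnight value. It does not describe the monitor's firmware or the authors' processing, and the function name and sample values are hypothetical.

```python
import math


def combine_leq(levels_dba):
    """Energy-average equal-duration sound levels (in dBA) into a single Leq."""
    if not levels_dba:
        raise ValueError("at least one level is required")
    # Convert each level to relative sound energy, average, then convert back to dB.
    mean_energy = sum(10 ** (level / 10) for level in levels_dba) / len(levels_dba)
    return 10 * math.log10(mean_energy)


# Made-up hourly values: the louder hour dominates the combined level.
print(round(combine_leq([60.0, 40.0]), 1))  # 57.0
```

In this made-up example the arithmetic mean of the two readings would be 50 dBA, so the energy average sits much closer to the louder hour.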

Demographic information (age, gender, race, ethnicity, highest level of education, length of stay in the hospital, and comorbidities) was obtained from hospital charts via an ongoing study of admitted patients at the University of Chicago Medical Center inpatient general medicine service.[18] Chart audits were performed to determine whether patients received pharmacologic sleep aids in the hospital.

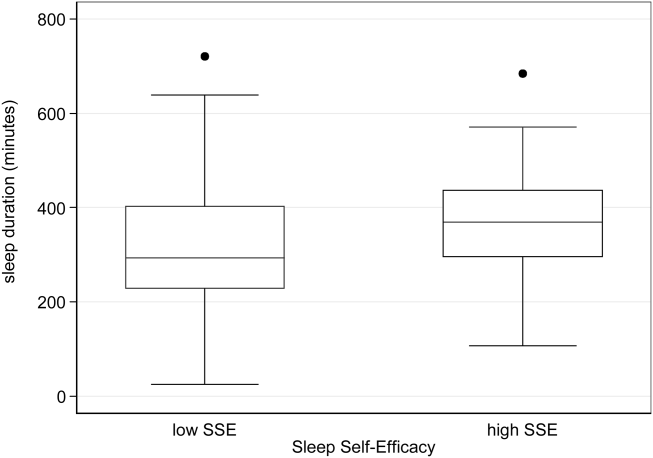

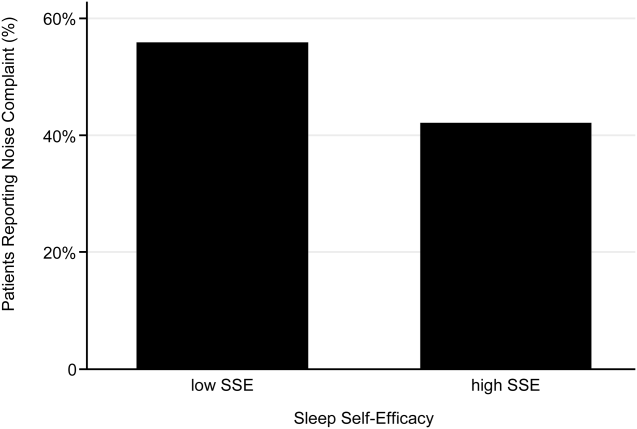

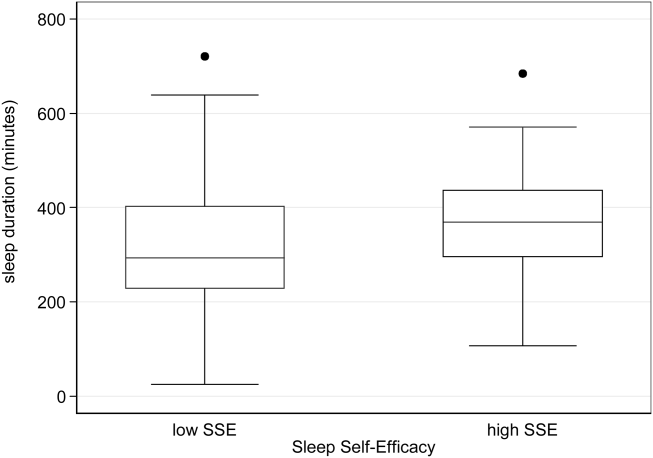

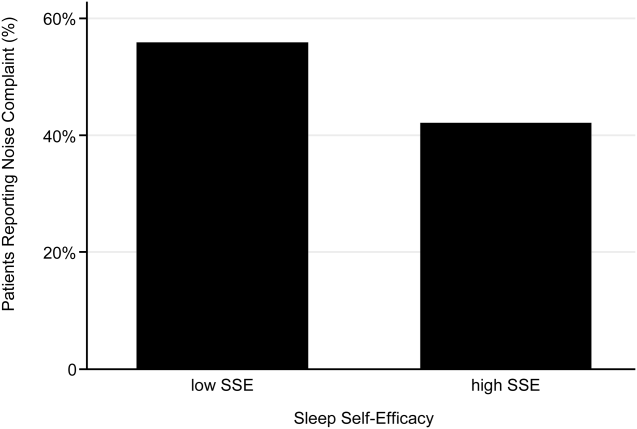

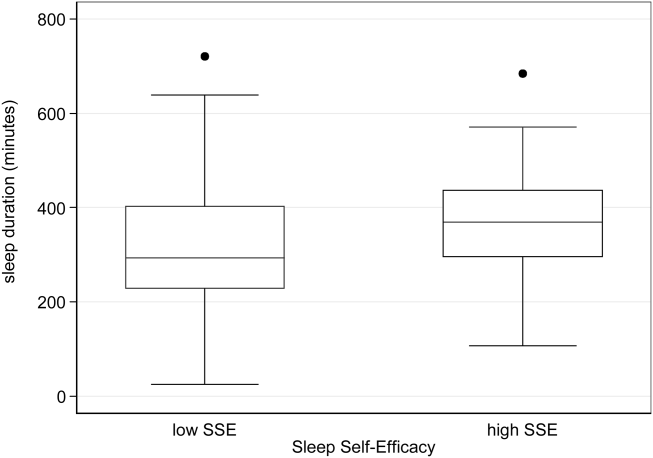

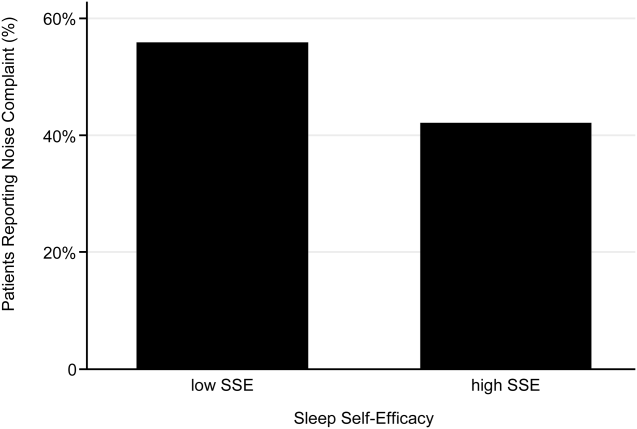

Data Analysis

Descriptive statistics were used to summarize mean sleep duration and sleep efficiency in the hospital as well as SLOC and SSE. Because the SSE scores were not normally distributed, the scores were dichotomized at the median to create a variable denoting high and low SSE. Additionally, because the distribution of responses to the noise disruption question was skewed to the right, reports of noise disruptions were grouped into not disruptive (score=1) and disruptive (score>1).
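The two groupings described above can be sketched as follows. Whether a score exactly at the median was counted as high or low is not stated, so treating only scores strictly above the median as high is an assumption; the function names and sample scores are hypothetical.

```python
from statistics import median


def dichotomize_at_median(scores):
    """Label each score True (high) if it lies above the sample median."""
    cut = median(scores)
    # Assumption: ties at the median are counted as low.
    return [score > cut for score in scores]


def noise_is_disruptive(score):
    """Group the 5-point noise item: 1 is not disruptive, above 1 is disruptive."""
    return score > 1


# Made-up SSE scores on the 9 to 45 scale.
sse_scores = [20, 30, 35, 40, 45]
print(dichotomize_at_median(sse_scores))  # [False, False, False, True, True]
print([noise_is_disruptive(s) for s in (1, 2, 5)])  # [False, True, True]
```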

Two‐sample t tests with equal variances were used to assess the relationship between perceived control measures (high/low SLOC, SSE) and objective sleep measures (sleep time, sleep efficiency). Multivariate linear regression was used to test the association between high SSE (independent variable) and sleep time (dependent variable), clustering for multiple nights of data within the subject. Multivariate logistic regression, also adjusting for subject, was used to test the association between high SSE and noise disruptiveness and the association between high SSE and Karolinska scores. Leq, Lmax, and Lmin were all tested using stepwise forward regression. Because our prior work[9] demonstrated that noise levels separated into tertiles were significantly associated with sleep time, our analysis also used noise levels separated into tertiles. Stepwise forward regression was used to add basic patient demographics (gender, race, age) to the models. Statistical significance was defined as P<0.05, and all statistical analysis was done using Stata 11.0 (StataCorp, College Station, TX).
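For readers unfamiliar with the first test named above, the sketch below computes the pooled-variance (equal variances) two-sample t statistic and its degrees of freedom using only the Python standard library. The analysis in the study was run in Stata; this is only an illustration of the statistic, the sleep times are made up, and the clustered regression models are not reproduced here.

```python
import math
from statistics import mean, variance


def pooled_t_statistic(group_a, group_b):
    """Return (t, degrees of freedom) for a two-sample t test with equal variances."""
    n_a, n_b = len(group_a), len(group_b)
    if n_a < 2 or n_b < 2:
        raise ValueError("each group needs at least 2 observations")
    df = n_a + n_b - 2
    # Pool the two sample variances, weighting each by its degrees of freedom.
    pooled_var = ((n_a - 1) * variance(group_a) + (n_b - 1) * variance(group_b)) / df
    standard_error = math.sqrt(pooled_var * (1 / n_a + 1 / n_b))
    return (mean(group_a) - mean(group_b)) / standard_error, df


# Made-up nightly sleep times in minutes for high and low SSE groups.
high_sse = [330.0, 360.0, 390.0]
low_sse = [300.0, 330.0, 360.0]
t_stat, df = pooled_t_statistic(high_sse, low_sse)
print(round(t_stat, 3), df)  # 1.225 4
```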

RESULTS

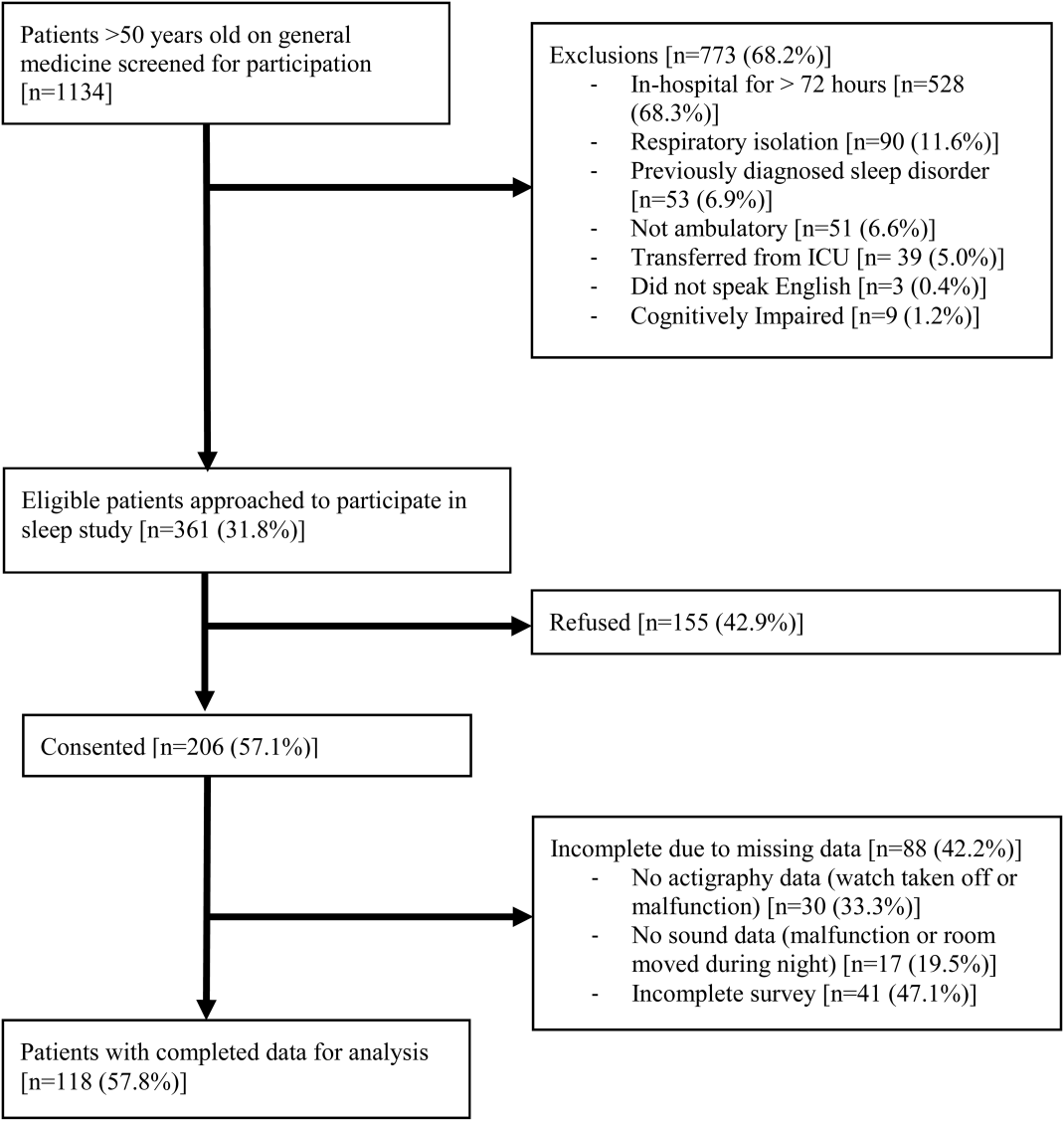

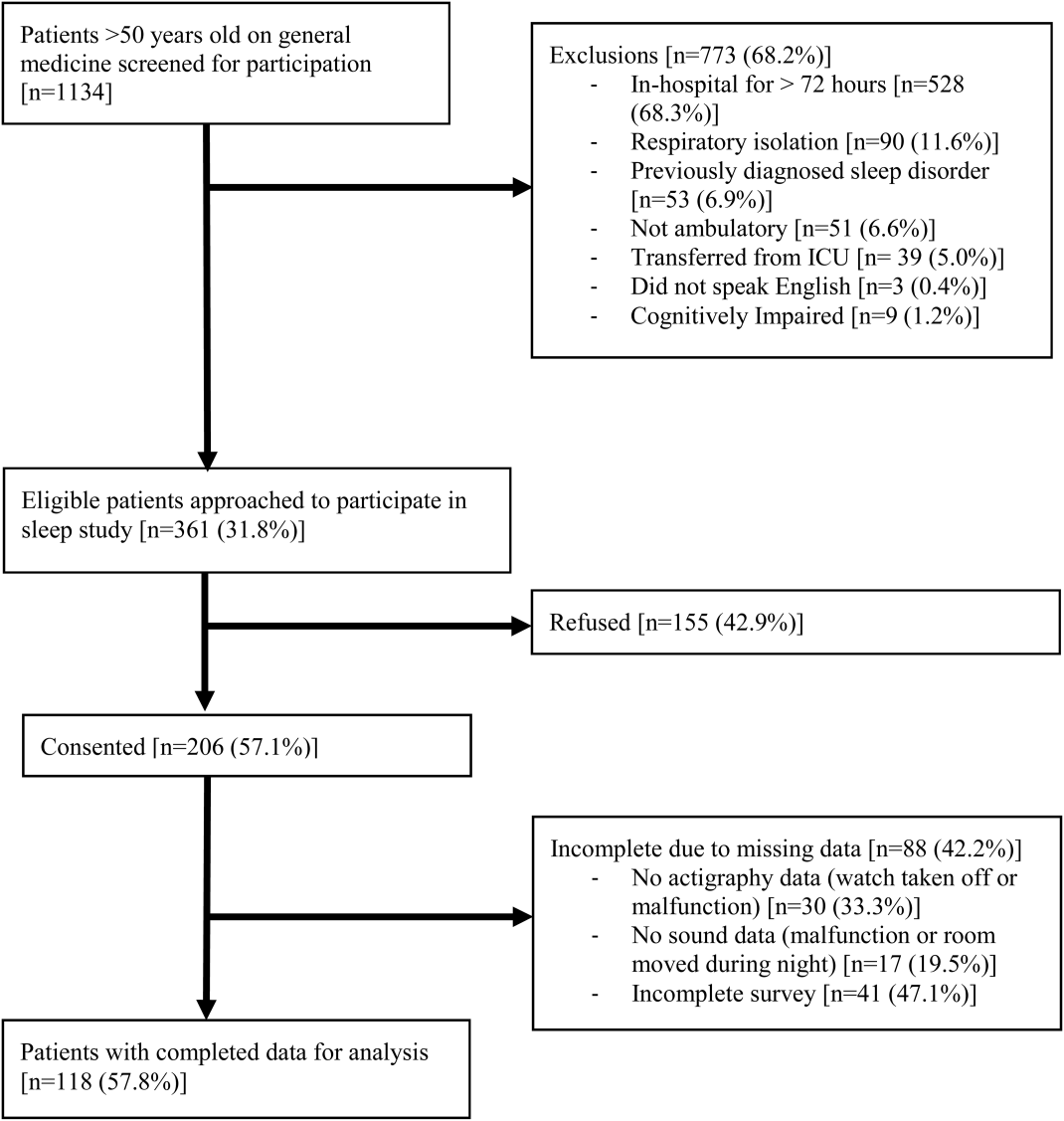

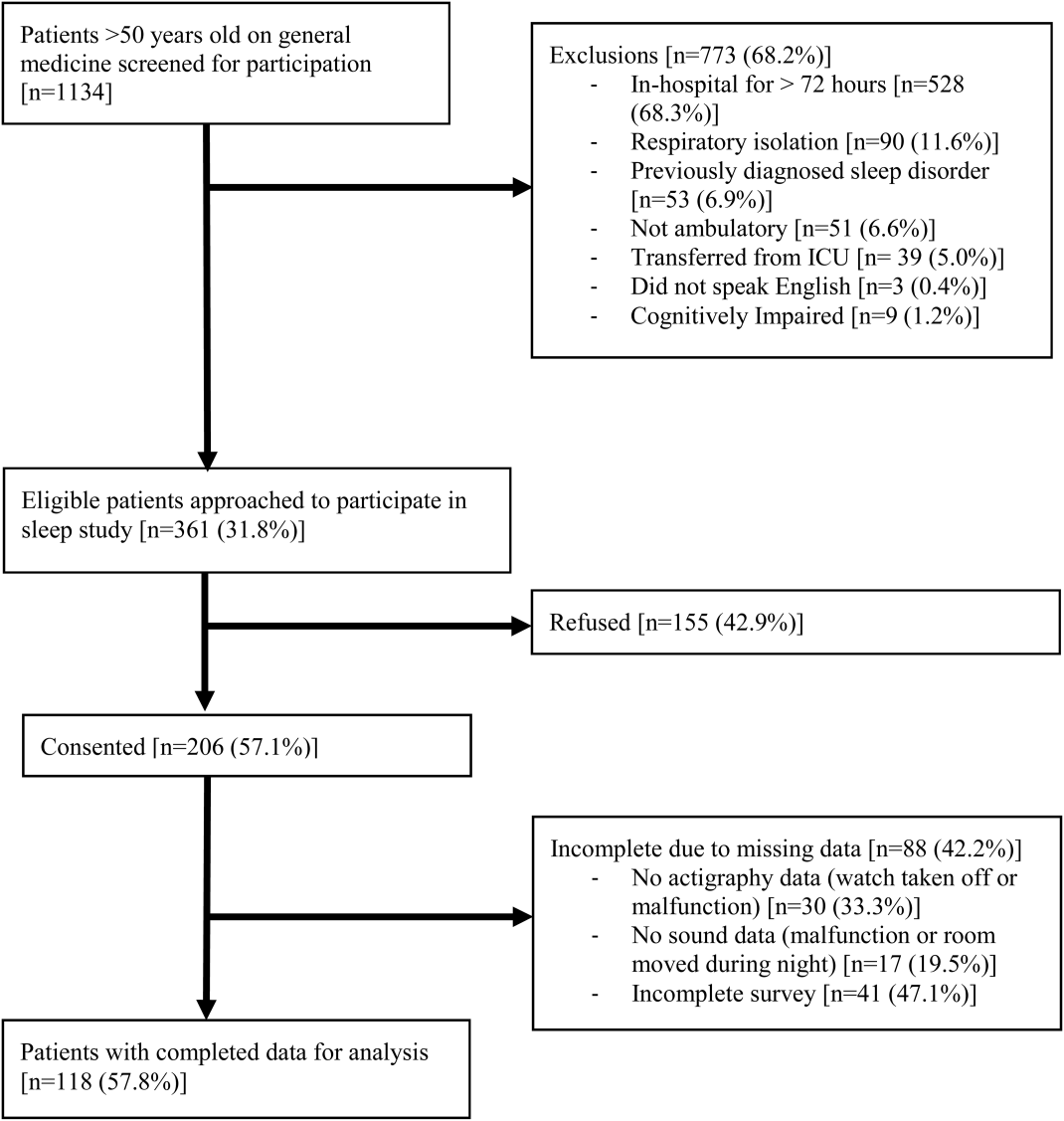

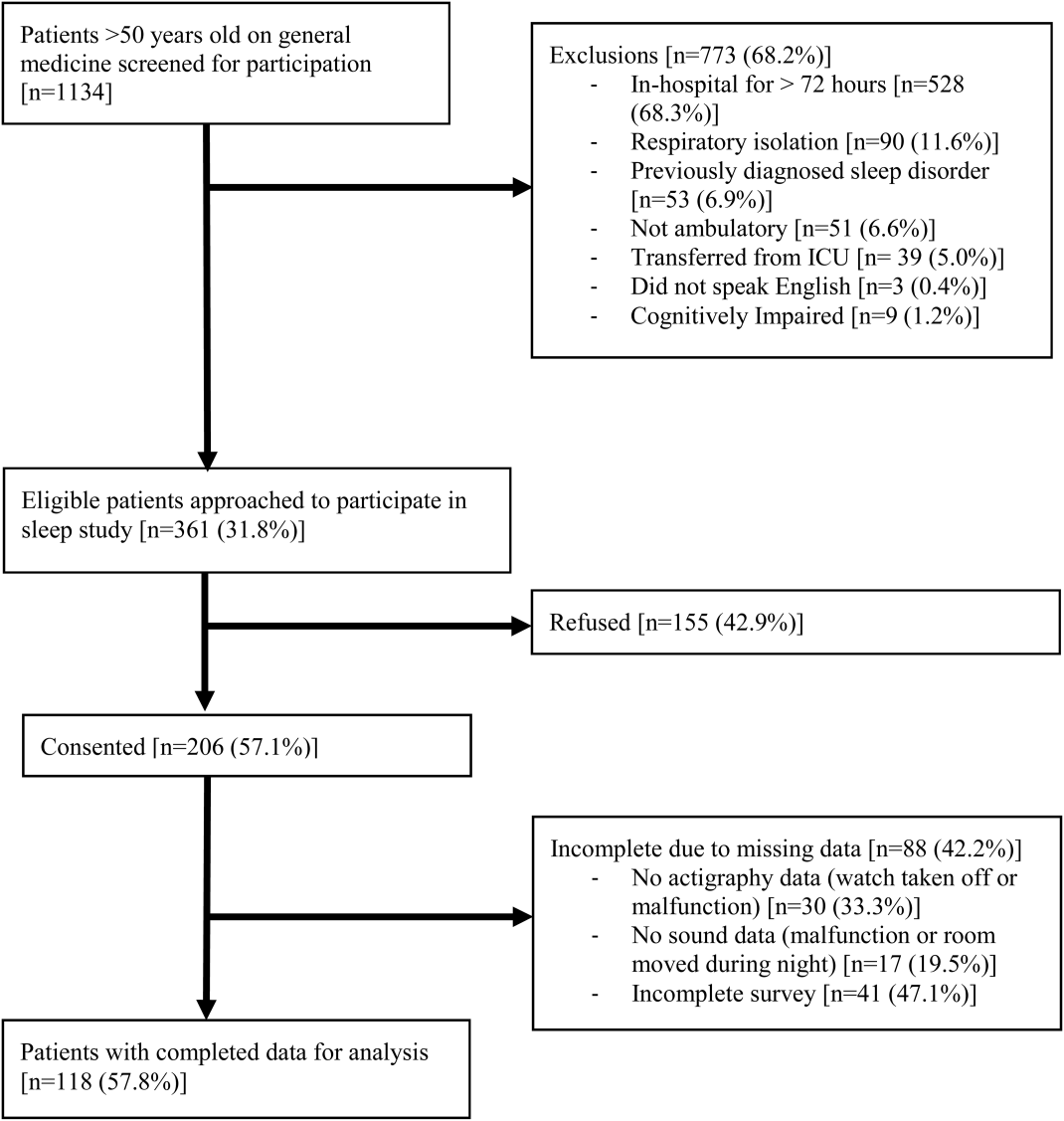

From April 2010 to May 2012, 1134 patients were screened by study personnel for this study via an ongoing study of hospitalized patients on the inpatient general medicine ward. Of the 361 (31.8%) eligible patients, 206 (57.1%) consented to participate. Of the subjects enrolled in the study, 118 were able to complete at least 1 night of actigraphy, sound monitoring, and subjective assessment for a total of 185 patient nights (Figure 1).
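The enrollment percentages above can be checked directly from the reported counts. The mean number of nights per completing patient is derived here from those counts and is not a figure reported by the authors; the helper name is hypothetical.

```python
def percent(part, whole):
    """Percentage rounded to 1 decimal place."""
    return round(100 * part / whole, 1)


# Counts as reported in the text.
screened, eligible, consented = 1134, 361, 206
completers, patient_nights = 118, 185

print(percent(eligible, screened))   # 31.8
print(percent(consented, eligible))  # 57.1
# Derived, not reported: average monitored nights per completing patient.
print(round(patient_nights / completers, 2))  # 1.57
```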

The majority of patients were female (57%), African American (67%), and non‐Hispanic (97%). The mean age was 65 years (standard deviation [SD], 11.6 years), and the median length of stay was 4 days (interquartile range [IQR], 3–6). The majority of patients also had hypertension (67%), with chronic obstructive pulmonary disease [COPD] (31%) and congestive heart failure (31%) being the next most common comorbidities. About two‐thirds of subjects (64%) were characterized as average or above average sleepers with Epworth Sleepiness Scale scores ≤9[20] (Table 1). Only 5% of patients received pharmacological sleep aids.

| Characteristic | Value, n (%)a |
|---|---|
| Patient characteristics | |
| Age, mean (SD), y | 63 (12) |
| Length of stay, median (IQR), db | 4 (3–6) |
| Female | 67 (57) |
| African American | 79 (67) |
| Hispanic | 3 (3) |
| High school graduate | 92 (78) |
| Comorbidities | |
| Hypertension | 79 (66) |
| Chronic obstructive pulmonary disease | 37 (31) |
| Congestive heart failure | 37 (31) |
| Diabetes | 36 (30) |
| End stage renal disease | 23 (19) |
| Baseline sleep characteristics | |