‘Dr. Caveman’ had a leg up on amputation

Monkey see, monkey do (advanced medical procedures)

We don’t tend to think too kindly of our prehistoric ancestors. We throw around the word “caveman” – hardly a term of endearment – and depictions of Paleolithic humans rarely flatter their subjects. In many ways, though, our conceptions are correct. Humans of the Stone Age lived short, often brutish lives, but civilization had to start somewhere, and our prehistoric ancestors were often far more capable than we give them credit for.

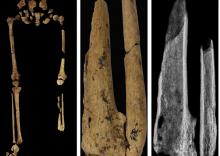

Case in point is a recent discovery from an archaeological dig in Borneo: A young adult who lived 31,000 years ago was discovered with the lower third of their left leg amputated. Save the clever retort about the person’s untimely death, because this individual did not die from the surgery. The amputation occurred when the individual was a child and the subject lived for several years after the operation.

Amputation is usually unnecessary given our current level of medical technology, but it’s actually quite an advanced procedure, and this example predates the previous first case of amputation by nearly 25,000 years. Not only did the surgeon need to cut at an appropriate place, they needed to understand blood loss, the risk of infection, and the need to preserve skin in order to seal the wound back up. That’s quite a lot for our Paleolithic doctor to know, and it’s even more impressive considering the, shall we say, limited tools they would have had available to perform the operation.

Rocks. They cut off the leg with a rock. And it worked.

This discovery also gives insight into the amputee’s society. Someone knew that amputation was the right move for this person, indicating that it had been done before. In addition, the individual would not have been able to spring back into action hunting mammoths right away; they would have required care for the rest of their life. And clearly the community provided, given the individual’s continued life after the operation and their burial in a place of honor.

If only the American health care system were capable of such feats of compassion, but that would require the majority of politicians to be as clever as cavemen. We’re not hopeful about those odds.

The first step is admitting you have a crying baby. The second step is … a step

Knock, knock.

Who’s there?

Crying baby.

Crying baby who?

Crying baby who … umm … doesn’t have a punchline. Let’s try this again.

A priest, a rabbi, and a crying baby walk into a bar and … nope, that’s not going to work.

Why did the crying baby cross the road? Ugh, never mind.

Clearly, crying babies are no laughing matter. What crying babies need is science. And the latest innovation – it’s fresh from a study conducted at the RIKEN Center for Brain Science in Saitama, Japan – in the science of crying babies is … walking. Researchers observed 21 unhappy infants and compared their responses to four strategies: being held by their walking mothers, held by their sitting mothers, lying in a motionless crib, or lying in a rocking cot.

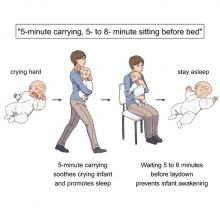

The best strategy is for the mother – the experiment only involved mothers, but the results should apply to any caregiver – to pick up the crying baby, walk around for 5 minutes, sit for another 5-8 minutes, and then put the infant back to bed, the researchers said in a written statement.

The walking strategy, however, isn’t perfect. “Walking for 5 minutes promoted sleep, but only for crying infants. Surprisingly, this effect was absent when babies were already calm beforehand,” lead author Kumi O. Kuroda, MD, PhD, explained in a separate statement from the center.

It also doesn’t work on adults. We could not get a crying LOTME writer to fall asleep no matter how long his mother carried him around the office.

New way to detect Parkinson’s has already passed the sniff test

We humans aren’t generally known for our superpowers, but a woman from Scotland may just be the Smelling Superhero. Not only was she able to literally smell Parkinson’s disease (PD) on her husband 12 years before his diagnosis; she is also the reason that scientists have found a new way to test for PD.

Joy Milne, a retired nurse, told the BBC that her husband “had this musty rather unpleasant smell especially round his shoulders and the back of his neck and his skin had definitely changed.” She put two and two together after he had been diagnosed with PD and she came in contact with others with the same scent at a support group.

Researchers at the University of Manchester, working with Ms. Milne, have now created a skin test that uses mass spectrometry to analyze a sample of the patient’s sebum in just 3 minutes and is 95% accurate. They tested 79 people with Parkinson’s and 71 without using this method and found “specific compounds unique to PD sebum samples when compared to healthy controls. Furthermore, we have identified two classes of lipids, namely, triacylglycerides and diglycerides, as components of human sebum that are significantly differentially expressed in PD,” they said in JACS Au.

This test could be available to general physicians within 2 years, which would provide new opportunities to the people who are waiting in line for neurologic consults. Ms. Milne’s husband passed away in 2015, but her courageous help and amazing nasal abilities may help millions down the line.

The power of flirting

It’s a common office stereotype: Women flirt with the boss to get ahead in the workplace, while men in power sexually harass women in subordinate positions. Nobody ever suspects the guys in the cubicles. A recent study takes a different look and paints a different picture.

The investigators conducted multiple online and lab experiments on how social sexual identity drives behavior in a workplace setting in relation to job placement. They found that it was most often men in lower-power positions, insecure about their roles, who initiated social sexual behavior, even though they knew it was offensive. Why? Power.

They randomly paired over 200 undergraduate students in a male/female fashion, placed them in subordinate and boss-like roles, and asked them to choose from a series of social sexual questions they wanted to ask their teammate. Male participants who were placed in subordinate positions to a female boss chose social sexual questions more often than did male bosses, female subordinates, and female bosses.

So what does this say about the threat of workplace harassment? The researchers found that men and women differ in their strategy for flirtation. For men, it’s a way to gain more power. But problems arise when they rationalize their behavior with a character trait like being a “big flirt.”

“When we take on that identity, it leads to certain behavioral patterns that reinforce the identity. And then, people use that identity as an excuse,” lead author Laura Kray of the University of California, Berkeley, said in a statement from the school.

The researchers make a point to note that the study isn’t about whether flirting is good or bad, nor are they suggesting that people in powerful positions don’t sexually harass underlings. It’s meant to provide insight to improve corporate sexual harassment training. A comment or conversation held in jest could potentially be a warning sign for future behavior.

Targeted anti-IgE therapy found safe and effective for chronic urticaria

MILAN – The therapeutic .

Both doses of ligelizumab evaluated met the primary endpoint of superiority to placebo for a complete response at 16 weeks of therapy, reported Marcus Maurer, MD, director of the Urticaria Center for Reference and Excellence at the Charité Hospital, Berlin.

The data from the two identically designed trials, PEARL 1 and PEARL 2, were presented at the annual congress of the European Academy of Dermatology and Venereology. The two ligelizumab experimental arms (72 mg or 120 mg administered subcutaneously every 4 weeks) and the active comparative arm of omalizumab (300 mg administered subcutaneously every 4 weeks) demonstrated similar efficacy, all three of which were highly superior to placebo.

The data show that “another anti-IgE therapy – ligelizumab – is effective in CSU,” Dr. Maurer said.

“While the benefit was not different from omalizumab, ligelizumab showed remarkable results in disease activity and by demonstrating just how many patients achieved what we want them to achieve, which is to have no more signs and symptoms,” he added.

Majority of participants with severe urticaria

All of the patients entered into the two trials had severe (about 65%) or moderate (about 35%) symptoms at baseline. The results of the two trials were almost identical. In the randomization arms, a weekly Urticaria Activity Score (UAS7) of 0, which was the primary endpoint, was achieved at week 16 by 31.0% of those receiving 72-mg ligelizumab, 38.3% of those receiving 120-mg ligelizumab, and 34.1% of those receiving omalizumab (Xolair). The placebo response was 5.7%.

The UAS7 score is drawn from two components, wheals and itch. The range is 0 (no symptoms) to 42 (most severe). At baseline, the average patient score was about 30, which corresponds to a substantial symptom burden, according to Dr. Maurer.
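For readers who want to see how the 0-42 range arises, here is a minimal sketch. It assumes the standard UAS7 convention, which the article does not spell out: each day the patient rates wheals and itch from 0 to 3, and the two ratings are summed over 7 days. The function name `uas7` and the sample week are hypothetical and are not data from the PEARL trials.

```python
def uas7(daily_wheals, daily_itch):
    """Return the weekly Urticaria Activity Score from seven daily ratings.

    Assumption (not stated in the article): each component is rated 0-3
    once per day, so the weekly total ranges from 0 to 42.
    """
    if len(daily_wheals) != 7 or len(daily_itch) != 7:
        raise ValueError("UAS7 needs exactly seven daily scores per component")
    for score in list(daily_wheals) + list(daily_itch):
        if score not in (0, 1, 2, 3):
            raise ValueError("each daily score must be an integer from 0 to 3")
    return sum(daily_wheals) + sum(daily_itch)


# Hypothetical week for a patient near the reported baseline of about 30.
example_week = uas7([2, 2, 3, 2, 2, 2, 2], [2, 2, 2, 3, 2, 2, 2])
print(example_week)
```

A week with no wheals and no itch on any day gives 0, which is the complete response used as the primary endpoint.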

The mean reduction in the UAS7 score in PEARL 2, which differed from PEARL 1 by no more than 0.4 points for any treatment group, was 19.2 points in the 72-mg ligelizumab group, 19.3 points in the 120-mg ligelizumab group, 19.6 points in the omalizumab group, and 9.2 points in the placebo group. There were no significant differences among the active treatment arms.

Complete symptom relief, meaning a UAS7 score of 0, was selected as the primary endpoint because, Dr. Maurer said, this is the goal of treatment. Although he admitted that a UAS7 score of 0 is analogous to a PASI 100 response (complete clearing) in psoriasis, he said, “Chronic urticaria is a debilitating disease, and we want to eliminate the symptoms. Gone is gone.”

Combined, the two phase 3 trials represent “the biggest chronic urticaria program ever,” according to Dr. Maurer. The 1,034 patients enrolled in PEARL 1 and the 1,023 enrolled in PEARL 2 were randomized in a 3:3:3:1 ratio, with placebo representing the smallest group.
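To give a sense of scale, the 3:3:3:1 ratio can be turned into approximate arm sizes. The sketch below assumes the three active arms each carry a weight of 3 and placebo a weight of 1, which matches the description of placebo as the smallest group. The results are expected values implied by the ratio, not the actual per-arm enrollment, which was not reported here. The function name `expected_arm_sizes` is hypothetical.

```python
def expected_arm_sizes(total, ratio):
    """Split a total enrollment across arms in proportion to ratio weights."""
    parts = sum(ratio.values())
    return {arm: total * weight / parts for arm, weight in ratio.items()}


# Assumed mapping of the 3:3:3:1 ratio onto the four arms named in the article.
RATIO = {
    "ligelizumab 72 mg": 3,
    "ligelizumab 120 mg": 3,
    "omalizumab": 3,
    "placebo": 1,
}

for trial, enrolled in (("PEARL 1", 1034), ("PEARL 2", 1023)):
    sizes = expected_arm_sizes(enrolled, RATIO)
    # Rounded for display only; real arm sizes depend on the randomization.
    print(trial, {arm: round(size) for arm, size in sizes.items()})
```

Under these assumptions, roughly 310 patients per active arm and about 100 on placebo would be expected in each trial.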

The planned follow-up is 52 weeks, but the placebo group will be switched to 120 mg ligelizumab every 4 weeks at the end of 24 weeks. The switch is required because “you cannot maintain patients with this disease on placebo over a long period,” Dr. Maurer said.

Ligelizumab associated with low discontinuation rate

Adverse events overall and stratified by severity have been similar across treatment arms, including placebo. The possible exception was a lower rate of moderate events (16.5%) in the placebo arm relative to the 72-mg ligelizumab arm (19.8%), the 120-mg ligelizumab arm (21.6%), and the omalizumab arm (22.3%). Discontinuations because of an adverse event were under 4% in every treatment arm.

Although Dr. Maurer did not present outcomes at 52 weeks, he did note that “only 15% of those who enrolled in these trials have discontinued treatment.” He considered this remarkable in that the study was conducted in the midst of the COVID-19 pandemic, and it appears that at least some of those who left the trial did so because of concern about clinic visits.

Despite the similar benefit provided by ligelizumab and omalizumab, Dr. Maurer said that subgroup analyses will be coming. The possibility that some patients benefit more from one than the other cannot yet be ruled out. There are also, as of yet, no data to determine whether at least some patients respond to one after an inadequate response to the other.

Still, given the efficacy and the safety of ligelizumab, Dr. Maurer indicated that the drug is likely to find a role in routine management of CSU if approved.

“We only have two options for chronic spontaneous urticaria. There are antihistamines, which do not usually work, and omalizumab,” he said. “It is very important we develop more treatment options.”

Adam Friedman, MD, professor and chair of dermatology, George Washington University, Washington, agreed.

“More therapeutic options, especially for disease states that have a small armament – even if equivalent in efficacy to established therapies – is always a win for patients as it almost always increases access to treatment,” Dr. Friedman said in an interview.

“Furthermore, the heterogeneous nature of inflammatory skin diseases is often not captured in even phase 3 studies. Therefore, having additional options could offer relief where previous therapies have failed,” he added.

Dr. Maurer reports financial relationships with more than 10 pharmaceutical companies, including Novartis, which is developing ligelizumab. Dr. Friedman has a financial relationship with more than 20 pharmaceutical companies but has no current financial association with Novartis and was not involved in the PEARL 1 and 2 trials.


Majority of participants with severe urticaria

All of the patients entered into the two trials had severe (about 65%) or moderate (about 35%) symptoms at baseline. The results of the two trials were almost identical. In the randomization arms, a weekly Urticaria Activity Score (UAS7) of 0, which was the primary endpoint, was achieved at week 16 by 31.0% of those receiving 72-mg ligelizumab, 38.3% of those receiving 120-mg ligelizumab, and 34.1% of those receiving omalizumab (Xolair). The placebo response was 5.7%.

The UAS7 score is drawn from two components, wheals and itch. The range is 0 (no symptoms) to 42 (most severe). At baseline, the average patient score was about 30, which correlates with a substantial symptom burden, according to Dr. Maurer.

The mean reduction in the UAS7 score in PEARL 2, which differed from PEARL 1 by no more than 0.4 points for any treatment group, was 19.2 points in the 72-mg ligelizumab group, 19.3 points in the 120-mg ligelizumab group, 19.6 points in the omalizumab group, and 9.2 points in the placebo group. There were no significant differences between any active treatment arm.

AT THE EADV CONGRESS

Your poop may hold the secret to long life

Lots of things can disrupt your gut health over the years. A high-sugar diet, stress, antibiotics – all are linked to bad changes in the gut microbiome, the microbes that live in your intestinal tract. And this can raise the risk of diseases.

Restoring the gut to an earlier, healthier state could be possible, scientists say, by having people bank a sample of their own stool when they are young, to be put back into their colons when they are older.

While the science to back this up isn’t quite there yet, some researchers are saying we shouldn’t wait. They are calling on existing stool banks to let people start banking their stool now, so it’s there for them to use if the science becomes available.

But how would that work?

First, you’d go to a stool bank and provide a fresh sample of your poop, which would be screened for diseases, washed, processed, and deposited into a long-term storage facility.

Then, down the road, if you get a condition such as inflammatory bowel disease, heart disease, or type 2 diabetes – or if you have a procedure that wipes out your microbiome, like a course of antibiotics or chemotherapy – doctors could use your preserved stool to “re-colonize” your gut, restoring it to its earlier, healthier state, said Scott Weiss, MD, professor of medicine at Harvard Medical School, Boston, and a coauthor of a recent paper on the topic. They would do that using fecal microbiota transplantation, or FMT.

Timing is everything. You’d want a sample from when you’re healthy – say, between the ages of 18 and 35, or before a chronic condition is likely, said Dr. Weiss. But if you’re still healthy into your late 30s, 40s, or even 50s, providing a sample then could still benefit you later in life.

If we could pull off a banking system like this, it could have the potential to treat autoimmune disease, inflammatory bowel disease, diabetes, obesity, and heart disease – or even reverse the effects of aging. How can we make this happen?

Stool banks of today

While stool banks do exist today, the samples inside are destined not for the original donors but rather for sick patients hoping to treat an illness. Using FMT, doctors transfer the fecal material to the patient’s colon, restoring helpful gut microbiota.

Some research shows FMT may help treat inflammatory bowel diseases, such as Crohn’s or ulcerative colitis. Animal studies suggest it could help treat obesity, lengthen lifespan, and reverse some effects of aging, such as age-related decline in brain function. Other clinical trials are looking into its potential as a cancer treatment, said Dr. Weiss.

But outside the lab, FMT is mainly used for one purpose: to treat Clostridioides difficile infection. It works even better than antibiotics, research shows.

But first you need to find a healthy donor, and that’s harder than you might think.

Finding healthy stool samples

Banking our bodily substances is nothing new. Blood banks, for example, are common throughout the United States, and cord blood banking – preserving blood from a baby’s umbilical cord to aid possible future medical needs of the child – is becoming more popular. Sperm donors are highly sought after, and doctors regularly transplant kidneys and bone marrow to patients in need.

So why are we so particular about poop?

Part of the reason may be that feces (like blood, for that matter) can harbor disease – which is why it’s so important to find healthy stool donors. Problem is, this can be surprisingly hard to do.

To donate fecal matter, people must go through a rigorous screening process, said Majdi Osman, MD, chief medical officer for OpenBiome, a nonprofit microbiome research organization.

Until recently, OpenBiome operated a stool donation program, though it has since shifted its focus to research. Potential donors were screened for diseases and mental health conditions, pathogens, and antibiotic resistance. The pass rate was less than 3%.

“We take a very cautious approach because the association between diseases and the microbiome is still being understood,” Dr. Osman said.

FMT also carries risks – though so far, they seem mild. Side effects include mild diarrhea, nausea, belly pain, and fatigue. (The reason? Even the healthiest donor stool may not mix perfectly with your own.)

That’s where the idea of using your own stool comes in, said Yang-Yu Liu, PhD, a Harvard researcher who studies the microbiome and the lead author of the paper mentioned above. It’s not just more appealing but may also be a better “match” for your body.

Should you bank your stool?

While the researchers say we have reason to be optimistic about the future, it’s important to remember that many challenges remain. FMT is early in development, and there’s a lot about the microbiome we still don’t know.

There’s no guarantee, for example, that restoring a person’s microbiome to its formerly disease-free state will keep diseases at bay forever, said Dr. Weiss. If your genes raise your odds of having Crohn’s, for instance, it’s possible the disease could come back.

We also don’t know how long stool samples can be preserved, said Dr. Liu. Stool banks currently store fecal matter for 1 or 2 years, not decades. To protect the proteins and DNA structures for that long, samples would likely need to be stashed at the liquid nitrogen storage temperature of –196° C. (Currently, samples are stored at about –80° C.) Even then, testing would be needed to confirm whether the fragile microorganisms in the stool can survive.

This raises another question: Who’s going to regulate all this?

The FDA regulates the use of FMT as a drug for the treatment of C. diff, but as Dr. Liu pointed out, many gastroenterologists consider the gut microbiota an organ. In that case, human fecal matter could be regulated the same way blood, bone, or even egg cells are.

Cord blood banking may be a helpful model, Dr. Liu said.

“We don’t have to start from scratch.”

Then there’s the question of cost. Cord blood banks could be a point of reference for that too, the researchers say. They charge about $1,500 to $2,820 for the first collection and processing, plus a yearly storage fee of $185 to $370.

Despite the unknowns, one thing is for sure: The interest in fecal banking is real – and growing. At least one microbiome firm, Cordlife Group Limited, based in Singapore, announced that it has started to allow people to bank their stool for future use.

“More people should talk about it and think about it,” said Dr. Liu.

A version of this article first appeared on WebMD.com.

FDA warns of cancer risk in scar tissue around breast implants

The FDA safety communication is based on several dozen reports of squamous cell carcinoma (SCC) and various lymphomas occurring in the capsule or scar tissue around breast implants. This issue differs from breast implant–associated anaplastic large-cell lymphoma (BIA-ALCL) – a known risk among implant recipients.

“After preliminary review of published literature as part of our ongoing monitoring of the safety of breast implants, the FDA is aware of less than 20 cases of SCC and less than 30 cases of various lymphomas in the capsule around the breast implant,” the agency’s alert explains.

One avenue through which the FDA has identified cases is via medical device reports. As of Sept. 1, the FDA has received 10 medical device reports about SCC related to breast implants and 12 about various lymphomas.

The incidence rate and risk factors for these events are currently unknown, but cases of SCC and various lymphomas in the capsule around breast implants have been reported for both textured and smooth breast implants, as well as for both saline and silicone breast implants. In some cases, the cancers were diagnosed years after breast implant surgery.

Reported signs and symptoms included swelling, pain, lumps, or skin changes.

Although the risk of SCC and lymphomas in the tissue around breast implants appears rare, “when safety risks with medical devices are identified, we wanted to provide clear and understandable information to the public as quickly as possible,” Binita Ashar, MD, director of the Office of Surgical and Infection Control Devices, FDA Center for Devices and Radiological Health, explained in a press release.

Patients and providers are strongly encouraged to report breast implant–related problems and cases of SCC or lymphoma of the breast implant capsule to MedWatch, the FDA’s adverse event reporting program.

The FDA plans to complete “a thorough literature review” as well as “identify ways to collect more detailed information regarding patient cases.”

A version of this article first appeared on Medscape.com.

.

The FDA safety communication is based on several dozen reports of these cancers occurring in the capsule or scar tissue around breast implants. This issue differs from breast implant–associated anaplastic large-cell lymphoma (BIA-ALCL) – a known risk among implant recipients.

“After preliminary review of published literature as part of our ongoing monitoring of the safety of breast implants, the FDA is aware of less than 20 cases of SCC and less than 30 cases of various lymphomas in the capsule around the breast implant,” the agency’s alert explains.

One avenue through which the FDA has identified cases is via medical device reports. As of Sept. 1, the FDA has received 10 medical device reports about SCC related to breast implants and 12 about various lymphomas.

The incidence rate and risk factors for these events are currently unknown, but reports of SCC and various lymphomas in the capsule around the breast implants have been reported for both textured and smooth breast implants, as well as for both saline and silicone breast implants. In some cases, the cancers were diagnosed years after breast implant surgery.

Reported signs and symptoms included swelling, pain, lumps, or skin changes.

Although the risks of SCC and lymphomas in the tissue around breast implants appears rare, “when safety risks with medical devices are identified, we wanted to provide clear and understandable information to the public as quickly as possible,” Binita Ashar, MD, director of the Office of Surgical and Infection Control Devices, FDA Center for Devices and Radiological Health, explained in a press release.

Patients and providers are strongly encouraged to report breast implant–related problems and cases of SCC or lymphoma of the breast implant capsule to MedWatch, the FDA’s adverse event reporting program.

The FDA plans to complete “a thorough literature review” as well as “identify ways to collect more detailed information regarding patient cases.”

A version of this article first appeared on Medscape.com.

.

Fish oil pills do not reduce fractures in healthy seniors: VITAL

Omega-3 supplements did not reduce fractures during a median 5.3-year follow-up in the more than 25,000 generally healthy men and women (aged ≥ 50 years and ≥ 55 years, respectively) in the Vitamin D and Omega-3 Trial (VITAL).

The large randomized controlled trial tested whether omega-3 fatty acid or vitamin D supplements prevented cardiovascular disease or cancer in a representative sample of midlife and older adults from 50 U.S. states – which they did not. In a further analysis of VITAL, vitamin D supplements (cholecalciferol, 2,000 IU/day) did not lower the risk of incident total, nonvertebral, and hip fractures, compared with placebo.

Now this new analysis shows that omega-3 fatty acid supplements (1 g/day of fish oil) did not reduce the risk of such fractures in the VITAL population either. Meryl S. LeBoff, MD, presented the latest findings during an oral session at the annual meeting of the American Society for Bone and Mineral Research.

“In this, the largest randomized controlled trial in the world, we did not find an effect of omega-3 fatty acid supplements on fractures,” Dr. LeBoff, from Brigham and Women’s Hospital and Harvard Medical School, both in Boston, told this news organization.

The current analysis did “unexpectedly” show that among participants who received the omega-3 fatty acid supplements, there was an increase in fractures in men, and fracture risk was higher in people with a normal or low body mass index and lower in people with higher BMI.

However, these subgroup findings need to be interpreted with caution and may be caused by chance, Dr. LeBoff warned. The researchers will be investigating these findings in further analyses.

Should patients take omega-3 supplements or not?

Asked whether, in the meantime, patients should start or keep taking fish oil supplements for possible health benefits, she noted that certain individuals might benefit.

For example, in VITAL, participants who ate less than 1.5 servings of fish per week and received omega-3 fatty acid supplements had a decrease in the combined cardiovascular endpoint, and Black participants who took fish oil supplements had a substantially reduced risk of the outcome, regardless of fish intake.

“I think everybody needs to review [the study findings] with clinicians and make a decision in terms of what would be best for them,” she said.

Session comoderator Bente Langdahl, MD, PhD, commented that “many people take omega-3 because they think it will help” knee, hip, or other joint pain.

Perhaps men are more prone to joint pain because of osteoarthritis, and the supplements lessened that pain, so these men became more physically active and therefore more prone to fractures, she speculated.

The current study shows that, “so far, we haven’t been able to demonstrate a reduced rate of fractures with fish oil supplements in clinical randomized trials” conducted in relatively healthy and not the oldest patients, she summarized. “We’re not talking about 80-year-olds.”

In this “well-conducted study, they were not able to see any difference” with omega-3 fatty acid supplements versus placebo, but apparently, there are no harms associated with taking these supplements, she said.

To patients who ask her about such supplements, Dr. Langdahl advised: “Try it out for 3 months. If it really helps you, if it takes away your joint pain or whatever, then that might work for you. But then remember to stop again because it might just be a temporary effect.”

Could fish oil supplements protect against fractures?

An estimated 22% of U.S. adults aged 60 and older take omega-3 fatty acid supplements, Dr. LeBoff noted.

Preclinical studies have shown that omega-3 fatty acids reduce bone resorption and have anti-inflammatory effects, but observational studies have reported conflicting findings.

The researchers conducted this ancillary study of VITAL to fill these knowledge gaps.

VITAL enrolled a national sample of 25,871 U.S. men and women, including 5,106 Black participants, with a mean age of 67 and a mean BMI of 28 kg/m².

Importantly, participants were not selected on the basis of low bone density, fractures, or vitamin D deficiency. Prior to entry, participants were required to stop taking omega-3 supplements and limit nonstudy vitamin D and calcium supplements.

The omega-3 fatty acid supplements used in the study contained eicosapentaenoic acid and docosahexaenoic acid in a 1.2:1 ratio.

VITAL had a 2x2 factorial design whereby 6,463 participants were randomized to receive the omega-3 fatty acid supplement and 6,474 were randomized to placebo. (Remaining participants were randomized to receive vitamin D or placebo.)

Participants in the omega-3 fatty acid and placebo groups had similar baseline characteristics. For example, about half (50.5%) were women, and on average, they ate 1.1 servings of dark-meat fish (such as salmon) per week.

Participants completed detailed questionnaires at baseline and each year.

Plasma omega-3 levels were measured at baseline and, in 1,583 participants, at 1 year of follow-up. The mean omega-3 index rose 54.7% in the omega-3 fatty acid group and changed less than 2% in the placebo group at 1 year.

Study pill adherence was 87.0% at 2 years and 85.7% at 5 years.

Fractures were self-reported on annual questionnaires and centrally adjudicated in medical record review.

No clinically meaningful effect of omega-3 fatty acids on fractures

During a median 5.3-year follow-up, researchers adjudicated 2,133 total fractures and confirmed 1,991 fractures (93%) in 1,551 participants.

Incidences of total, nonvertebral, and hip fractures were similar in both groups.

Compared with placebo, omega-3 fatty acid supplements had no significant effect on risk of total fractures (hazard ratio, 1.02; 95% confidence interval, 0.92-1.13), nonvertebral fractures (HR, 1.01; 95% CI, 0.91-1.12), or hip fractures (HR, 0.89; 95% CI, 0.61-1.30), all adjusted for age, sex, and race.

The “confidence intervals were narrow, likely excluding a clinically meaningful effect,” Dr. LeBoff noted.

Among men, those who received fish oil supplements had a greater risk of fracture than those who received placebo (HR, 1.27; 95% CI, 1.07-1.51), but this result “was not corrected for multiple hypothesis testing,” Dr. LeBoff cautioned.
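For readers who want to see how these intervals are read, here is a minimal sketch in Python. It uses only the hazard ratios and 95% confidence intervals quoted above; the labels and the helper `ci_excludes_one` are illustrative names, not anything from the study. By convention, an interval that includes 1.00 is read as no statistically significant difference from placebo. The check says nothing about multiple hypothesis testing, which is the caveat Dr. LeBoff raised for the result in men.

```python
# Hazard ratios and 95% confidence intervals as quoted in the article.
# Labels are illustrative, not identifiers used by the VITAL investigators.
reported = {
    "total fractures": (1.02, 0.92, 1.13),
    "nonvertebral fractures": (1.01, 0.91, 1.12),
    "hip fractures": (0.89, 0.61, 1.30),
    "fractures, men only": (1.27, 1.07, 1.51),
}


def ci_excludes_one(lower, upper):
    """Return True when the whole interval lies on one side of 1.00."""
    return lower > 1.0 or upper < 1.0


for label, (hr, lower, upper) in reported.items():
    verdict = "excludes 1.00" if ci_excludes_one(lower, upper) else "includes 1.00"
    print(f"{label}: HR {hr:.2f} (95% CI {lower:.2f}-{upper:.2f}) {verdict}")
```

Only the interval for men lies entirely above 1.00; the three overall intervals include it.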

In the overall population, participants with a BMI less than 25 who received fish oil versus placebo had an increased risk of fracture, and those with a BMI of at least 30 who received fish oil versus placebo had a decreased risk of fracture, but the confidence intervals crossed 1.00.

After excluding digit, skull, and pathologic fractures, there was no significant reduction in total fractures (HR, 1.02; 95% CI, 0.92-1.14), nonvertebral fractures (HR, 1.02; 95% CI, 0.92-1.14), or hip fractures (HR, 0.90; 95% CI, 0.61-1.33), with omega-3 supplements versus placebo.

Similarly, there was no significant reduction in risk of major osteoporotic fractures (hip, wrist, humerus, and clinical spine fractures) or wrist fractures with omega-3 supplements versus placebo.

VITAL only studied one dose of omega-3 fatty acid supplements, and results may not be generalizable to younger adults, or older adults living in residential communities, Dr. LeBoff noted.

The study was supported by grants from the National Institute of Arthritis and Musculoskeletal and Skin Diseases. VITAL was funded by the National Cancer Institute and the National Heart, Lung, and Blood Institute. Dr. LeBoff and Dr. Langdahl have reported no relevant financial relationships.

A version of this article first appeared on Medscape.com.
