
Identifying the Sickest During Triage: Using Point-of-Care Severity Scores to Predict Prognosis in Emergency Department Patients With Suspected Sepsis

Sepsis is the leading cause of in-hospital mortality in the United States.1 Sepsis is present on admission in 85% of cases, and each hour delay in antibiotic treatment is associated with 4% to 7% increased odds of mortality.2,3 Prompt identification and treatment of sepsis is essential for reducing morbidity and mortality, but identifying sepsis during triage is challenging.2

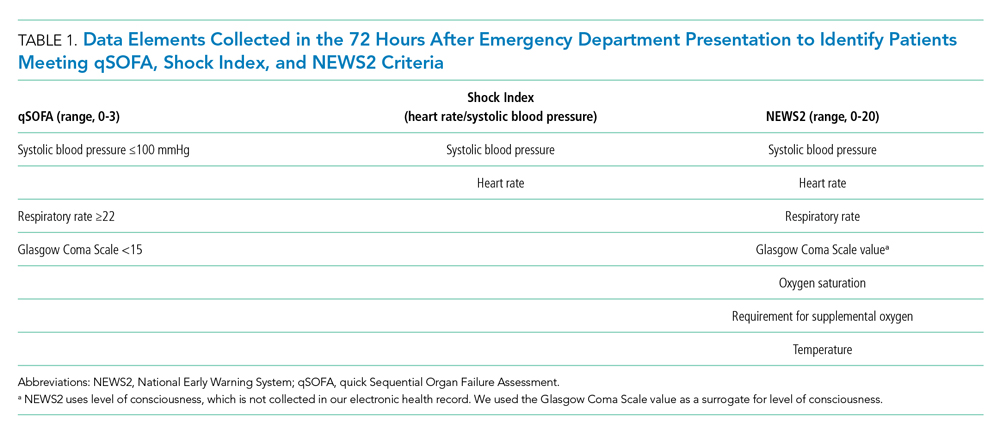

Risk stratification scores that rely solely on data readily available at the bedside have been developed to quickly identify those at greatest risk of poor outcomes from sepsis in real time. The quick Sequential Organ Failure Assessment (qSOFA) score, the National Early Warning Score 2 (NEWS2), and the Shock Index are easy-to-calculate measures that use routinely collected clinical data that are not subject to laboratory delay. These scores can be incorporated into electronic health record (EHR)-based alerts and can be calculated longitudinally to track the risk of poor outcomes over time. qSOFA was developed to quantify patient risk at bedside in non-intensive care unit (ICU) settings, but there is no consensus about its ability to predict adverse outcomes such as mortality and ICU admission.4-6 The United Kingdom’s National Health Service uses NEWS2 to identify patients at risk for sepsis.7 NEWS has been shown to have similar or better sensitivity in identifying poorer outcomes in sepsis patients compared with systemic inflammatory response syndrome (SIRS) criteria and qSOFA.4,8-11 However, since the latest update of NEWS2 in 2017, there has been little study of its predictive ability. The Shock Index is a simple bedside score (heart rate divided by systolic blood pressure) that was developed to detect changes in cardiovascular performance before systemic shock onset. Although it was not developed for infection and has not been regularly applied in the sepsis literature, the Shock Index might be useful for identifying patients at increased risk of poor outcomes. Patients with higher and sustained Shock Index scores are more likely to experience morbidity, such as hyperlactatemia, vasopressor use, and organ failure, and also have an increased risk of mortality.12-14
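As an illustration of how little data these bedside scores need, the following minimal Python sketch computes the Shock Index as defined above and a qSOFA score. The function names are hypothetical. The qSOFA component thresholds are taken from the Sepsis-3 definition (respiratory rate ≥22/min, systolic blood pressure ≤100 mm Hg, altered mentation), and equating altered mentation with a Glasgow Coma Scale (GCS) score below 15 is an assumption of this sketch. A missing GCS is scored as normal, mirroring the handling described in the limitations of this study. No Shock Index cut-point is applied because none is stated in this text.

```python
from typing import Optional


def shock_index(heart_rate: float, systolic_bp: float) -> float:
    """Shock Index: heart rate (beats/min) divided by systolic BP (mm Hg)."""
    if systolic_bp <= 0:
        raise ValueError("systolic_bp must be positive")
    return heart_rate / systolic_bp


def qsofa(resp_rate: float, systolic_bp: float, gcs: Optional[int]) -> int:
    """qSOFA score (0-3), one point per criterion met.

    Assumption: altered mentation is represented as GCS < 15.
    A missing GCS (None) is treated as normal.
    """
    points = 0
    points += resp_rate >= 22        # tachypnea
    points += systolic_bp <= 100     # hypotension
    points += gcs is not None and gcs < 15  # altered mentation
    return points


# Hypothetical triage vitals, for illustration only.
si = shock_index(heart_rate=110, systolic_bp=95)
score = qsofa(resp_rate=24, systolic_bp=95, gcs=None)
print(f"Shock Index = {si:.2f}, qSOFA = {score}, qSOFA-positive = {score >= 2}")
```

With a missing GCS, a patient can reach qSOFA ≥2 only through respiratory rate and blood pressure, which is one way incomplete documentation can lower the apparent sensitivity of the score.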

Although the predictive abilities of these bedside risk stratification scores have been assessed individually using standard binary cut-points, the comparative performance of qSOFA, the Shock Index, and NEWS2 has not been evaluated in patients presenting to an emergency department (ED) with suspected sepsis.

METHODS

Design and Setting

We conducted a retrospective cohort study of ED patients who presented with suspected sepsis to the University of California San Francisco (UCSF) Helen Diller Medical Center at Parnassus Heights between June 1, 2012, and December 31, 2018. Our institution is a 785-bed academic teaching hospital with approximately 30,000 ED encounters per year. The study was approved with a waiver of informed consent by the UCSF Human Research Protection Program.

Participants

We use an Epic-based EHR platform (Epic 2017, Epic Systems Corporation) for clinical care, which was implemented on June 1, 2012. All data elements were obtained from Clarity, the relational database that stores Epic’s inpatient data. The study included encounters for patients age ≥18 years who had blood cultures ordered within 24 hours of ED presentation and administration of intravenous antibiotics within 24 hours. Repeat encounters were treated independently in our analysis.

Outcomes and Measures

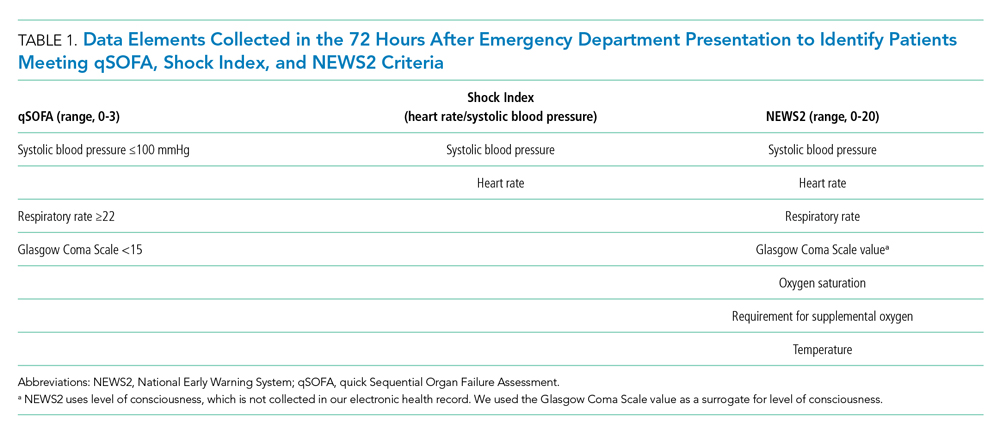

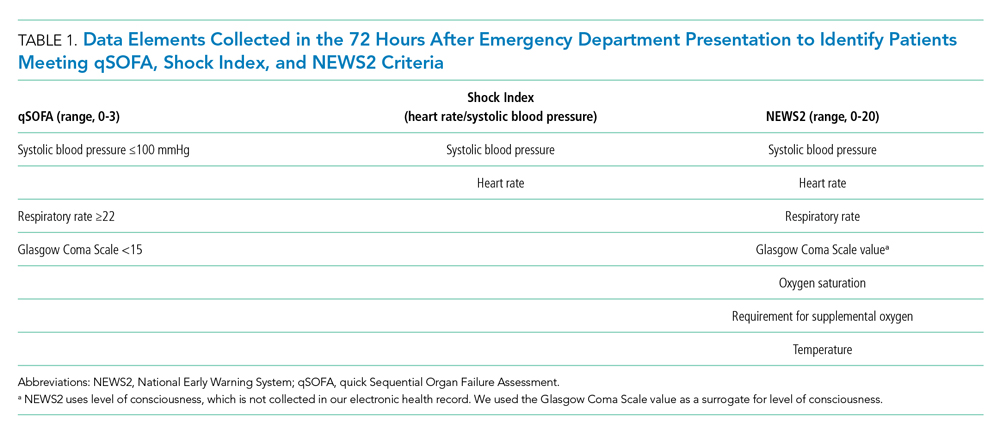

We compared the ability of qSOFA, the Shock Index, and NEWS2 to predict in-hospital mortality and admission to the ICU from the ED (ED-to-ICU admission). We used the

We compared demographic and clinical characteristics of patients who were positive for qSOFA, the Shock Index, and NEWS2. Demographic data were extracted from the EHR and included primary language, age, sex, and insurance status. All International Classification of Diseases (ICD)-9/10 diagnosis codes were pulled from Clarity billing tables. We used the Elixhauser comorbidity groupings19 of ICD-9/10 codes present on admission to identify preexisting comorbidities and underlying organ dysfunction. To estimate burden of comorbid illnesses, we calculated the validated van Walraven comorbidity index,20 which provides an estimated risk of in-hospital death based on documented Elixhauser comorbidities. Admission level of care (acute, stepdown, or intensive care) was collected for inpatient admissions to assess initial illness severity.21 We also evaluated discharge disposition and in-hospital mortality. Index blood culture results were collected, and dates and timestamps of mechanical ventilation, fluid, vasopressor, and antibiotic administration were obtained for the duration of the encounter.

UCSF uses an automated, real-time, algorithm-based severe sepsis alert that is triggered when a patient meets ≥2 SIRS criteria and again when the patient meets severe sepsis or septic shock criteria (ie, ≥2 SIRS criteria in addition to end-organ dysfunction and/or fluid nonresponsive hypotension). This sepsis screening alert was in use for the duration of our study.22

Statistical Analysis

We performed a subgroup analysis among those who were diagnosed with sepsis, according to the 2016 Third International Consensus Definitions for Sepsis and Septic Shock (Sepsis-3) criteria.

All statistical analyses were conducted using Stata 14 (StataCorp). We summarized differences in demographic and clinical characteristics among the populations meeting each severity score but elected not to conduct hypothesis testing because patients could be positive for one or more scores. We calculated sensitivity, specificity, positive predictive value (PPV), and negative predictive value (NPV) for each score to predict in-hospital mortality and ED-to-ICU admission. To allow comparison with other studies, we also created a composite outcome of either in-hospital mortality or ED-to-ICU admission.
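The four test characteristics named above all come from the same 2×2 table of score result against outcome. The following Python sketch shows the calculation on a small invented data set. It is not the Stata code used in the study, and the function name `score_metrics` and the toy values are hypothetical.

```python
def score_metrics(score_positive, outcome):
    """Return sensitivity, specificity, PPV, and NPV from paired booleans.

    Assumes every margin of the 2x2 table is nonzero (at least one
    positive and one negative score, one event and one non-event).
    """
    pairs = list(zip(score_positive, outcome))
    tp = sum(1 for p, o in pairs if p and o)          # score+, event
    fp = sum(1 for p, o in pairs if p and not o)      # score+, no event
    fn = sum(1 for p, o in pairs if not p and o)      # score-, event
    tn = sum(1 for p, o in pairs if not p and not o)  # score-, no event
    return {
        "sensitivity": tp / (tp + fn),
        "specificity": tn / (tn + fp),
        "ppv": tp / (tp + fp),
        "npv": tn / (tn + fn),
    }


# Invented example: 8 encounters, 2 events.
positive = [True, True, False, False, True, False, False, False]
died = [True, False, True, False, False, False, False, False]
print(score_metrics(positive, died))
```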

RESULTS

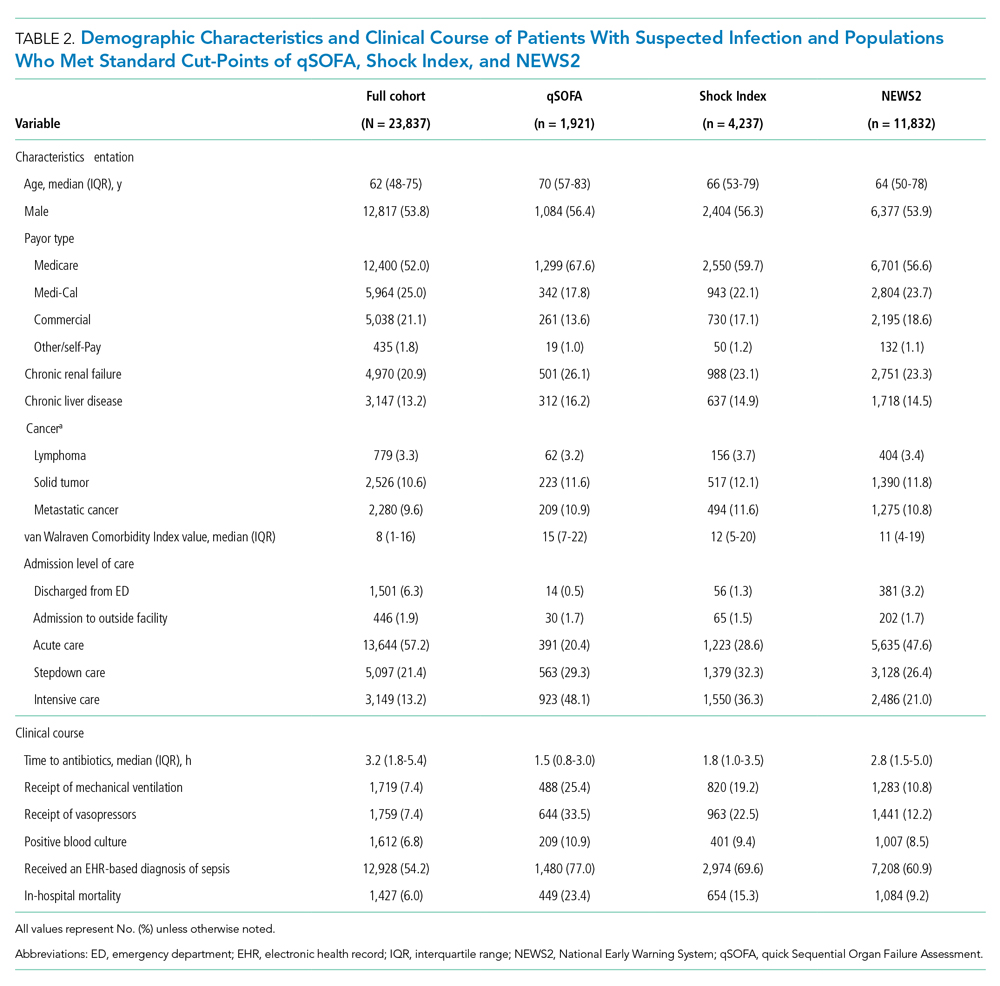

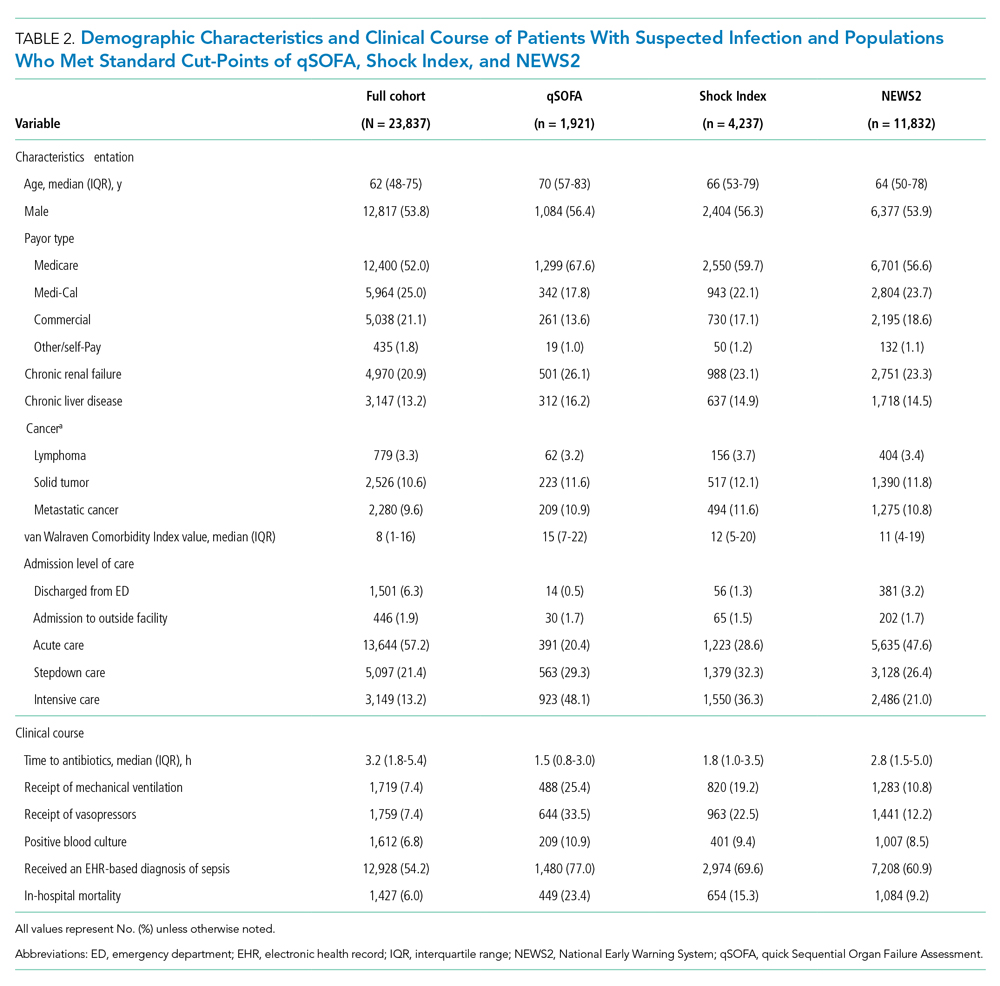

Within our sample, 23,837 ED patients had blood cultures ordered within 24 hours of ED presentation and were considered to have suspected sepsis. The mean age of the cohort was 60.8 years, and 1,612 (6.8%) had positive blood cultures. A total of 12,928 patients (54.2%) were found to have sepsis. We documented 1,427 in-hospital deaths (6.0%) and 3,149 (13.2%) ED-to-ICU admissions. At ED triage, 1,921 (8.1%) were qSOFA-positive, 4,273 (17.9%) were Shock Index-positive, and 11,832 (49.6%) were NEWS2-positive. At ED triage, blood pressure, heart rate, respiratory rate, and oxygen saturation were documented in >99% of patients, 93.5% had temperature documented, and 28.5% had a Glasgow Coma Scale (GCS) score recorded. If the window of assessment was widened to 1 hour, GCS was documented in only 44.2% of those with suspected sepsis.

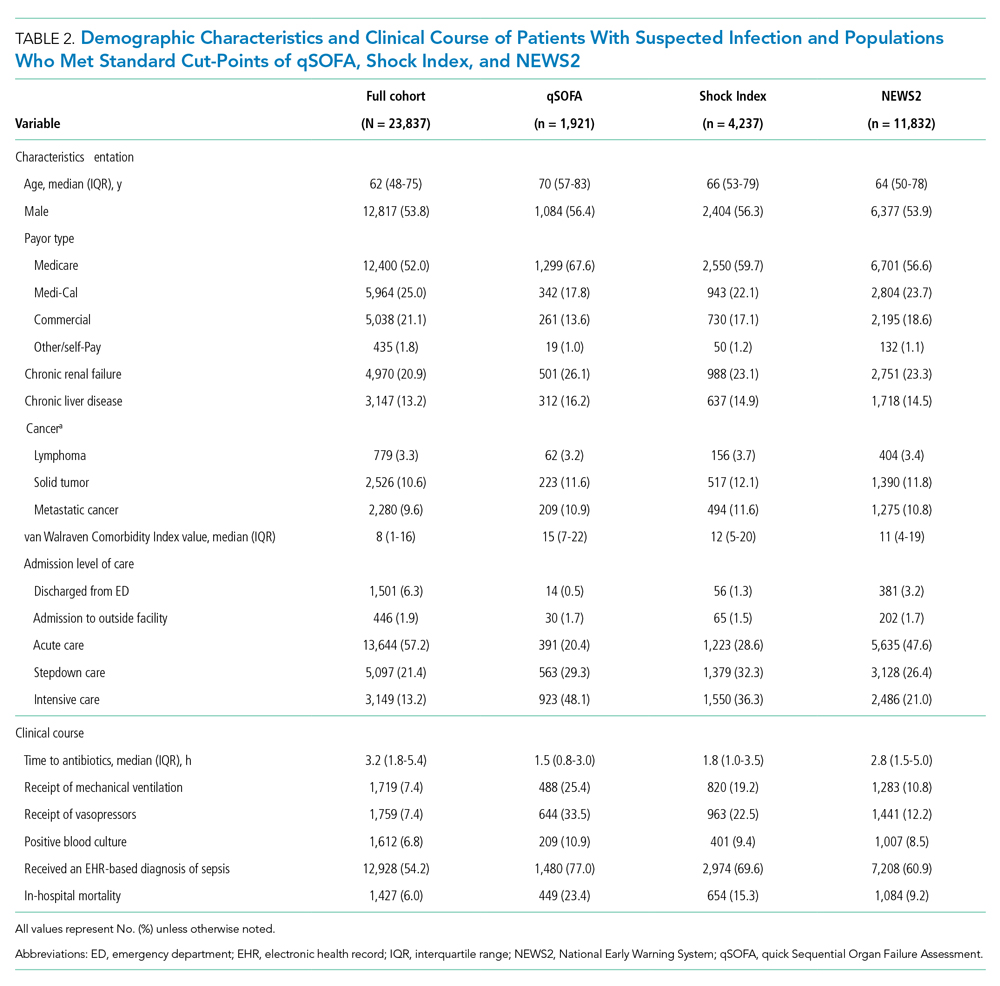

Demographic Characteristics and Clinical Course

qSOFA-positive patients received antibiotics more quickly than those who were Shock Index-positive or NEWS2-positive (median 1.5, 1.8, and 2.8 hours after admission, respectively). In addition, those who were qSOFA-positive were more likely to have a positive blood culture (10.9%, 9.4%, and 8.5%, respectively) and to receive an EHR-based diagnosis of sepsis (77.0%, 69.6%, and 60.9%, respectively) than those who were Shock Index- or NEWS2-positive. Those who were qSOFA-positive also were more likely to be mechanically ventilated during their hospital stay (25.4%, 19.2%, and 10.8%, respectively) and to receive vasopressors (33.5%, 22.5%, and 12.2%, respectively). In-hospital mortality also was more common among those who were qSOFA-positive at triage (23.4%, 15.3%, and 9.2%, respectively).

Because both qSOFA and NEWS2 incorporate GCS, we explored baseline characteristics of patients with GCS documented at triage (n = 6,794). These patients were older (median age 63 and 61 years, P < .0001), more likely to be male (54.9% and 53.4%, P = .0031), more likely to have renal failure (22.8% and 20.1%, P < .0001), more likely to have liver disease (14.2% and 12.8%, P = .006), had a higher van Walraven comorbidity score on presentation (median 10 and 8, P < .0001), and were more likely to go directly to the ICU from the ED (20.2% and 10.6%, P < .0001). However, among the 6,397 GCS scores documented at triage, only 1,579 (24.7%) were abnormal.

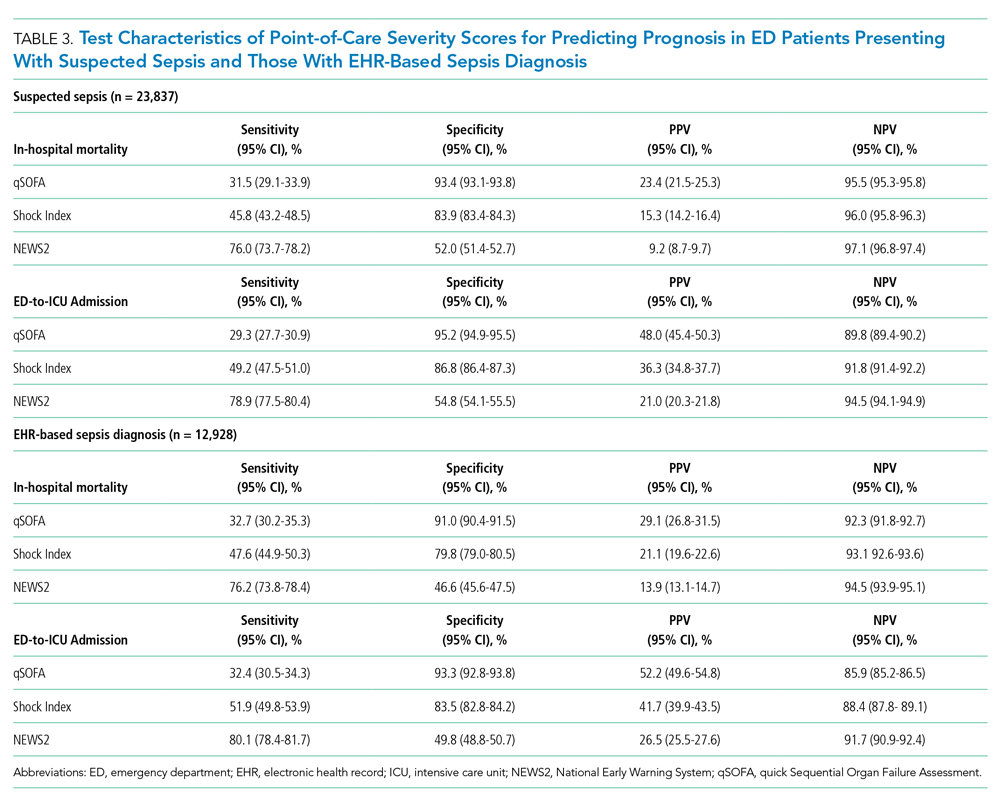

Test Characteristics of qSOFA, Shock Index, and NEWS2 for Predicting In-hospital Mortality and ED-to-ICU Admission

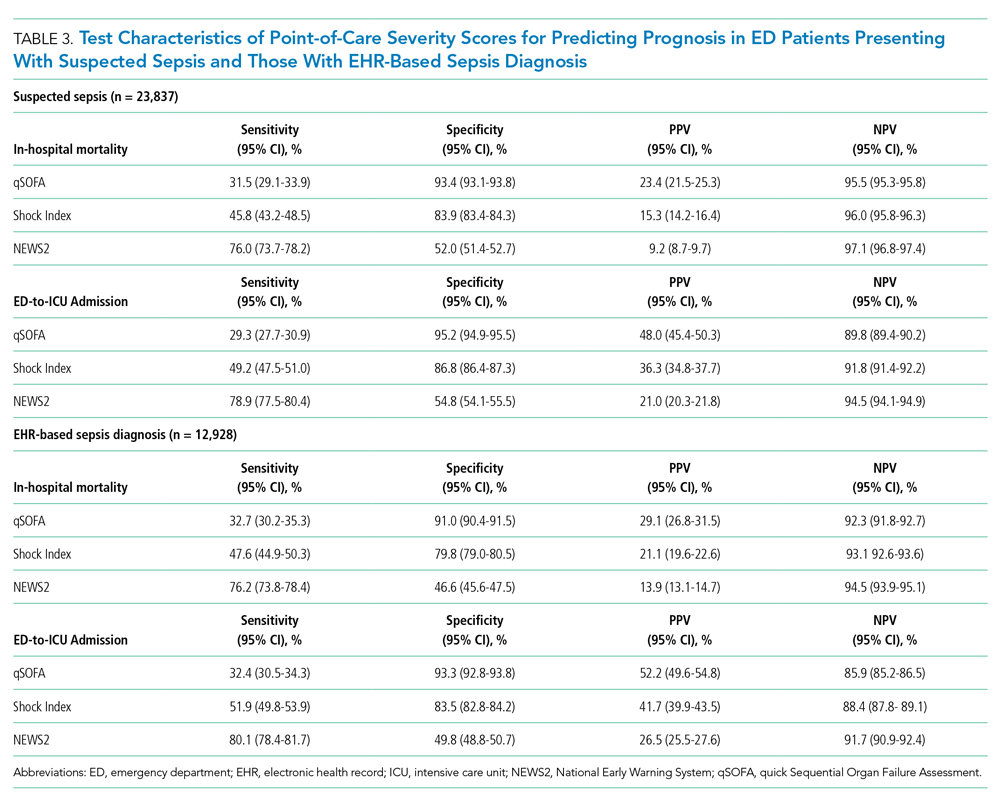

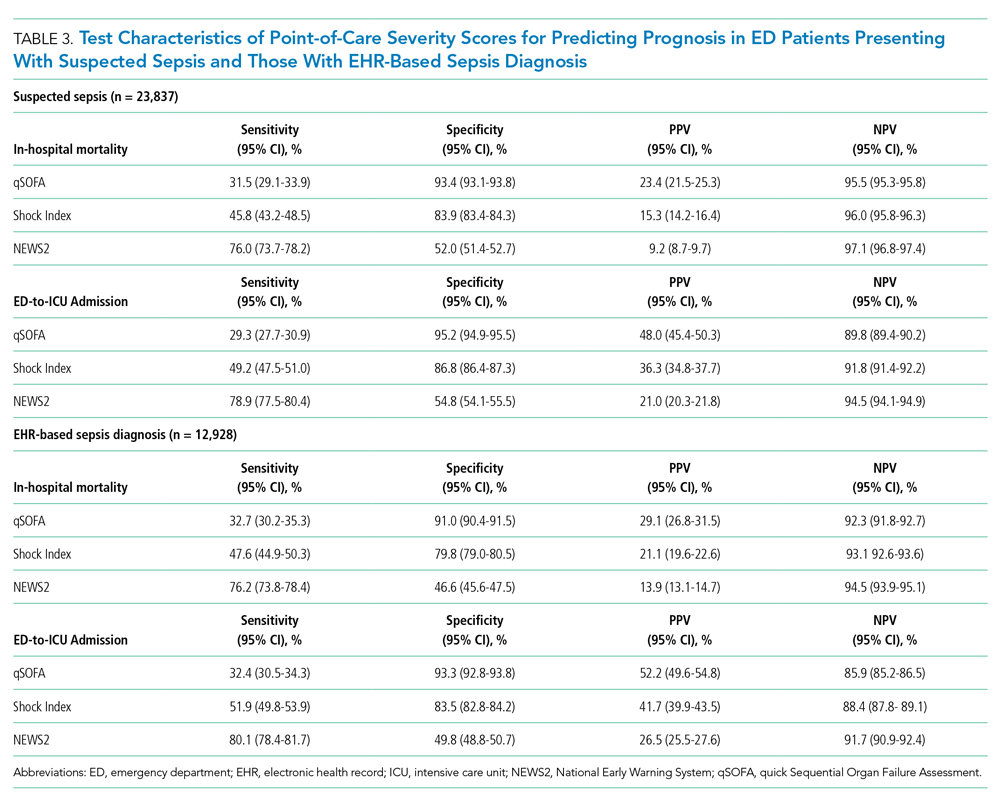

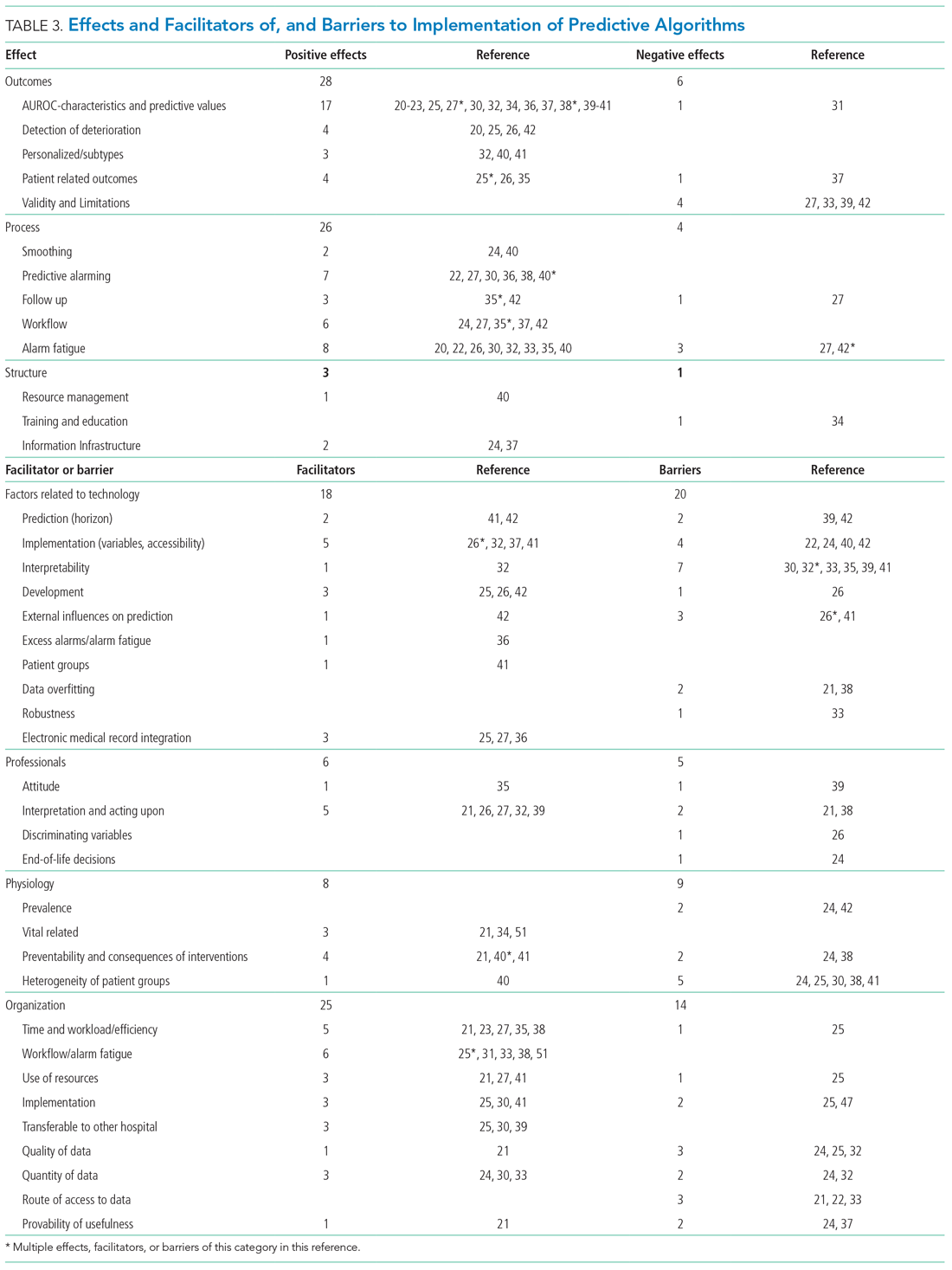

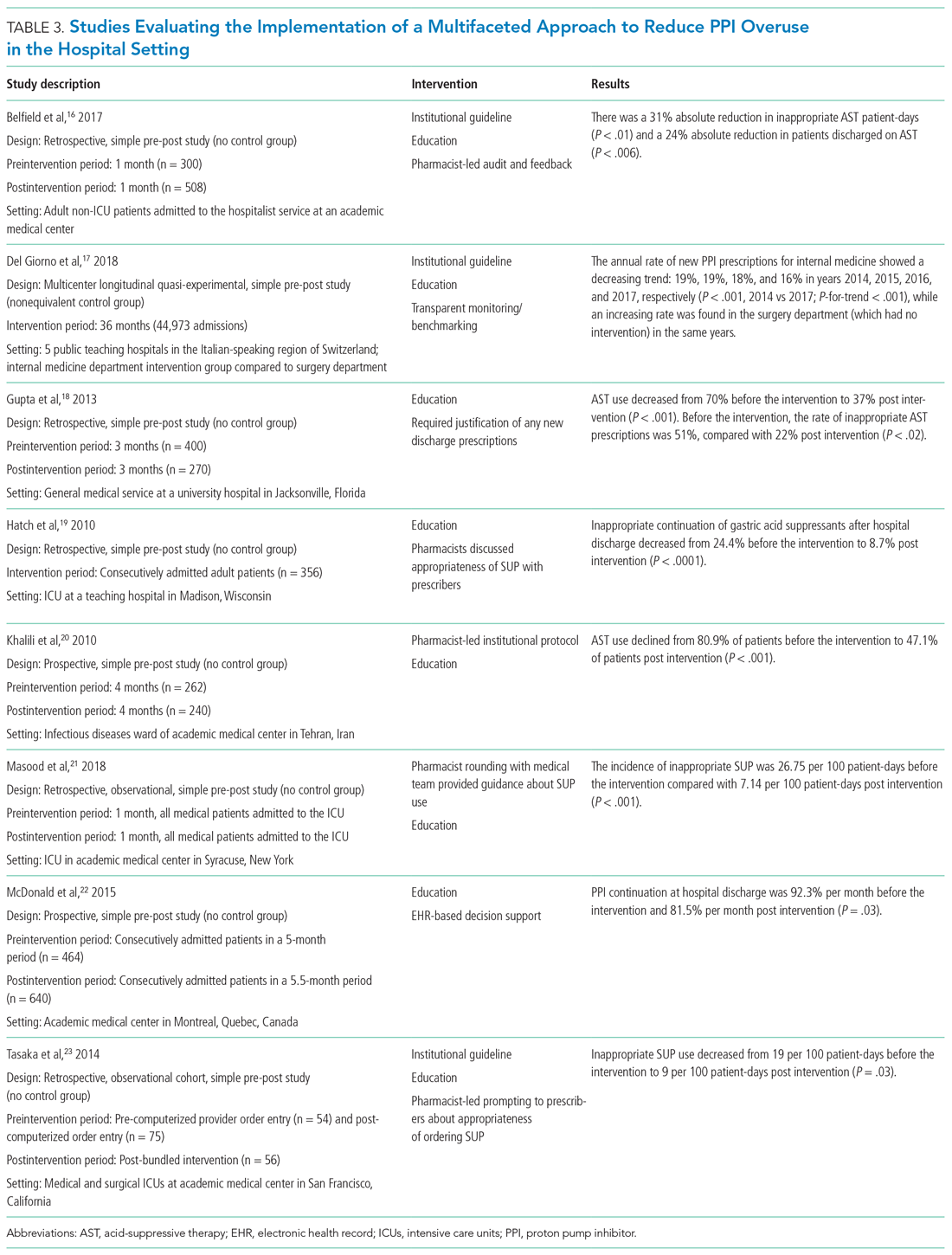

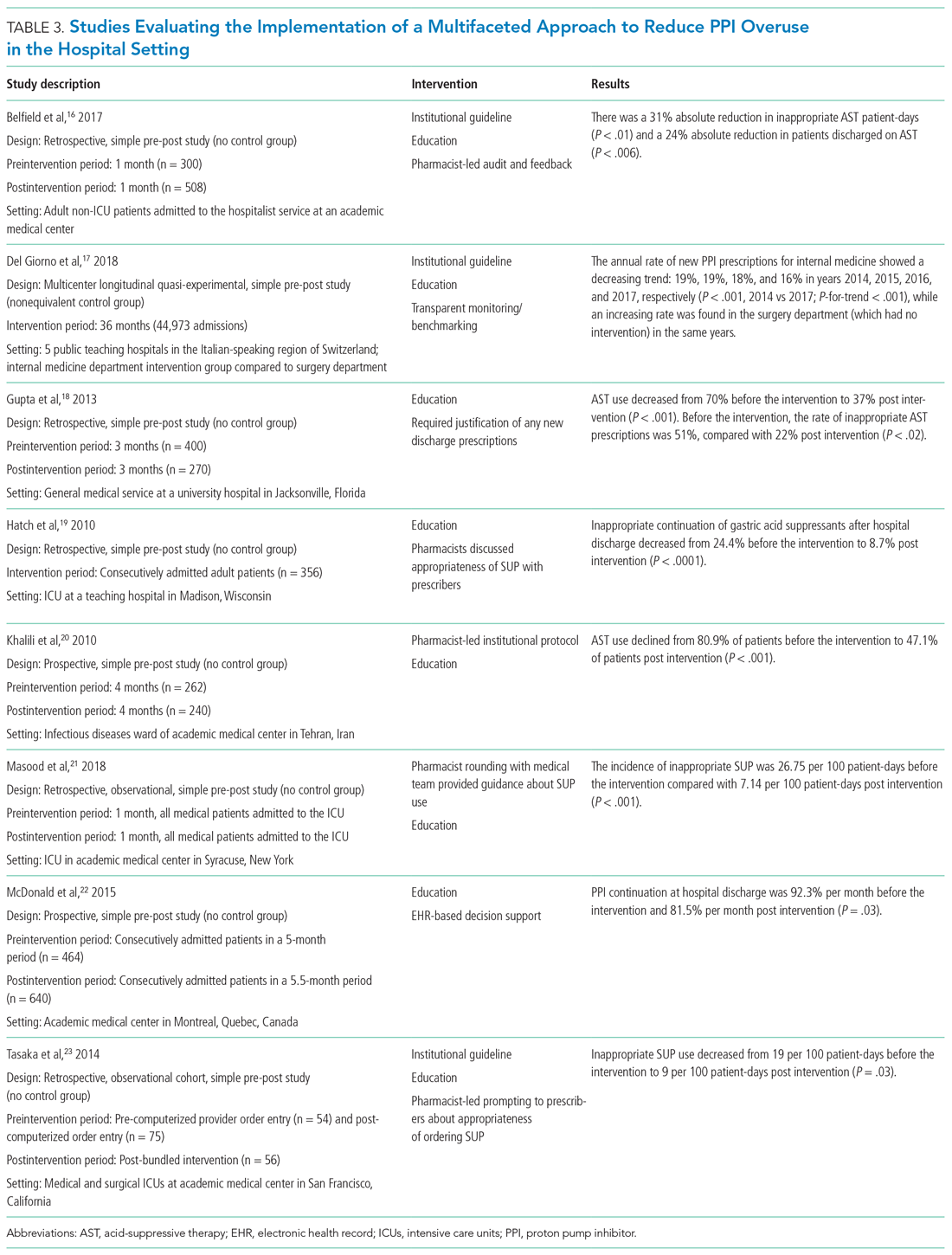

Among 23,837 patients with suspected sepsis, NEWS2 had the highest sensitivity for predicting in-hospital mortality (76.0%; 95% CI, 73.7%-78.2%) and ED-to-ICU admission (78.9%; 95% CI, 77.5%-80.4%) but had the lowest specificity for in-hospital mortality (52.0%; 95% CI, 51.4%-52.7%) and for ED-to-ICU admission (54.8%; 95% CI, 54.1%-55.5%) (Table 3). qSOFA had the lowest sensitivity for in-hospital mortality (31.5%; 95% CI, 29.1%-33.9%) and ED-to-ICU admission (29.3%; 95% CI, 27.7%-30.9%) but the highest specificity for in-hospital mortality (93.4%; 95% CI, 93.1%-93.8%) and ED-to-ICU admission (95.2%; 95% CI, 94.9%-95.5%). The Shock Index had a sensitivity that fell between qSOFA and NEWS2 for in-hospital mortality (45.8%; 95% CI, 43.2%-48.5%) and ED-to-ICU admission (49.2%; 95% CI, 47.5%-51.0%). The specificity of the Shock Index also was between qSOFA and NEWS2 for in-hospital mortality (83.9%; 95% CI, 83.4%-84.3%) and ED-to-ICU admission (86.8%; 95% CI, 86.4%-87.3%). All three scores exhibited relatively low PPV, ranging from 9.2% to 23.4% for in-hospital mortality and 21.0% to 48.0% for ED-to-ICU admission. Conversely, all three scores exhibited relatively high NPV, ranging from 95.5% to 97.1% for in-hospital mortality and 89.8% to 94.5% for ED-to-ICU admission.
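The low PPV and high NPV follow largely from the low prevalence of each outcome. As a rough check, the Python sketch below derives PPV and NPV from sensitivity, specificity, and prevalence using Bayes' theorem, with the rounded qSOFA values for in-hospital mortality reported above (sensitivity 31.5%, specificity 93.4%, prevalence 6.0%). The function name is hypothetical, and because the inputs are rounded the results only approximate the reported figures.

```python
def predictive_values(sensitivity, specificity, prevalence):
    """Return (PPV, NPV) from test characteristics and outcome prevalence."""
    tp = sensitivity * prevalence              # true-positive fraction
    fn = (1 - sensitivity) * prevalence        # false-negative fraction
    tn = specificity * (1 - prevalence)        # true-negative fraction
    fp = (1 - specificity) * (1 - prevalence)  # false-positive fraction
    return tp / (tp + fp), tn / (tn + fn)


# qSOFA for in-hospital mortality, rounded values from the text.
ppv, npv = predictive_values(sensitivity=0.315, specificity=0.934, prevalence=0.060)
print(f"PPV = {ppv:.1%}, NPV = {npv:.1%}")
```

The results come out near 23% and 96%, close to the upper PPV bound and the lower NPV bound reported for in-hospital mortality.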

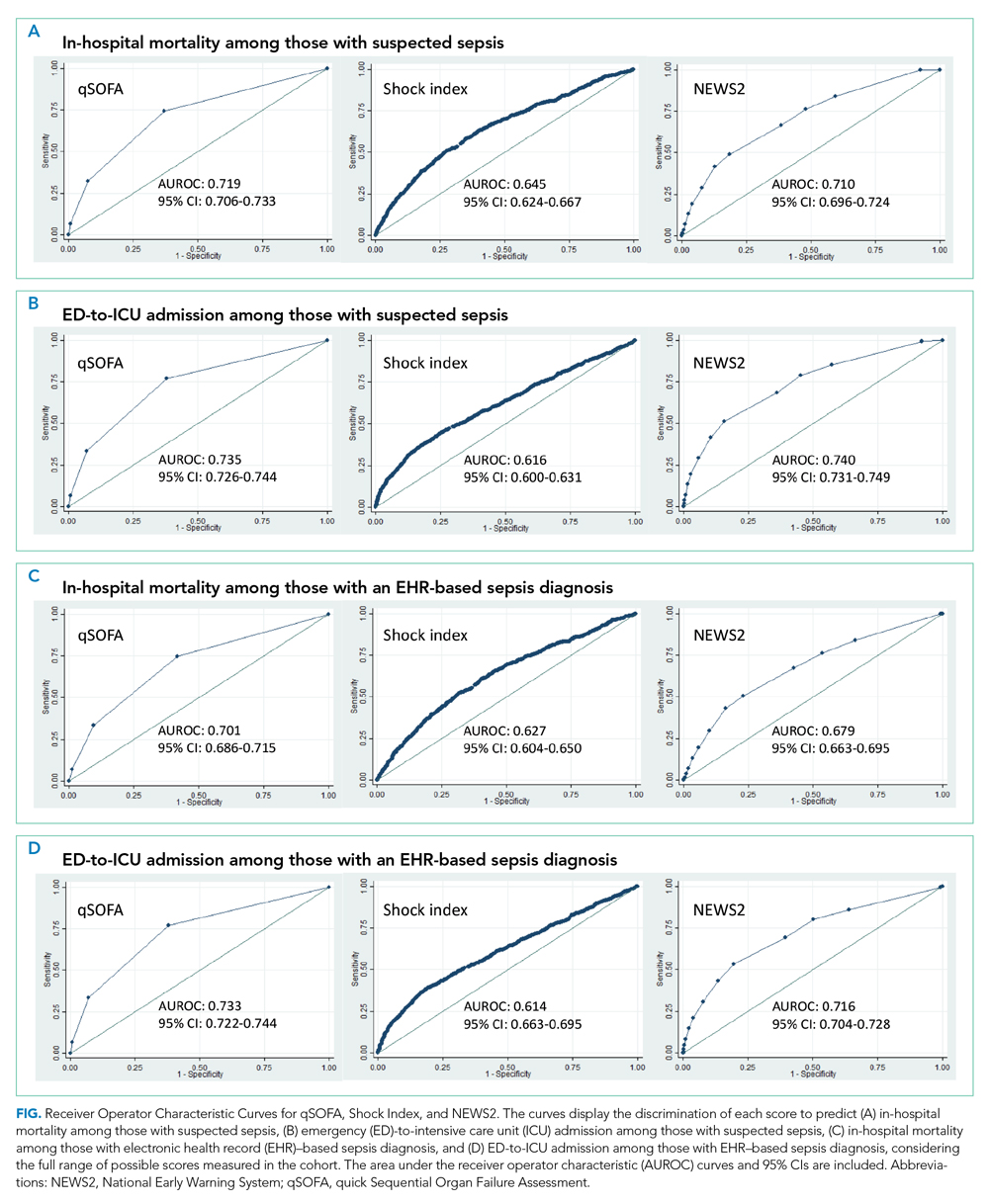

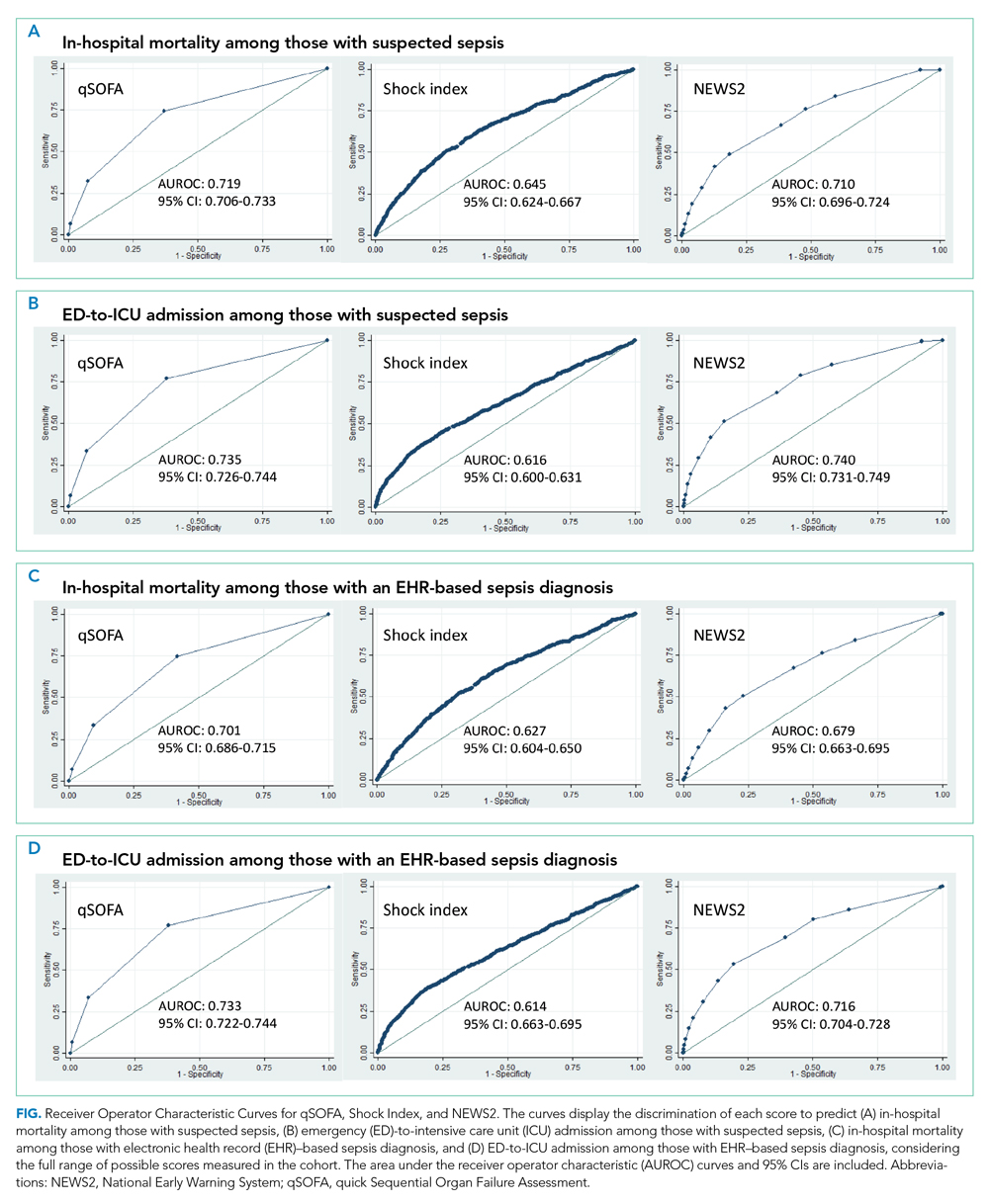

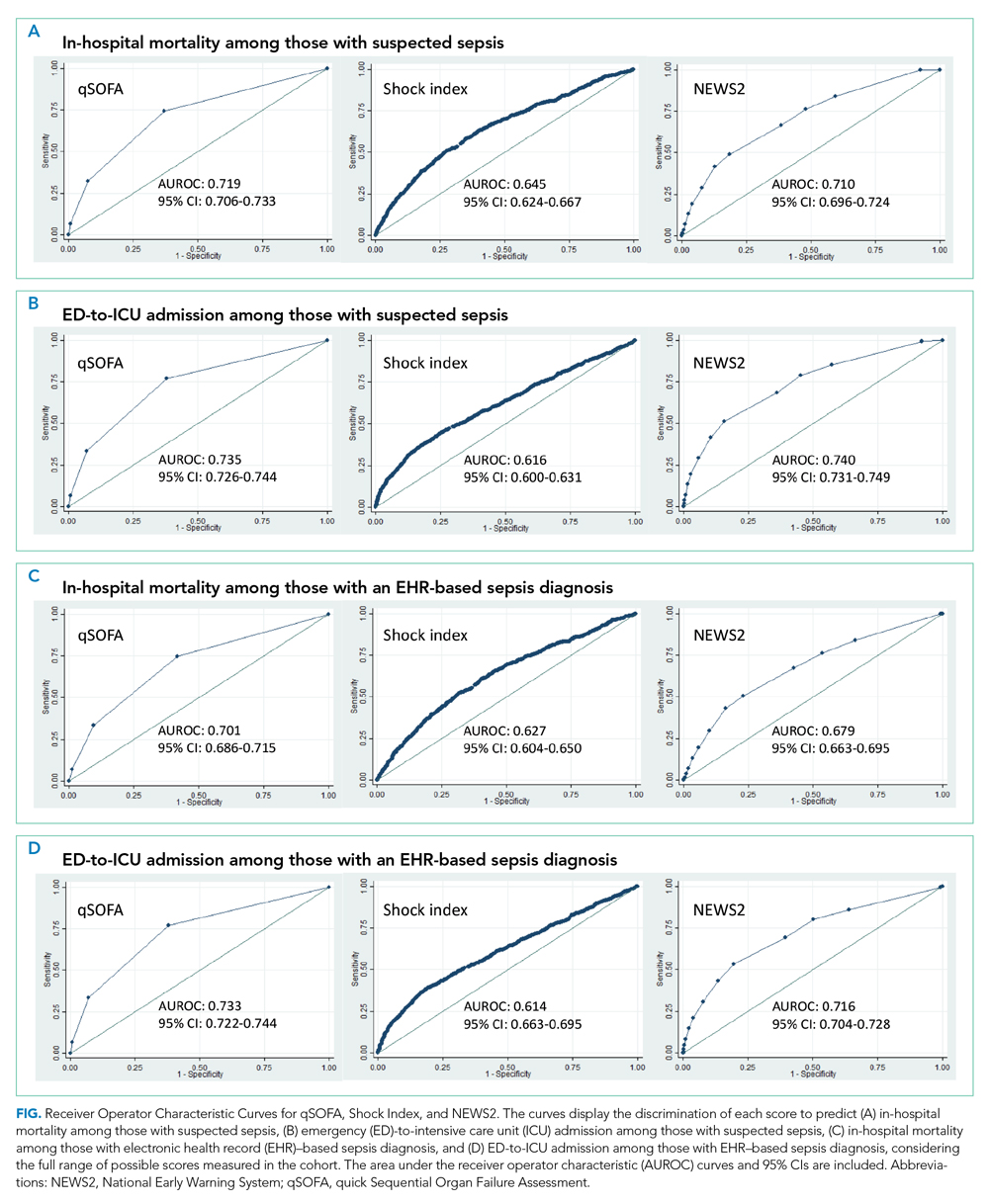

When considering a binary cutoff, the Shock Index exhibited the highest AUROC for in-hospital mortality (0.648; 95% CI, 0.635-0.662) and had a significantly higher AUROC than qSOFA (AUROC, 0.625; 95% CI, 0.612-0.637; P = .0005), but there was no difference compared with NEWS2 (AUROC, 0.640; 95% CI, 0.628-0.652; P = .2112). NEWS2 had a significantly higher AUROC than qSOFA for predicting in-hospital mortality (P = .0227). The Shock Index also exhibited the highest AUROC for ED-to-ICU admission (0.680; 95% CI, 0.617-0.689), which was significantly higher than the AUROC for qSOFA (P < .0001) and NEWS2 (P = .0151). NEWS2 had a significantly higher AUROC than qSOFA for predicting ED-to-ICU admission (P < .0001). Similar findings were seen in patients found to have sepsis.
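For a score dichotomized at a single cut-point, the ROC curve has only one operating point between (0, 0) and (1, 1), so the trapezoidal area under it reduces to the mean of sensitivity and specificity. The Python sketch below applies that identity to the rounded in-hospital mortality values reported in this section; the function name is hypothetical, and this is a consistency check rather than the method used to produce the published estimates or their P values.

```python
def binary_auroc(sensitivity, specificity):
    """AUROC of a single-threshold test: area under the two-segment ROC curve."""
    return (sensitivity + specificity) / 2


# Rounded sensitivity and specificity for in-hospital mortality from the text.
reported = {
    "qSOFA": (0.315, 0.934),
    "Shock Index": (0.458, 0.839),
    "NEWS2": (0.760, 0.520),
}
for name, (sens, spec) in reported.items():
    print(f"{name}: AUROC ~ {binary_auroc(sens, spec):.3f}")
```

The three values agree with the reported binary-cutoff AUROCs (0.625, 0.648, and 0.640) to within rounding, which shows that at a fixed cut-point the AUROC ranking summarizes the sensitivity and specificity trade-off and carries no additional information.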

DISCUSSION

In this retrospective cohort study of 23,837 patients who presented to the ED with suspected sepsis, the standard qSOFA threshold was met least frequently, followed by the Shock Index and NEWS2. NEWS2 had the highest sensitivity but the lowest specificity for predicting in-hospital mortality and ED-to-ICU admission, making it a challenging bedside risk stratification scale for identifying patients at risk of poor clinical outcomes. When comparing predictive performance among the three scales, qSOFA had the highest specificity and the Shock Index had the highest AUROC for in-hospital mortality and ED-to-ICU admission in this cohort of patients with suspected sepsis. These trends in sensitivity, specificity, and AUROC were consistent among those who met EHR criteria for a sepsis diagnosis. In the analysis of the three scoring systems using all available cut-points, qSOFA and NEWS2 had the highest AUROCs, followed by the Shock Index.

Considering the rapid progression from organ dysfunction to death in sepsis patients, as well as the difficulty establishing a sepsis diagnosis at triage,23 providers must quickly identify patients at increased risk of poor outcomes when they present to the ED. Sepsis alerts often are built using SIRS criteria,27 including the one used for sepsis surveillance at UCSF since 2012,22 but the white blood cell count criterion is subject to a laboratory lag and could lead to a delay in identification. Implementation of a point-of-care bedside score alert that uses readily available clinical data could allow providers to identify patients at greatest risk of poor outcomes immediately at ED presentation and triage, which motivated us to explore the predictive performance of qSOFA, the Shock Index, and NEWS2.

Our study is the first to provide a head-to-head comparison of the predictive performance of qSOFA, the Shock Index, and NEWS2, three easy-to-calculate bedside risk scores that use EHR data collected among patients with suspected sepsis. The Sepsis-3 guidelines recommend qSOFA to quickly identify non-ICU patients at greatest risk of poor outcomes because the measure exhibited predictive performance similar to the more extensive SOFA score outside the ICU.16,23 Although some studies have confirmed qSOFA’s high predictive performance,28-31 our test characteristics and AUROC findings are in line with other published analyses.4,6,10,17 The UK National Health Service is using NEWS2 to screen for patients at risk of poor outcomes from sepsis. Several analyses that assessed the predictive ability of NEWS have reported estimates in line with our findings.4,10,32 The Shock Index was introduced in 1967 and provided a metric to evaluate hemodynamic stability based on heart rate and systolic blood pressure.33 The Shock Index has been studied in several contexts, including sepsis,34 and studies show that a sustained Shock Index is associated with increased odds of vasopressor administration, higher prevalence of hyperlactatemia, and increased risk of poor outcomes in the ICU.13,14

For our study, we were particularly interested in exploring how the Shock Index would compare with more frequently used severity scores such as qSOFA and NEWS2 among patients with suspected sepsis, given the simplicity of its calculation and the easy availability of required data. In our cohort of 23,837 patients, only 159 had missing blood pressure and only 71 had missing heart rate. In contrast, both qSOFA and NEWS2 include an assessment of level of consciousness that can be subject to variability in assessment methods and EHR documentation across institutions.11 In our cohort, GCS within 30 minutes of ED presentation was missing in 72% of patients, which could have led to incomplete calculation of qSOFA and NEWS2 if a missing value was not actually within normal limits.

Several investigations relate qSOFA to NEWS but few compare qSOFA with the newer NEWS2, and even fewer evaluate the Shock Index with any of these scores.10,11,18,29,35-37 In general, studies have shown that NEWS exhibits a higher AUROC for predicting mortality, sepsis with organ dysfunction, and ICU admission, often as a composite outcome.4,11,18,37,38 A handful of studies compare the Shock Index to SIRS; however, little has been done to compare the Shock Index to qSOFA or NEWS2, scores that have been used specifically for sepsis and might be more predictive of poor outcomes than SIRS.33 In our study, the Shock Index had a higher AUROC than either qSOFA or NEWS2 for predicting in-hospital mortality and ED-to-ICU admission measured as separate outcomes and as a composite outcome using standard cut-points for these scores.

When selecting a severity score to apply in an institution, it is important to carefully evaluate the score’s test characteristics, in addition to considering the availability of reliable data. Tests with high sensitivity and NPV for the population being studied can be useful to rule out disease or risk of poor outcome, while tests with high specificity and PPV can be useful to rule in disease or risk of poor outcome.39 When considering specificity, qSOFA’s performance was superior to the Shock Index and NEWS2 in our study, but a small percentage of the population was identified using a cut-point of qSOFA ≥2. If we used qSOFA and applied this standard cut-point at our institution, we could be confident that those identified were at increased risk, but we would miss a significant number of patients who would experience a poor outcome. When considering sensitivity, performance of NEWS2 was superior to qSOFA and the Shock Index in our study, but one-half of the population was identified using a cut-point of NEWS2 ≥5. If we were to apply this standard NEWS2 cut-point at our institution, we would assume that one-half of our population was at risk, which might drive resource use toward patients who will not experience a poor outcome. Although none of the scores exhibited a robust AUROC measure, the Shock Index had the highest AUROC for in-hospital mortality and ED-to-ICU admission when using the standard binary cut-point, and its sensitivity and specificity are between those of qSOFA and NEWS2, potentially making it a score to use in settings where qSOFA and NEWS2 score components, such as altered mentation, are not reliably collected. Finally, our sensitivity analysis varying the binary cut-point of each score within our population demonstrated that the standard cut-points might not be as useful within a specific population and might need to be tailored for implementation, balancing sensitivity, specificity, PPV, and NPV to meet local priorities and ICU capacity.
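The idea of tailoring a cut-point can be sketched as a simple sweep: dichotomize the score at each candidate threshold and tabulate sensitivity and specificity. The Python example below uses invented scores and outcomes, and the function name `sweep_cut_points` is hypothetical. It is an illustration of the approach, not a reproduction of the sensitivity analysis in this study.

```python
def sweep_cut_points(scores, outcome, cut_points):
    """Return (cut_point, sensitivity, specificity) for each threshold.

    A patient is score-positive when score >= cut_point. Assumes the
    data contain at least one event and one non-event.
    """
    events = sum(1 for o in outcome if o)
    non_events = len(outcome) - events
    rows = []
    for cut in cut_points:
        tp = sum(1 for s, o in zip(scores, outcome) if s >= cut and o)
        tn = sum(1 for s, o in zip(scores, outcome) if s < cut and not o)
        rows.append((cut, tp / events, tn / non_events))
    return rows


# Invented example: 8 encounters with an integer score, 3 events.
scores = [0, 1, 1, 2, 3, 0, 2, 1]
outcome = [False, False, True, True, True, False, False, False]
for cut, sens, spec in sweep_cut_points(scores, outcome, [1, 2, 3]):
    print(f"cut-point >= {cut}: sensitivity {sens:.2f}, specificity {spec:.2f}")
```

Raising the threshold trades sensitivity for specificity, so the choice among candidate cut-points depends on whether missed patients or unnecessary escalations are the greater local concern.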

Our study has limitations. It is a single-center, retrospective analysis, factors that could reduce generalizability. However, it does include a large and diverse patient population spanning several years. Missing GCS data could have affected the predictive ability of qSOFA and NEWS2 in our cohort. We could not reliably impute GCS because of the high missingness and therefore assumed that missing values were normal, as was done in the Sepsis-3 derivation studies.16 Previous studies have attempted to impute GCS and have not observed improved performance of qSOFA to predict mortality.40 Because manually collected variables such as GCS are less reliably documented in the EHR, there might be limitations in their use for triage risk scores.

Although the current analysis focused on the predictive performance of qSOFA, the Shock Index, and NEWS2 at triage, performance of these scores could affect the ED team’s treatment decisions before handoff to the hospitalist team and the expected level of care the patient will receive after in-patient admission. These tests also have the advantage of being easy to calculate at the bedside over time, which could provide an objective assessment of longitudinal predicted prognosis.

CONCLUSION

Local priorities should drive selection of a screening tool, balancing sensitivity, specificity, PPV, and NPV to achieve the institution’s goals. qSOFA, Shock Index, and NEWS2 are risk stratification tools that can be easily implemented at ED triage using data available at the bedside. Although none of these scores performed strongly when comparing AUROCs, qSOFA was highly specific for identifying patients with poor outcomes, and NEWS2 was the most sensitive for ruling out those at high risk among patients with suspected sepsis. The Shock Index exhibited a sensitivity and specificity that fell between qSOFA and NEWS2 and also might be considered to identify those at increased risk, given its ease of implementation, particularly in settings where altered mentation is unreliably or inconsistently documented.

Acknowledgment

The authors thank the UCSF Division of Hospital Medicine Data Core for their assistance with data acquisition.

1. Jones SL, Ashton CM, Kiehne LB, et al. Outcomes and resource use of sepsis-associated stays by presence on admission, severity, and hospital type. Med Care. 2016;54(3):303-310. https://doi.org/10.1097/MLR.0000000000000481

2. Seymour CW, Gesten F, Prescott HC, et al. Time to treatment and mortality during mandated emergency care for sepsis. N Engl J Med. 2017;376(23):2235-2244. https://doi.org/10.1056/NEJMoa1703058

3. Kumar A, Roberts D, Wood KE, et al. Duration of hypotension before initiation of effective antimicrobial therapy is the critical determinant of survival in human septic shock. Crit Care Med. 2006;34(6):1589-1596. https://doi.org/10.1097/01.CCM.0000217961.75225.E9

4. Churpek MM, Snyder A, Sokol S, Pettit NN, Edelson DP. Investigating the impact of different suspicion of infection criteria on the accuracy of Quick Sepsis-Related Organ Failure Assessment, Systemic Inflammatory Response Syndrome, and Early Warning Scores. Crit Care Med. 2017;45(11):1805-1812. https://doi.org/10.1097/CCM.0000000000002648

5. Abdullah SMOB, Sørensen RH, Dessau RBC, Sattar SMRU, Wiese L, Nielsen FE. Prognostic accuracy of qSOFA in predicting 28-day mortality among infected patients in an emergency department: a prospective validation study. Emerg Med J. 2019;36(12):722-728. https://doi.org/10.1136/emermed-2019-208456

6. Kim KS, Suh GJ, Kim K, et al. Quick Sepsis-related Organ Failure Assessment score is not sensitive enough to predict 28-day mortality in emergency department patients with sepsis: a retrospective review. Clin Exp Emerg Med. 2019;6(1):77-83. https://doi.org/10.15441/ceem.17.294

7. National Early Warning Score (NEWS) 2: Standardising the assessment of acute-illness severity in the NHS. Royal College of Physicians; 2017.

8. Brink A, Alsma J, Verdonschot RJCG, et al. Predicting mortality in patients with suspected sepsis at the emergency department: a retrospective cohort study comparing qSOFA, SIRS and National Early Warning Score. PLoS One. 2019;14(1):e0211133. https://doi.org/10.1371/journal.pone.0211133

9. Redfern OC, Smith GB, Prytherch DR, Meredith P, Inada-Kim M, Schmidt PE. A comparison of the Quick Sequential (Sepsis-Related) Organ Failure Assessment Score and the National Early Warning Score in non-ICU patients with/without infection. Crit Care Med. 2018;46(12):1923-1933. https://doi.org/10.1097/CCM.0000000000003359

10. Churpek MM, Snyder A, Han X, et al. Quick Sepsis-related Organ Failure Assessment, Systemic Inflammatory Response Syndrome, and Early Warning Scores for detecting clinical deterioration in infected patients outside the intensive care unit. Am J Respir Crit Care Med. 2017;195(7):906-911. https://doi.org/10.1164/rccm.201604-0854OC

11. Goulden R, Hoyle MC, Monis J, et al. qSOFA, SIRS and NEWS for predicting inhospital mortality and ICU admission in emergency admissions treated as sepsis. Emerg Med J. 2018;35(6):345-349. https://doi.org/10.1136/emermed-2017-207120

12. Biney I, Shepherd A, Thomas J, Mehari A. Shock Index and outcomes in patients admitted to the ICU with sepsis. Chest. 2015;148(suppl 4):337A. https://doi.org/10.1378/chest.2281151

13. Wira CR, Francis MW, Bhat S, Ehrman R, Conner D, Siegel M. The shock index as a predictor of vasopressor use in emergency department patients with severe sepsis. West J Emerg Med. 2014;15(1):60-66. https://doi.org/10.5811/westjem.2013.7.18472

14. Berger T, Green J, Horeczko T, et al. Shock index and early recognition of sepsis in the emergency department: pilot study. West J Emerg Med. 2013;14(2):168-174. https://doi.org/10.5811/westjem.2012.8.11546

15. Middleton DJ, Smith TO, Bedford R, Neilly M, Myint PK. Shock Index predicts outcome in patients with suspected sepsis or community-acquired pneumonia: a systematic review. J Clin Med. 2019;8(8):1144. https://doi.org/10.3390/jcm8081144

16. Seymour CW, Liu VX, Iwashyna TJ, et al. Assessment of clinical criteria for sepsis: for the Third International Consensus Definitions for Sepsis and Septic Shock (Sepsis-3). JAMA. 2016;315(8):762-774. https://doi.org/10.1001/jama.2016.0288

17. Abdullah S, Sørensen RH, Dessau RBC, Sattar S, Wiese L, Nielsen FE. Prognostic accuracy of qSOFA in predicting 28-day mortality among infected patients in an emergency department: a prospective validation study. Emerg Med J. 2019;36(12):722-728. https://doi.org/10.1136/emermed-2019-208456

18. Usman OA, Usman AA, Ward MA. Comparison of SIRS, qSOFA, and NEWS for the early identification of sepsis in the Emergency Department. Am J Emerg Med. 2018;37(8):1490-1497. https://doi.org/10.1016/j.ajem.2018.10.058

19. Elixhauser A, Steiner C, Harris DR, Coffey RM. Comorbidity measures for use with administrative data. Med Care. 1998;36(1):8-27. https://doi.org/10.1097/00005650-199801000-00004

20. van Walraven C, Austin PC, Jennings A, Quan H, Forster AJ. A modification of the Elixhauser comorbidity measures into a point system for hospital death using administrative data. Med Care. 2009;47(6):626-633. https://doi.org/10.1097/MLR.0b013e31819432e5

21. Prin M, Wunsch H. The role of stepdown beds in hospital care. Am J Respir Crit Care Med. 2014;190(11):1210-1216. https://doi.org/10.1164/rccm.201406-1117PP

22. Narayanan N, Gross AK, Pintens M, Fee C, MacDougall C. Effect of an electronic medical record alert for severe sepsis among ED patients. Am J Emerg Med. 2016;34(2):185-188. https://doi.org/10.1016/j.ajem.2015.10.005

23. Singer M, Deutschman CS, Seymour CW, et al. The Third International Consensus Definitions for Sepsis and Septic Shock (Sepsis-3). JAMA. 2016;315(8):801-810. https://doi.org/10.1001/jama.2016.0287

24. Rhee C, Dantes R, Epstein L, et al. Incidence and trends of sepsis in US hospitals using clinical vs claims data, 2009-2014. JAMA. 2017;318(13):1241-1249. https://doi.org/10.1001/jama.2017.13836

25. Safari S, Baratloo A, Elfil M, Negida A. Evidence based emergency medicine; part 5 receiver operating curve and area under the curve. Emerg (Tehran). 2016;4(2):111-113.

26. DeLong ER, DeLong DM, Clarke-Pearson DL. Comparing the areas under two or more correlated receiver operating characteristic curves: a nonparametric approach. Biometrics. 1988;44(3):837-845.

27. Kangas C, Iverson L, Pierce D. Sepsis screening: combining Early Warning Scores and SIRS Criteria. Clin Nurs Res. 2021;30(1):42-49. https://doi.org/10.1177/1054773818823334

28. Freund Y, Lemachatti N, Krastinova E, et al. Prognostic accuracy of Sepsis-3 Criteria for in-hospital mortality among patients with suspected infection presenting to the emergency department. JAMA. 2017;317(3):301-308. https://doi.org/10.1001/jama.2016.20329

29. Finkelsztein EJ, Jones DS, Ma KC, et al. Comparison of qSOFA and SIRS for predicting adverse outcomes of patients with suspicion of sepsis outside the intensive care unit. Crit Care. 2017;21(1):73. https://doi.org/10.1186/s13054-017-1658-5

30. Canet E, Taylor DM, Khor R, Krishnan V, Bellomo R. qSOFA as predictor of mortality and prolonged ICU admission in Emergency Department patients with suspected infection. J Crit Care. 2018;48:118-123. https://doi.org/10.1016/j.jcrc.2018.08.022

31. Anand V, Zhang Z, Kadri SS, Klompas M, Rhee C; CDC Prevention Epicenters Program. Epidemiology of Quick Sequential Organ Failure Assessment criteria in undifferentiated patients and association with suspected infection and sepsis. Chest. 2019;156(2):289-297. https://doi.org/10.1016/j.chest.2019.03.032

32. Hamilton F, Arnold D, Baird A, Albur M, Whiting P. Early Warning Scores do not accurately predict mortality in sepsis: A meta-analysis and systematic review of the literature. J Infect. 2018;76(3):241-248. https://doi.org/10.1016/j.jinf.2018.01.002

33. Koch E, Lovett S, Nghiem T, Riggs RA, Rech MA. Shock Index in the emergency department: utility and limitations. Open Access Emerg Med. 2019;11:179-199. https://doi.org/10.2147/OAEM.S178358

34. Yussof SJ, Zakaria MI, Mohamed FL, Bujang MA, Lakshmanan S, Asaari AH. Value of Shock Index in prognosticating the short-term outcome of death for patients presenting with severe sepsis and septic shock in the emergency department. Med J Malaysia. 2012;67(4):406-411.

35. Siddiqui S, Chua M, Kumaresh V, Choo R. A comparison of pre ICU admission SIRS, EWS and q SOFA scores for predicting mortality and length of stay in ICU. J Crit Care. 2017;41:191-193. https://doi.org/10.1016/j.jcrc.2017.05.017

36. Costa RT, Nassar AP, Caruso P. Accuracy of SOFA, qSOFA, and SIRS scores for mortality in cancer patients admitted to an intensive care unit with suspected infection. J Crit Care. 2018;45:52-57. https://doi.org/10.1016/j.jcrc.2017.12.024

37. Mellhammar L, Linder A, Tverring J, et al. NEWS2 is Superior to qSOFA in detecting sepsis with organ dysfunction in the emergency department. J Clin Med. 2019;8(8):1128. https://doi.org/10.3390/jcm8081128

38. Szakmany T, Pugh R, Kopczynska M, et al. Defining sepsis on the wards: results of a multi-centre point-prevalence study comparing two sepsis definitions. Anaesthesia. 2018;73(2):195-204. https://doi.org/10.1111/anae.14062

39. Newman TB, Kohn MA. Evidence-Based Diagnosis: An Introduction to Clinical Epidemiology. Cambridge University Press; 2009.

40. Askim Å, Moser F, Gustad LT, et al. Poor performance of quick-SOFA (qSOFA) score in predicting severe sepsis and mortality - a prospective study of patients admitted with infection to the emergency department. Scand J Trauma Resusc Emerg Med. 2017;25(1):56. https://doi.org/10.1186/s13049-017-0399-4


Statistical Analysis

We performed a subgroup analysis among those who were diagnosed with sepsis, according to the 2016 Third International Consensus Definitions for Sepsis and Septic Shock (Sepsis-3) criteria.

All statistical analyses were conducted using Stata 14 (StataCorp). We summarized differences in demographic and clinical characteristics among the populations meeting each severity score but elected not to conduct hypothesis testing because patients could be positive for one or more scores. We calculated sensitivity, specificity, positive predictive value (PPV), and negative predictive value (NPV) for each score to predict in-hospital mortality and ED-to-ICU admission. To allow comparison with other studies, we also created a composite outcome of either in-hospital mortality or ED-to-ICU admission.
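The four test characteristics named above all derive from a single 2 × 2 table of score result against outcome. The following Python sketch shows the arithmetic; it is not the Stata code used for the analysis, and the counts in the example are invented purely for illustration.

```python
def diagnostic_metrics(tp: int, fp: int, fn: int, tn: int) -> dict:
    """Compute test characteristics from 2x2 table counts.

    tp: score-positive patients who had the outcome
    fp: score-positive patients who did not
    fn: score-negative patients who had the outcome
    tn: score-negative patients who did not
    """
    return {
        "sensitivity": tp / (tp + fn),  # share of outcomes that were flagged
        "specificity": tn / (tn + fp),  # share of non-outcomes not flagged
        "ppv": tp / (tp + fp),          # share of flagged who had the outcome
        "npv": tn / (tn + fn),          # share of unflagged who did not
    }


# HYPOTHETICAL counts, not study data.
example = diagnostic_metrics(tp=40, fp=60, fn=10, tn=890)
```

With these made-up counts, sensitivity is 40/50 and PPV is 40/100, which shows how a score can capture most outcomes while most of the patients it flags still do not experience one.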

RESULTS

Within our sample, 23,837 ED patients had blood cultures ordered within 24 hours of ED presentation and were considered to have suspected sepsis. The mean age of the cohort was 60.8 years, and 1,612 (6.8%) had positive blood cultures. A total of 12,928 patients (54.2%) were found to have sepsis. We documented 1,427 in-hospital deaths (6.0%) and 3,149 (13.2%) ED-to-ICU admissions. At ED triage, 1,921 (8.1%) were qSOFA-positive, 4,273 (17.9%) were Shock Index-positive, and 11,832 (49.6%) were NEWS2-positive. At ED triage, blood pressure, heart rate, respiratory rate, and oxygen saturation were documented in >99% of patients, 93.5% had temperature documented, and 28.5% had GCS recorded. Even when the window of assessment was widened to 1 hour, GCS was documented in only 44.2% of those with suspected sepsis.

Demographic Characteristics and Clinical Course

qSOFA-positive patients received antibiotics more quickly than those who were Shock Index-positive or NEWS2-positive (median 1.5, 1.8, and 2.8 hours after admission, respectively). In addition, those who were qSOFA-positive were more likely to have a positive blood culture (10.9%, 9.4%, and 8.5%, respectively) and to receive an EHR-based diagnosis of sepsis (77.0%, 69.6%, and 60.9%, respectively) than those who were Shock Index- or NEWS2-positive. Those who were qSOFA-positive also were more likely to be mechanically ventilated during their hospital stay (25.4%, 19.2%, and 10.8%, respectively) and to receive vasopressors (33.5%, 22.5%, and 12.2%, respectively). In-hospital mortality also was more common among those who were qSOFA-positive at triage (23.4%, 15.3%, and 9.2%, respectively).

Because both qSOFA and NEWS2 incorporate GCS, we explored baseline characteristics of patients with GCS documented at triage (n = 6,794). These patients were older (median age 63 vs 61 years, P < .0001), more likely to be male (54.9% vs 53.4%, P = .0031), more likely to have renal failure (22.8% vs 20.1%, P < .0001), more likely to have liver disease (14.2% vs 12.8%, P = .006), had a higher van Walraven comorbidity score on presentation (median 10 vs 8, P < .0001), and were more likely to go directly to the ICU from the ED (20.2% vs 10.6%, P < .0001). However, among the 6,397 GCS scores documented at triage, only 1,579 (24.7%) were abnormal.

Test Characteristics of qSOFA, Shock Index, and NEWS2 for Predicting In-hospital Mortality and ED-to-ICU Admission

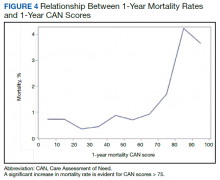

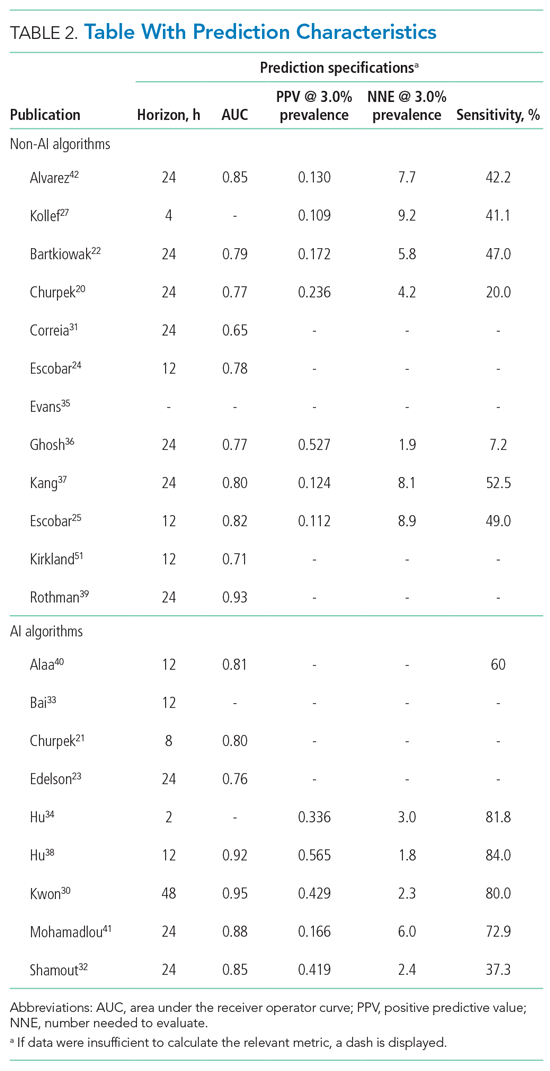

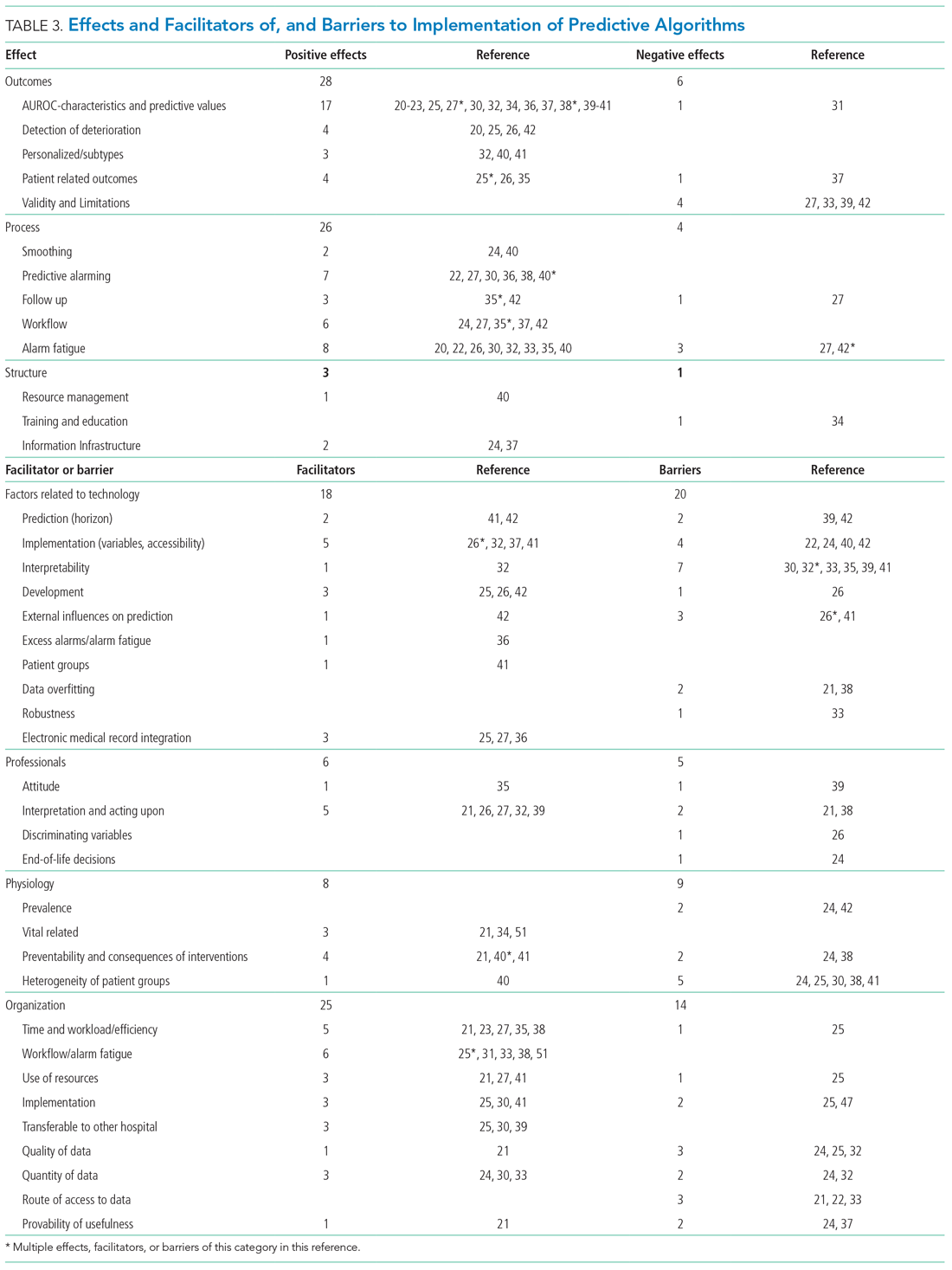

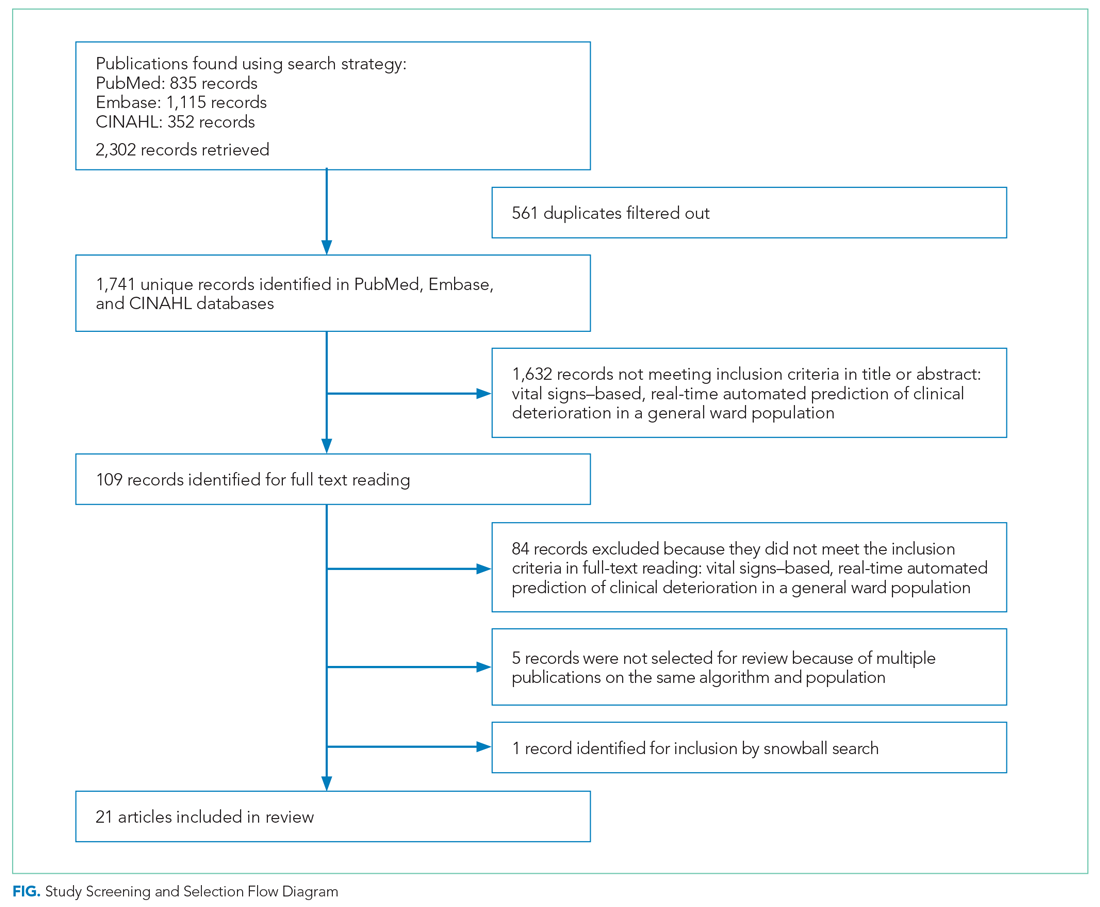

Among 23,837 patients with suspected sepsis, NEWS2 had the highest sensitivity for predicting in-hospital mortality (76.0%; 95% CI, 73.7%-78.2%) and ED-to-ICU admission (78.9%; 95% CI, 77.5%-80.4%) but had the lowest specificity for in-hospital mortality (52.0%; 95% CI, 51.4%-52.7%) and for ED-to-ICU admission (54.8%; 95% CI, 54.1%-55.5%) (Table 3). qSOFA had the lowest sensitivity for in-hospital mortality (31.5%; 95% CI, 29.1%-33.9%) and ED-to-ICU admission (29.3%; 95% CI, 27.7%-30.9%) but the highest specificity for in-hospital mortality (93.4%; 95% CI, 93.1%-93.8%) and ED-to-ICU admission (95.2%; 95% CI, 94.9%-95.5%). The Shock Index had a sensitivity that fell between qSOFA and NEWS2 for in-hospital mortality (45.8%; 95% CI, 43.2%-48.5%) and ED-to-ICU admission (49.2%; 95% CI, 47.5%-51.0%). The specificity of the Shock Index also was between qSOFA and NEWS2 for in-hospital mortality (83.9%; 95% CI, 83.4%-84.3%) and ED-to-ICU admission (86.8%; 95% CI, 86.4%-87.3%). All three scores exhibited relatively low PPV, ranging from 9.2% to 23.4% for in-hospital mortality and 21.0% to 48.0% for ED-to-ICU admission. Conversely, all three scores exhibited relatively high NPV, ranging from 95.5% to 97.1% for in-hospital mortality and 89.8% to 94.5% for ED-to-ICU admission.
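The low PPV and high NPV values follow largely from the low outcome prevalence (6.0% in-hospital mortality). As a rough check, predictive values can be recomputed from the rounded sensitivity, specificity, and prevalence reported above. The function below is a sketch written for this purpose, not the original analysis code, and because its inputs are rounded the results are approximate.

```python
def predictive_values(sensitivity: float, specificity: float,
                      prevalence: float) -> tuple:
    """Return (PPV, NPV) from sensitivity, specificity, and prevalence.

    Each term is the expected fraction of the whole cohort falling in
    one cell of the 2x2 table.
    """
    tp = sensitivity * prevalence
    fn = (1 - sensitivity) * prevalence
    fp = (1 - specificity) * (1 - prevalence)
    tn = specificity * (1 - prevalence)
    return tp / (tp + fp), tn / (tn + fn)


# Rounded in-hospital mortality figures from the paragraph above.
MORTALITY_PREVALENCE = 0.060
news2_ppv, news2_npv = predictive_values(0.760, 0.520, MORTALITY_PREVALENCE)
qsofa_ppv, qsofa_npv = predictive_values(0.315, 0.934, MORTALITY_PREVALENCE)
```

Under these rounded inputs, NEWS2 yields a PPV near 9% and an NPV near 97%, while qSOFA yields a PPV near 23% and an NPV near 96%, which is consistent with the ranges reported for in-hospital mortality.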

When considering a binary cutoff, the Shock Index exhibited the highest AUROC for in-hospital mortality (0.648; 95% CI, 0.635-0.662) and had a significantly higher AUROC than qSOFA (AUROC, 0.625; 95% CI, 0.612-0.637; P = .0005), but there was no difference compared with NEWS2 (AUROC, 0.640; 95% CI, 0.628-0.652; P = .2112). NEWS2 had a significantly higher AUROC than qSOFA for predicting in-hospital mortality (P = .0227). The Shock Index also exhibited the highest AUROC for ED-to-ICU admission (0.680; 95% CI, 0.617-0.689), which was significantly higher than the AUROC for qSOFA (P < .0001) and NEWS2 (P = .0151). NEWS2 had a significantly higher AUROC than qSOFA for predicting ED-to-ICU admission (P < .0001). Similar findings were seen in patients found to have sepsis.
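One way to read the binary-cutoff AUROCs is geometric: a dichotomized score contributes a single point to the ROC plot, and the area under the two line segments joining (0, 0), that point, and (1, 1) equals the mean of sensitivity and specificity. The sketch below applies that identity to the rounded values reported in the preceding section. It assumes the reported AUROCs were obtained in an equivalent way, which the text does not state.

```python
def binary_auroc(sensitivity: float, specificity: float) -> float:
    """Area under a one-cut-point ROC curve using the trapezoid rule."""
    fpr = 1 - specificity
    # Triangle from (0, 0) up to the operating point (fpr, sensitivity).
    area_left = 0.5 * fpr * sensitivity
    # Trapezoid from the operating point across to (1, 1).
    area_right = 0.5 * (1 - fpr) * (sensitivity + 1)
    # Algebraically this sum reduces to (sensitivity + specificity) / 2.
    return area_left + area_right


# Rounded in-hospital mortality sensitivity and specificity from the text.
auroc_qsofa = binary_auroc(0.315, 0.934)
auroc_shock_index = binary_auroc(0.458, 0.839)
auroc_news2 = binary_auroc(0.760, 0.520)
```

These inputs give approximately 0.62 for qSOFA, 0.65 for the Shock Index, and 0.64 for NEWS2, closely matching the reported values and making clear that a binary AUROC rewards a balance of sensitivity and specificity rather than excellence in either one.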

DISCUSSION

In this retrospective cohort study of 23,837 patients who presented to the ED with suspected sepsis, the standard qSOFA threshold was met least frequently, followed by the Shock Index and NEWS2. NEWS2 had the highest sensitivity but the lowest specificity for predicting in-hospital mortality and ED-to-ICU admission, making it a challenging bedside risk stratification scale for identifying patients at risk of poor clinical outcomes. When comparing predictive performance among the three scales, qSOFA had the highest specificity and the Shock Index had the highest AUROC for in-hospital mortality and ED-to-ICU admission in this cohort of patients with suspected sepsis. These trends in sensitivity, specificity, and AUROC were consistent among those who met EHR criteria for a sepsis diagnosis. In the analysis of the three scoring systems using all available cut-points, qSOFA and NEWS2 had the highest AUROCs, followed by the Shock Index.

Considering the rapid progression from organ dysfunction to death in sepsis patients, as well as the difficulty establishing a sepsis diagnosis at triage,23 providers must quickly identify patients at increased risk of poor outcomes when they present to the ED. Sepsis alerts often are built using SIRS criteria,27 including the one used for sepsis surveillance at UCSF since 2012,22 but the white blood cell count criterion is subject to a laboratory lag and could lead to a delay in identification. Implementation of a point-of-care bedside score alert that uses readily available clinical data could allow providers to identify patients at greatest risk of poor outcomes immediately at ED presentation and triage, which motivated us to explore the predictive performance of qSOFA, the Shock Index, and NEWS2.

Our study is the first to provide a head-to-head comparison of the predictive performance of qSOFA, the Shock Index, and NEWS2, three easy-to-calculate bedside risk scores that use EHR data collected among patients with suspected sepsis. The Sepsis-3 guidelines recommend qSOFA to quickly identify non-ICU patients at greatest risk of poor outcomes because the measure exhibited predictive performance similar to the more extensive SOFA score outside the ICU.16,23 Although some studies have confirmed qSOFA’s high predictive performance,28-31 our test characteristics and AUROC findings are in line with other published analyses.4,6,10,17 The UK National Health Service is using NEWS2 to screen for patients at risk of poor outcomes from sepsis. Several analyses that assessed the predictive ability of NEWS have reported estimates in line with our findings.4,10,32 The Shock Index was introduced in 1967 and provided a metric to evaluate hemodynamic stability based on heart rate and systolic blood pressure.33 The Shock Index has been studied in several contexts, including sepsis,34 and studies show that a sustained Shock Index is associated with increased odds of vasopressor administration, higher prevalence of hyperlactatemia, and increased risk of poor outcomes in the ICU.13,14

For our study, we were particularly interested in exploring how the Shock Index would compare with more frequently used severity scores such as qSOFA and NEWS2 among patients with suspected sepsis, given the simplicity of its calculation and the easy availability of required data. In our cohort of 23,837 patients, only 159 people had missing blood pressure and only 71 had missing heart rate. In contrast, both qSOFA and NEWS2 include an assessment of level of consciousness that can be subject to variability in assessment methods and EHR documentation across institutions.11 In our cohort, GCS within 30 minutes of ED presentation was missing in 72% of patients, which could have led to incomplete calculation of qSOFA and NEWS2 if a missing value was not actually within normal limits.

Several investigations relate qSOFA to NEWS but few compare qSOFA with the newer NEWS2, and even fewer evaluate the Shock Index with any of these scores.10,11,18,29,35-37 In general, studies have shown that NEWS exhibits a higher AUROC for predicting mortality, sepsis with organ dysfunction, and ICU admission, often as a composite outcome.4,11,18,37,38 A handful of studies compare the Shock Index to SIRS; however, little has been done to compare the Shock Index to qSOFA or NEWS2, scores that have been used specifically for sepsis and might be more predictive of poor outcomes than SIRS.33 In our study, the Shock Index had a higher AUROC than either qSOFA or NEWS2 for predicting in-hospital mortality and ED-to-ICU admission measured as separate outcomes and as a composite outcome using standard cut-points for these scores.

When selecting a severity score to apply in an institution, it is important to carefully evaluate the score’s test characteristics, in addition to considering the availability of reliable data. Tests with high sensitivity and NPV for the population being studied can be useful to rule out disease or risk of poor outcome, while tests with high specificity and PPV can be useful to rule in disease or risk of poor outcome.39 When considering specificity, qSOFA’s performance was superior to the Shock Index and NEWS2 in our study, but only a small percentage of the population was identified using a cut-point of qSOFA ≥2. If we used qSOFA and applied this standard cut-point at our institution, we could be confident that those identified were at increased risk, but we would miss a significant number of patients who would experience a poor outcome. When considering sensitivity, performance of NEWS2 was superior to qSOFA and the Shock Index in our study, but one-half of the population was identified using a cut-point of NEWS2 ≥5. If we were to apply this standard NEWS2 cut-point at our institution, we would assume that one-half of our population was at risk, which might drive resource use towards patients who will not experience a poor outcome. Although none of the scores exhibited a robust AUROC measure, the Shock Index had the highest AUROC for in-hospital mortality and ED-to-ICU admission when using the standard binary cut-point, and its sensitivity and specificity are between those of qSOFA and NEWS2, potentially making it a score to use in settings where qSOFA and NEWS2 score components, such as altered mentation, are not reliably collected. Finally, our sensitivity analysis varying the binary cut-point of each score within our population demonstrated that the standard cut-points might not be as useful within a specific population and might need to be tailored for implementation, balancing sensitivity, specificity, PPV, and NPV to meet local priorities and ICU capacity.

Our study has limitations. It is a single-center, retrospective analysis, factors that could reduce generalizability. However, it does include a large and diverse patient population spanning several years. Missing GCS data could have affected the predictive ability of qSOFA and NEWS2 in our cohort. We could not reliably perform imputation of GCS because of the high missingness and therefore we assumed missing was normal, as was done in the Sepsis-3 derivation studies.16 Previous studies have attempted to impute GCS and have not observed improved performance of qSOFA to predict mortality.40 Because manually collected variables such as GCS are less reliably documented in the EHR, there might be limitations in their use for triage risk scores.
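The effect of the missing-as-normal assumption can be made concrete with a small sketch of qSOFA scoring. The three criteria and their thresholds (respiratory rate ≥22 breaths per minute, systolic blood pressure ≤100 mm Hg, and GCS <15) come from the published Sepsis-3 qSOFA definition rather than from the text above. The function names and the treatment of a missing respiratory rate or blood pressure as not meeting the criterion are assumptions made for this example.

```python
from typing import Optional


def qsofa_score(resp_rate: Optional[float],
                systolic_bp: Optional[float],
                gcs: Optional[int]) -> int:
    """Count qSOFA criteria met; a missing value scores 0 points."""
    points = 0
    if resp_rate is not None and resp_rate >= 22:
        points += 1
    if systolic_bp is not None and systolic_bp <= 100:
        points += 1
    # Missing GCS is treated as normal, mirroring the assumption described above.
    if gcs is not None and gcs < 15:
        points += 1
    return points


def is_qsofa_positive(resp_rate: Optional[float],
                      systolic_bp: Optional[float],
                      gcs: Optional[int]) -> bool:
    """Apply the standard cut-point of qSOFA >= 2."""
    return qsofa_score(resp_rate, systolic_bp, gcs) >= 2
```

A patient with a respiratory rate of 24, a systolic pressure of 120, and an undocumented GCS scores 1 and is qSOFA-negative; the same patient with a documented GCS of 13 scores 2 and is qSOFA-positive. This is how undocumented mentation can lower the apparent sensitivity of the score.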

Although the current analysis focused on the predictive performance of qSOFA, the Shock Index, and NEWS2 at triage, performance of these scores could affect the ED team’s treatment decisions before handoff to the hospitalist team and the expected level of care the patient will receive after in-patient admission. These tests also have the advantage of being easy to calculate at the bedside over time, which could provide an objective assessment of longitudinal predicted prognosis.

CONCLUSION

Local priorities should drive selection of a screening tool, balancing sensitivity, specificity, PPV, and NPV to achieve the institution’s goals. qSOFA, Shock Index, and NEWS2 are risk stratification tools that can be easily implemented at ED triage using data available at the bedside. Although none of these scores performed strongly when comparing AUROCs, qSOFA was highly specific for identifying patients with poor outcomes, and NEWS2 was the most sensitive for ruling out those at high risk among patients with suspected sepsis. The Shock Index exhibited a sensitivity and specificity that fell between qSOFA and NEWS2 and also might be considered to identify those at increased risk, given its ease of implementation, particularly in settings where altered mentation is unreliably or inconsistently documented.

Acknowledgment

The authors thank the UCSF Division of Hospital Medicine Data Core for their assistance with data acquisition.

Sepsis is the leading cause of in-hospital mortality in the United States.1 Sepsis is present on admission in 85% of cases, and each hour delay in antibiotic treatment is associated with 4% to 7% increased odds of mortality.2,3 Prompt identification and treatment of sepsis is essential for reducing morbidity and mortality, but identifying sepsis during triage is challenging.2

Risk stratification scores that rely solely on data readily available at the bedside have been developed to quickly identify those at greatest risk of poor outcomes from sepsis in real time. The quick Sequential Organ Failure Assessment (qSOFA) score, the National Early Warning System (NEWS2), and the Shock Index are easy-to-calculate measures that use routinely collected clinical data that are not subject to laboratory delay. These scores can be incorporated into electronic health record (EHR)-based alerts and can be calculated longitudinally to track the risk of poor outcomes over time. qSOFA was developed to quantify patient risk at bedside in non-intensive care unit (ICU) settings, but there is no consensus about its ability to predict adverse outcomes such as mortality and ICU admission.4-6 The United Kingdom’s National Health Service uses NEWS2 to identify patients at risk for sepsis.7 NEWS has been shown to have similar or better sensitivity in identifying poorer outcomes in sepsis patients compared with systemic inflammatory response syndrome (SIRS) criteria and qSOFA.4,8-11 However, since the latest update of NEWS2 in 2017, there has been little study of its predictive ability. The Shock Index is a simple bedside score (heart rate divided by systolic blood pressure) that was developed to detect changes in cardiovascular performance before systemic shock onset. Although it was not developed for infection and has not been regularly applied in the sepsis literature, the Shock Index might be useful for identifying patients at increased risk of poor outcomes. Patients with higher and sustained Shock Index scores are more likely to experience morbidity, such as hyperlactatemia, vasopressor use, and organ failure, and also have an increased risk of mortality.12-14

Although the predictive abilities of these bedside risk stratification scores have been assessed individually using standard binary cut-points, the comparative performance of qSOFA, the Shock Index, and NEWS2 has not been evaluated in patients presenting to an emergency department (ED) with suspected sepsis.

METHODS

Design and Setting

We conducted a retrospective cohort study of ED patients who presented with suspected sepsis to the University of California San Francisco (UCSF) Helen Diller Medical Center at Parnassus Heights between June 1, 2012, and December 31, 2018. Our institution is a 785-bed academic teaching hospital with approximately 30,000 ED encounters per year. The study was approved with a waiver of informed consent by the UCSF Human Research Protection Program.

Participants

We use an Epic-based EHR platform (Epic 2017, Epic Systems Corporation) for clinical care, which was implemented on June 1, 2012. All data elements were obtained from Clarity, the relational database that stores Epic’s inpatient data. The study included encounters for patients age ≥18 years who had blood cultures ordered within 24 hours of ED presentation and administration of intravenous antibiotics within 24 hours. Repeat encounters were treated independently in our analysis.

Outcomes and Measures

We compared the ability of qSOFA, the Shock Index, and NEWS2 to predict in-hospital mortality and admission to the ICU from the ED (ED-to-ICU admission). We used the

We compared demographic and clinical characteristics of patients who were positive for qSOFA, the Shock Index, and NEWS2. Demographic data were extracted from the EHR and included primary language, age, sex, and insurance status. All International Classification of Diseases (ICD)-9/10 diagnosis codes were pulled from Clarity billing tables. We used the Elixhauser comorbidity groupings19 of ICD-9/10 codes present on admission to identify preexisting comorbidities and underlying organ dysfunction. To estimate burden of comorbid illnesses, we calculated the validated van Walraven comorbidity index,20 which provides an estimated risk of in-hospital death based on documented Elixhauser comorbidities. Admission level of care (acute, stepdown, or intensive care) was collected for inpatient admissions to assess initial illness severity.21 We also evaluated discharge disposition and in-hospital mortality. Index blood culture results were collected, and dates and timestamps of mechanical ventilation, fluid, vasopressor, and antibiotic administration were obtained for the duration of the encounter.

UCSF uses an automated, real-time, algorithm-based severe sepsis alert that is triggered when a patient meets ≥2 SIRS criteria and again when the patient meets severe sepsis or septic shock criteria (ie, ≥2 SIRS criteria in addition to end-organ dysfunction and/or fluid nonresponsive hypotension). This sepsis screening alert was in use for the duration of our study.22

Statistical Analysis

We performed a subgroup analysis among those who were diagnosed with sepsis, according to the 2016 Third International Consensus Definitions for Sepsis and Septic Shock (Sepsis-3) criteria.

All statistical analyses were conducted using Stata 14 (StataCorp). We summarized differences in demographic and clinical characteristics among the populations meeting each severity score but elected not to conduct hypothesis testing because patients could be positive for one or more scores. We calculated sensitivity, specificity, positive predictive value (PPV), and negative predictive value (NPV) for each score to predict in-hospital mortality and ED-to-ICU admission. To allow comparison with other studies, we also created a composite outcome of either in-hospital mortality or ED-to-ICU admission.

RESULTS

Within our sample 23,837 ED patients had blood cultures ordered within 24 hours of ED presentation and were considered to have suspected sepsis. The mean age of the cohort was 60.8 years, and 1,612 (6.8%) had positive blood cultures. A total of 12,928 patients (54.2%) were found to have sepsis. We documented 1,427 in-hospital deaths (6.0%) and 3,149 (13.2%) ED-to-ICU admissions. At ED triage 1,921 (8.1%) were qSOFA-positive, 4,273 (17.9%) were Shock Index-positive, and 11,832 (49.6%) were NEWS2-positive. At ED triage, blood pressure, heart rate, respiratory rate, and oxygen saturated were documented in >99% of patients, 93.5% had temperature documented, and 28.5% had GCS recorded. If the window of assessment was widened to 1 hour, GCS was only documented among 44.2% of those with suspected sepsis.

Demographic Characteristics and Clinical Course

qSOFA-positive patients received antibiotics more quickly than those who were Shock Index-positive or NEWS2-positive (median 1.5, 1.8, and 2.8 hours after admission, respectively). In addition, those who were qSOFA-positive were more likely to have a positive blood culture (10.9%, 9.4%, and 8.5%, respectively) and to receive an EHR-based diagnosis of sepsis (77.0%, 69.6%, and 60.9%, respectively) than those who were Shock Index- or NEWS2-positive. Those who were qSOFA-positive also were more likely to be mechanically ventilated during their hospital stay (25.4%, 19.2%, and 10.8%, respectively) and to receive vasopressors (33.5%, 22.5%, and 12.2%, respectively). In-hospital mortality also was more common among those who were qSOFA-positive at triage (23.4%, 15.3%, and 9.2%, respectively).

Because both qSOFA and NEWS2 incorporate GCS, we explored baseline characteristics of patients with GCS documented at triage (n = 6,794). These patients were older (median age 63 and 61 years, P < .0001), more likely to be male (54.9% and 53.4%, P = .0031), more likely to have renal failure (22.8% and 20.1%, P < .0001), more likely to have liver disease (14.2% and 12.8%, P = .006), had a higher van Walraven comorbidity score on presentation (median 10 and 8, P < .0001), and were more likely to go directly to the ICU from the ED (20.2% and 10.6%, P < .0001). However, among the 6,397 GCS scores documented at triage, only 1,579 (24.7%) were abnormal.

Test Characteristics of qSOFA, Shock Index, and NEWS2 for Predicting In-hospital Mortality and ED-to-ICU Admission

Among 23,837 patients with suspected sepsis, NEWS2 had the highest sensitivity for predicting in-hospital mortality (76.0%; 95% CI, 73.7%-78.2%) and ED-to-ICU admission (78.9%; 95% CI, 77.5%-80.4%) but had the lowest specificity for in-hospital mortality (52.0%; 95% CI, 51.4%-52.7%) and for ED-to-ICU admission (54.8%; 95% CI, 54.1%-55.5%) (Table 3). qSOFA had the lowest sensitivity for in-hospital mortality (31.5%; 95% CI, 29.1%-33.9%) and ED-to-ICU admission (29.3%; 95% CI, 27.7%-30.9%) but the highest specificity for in-hospital mortality (93.4%; 95% CI, 93.1%-93.8%) and ED-to-ICU admission (95.2%; 95% CI, 94.9%-95.5%). The Shock Index had a sensitivity that fell between qSOFA and NEWS2 for in-hospital mortality (45.8%; 95% CI, 43.2%-48.5%) and ED-to-ICU admission (49.2%; 95% CI, 47.5%-51.0%). The specificity of the Shock Index also was between qSOFA and NEWS2 for in-hospital mortality (83.9%; 95% CI, 83.4%-84.3%) and ED-to-ICU admission (86.8%; 95% CI, 86.4%-87.3%). All three scores exhibited relatively low PPV, ranging from 9.2% to 23.4% for in-hospital mortality and 21.0% to 48.0% for ED-to-ICU triage. Conversely, all three scores exhibited relatively high NPV, ranging from 95.5% to 97.1% for in-hospital mortality and 89.8% to 94.5% for ED-to-ICU triage.

When considering a binary cutoff, the Shock Index exhibited the highest AUROC for in-hospital mortality (0.648; 95% CI, 0.635-0.662) and had a significantly higher AUROC than qSOFA (AUROC, 0.625; 95% CI, 0.612-0.637; P = .0005), but there was no difference compared with NEWS2 (AUROC, 0.640; 95% CI, 0.628-0.652; P = .2112). NEWS2 had a significantly higher AUROC than qSOFA for predicting in-hospital mortality (P = .0227). The Shock Index also exhibited the highest AUROC for ED-to-ICU admission (0.680; 95% CI, 0.617-0.689), which was significantly higher than the AUROC for qSOFA (P < .0001) and NEWS2 (P = 0.0151). NEWS2 had a significantly higher AUROC than qSOFA for predicting ED-to-ICU admission (P < .0001). Similar findings were seen in patients found to have sepsis.

DISCUSSION

In this retrospective cohort study of 23,837 patients who presented to the ED with suspected sepsis, the standard qSOFA threshold was met least frequently, followed by the Shock Index and NEWS2. NEWS2 had the highest sensitivity but the lowest specificity for predicting in-hospital mortality and ED-to-ICU admission, making it a challenging bedside risk stratification scale for identifying patients at risk of poor clinical outcomes. When comparing predictive performance among the three scales, qSOFA had the highest specificity and the Shock Index had the highest AUROC for in-hospital mortality and ED-to-ICU admission in this cohort of patients with suspected sepsis. These trends in sensitivity, specificity, and AUROC were consistent among those who met EHR criteria for a sepsis diagnosis. In the analysis of the three scoring systems using all available cut-points, qSOFA and NEWS2 had the highest AUROCs, followed by the Shock Index.

Considering the rapid progression from organ dysfunction to death in sepsis patients, as well as the difficulty establishing a sepsis diagnosis at triage,23 providers must quickly identify patients at increased risk of poor outcomes when they present to the ED. Sepsis alerts often are built using SIRS criteria,27 including the one used for sepsis surveillance at UCSF since 2012,22 but the white blood cell count criterion is subject to a laboratory lag and could lead to a delay in identification. Implementation of a point-of-care bedside score alert that uses readily available clinical data could allow providers to identify patients at greatest risk of poor outcomes immediately at ED presentation and triage, which motivated us to explore the predictive performance of qSOFA, the Shock Index, and NEWS2.

Our study is the first to provide a head-to-head comparison of the predictive performance of qSOFA, the Shock Index, and NEWS2, three easy-to-calculate bedside risk scores that use EHR data collected among patients with suspected sepsis. The Sepsis-3 guidelines recommend qSOFA to quickly identify non-ICU patients at greatest risk of poor outcomes because the measure exhibited predictive performance similar to the more extensive SOFA score outside the ICU.16,23 Although some studies have confirmed qSOFA’s high predictive performance,28-31 our test characteristics and AUROC findings are in line with other published analyses.4,6,10,17 The UK National Health Service is using NEWS2 to screen for patients at risk of poor outcomes from sepsis. Several analyses that assessed the predictive ability of NEWS have reported estimates in line with our findings.4,10,32 The Shock Index was introduced in 1967 and provided a metric to evaluate hemodynamic stability based on heart rate and systolic blood pressure.33 The Shock Index has been studied in several contexts, including sepsis,34 and studies show that a sustained Shock Index is associated with increased odds of vasopressor administration, higher prevalence of hyperlactatemia, and increased risk of poor outcomes in the ICU.13,14

For our study, we were particularly interested in exploring how the Shock Index would compare with more frequently used severity scores such as qSOFA and NEWS2 among patients with suspected sepsis, given the simplicity of its calculation and the easy availability of required data. In our cohort of 23,837 patients, only 159 people had missing blood pressure and only 71 had omitted heart rate. In contrast, both qSOFA and NEWS2 include an assessment of level of consciousness that can be subject to variability in assessment methods and EHR documentation across institutions.11 In our cohort, GCS within 30 minutes of ED presentation was missing in 72 patients, which could have led to incomplete calculation of qSOFA and NEWS2 if a missing value was not actually within normal limits.

Several investigations relate qSOFA to NEWS but few compare qSOFA with the newer NEWS2, and even fewer evaluate the Shock Index with any of these scores.10,11,18,29,35-37 In general, studies have shown that NEWS exhibits a higher AUROC for predicting mortality, sepsis with organ dysfunction, and ICU admission, often as a composite outcome.4,11,18,37,38 A handful of studies compare the Shock Index to SIRS; however, little has been done to compare the Shock Index to qSOFA or NEWS2, scores that have been used specifically for sepsis and might be more predictive of poor outcomes than SIRS.33 In our study, the Shock Index had a higher AUROC than either qSOFA or NEWS2 for predicting in-hospital mortality and ED-to-ICU admission measured as separate outcomes and as a composite outcome using standard cut-points for these scores.

When selecting a severity score to apply in an institution, it is important to carefully evaluate the score’s test characteristics, in addition to considering the availability of reliable data. Tests with high sensitivity and NPV for the population being studied can be useful to rule out disease or risk of poor outcome, while tests with high specificity and PPV can be useful to rule in disease or risk of poor outcome.39 When considering specificity, qSOFA’s performance was superior to the Shock Index and NEWS2 in our study, but a small percentage of the population was identified using a cut-point of qSOFA ≥2. If we used qSOFA and applied this standard cut-point at our institution, we could be confident that those identified were at increased risk, but we would miss a significant number of patients who would experience a poor outcome. When considering sensitivity, performance of NEWS2 was superior to qSOFA and the Shock Index in our study, but one-half of the population was identified using a cut-point of NEWS2 ≥5. If we were to apply this standard NEWS2 cut-point at our institution, we would assume that one-half of our population was at risk, which might drive resource use towards patients who will not experience a poor outcome. Although none of the scores exhibited a robust AUROC measure, the Shock Index had the highest AUROC for in-hospital mortality and ED-to-ICU admission when using the standard binary cut-point, and its sensitivity and specificity is between that of qSOFA and NEWS2, potentially making it a score to use in settings where qSOFA and NEWS2 score components, such as altered mentation, are not reliably collected. Finally, our sensitivity analysis varying the binary cut-point of each score within our population demonstrated that the standard cut-points might not be as useful within a specific population and might need to be tailored for implementation, balancing sensitivity, specificity, PPV, and NPV to meet local priorities and ICU capacity.

Our study has limitations. It is a single-center, retrospective analysis, both factors that could reduce generalizability. However, it does include a large and diverse patient population spanning several years. Missing GCS data could have affected the predictive ability of qSOFA and NEWS2 in our cohort. We could not reliably impute GCS because of the high degree of missingness, and therefore we assumed that missing values were normal, as was done in the Sepsis-3 derivation studies.16 Previous studies have attempted to impute GCS and have not observed improved performance of qSOFA to predict mortality.40 Because manually collected variables such as GCS are less reliably documented in the EHR, there might be limitations in their use for triage risk scores.
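To illustrate the handling of missing GCS described above, the following Python sketch scores qSOFA with a missing GCS treated as normal (contributing 0 points). It is an illustration under stated assumptions rather than the study's code: the function names and example vital signs are hypothetical, and the qSOFA thresholds used (respiratory rate ≥22 breaths/min, systolic blood pressure ≤100 mm Hg, GCS <15) are the commonly cited Sepsis-3 criteria rather than values stated in this section. The Shock Index is included for contrast because it requires no mentation assessment.

```python
from typing import Optional


def qsofa(resp_rate: float, systolic_bp: float, gcs: Optional[int]) -> int:
    """qSOFA score (0 to 3); a missing GCS (None) is assumed to be normal."""
    points = 0
    if resp_rate >= 22:  # assumed threshold: tachypnea
        points += 1
    if systolic_bp <= 100:  # assumed threshold: hypotension
        points += 1
    if gcs is not None and gcs < 15:  # altered mentation only if documented
        points += 1
    return points


def shock_index(heart_rate: float, systolic_bp: float) -> float:
    """Shock Index: heart rate divided by systolic blood pressure."""
    return heart_rate / systolic_bp


# Hypothetical triage vitals, invented for illustration.
print(qsofa(resp_rate=24, systolic_bp=95, gcs=None))  # GCS undocumented
print(qsofa(resp_rate=24, systolic_bp=95, gcs=13))    # GCS documented as abnormal
print(shock_index(heart_rate=110, systolic_bp=100))
```

Under this assumption, a patient with undocumented altered mentation receives a lower qSOFA score than the same patient with a documented GCS, whereas the Shock Index is unaffected.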

Although the current analysis focused on the predictive performance of qSOFA, the Shock Index, and NEWS2 at triage, performance of these scores could affect the ED team’s treatment decisions before handoff to the hospitalist team and the expected level of care the patient will receive after inpatient admission. These scores also have the advantage of being easy to calculate at the bedside over time, which could provide an objective, longitudinal assessment of predicted prognosis.

CONCLUSION

Local priorities should drive selection of a screening tool, balancing sensitivity, specificity, PPV, and NPV to achieve the institution’s goals. qSOFA, the Shock Index, and NEWS2 are risk stratification tools that can be easily implemented at ED triage using data available at the bedside. Although none of these scores performed strongly when comparing AUROCs, qSOFA was highly specific for identifying patients with poor outcomes, and NEWS2 was the most sensitive for ruling out those at high risk among patients with suspected sepsis. The Shock Index exhibited a sensitivity and specificity that fell between those of qSOFA and NEWS2 and also might be considered to identify those at increased risk, given its ease of implementation, particularly in settings where altered mentation is unreliably or inconsistently documented.

Acknowledgment

The authors thank the UCSF Division of Hospital Medicine Data Core for their assistance with data acquisition.

1. Jones SL, Ashton CM, Kiehne LB, et al. Outcomes and resource use of sepsis-associated stays by presence on admission, severity, and hospital type. Med Care. 2016;54(3):303-310. https://doi.org/10.1097/MLR.0000000000000481

2. Seymour CW, Gesten F, Prescott HC, et al. Time to treatment and mortality during mandated emergency care for sepsis. N Engl J Med. 2017;376(23):2235-2244. https://doi.org/10.1056/NEJMoa1703058

3. Kumar A, Roberts D, Wood KE, et al. Duration of hypotension before initiation of effective antimicrobial therapy is the critical determinant of survival in human septic shock. Crit Care Med. 2006;34(6):1589-1596. https://doi.org/10.1097/01.CCM.0000217961.75225.E9

4. Churpek MM, Snyder A, Sokol S, Pettit NN, Edelson DP. Investigating the impact of different suspicion of infection criteria on the accuracy of Quick Sepsis-Related Organ Failure Assessment, Systemic Inflammatory Response Syndrome, and Early Warning Scores. Crit Care Med. 2017;45(11):1805-1812. https://doi.org/10.1097/CCM.0000000000002648

5. Abdullah SMOB, Sørensen RH, Dessau RBC, Sattar SMRU, Wiese L, Nielsen FE. Prognostic accuracy of qSOFA in predicting 28-day mortality among infected patients in an emergency department: a prospective validation study. Emerg Med J. 2019;36(12):722-728. https://doi.org/10.1136/emermed-2019-208456

6. Kim KS, Suh GJ, Kim K, et al. Quick Sepsis-related Organ Failure Assessment score is not sensitive enough to predict 28-day mortality in emergency department patients with sepsis: a retrospective review. Clin Exp Emerg Med. 2019;6(1):77-83. https://doi.org/10.15441/ceem.17.294

7. National Early Warning Score (NEWS) 2: Standardising the assessment of acute-illness severity in the NHS. Royal College of Physicians; 2017.

8. Brink A, Alsma J, Verdonschot RJCG, et al. Predicting mortality in patients with suspected sepsis at the emergency department: a retrospective cohort study comparing qSOFA, SIRS and National Early Warning Score. PLoS One. 2019;14(1):e0211133. https://doi.org/10.1371/journal.pone.0211133

9. Redfern OC, Smith GB, Prytherch DR, Meredith P, Inada-Kim M, Schmidt PE. A comparison of the Quick Sequential (Sepsis-Related) Organ Failure Assessment Score and the National Early Warning Score in non-ICU patients with/without infection. Crit Care Med. 2018;46(12):1923-1933. https://doi.org/10.1097/CCM.0000000000003359

10. Churpek MM, Snyder A, Han X, et al. Quick Sepsis-related Organ Failure Assessment, Systemic Inflammatory Response Syndrome, and Early Warning Scores for detecting clinical deterioration in infected patients outside the intensive care unit. Am J Respir Crit Care Med. 2017;195(7):906-911. https://doi.org/10.1164/rccm.201604-0854OC

11. Goulden R, Hoyle MC, Monis J, et al. qSOFA, SIRS and NEWS for predicting inhospital mortality and ICU admission in emergency admissions treated as sepsis. Emerg Med J. 2018;35(6):345-349. https://doi.org/10.1136/emermed-2017-207120

12. Biney I, Shepherd A, Thomas J, Mehari A. Shock Index and outcomes in patients admitted to the ICU with sepsis. Chest. 2015;148(suppl 4):337A. https://doi.org/10.1378/chest.2281151

13. Wira CR, Francis MW, Bhat S, Ehrman R, Conner D, Siegel M. The shock index as a predictor of vasopressor use in emergency department patients with severe sepsis. West J Emerg Med. 2014;15(1):60-66. https://doi.org/10.5811/westjem.2013.7.18472

14. Berger T, Green J, Horeczko T, et al. Shock index and early recognition of sepsis in the emergency department: pilot study. West J Emerg Med. 2013;14(2):168-174. https://doi.org/10.5811/westjem.2012.8.11546

15. Middleton DJ, Smith TO, Bedford R, Neilly M, Myint PK. Shock Index predicts outcome in patients with suspected sepsis or community-acquired pneumonia: a systematic review. J Clin Med. 2019;8(8):1144. https://doi.org/10.3390/jcm8081144

16. Seymour CW, Liu VX, Iwashyna TJ, et al. Assessment of clinical criteria for sepsis: for the Third International Consensus Definitions for Sepsis and Septic Shock (Sepsis-3). JAMA. 2016;315(8):762-774. https://doi.org/10.1001/jama.2016.0288

17. Abdullah S, Sørensen RH, Dessau RBC, Sattar S, Wiese L, Nielsen FE. Prognostic accuracy of qSOFA in predicting 28-day mortality among infected patients in an emergency department: a prospective validation study. Emerg Med J. 2019;36(12):722-728. https://doi.org/10.1136/emermed-2019-208456

18. Usman OA, Usman AA, Ward MA. Comparison of SIRS, qSOFA, and NEWS for the early identification of sepsis in the Emergency Department. Am J Emerg Med. 2018;37(8):1490-1497. https://doi.org/10.1016/j.ajem.2018.10.058

19. Elixhauser A, Steiner C, Harris DR, Coffey RM. Comorbidity measures for use with administrative data. Med Care. 1998;36(1):8-27. https://doi.org/10.1097/00005650-199801000-00004

20. van Walraven C, Austin PC, Jennings A, Quan H, Forster AJ. A modification of the Elixhauser comorbidity measures into a point system for hospital death using administrative data. Med Care. 2009;47(6):626-633. https://doi.org/10.1097/MLR.0b013e31819432e5

21. Prin M, Wunsch H. The role of stepdown beds in hospital care. Am J Respir Crit Care Med. 2014;190(11):1210-1216. https://doi.org/10.1164/rccm.201406-1117PP

22. Narayanan N, Gross AK, Pintens M, Fee C, MacDougall C. Effect of an electronic medical record alert for severe sepsis among ED patients. Am J Emerg Med. 2016;34(2):185-188. https://doi.org/10.1016/j.ajem.2015.10.005

23. Singer M, Deutschman CS, Seymour CW, et al. The Third International Consensus Definitions for Sepsis and Septic Shock (Sepsis-3). JAMA. 2016;315(8):801-810. https://doi.org/10.1001/jama.2016.0287

24. Rhee C, Dantes R, Epstein L, et al. Incidence and trends of sepsis in US hospitals using clinical vs claims data, 2009-2014. JAMA. 2017;318(13):1241-1249. https://doi.org/10.1001/jama.2017.13836

25. Safari S, Baratloo A, Elfil M, Negida A. Evidence based emergency medicine; part 5 receiver operating curve and area under the curve. Emerg (Tehran). 2016;4(2):111-113.

26. DeLong ER, DeLong DM, Clarke-Pearson DL. Comparing the areas under two or more correlated receiver operating characteristic curves: a nonparametric approach. Biometrics. 1988;44(3):837-845.

27. Kangas C, Iverson L, Pierce D. Sepsis screening: combining Early Warning Scores and SIRS Criteria. Clin Nurs Res. 2021;30(1):42-49. https://doi.org/10.1177/1054773818823334

28. Freund Y, Lemachatti N, Krastinova E, et al. Prognostic accuracy of Sepsis-3 Criteria for in-hospital mortality among patients with suspected infection presenting to the emergency department. JAMA. 2017;317(3):301-308. https://doi.org/10.1001/jama.2016.20329

29. Finkelsztein EJ, Jones DS, Ma KC, et al. Comparison of qSOFA and SIRS for predicting adverse outcomes of patients with suspicion of sepsis outside the intensive care unit. Crit Care. 2017;21(1):73. https://doi.org/10.1186/s13054-017-1658-5

30. Canet E, Taylor DM, Khor R, Krishnan V, Bellomo R. qSOFA as predictor of mortality and prolonged ICU admission in Emergency Department patients with suspected infection. J Crit Care. 2018;48:118-123. https://doi.org/10.1016/j.jcrc.2018.08.022

31. Anand V, Zhang Z, Kadri SS, Klompas M, Rhee C; CDC Prevention Epicenters Program. Epidemiology of Quick Sequential Organ Failure Assessment criteria in undifferentiated patients and association with suspected infection and sepsis. Chest. 2019;156(2):289-297. https://doi.org/10.1016/j.chest.2019.03.032

32. Hamilton F, Arnold D, Baird A, Albur M, Whiting P. Early Warning Scores do not accurately predict mortality in sepsis: A meta-analysis and systematic review of the literature. J Infect. 2018;76(3):241-248. https://doi.org/10.1016/j.jinf.2018.01.002

33. Koch E, Lovett S, Nghiem T, Riggs RA, Rech MA. Shock Index in the emergency department: utility and limitations. Open Access Emerg Med. 2019;11:179-199. https://doi.org/10.2147/OAEM.S178358

34. Yussof SJ, Zakaria MI, Mohamed FL, Bujang MA, Lakshmanan S, Asaari AH. Value of Shock Index in prognosticating the short-term outcome of death for patients presenting with severe sepsis and septic shock in the emergency department. Med J Malaysia. 2012;67(4):406-411.

35. Siddiqui S, Chua M, Kumaresh V, Choo R. A comparison of pre ICU admission SIRS, EWS and q SOFA scores for predicting mortality and length of stay in ICU. J Crit Care. 2017;41:191-193. https://doi.org/10.1016/j.jcrc.2017.05.017

36. Costa RT, Nassar AP, Caruso P. Accuracy of SOFA, qSOFA, and SIRS scores for mortality in cancer patients admitted to an intensive care unit with suspected infection. J Crit Care. 2018;45:52-57. https://doi.org/10.1016/j.jcrc.2017.12.024

37. Mellhammar L, Linder A, Tverring J, et al. NEWS2 is Superior to qSOFA in detecting sepsis with organ dysfunction in the emergency department. J Clin Med. 2019;8(8):1128. https://doi.org/10.3390/jcm8081128

38. Szakmany T, Pugh R, Kopczynska M, et al. Defining sepsis on the wards: results of a multi-centre point-prevalence study comparing two sepsis definitions. Anaesthesia. 2018;73(2):195-204. https://doi.org/10.1111/anae.14062

39. Newman TB, Kohn MA. Evidence-Based Diagnosis: An Introduction to Clinical Epidemiology. Cambridge University Press; 2009.

40. Askim Å, Moser F, Gustad LT, et al. Poor performance of quick-SOFA (qSOFA) score in predicting severe sepsis and mortality - a prospective study of patients admitted with infection to the emergency department. Scand J Trauma Resusc Emerg Med. 2017;25(1):56. https://doi.org/10.1186/s13049-017-0399-4
