Routine Oxygen at End of Life Typically Unhelpful

DENVER – The routine administration of oxygen to terminally ill patients who are near death is unwarranted, according to the results of a randomized, double-blind trial.

"I would suggest that we always use the patient in respiratory distress as their own control in an n-of-1 trial of oxygen. If oxygen does reduce their distress, then that patient should have oxygen, but if it does not – if there’s no change in patient distress – then that oxygen can be discontinued, or certainly not initiated in the first place," Mary L. Campbell, Ph.D., declared at the annual meeting of the American Academy of Hospice and Palliative Care Medicine.

Oxygen has well-established benefits in hypoxemic patients with acute or chronic exacerbations of an underlying pulmonary condition, but without ever having been subjected to scientific scrutiny, oxygen administration has become routine for patients who are near death, asserted Dr. Campbell of Wayne State University, Detroit.

"Oxygen has become almost an iconic intervention at the end of life – as common as golf clubs on a Wednesday afternoon," she said.

And oxygen support is not a benign intervention. It’s expensive, particularly in home care, where it requires additional personnel and materials in the home, including a noisy, intrusive concentrator at the bedside. It also causes nasal drying and nosebleeds as well as feelings of suffocation, Dr. Campbell said.

["Comfort Seldom Comes from Cannula" -- Commentary, Hospitalist News, 4/6/12]

To assess the value of routine oxygen administration, she conducted a double-blind, randomized, crossover study involving 32 terminally ill patients. None was in respiratory distress at baseline, but all were at high risk for distress because of underlying COPD, heart failure, pneumonia, or lung cancer. All participants had a Palliative Performance Scale score of 30 or less, which is associated with a median 9- to 14-day survival.

Each patient received a capnoline – that is, a nasal cannula with a piece of plastic hanging down over the patient’s mouth to capture exhaled carbon dioxide. Next, randomly alternating 10-minute intervals of oxygen, medical air, and no flow were administered for 90 minutes.

The key finding: 29 of 32 patients experienced no distress during the 90-minute protocol, indicating that they didn’t need the oxygen. Yet, at enrollment, 27 patients had oxygen flowing, reflecting this widespread clinical practice at the end of life, Dr. Campbell said.

The remaining three patients rapidly became hypoxemic and distressed when crossed over from oxygen to no flow. They were returned to baseline oxygen and respiratory comfort.

Because many of the study participants were unconscious or cognitively impaired and couldn’t self-report their distress, the Respiratory Distress Observation Scale was assessed at baseline and for 10 minutes after every flow change. A score of 4 or less on the 0-16 scale indicates little or no distress; the average baseline score was 1.47, and it didn’t vary significantly during the different flow conditions, she reported.

The average oxygen saturation at baseline was 93.6%, and it didn’t change significantly during the 90-minute protocol.

Dr. Campbell said that she determines the need for oxygen in an end-of-life patient by taking the patient off oxygen for 10 minutes and watching for distress.

Several audience members predicted that the patient’s family is likely to object to this approach because oxygen has become an expected part of end-of-life care. Dr. Campbell responded that the solution to that problem is simply good communication.

"I think if you explain to families that this is a treatment that can be helpful but has side effects, and we always take away the things that aren’t helping when they’re no longer helping, you won’t have pushback from family members," she said.

Dr. Campbell’s study was funded by the Blue Cross/Blue Shield of Michigan Foundation. She reported having no financial conflicts.

EXPERT ANALYSIS FROM THE ANNUAL MEETING OF THE AMERICAN ACADEMY OF HOSPICE AND PALLIATIVE CARE MEDICINE

Hemodialysis, Injection Drug Users Vulnerable to Recurrent Endocarditis

LONDON – Hemodialysis and injection drug use were major risk factors for recurrent episodes of infective endocarditis among patients enrolled in a large international prospective study.

Repeat infective endocarditis (IE) is a serious complication in patients who survive an initial episode. In previous studies, the incidence has ranged from 2% to 31%. "Repeat IE is associated with significant mortality. It is an uncommon complication but can be highly relevant among specific groups of patients," Dr. Laura Alagna of San Raffaele Hospital, Milan, said at the European Congress of Clinical Microbiology and Infectious Diseases.

The findings come from The International Collaboration on Endocarditis-Prospective Cohort Study (ICE-PCS), a contemporary cohort of more than 5,000 patients with infective endocarditis (IE) from 64 centers in 28 countries worldwide. Patients included in the current analysis were those enrolled from June 2000 to December 2006, with a diagnosis of definite IE on native or prosthetic valves who had at least a 1-year follow-up.

Of 1,857 patients who met inclusion criteria, 1,783 had one episode of IE and 91 (5%) had a repeat IE. Of those 91, 17 had a presumed relapse, defined clinically as infection with the same pathogen isolated within 6 months of the initial episode. The other 74 had a presumed new infection, defined clinically as a repeat episode with a different pathogen, or with the same pathogen isolated more than 6 months after the initial episode.
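The clinical definitions above amount to a simple two-way rule, which can be sketched in code. This is only an illustration of the rule as the article states it, not the investigators' method: the names `RepeatEpisode` and `classify_repeat_ie` are hypothetical, and treating an isolate at exactly 6 months as a relapse is an assumption, since the article says only "within 6 months."

```python
from dataclasses import dataclass

# Cutoff quoted in the article; counting exactly 6 months as "within" is an assumption.
RELAPSE_WINDOW_MONTHS = 6


@dataclass
class RepeatEpisode:
    """Hypothetical record describing a repeat IE episode."""
    same_pathogen: bool          # same organism as in the initial episode?
    months_since_initial: float  # time from initial episode to the repeat isolate


def classify_repeat_ie(episode: RepeatEpisode) -> str:
    """Apply the clinical definition: same pathogen within the window is a relapse."""
    if episode.same_pathogen and episode.months_since_initial <= RELAPSE_WINDOW_MONTHS:
        return "presumed relapse"
    # Different pathogen, or same pathogen isolated after the window.
    return "presumed new infection"


print(classify_repeat_ie(RepeatEpisode(same_pathogen=True, months_since_initial=3)))
print(classify_repeat_ie(RepeatEpisode(same_pathogen=True, months_since_initial=9)))
print(classify_repeat_ie(RepeatEpisode(same_pathogen=False, months_since_initial=2)))
```

Under this rule, a same-pathogen isolate at 9 months is labeled a presumed new infection. A purely time-based cutoff of this kind cannot by itself tell a late relapse from a reinfection with the same organism.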

On bivariate analysis, being from North America, infection with Staphylococcus aureus, hemodialysis dependence, intravenous drug use (IDU), HIV infection, history of previous IE, and non-nosocomial health care as a presumed source of infection were all associated with repeat IE. However, on multivariate analysis, only four independent risk factors emerged: North American location (odds ratio, 1.96), hemodialysis (OR, 2.54), IDU (OR, 2.89), and history of previous IE (OR, 2.76).

At 1-year follow-up, survival was significantly lower for those with repeat IE, 80% compared to 91% for those with only one episode (P = .0034), Dr. Alagna reported.

Molecular analysis with pulsed-field gel electrophoresis, performed in 12 of the repeat IE patients, demonstrated concordance with the clinical definition in 10, including eight confirmed relapses and two confirmed new infections. Of the other two patients, one had S. aureus with the same molecular pattern isolated more than a year after the first episode. That patient was hemodialysis-dependent and had other risk factors. In the other discordant patient, S. bovis with the same molecular pattern was isolated after nearly 9 months. In that patient, a cardiac device had not been removed during the first episode, she explained.

"The clinical classification of repeat IE is satisfactory, but can be improved with molecular analysis," she concluded.

Dr. Alagna stated that she had no disclosures.

FROM THE EUROPEAN CONGRESS OF CLINICAL MICROBIOLOGY AND INFECTIOUS DISEASES

Major Finding: On multivariate analysis, independent risk factors for repeat infective endocarditis were North American location (odds ratio, 1.96), hemodialysis (OR, 2.54), IDU (OR, 2.89), and history of previous IE (OR, 2.76).

Data Source: The findings come from The International Collaboration on Endocarditis-Prospective Cohort Study (ICE-PCS), a contemporary cohort of more than 5,000 patients with infective endocarditis (IE) from 64 centers in 28 countries worldwide. Patients included in the current analysis were those enrolled from June 2000 to December 2006, with a diagnosis of definite IE on native or prosthetic valves who had at least a 1-year follow-up.

Disclosures: Dr. Alagna stated that she had no disclosures.

Benzodiazepines Improve Dyspnea in Palliative Care Patients

DENVER – Low-dose adjunctive benzodiazepines are effective in combination with opioids for dyspnea in palliative care patients who don’t respond to opioids alone, according to Dr. Patama Gomutbutra.

When opioids alone aren’t bringing significant improvement, adding a benzodiazepine is worthwhile, she said. The question of whether benzodiazepines alone are effective in the management of dyspnea must await answers from randomized clinical trials.

Dr. Gomutbutra conducted a retrospective chart review of 303 inpatients with dyspnea evaluated by members of the University of California, San Francisco, palliative care program. These were seriously ill patients: Twenty-three percent had primary lung cancer, 32% had cancer outside the lung, 12% had heart failure, and 7% had chronic obstructive pulmonary disease. Of these patients, 47% died in the hospital and 25% were discharged to hospice.

At baseline, physicians rated dyspnea as severe in 19% of patients, moderate in 28%, and mild in 53%. At baseline, 49% of patients were already on opioids at a median dose of 52 mg/day; 87% of these patients remained on opioids at 24 hours, with a bump up in dose to a median of 60 mg/day. Of the patients not initially taking an opioid, 41% were placed on the medication at a median dose of 22 mg/day.

At baseline, 17% of patients were on a benzodiazepine at a median dose of 1 mg/day of oral lorazepam or its equivalent. At 24 hours, 24% of patients were on a benzodiazepine, again at a median daily dose of 1 mg.

At follow-up 24 hours after adjustment of dosages or addition of an opioid or a benzodiazepine, the population with severe dyspnea had fallen from 19% to 4%. Dyspnea was rated moderate in 18% and mild in 44%, and was absent in 34%.

Overall, 57% of patients had a clinically meaningful improvement in dyspnea of one severity grade or more, 37% remained the same, and the rest became worse, according to Dr. Gomutbutra of Chiang Mai (Thailand) University.

Taking an opioid and a benzodiazepine at follow-up was independently associated with a 2.1-fold increased likelihood of significant improvement in dyspnea. Having moderate or severe dyspnea at baseline was associated with 4.1- and 4.5-fold increased likelihoods of improvement, respectively.

Surprisingly, being on an opioid at baseline wasn’t associated with significant improvement at follow-up, even though opioids are guideline-recommended therapy for dyspnea.

Dr. Gomutbutra cautioned against overinterpretation of this finding, given that her study was retrospective and thus vulnerable to confounding. For example, she noted, the respiratory rate typically slows near death, so affected patients may not have received continued or increased doses of opioids.

"Our results should not dissuade people from using opioids as the first-line treatment," Dr. Gomutbutra emphasized.

Dr. Gomutbutra carried out this study after observing big differences in how dyspnea is managed in palliative care settings in the United States, compared with Thailand. While the median daily dose of opioids at baseline in the San Francisco study was 52 mg/day, a typical dose in Thailand would be 6 mg/day. And benzodiazepines are far more widely used in treating dyspnea there, she added.

She reported having no financial conflicts.

FROM THE ANNUAL MEETING OF THE AMERICAN ACADEMY OF HOSPICE AND PALLIATIVE CARE MEDICINE

Major Finding: At follow-up 24 hours after adjustment of dosages or addition of an opioid or a benzodiazepine, the proportion of patients with severe dyspnea had fallen from 19% to 4%. Dyspnea was rated moderate in 18% and mild in 44%, and was absent in 34%.

Data Source: Data were taken from a retrospective chart review of 303 inpatients with dyspnea evaluated by members of the University of California, San Francisco, palliative care program.

Disclosures: Dr. Gomutbutra reported having no financial conflicts.

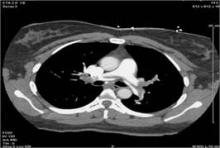

Reduced TPA Regimen Safely Treats Pulmonary Embolism

CHICAGO – A reduced-dose regimen of tissue plasminogen activator and parenteral anticoagulant safely led to improved outcomes in hemodynamically stable patients with a pulmonary embolism in a pilot study with a total of 121 patients treated at one U.S. center.

None of the 61 patients treated with the regimen, which halved the standard dosage of tissue plasminogen activator (TPA) and cut the dosage of enoxaparin or heparin by about 20%-30%, had an intracranial hemorrhage or a major bleeding event, compared with a historic 2%-6% incidence of intracranial hemorrhage and a 6%-20% incidence of major bleeds in hemodynamically unstable pulmonary embolism patients who receive the standard, full dose of both the thrombolytic and anticoagulant, Dr. Mohsen Sharifi said at the meeting.

While he acknowledged that the results need confirmation in a larger study, "in our experience treating deep vein thrombosis [with a similarly low dosage of TPA], we are comfortable that this amount of TPA can be given safely," said Dr. Sharifi, an interventional cardiologist who practices in Mesa, Ariz.

The findings also showed that applying this reduced-dose intervention to hemodynamically stable patients with a pulmonary embolism (PE), who typically do not receive thrombolysis, substantially improved their long-term prognosis by reducing their development of pulmonary hypertension. After an average of 28 months of follow-up, 9 of the 58 patients (16%) who were treated with the reduced-dose regimen and followed long term had pulmonary hypertension, defined as a pulmonary artery systolic pressure greater than 40 mm Hg, compared with 32 of the 56 control patients (57%) managed by standard treatment with anticoagulation only.
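The percentages quoted for pulmonary hypertension follow directly from the reported counts. As a rough illustration only, the Python sketch below recomputes them and derives a relative risk and an absolute risk difference. Those two derived figures are not reported in the article, and the function name `summarize_rates` is a hypothetical helper introduced here.

```python
def summarize_rates(events_treated, n_treated, events_control, n_control):
    """Return event rates plus two derived comparisons (illustrative only)."""
    rate_treated = events_treated / n_treated
    rate_control = events_control / n_control
    return {
        "rate_treated": rate_treated,
        "rate_control": rate_control,
        # Derived measures: not stated in the article, computed from its counts.
        "relative_risk": rate_treated / rate_control,
        "absolute_risk_difference": rate_control - rate_treated,
    }


# Counts reported for pulmonary hypertension at an average of 28 months.
summary = summarize_rates(9, 58, 32, 56)
print(f"Reduced-dose TPA arm: {summary['rate_treated']:.0%}")
print(f"Anticoagulation-only arm: {summary['rate_control']:.0%}")
print(f"Relative risk: {summary['relative_risk']:.2f}")
print(f"Absolute risk difference: {summary['absolute_risk_difference']:.0%}")
```

The sketch works only from the four counts given in the text; it does not reproduce any significance testing the investigators may have performed.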

Current guidelines from the American Heart Association call for fibrinolytic treatment only in patients with a massive, acute PE, or in patients with a submassive PE who are hemodynamically unstable or have other clinical evidence of an adverse prognosis (Circulation 2011;123:1788-830). According to Dr. Sharifi, about 5% of all PE patients fall into this category. He estimated that extending thrombolytic treatment to hemodynamically stable patients who met his study’s inclusion criteria could make an additional 70% of PE patients currently seen in emergency departments eligible for TPA.

"I think that, based on the results of this pilot study, you won’t get broad acceptance of treating hemodynamically stable PE patients with thrombolysis," commented Dr. Michael Crawford, chief of general cardiology at the University of California, San Francisco. Two larger studies nearing completion are both examining the efficacy and safety of thrombolysis in patients with submassive PE.

Dr. Sharifi said that despite the small study size, he and his associates were convinced enough by their findings to use the reduced TPA dosage tested in this study on a routine basis when they see patients who meet their enrollment criteria.

The MOPETT (Moderate Pulmonary Embolism Treated with Thrombolysis) study enrolled patients with a PE affecting at least two lobar segments; pulmonary artery systolic pressure greater than 40 mm Hg; right ventricular hypokinesia and enlargement; and at least two symptoms, which could include chest pain, tachypnea greater than 22 respirations/min, tachycardia with a resting heart rate of more than 90 beats/min, dyspnea, cough, oxygen desaturation, and jugular venous pressure of more than 12 cm H2O. The average age of the patients was about 59 years, and slightly more than half were women. Their average pulmonary artery systolic pressure at entry was about 50 mm Hg.

Dr. Sharifi and his associates randomized half the patients to receive conventional treatment with anticoagulant only, either enoxaparin or heparin plus warfarin. The other patients received thrombolytic treatment with an infusion of TPA at half the standard dosage. Patients who weighed at least 50 kg received a loading dose of 10 mg delivered in 1 minute, followed by an additional 40 mg administered over 2 hours. Patients who weighed less received the same 10-mg initial dose, but their total dose including the subsequent 2-hour infusion was limited to 0.5 mg/kg. The patients treated with TPA also received concomitant anticoagulation, with either enoxaparin given at 1 mg/kg but not to exceed 80 mg as an initial dose, or heparin at an initial dose of 70 U/kg but capped at 6,000 U, followed by heparin maintenance at 10 U/kg per hour during the TPA infusion (but not exceeding 1,000 U/hour), and then rising to 18 U/kg per hour starting 1 hour after TPA treatment stopped. About 80% of all patients in the study received enoxaparin, and about 20% received heparin.
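The weight-based arithmetic in this regimen can be sketched in a few lines of Python. This is an illustration of the numbers as reported in the article, not a dosing tool; all function names are hypothetical, and the handling of very low body weights (where 0.5 mg/kg would fall below the 10-mg loading dose) is an assumption the article does not address.

```python
def tpa_schedule_mg(weight_kg):
    """Reduced-dose TPA split into loading dose and 2-hour infusion."""
    if weight_kg >= 50:
        total = 50.0  # 10-mg loading dose plus 40 mg over 2 hours
    else:
        total = 0.5 * weight_kg  # total dose limited to 0.5 mg/kg
    # Assumption for this sketch: the loading dose never exceeds the total.
    loading = min(10.0, total)
    return {"loading_mg": loading, "infusion_mg": total - loading, "total_mg": total}


def enoxaparin_initial_mg(weight_kg):
    """Initial enoxaparin dose: 1 mg/kg, not to exceed 80 mg."""
    return min(1.0 * weight_kg, 80.0)


def heparin_initial_units(weight_kg):
    """Initial heparin dose: 70 U/kg, capped at 6,000 U."""
    return min(70.0 * weight_kg, 6000.0)


def heparin_maintenance_units_per_hour(weight_kg, during_tpa):
    """10 U/kg per hour (max 1,000 U/hour) during TPA, then 18 U/kg per hour."""
    if during_tpa:
        return min(10.0 * weight_kg, 1000.0)
    return 18.0 * weight_kg


for weight in (45, 80, 110):
    print(weight, tpa_schedule_mg(weight), enoxaparin_initial_mg(weight),
          heparin_initial_units(weight))
```

Keeping each cap in its own small function mirrors how the article states the rules: one weight-based rate and one ceiling per drug and phase.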

At 48 hours after starting treatment, average pulmonary artery systolic pressure dropped by 16 mm Hg in the TPA group and by 5 mm Hg in the control patients. By the end of the average 28-month follow-up, average pulmonary artery systolic pressure was 28 mm Hg in the TPA patients and 43 mm Hg in the controls. Dr. Sharifi attributed the efficacy of reduced-dose TPA to the "exquisite sensitivity" of blood clots lodged in a patient’s lungs to the drug, a consequence of all the infused TPA passing through the lung’s arterial circulation.

In addition to the statistically significant benefit from TPA for the study’s primary end point, the average duration of hospitalization was significantly shorter in the TPA recipients: 2.2 days, compared with 4.9 days in the control patients. And at the end of the average 28 months of follow-up, three patients in the control arm had a recurrent PE and another three had died, compared with no recurrent PEs and one death in the TPA arm, a statistically significant difference.

Dr. Sharifi and Dr. Crawford said that they had no relevant disclosures.

FROM THE ANNUAL MEETING OF THE AMERICAN COLLEGE OF CARDIOLOGY

Major Finding: Pulmonary embolism patients receiving reduced dosages of TPA and anticoagulant had a 16% pulmonary hypertension rate versus 57% in controls.

Data Source: Data came from a single-center, randomized study that enrolled 121 patients with hemodynamically stable pulmonary embolism.

Disclosures: Dr. Sharifi and Dr. Crawford said that they had no relevant disclosures.

Glucose Cocktail Halved Cardiac Arrest in Suspected ACS

CHICAGO – Glucose, insulin, and potassium given in the field to patients with suspected acute coronary syndrome cut in half the odds of pre- or in-hospital cardiac arrest or death in the prospective, double-blind, randomized IMMEDIATE trial.

The benefits of glucose, insulin, and potassium (GIK) were even more pronounced in patients with ST-elevation myocardial infarction (STEMI), reducing this outcome by a statistically significant 60%, compared with placebo (6% vs. 14%; risk ratio, 0.39).

The study’s primary end point of progression to myocardial infarction at 30 days was reported in 49% of GIK and 53% of placebo patients, a nonsignificant difference.

Although GIK did not prevent infarcts, it significantly reduced their size, coprincipal investigator Dr. Harry P. Selker said at the annual meeting of the American College of Cardiology.

"Risks and side effects rates from GIK are very low and GIK is inexpensive, potentially available in all communities, and deserves further evaluation in trials for widespread [use]," he said.

Although the trial missed its primary end point, the panel of invited discussants was enthusiastic about the potential for IMMEDIATE (Immediate Myocardial Metabolic Enhancement During Initial Assessment and Treatment in Emergency Care) to revive the 50-year-old therapy, long advocated by the late Tufts researcher Dr. Carl Apstein.

Panelist Dr. Bernard Gersh, from the Mayo Clinic in Rochester, Minn., asked whether the investigators were surprised at the magnitude of the treatment effect, given that GIK has failed in prior trials involving more than 20,000 patients.

"No, first of all, we know that most of the mortality is in that first hour since cardiac arrest and a lot of its effect is [against] cardiac arrest," replied Dr. Selker, professor of medicine and director of the Center for Cardiovascular Health Services Research at Tufts Medical Center in Boston. Experimental animal studies have also shown a 50% reduction in cardiac arrest. GIK decreases plasma and cellular free fatty acid levels, which are known to damage cell membranes and cause arrhythmias, supports the myocardium when there is less blood flow, and preserves myocardial potassium, an antiarrhythmic.

Notably, a subgroup analysis confirmed a significant benefit for GIK on cardiac arrest or hospital mortality only in those patients who received the therapy within 1 hour of symptom onset (odds ratio, 0.28), and not in those who received GIK 1-6 hours (OR, 0.39) or more than 6 hours after symptom onset (OR, 1.18).

Dr. Elliott Antman, professor of medicine at Harvard University and senior faculty member in the cardiovascular division at Brigham and Women’s Hospital in Boston, asked why IMMEDIATE succeeded where so many other earlier GIK trials failed.

GIK was used for 12 hours, not 24-48 hours as previously done in other trials, said Dr. Selker, who also remarked that larger trials are needed to validate the findings since there are opposing data.

IMMEDIATE randomized 911 patients with suspected acute coronary syndrome to placebo or GIK (30% glucose plus 50 IU insulin and 80 mEq potassium chloride/L) at 1.5 mL/kg per hour, administered en route by paramedics. All patients had a 12-lead ambulance ECG with Acute Cardiac Ischemia Time-Insensitive Predictive Instrument (ACI-TIPI) and Thrombolytic Predictive Instrument (TPI) decision support.
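To give a sense of scale for the infusion described above, the following Python sketch converts the stated concentrations and the 1.5 mL/kg per hour rate into hourly amounts for a given body weight. It is illustrative arithmetic only. It assumes that "30% glucose" means 30 g per 100 mL (weight/volume), and the function name `gik_hourly_delivery` is hypothetical.

```python
# Concentrations per liter of solution, as stated in the article.
GLUCOSE_G_PER_L = 300.0   # assumption: 30% w/v, i.e. 30 g per 100 mL
INSULIN_IU_PER_L = 50.0
KCL_MEQ_PER_L = 80.0
RATE_ML_PER_KG_PER_HOUR = 1.5


def gik_hourly_delivery(weight_kg):
    """Hourly volume and component amounts at the trial's infusion rate."""
    volume_ml = RATE_ML_PER_KG_PER_HOUR * weight_kg
    liters = volume_ml / 1000.0
    return {
        "volume_ml": volume_ml,
        "glucose_g": liters * GLUCOSE_G_PER_L,
        "insulin_iu": liters * INSULIN_IU_PER_L,
        "kcl_meq": liters * KCL_MEQ_PER_L,
    }


# Example for an 80-kg patient (the weight is chosen arbitrarily).
for name, value in gik_hourly_delivery(80).items():
    print(f"{name}: {value:.1f}")
```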

Patients had at least one of the following: at least 75% predicted probability of ACS on ACI-TIPI, TPI detection of STEMI, or STEMI identified by local EMS protocol. Their mean age was 63 years and one-third had a history of myocardial infarction. Paramedics were from 36 EMS systems in 13 states across the country.

Pre- or in-hospital cardiac arrest or mortality was reported in 4% of GIK vs. 9% of placebo patients, a significant difference. The individual components of the composite outcome trended in the right direction, but did not achieve significance, Dr. Selker said.

At 30 days, 4% of the 432 GIK patients and 6% of the 479 placebo patients had died, a nonsignificant difference.

Mortality or hospitalization for heart failure was also similar between groups, occurring in 6% of GIK and 8% of placebo patients at 30 days.

Among STEMI patients, only the composite of cardiac arrest or pre- or in-hospital mortality significantly favored the GIK arm.

The percentage of patients with any glucose greater than 300 mg/dL was significantly higher in the GIK arm at 21% vs. 10% in the placebo arm. GIK also raised glucose levels in patients with diabetes (44% vs. 29%), but this did not lead to any serious adverse events, Dr. Selker said.

Dr. Antman asked whether the investigators evaluated the location of the STEMI because of the potential for an imbalance in anterior versus inferior locations that might have favored the GIK group. Dr. Selker said they had not performed that subanalysis.

IMMEDIATE was simultaneously published in JAMA (JAMA 2012 March 27 [doi: 10.1001/jama.2012.426]).

This study was funded by the National Heart, Lung, and Blood Institute. Dr. Selker and his coauthors reported no relevant conflicts of interest.

FROM THE ANNUAL MEETING OF THE AMERICAN COLLEGE OF CARDIOLOGY

Major Finding: Administration of GIK in patients with suspected acute coronary syndrome reduced the combined end point of pre- or in-hospital cardiac arrest or death by 60%, compared with placebo, a significant difference.

Data Source: The prospective, double-blind randomized trial included 911 patients with suspected acute coronary syndrome.

Disclosures: This study was funded by the National Heart, Lung, and Blood Institute. Dr. Selker and his coauthors reported no relevant conflicts of interest.

Sleeping Too Much or Too Little Puts Heart at Risk

CHICAGO – Sleeping too much as well as too little appears to be detrimental to cardiovascular health, according to a large retrospective analysis of the NHANES database.

Individuals who slept less than 6 hours per day had twice the risk of myocardial infarction (odds ratio 2.04) or stroke (OR 2.01), compared with those who slept 6-8 hours, even after adjusting for multiple confounders associated with cardiovascular risk.

Individuals with less than 6 hours of sleep duration were also at increased risk of heart failure (OR 1.67), Dr. Rohit R. Arora reported at the annual meeting of the American College of Cardiology.

Intriguingly, persons who slept more than 8 hours per night had a twofold increased risk of angina (OR 2.07) as well as an increased risk of coronary artery disease (OR 1.19).

"It seems that the optimal time is 6 to 8 hours," said Dr. Arora, chair of cardiology and professor of medicine at the Chicago Medical School.

He stressed that the analysis could not establish a cause-and-effect relationship, but suggested that patients who sleep more than 8 hours per night may do so because of underlying comorbid conditions such as chronic obstructive pulmonary disease and diabetes or low socioeconomic status, all of which could contribute to their cardiovascular risk.

Previous studies have shown that insufficient sleep is associated with hyperactivation of the sympathetic nervous system, glucose intolerance, an increase in cortisol levels and blood pressure, decreased variability in heart rate, disruption of the hypothalamic axis, and a general increase in inflammatory markers.

The analysis included 3,019 individuals, at least 45 years of age, who participated in the 2007-2008 National Health and Nutrition Examination Survey (NHANES). Patients were asked about their sleep duration and then stratified into one of three categories: fewer than 6 hours of sleep a night, 6-8 hours a night, and more than 8 hours of sleep per night.
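The three-way stratification described above can be sketched in a few lines of Python. This is only an illustration: the function name `sleep_category` is hypothetical, and the handling of the boundaries (exactly 6 or exactly 8 hours falling in the middle group) is an assumption, since the report does not say how the investigators treated those values.

```python
def sleep_category(hours_per_night: float) -> str:
    """Assign a reported nightly sleep duration to one of three strata.

    Assumption: exactly 6 and exactly 8 hours count as "6-8 hours";
    the article does not state how boundary values were handled.
    """
    if hours_per_night < 0:
        raise ValueError("sleep duration cannot be negative")
    if hours_per_night < 6:
        return "fewer than 6 hours"
    if hours_per_night <= 8:
        return "6-8 hours"
    return "more than 8 hours"


if __name__ == "__main__":
    # Made-up durations for illustration only, not study data.
    for hours in (5.0, 7.0, 9.5):
        print(hours, "->", sleep_category(hours))
```

In the analysis as reported, the 6-8 hour group served as the reference against which the odds ratios for the other two groups were calculated.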

The analysis adjusted for the covariates of age, systolic blood pressure, gender, body mass index, diabetes, smoking status, total cholesterol, HDL-cholesterol, sleep apnea, and family history of heart attack. The analysis could not determine the underlying level of cardiovascular or cerebrovascular disease in the participants, nor did it evaluate quality of sleep, which emerging data suggests plays a role in certain cardiovascular outcomes, Dr. Arora said.

What is clear from the analysis is that providers should talk to their patients about their sleep, particularly those who are at greater risk for heart disease. The data also support a recommendation for 6-8 hours of sleep per night in current guidelines. As for whether this recommendation should be given early on in life to adolescents, who are known to have inadequate sleep, the recommendation would not be amiss, he said in an interview.

Dr. Arora reported no relevant conflicts of interest.

myocardial infarction, stroke, American College of Cardiology

FROM THE ANNUAL MEETING OF THE AMERICAN COLLEGE OF CARDIOLOGY

Major Finding: Persons who slept more than 8 hours per night had a twofold increased risk of angina (OR 2.07) as well as an increased risk of coronary artery disease (OR 1.19).

Data Source: Retrospective analysis of 3,019 individuals who participated in the 2007-2008 National Health and Nutrition Examination Survey (NHANES).

Disclosures: Dr. Arora reported no relevant conflicts of interest.

Tracking Method Improves Outcomes in CRT

Speckle-tracking echocardiography has been shown to significantly improve clinical outcomes when used in cardiac resynchronization therapy, according to results from a randomized, controlled trial.

The finding adds to the increasing body of evidence that individualized placement of the ventricular pacing lead in CRT – away from scar and on the most delayed segment of contraction – can result in better outcomes.

In research published online March 7 in the Journal of the American College of Cardiology, Dr. Fakhar Z. Khan of Papworth Hospital, Cambridge, U.K., and colleagues showed that 70% of heart-failure patients treated with CRT guided by speckle-tracking saw improvement at 6 months, compared with 55% treated with unguided CRT (J. Am. Coll. Cardiol. 2012 [doi:10.1016/j.jacc.2011.12.030]).

Cardiac resynchronization therapy is used to coordinate contractions in people with heart failure who have failed medical therapies. Speckle-tracking echocardiography is an imaging technique that tracks interference patterns and natural acoustic reflections to show tissue deformation and motion.

Because recent evidence has increasingly suggested that the optimal positioning of the left ventricular pacing lead in CRT is at the most delayed site of contraction and away from myocardial scar (J. Am. Coll. Cardiol. 2010;55:566-75; J. Am. Coll. Cardiol. 2010;56:774-81), speckle tracking has been used to help identify the ideal sites for each patient. Conventional CRT, by contrast, places the LV lead at a lateral or posterolateral branch of the coronary sinus in all patients.

For their study comparing conventional unguided CRT with guided CRT, Dr. Khan and colleagues randomized 220 men and women scheduled to undergo CRT. In the study group (N = 110), patients were analyzed with two-dimensional speckle-tracking radial strain imaging to determine the ideal LV lead placement.

Controls (N = 110) underwent standard unguided CRT. Both patients and assessors were blinded to group assignment before and after surgery. The primary end point of the study was response at 6 months, defined as a 15% or greater reduction in left ventricular end-systolic volume, or LVESV.
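As a rough illustration of the response definition, the check below computes the fractional reduction in LVESV between baseline and 6 months and compares it with the 15% threshold. The function name `is_crt_responder`, its parameter names, and the use of milliliters are assumptions made for this sketch; the example volumes are invented and are not trial data.

```python
def is_crt_responder(baseline_lvesv_ml: float,
                     followup_lvesv_ml: float,
                     threshold: float = 0.15) -> bool:
    """Return True if LVESV fell by at least `threshold` (15% by default).

    Volumes are assumed to be in milliliters; any consistent unit works
    because only the ratio matters.
    """
    if baseline_lvesv_ml <= 0:
        raise ValueError("baseline LVESV must be positive")
    # Fractional reduction relative to the baseline volume.
    reduction = (baseline_lvesv_ml - followup_lvesv_ml) / baseline_lvesv_ml
    return reduction >= threshold


if __name__ == "__main__":
    # Hypothetical patients: a 20% reduction and a 10% reduction.
    print(is_crt_responder(200.0, 160.0))  # 20% reduction
    print(is_crt_responder(200.0, 180.0))  # 10% reduction
```

Under this definition a patient whose volume increases, or falls by less than 15%, counts as a nonresponder.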