Additional D1 biopsy increased diagnostic yield for celiac disease

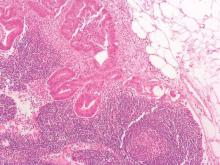

Among a large cohort of patients referred for endoscopy, whether for suspected celiac disease or for any upper gastrointestinal symptoms, a single additional biopsy specimen from any site in the duodenal bulb (D1) significantly increased the diagnostic yield for celiac disease, according to researchers.

Of 1,378 patients who had D2 and D1 biopsy specimens taken, 268 were newly diagnosed with celiac disease, and 26 had villous atrophy confined to D1, defined as ultrashort celiac disease (USCD). Compared with a standard D2 biopsy, an additional D1 biopsy increased the diagnostic yield by 9.7% (P less than .0001). Among the 26 diagnosed with USCD, 7 had normal D2 biopsy specimens, and 4 others had negative tests for endomysial antibodies (EMAs), totaling 11 patients for whom celiac disease would have been missed in the absence of a D1 biopsy.
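The arithmetic behind these figures can be checked with a short sketch. It assumes, as the counts suggest but the article does not state outright, that the 9.7% gain is the share of new diagnoses that were confined to D1; the variable and function names are illustrative only.

```python
# Counts reported in the article.
newly_diagnosed = 268   # all patients newly diagnosed with celiac disease
uscd = 26               # villous atrophy confined to D1 (USCD)
normal_d2 = 7           # USCD patients with normal D2 biopsy specimens
ema_negative = 4        # other USCD patients with negative EMA tests


def share_percent(part: int, whole: int) -> float:
    """Return part/whole as a percentage rounded to one decimal place."""
    return round(100 * part / whole, 1)


# Assumption: the yield gain is USCD cases over all new diagnoses.
yield_gain = share_percent(uscd, newly_diagnosed)

# Patients who would have been missed without a D1 biopsy.
missed = normal_d2 + ema_negative

print(yield_gain, missed)
```

Under that assumption the computed share matches the reported 9.7%, and the two subgroups sum to the 11 patients cited.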

“The addition of a D1 biopsy specimen to diagnose celiac disease may reduce the known delay in diagnosis that many patients with celiac disease experience. This may allow earlier institution of a gluten-free diet, potentially prevent nutritional deficiencies, and reduce the symptomatic burden of celiac disease,” wrote Dr. Peter Mooney of Royal Hallamshire Hospital, Sheffield, England, and his colleagues (Gastroenterology. 2016 Apr 7. doi: 10.1053/j.gastro.2016.01.029).

The prospective study recruited 1,378 consecutive patients referred to a single teaching hospital for endoscopy from 2008 to 2014. In total, 268 were newly diagnosed with celiac disease, and 26 were diagnosed with USCD.

To investigate the optimal site for targeted D1 sampling, 171 patients underwent quadrantic D1 biopsy, 61 of whom were diagnosed with celiac disease. Biopsy specimens from any topographical area resulted in high sensitivity, a fact that increases the feasibility of a D1 biopsy policy, since no specific target area is required, according to the researchers. Nonceliac abnormalities such as peptic duodenitis or gastric heterotopia have been suggested to impede interpretation of D1 biopsies, but these were rare in the study and did not interfere with the analysis.

USCD may be an early form of conventional celiac disease, an idea supported by the findings. Compared with patients diagnosed with conventional celiac disease, patients diagnosed with USCD were younger and had a much lower rate of diarrhea, which by decision-tree analysis was the single factor discriminating between the two groups. Compared with healthy controls, individuals with conventional celiac disease, but not USCD, were more likely to present with anemia, diarrhea, a family history of celiac disease, lethargy, and osteoporosis. Patients with USCD and conventional disease had similar rates of IgA tissue transglutaminase antibodies (tTG), but USCD patients had lower titers (P less than .001). The USCD group also had fewer ferritin and folate deficiencies.

The researchers suggested that the differences in clinical phenotype may be due to the minimal loss of absorptive capacity associated with a short segment of villous atrophy. Given the younger average age at diagnosis and the lower tTG titers, USCD may represent an early stage of celiac disease, and the shorter lead time to diagnosis may explain why fewer nutritional deficiencies were observed.

Although USCD patients had a milder clinical phenotype, which has raised concerns that a strict gluten-free diet may be unnecessary, follow-up data demonstrated that a gluten-free diet produced improvement in symptoms and a significant decrease in the tTG titer. These results may indicate that the immune cascade was switched off, according to the researchers, and that early diagnosis may present a unique opportunity to prevent further micronutrient deficiency.

Dr. Mooney and his coauthors reported having no relevant financial disclosures.

FROM GASTROENTEROLOGY

Key clinical point: When added to a standard D2 biopsy, a single D1 biopsy from any site significantly increased the diagnostic yield for celiac disease.

Major finding: In total, 26 of 268 patients diagnosed with celiac disease had villous atrophy confined to D1 (ultrashort celiac disease); an additional D1 biopsy increased the diagnostic yield by 9.7% (P less than .0001), compared with a standard D2 biopsy.

Data source: A prospective study of 1,378 consecutive patients referred to a single teaching hospital for endoscopy from 2008 to 2014, 268 of whom were newly diagnosed with celiac disease and 26 with USCD.

Disclosures: Dr. Mooney and his coauthors reported having no relevant financial disclosures.

Racial disparities in colon cancer survival mainly driven by tumor stage at presentation

Although black patients with colon cancer received significantly less treatment than white patients, particularly for late-stage disease, much of the overall survival disparity between black and white patients was explained by tumor presentation at diagnosis rather than by treatment differences, according to an analysis of SEER data.

Among demographically matched black and white patients, the 5-year survival difference was 8.3% (P less than .0001). Presentation match reduced the difference to 5.0% (P less than .0001), which accounted for 39.8% of the overall disparity. Additional matching by treatment reduced the difference only slightly to 4.9% (P less than .0001), which accounted for 1.2% of the overall disparity. Black patients had lower rates for most treatments, including surgery, than presentation-matched white patients (88.5% vs. 91.4%), and these differences were most pronounced at advanced stages. For example, significant differences between black and white patients in the use of chemotherapy were observed for stage III (53.1% vs. 64.2%; P less than .0001) and stage IV (56.1% vs. 63.3%; P = .001).
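The shares of the disparity attributed to each matching step follow from simple arithmetic on the reported survival differences. The sketch below assumes each share is the drop in the 5-year survival gap divided by the demographic-matched gap of 8.3%; the function name is hypothetical.

```python
def percent_explained(before: float, after: float, baseline: float) -> float:
    """Share of the baseline gap removed by one matching step, in percent."""
    return round(100 * (before - after) / baseline, 1)


# 5-year survival differences reported in the article (percentage points).
demographic_gap = 8.3    # after demographic matching
presentation_gap = 5.0   # after additional matching on tumor presentation
treatment_gap = 4.9      # after additional matching on treatment

by_presentation = percent_explained(demographic_gap, presentation_gap, demographic_gap)
by_treatment = percent_explained(presentation_gap, treatment_gap, demographic_gap)

print(by_presentation, by_treatment)
```

With these inputs the calculation reproduces the 39.8% and 1.2% figures quoted above.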

“Our results indicate that tumor presentation, including tumor stage, is indeed one of the most important factors contributing to the racial disparity in colon cancer survival. We observed that, after controlling for demographic factors, black patients in comparison with white patients had a significantly higher proportion of stage IV and lower proportions of stages I and II disease. Adequately matching on tumor presentation variables (e.g., stage, grade, size, and comorbidity) significantly reduced survival disparities,” wrote Dr. Yinzhi Lai of the Department of Medical Oncology at Sidney Kimmel Cancer Center, Philadelphia, and colleagues (Gastroenterology. 2016 Apr 4. doi: 10.1053/j.gastro.2016.01.030).

Treatment differences in advanced-stage patients, compared with early-stage patients, explained a higher proportion of the demographic-matched survival disparity. For example, in stage II patients, treatment match resulted in modest reductions in 2-, 3-, and 5-year survival rate disparities (2.7%-2.8%, 4.1%-3.6%, and 4.6%-4.0%, respectively); by contrast, in stage III patients, treatment match resulted in more substantial reductions in 2-, 3-, and 5-year survival rate disparities (4.5%-2.2%, 3.1%-2.0%, and 4.3%-2.8%, respectively). A similar effect was observed in patients with stage IV disease. The results suggest that, “to control survival disparity, more efforts may need to be tailored to minimize treatment disparities (especially chemotherapy use) in patients with advanced-stage disease,” the investigators wrote.

The retrospective data analysis used patient information from 68,141 patients (6,190 black, 61,951 white) aged 66 years and older with colon cancer identified from the National Cancer Institute SEER-Medicare database. Using a novel minimum distance matching strategy, investigators drew from the pool of white patients to match three distinct comparison cohorts to the same 6,190 black patients. Close matches between black and white patients bypassed the need for model-based analysis.
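To give a sense of what minimum-distance matching means, here is a toy sketch, not the investigators' algorithm. It assumes two numeric covariates per patient and a Manhattan distance, and it searches every possible assignment by brute force, which is only workable for a handful of records; all names and data are invented for illustration.

```python
from itertools import permutations


def distance(a, b):
    """Manhattan distance between two covariate tuples (an assumed metric)."""
    return sum(abs(x - y) for x, y in zip(a, b))


def min_distance_match(targets, pool):
    """Pick one distinct pool member per target so the total distance is minimal.

    Brute force over all assignments; suitable for toy inputs only.
    Returns (list of pool indices, total distance).
    """
    best_cost, best_pick = None, None
    for pick in permutations(range(len(pool)), len(targets)):
        cost = sum(distance(t, pool[j]) for t, j in zip(targets, pick))
        if best_cost is None or cost < best_cost:
            best_cost, best_pick = cost, pick
    return list(best_pick), best_cost


# Hypothetical (age, stage) records: two patients to match, four candidates.
targets = [(70, 2), (80, 3)]
pool = [(71, 2), (79, 3), (66, 1), (85, 4)]

matches, total = min_distance_match(targets, pool)
print(matches, total)
```

Because matched pairs end up close on the chosen covariates, outcomes can be compared directly between the matched groups, which is the sense in which close matches bypass model-based adjustment.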

The primary matching analysis was limited by the inability to control for substantial differences in socioeconomic status, marital status, and urban/rural residence. A subcohort analysis of 2,000 matched black and white patients showed that when socioeconomic status was added to the demographic match, survival differences were reduced, indicating the important role of socioeconomic status on racial survival disparities.

Significantly better survival was observed in all patients who were diagnosed in 2004 or later, the year the Food and Drug Administration approved the important chemotherapy medicines oxaliplatin and bevacizumab. Separating the cohorts into those who were diagnosed before and after 2004 revealed that the racial survival disparity was lower in the more recent group, indicating a favorable impact of oxaliplatin and/or bevacizumab in reducing the survival disparity.

Prior studies have documented racial disparities in the incidence and outcomes of colon cancer in the United States. Black men and women have a higher overall incidence and more advanced stage of disease at diagnosis than white men and women, while being less likely to receive guideline-concordant treatment.

To extend this work, the authors evaluated treatment disparities between black and white colon cancer patients aged 66 years and older and examined the impact of a variety of patient characteristics on racial disparities in overall survival using a novel, sequential matching algorithm that minimized the overall distance between black and white patients based on demographic, tumor-specific, and treatment-related variables. The authors found that differences in overall survival were mainly driven by tumor presentation; however, advanced-stage black colon cancer patients received less guideline-concordant treatment than white patients. While this minimum-distance algorithm provided close black-white matches on prespecified factors, it could not accommodate other factors (for example, socioeconomic, marital, and urban/rural status); therefore, methodologic improvements to this method and comparisons to other commonly used approaches (that is, propensity score matching and weighting) are warranted.

Finally, these results apply to older black and white colon cancer patients with Medicare fee-for-service coverage only. Additional research using similar methods in older Medicare Advantage populations or younger adults may uncover unique drivers of overall survival disparities by race, which may require tailored interventions.

Jennifer L. Lund, Ph.D., is an assistant professor, department of epidemiology, University of North Carolina at Chapel Hill. She receives research support from the UNC Oncology Clinical Translational Research Training Program (K12 CA120780), as well as through a Research Starter Award from the PhRMA Foundation to the UNC Department of Epidemiology.

FROM GASTROENTEROLOGY

Key clinical point: Tumor stage at diagnosis had a greater effect on survival disparities between black and white patients with colon cancer than treatment differences.

Major finding: Among demographically matched black and white patients, the 5-year survival difference was 8.3% (P less than .0001); matching by presentation reduced the difference to 5.0% (P less than .0001), and additional matching by treatment reduced the difference only slightly to 4.9% (P less than .0001).

Data sources: In total, 68,141 patients (6,190 black, 61,951 white) aged 66 years and older with colon cancer were identified from the National Cancer Institute SEER-Medicare database. Three white comparison cohorts were assembled and matched to the same 6,190 black patients.

Disclosures: Dr. Lai and coauthors reported having no disclosures.

New interventions improve symptoms of GERD

Patients with chronic gastroesophageal reflux disease (GERD) who have failed long-term proton pump inhibitor (PPI) therapy can benefit from surgical intervention with magnetic sphincter augmentation, according to a new study that has validated the long-term safety and efficacy of this procedure.

All 85 patients in the cohort had used PPIs at baseline, but this declined to 15.3% at 5 years. Moderate or severe regurgitation also decreased significantly. It was present in 57% of patients at baseline, but in 1.2% at the 5-year follow-up.

In a second related study, researchers found that, compared with patients on esomeprazole therapy, GERD patients who underwent laparoscopic antireflux surgery (LARS) experienced significantly greater reductions in 24-hour esophageal acid exposure after 6 months and at 5 years. Both treatments were effective in achieving and maintaining a reduction in distal esophageal acid exposure down to a normal level, but LARS nearly abolished gastroesophageal acid reflux.

Both studies were published in the May issue of Clinical Gastroenterology and Hepatology (doi: 10.1016/j.cgh.2015.05.028; doi: 10.1016/j.cgh.2015.07.025).

Gastroesophageal reflux disease (GERD) is caused by excessive exposure of esophageal mucosa to gastric acid. Left unchecked, it can lead to chronic symptoms and complications, and is associated with a higher risk for Barrett’s esophagus and esophageal adenocarcinoma.

In the first study, Dr. Robert A. Ganz of Minnesota Gastroenterology PA, Plymouth, Minn., and colleagues conducted a prospective international study that looked at the safety and efficacy of a magnetic device in adults with GERD.

The Food and Drug Administration approved this magnetic device, which augments lower esophageal sphincter function in patients with GERD, in 2012; the current paper reports the final results after 5 years of follow-up.

Quality of life, reflux control, use of PPIs, and side effects were evaluated, and the GERD health-related quality of life (GERD-HRQL) questionnaire was administered at baseline to patients on and off PPIs, and after placement of the device.

A partial response to PPIs was defined as a GERD-HRQL score of 10 or less on PPIs and a score of 15 or higher off PPIs, or a 6-point or more improvement when scores on vs. off PPI were compared.

During the follow-up period, there were no device erosions, migrations, or malfunctions. The median GERD-HRQL score was 27 in patients not taking PPIs and 11 in patients on PPIs at the start of the study. After 5 years with the device in place, this score decreased to 4.

All patients reported that they had the ability to belch and vomit if they needed to. The proportion of patients reporting bothersome swallowing was 5% at baseline and 6% at 5 years (P = .739), and bothersome gas-bloat was present in 52% at baseline but decreased to 8.3% at 5 years.

“Without a procedure to correct an incompetent lower esophageal sphincter, it is unlikely that continued medical therapy would have improved these reflux symptoms, and the severity and frequency of the symptoms may have worsened,” wrote the authors.

In the second study, Dr. Jan G. Hatlebakk of Haukeland University Hospital, Bergen, Norway, and his colleagues analyzed data from a prospective, randomized, open-label trial that compared the efficacy and safety of LARS with esomeprazole (20 or 40 mg/d) over a 5-year period in patients with chronic GERD.

Among patients in the LARS group (n = 116), the median 24-hour esophageal acid exposure was 8.6% at baseline and 0.7% after 6 months and 5 years (P less than .001 vs. baseline).

In the esomeprazole group (n = 151), the median 24-hour esophageal acid exposure was 8.8% at baseline, 2.1% after 6 months, and 1.9% after 5 years (P less than .001, therapy vs. baseline, and LARS vs. esomeprazole).

Gastric acidity was stable in both groups, and patients who needed a dose increase to 40 mg/d experienced more severe supine reflux at baseline, but less esophageal acid exposure (P less than .02) and gastric acidity after their dose was increased. Esophageal and intragastric pH parameters, both on and off therapy, did not seem to predict long-term symptom breakthrough.

“We found that neither intragastric nor intraesophageal pH parameters could predict the short- and long-term therapeutic outcome, which indicates that response to therapy in patients with GERD is individual and not related directly to normalization of acid reflux parameters alone,” wrote Dr. Hatlebakk and coauthors.

VIDEO: Eight new quality measures key to performance of esophageal manometry

Health care providers performing esophageal manometry should keep in mind eight new quality measures listed and validated in a recent study published in the April issue of Clinical Gastroenterology and Hepatology (Clin Gastroenterol Hepatol. 2015 Oct 20. doi: 10.1016/j.cgh.2015.10.006), which researchers believe will significantly improve the performance of esophageal manometry and interpretation of data culled from such procedures.

“Despite its critical importance in the diagnosis and management of esophageal motility disorders, features of a high-quality esophageal manometry [study] have not been formally defined,” said the study authors, led by Dr. Rena Yadlapati of Northwestern University in Chicago. “Standardizing key aspects of esophageal manometry is imperative to ensure the delivery of high-quality care.”

SOURCE: AMERICAN GASTROENTEROLOGICAL ASSOCIATION

Dr. Yadlapati and her coinvestigators carried out the study in accordance with guidelines set out by the RAND/UCLA Appropriateness Method (RAM). They began by recruiting a panel of 15 esophageal manometry experts, with leadership, geographical diversity, and a wide range of practice settings being the key criteria in their selection.

Investigators then conducted a literature review, selecting the 30 most relevant randomized, controlled trials, retrospective studies, and systematic reviews from the past 10 years. From this review, investigators created a list of 30 possible quality measures, all of which were then sent to each member of the expert panel via email for them to rank on a 9-point interval scale, and modify if necessary.

Those rankings were then used to determine the appropriateness of each proposed quality measure at a face-to-face meeting among the investigators and the 15-member expert panel, at which 17 quality measures were determined to be appropriate. In all, 2 measures dealt with competency, 2 pertained to assessment before procedure, 3 were regarding performance of the procedure itself, and 10 were about interpretation of data obtained from esophageal manometry; the 10 measures concerning interpretation of data were compiled into 1 measure, leaving a total of 8 that were ultimately approved.

The quality measures for competency are as follows:

• “If esophageal manometry is performed, then the technician must be competent to perform esophageal manometry.”

• “If a physician is considered competent to interpret esophageal manometry, then the physician must interpret a minimum number of esophageal manometry studies annually.”

For assessment before procedure, the measures state the following:

• “If a patient is referred for esophageal manometry, then the patient should have undergone an evaluation for structural abnormalities before manometry.”

• “If an esophageal manometry is performed, then informed consent must be obtained and documented.”

Quality measures regarding the procedure itself state the following:

• “If an esophageal manometry study is performed, then a time interval of at least 30 seconds should occur between swallows.”

• “If an esophageal manometry study is performed, then at least 10 wet swallows should be attempted.”

• “If an esophageal manometry study is performed, then at least seven evaluable wet swallows should be included.”
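The three procedure measures above are simple numeric thresholds, so they can be checked mechanically. The following is only a minimal sketch, not anything published by the study authors: the `WetSwallow` record, its field names, and the `check_procedure_measures` function are hypothetical, and it assumes the 30-second interval is measured between the start times of consecutive swallows, which the quoted measure does not specify.

```python
from dataclasses import dataclass
from typing import Dict, List

# Thresholds taken from the three quoted procedure measures.
MIN_SWALLOW_INTERVAL_S = 30
MIN_WET_SWALLOWS_ATTEMPTED = 10
MIN_EVALUABLE_WET_SWALLOWS = 7


@dataclass
class WetSwallow:
    """Hypothetical record of one attempted wet swallow."""
    start_time_s: float  # seconds from the start of the study
    evaluable: bool      # whether the swallow could be analyzed


def check_procedure_measures(swallows: List[WetSwallow]) -> Dict[str, bool]:
    """Return a pass/fail flag for each of the three procedure measures."""
    times = sorted(s.start_time_s for s in swallows)
    # Assumption: interval = gap between consecutive swallow start times.
    gaps = [later - earlier for earlier, later in zip(times, times[1:])]
    return {
        "interval_ok": all(gap >= MIN_SWALLOW_INTERVAL_S for gap in gaps),
        "attempted_ok": len(swallows) >= MIN_WET_SWALLOWS_ATTEMPTED,
        "evaluable_ok": sum(s.evaluable for s in swallows) >= MIN_EVALUABLE_WET_SWALLOWS,
    }


# Example: 10 swallows spaced 30 seconds apart, 8 of them evaluable.
example = [WetSwallow(start_time_s=30.0 * i, evaluable=(i < 8)) for i in range(10)]
result = check_procedure_measures(example)
```

In this example all three flags come back true; dropping one swallow or shortening any gap below 30 seconds would flip the corresponding flag.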

Finally, regarding interpretation of data, the single quality measure states that “If an esophageal manometry study is interpreted, then a complete procedure report should document the following”:

• “Reason for referral.”

• “Clinical diagnosis.”

• “Diagnosis according to formally validated classification scheme.”

• “Documentation of formally validated classification scheme used.”

• “Summary of results.”

• “Tabulated results including upper esophageal sphincter activity, interpretation of esophagogastric junction relaxation, documentation of pressure inversion point if technically feasible, pressurization pattern and contractile pattern.”

• “Technical limitation (if applicable).”

• “Communication to referring provider.”

“These eight appropriate quality measures are considered absolutely necessary in the performance and interpretation of esophageal manometry,” the authors concluded. “In particular, measures 3-8 are clinically feasible and measurable, and should serve as an initial framework to benchmark quality and reduce variability in esophageal manometry practices.”

This study was funded by the Alumnae of Northwestern University, and a grant to Dr. Yadlapati (T32 DK101363-02). Five coinvestigators disclosed consultancy and speaking relationships with Boston Scientific, Cook Endoscopy, EndoStim, Given Imaging, Covidien, and Sandhill Scientific.

FROM CLINICAL GASTROENTEROLOGY AND HEPATOLOGY

Key clinical point: Health care providers should consider eight new validated quality measures when performing esophageal manometry and interpreting its data.

Major finding: Of 30 possible measures, 10 regarding interpretation of data were compiled into a single quality measure, 2 were classified as competency measures, 2 were classified as assessments necessary prior to an esophageal manometry procedure, and 3 were classified as integral to the procedure of esophageal manometry, for a total of 8.

Data source: Survey of existing literature and expert interviews on validated quality measures on the basis of the RAM.

Disclosures: Study was partly funded by a grant from the Alumnae of Northwestern University; five coauthors reported financial disclosures.

VIDEO: Rectal indomethacin does not prevent pancreatitis post ERCP

Patients who receive rectal indomethacin after undergoing endoscopic retrograde cholangiopancreatography (ERCP) are not any less likely to develop pancreatitis than individuals who don’t, according to the findings of a recent study published in Gastroenterology (2016 Jan 9. doi: 10.1053/j.gastro.2015.12.018).

“These results are in contrast to recent studies highlighting the benefit of rectal NSAIDs to prevent PEP [post-ERCP pancreatitis] in high-risk patients [and] counter the guidelines espoused by the European Society for Gastrointestinal Endoscopy, which recently recommended giving rectal indomethacin to prevent PEP in all patients undergoing ERCP,” said the study authors, led by Dr. John M. Levenick of Penn State University in Hershey, Pa.

SOURCE: AMERICAN GASTROENTEROLOGICAL ASSOCIATION

Dr. Levenick and his coinvestigators screened 604 consecutive patients undergoing ERCP, with and without endoscopic ultrasound, at the Dartmouth-Hitchcock Medical Center between March 2013 and December 2014, eventually enrolling and randomizing 449 subjects into two cohorts: one in which subjects were given indomethacin after undergoing ERCP (n = 223), and one in which subjects were simply given a placebo (n = 226). Randomization happened after subjects’ major papilla had been reached, and cannulation attempts were started.

Individuals were excluded if they had active acute pancreatitis or had undergone ERCP to treat or diagnose acute pancreatitis, if they had any contraindications or allergies to NSAIDs, or were younger than 18 years of age, among other factors. The mean age of the indomethacin cohort was 64.9 years, with 118 (52.9%) females; in the placebo cohort, mean age was 64.3 years and 118 (52.2%) were female.

Pancreatitis occurred in 27 subjects overall: 16 (7.2%) in the indomethacin cohort and 11 (4.9%) in the placebo cohort (P = .33). No subjects receiving indomethacin had severe or moderately severe PEP, but one subject had severe PEP and one had moderately severe PEP in the placebo cohort (P = 1.0). There was no necrotizing pancreatitis in either cohort, nor were there any significant differences in gastrointestinal bleeding (P = .75), death (P = .25), or 30-day hospital readmission (P = .1) between the two cohorts.
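The reported incidence figures follow directly from the event counts and the cohort sizes given earlier (223 on indomethacin, 226 on placebo). As a quick arithmetic check only, and not a reproduction of the study's statistical testing, the sketch below recomputes the two percentages and their difference; the helper name `incidence_pct` is illustrative.

```python
def incidence_pct(events: int, total: int) -> float:
    """Incidence as a percentage, rounded to one decimal place."""
    return round(100 * events / total, 1)


# Counts reported in the article: pancreatitis cases / cohort size.
indomethacin_pct = incidence_pct(16, 223)  # 7.2
placebo_pct = incidence_pct(11, 226)       # 4.9

# Absolute difference in percentage points, computed from unrounded rates.
difference_points = round(100 * 16 / 223 - 100 * 11 / 226, 1)  # 2.3
```

The pancreatitis rate was about 2.3 percentage points higher with indomethacin, a gap the authors found was not statistically significant (P = .33).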

“Prophylactic rectal indomethacin did not reduce the incidence or severity of PEP in consecutive patients undergoing ERCP,” Dr. Levenick and his coauthors concluded, adding that “guidelines that recommend the administration of rectal indomethacin in all patients undergoing ERCP should be reconsidered.”

This study was funded by the National Pancreas Foundation and a grant from the National Institutes of Health. Dr. Levenick and his coauthors did not report any financial disclosures.

Acute pancreatitis is the most common and feared complication of endoscopic retrograde cholangiopancreatography (ERCP). The incidence of post-ERCP pancreatitis is around 10% with a mortality of 0.7% (Gastrointest Endosc. 2015;81:143-9). Recent advances in noninvasive pancreaticobiliary imaging, risk stratification before ERCP, prophylactic pancreatic stent placement, and administration of nonsteroidal anti-inflammatory drugs (NSAIDs) have improved the overall risk-benefit ratio of ERCP.

NSAIDs are potent inhibitors of phospholipase A2, cyclooxygenase, and the activation of platelets and endothelium, all of which play a central role in the pathogenesis of post-ERCP pancreatitis. NSAIDs constitute an attractive option in clinical practice, because they are inexpensive and widely available with a favorable risk profile. A recent multicenter randomized controlled trial (RCT) of 602 patients at high risk for post-ERCP pancreatitis showed that rectal indomethacin is associated with a 7.7% absolute and a 46% relative risk reduction of post-ERCP pancreatitis (N Engl J Med. 2012;366:1414-22). These findings have been broadly adopted in endoscopic practice in the United States.

Dr. Georgios Papachristou

The RCT presented by Dr. Levenick and his colleagues evaluated the efficacy of rectal indomethacin in preventing post-ERCP pancreatitis among consecutive patients undergoing ERCP in a single U.S. center. This study was a well-designed and well-conducted RCT that followed the CONSORT guidelines and used an independent data and safety monitoring board.

The authors reported that rectal indomethacin did not reduce the rate of post-ERCP pancreatitis (7.2%) compared with placebo (4.9%). Of importance, 70% of the patients included were at average risk for post-ERCP pancreatitis. Furthermore, despite a calculated sample size of 1,398 patients, the study was terminated early after enrolling only 449 patients, because the interim analysis indicated that continuing would be futile: the trial was unlikely to show a statistically significant difference.

This well-executed RCT reports no benefit in administering rectal indomethacin to all patients undergoing ERCP. Evidence strongly supports that rectal indomethacin remains an important advancement in preventing post-ERCP pancreatitis. However, its benefit is likely limited to a selected group of patients: those at high risk for post-ERCP pancreatitis. Further studies are under way to clarify whether rectal indomethacin alone or indomethacin plus prophylactic pancreatic stenting is more effective in preventing post-ERCP pancreatitis in high-risk patients.

Dr. Georgios Papachristou is associate professor of medicine at the University of Pittsburgh. He is a consultant for Shire and has received funding from the National Institutes of Health and the VA Health System.

FROM GASTROENTEROLOGY

Key clinical point: Rectal indomethacin does not prevent pancreatitis in patients who undergo endoscopic retrograde cholangiopancreatography (ERCP).

Major finding: 7.2% of subjects on indomethacin and 4.9% on placebo developed post-ERCP pancreatitis, indicating no significant difference between the two cohorts (P = .33).

Data source: Prospective, double-blind, placebo-controlled study of 449 ERCP patients between March 2013 and December 2014.

Disclosures: Study funded by National Pancreas Foundation and National Institutes of Health. Dr. Levenick and his coauthors did not report any relevant financial disclosures.

VIDEO: Newer MRI hardware, software significantly better at detecting pancreatic cysts

As magnetic resonance imaging technology continues to advance year after year, so does MRI’s ability to accurately detect pancreatic cysts, according to a new study published in the April issue of Clinical Gastroenterology and Hepatology (doi: 10.1016/j.cgh.2015.08.038).

“To our knowledge, this is the first study to analyze the relationship between the technical improvements in imaging techniques (specifically, MRI) and the presence of incidentally found PCLs [pancreatic cystic lesions],” said the study authors, led by Dr. Michael B. Wallace of the Mayo Clinic in Jacksonville, Fla.

Dr. Wallace and his coinvestigators launched this retrospective descriptive study, selecting the first 50 consecutive abdominal MRI patients at the Jacksonville Mayo Clinic during January and February of each year from 2005 through 2014, for a total of 500 cases that met the inclusion criteria. Patients were excluded if they had preexisting symptomatic or asymptomatic pancreatitis (either acute or chronic), pancreatic masses, pancreatic cysts, pancreatic surgery, pancreatic symptoms, or any pancreas-related indications found by MRI.