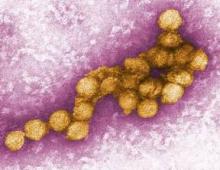

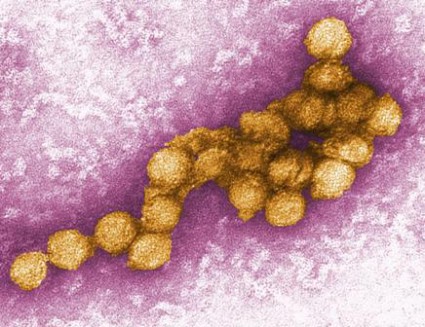

Analysis could help to predict West Nile epidemics

Analyzing three local factors helps to predict when an epidemic of West Nile disease is imminent or has just begun, according to a report in the July 17 issue of JAMA.

This in turn should allow augmented mosquito control efforts and other measures such as public education to limit human illness from the virus just as an epidemic commences, said Dr. Wendy M. Chung of Dallas County Health and Human Services and her associates.

By studying these three factors – local weather patterns, the geographical distribution of recent outbreaks, and the current mosquito vector index (an estimate of the average number of virus-infected mosquitoes collected in surveillance traps each night) – public health specialists may be able to identify rapid rises in West Nile activity before it is too late to intervene. Until now, the best that such experts could do was to "wait to initiate augmented vector control until significant numbers of human cases and deaths [had been] reported."

This wait has allowed epidemics to take root, since the incubation period is 1-2 weeks between mosquito bite and symptom onset, and since there is a further 1- to 2-week delay for viral cultures to be completed and positive results to be reported to authorities, the investigators said.

Dr. Chung and her colleagues came to these conclusions after analyzing data from the 2012 resurgence in West Nile virus that occurred nationwide but was particularly damaging in the Dallas County area. "Dallas has been a known focus of mosquito-borne encephalitis since 1966," they noted, and the county had an ongoing surveillance program that included collecting mosquitoes in traps.

Before the 2012 epidemic there, Dallas had enjoyed 5 years of relative quietude in West Nile virus activity.

Then, between May and early December 2012, 1,162 cases of West Nile viremia were reported in Dallas County; 398 cases of viral illness were confirmed, including 19 fatal cases. This "record-setting outbreak" began a month earlier than previous West Nile seasons had, and the number of new cases also escalated more rapidly than in previous seasons.

An additional 17 cases of West Nile viremia were identified among blood donors, which was more than twice the number of viremic blood donors identified during the previous outbreak. The overall incidence of West Nile neuroinvasive disease in Dallas County was 7.30 per 100,000 residents in 2012, more than twice as high as the previous peak incidence of 2.91 per 100,000, recorded in 2006.

The demographic and clinical characteristics of cases in the 2012 outbreak were similar to those in previous outbreaks. In 2012, 96% required hospitalization, 35% intensive care, and 18% assisted ventilation. The case fatality rate was 10%.

An analysis of local weather patterns revealed that the winter preceding the 2012 epidemic was the mildest in a decade, with no hard winter freezes, a record peak in the number of days with above-normal temperatures, and a record peak in the amount of winter rains. Summer temperatures also were warmer than average that year, and there was less wind during the months that usually are windy.

These findings suggest that West Nile activity increases when extreme weather conditions favor mosquito survival over the winter, a longer period of mosquito-to-bird transmission in early spring, and an early start to human infections, Dr. Chung and her associates said (JAMA 2013;310:297-307).

An analysis of the geographic distribution of cases over time revealed a "repeated predilection of cases" in the northern region of the county, with a particular hot spot in a defined north-central location. "The census tracts in this high-risk hot spot were distinguished from those in other areas by higher property values, greater housing density, and a higher percentage of houses unoccupied (reflecting the current economic downturn)," the researchers wrote.

These findings concur with those of previous studies in metropolitan areas. Such neighborhoods have more neglected swimming pools, which increase mosquito populations, and less forestation, which allows greater viral amplification among birds. "Whatever the biological explanation, identifying a perennial geographical pattern of human infections should be useful in targeting such areas for more intensive public health prevention measures, including preseason source reduction, larviciding, and education," Dr. Chung and her associates wrote.

Finally, mosquito surveillance and screening for West Nile virus carriage allowed the calculation of a species-specific vector index: an estimate of the average number of infected mosquitoes caught per trap-night. The weekly vector index in 2012 first detected West Nile in May, a full month earlier than in previous seasons.

The 2012 vector index also began increasing earlier and reached a higher peak than in previous seasons. "Sequential increases in the weekly vector index early in the 2012 season significantly predicted the number of patients with onset of symptoms of West Nile disease in the subsequent 1 to 2 weeks," the investigators said.

For predicting human illness, the vector index was superior to "other entomologic risk measures" such as mosquito abundance or mosquito infection rates, they added.

In the 2012 epidemic, the vector index passed a threshold of 0.5 by the end of June, at which time only three cases of West Nile disease had been reported. Taking action at this point instead of waiting for a sufficient number of cases to be reported would have averted much morbidity and mortality.
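The vector index described above can be illustrated with a short calculation. The following is a minimal sketch, assuming the commonly used surveillance definition in which, for each mosquito species, the average number caught per trap-night is multiplied by the estimated proportion infected and the products are summed. The class name, field names, and sample numbers are hypothetical and are not taken from the study; the infection proportion is treated as a given input rather than estimated from pooled test results.

```python
from dataclasses import dataclass


@dataclass
class SpeciesWeek:
    """One week of trap data for a single mosquito species (hypothetical fields)."""
    mosquitoes_collected: int  # total mosquitoes of this species caught that week
    trap_nights: int           # number of traps multiplied by nights they were set
    infection_rate: float      # estimated proportion of mosquitoes carrying the virus


def vector_index(species_weeks):
    """Sum over species of (mosquitoes per trap-night) x (estimated infection proportion)."""
    return sum(
        (s.mosquitoes_collected / s.trap_nights) * s.infection_rate
        for s in species_weeks
        if s.trap_nights > 0  # skip species with no trapping effort that week
    )


ACTION_THRESHOLD = 0.5  # threshold cited in the article for the 2012 season

# Illustrative numbers only; these are not data from the study.
week = [SpeciesWeek(mosquitoes_collected=1200, trap_nights=40, infection_rate=0.02)]
vi = vector_index(week)
print(f"vector index = {vi:.2f}, exceeds threshold: {vi > ACTION_THRESHOLD}")
```

In this made-up week, 30 mosquitoes per trap-night with 2% infected gives an index of 0.60, which would cross the 0.5 threshold mentioned above.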

The researchers also addressed the concern that augmented spraying of insecticide at the onset of an outbreak of West Nile would harm residents. They analyzed the daily incidence of emergency department visits for skin rashes and acute upper respiratory distress and found no increase in these conditions during the 8-day period of ultralow-volume aerial spraying of minimally toxic pyrethroid insecticides approved by the Environmental Protection Agency for this purpose.

No financial conflicts of interest were reported.

Changing weather patterns, including increasingly mild winters and warmer summers that favor West Nile virus transmission, are becoming more common, said Dr. Stephen M. Ostroff.

Global climate change thus may expand both the zones of risk for West Nile disease and the duration of the typical disease "season."

The study by Dr. Chung and her colleagues highlights the importance of maintaining strong vector surveillance and management programs, rather than cutting their funding as many local, state, and federal sources are currently doing, he noted.

Dr. Ostroff is formerly of the Centers for Disease Control and Prevention, Atlanta, and the Pennsylvania Department of Health, Harrisburg. He reported no financial conflicts of interest. These remarks were taken from his editorial accompanying Dr. Chung’s report (JAMA 2013;310:267-8).

FROM JAMA

Major Finding: The overall West Nile neuroinvasive disease incidence rate in Dallas County was 7.30 per 100,000 residents in 2012, compared with 2.91 in 2006, the year of the second-largest outbreak in the county.

Data Source: An analysis of epidemiologic, meteorologic, and geospatial data collected during the 2012 West Nile virus epidemic in Dallas County.

Disclosures: No financial conflicts of interest were reported.

Longer duration of obesity linked to coronary calcification

Longer duration of both overall and abdominal obesity is strongly associated with subclinical coronary heart disease at midlife, as well as with increased progression of that disease over the course of 10 years, according to an analysis of the CARDIA study. The results were published in the July 17 issue of JAMA.

These associations are independent of the degree of adiposity, meaning that any level of overall or abdominal obesity corresponds with increased coronary risk, said Jared P. Reis, Ph.D., of the National Heart, Lung, and Blood Institute, Bethesda, Md., and his associates.

"Each additional year of overall or abdominal obesity beginning in early adulthood was associated with an HR or OR of 1.02 to 1.04 for coronary artery calcification and its progression" in middle age, they noted.

"Our findings suggest that preventing or at least delaying the onset of obesity in young adulthood may substantially reduce the risk of coronary atherosclerosis and limit its progression in later life," Dr. Reis and his colleagues said.

The investigators examined this issue using data from the CARDIA (Coronary Artery Risk Development in Young Adults) study, a multicenter, community-based, longitudinal cohort assessing the development and the determinants of cardiovascular disease over time. The study comprised 5,115 young adults aged 18-30 years at baseline in 1985-1986 who resided in Birmingham, Ala.; Chicago; Minneapolis; and Oakland, Calif. These subjects now have been reexamined at 2, 5, 7, 10, 15, 20, and 25 years after baseline.

The presence and degree of coronary artery calcification were measured using chest CT at year 15 (2000-2001), year 20 (2005-2006), and/or year 25 (2010-2011).

For their study, Dr. Reis and his associates focused on 3,275 of these CARDIA participants who were not obese at baseline. Roughly 46% were black and 51% were women.

A total of 40.4% of their study subjects developed overall obesity and 41.0% developed abdominal obesity during follow-up, with significant overlap in these two categories. The mean age at onset of overall obesity was 35.4 years, and mean age at onset of abdominal obesity was 37.7 years. The mean duration of overall obesity was 13 years, and that of abdominal obesity was 12 years.

Subclinical coronary artery calcification was identified in 27.5% of the 3,275 study subjects overall.

A total of 38.2% of subjects who had overall obesity for more than 20 years were found to have coronary artery calcification, as were 39.3% of those who had abdominal obesity for more than 20 years. In contrast, these rates were 24.9% and 24.7% in nonobese adults, the investigators said (JAMA 2013;310:280-8 [doi:10.1001/jama.2013.7833]).

Similarly, 28.4% of subjects who had overall obesity for more than 20 years were found to have high scores on a measure of coronary artery calcification, as were 28.2% of those who had abdominal obesity for more than 20 years. In contrast, these rates were 15.2% and 15.5% in nonobese adults.

In addition, the rates of coronary artery calcification were higher with increasing duration of obesity. For example, the rate of calcification was 11 per 1,000 person-years in subjects with 0 years of obesity, compared with 16.7 per 1,000 person-years in subjects with more than 20 years of obesity.
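To put these figures in perspective, the sketch below works through two rough calculations. It assumes that the two reported rates can be compared as a simple unadjusted ratio, and that the per-year HR or OR of 1.02 to 1.04 quoted earlier compounds multiplicatively over time (a log-linear effect). Both assumptions are simplifications for illustration, the function names are hypothetical, and the event counts in the middle example are invented.

```python
def rate_per_1000_person_years(events: int, person_years: float) -> float:
    """Events per 1,000 person-years of follow-up."""
    return 1000 * events / person_years


def compounded_ratio(per_year_ratio: float, years: int) -> float:
    """Compound a per-year ratio over several years (assumes a log-linear effect)."""
    return per_year_ratio ** years


# Rates reported in the article (per 1,000 person-years).
RATE_NO_OBESITY = 11.0
RATE_OVER_20_YEARS = 16.7
crude_rate_ratio = RATE_OVER_20_YEARS / RATE_NO_OBESITY
print(f"crude rate ratio: {crude_rate_ratio:.2f}")

# Hypothetical counts, only to show how such a rate is formed.
print(rate_per_1000_person_years(events=55, person_years=5000))

# Per-year ratios of 1.02 to 1.04 quoted by the investigators, compounded over 20 years.
for ratio in (1.02, 1.04):
    print(f"{ratio:.2f} per year over 20 years: {compounded_ratio(ratio, 20):.2f}")
```

Under these assumptions the crude ratio comes to about 1.5, and 20 years of compounding gives a ratio of roughly 1.5 to 2.2. Neither figure is reported by the study itself.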

Coronary artery calcification also was more likely to progress over the course of 10 years in obese than in nonobese subjects. Rates of progression were 25.2% in adults with more than 20 years of overall obesity and 27.7% in those with more than 20 years of abdominal obesity, compared with 20.2% and 19.5%, respectively, in nonobese adults.

The association between obesity and coronary artery calcification did not differ by subjects’ race or sex.

"These findings suggest that the longer duration of exposure to excess adiposity as a result of the obesity epidemic and an earlier age at onset will have important implications [for] the future burden of coronary atherosclerosis and potentially [for] the rates of clinical cardiovascular disease in the United States," Dr. Reis and his associates said.

They added that although the mechanisms by which prolonged adiposity affects coronary artery calcification are not precisely known, it is likely that the sustained expression and secretion of proinflammatory adipocytokines play a role. "Extended impairment of the fibrinolytic system via increased markers of hypercoagulability and hypofibrinolysis may also contribute to atherosclerotic vascular disease," the researchers wrote.

Obesity is also thought to impair nitric-oxide-dependent endothelial function, increase oxidative stress, and upregulate vasoconstrictor proteins, all of which may contribute to coronary atherosclerosis, they said.

This study was supported by the National Heart, Lung, and Blood Institute. Dr. Reis reported no financial conflicts of interest; one of his associates reported receiving grants from Novo Nordisk.

FROM JAMA

Major Finding: Among subjects with overall obesity of more than 20 years’ duration, 38.2% were found to have coronary artery calcification, as were 39.3% of those with abdominal obesity of more than 20 years’ duration. These rates were 24.9% and 24.7% in nonobese adults.

Data Source: A secondary analysis of data from a multicenter community-based longitudinal cohort study in 3,275 nonobese young adults who were followed for 25 years.

Disclosures: This study was supported by the National Heart, Lung, and Blood Institute. Dr. Reis reported no financial conflicts of interest; one of his associates reported receiving grants from Novo Nordisk.

Androgen deprivation therapy linked to acute kidney injury

Androgen deprivation therapy was strongly associated with an increased risk of acute kidney injury among men with nonmetastatic prostate cancer, according to a report in the July 17 issue of JAMA.

This elevation in risk varied slightly among different types of androgen deprivation agents, and was strongest with therapies that combine gonadotropin-releasing hormone agonists with oral antiandrogens. That suggests "a possible additive effect ... on both receptor antagonism and reduction of testosterone excretion," said Francesco Lapi, Pharm.D., Ph.D., of the Centre for Clinical Epidemiology, Jewish General Hospital, Montreal, and his associates (JAMA 2013;310:289-96).

The researchers discovered the risk elevation in what they described as the first population-based study to investigate the association between androgen deprivation therapy and acute kidney injury. They performed the study because even though the treatment traditionally has been reserved for advanced disease, it is now used increasingly in patients with earlier stages of prostate cancer.

In addition, the investigators were prompted to examine a possible link because of the high mortality (approximately 50%) associated with acute kidney injury.

"Although only one case report of flutamide-related acute kidney injury has been published to date, androgen deprivation therapy and its hypogonadal effect have well-known consequences consistent with our findings," they noted.

Dr. Lapi and his colleagues used two large databases in the United Kingdom, the Clinical Practice Research Datalink and the Hospital Episodes Statistics database, to identify 10,250 men newly diagnosed as having prostate cancer in 1998-2008 who were 40 years of age or older at diagnosis and were followed for a mean of 4 years. This yielded more than 42,000 person-years of follow-up.

A total of 232 cases of acute kidney injury occurred, for an overall incidence of 5.5/1,000 person-years, said Dr. Lapi and his associates.
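The incidence figure follows from the case count and the follow-up time. As a rough check, the sketch below recomputes it; the function name is illustrative, and the denominator of 42,000 is an assumed round figure, since the report says only that follow-up exceeded 42,000 person-years.

```python
def incidence_per_1000(cases: int, person_years: float) -> float:
    """Crude incidence rate expressed per 1,000 person-years."""
    if person_years <= 0:
        raise ValueError("person_years must be positive")
    return cases / person_years * 1000


# 232 cases are reported in the article. The 42,000 person-years is an
# assumption: the article states only "more than 42,000".
rate = incidence_per_1000(232, 42_000)
print(f"{rate:.1f} per 1,000 person-years")
```

With these inputs the result rounds to 5.5 per 1,000 person-years, in line with the figure the investigators reported.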

These cases were matched for age, year of diagnosis, and duration of follow-up with 2,721 control subjects who did not develop acute kidney injury.

Compared with control subjects, men who were using androgen deprivation therapy had a significantly increased risk of acute kidney injury, with an odds ratio of 2.48. That association did not change when the data were adjusted to account for possible confounders, such as comorbidities known to impair kidney function, medications known to have renal toxicity, the severity of the underlying prostate cancer, and the intensity of other cancer treatments.

The investigators then analyzed the data according to type of androgen deprivation therapy, dividing the regimens into six mutually exclusive categories: gonadotropin-releasing hormone (GnRH) agonists (leuprolide, goserelin, triptorelin); oral antiandrogens (cyproterone acetate, flutamide, bicalutamide, nilutamide); combined androgen blockade (GnRH agonists plus oral antiandrogens); bilateral orchiectomy; estrogens; and combinations of those.

The odds ratios were highest for combined androgen blockade and also were significantly elevated for other combination therapies. Only the odds ratios for oral antiandrogens alone and for orchiectomy alone failed to reach statistical significance, although both were above 1.0, the investigators said.

The duration of androgen deprivation therapy was examined in a further analysis of the data. The risk of acute kidney injury was highest early in the course of treatment and decreased slightly, but it remained significantly elevated with longer duration of use.

Finally, in a sensitivity analysis that excluded the 54 cases and 842 controls who had abnormal creatinine levels at baseline, the results were consistent with those of the primary analysis.

The mechanism by which androgen deprivation therapy exerts an adverse effect on the kidney is not known, but the treatment is known to raise the risks of the metabolic syndrome and cardiovascular disease. "A similar rationale can be postulated for the risk of acute kidney injury," Dr. Lapi and his associates said.

The dyslipidemia and hyperglycemia of the metabolic syndrome may promote tubular atrophy and interstitial fibrosis, and may impair glomerular function by expanding and thickening the membranes of the interstitial tubules. Both dyslipidemia and hyperglycemia also raise the risk of thrombosis and induce oxidative stress, which can impact renal function.

In addition, testosterone is thought to protect the kidneys by inducing vasodilation in the renal vessels and enhancing nitric oxide production. So, antagonizing testosterone could promote damage to the glomerulus. And the hypogonadism induced by androgen deprivation can also lead to estrogen deficiency, reducing estrogen’s protective effect against ischemic renal injury, the investigators said.

The study was supported by Prostate Cancer Canada, the Canadian Institutes of Health Research, and Fonds de recherche en Sant

FROM JAMA

Major finding: Men with prostate cancer who used androgen deprivation therapy had a significantly increased risk of acute kidney injury, with an odds ratio of 2.48, compared with those who didn’t use the therapy.

Data Source: A population-based case-control study involving 10,250 men aged 40 years and older, newly diagnosed with nonmetastatic prostate cancer, who were followed for a mean of 4 years for the development of acute kidney injury.

Disclosures: The study was supported by Prostate Cancer Canada, the Canadian Institutes of Health Research, and Fonds de recherche en Sant

CD123 differentiates acute GVHD from infections, drug reactions

CD123 expression in the intestinal mucosa may be a useful immunohistochemical marker to differentiate acute colonic graft-versus-host disease in hematopoietic stem-cell transplant patients who develop nonspecific gastrointestinal symptoms, according to Dr. Jingmei Lin and her colleagues.

Immunostaining endoscopic biopsy samples with CD123, an interleukin-3 receptor subunit, can identify plasmacytoid dendritic cells that are critical to the development of graft-versus-host disease (GVHD) but are not known to be present in infectious processes, adverse drug reactions, or chemoradiation toxicities. CD123 immunostaining was associated with a sensitivity of 66% and a specificity of 97% for acute GVHD, the researchers said (Hum. Pathol. 2013 June 21 [doi:10.1016/j.humpath.2013.02.023]).

Gastrointestinal GVHD can present as a variety of nonspecific symptoms and can be difficult to distinguish pathologically from colonic cytomegalovirus, a major complication following stem-cell transplantation. Gastrointestinal GVHD also can be hard to differentiate pathologically from other opportunistic infections, such as Clostridium difficile and adenovirus infections, as well as from adverse reactions to chemotherapy and radiation. Early distinction is crucial because treatment approaches differ for these three entities and because early therapy improves outcomes for acute GVHD, said Dr. Lin of the departments of pathology and laboratory medicine, Indiana University, Indianapolis, and her associates.

The researchers reviewed 38 colonic endoscopy samples from stem-cell transplant recipients known to have gastrointestinal GVHD and compared them with 14 samples from patients who had not undergone transplantation and who were known to have cytomegalovirus colitis.

The researchers also assessed 11 biopsy samples (colon, stomach, small bowel, and esophagus) from patients who had taken mycophenolate, which is used for the prophylaxis of GVHD. They additionally assessed 47 biopsies (upper and lower GI) from patients who had undergone hematopoietic stem-cell transplantation but had not developed GVHD or infection, and 5 colon biopsies from control subjects.

All of the GVHD patients had presented with nonspecific symptoms of diarrhea, abdominal pain, abdominal cramping, nausea, and/or vomiting.

Among the 38 samples from patients with acute GVHD, 25 (66%) were positive on CD123 staining, showing plasmacytoid dendritic cells in the lamina propria. This marker increased in sensitivity as lesion grade increased: 60% of grade-1 and grade-2 lesions were positive, compared with 72% of grade-3 and grade-4 lesions.

In contrast, 2 of the 14 (14%) samples from patients with CMV, none of the 47 samples from transplant patients without GVHD, none of the 11 samples from patients who took mycophenolate, and none of the 5 control samples were positive for CD123.
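The reported sensitivity and specificity can be roughly reproduced from the sample counts above. The sketch below assumes that all four comparison groups (CMV colitis, transplant without GVHD, mycophenolate, and controls) are pooled as the non-GVHD denominator; the report does not spell out that pooling, so treat it as an assumption. The function and variable names are illustrative.

```python
def sensitivity(true_pos: int, false_neg: int) -> float:
    """Fraction of diseased samples that test positive."""
    return true_pos / (true_pos + false_neg)


def specificity(true_neg: int, false_pos: int) -> float:
    """Fraction of non-diseased samples that test negative."""
    return true_neg / (true_neg + false_pos)


# Acute GVHD samples: 25 of 38 were CD123-positive.
gvhd_total, gvhd_positive = 38, 25

# Comparison groups as (samples, CD123-positive), pooled by assumption.
comparison_groups = {
    "CMV colitis": (14, 2),
    "transplant without GVHD": (47, 0),
    "mycophenolate": (11, 0),
    "controls": (5, 0),
}
non_gvhd_total = sum(n for n, _ in comparison_groups.values())
false_pos = sum(pos for _, pos in comparison_groups.values())

sens = sensitivity(gvhd_positive, gvhd_total - gvhd_positive)
spec = specificity(non_gvhd_total - false_pos, false_pos)
print(f"sensitivity {sens:.0%}, specificity {spec:.0%}")
```

Under that pooling assumption, 25 of 38 gives a sensitivity of about 66%, and 75 of 77 gives a specificity of about 97%, matching the figures the researchers cited.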

The investigators said that they have no funding or conflicts of interest to disclose.

FROM HUMAN PATHOLOGY

Major finding: Among 38 colonic endoscopy samples from patients with acute GVHD, 25 (66%) were positive on CD123 staining. The marker increased in sensitivity as lesion grade increased: 60% of grade-1 and grade-2 lesions were positive, compared with 72% of grade-3 and grade-4 lesions.

Data source: A review of endoscopic biopsy samples from stem-cell transplant recipients known to have gastrointestinal GVHD, patients known to have cytomegalovirus colitis, patients who had taken mycophenolate, patients who had hematopoietic stem cell transplants and had not developed GVHD or infection, and control subjects.

Disclosures: The investigators said that they have no funding or conflicts of interest to disclose.

Home + pharmacist BP telemonitoring found successful

An intervention involving home blood pressure monitoring and case management by a pharmacist achieved better control of hypertension than did usual care provided by a primary-care physician, in a study reported July 3 in JAMA.

This positive effect lasted well beyond the 1-year treatment period and through an additional 6 months of follow-up after the intervention was stopped, said Dr. Karen L. Margolis of HealthPartners Institute for Education and Research, Minneapolis, and her associates.

"If these results are found to be cost-effective and durable during an even longer period, it should spur wider testing and dissemination of similar alternative models of care for managing hypertension and other chronic conditions," they said.

The investigators performed the Home Blood Pressure Telemonitoring and Case Management to Control Hypertension (HyperLink) study to determine whether the intervention was safe, effective, and durable in real-world patients "representative of the range of comorbidity and hypertension severity in typical primary-care practice."

The cluster-randomized study was conducted at 16 primary-care clinics in a multispecialty practice that was part of an integrated health system. These clinics had an existing arrangement between primary-care physicians and pharmacists allowing the pharmacists to prescribe and change antihypertensive therapy according to specified protocols.

The clinics were matched by size and randomized to continue usual hypertension care managed by the primary-care physician (8 clinics) or to implement the telemonitoring intervention (8 clinics). A total of 450 patients with uncontrolled hypertension were included: 228 assigned to the intervention and 222 assigned to usual care.

The mean patient age was 61 years. The study population was almost equally divided between men and women, and 82% were white. Comorbidities were common, including obesity (54%), diabetes (19%), chronic kidney disease (19%), and cardiovascular disease (10%). The mean BP was 148/85 mm Hg.

In the intervention group, patients met with pharmacists for 1 hour to review their history, get general information about hypertension, and receive instructions for operating the home BP monitor that stored and transmitted data to a secure website accessed by the pharmacist. These study subjects were told to transmit at least 3 morning and 3 evening BP measurements each week. Pharmacists retrieved the information and altered medications accordingly.

The pharmacists and patients also consulted via telephone every 2 weeks until BP control was sustained for 6 weeks, and then their phone "visits" were decreased to once per month. After the first 6 months of the intervention, phone calls were scaled back to once every 2 months.

At these visits, the pharmacists discussed lifestyle changes, medication adherence, and adverse effects of medication, and they adjusted antihypertensive medications as necessary. They communicated with patients’ primary-care physicians through the electronic medical record after each call.

At 12 months, the intervention stopped. Patients stopped using the telemonitors and returned to the care of their primary-care physicians.

During the intervention and for 6 months thereafter, the patients made periodic visits to the research clinic so that the safety and efficacy of the intervention could be monitored.

The primary outcome was the percentage of patients with controlled BP, defined as <140/90 mm Hg, or <130/80 mm Hg if they had concomitant diabetes or kidney disease.
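The threshold rule in this definition can be written out as a short check. The Python below is only an illustrative sketch, not the investigators' code: the function name and parameters are hypothetical, and it assumes that both the systolic and the diastolic value must fall strictly below their limits for a reading to count as controlled.

```python
def is_bp_controlled(systolic: int, diastolic: int,
                     has_diabetes_or_ckd: bool = False) -> bool:
    """Return True if a reading meets the control target described in the article."""
    # Stricter target (<130/80 mm Hg) for patients with diabetes or kidney disease;
    # otherwise the general target (<140/90 mm Hg) applies.
    systolic_limit, diastolic_limit = (130, 80) if has_diabetes_or_ckd else (140, 90)
    # Assumption: both components must be strictly below their limits.
    return systolic < systolic_limit and diastolic < diastolic_limit
```

Under these assumptions, a reading of 148/85 mm Hg (the mean BP at baseline) would not count as controlled, while 135/85 mm Hg would count as controlled only for a patient without diabetes or kidney disease.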

At 6 months, this outcome was attained by 72% of the intervention group, compared with 45% of the usual-care group. At 12 months, the corresponding rates were 71% and 53%, respectively. And at 18 months, they were 72% and 57%, respectively.
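For readers who want the gap between the groups at each time point, the absolute differences can be computed directly from the percentages quoted above. This is simple, unadjusted arithmetic on the rounded figures in this article, not the investigators' statistical analysis; the variable and function names are hypothetical.

```python
# Percentage of patients with controlled BP, as quoted in the article:
# month -> (intervention group, usual-care group)
control_rates = {6: (72, 45), 12: (71, 53), 18: (72, 57)}


def absolute_differences(rates):
    """Return the intervention-minus-usual-care gap in percentage points per month."""
    return {month: intervention - usual
            for month, (intervention, usual) in rates.items()}


differences = absolute_differences(control_rates)
print(differences)  # {6: 27, 12: 18, 18: 15}
```

On these rounded figures, the unadjusted gap is 27 percentage points at 6 months, 18 at 12 months, and 15 at 18 months.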

Overall, the intervention group achieved 25%-30% higher rates of BP control than did the usual-care group, Dr. Margolis and her associates said (JAMA 2013;310:46-56).

Patient satisfaction with care was similar between the two study groups. At 6 months, more patients who received the intervention felt their clinicians listened carefully, explained things clearly, and respected them than did patients who received usual care. However, this difference was no longer present at 12 or 18 months.

Patients who received the intervention were "substantially more confident" than were those who received usual care regarding communication with their health care team, mastering of the home BP monitoring routine, following their medication regimen, and keeping their BP under control.

The intervention group also self-reported that they added less salt to their food than did the control group at all time points, "but other lifestyle factors did not differ" between the two study groups.

Dr. Margolis and her associates calculated that the direct cost of this intervention would total $1,350 per patient.

This study was limited in that a very large number of potentially eligible patients (nearly 15,000) had to be screened to obtain a relatively small study population of 450 subjects. Also, these study subjects were, in general, well educated and had correspondingly high income levels, and approximately half had used a home BP monitor before, so they were not representative of the general population.

"We conclude that BP telemonitoring and pharmacist case-management was safe and effective for improving BP control compared with usual care during 12 months; and improved BP in the intervention group was maintained for 6 months following the intervention," they said.

"We plan future analyses that will take into account indirect costs during 18 months and long-term cost savings from averting hypertension-related events," they added.

No relevant financial conflicts of interest were reported. HealthPartners Institute for Education and Research has entered a royalty-bearing license agreement to commercialize a simulated learning technology for the purpose of broader dissemination.

Given the "consistent and substantial" evidence obtained in this and other studies, it is clear that moving hypertension care out of the office and into patients’ homes is safe and effective, said Dr. David J. Magid and Dr. Beverly B. Green.

Yet home-based hypertension management has not been widely adopted in the United States and isn’t likely to be unless the current system of reimbursement and performance measurement is changed. Medical insurance must cover patients’ costs for BP telemonitors and reimburse providers for their related services, and home BP must be included in quality assurance assessments of hypertension care.

"If home BP monitoring and team-based care were implemented broadly, hypertension management would be easier for patients, and the magnitude of BP reductions ... could lead to substantial reductions in cardiovascular events and mortality," they said.

Dr. Magid is at Kaiser Permanente Colorado Institute for Health Research, Denver. Dr. Green is at Group Health Research Institute at the University of Washington, Seattle. They reported no financial conflicts of interest. These remarks were taken from their editorial accompanying Dr. Margolis’s report (JAMA 2013;310:40-1).
